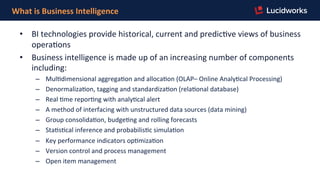

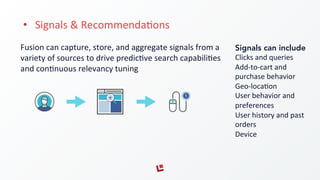

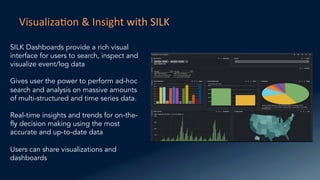

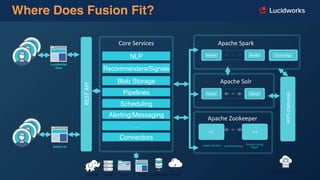

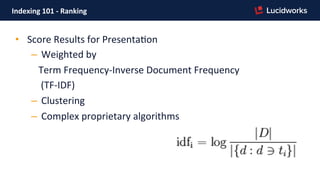

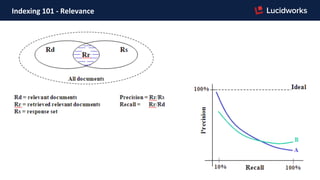

The document outlines a session led by Allan Syiek on the functionalities of business intelligence and enterprise search, highlighting the differences between querying, data mining, and machine learning. It discusses components of business intelligence, log analytics, and the role of Fusion in managing large-scale log data with predictive capabilities. Key topics include indexing, ranking, and real-time insights, emphasizing the ease of setup and use for businesses looking to leverage data effectively.

![Index Pipeline

Tika

Parser

Exclusion

Filter

Field

Mapper

HTML

Transform

Stage

XML

Transform

Stage

OpenNLP

EnFty

ExtracFon

Gaze]eer

ExtracFon

Regular

Expression

AggregaFng

Javascript

(custom

scripts)

…and

others…

SearchCollection

SearchUI

Search

Fields/Parameters

Facets

Landing

Pages

Boost

Documents

Block

Documents

Security

Trimming

RecommendaFon

BoosFng

Rollup

Aggregator

Sub

Query

Javascript

(custom

scripts)

…and

others…

Documents

Query Pipeline](https://image.slidesharecdn.com/webinar-fusionforbusinessintelligence-160914193204/85/Webinar-Fusion-for-Business-Intelligence-10-320.jpg)

![Indexing

101

A

system

used

to

make

finding

informa,on

easier.

Every

word

is

converted

into

a

wordID

by

using

an

in-‐memory

hash

table

-‐-‐

the

lexicon.

Occurrences

in

the

current

document

are

translated

into

hit

lists

and

are

wri]en

into

the

forward

“barrels”.

Inverted

Barrels

have

been

sorted.](https://image.slidesharecdn.com/webinar-fusionforbusinessintelligence-160914193204/85/Webinar-Fusion-for-Business-Intelligence-11-320.jpg)

![Sta,s,cs

vs.

Data

Mining

vs.

Machine

Learning

– Sta,s,cs

quan%fies

numbers

– Data

Mining

explains

pa]erns

– Machine

Learning

predicts

with

models

– Ar,ficial

Intelligence

behaves

and

reasons](https://image.slidesharecdn.com/webinar-fusionforbusinessintelligence-160914193204/85/Webinar-Fusion-for-Business-Intelligence-14-320.jpg)