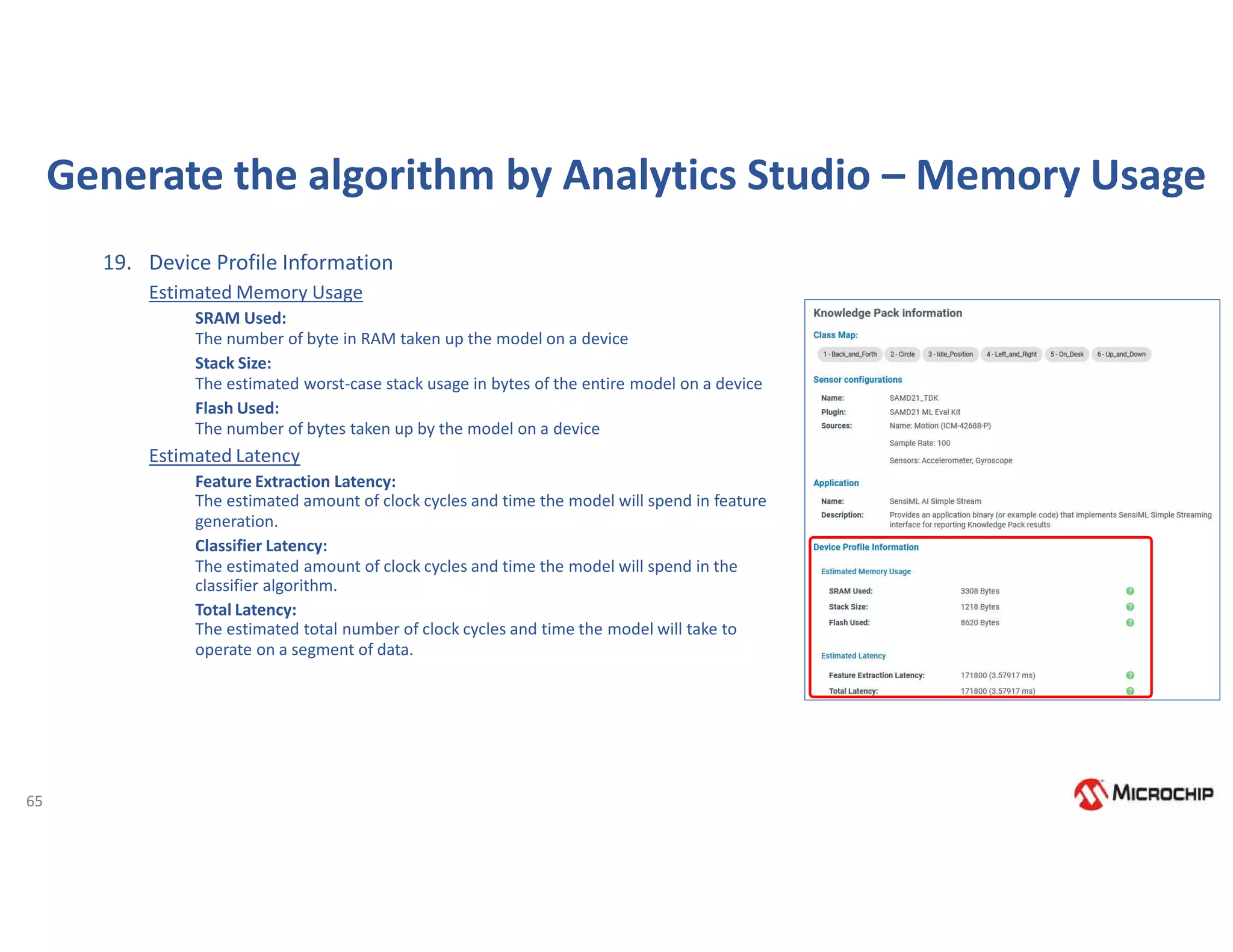

The document provides detailed information on Microchip's embedded development tools and machine learning solutions, including the MPLAB X IDE, various compilers, and the MPLAB Harmony framework. It outlines the demos and evaluation kits available for machine learning development, highlighting TensorFlow Lite for Microcontrollers and the gesture recognition workflow on the SAMD21 ML evaluation kit. It also covers setup instructions, licensing options, and integration strategies for using SensiML's toolkit with Microchip platforms.

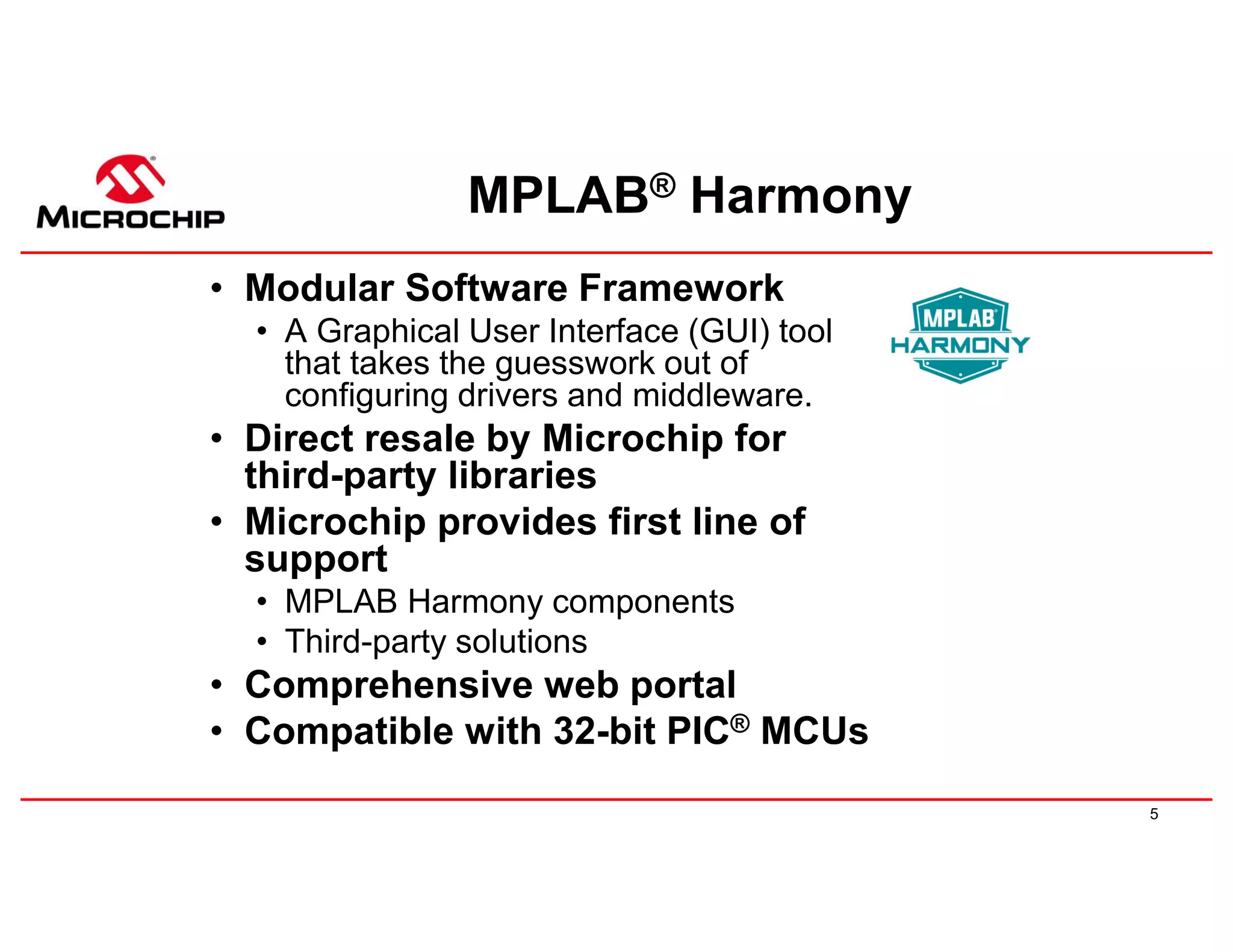

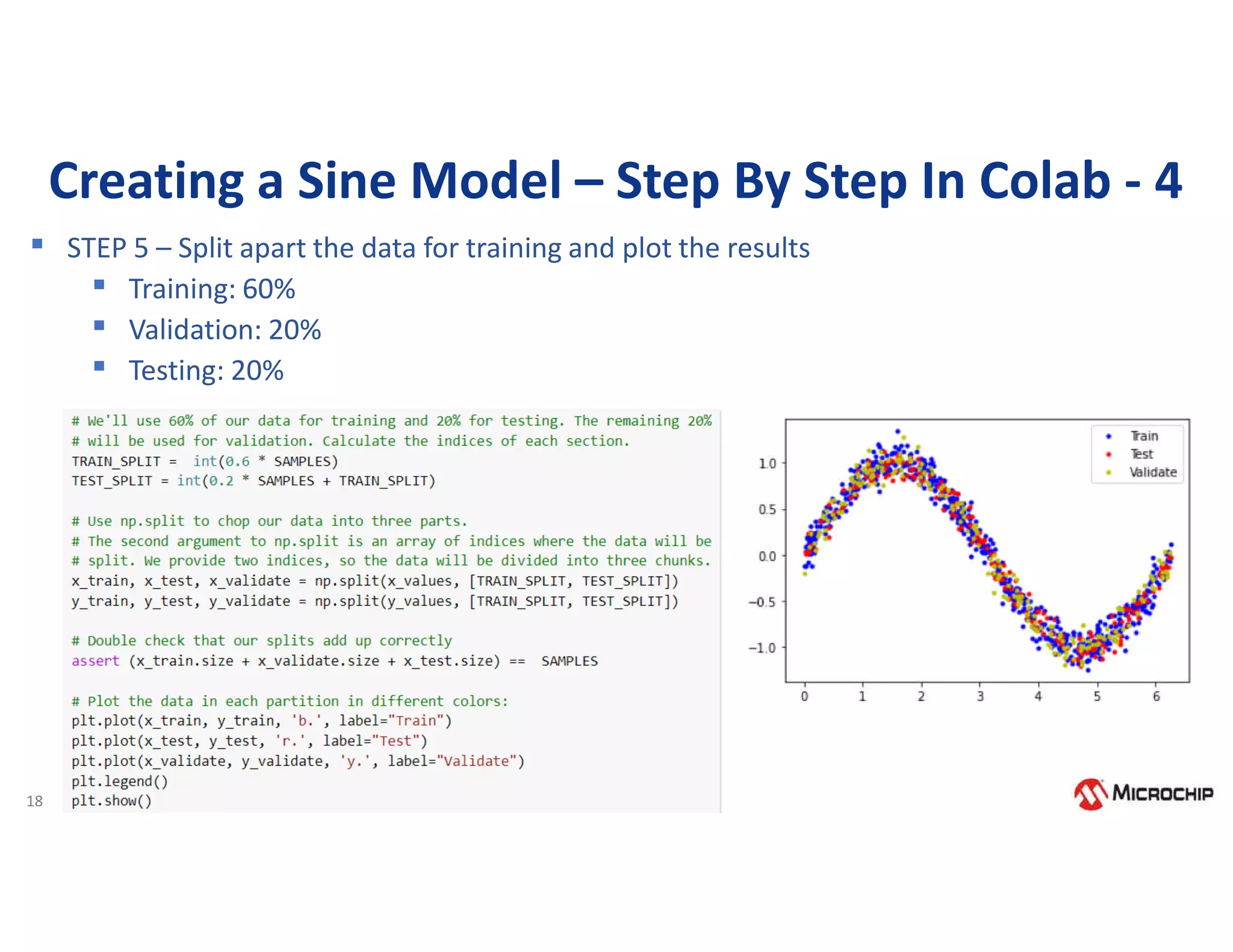

![14

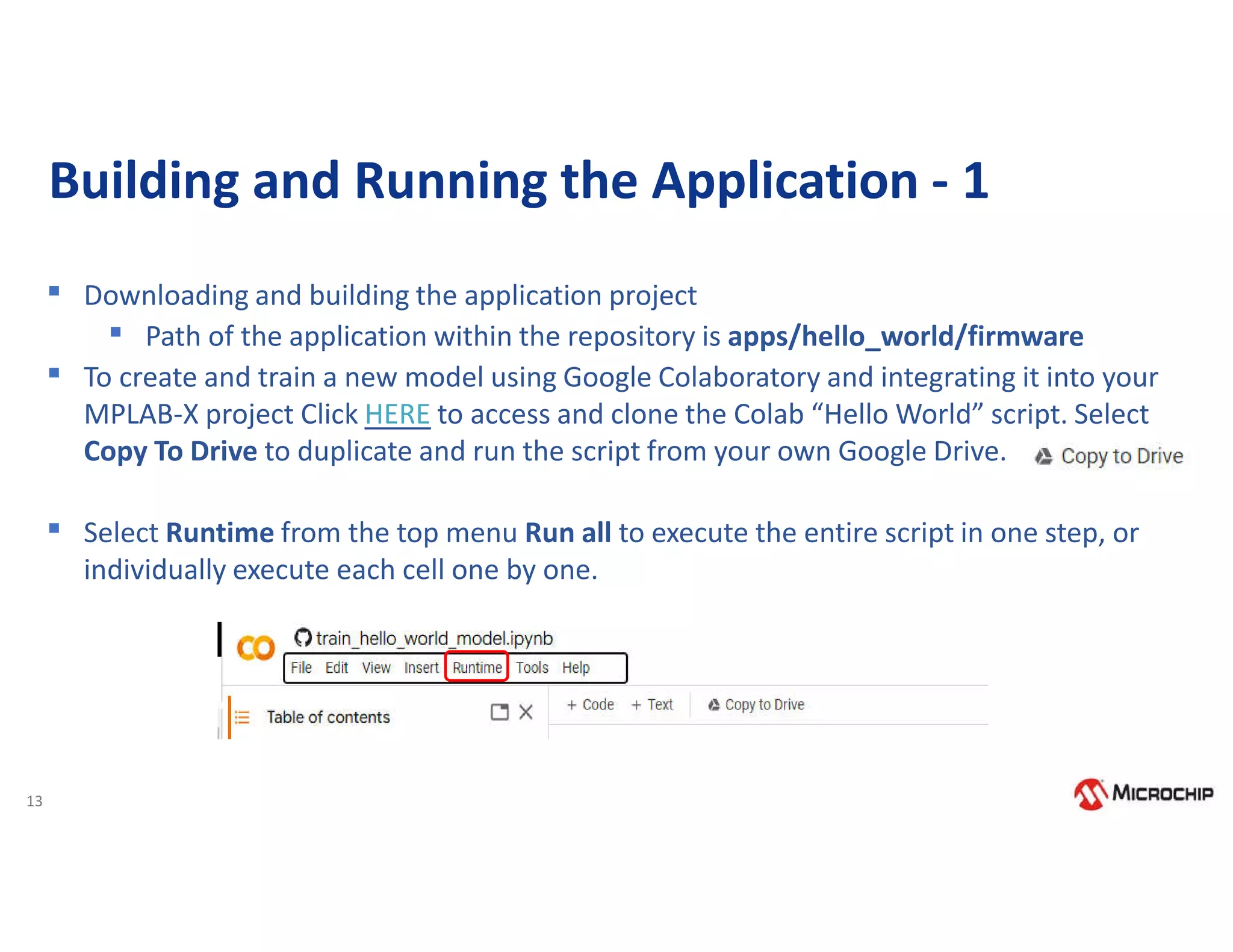

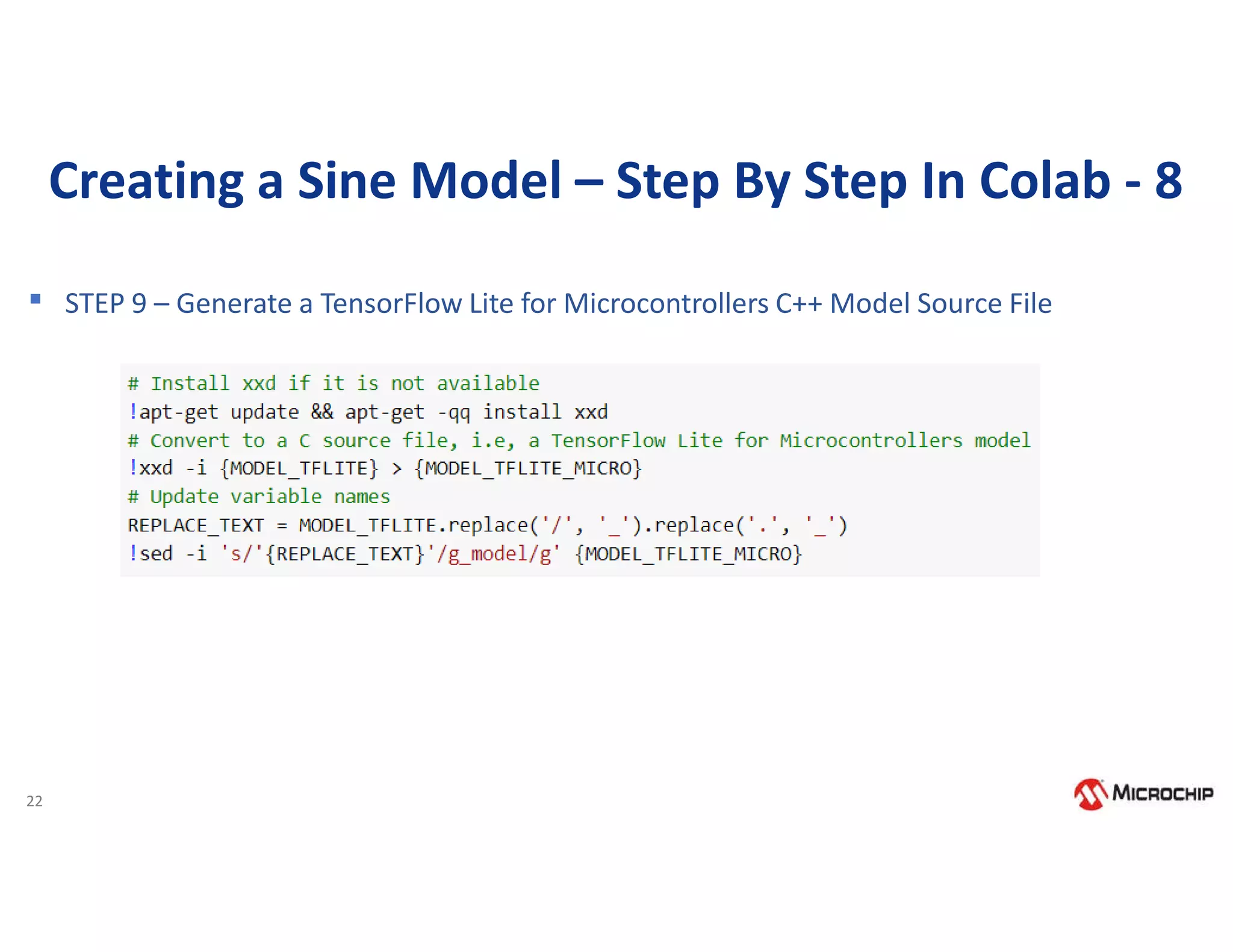

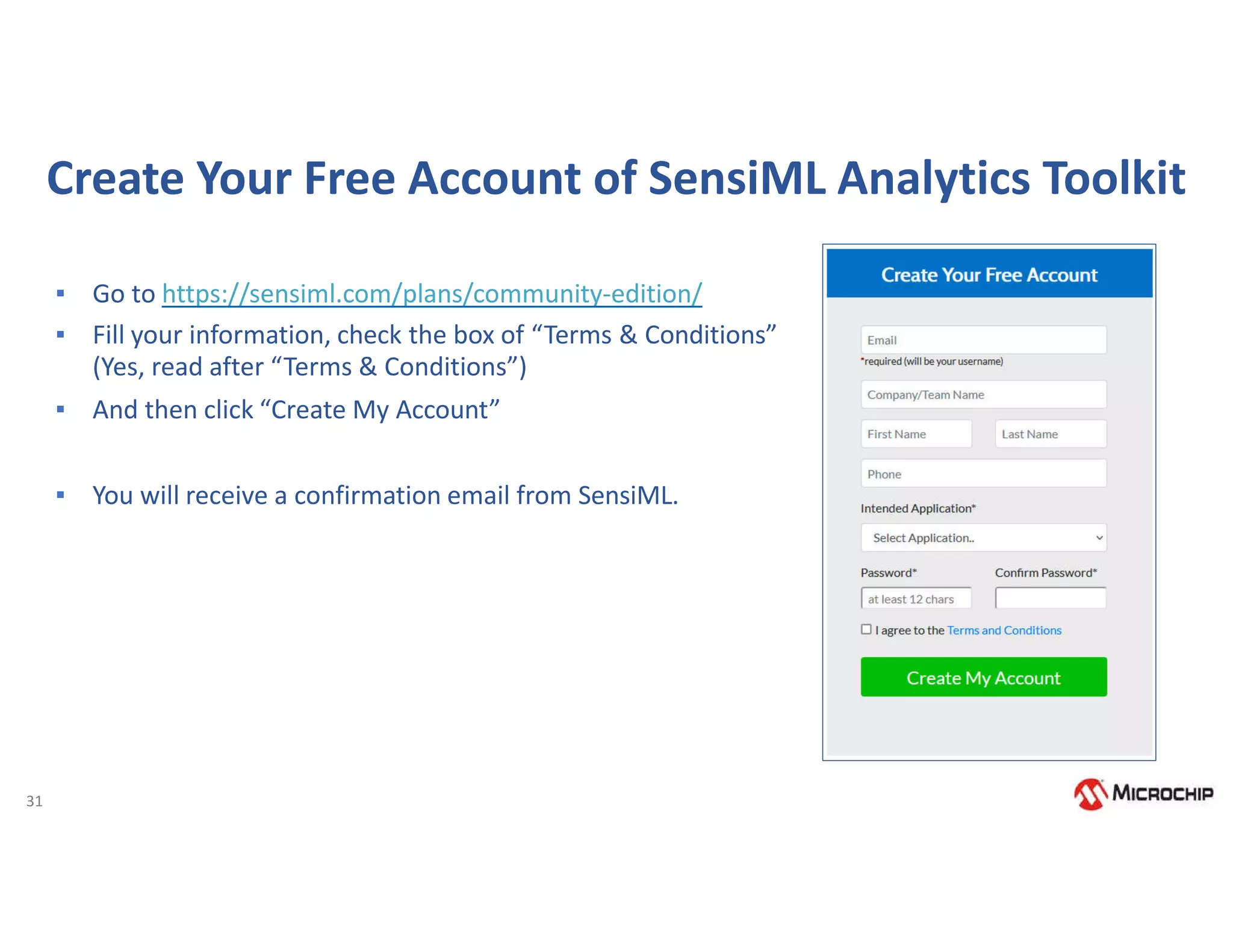

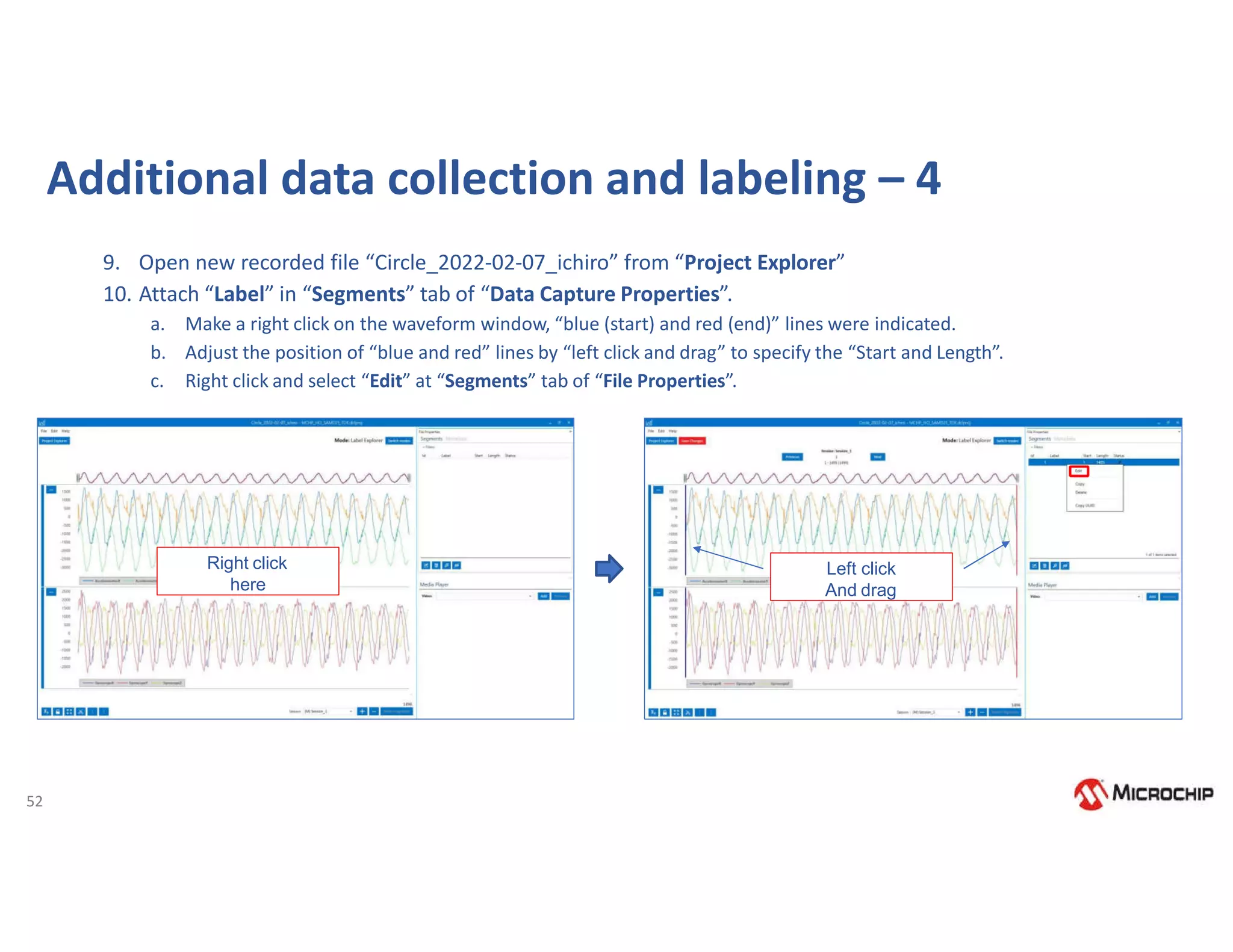

Building and Running the Application - 2

▪ After completing Run all, We can download

models directory in Colab as shown on the

right

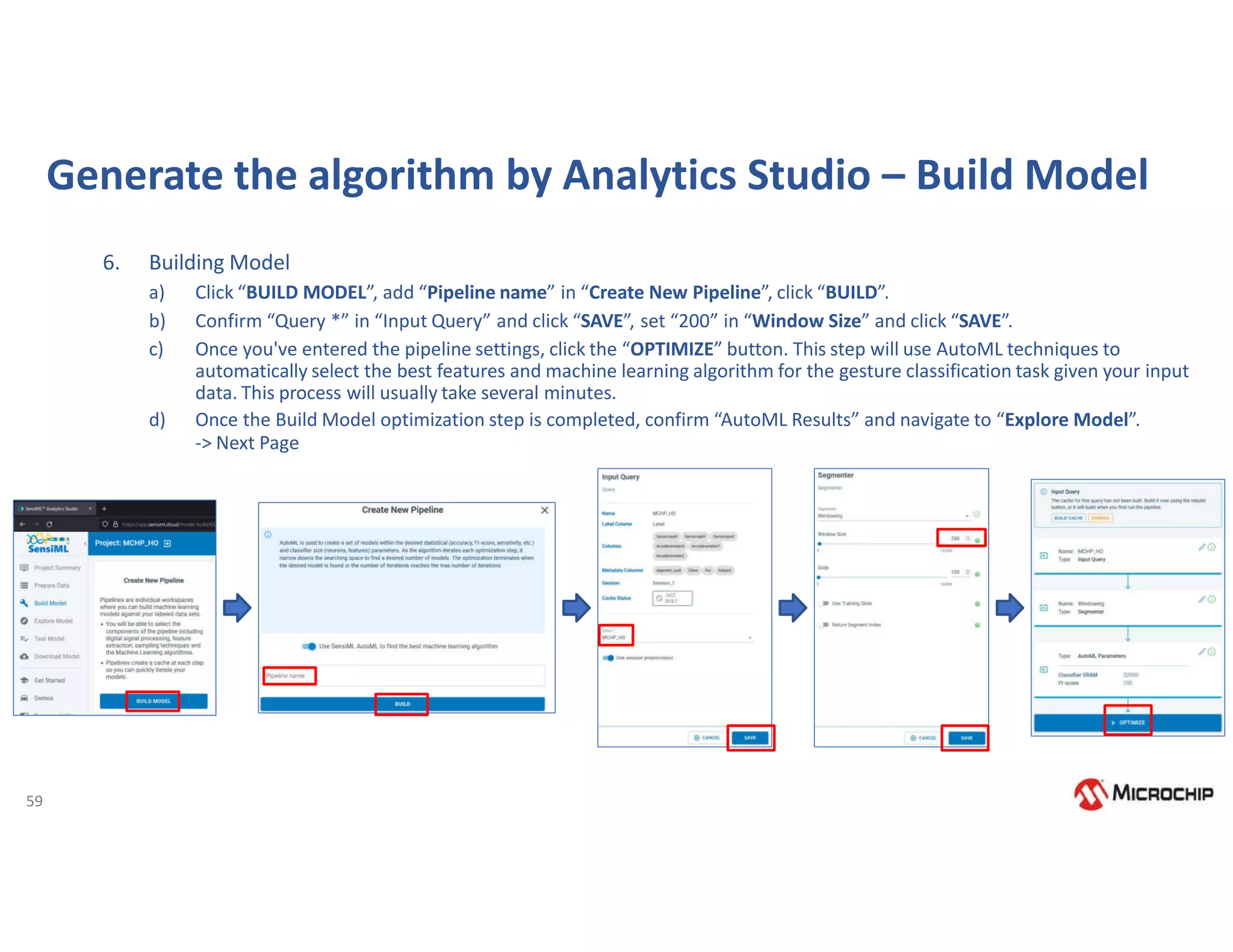

▪ The models directory includes the 3 model

files: model.pb , model.tflite and model.cc

▪ Copy the contents from model.cc file from

models directory and replace it in model.cpp

in your MPLAB-X project. Specifically, we need

to copy and paste the g_model[] array and

g_model_len variable declarations into

your model.cpp file defined you MPLAB

project to update the model.

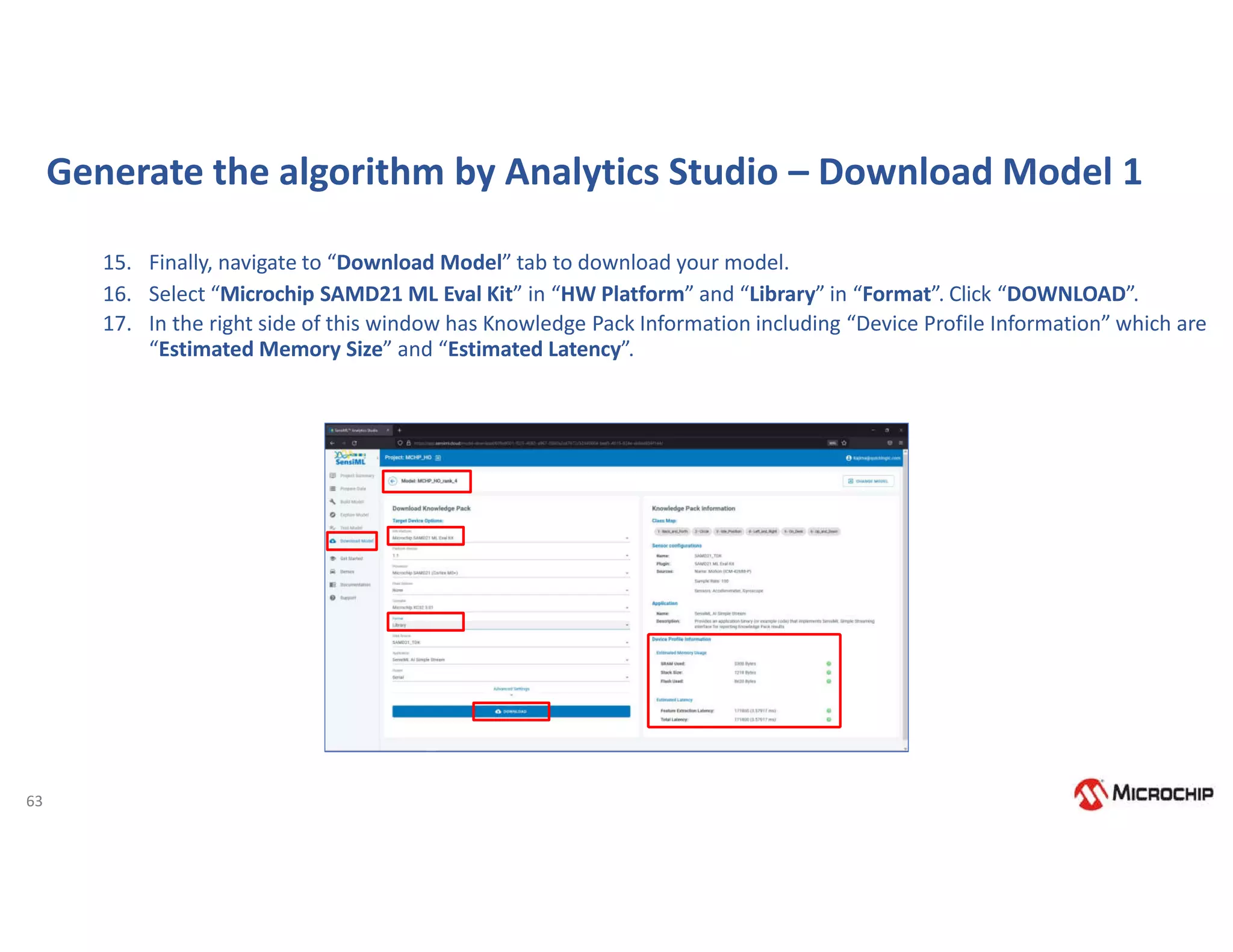

▪ Rebuild and Run the project. The sine wave

will be displayed on the screen.](https://image.slidesharecdn.com/rodrigo16nov-221117175505-00a22b53/75/Webinar-Comecando-seus-trabalhos-com-Machine-Learning-utilizando-ferramentas-e-demoboards-da-Microchip-15-2048.jpg)
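Before pasting the declarations into model.cpp, it can help to confirm that the generated model.cc is internally consistent. The sketch below is a minimal check, not part of the Microchip or TensorFlow tooling. It assumes model.cc follows the usual `xxd -i` layout (a hex byte array named `g_model[]` followed by a `g_model_len` integer); the function name `parse_model_cc` and the eight-byte `SAMPLE` text are hypothetical stand-ins for the real file.

```python
import re


def parse_model_cc(source: str):
    """Return (model_bytes, declared_len) parsed from xxd-style C source.

    Assumes the array is written as `g_model[] = { 0x.., ... }` and the
    length as `g_model_len = <decimal>`; other layouts are not handled.
    """
    array_match = re.search(r"g_model\[\]\s*=\s*\{(.*?)\}", source, re.DOTALL)
    len_match = re.search(r"g_model_len\s*=\s*(\d+)", source)
    if array_match is None or len_match is None:
        raise ValueError("g_model[] or g_model_len not found")
    hex_bytes = re.findall(r"0x([0-9a-fA-F]{1,2})", array_match.group(1))
    data = bytes(int(h, 16) for h in hex_bytes)
    return data, int(len_match.group(1))


# Hypothetical, shortened stand-in for the contents of model.cc.
SAMPLE = """
unsigned char g_model[] = {
  0x1c, 0x00, 0x00, 0x00, 0x54, 0x46, 0x4c, 0x33
};
unsigned int g_model_len = 8;
"""

data, declared = parse_model_cc(SAMPLE)
print(len(data) == declared)  # array length matches g_model_len
print(data[4:8])              # TFLite flatbuffers carry the "TFL3" identifier here
```

With a real file, you would read model.cc from the downloaded models directory and pass its text to `parse_model_cc`; a length mismatch suggests the array was truncated while copying.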

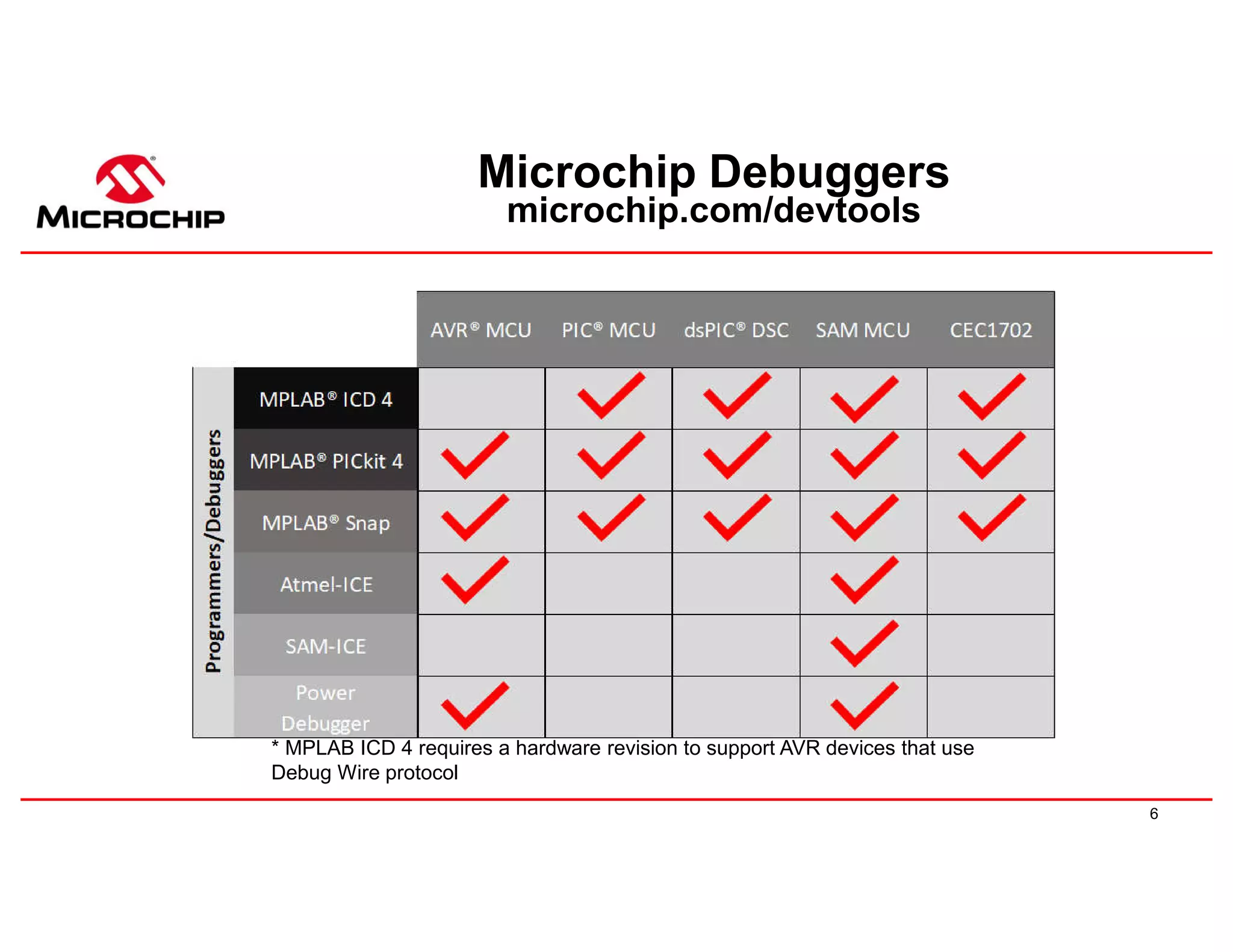

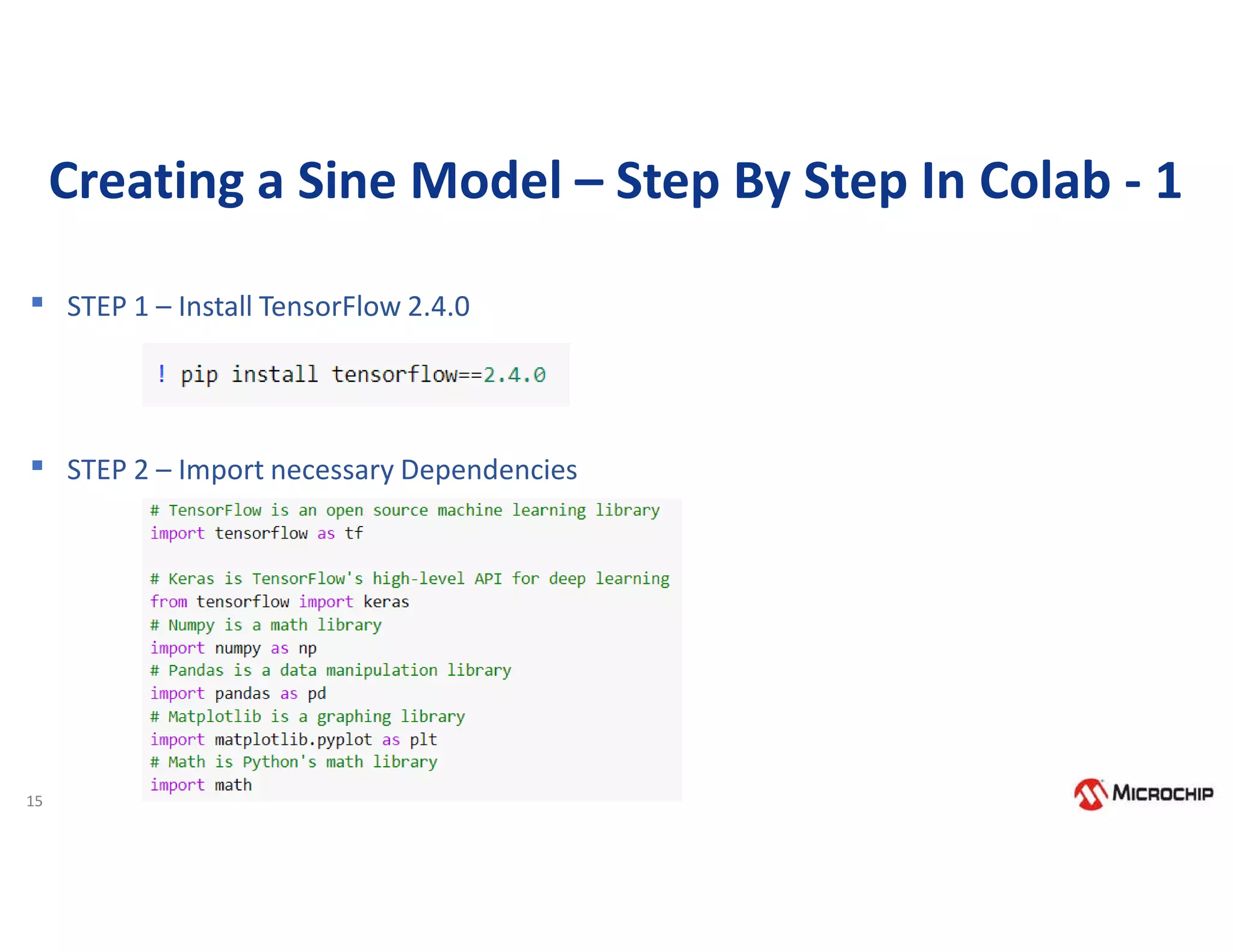

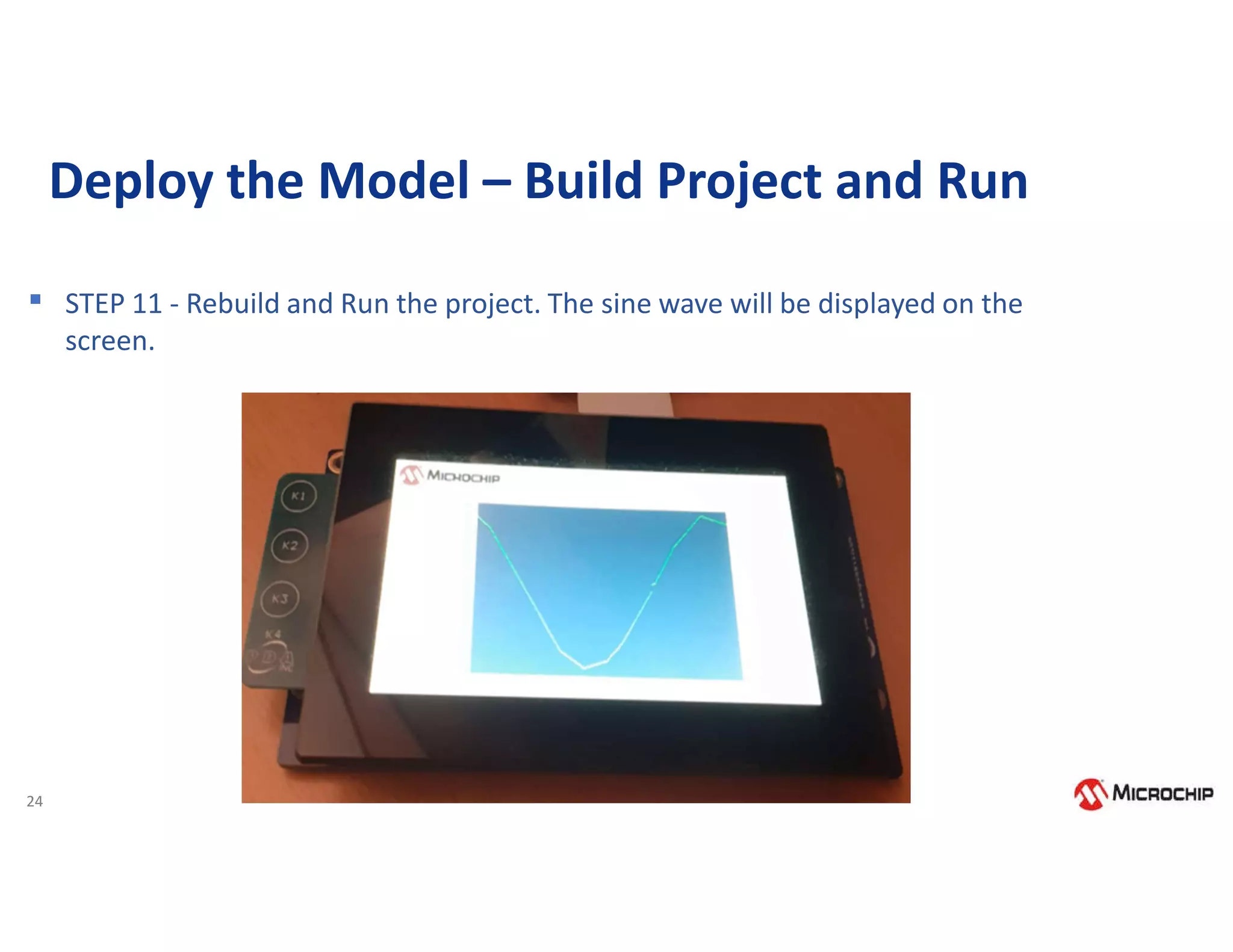

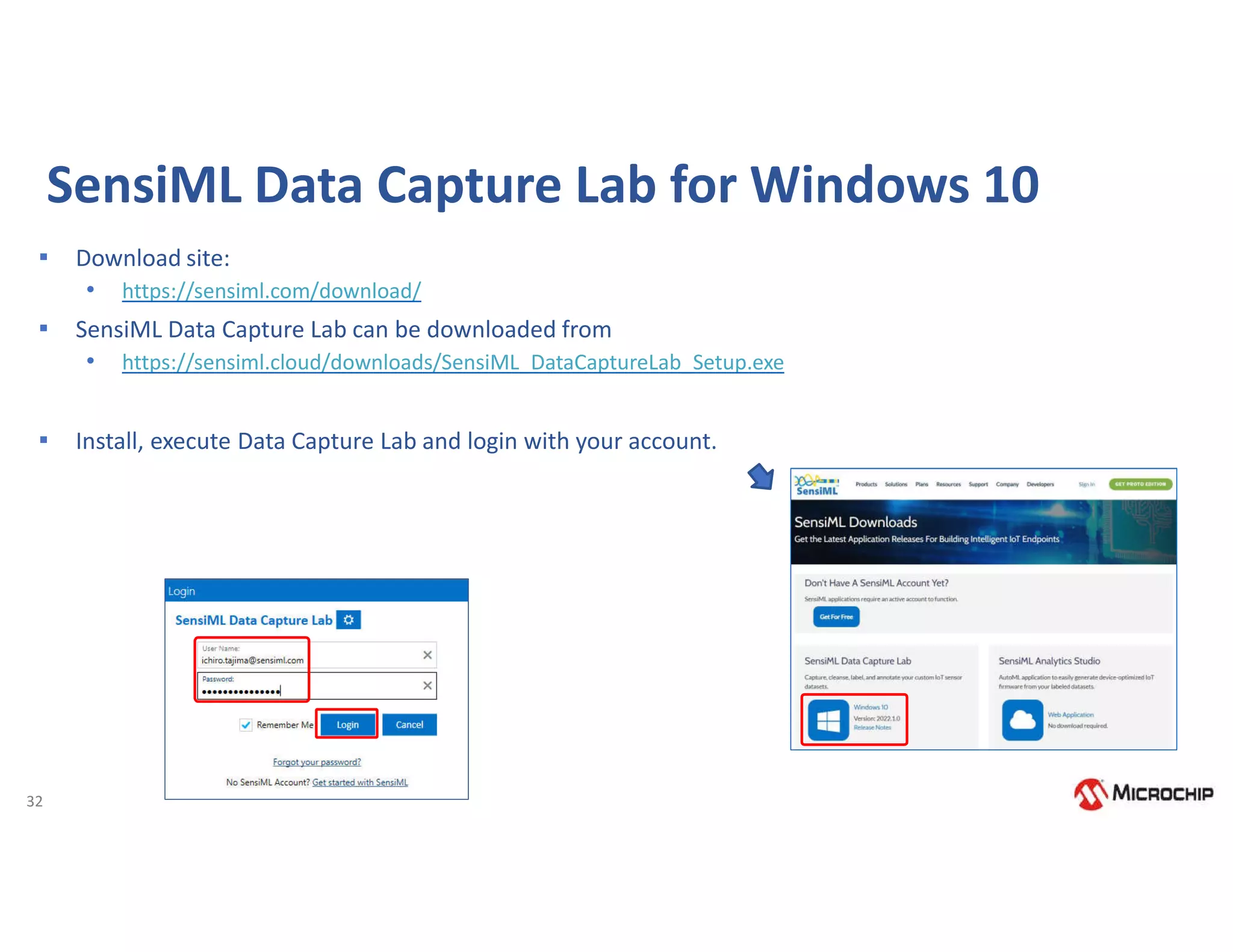

![23

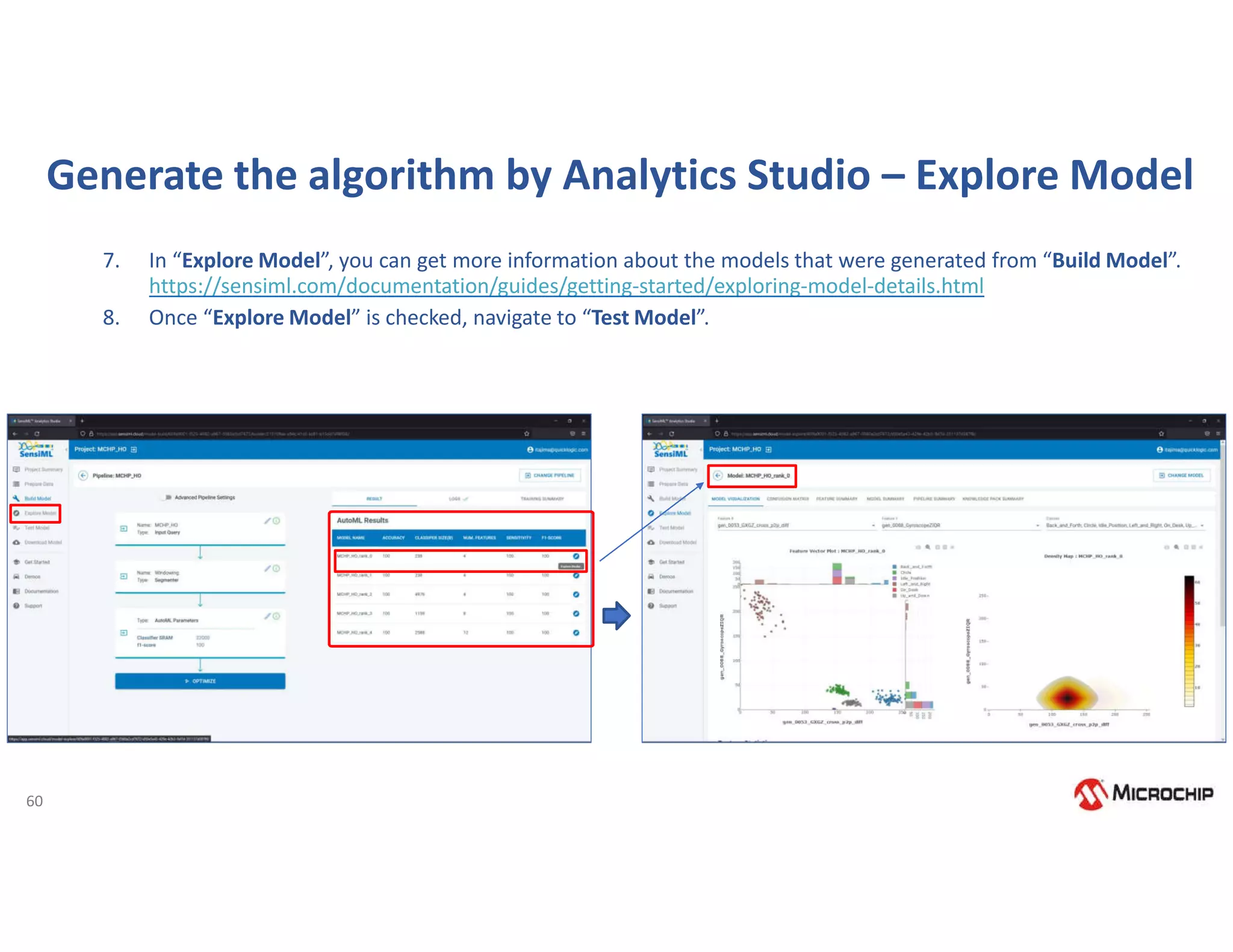

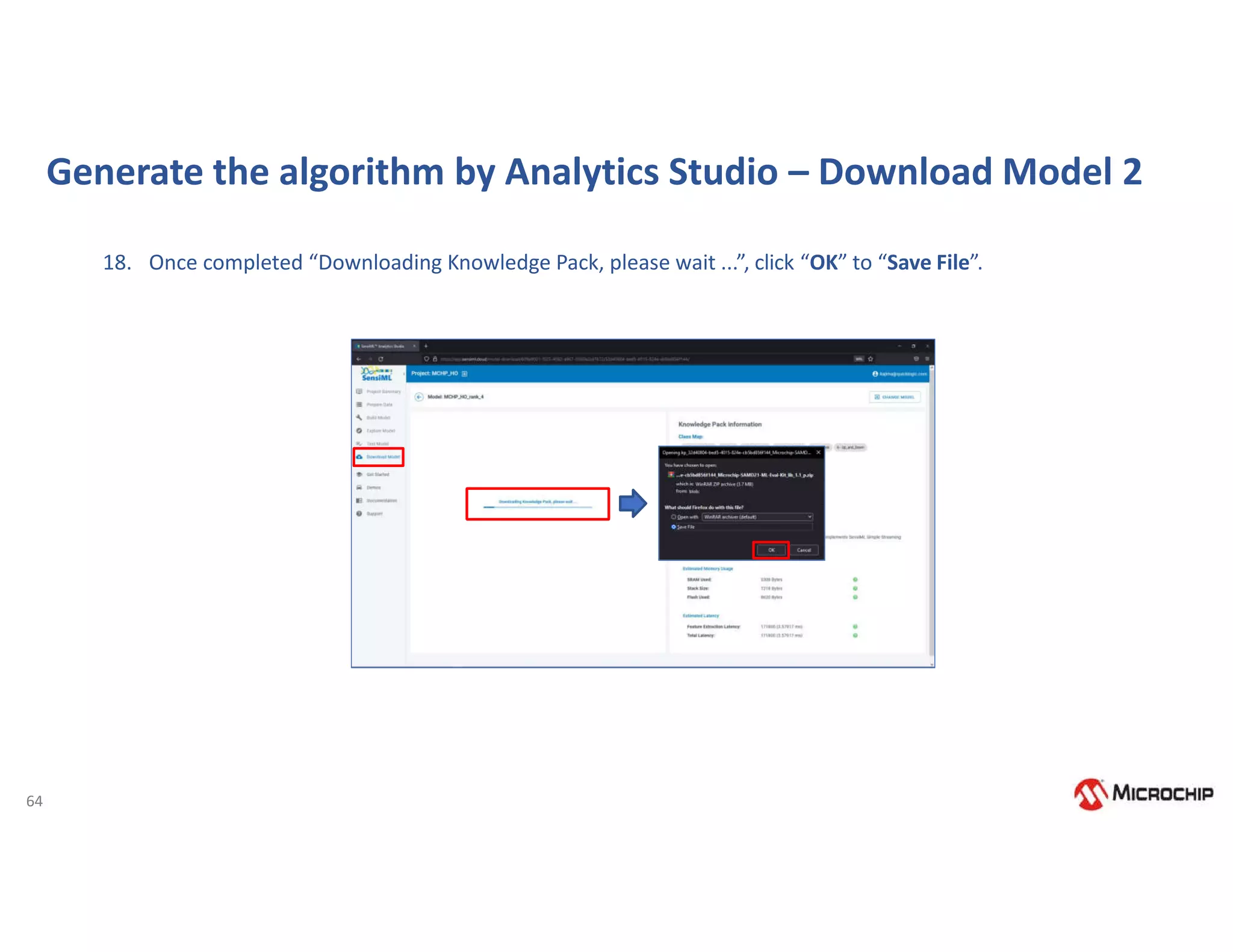

Deploy the Model – Copy to MPLAB-X Project

▪ STEP 10 – DEPLOY THE MODEL. Copy the

contents from model.cc file from models

directory and replace it in model.cpp in your

MPLAB-X project. Specifically, we need to copy

and paste the g_model[] array and

g_model_len variable declarations into

your model.cpp file defined you MPLAB

project to update the model.](https://image.slidesharecdn.com/rodrigo16nov-221117175505-00a22b53/75/Webinar-Comecando-seus-trabalhos-com-Machine-Learning-utilizando-ferramentas-e-demoboards-da-Microchip-24-2048.jpg)
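The copy-and-paste in Step 10 can also be scripted. The following is a rough sketch under stated assumptions, not a Microchip-provided tool: it assumes both files declare the array as `g_model[] = { ... };` and the length as `g_model_len = <number>;`, and it swaps only those two initializers so that any qualifiers already present in model.cpp (such as `const` or `alignas`) stay untouched. The function name `update_model_cpp` and the `OLD_CPP` and `NEW_CC` strings are hypothetical examples.

```python
import re

# Match from the array name through the closing "};" of its initializer.
ARRAY_RE = re.compile(r"g_model\[\]\s*=\s*\{.*?\};", re.DOTALL)
# Match the length assignment, e.g. "g_model_len = 2488;".
LEN_RE = re.compile(r"g_model_len\s*=\s*\d+\s*;")


def update_model_cpp(model_cpp: str, model_cc: str) -> str:
    """Return model_cpp with its g_model data and length taken from model_cc."""
    new_array = ARRAY_RE.search(model_cc)
    new_len = LEN_RE.search(model_cc)
    if new_array is None or new_len is None:
        raise ValueError("model.cc has no g_model[] / g_model_len declarations")
    # Function replacements avoid any backslash interpretation in the new text.
    updated, n_array = ARRAY_RE.subn(lambda _: new_array.group(0), model_cpp, count=1)
    updated, n_len = LEN_RE.subn(lambda _: new_len.group(0), updated, count=1)
    if n_array != 1 or n_len != 1:
        raise ValueError("model.cpp has no g_model declarations to replace")
    return updated


# Hypothetical, shortened file contents for illustration only.
OLD_CPP = """#include "model.h"
alignas(8) const unsigned char g_model[] = {
  0x00, 0x01
};
const int g_model_len = 2;
"""

NEW_CC = """unsigned char g_model[] = {
  0x1c, 0x00, 0x00, 0x00
};
unsigned int g_model_len = 4;
"""

result = update_model_cpp(OLD_CPP, NEW_CC)
print(result)
```

Replacing only the initializers is a design choice: the project's model.cpp may use different type qualifiers than the generated model.cc, and keeping them avoids a mismatch with the declarations in the project's header.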

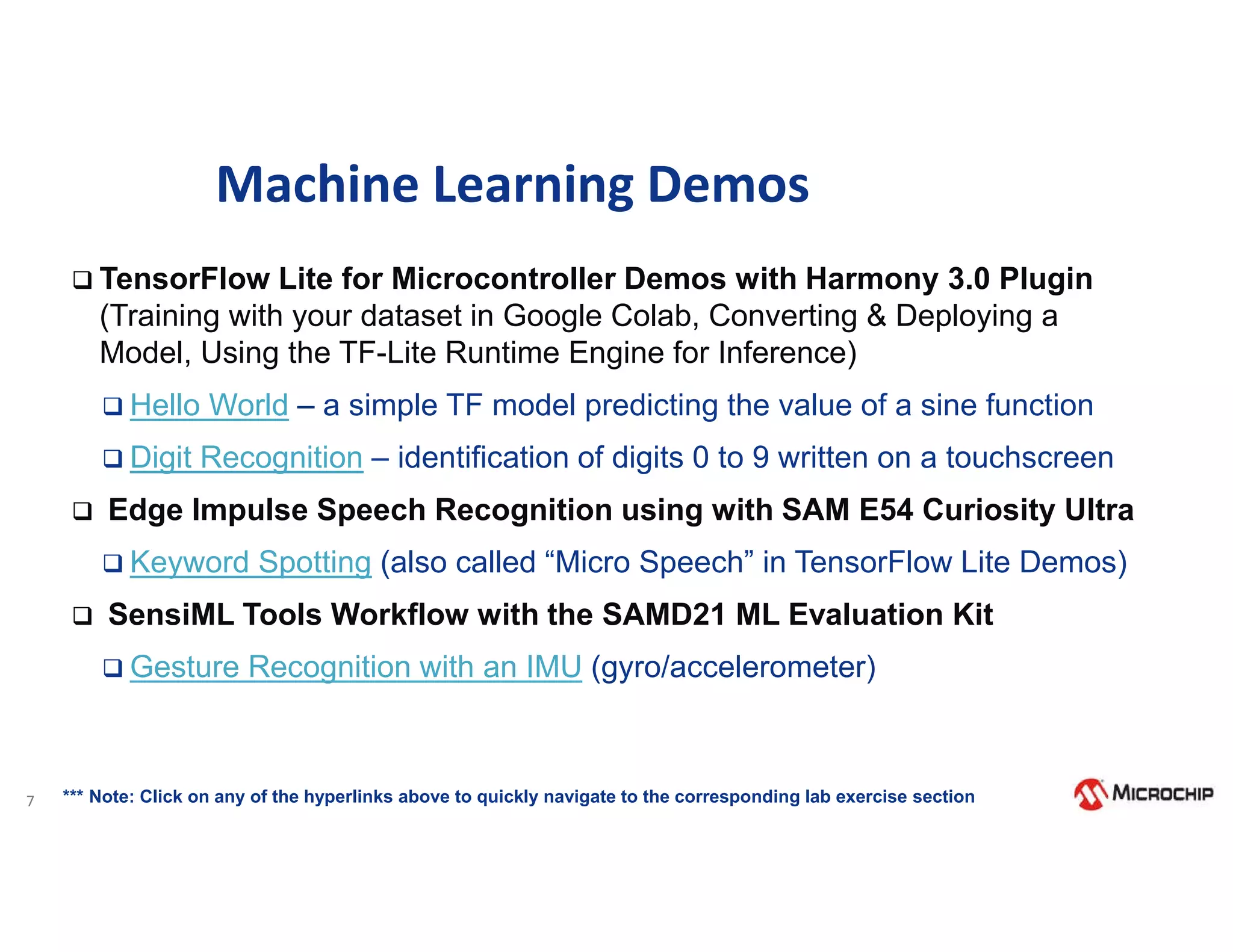

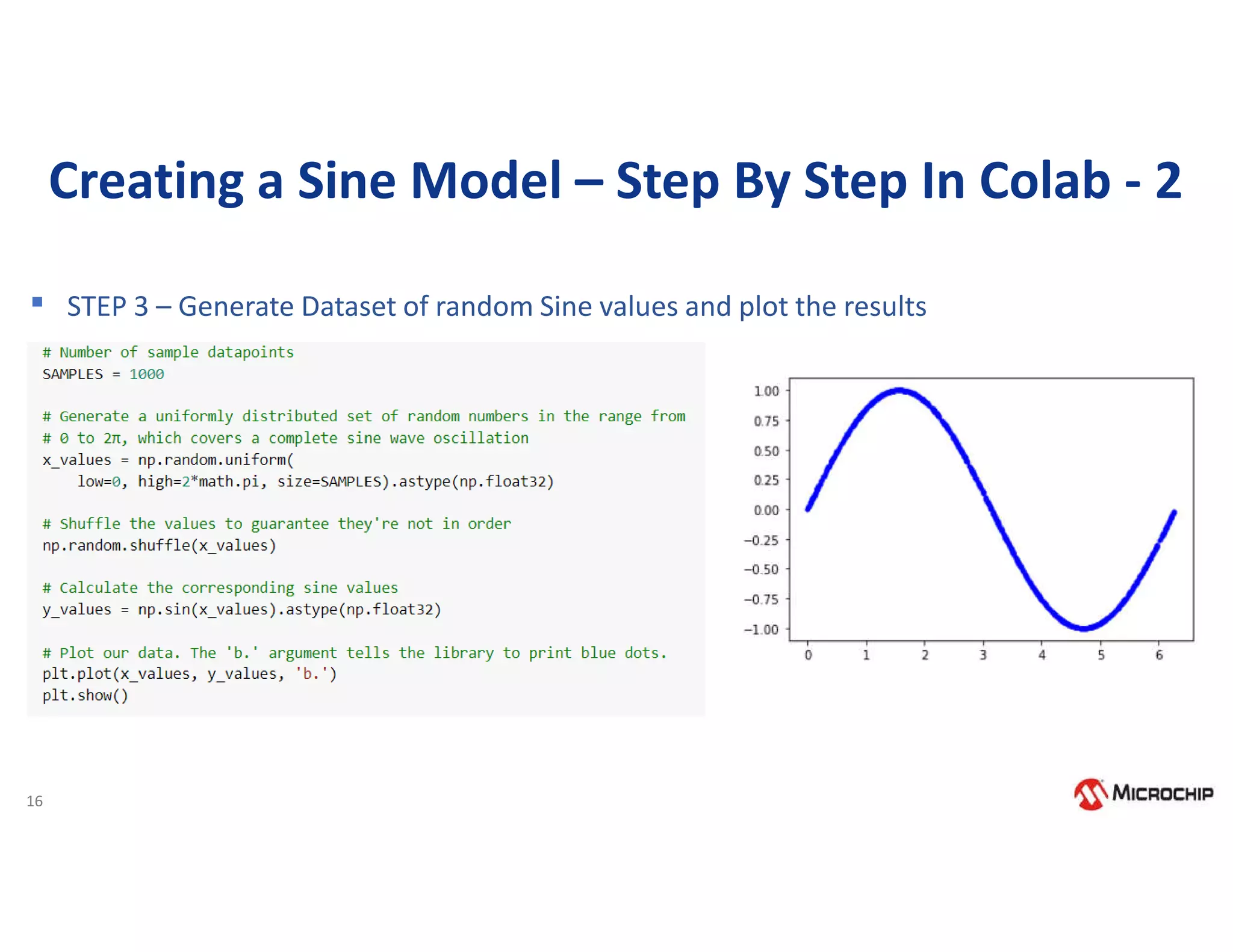

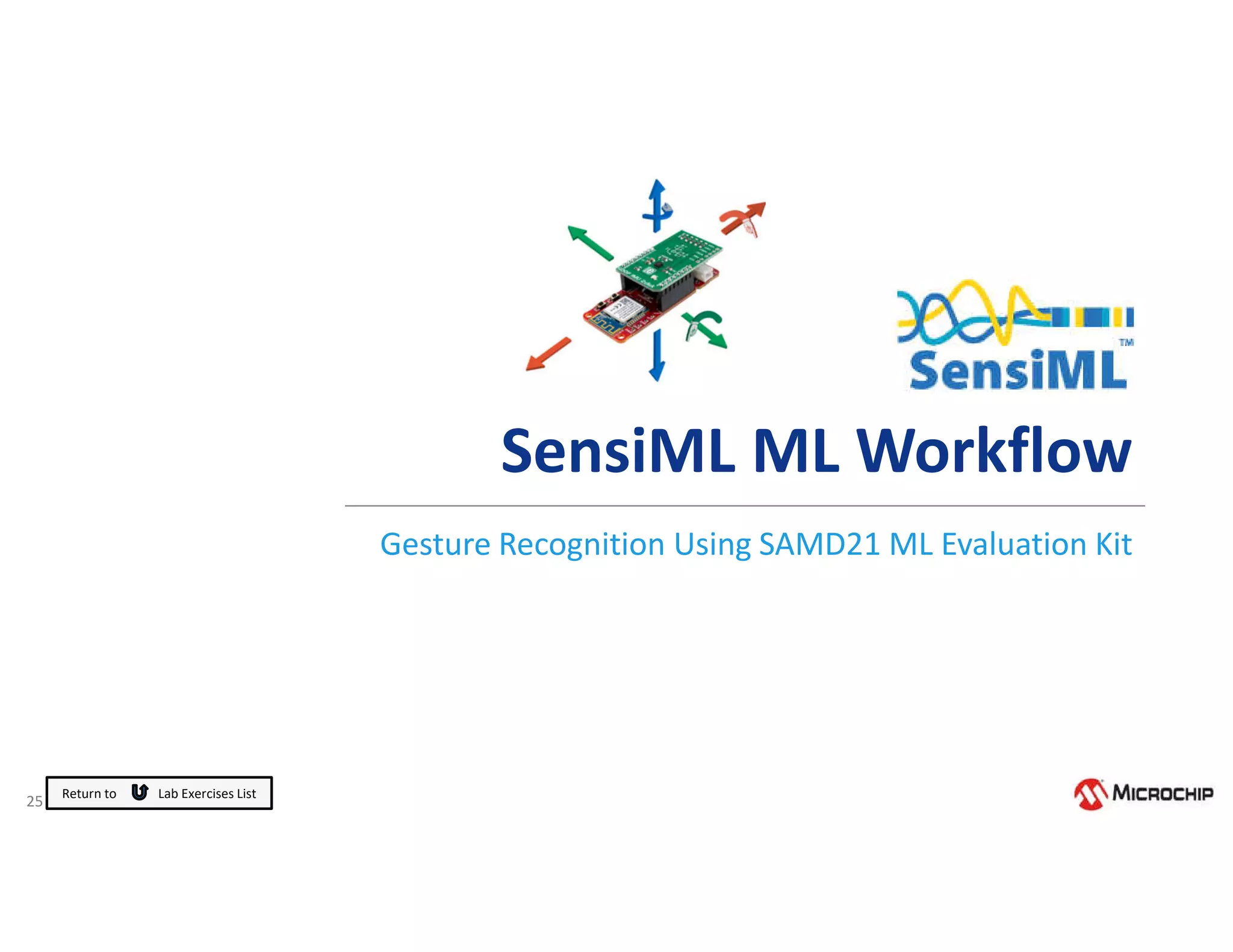

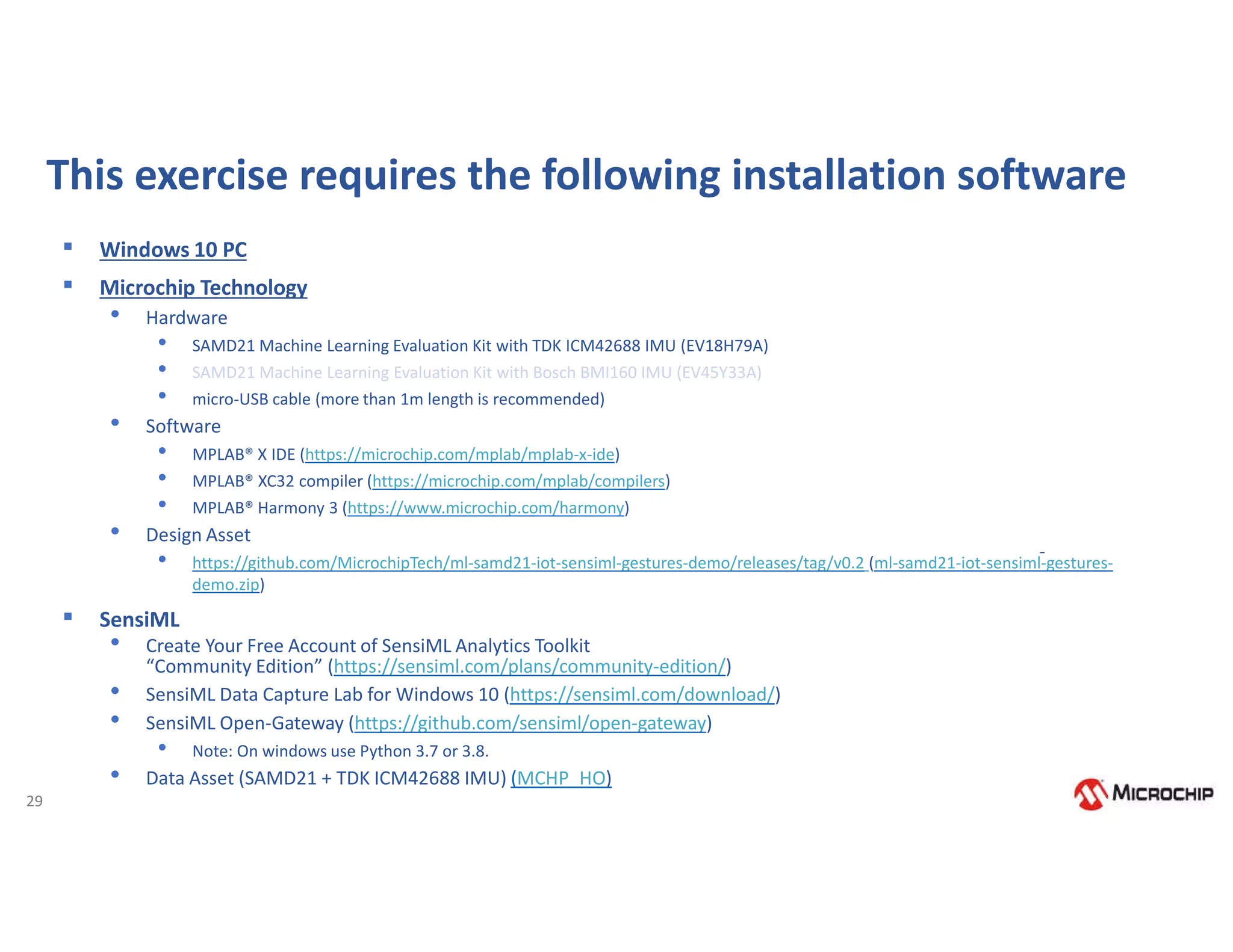

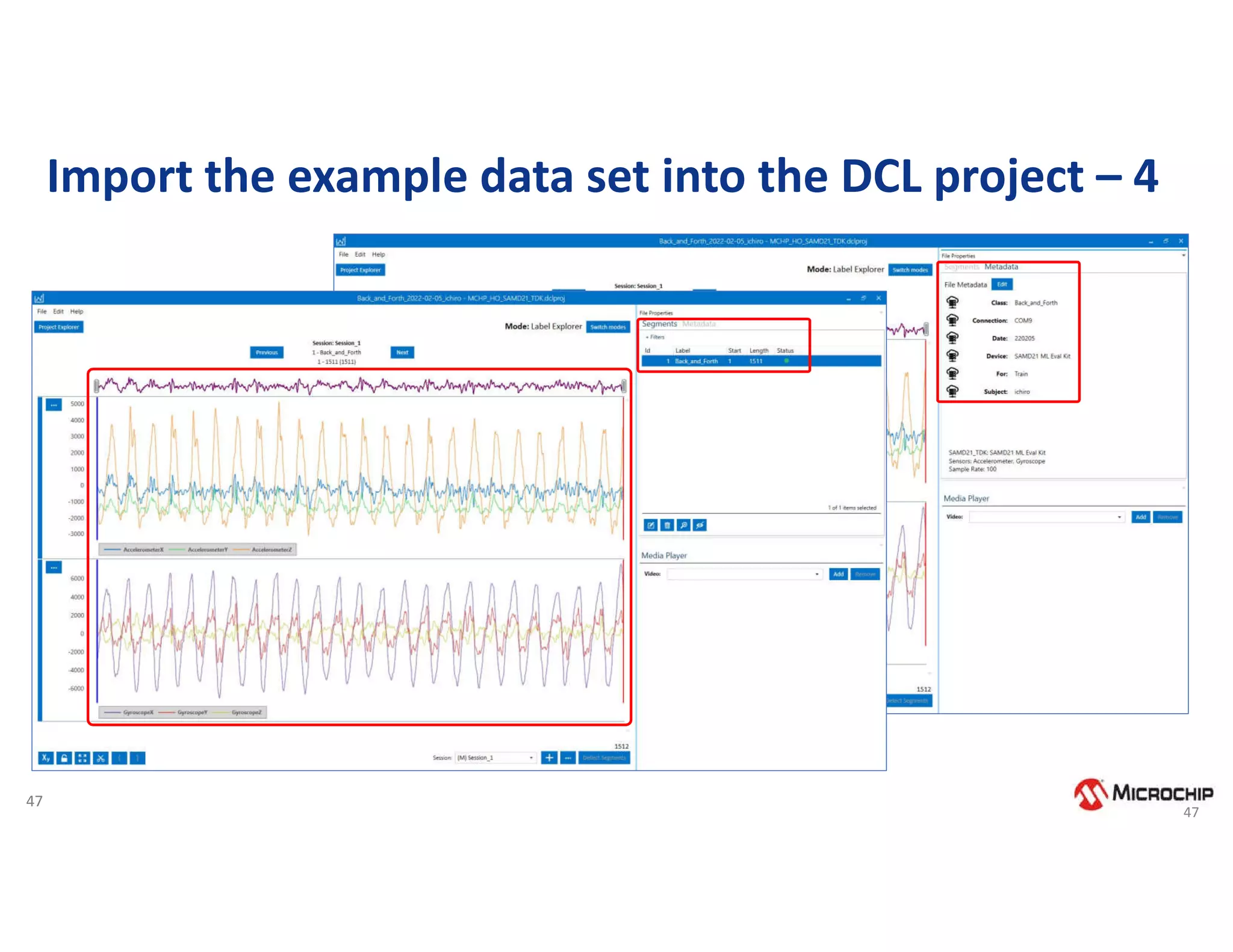

![48

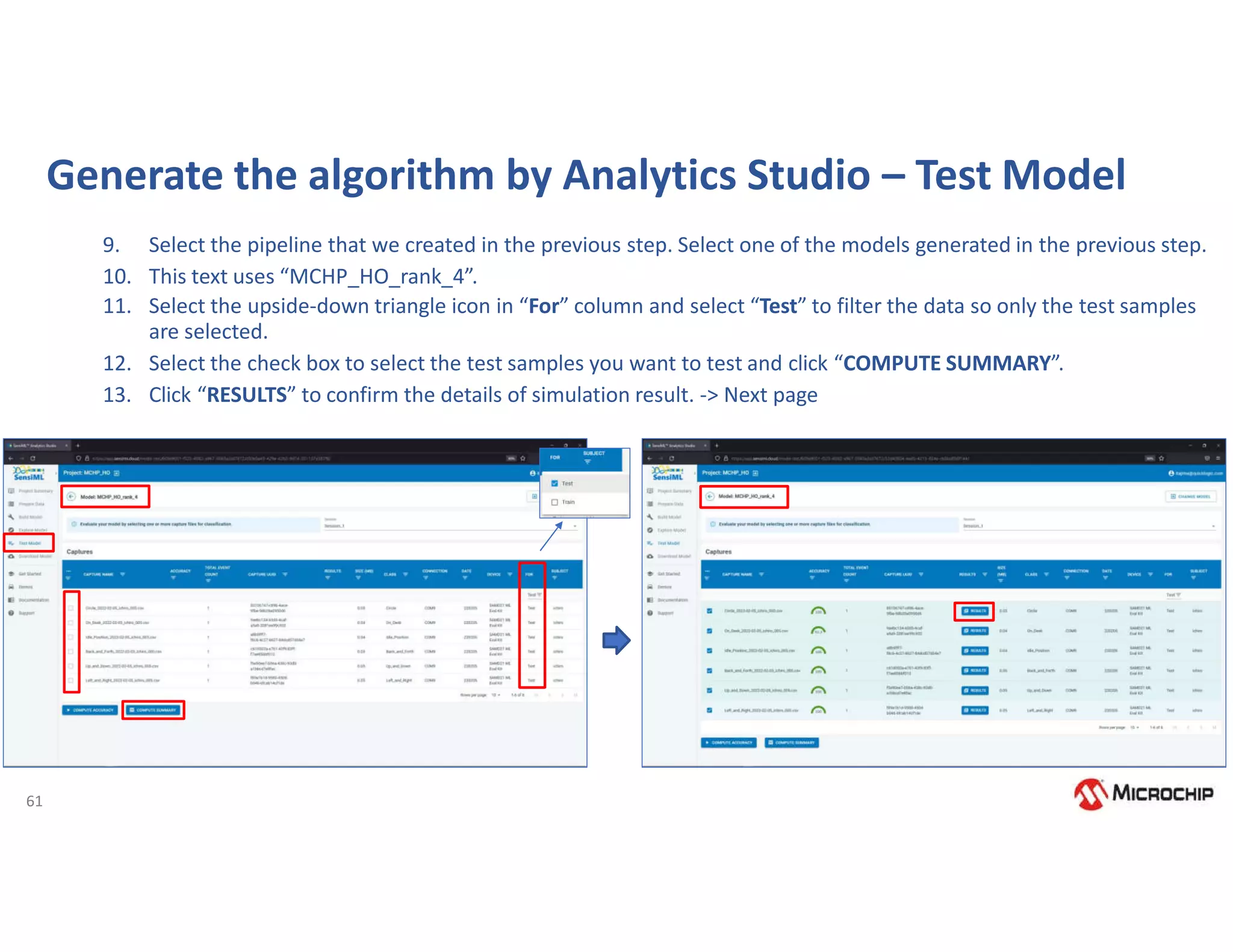

Exercise: Introducing SensiML Toolkit Endpoint AI Workflow

1. Write FW for data collection in SAMD21 by MPLAB X and connect it to DCL.

2. Import the example data set into the DCL project.

3. Additional data collection and labeling. [Optional - NOT Required for Steps 4-7]

4. Generate the algorithm by Analytics Studio.

5. Testing a Model Using the Data Capture Lab

6. Compile the downloaded library of Knowledge Pack with MPLAB X and write it to SAMD21

7. Verify the actual operation of gesture recognition using Open-Gateway](https://image.slidesharecdn.com/rodrigo16nov-221117175505-00a22b53/75/Webinar-Comecando-seus-trabalhos-com-Machine-Learning-utilizando-ferramentas-e-demoboards-da-Microchip-49-2048.jpg)

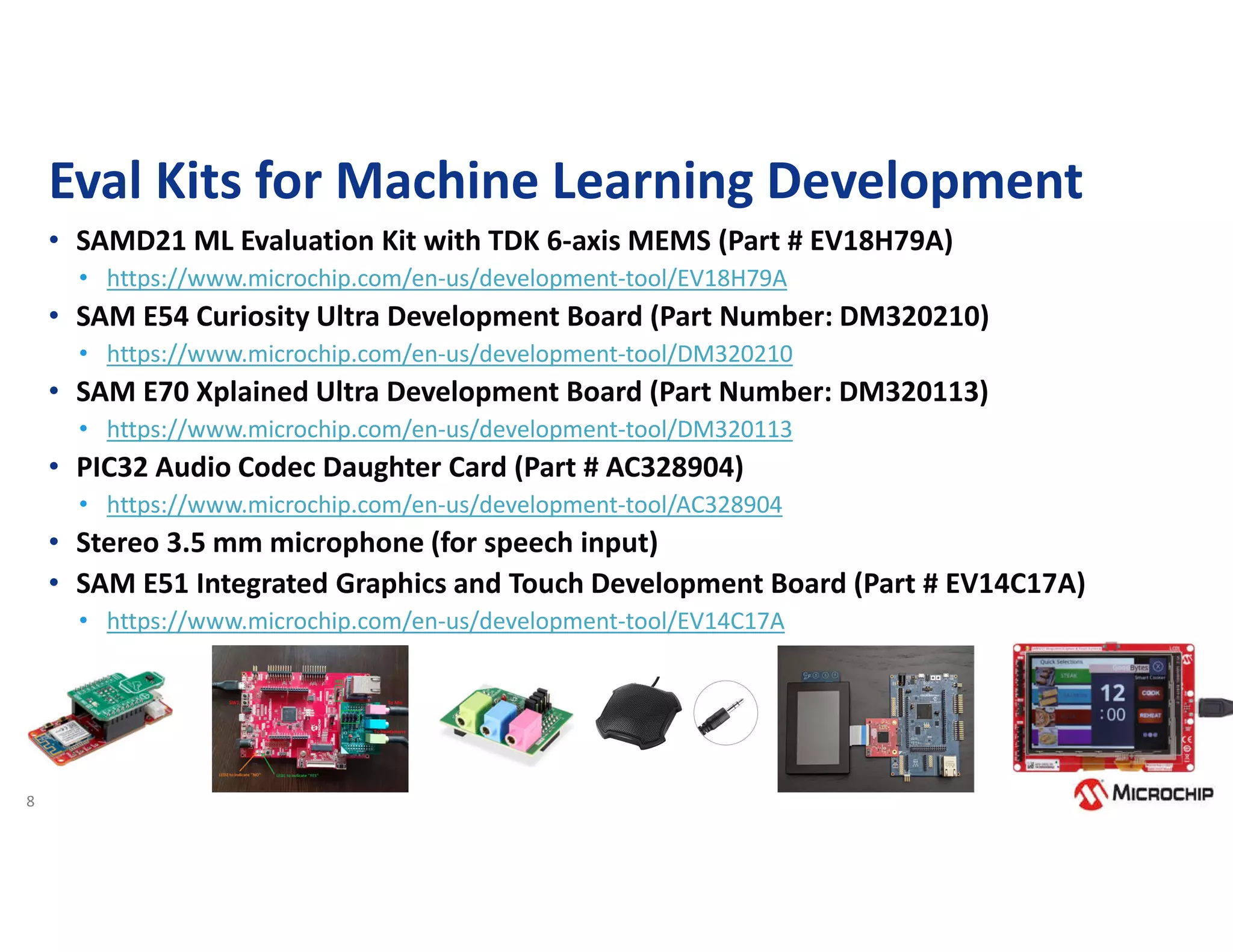

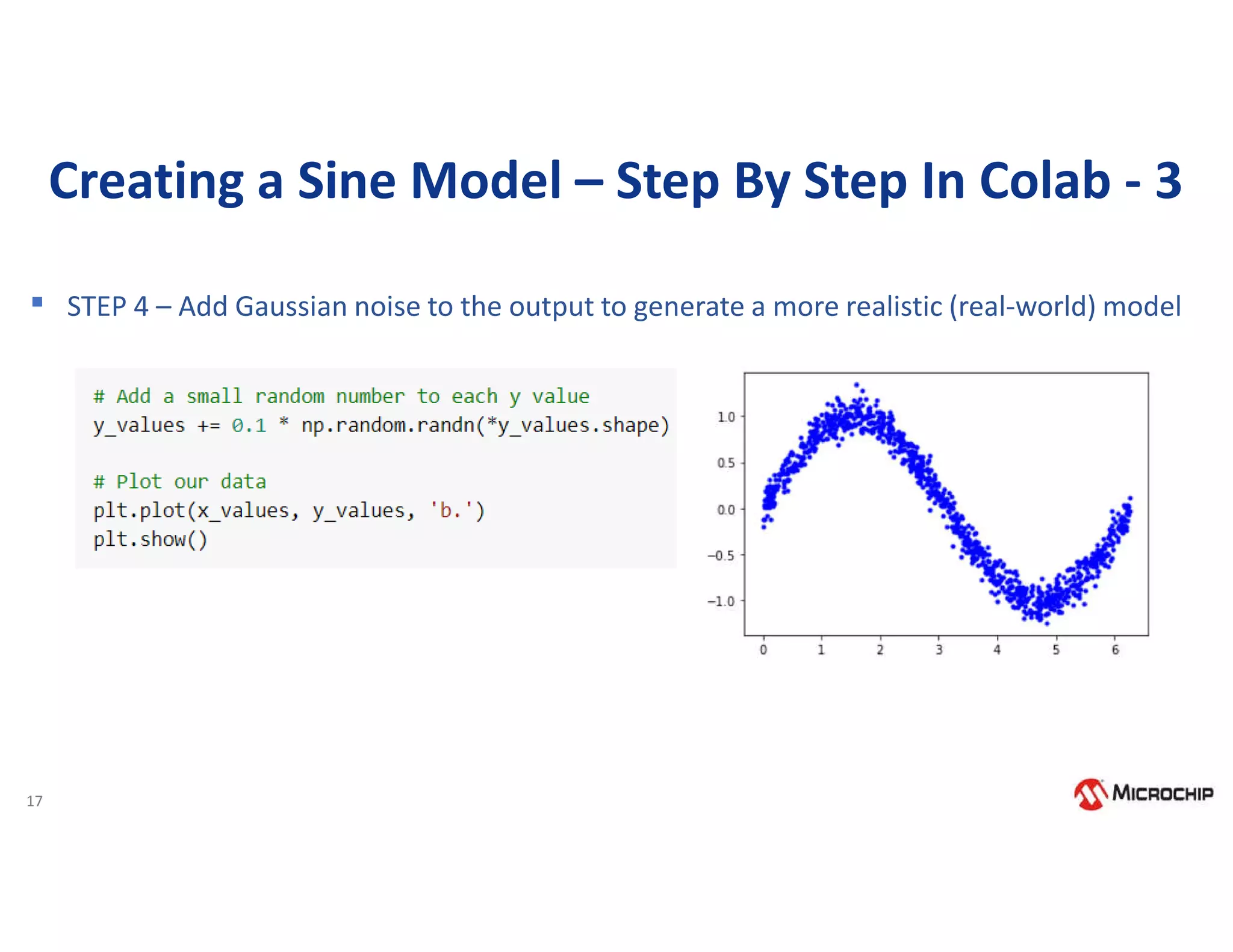

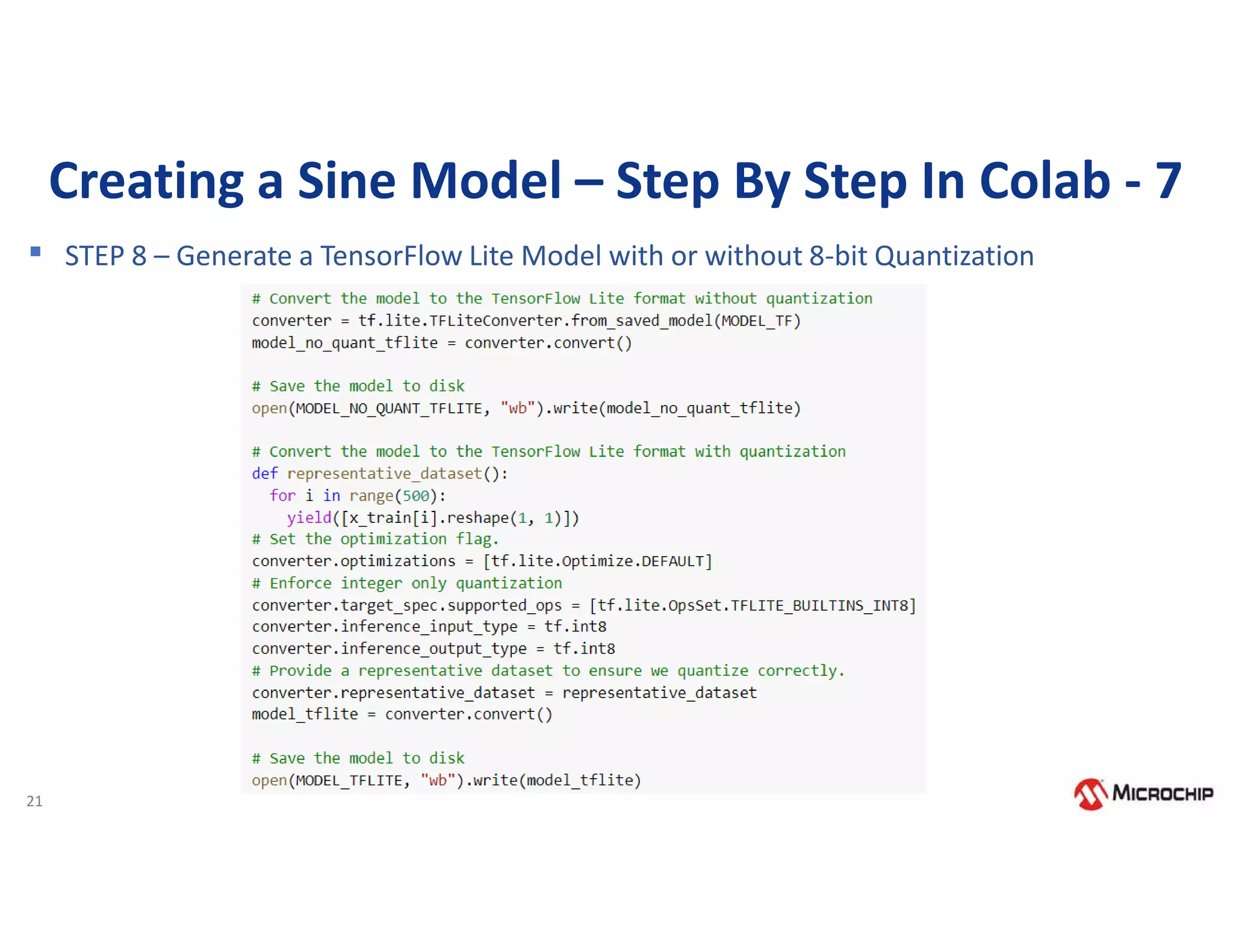

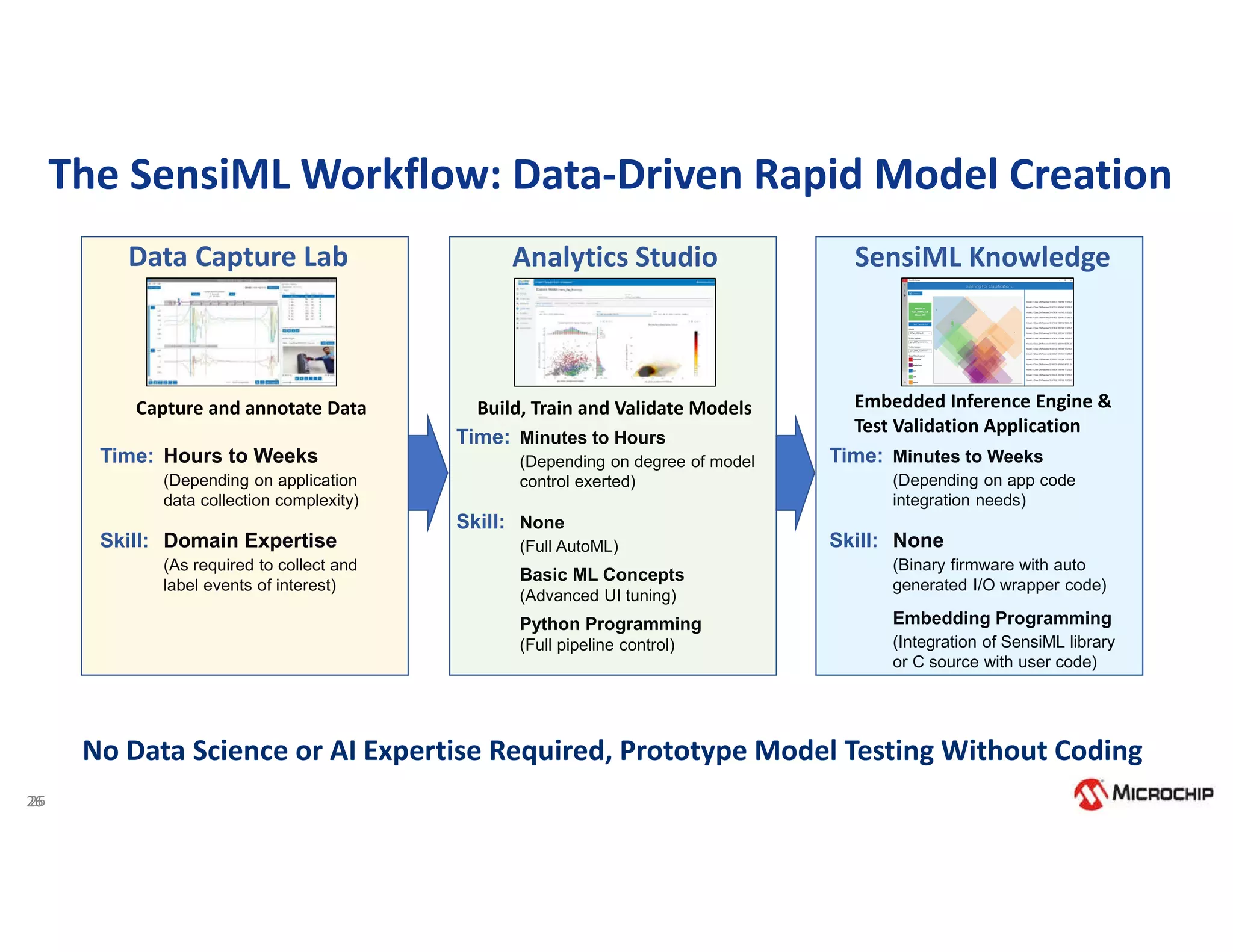

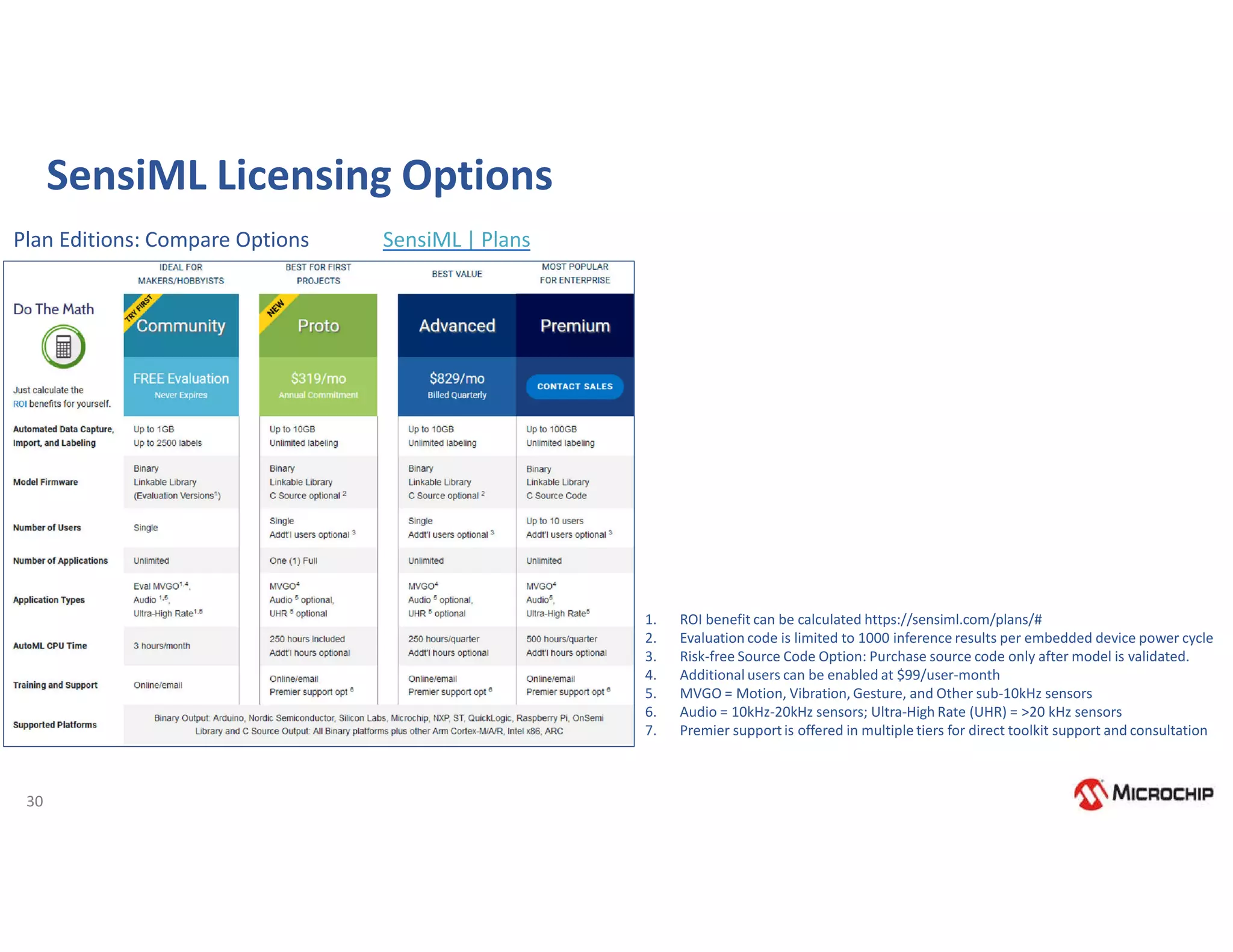

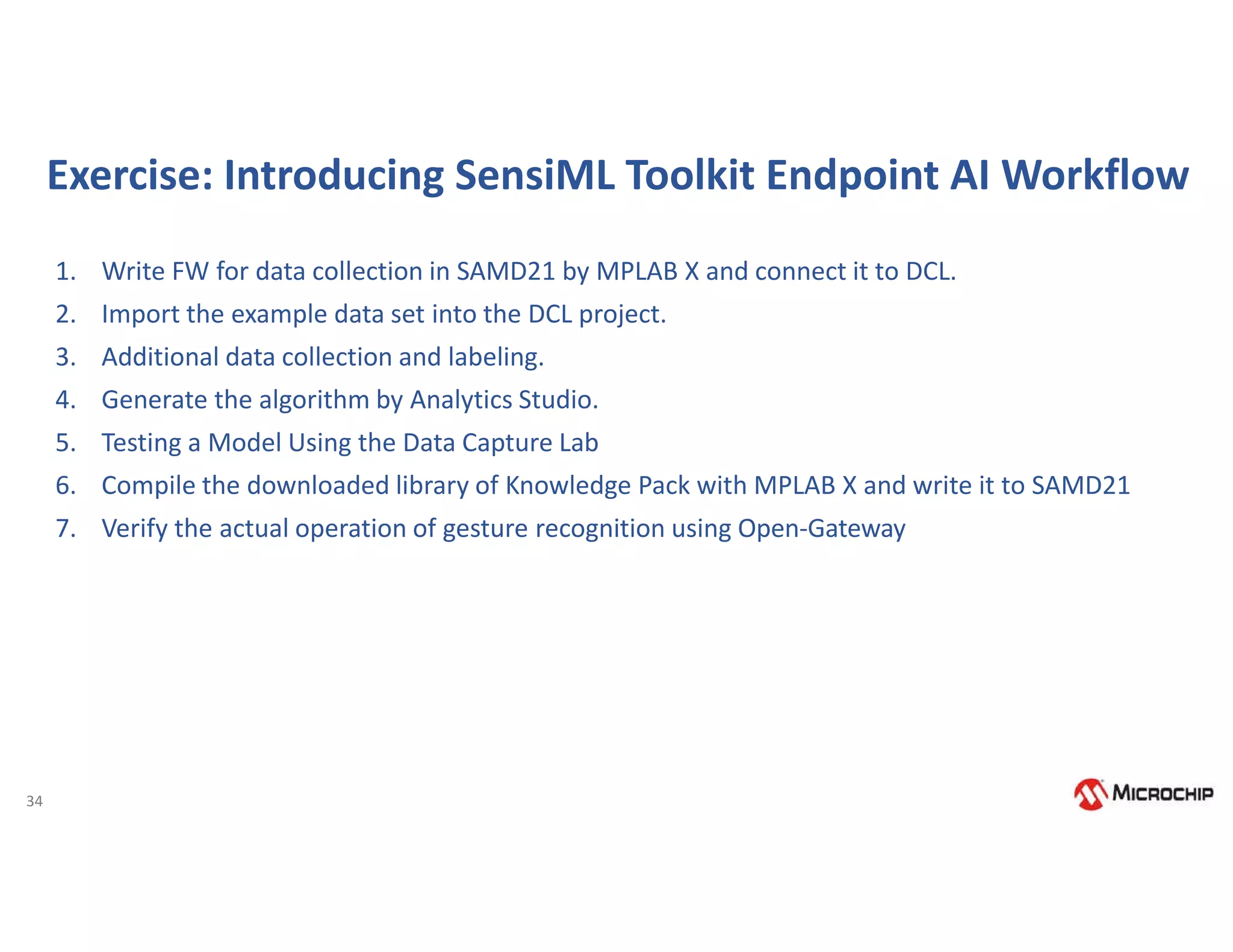

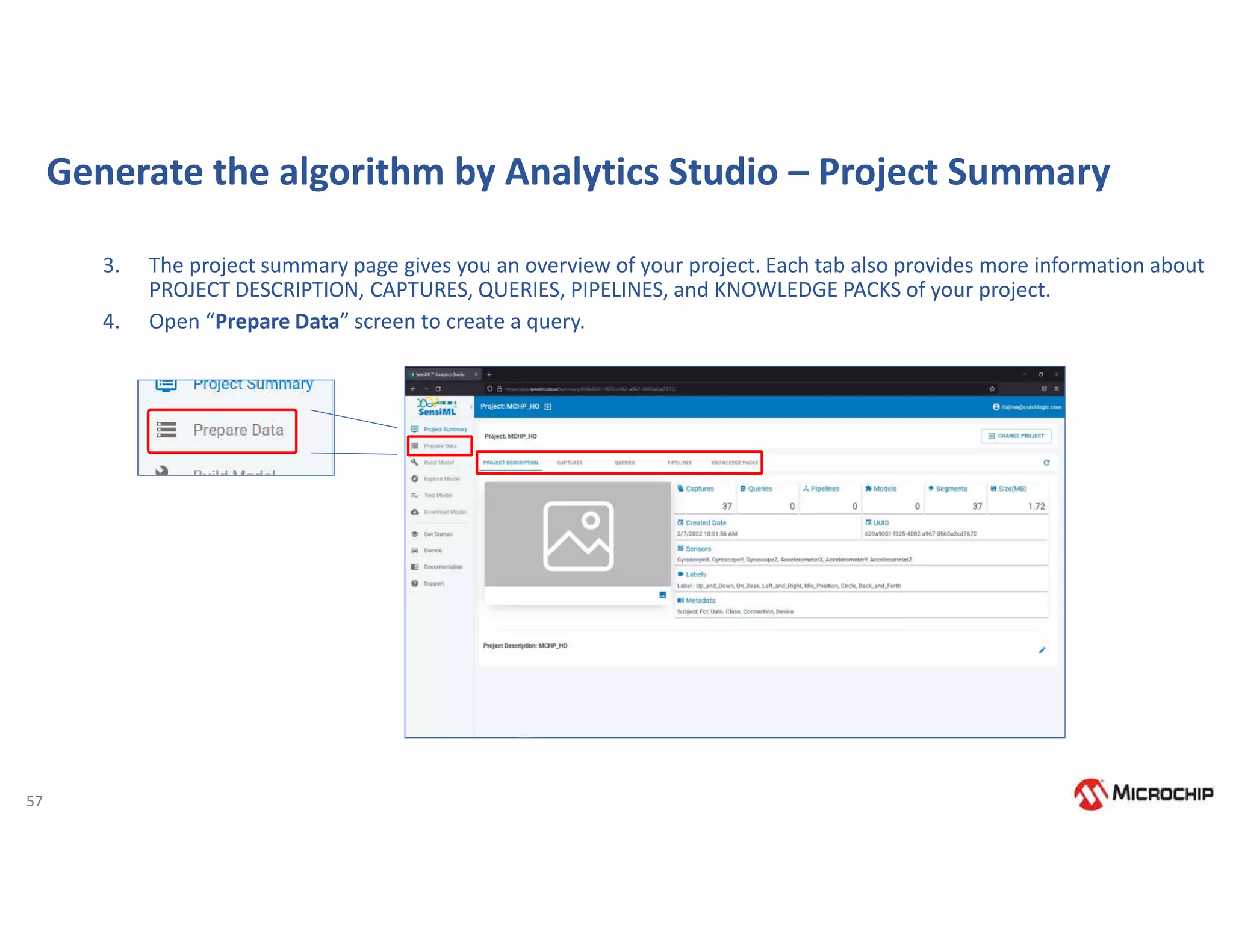

![58

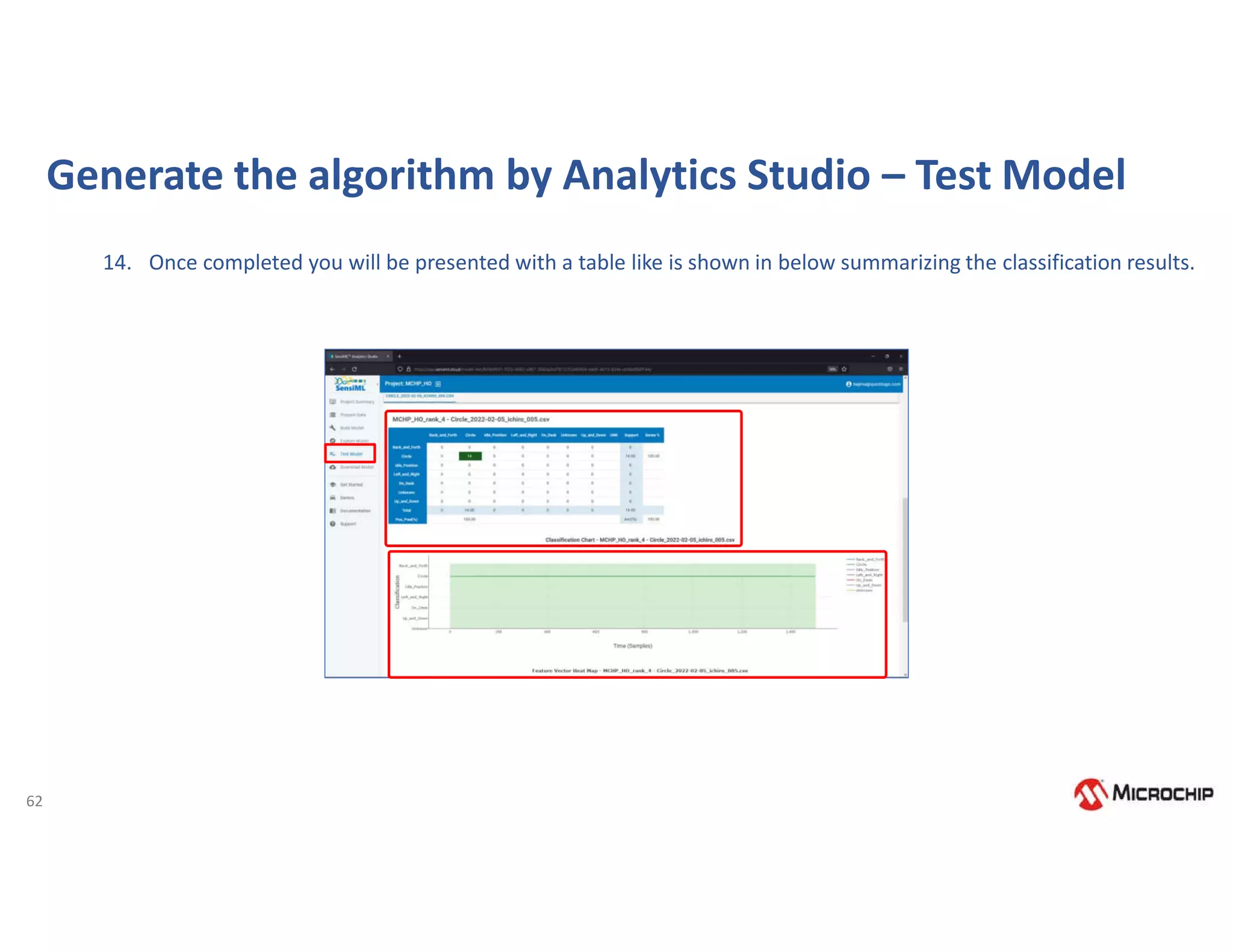

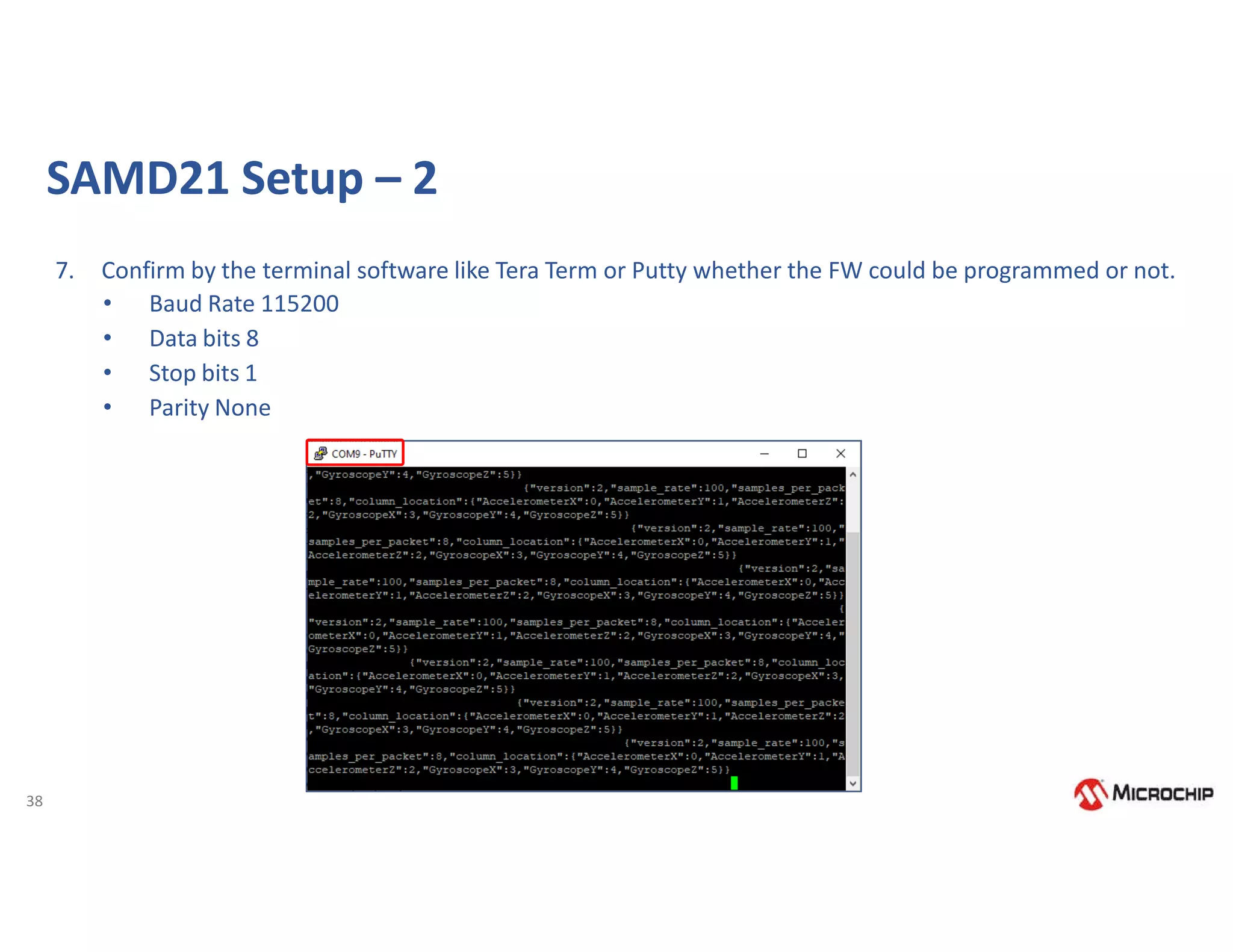

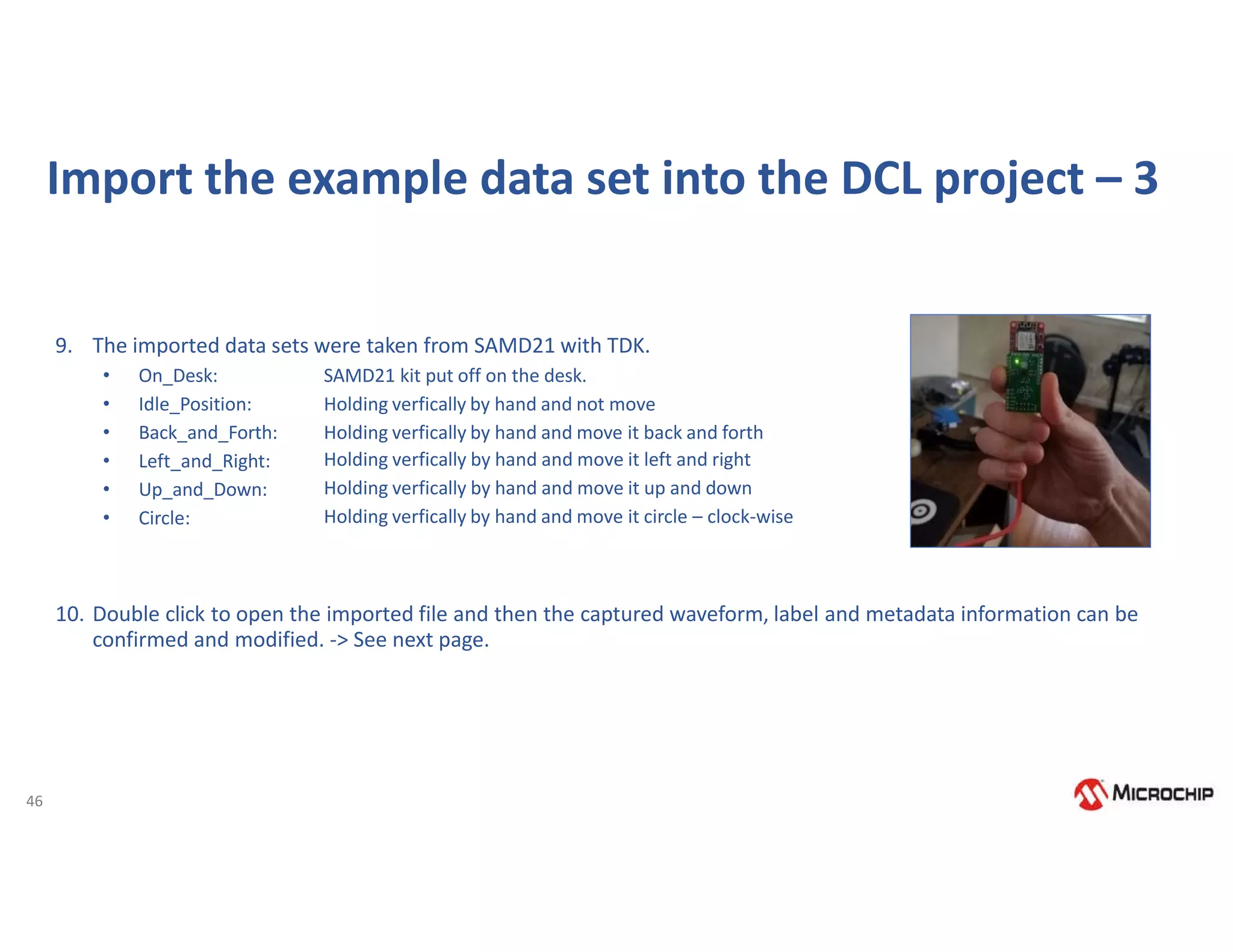

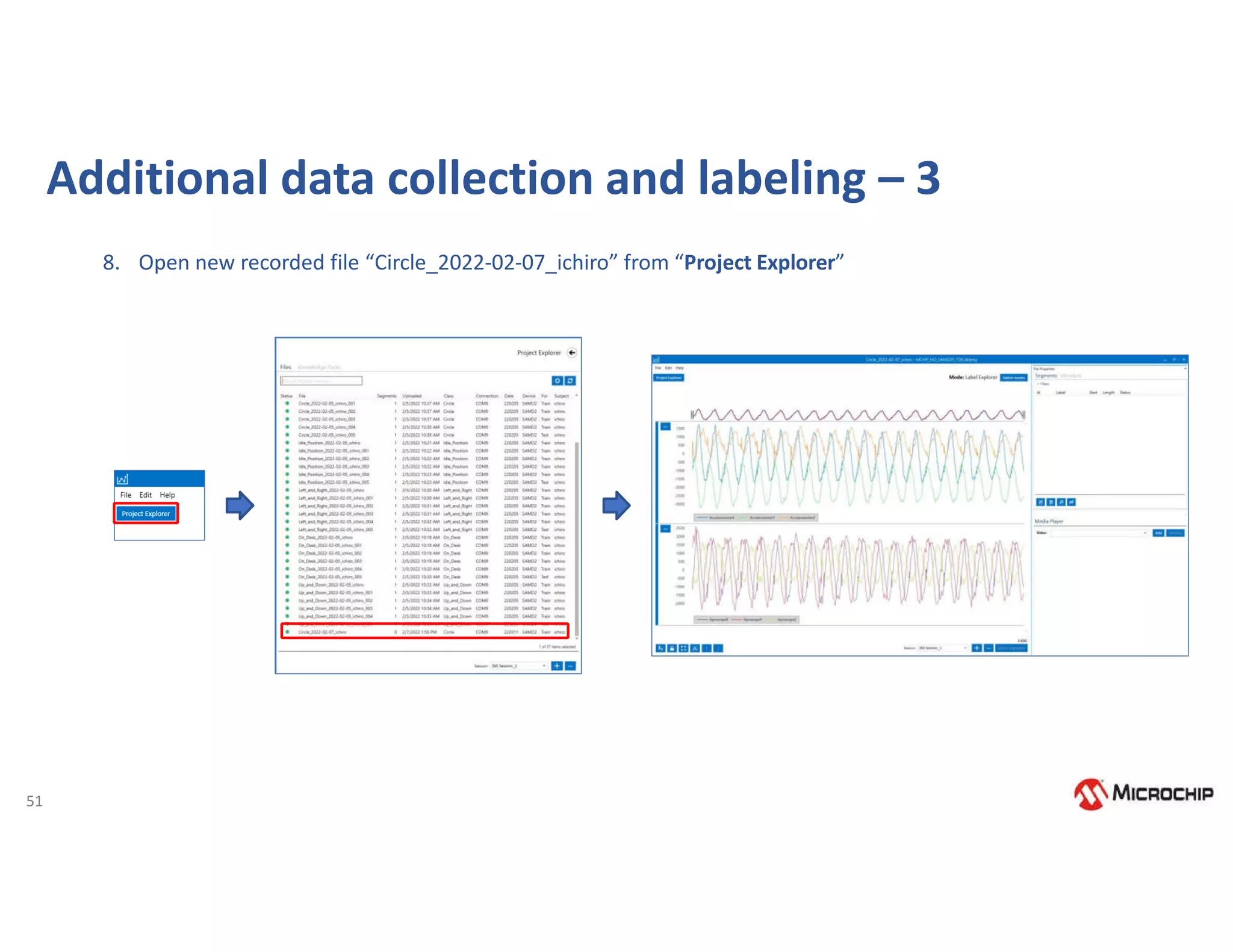

Generate the algorithm by Analytics Studio – Prepare Data

5. Querying Data

The query is used to select your sensor data from your project. If you need to filter out certain parts of your

sensor data based on metadata or labels, you can specify that here.

a) Query: MCHP_HO, (specify your unique name)

b) Session: Session_1, (session name was specified in DCL)

c) Label: Label, (you could select labels if you made other label(s) in DCL on same data)

d) Metadata: segment_uuid, For

(Differentiate the subset of captures that you want to work with for modeling)

e) Source: GyroscopeX, GyroscopeY, GyroscopeZ, AccelerometerX, AccelerometerY, AccelerometerZ

(You can select which sensor data use for modeling)

f) Query Filter: [For] in [train]

(File Metadata “train” will be used for modeling)

g) Plot: Segment

(Segment or Samples could be indicated)

6. Click “SAVE”

7. Click “Build Model” from the left menu](https://image.slidesharecdn.com/rodrigo16nov-221117175505-00a22b53/75/Webinar-Comecando-seus-trabalhos-com-Machine-Learning-utilizando-ferramentas-e-demoboards-da-Microchip-59-2048.jpg)
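To make the effect of these query settings concrete, the sketch below mimics them in plain Python. It does not use the SensiML API or Analytics Studio; the `QUERY` dictionary layout, the `run_query` and `make_segment` helpers, and the sample segments (UUIDs, label names, placeholder readings) are all hypothetical. Only the setting values (MCHP_HO, Session_1, Label, segment_uuid, For, the six sources, and the `[For] in [train]` filter) come from the steps above.

```python
# Query settings from the slide, stored in an assumed dictionary layout.
QUERY = {
    "name": "MCHP_HO",
    "session": "Session_1",
    "label_column": "Label",
    "metadata_columns": ["segment_uuid", "For"],
    "sources": [
        "GyroscopeX", "GyroscopeY", "GyroscopeZ",
        "AccelerometerX", "AccelerometerY", "AccelerometerZ",
    ],
    "filter": {"column": "For", "values": ["train"]},  # [For] in [train]
}


def run_query(segments, query):
    """Keep segments from the chosen session that pass the metadata filter,
    returning only the source, metadata, and label columns."""
    flt = query["filter"]
    keep = query["sources"] + query["metadata_columns"] + [query["label_column"]]
    selected = []
    for seg in segments:
        if seg["session"] != query["session"]:
            continue
        if seg[flt["column"]] not in flt["values"]:
            continue
        selected.append({key: seg[key] for key in keep})
    return selected


def make_segment(uuid, label, subset, session="Session_1"):
    """Build a hypothetical labeled segment with placeholder sensor samples."""
    seg = {"segment_uuid": uuid, "Label": label, "For": subset, "session": session}
    seg.update({name: [0, 0, 0] for name in QUERY["sources"]})
    return seg


# Hypothetical data: one training segment, one test segment, one other session.
SEGMENTS = [
    make_segment("a1", "gesture_a", "train"),
    make_segment("b2", "gesture_b", "test"),
    make_segment("c3", "gesture_a", "train", session="Session_2"),
]

rows = run_query(SEGMENTS, QUERY)
print([row["segment_uuid"] for row in rows])  # ['a1']
```

Only segment `a1` survives: `b2` is excluded by the `For` filter and `c3` belongs to a different session, which mirrors how the train subset is isolated for modeling.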