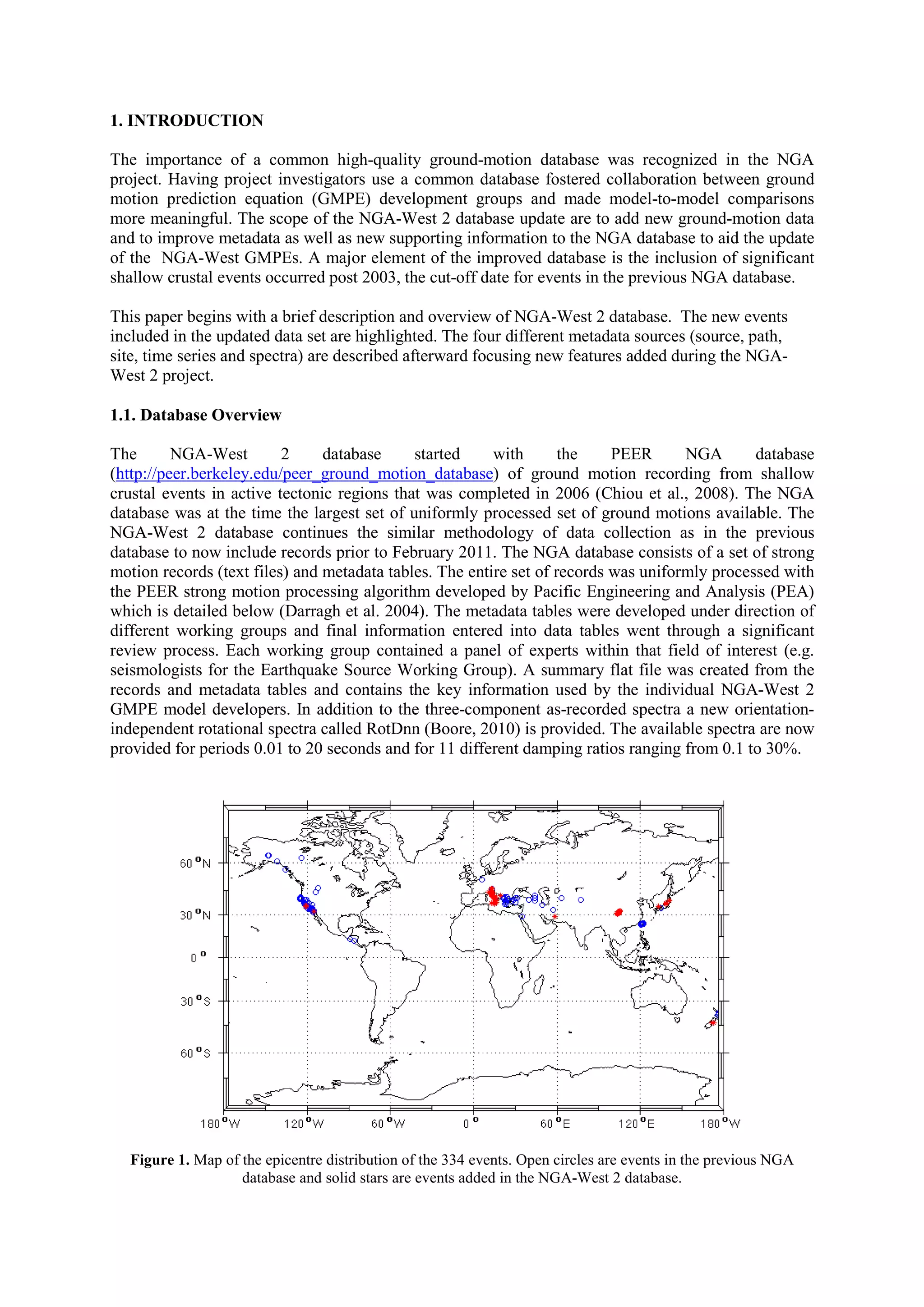

The NGA-West 2 project expands the existing NGA ground motion database to include over 8,600 strong-motion records from 334 shallow crustal earthquakes worldwide, recorded between 2003 and 2011. This more than doubles the size of the original NGA database. Extensive new metadata on earthquake sources, propagation paths, and site conditions were collected. In addition, all time series were reprocessed using a uniform methodology, and new orientation-independent response spectra were calculated. Researchers will use the updated database to develop revised ground motion prediction equations as part of the project.