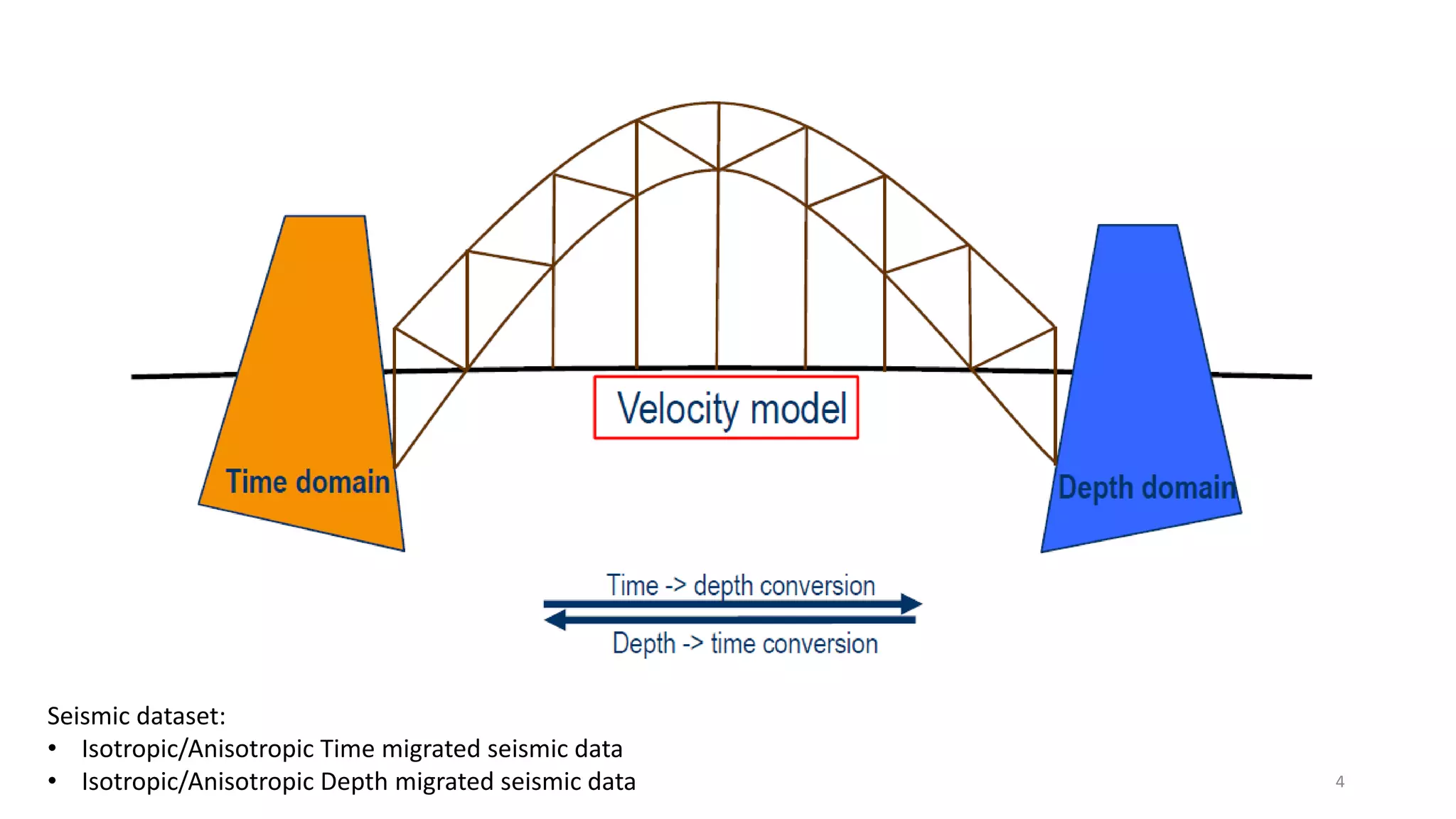

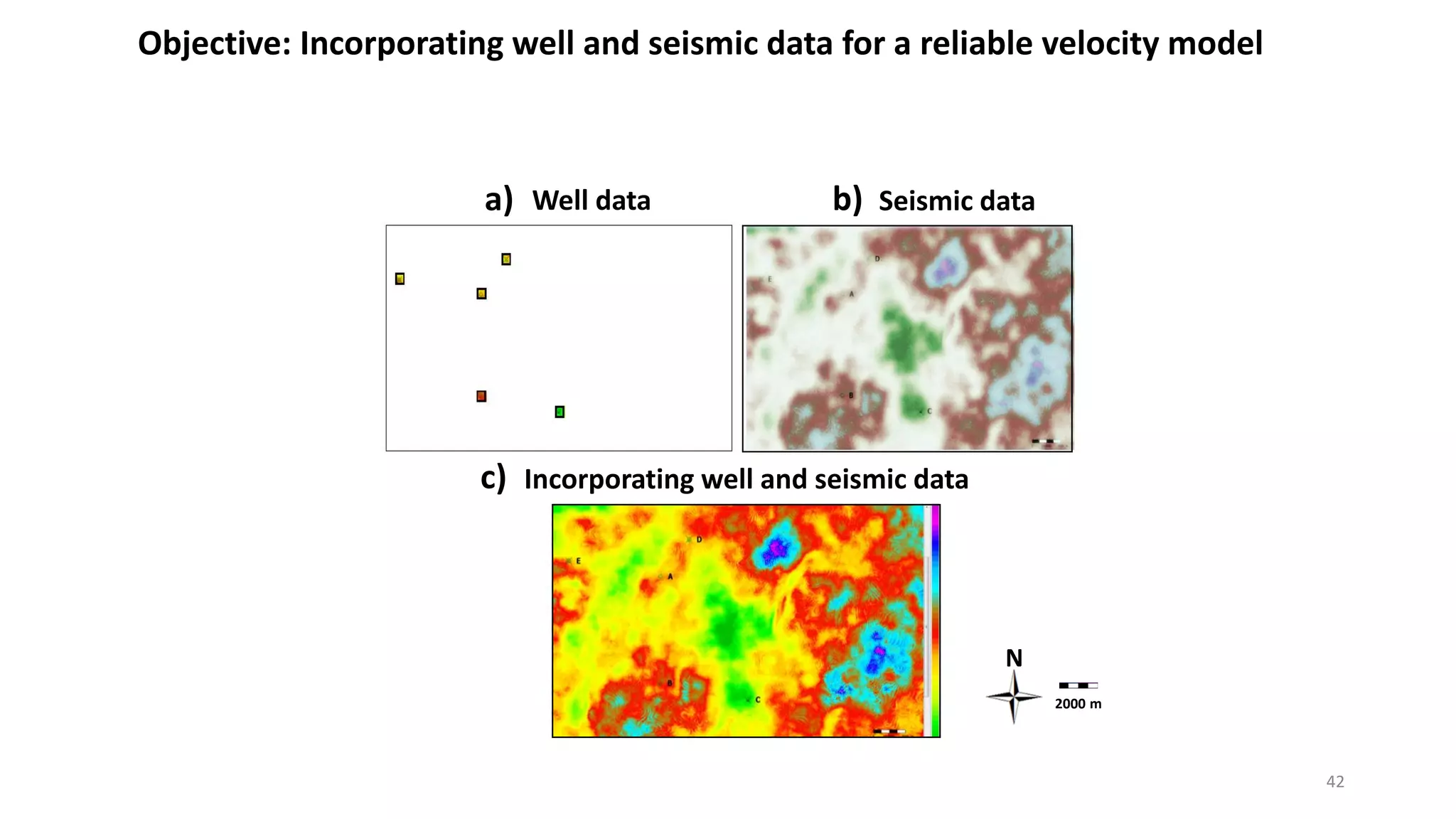

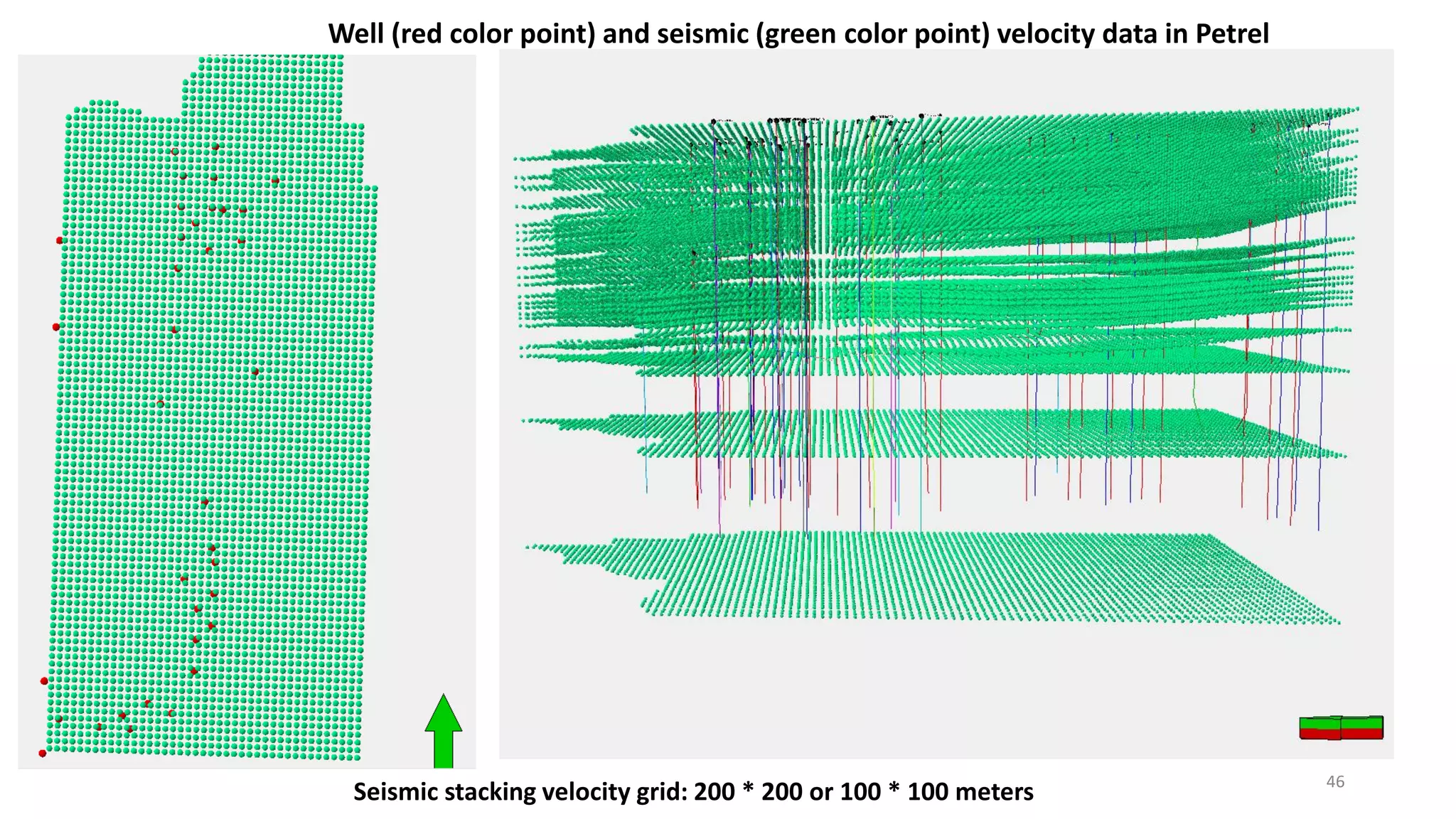

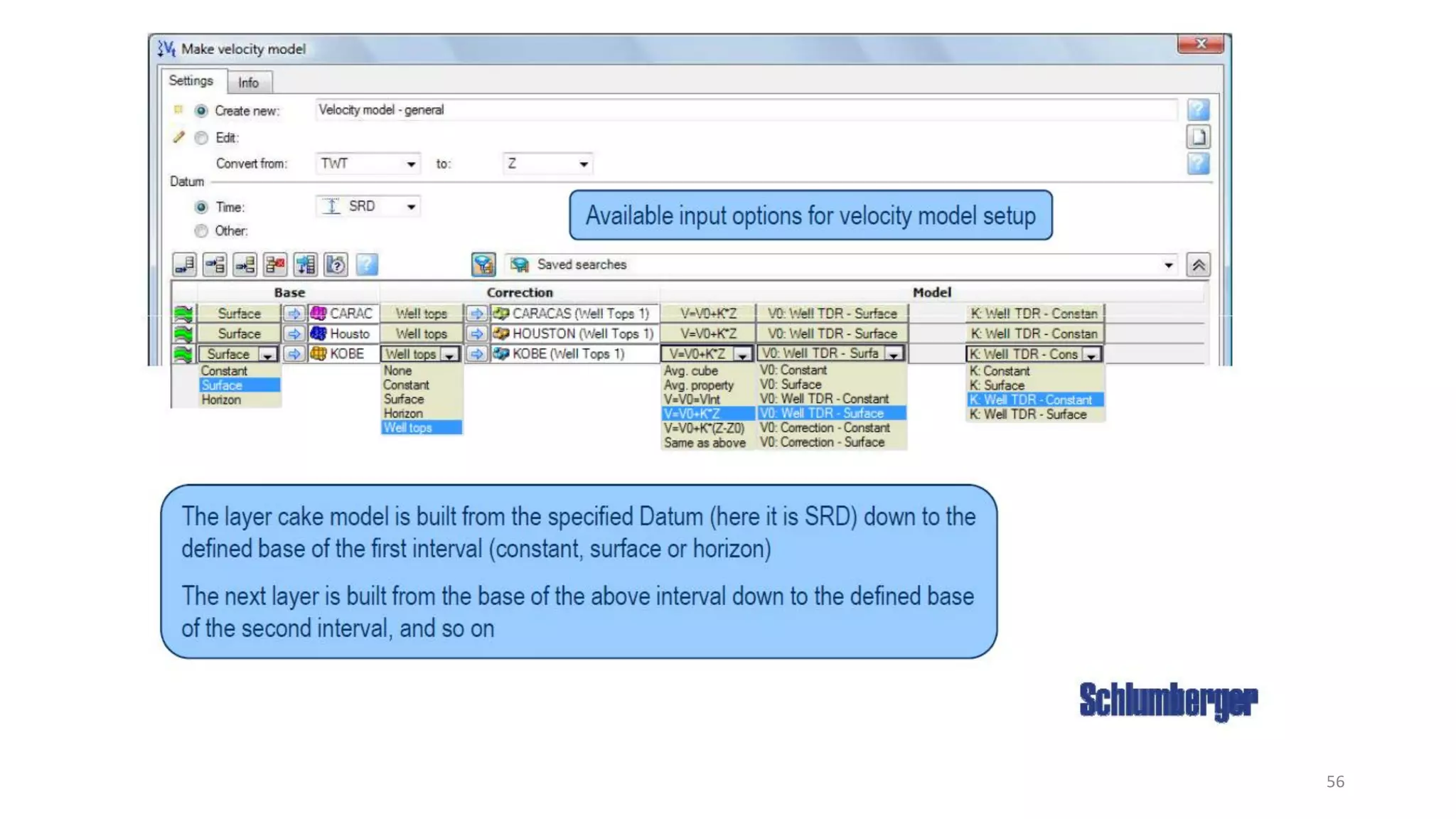

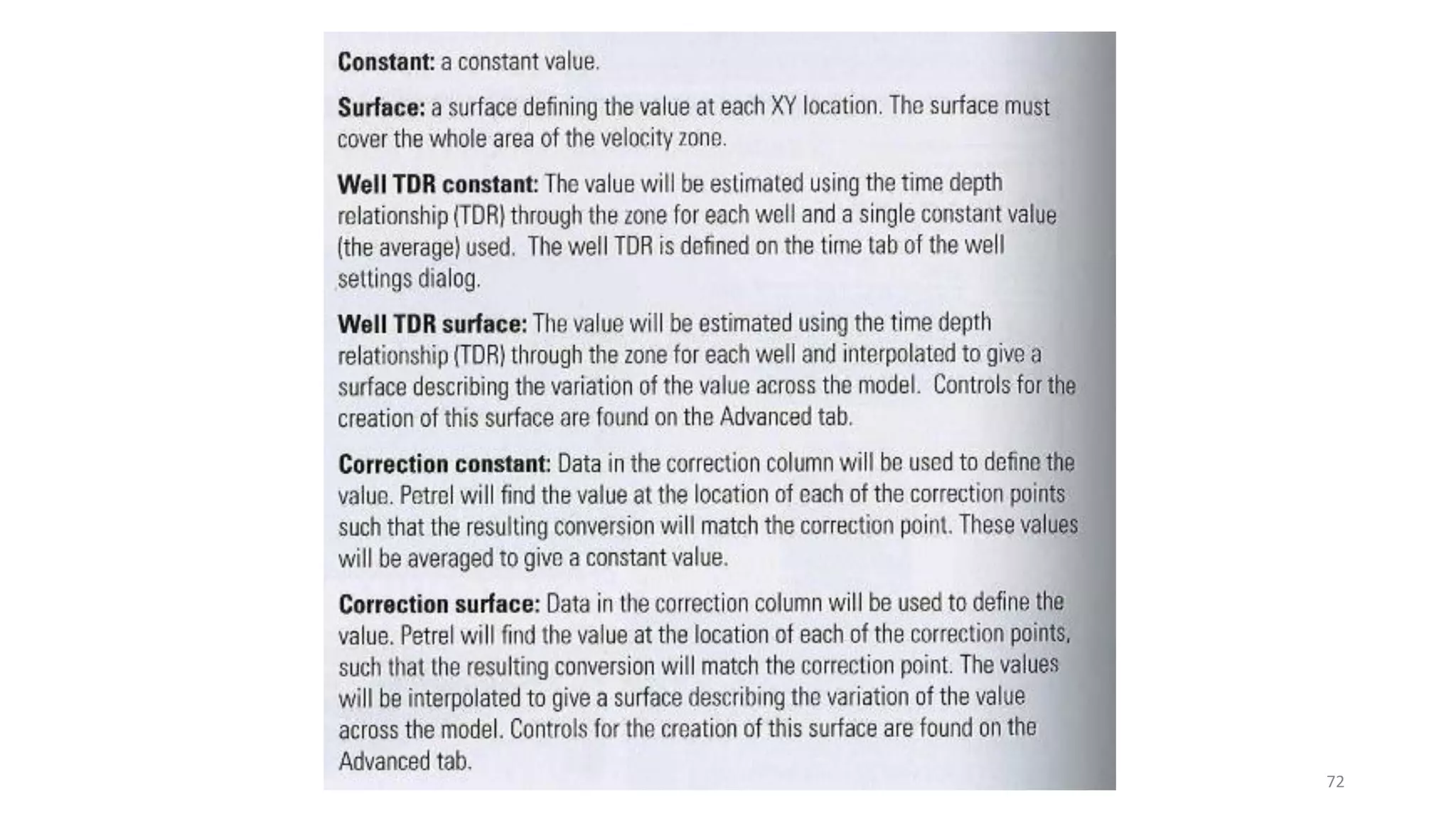

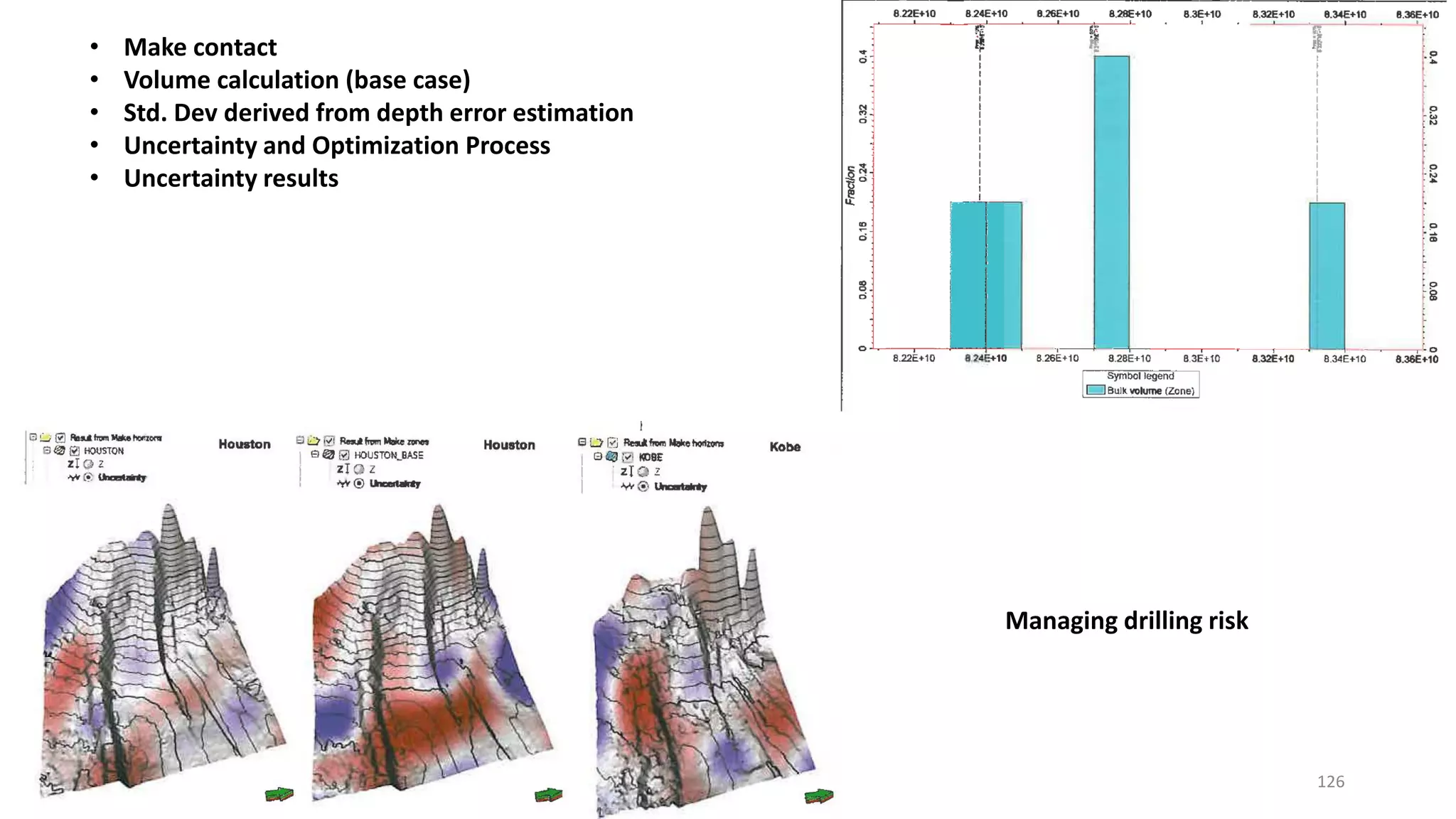

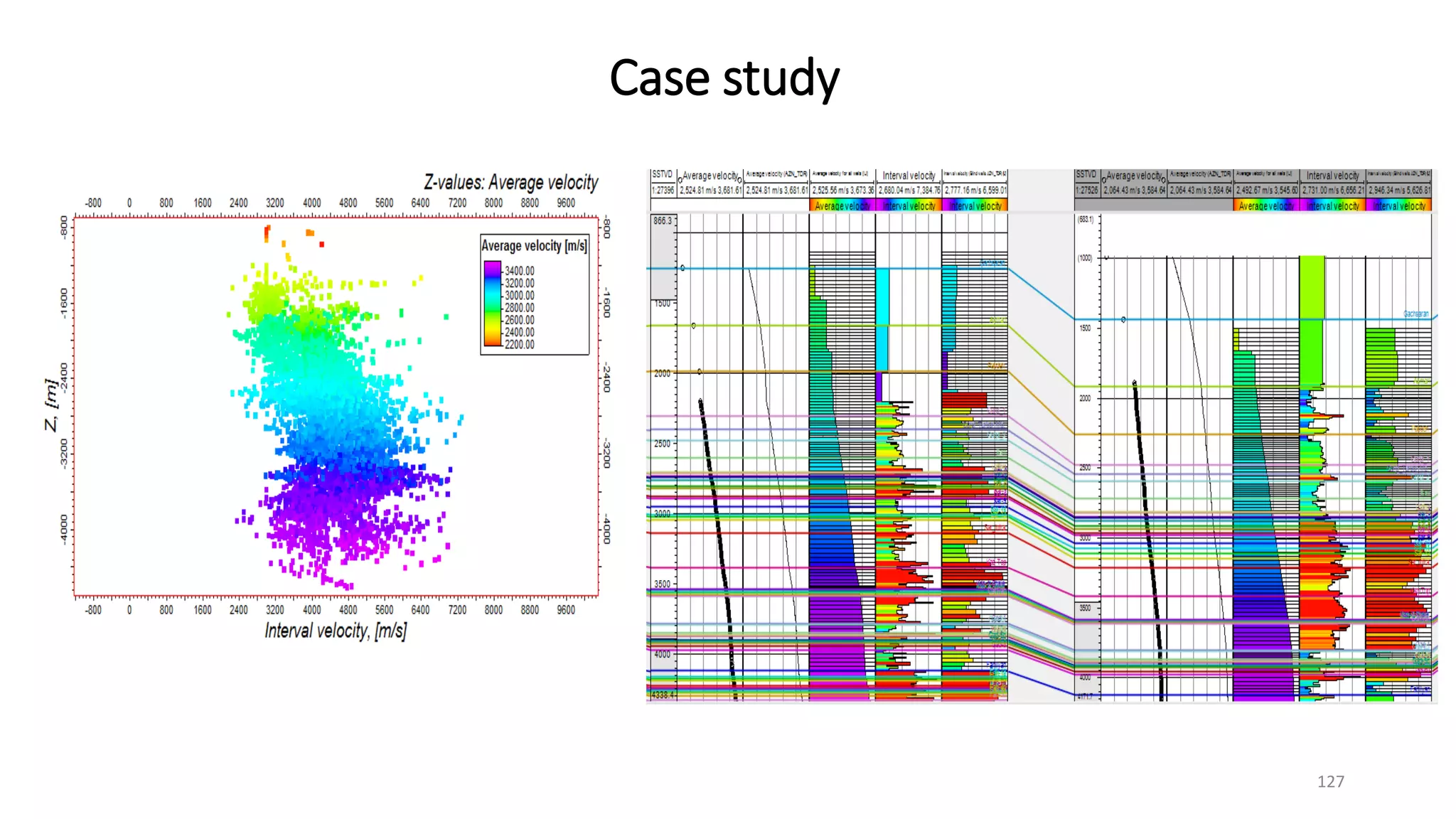

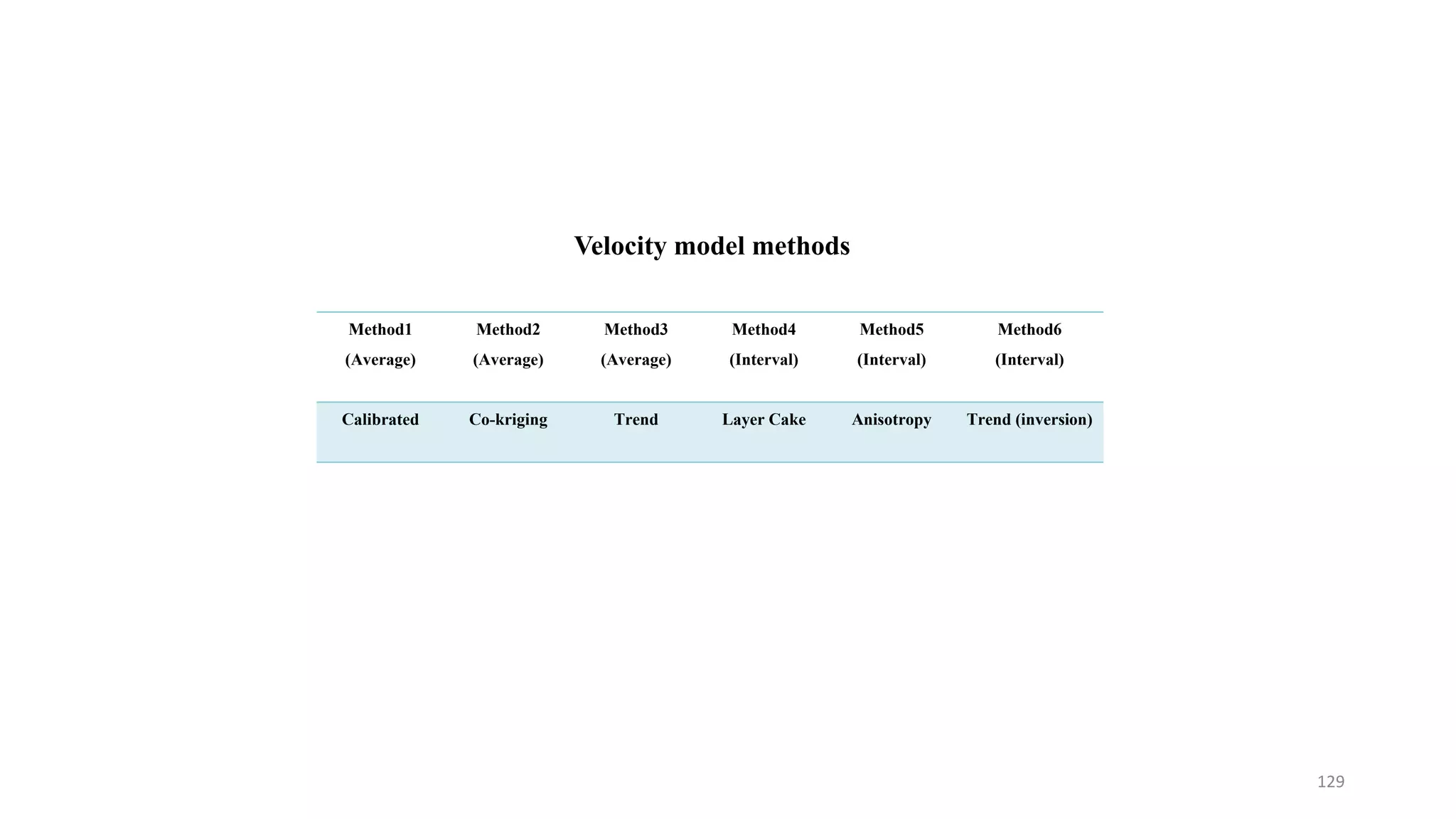

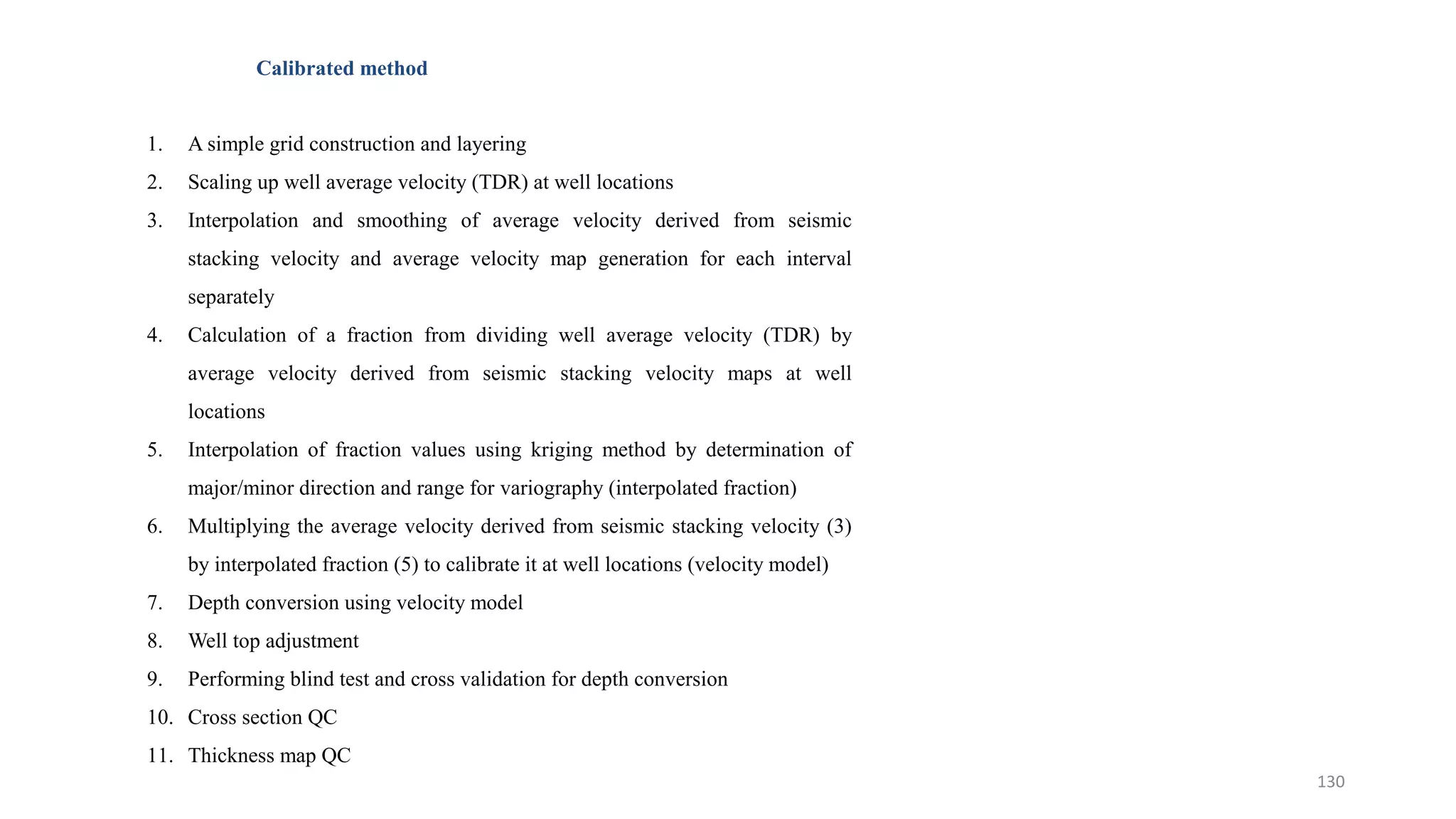

1. The document discusses various methods for building velocity models in Petrel software using well and seismic data, including average velocity, layer cake, and anisotropic approaches.

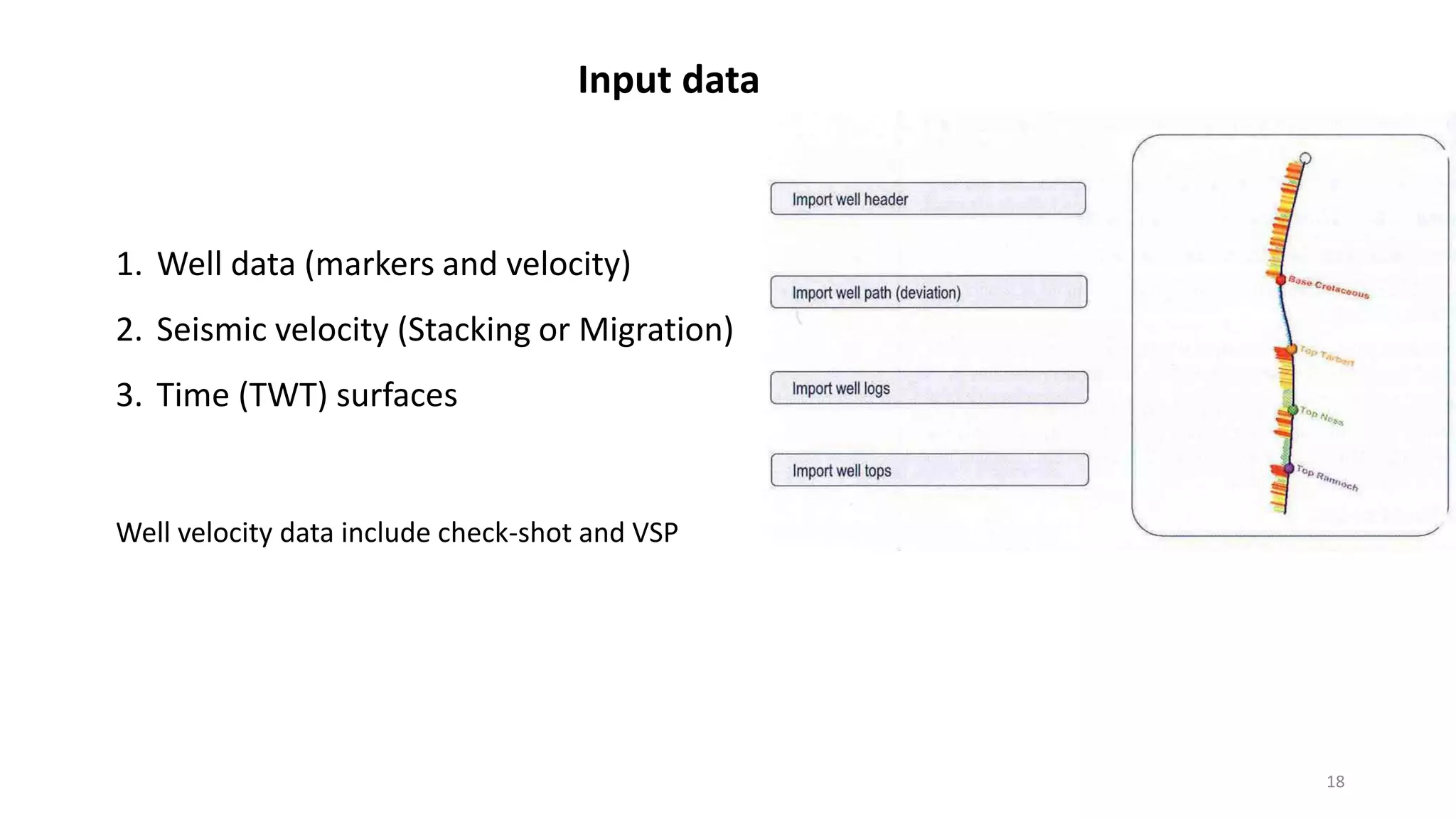

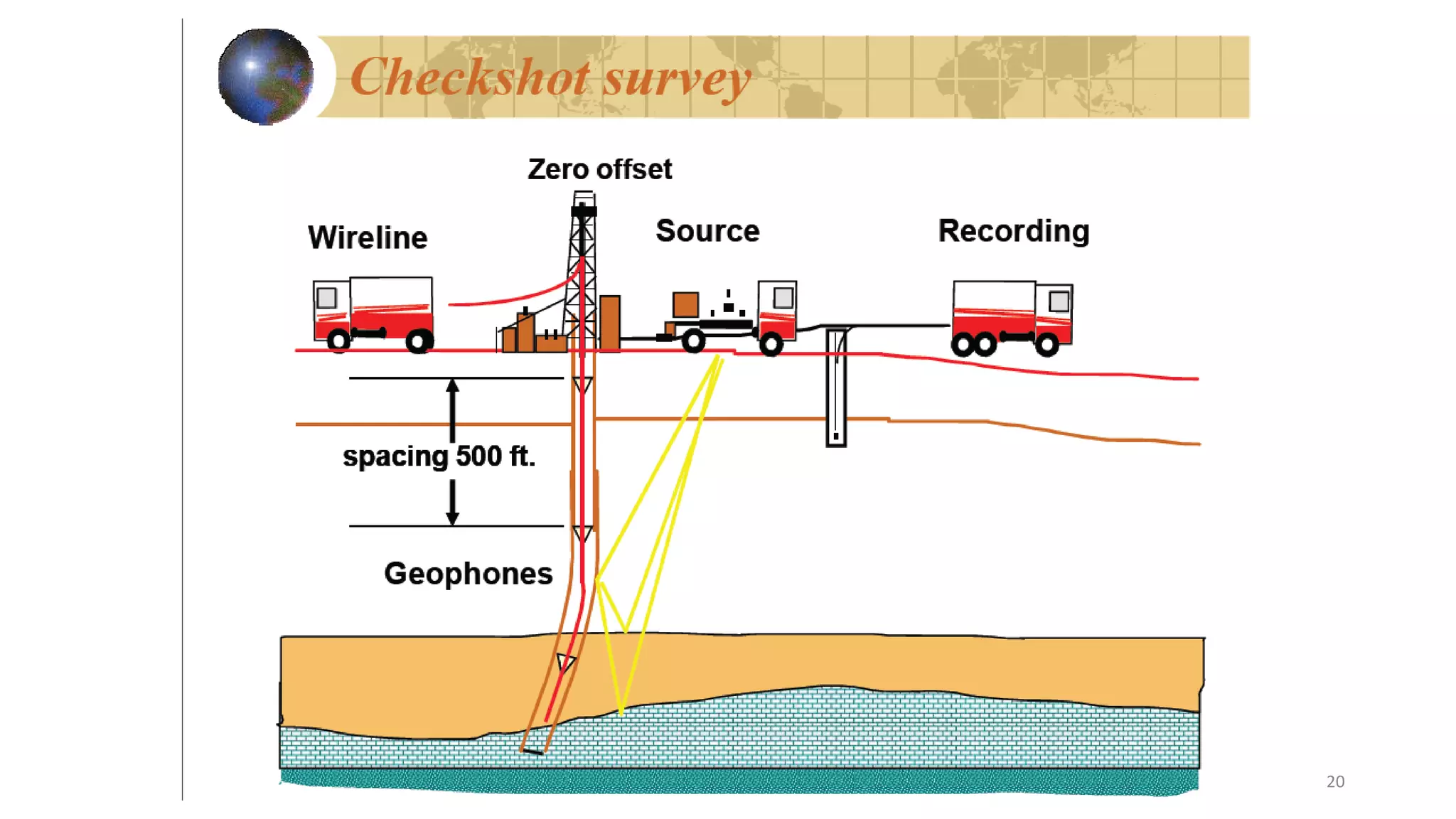

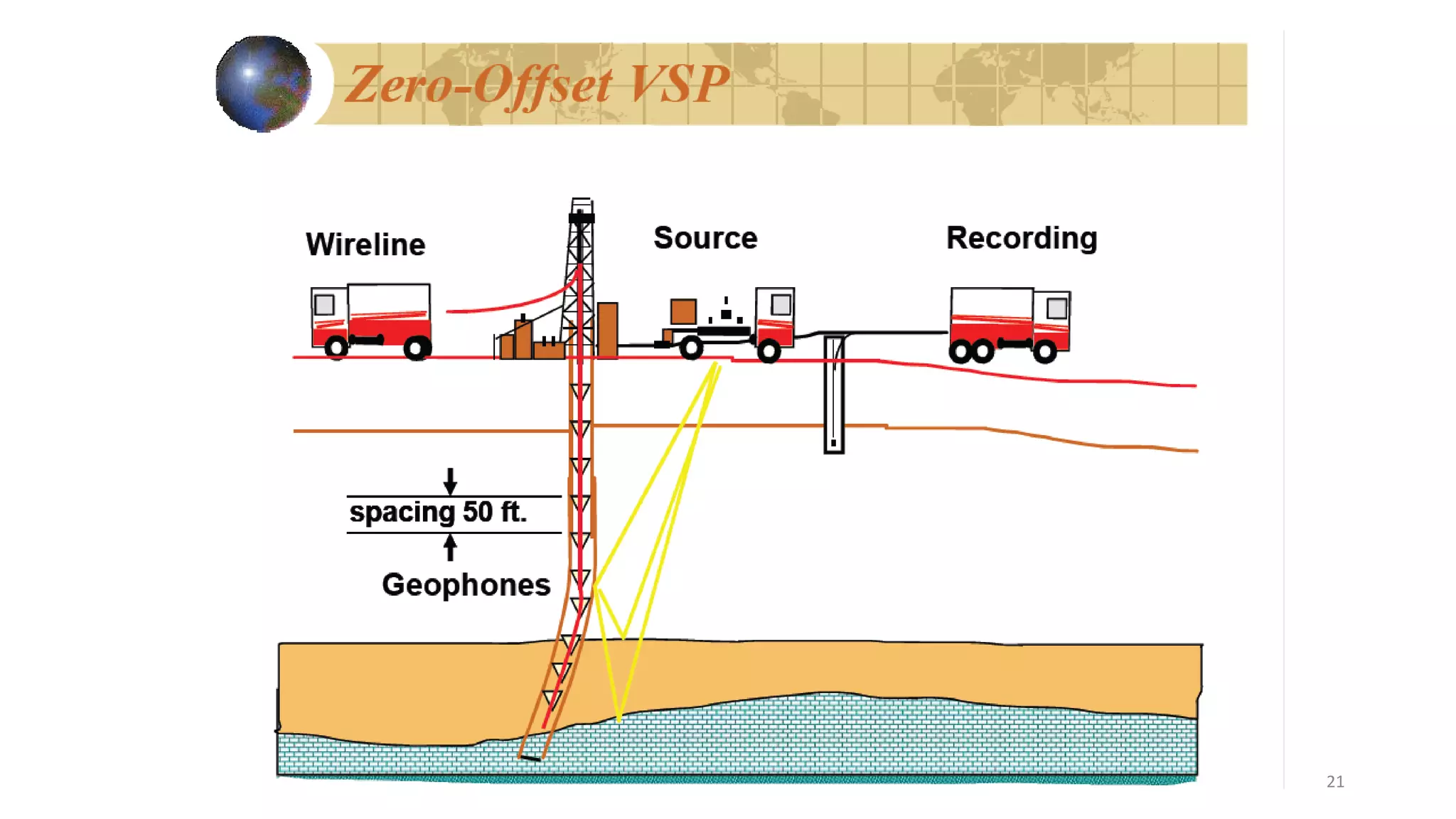

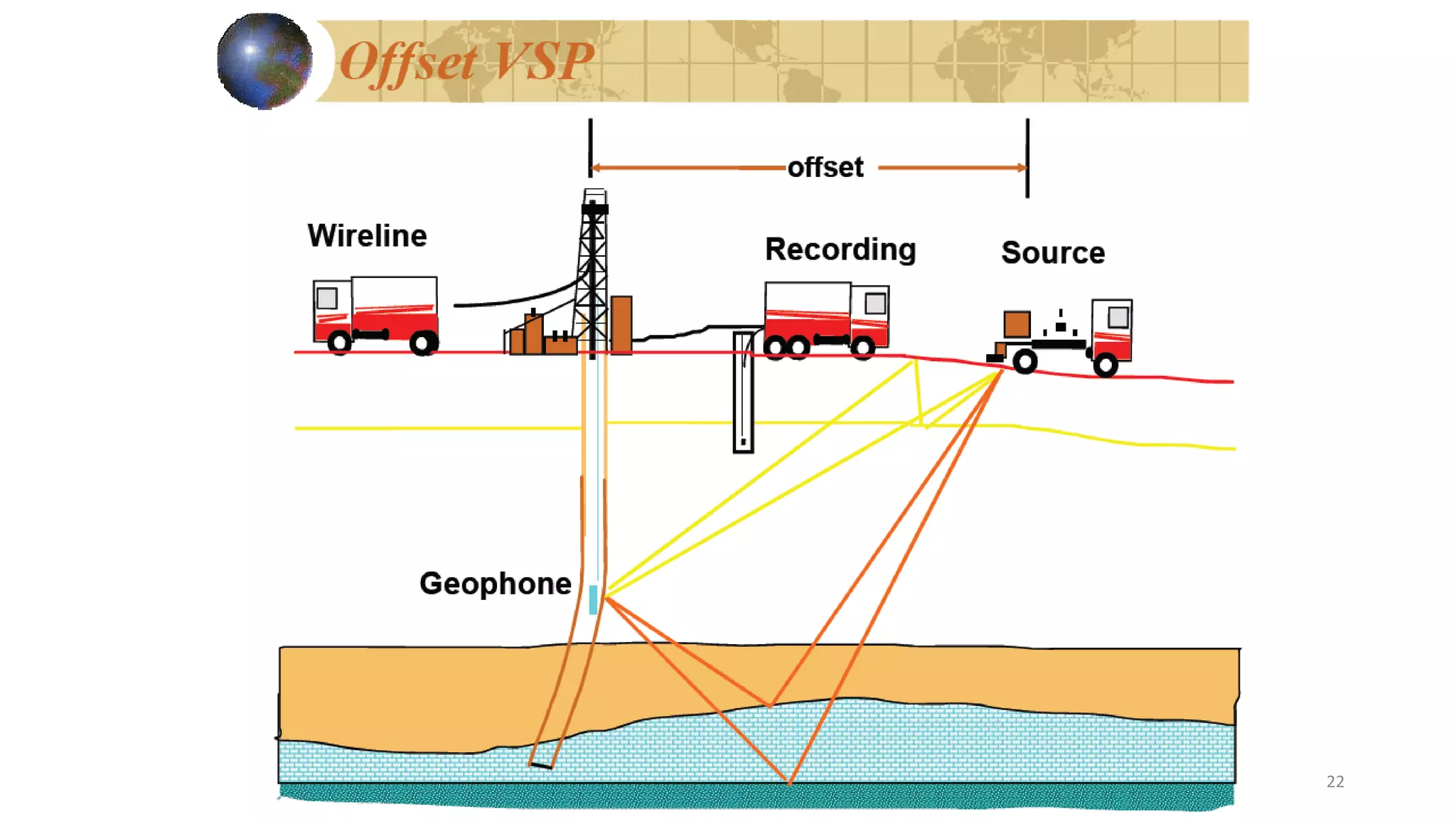

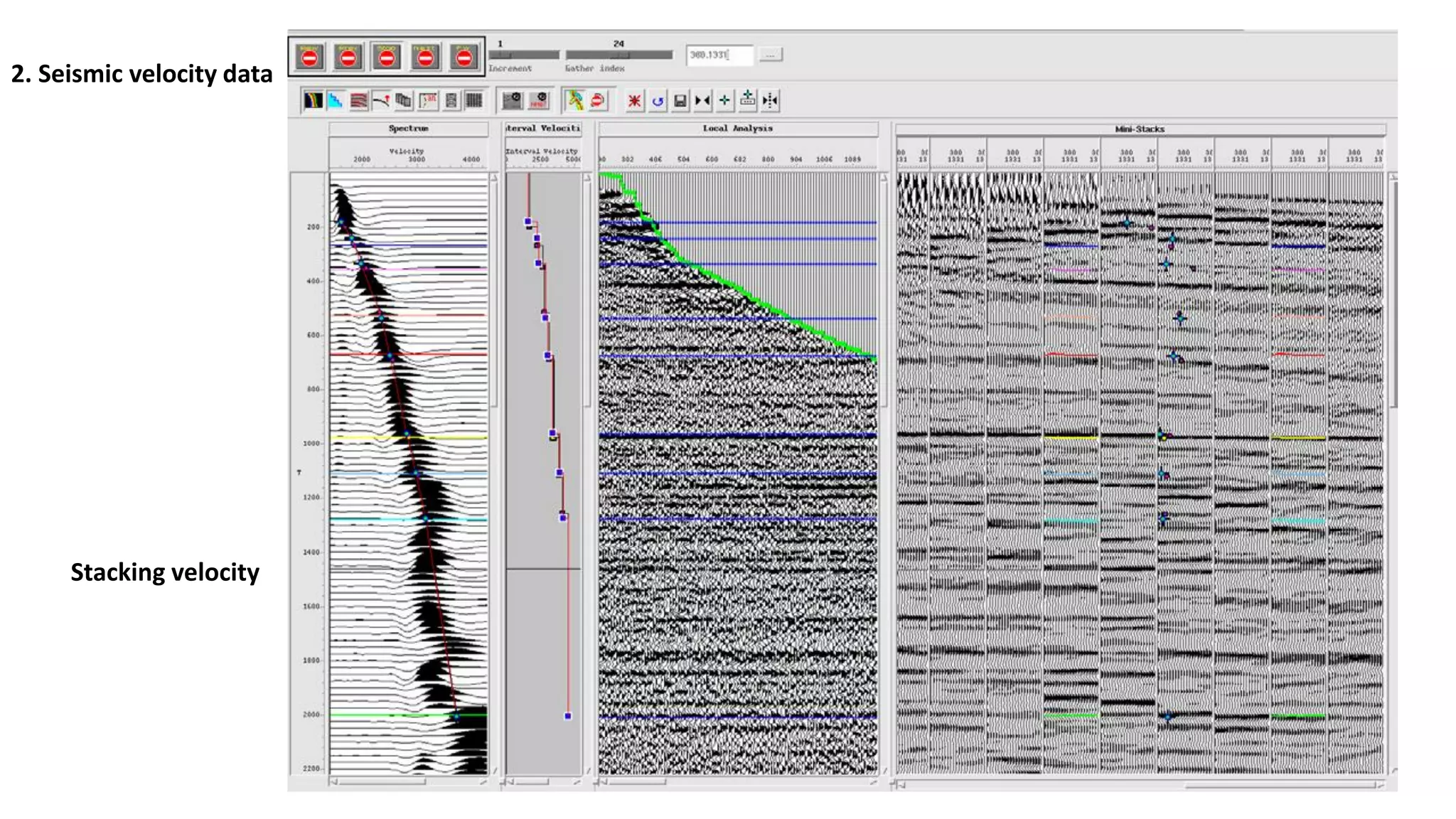

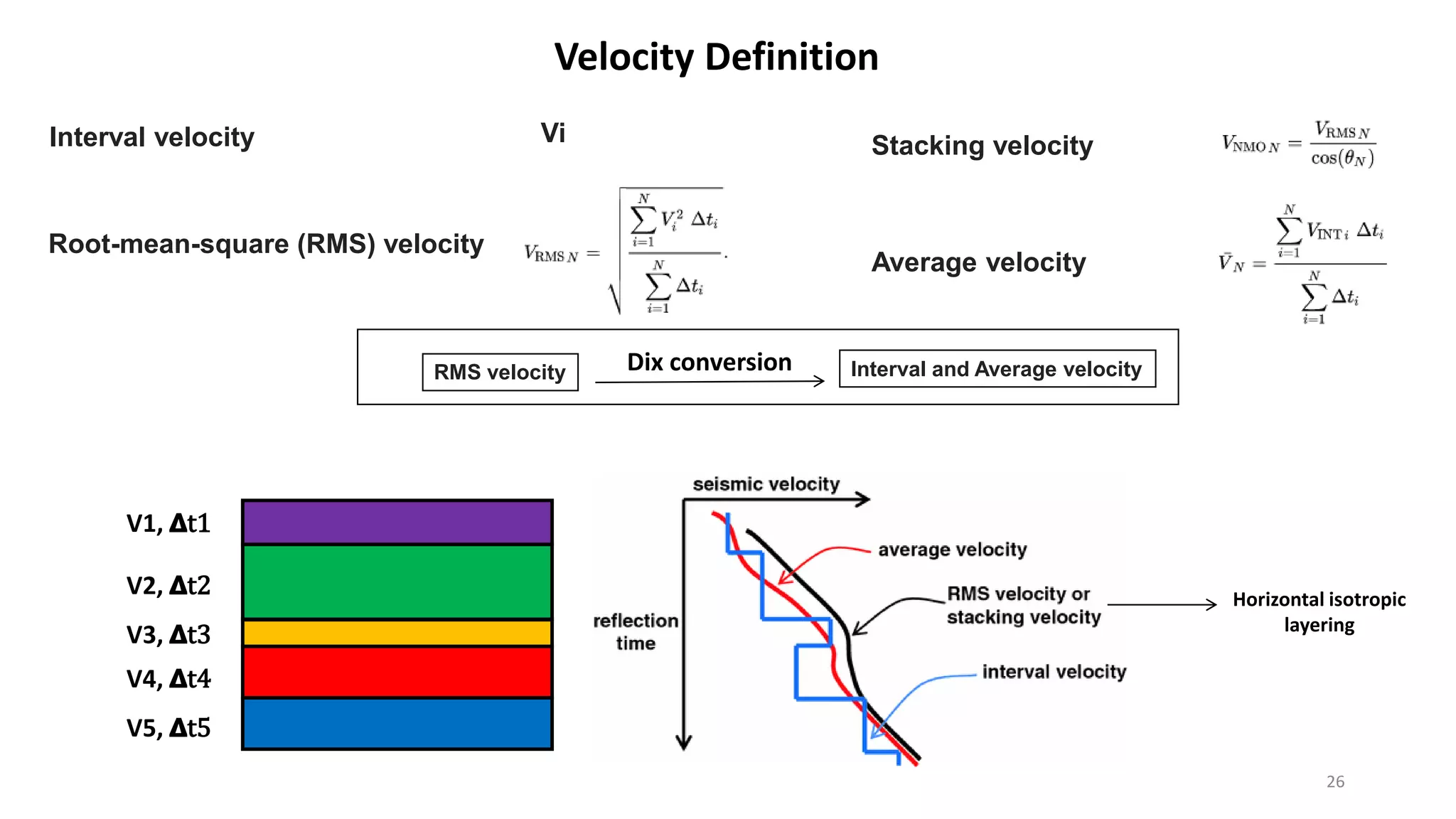

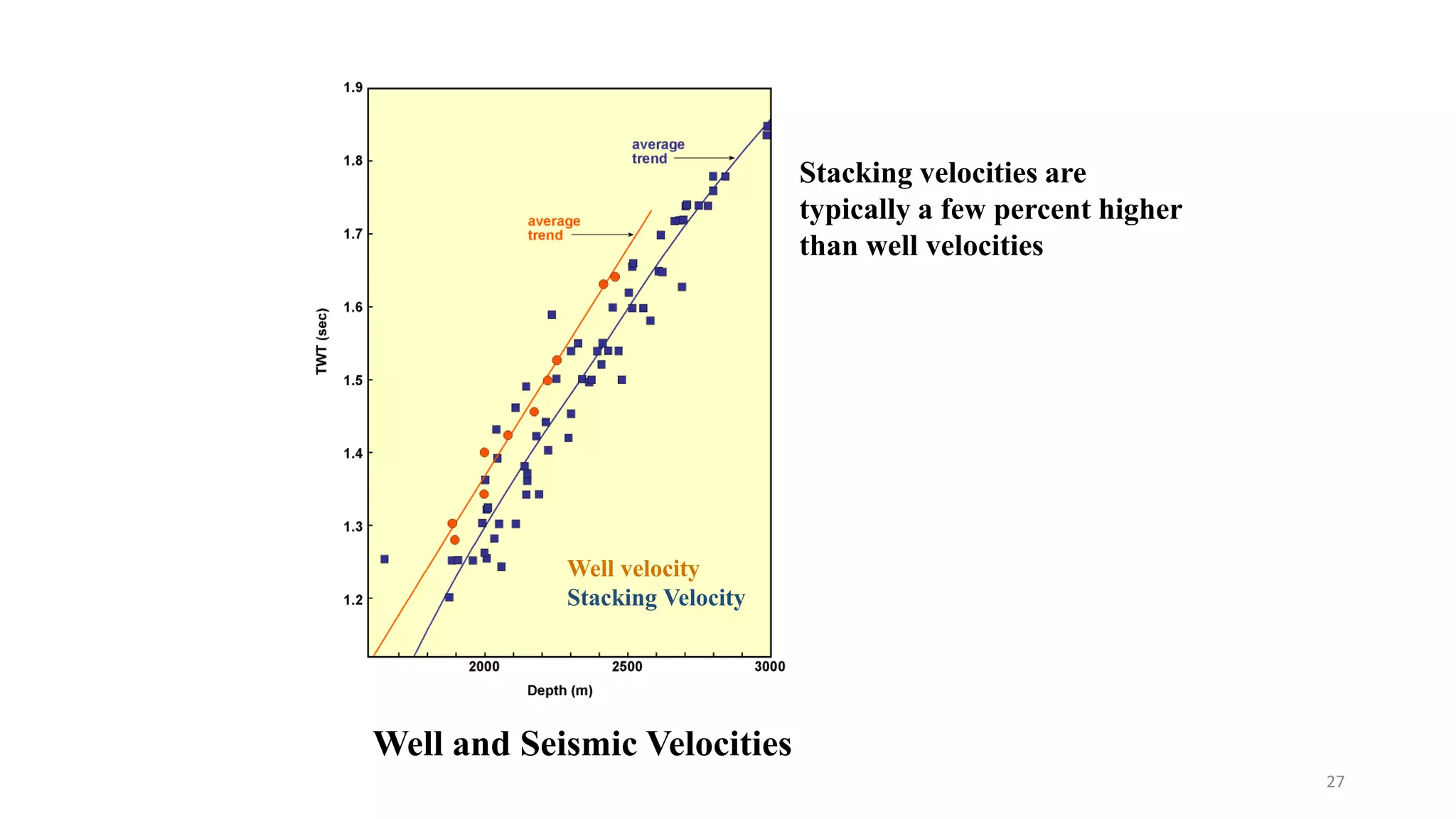

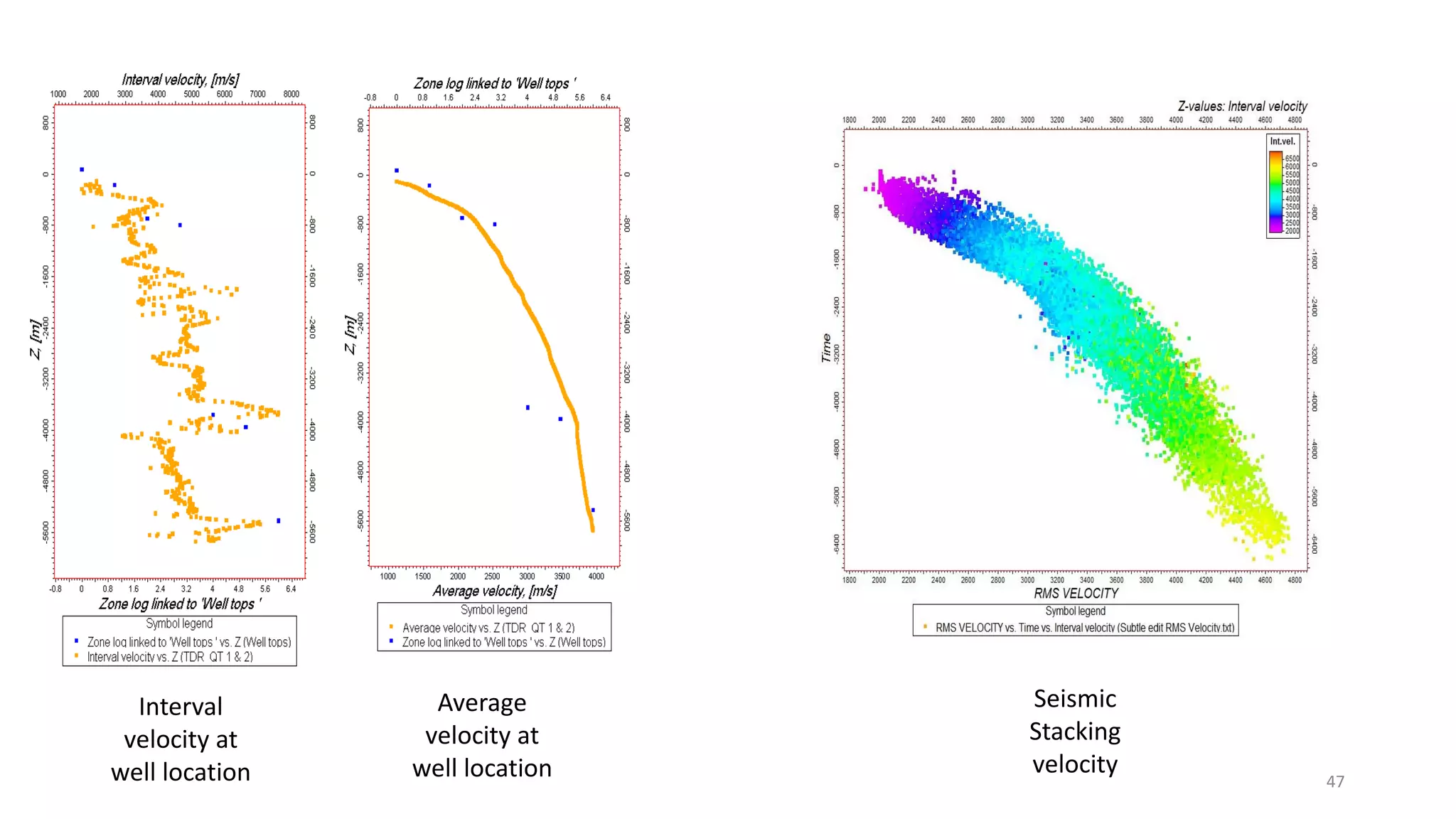

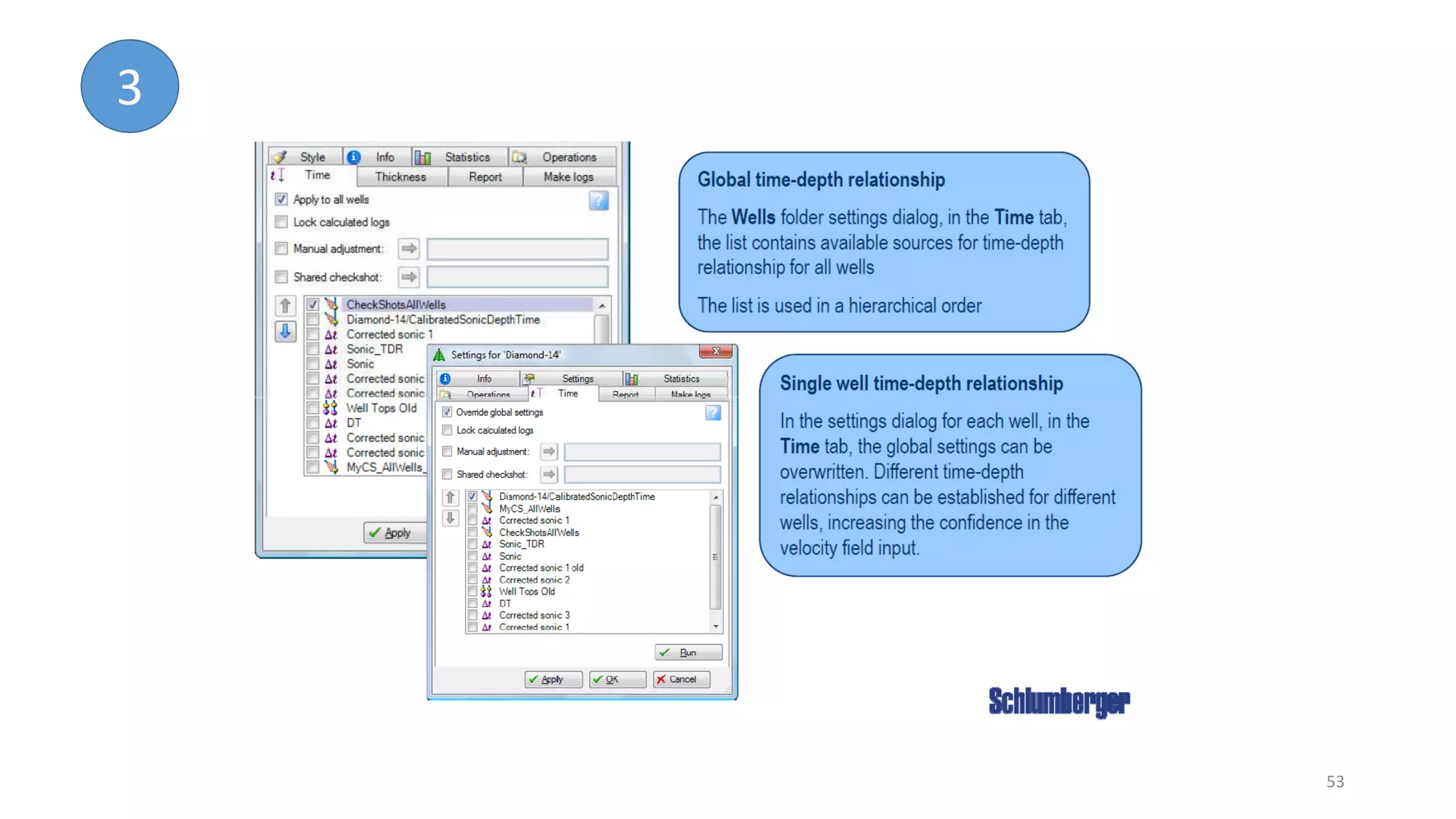

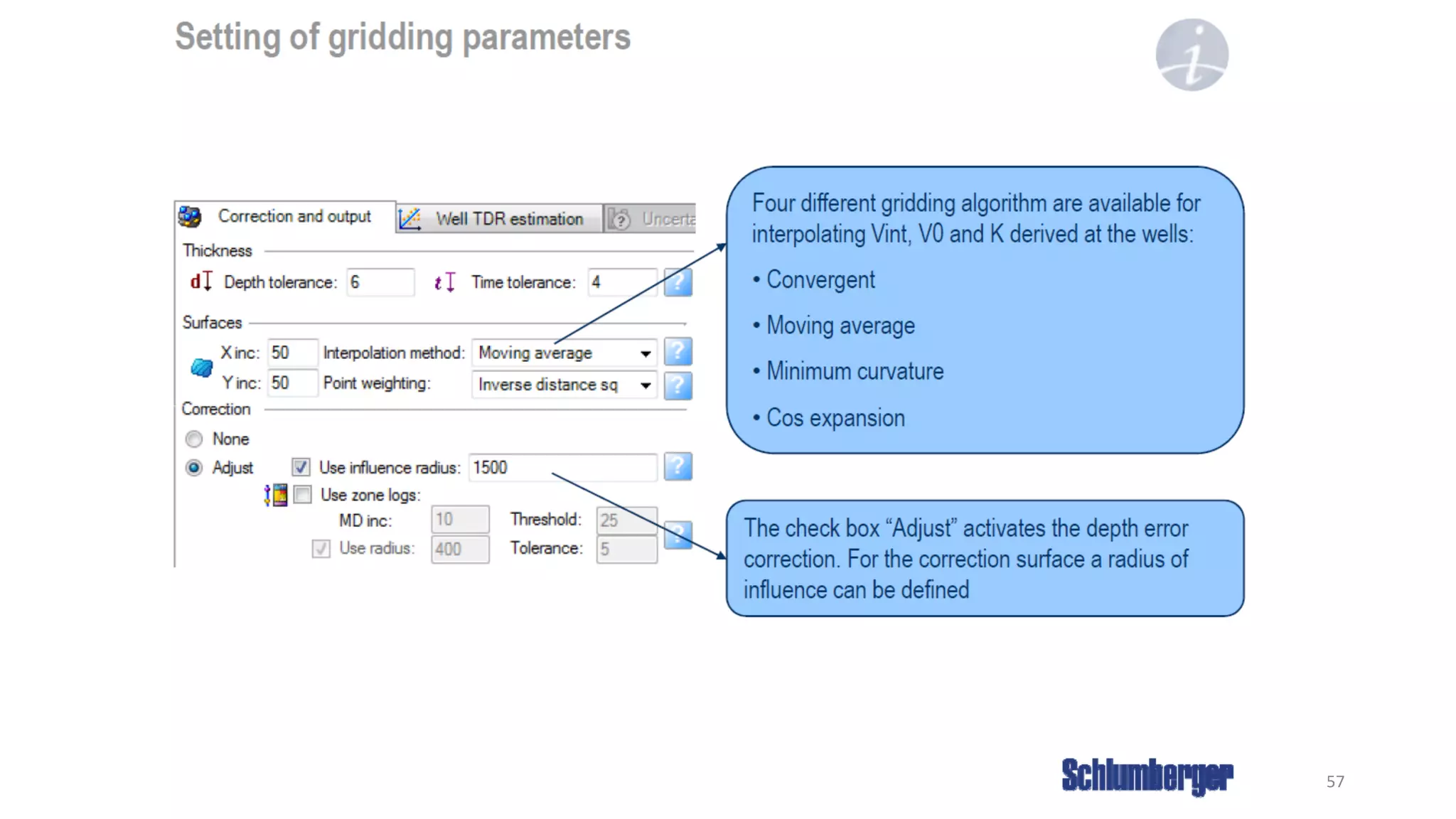

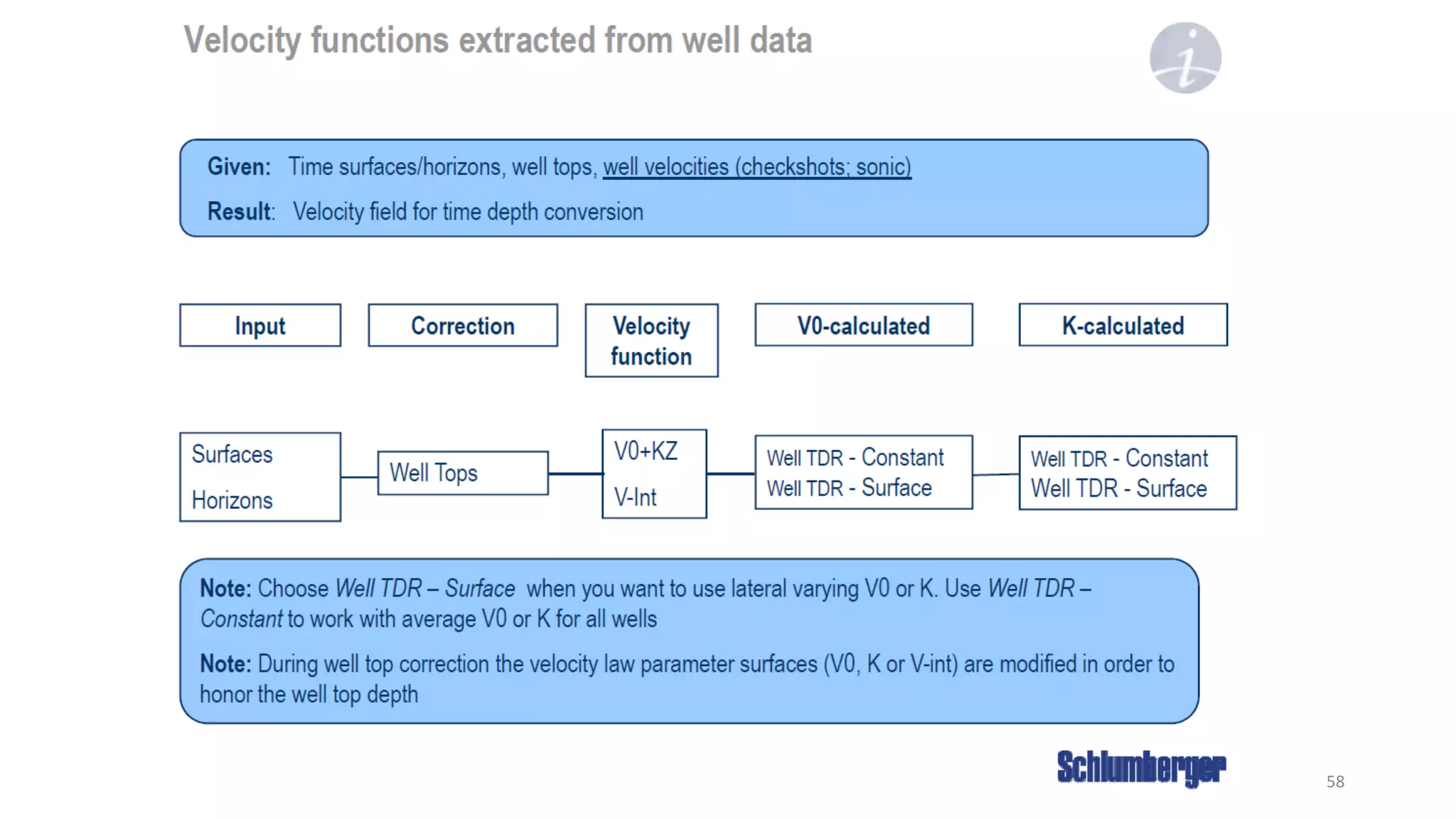

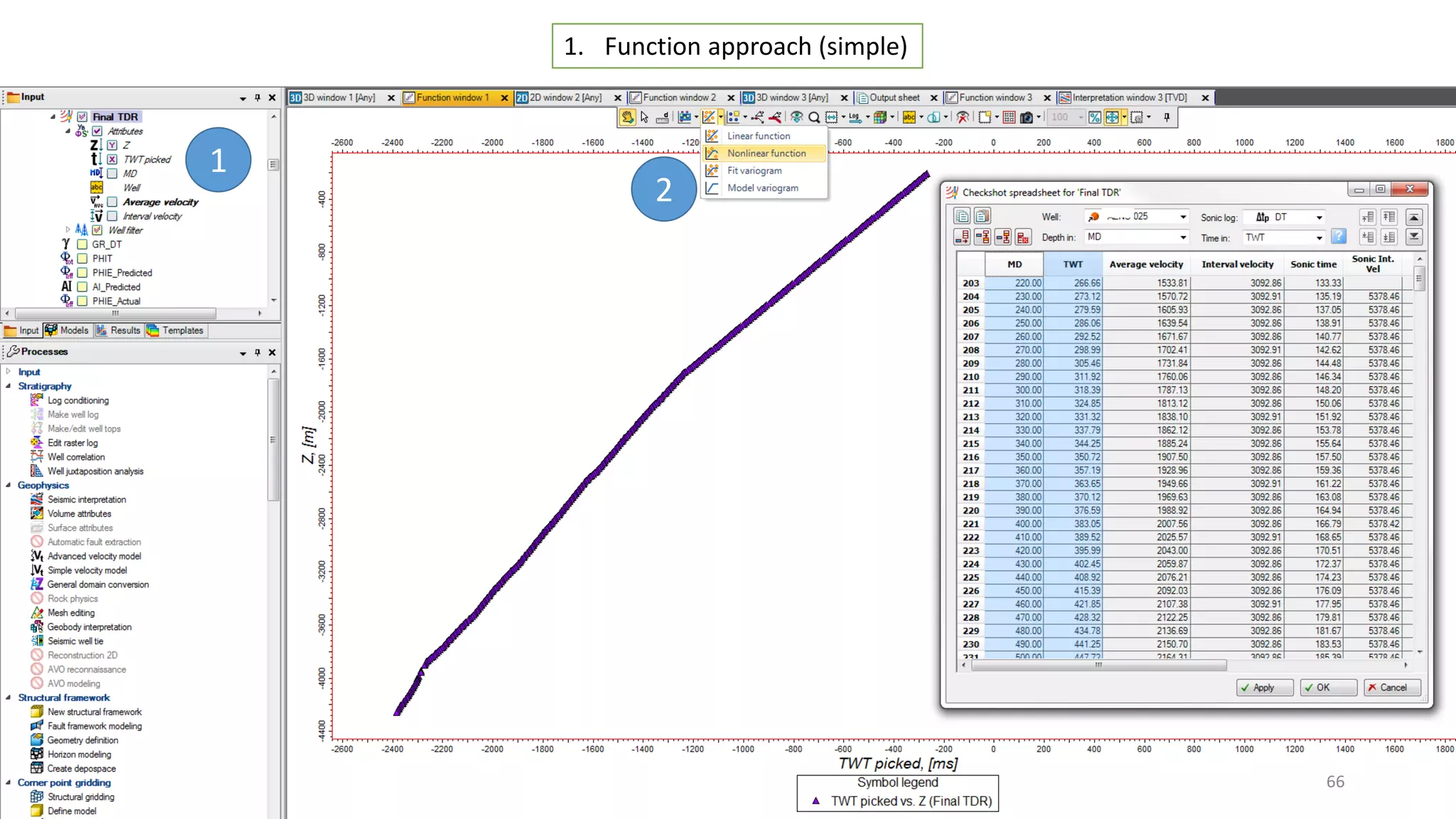

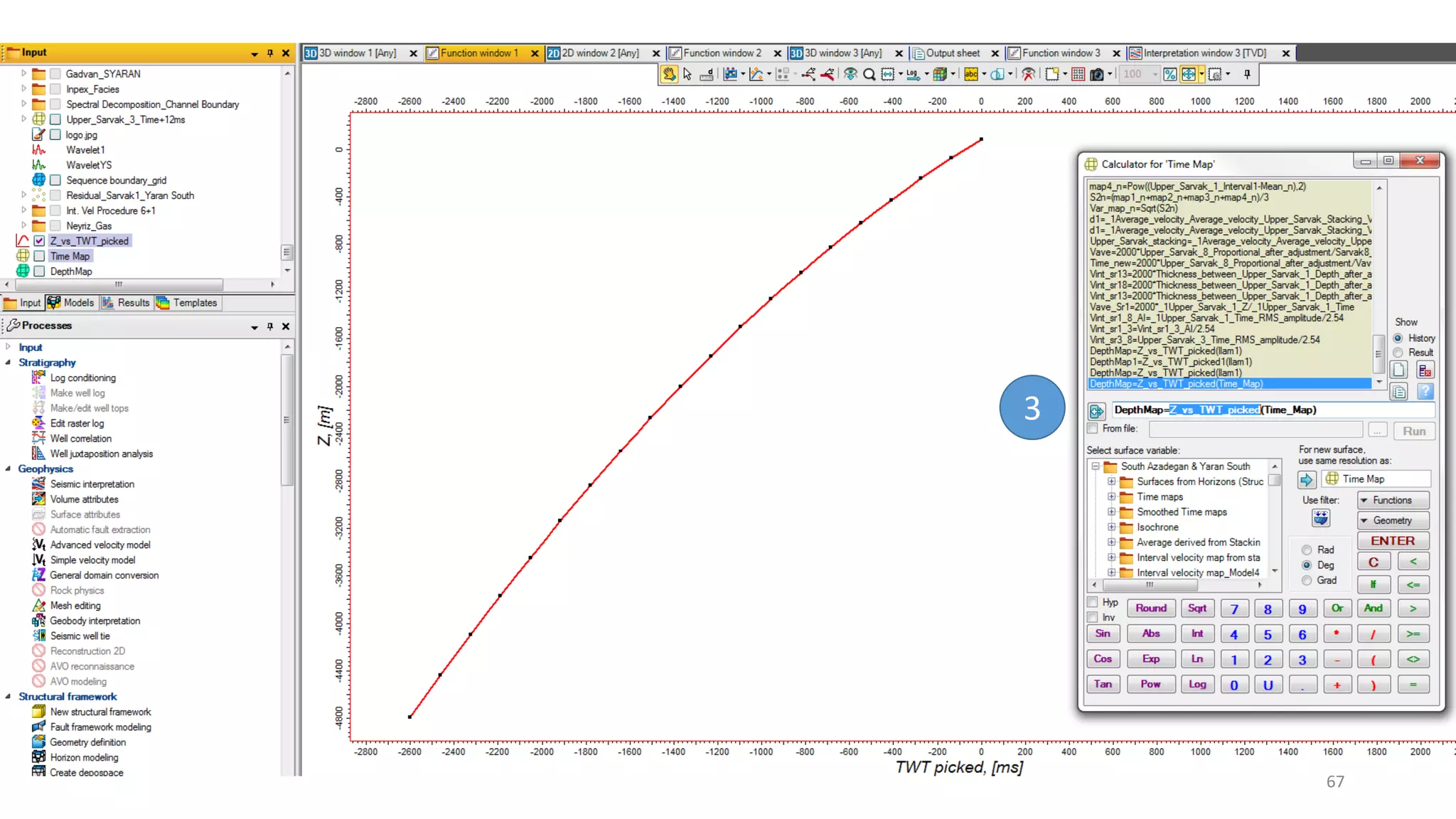

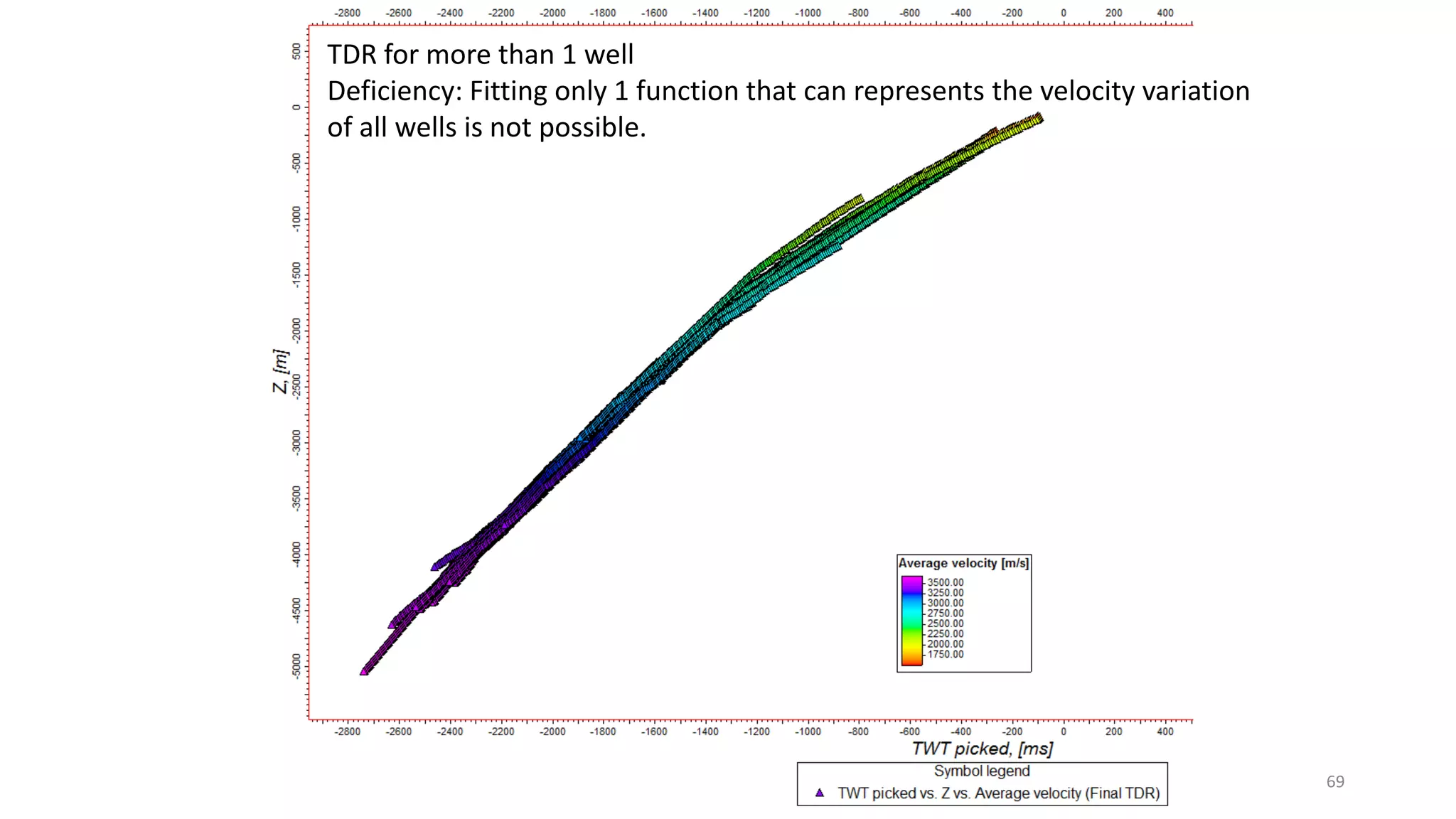

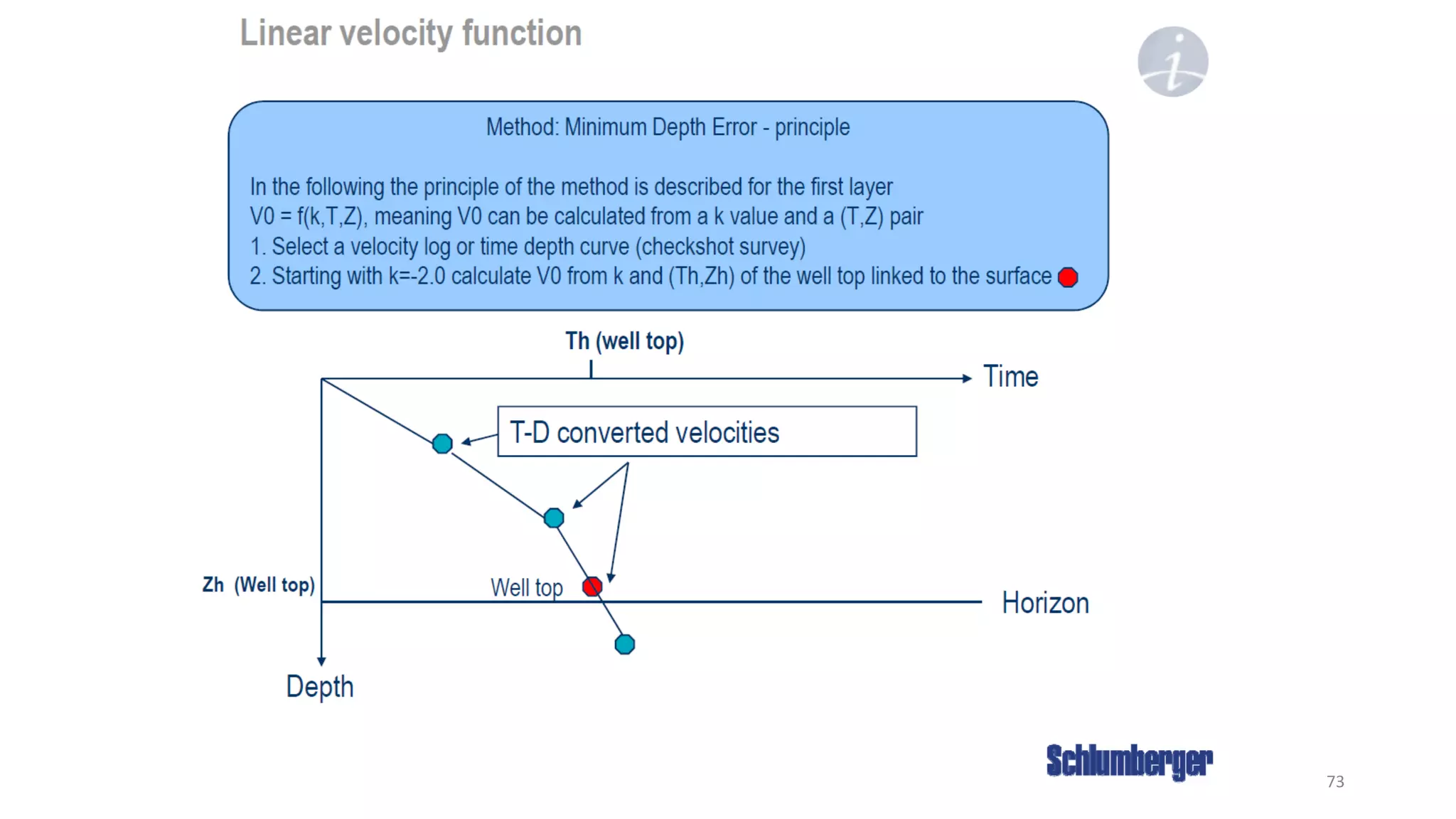

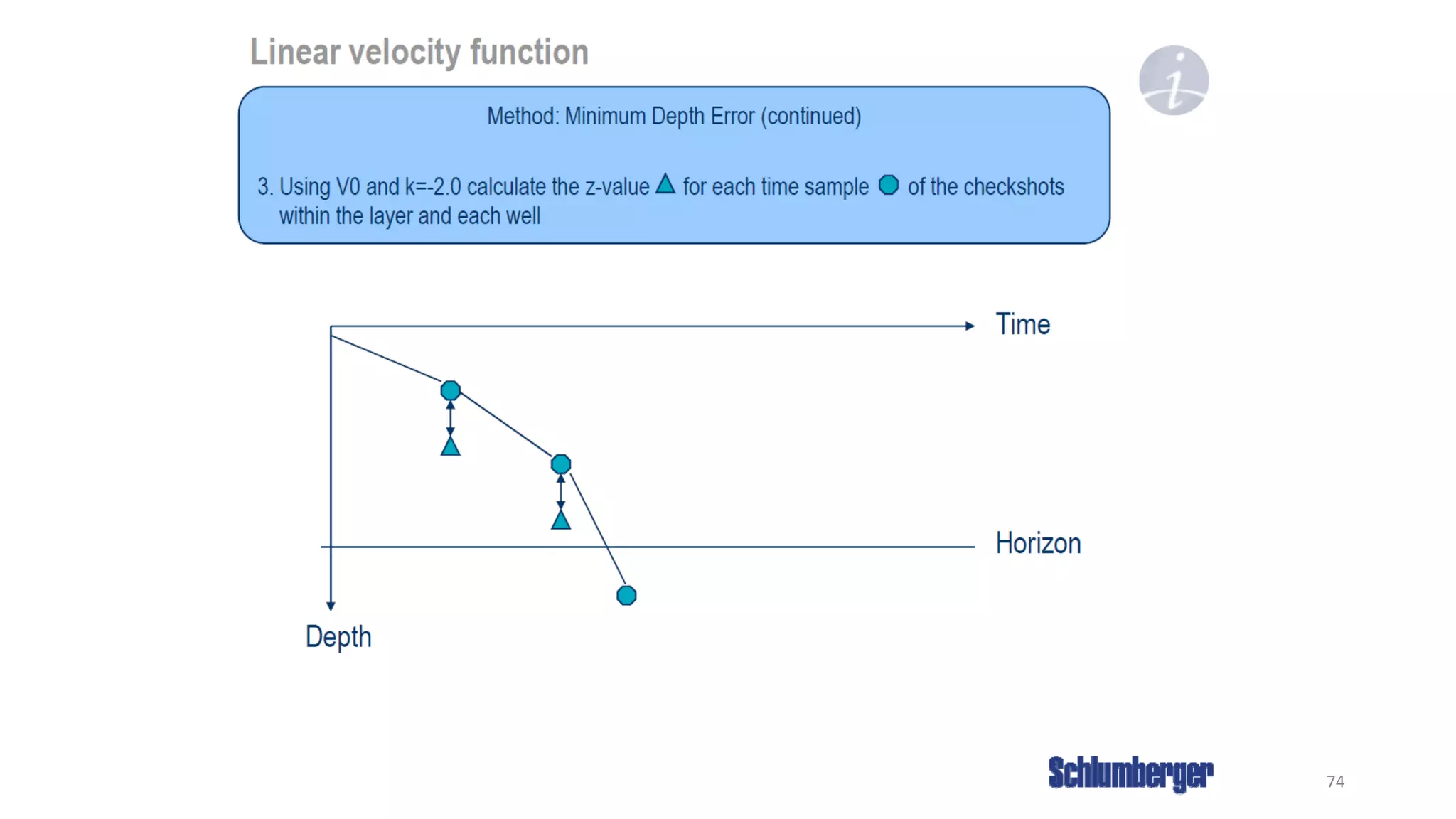

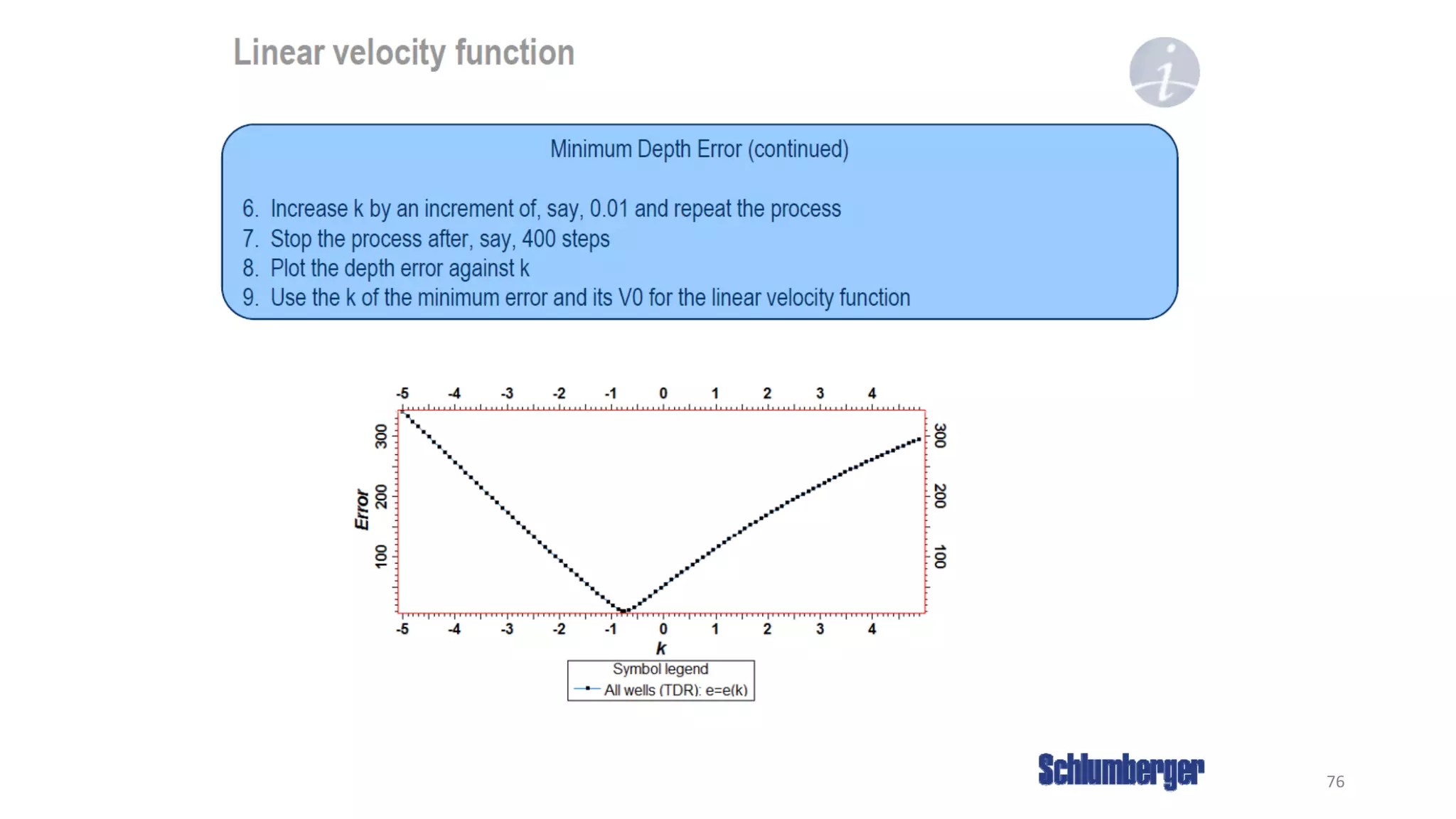

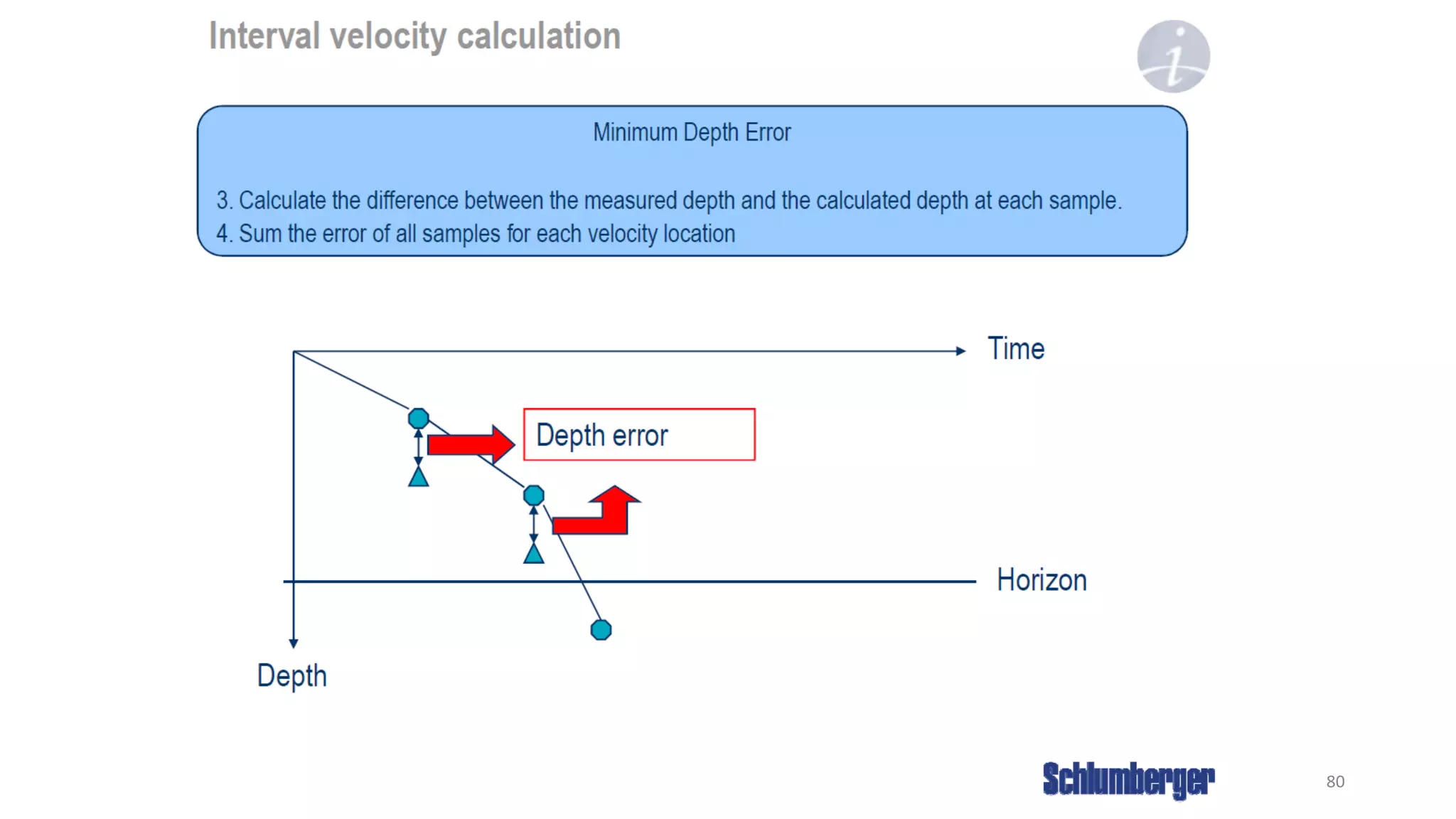

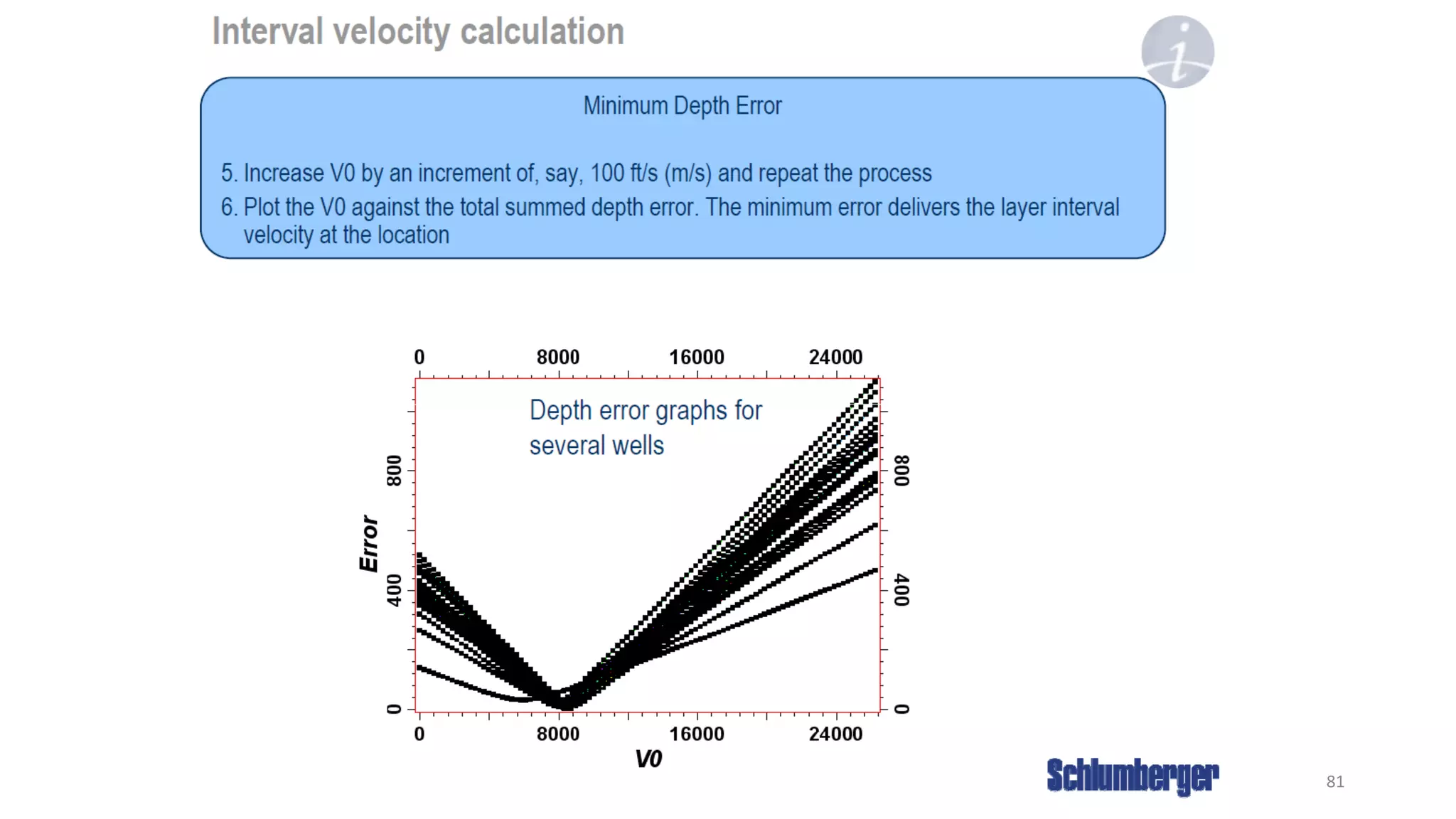

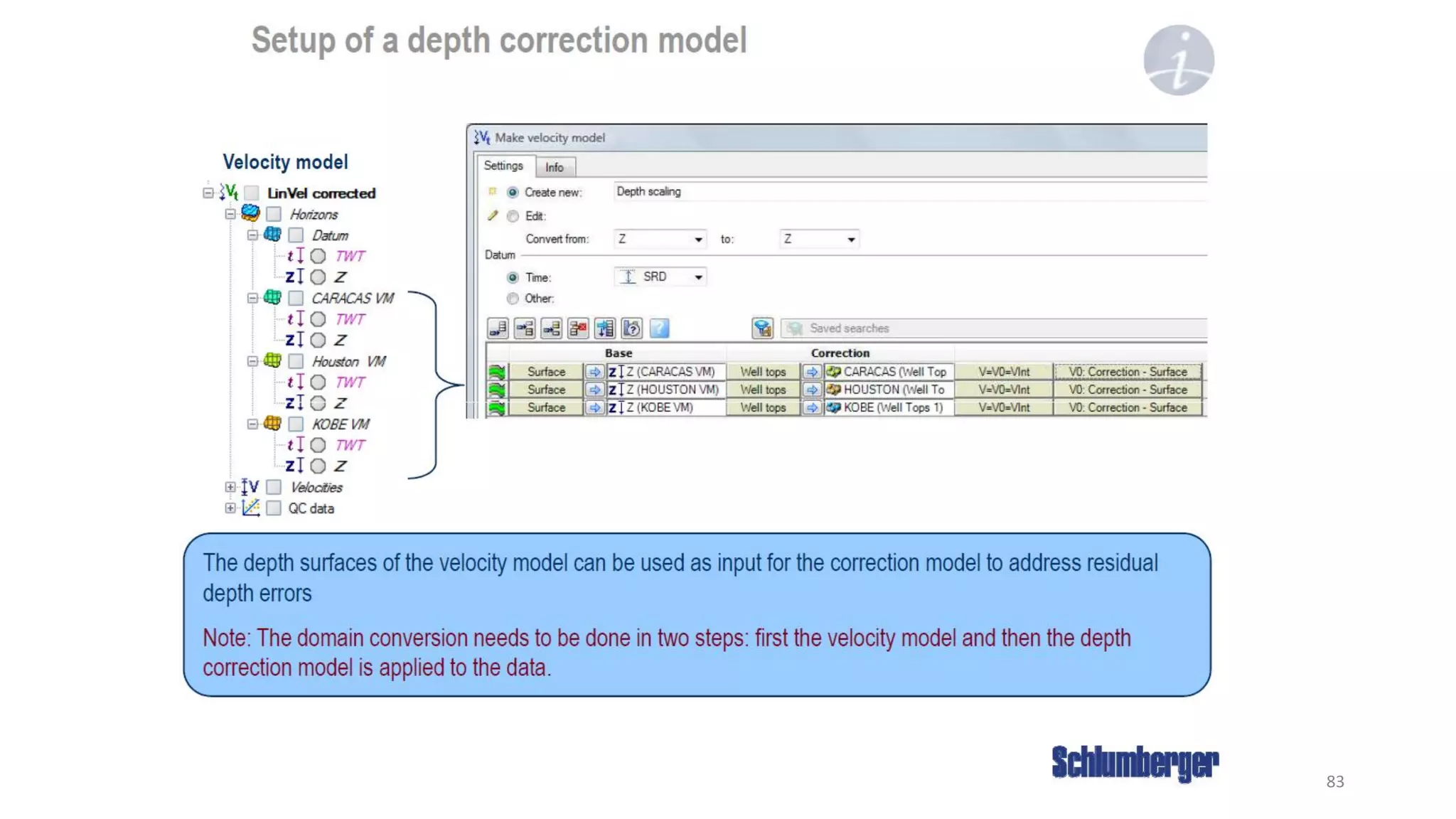

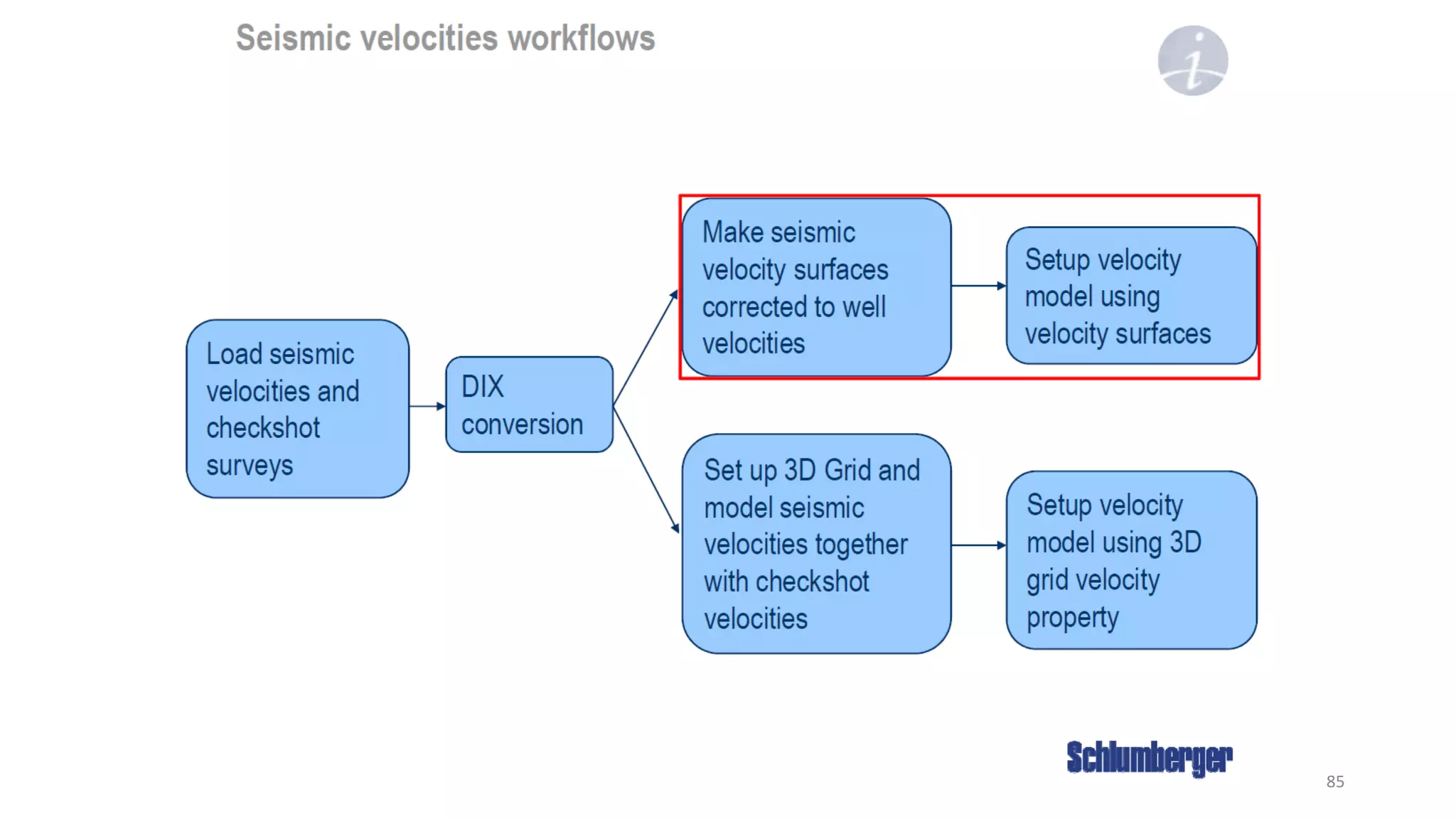

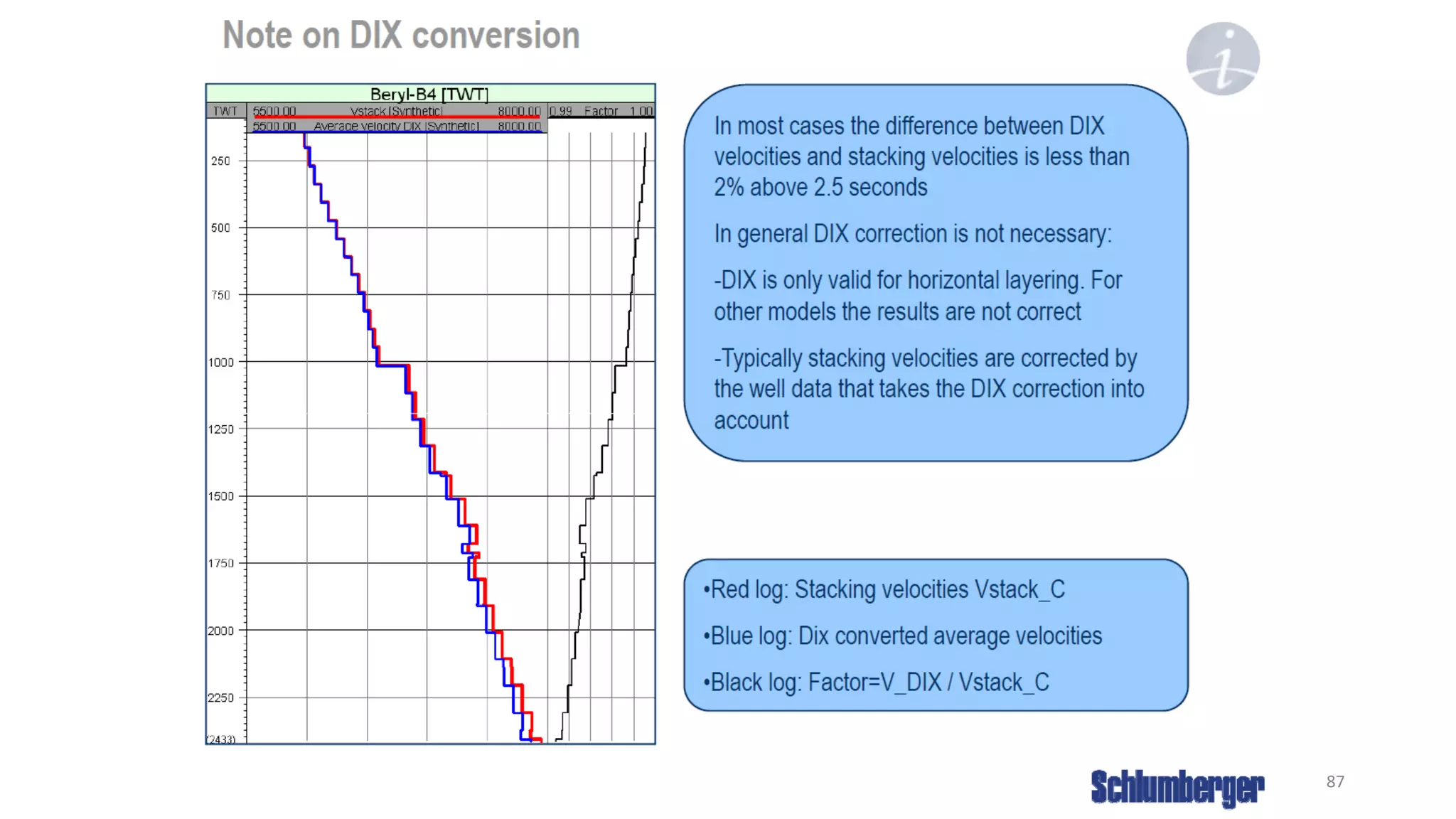

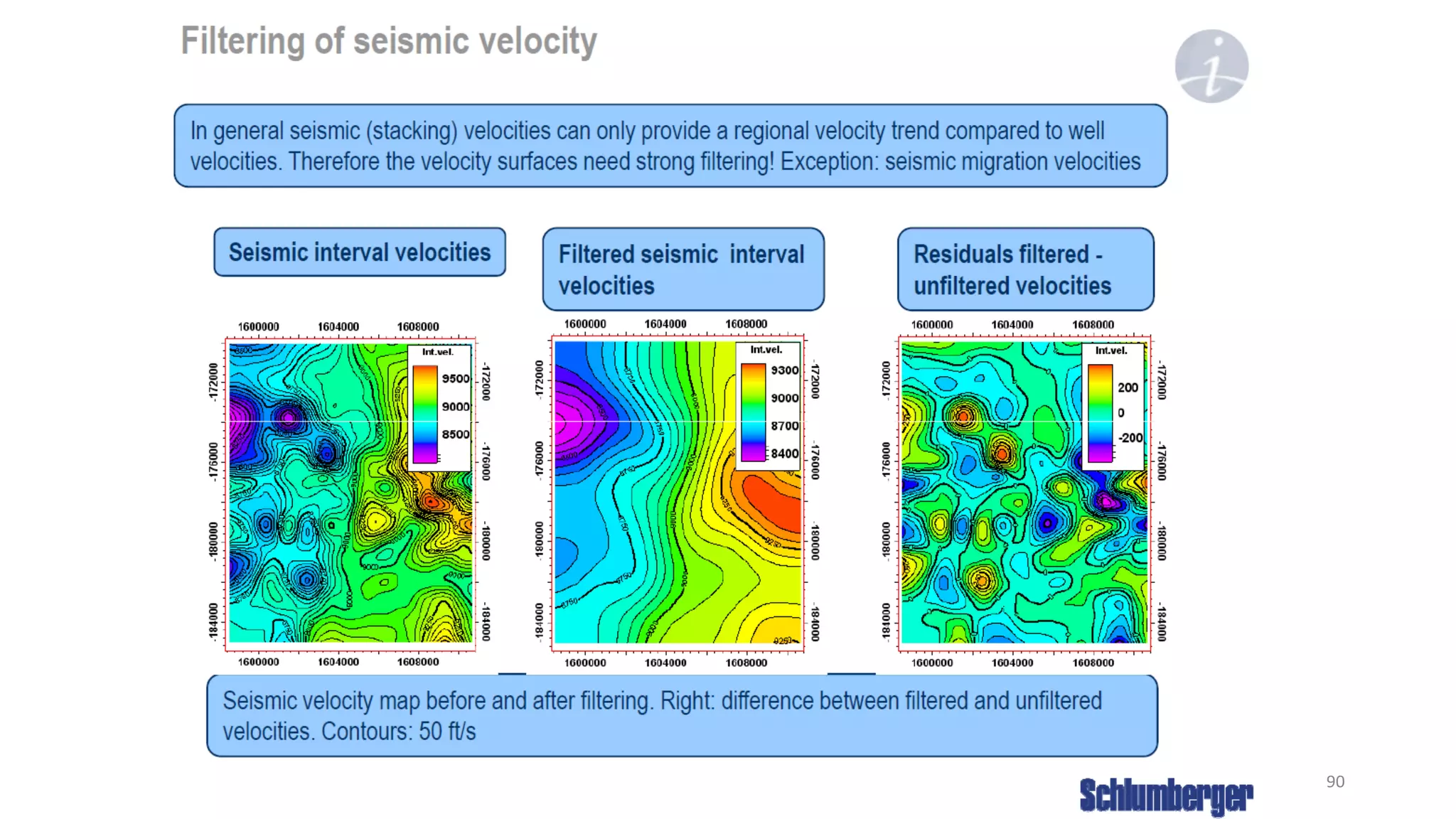

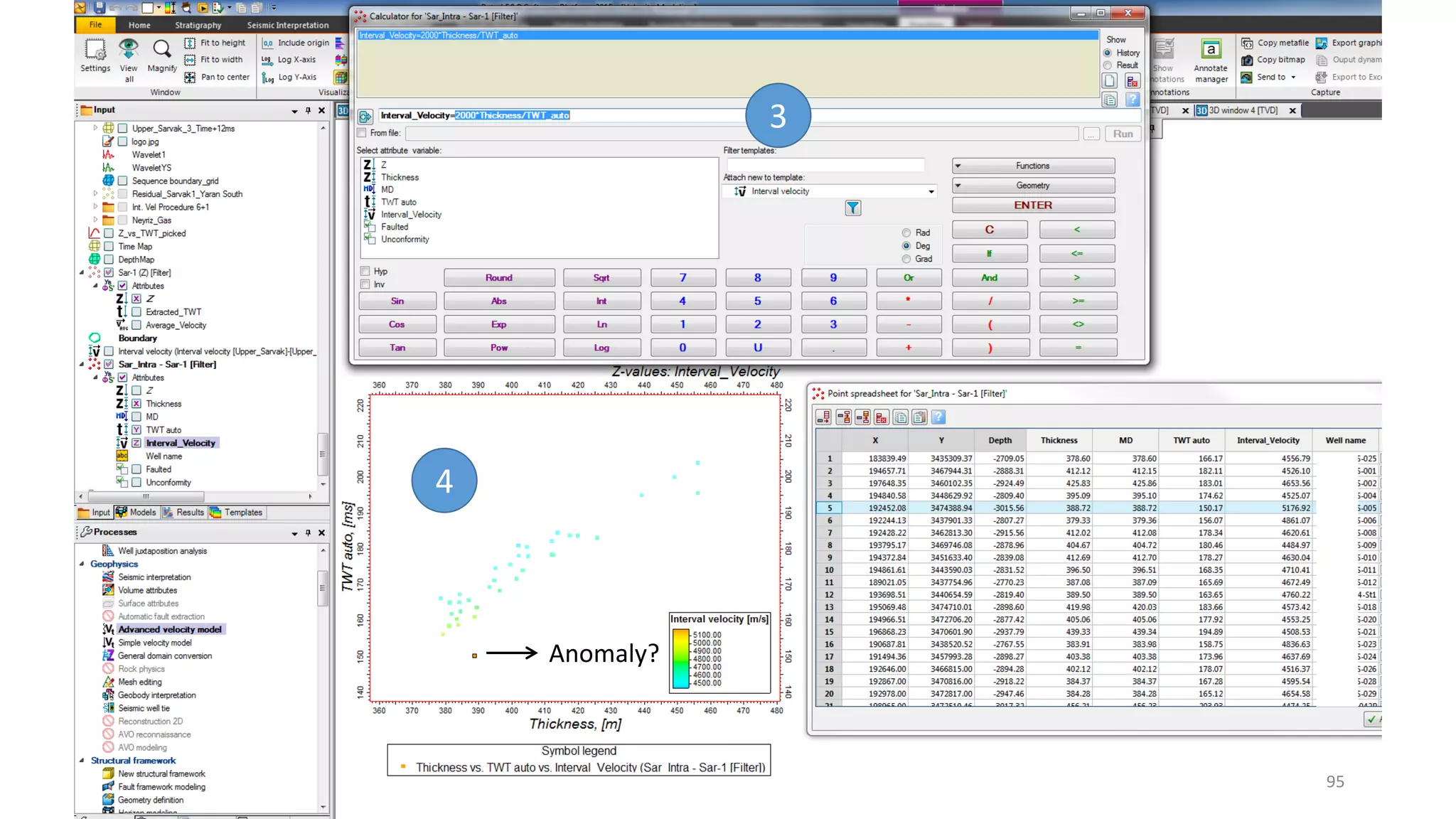

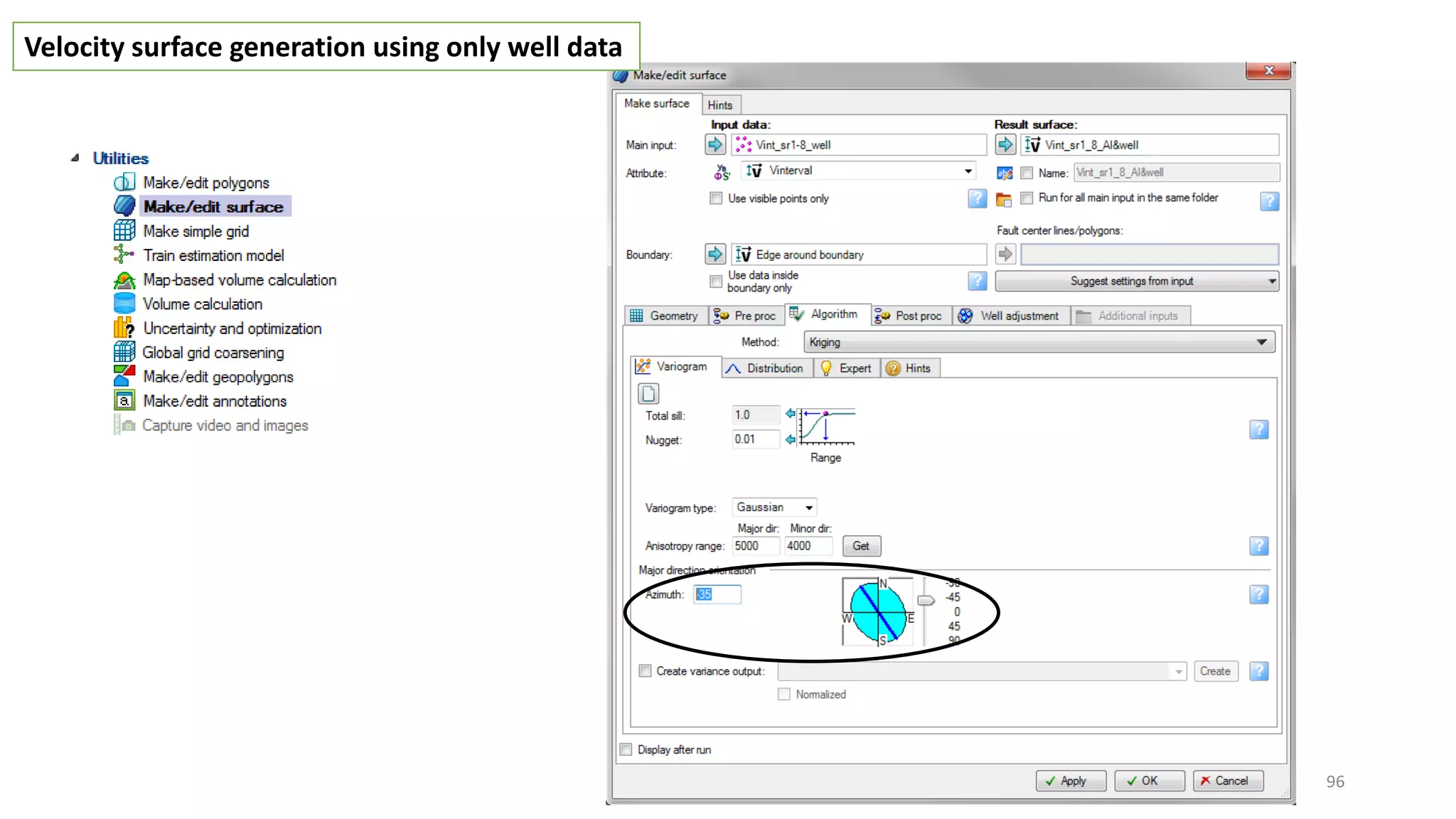

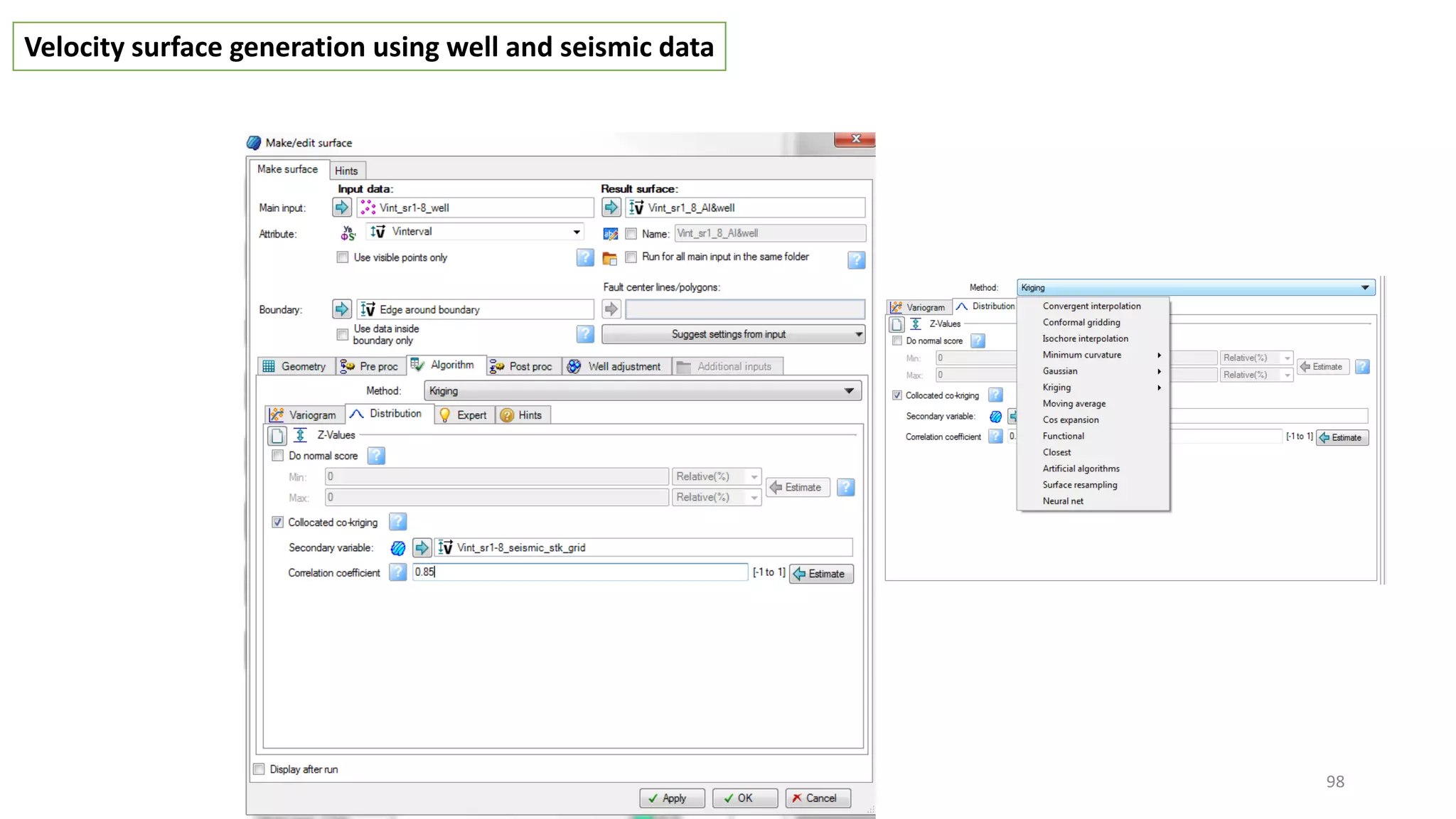

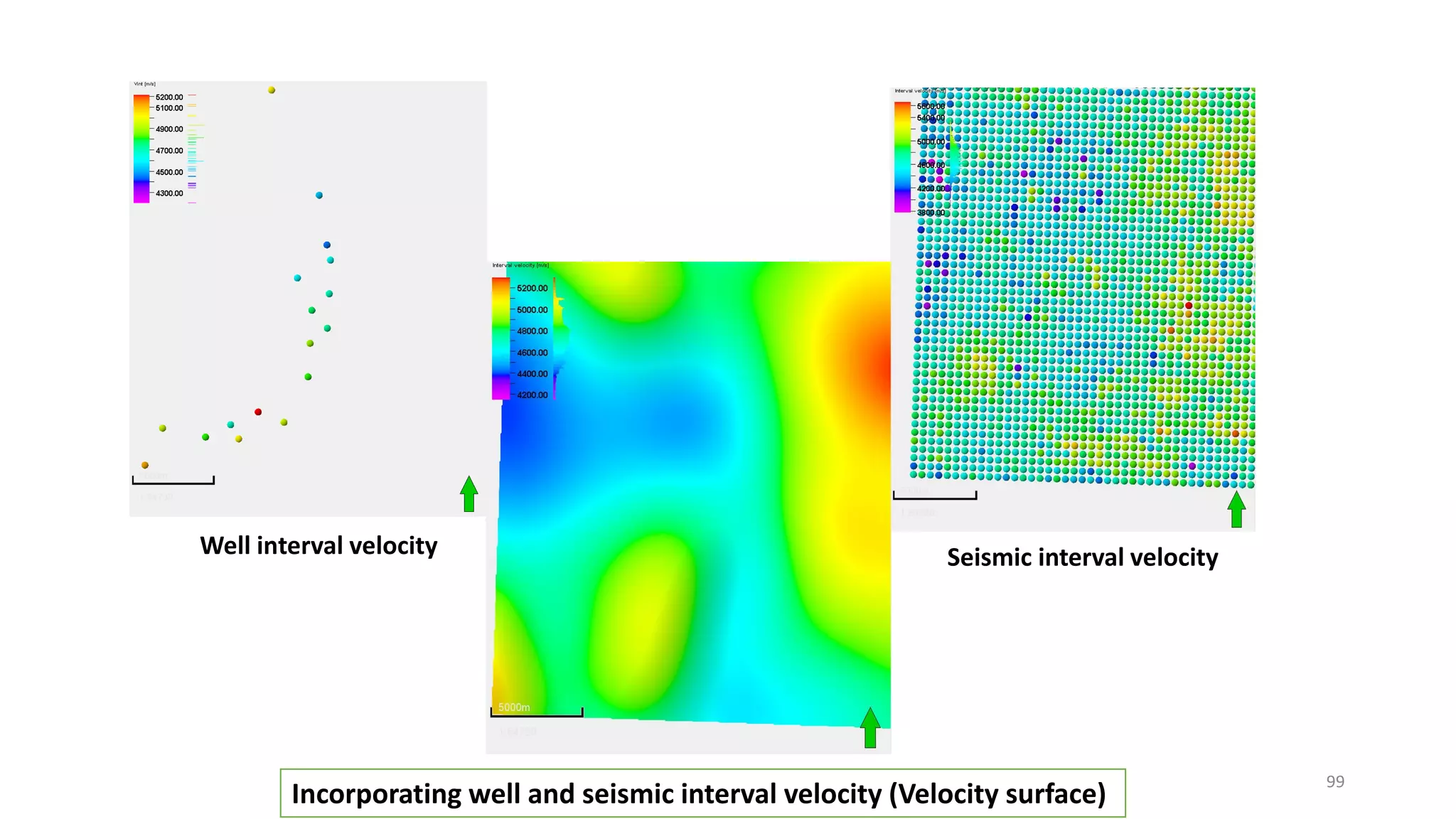

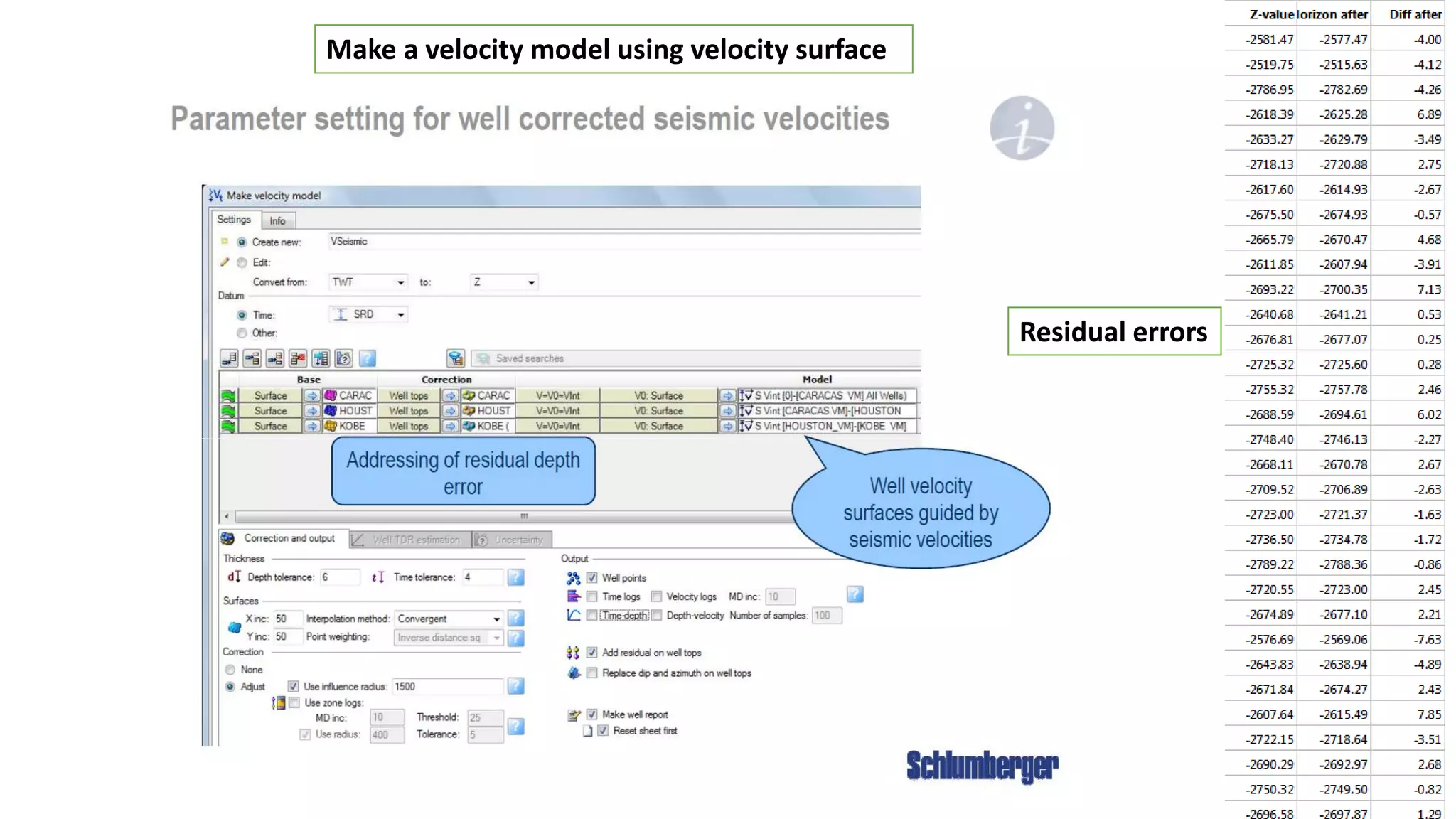

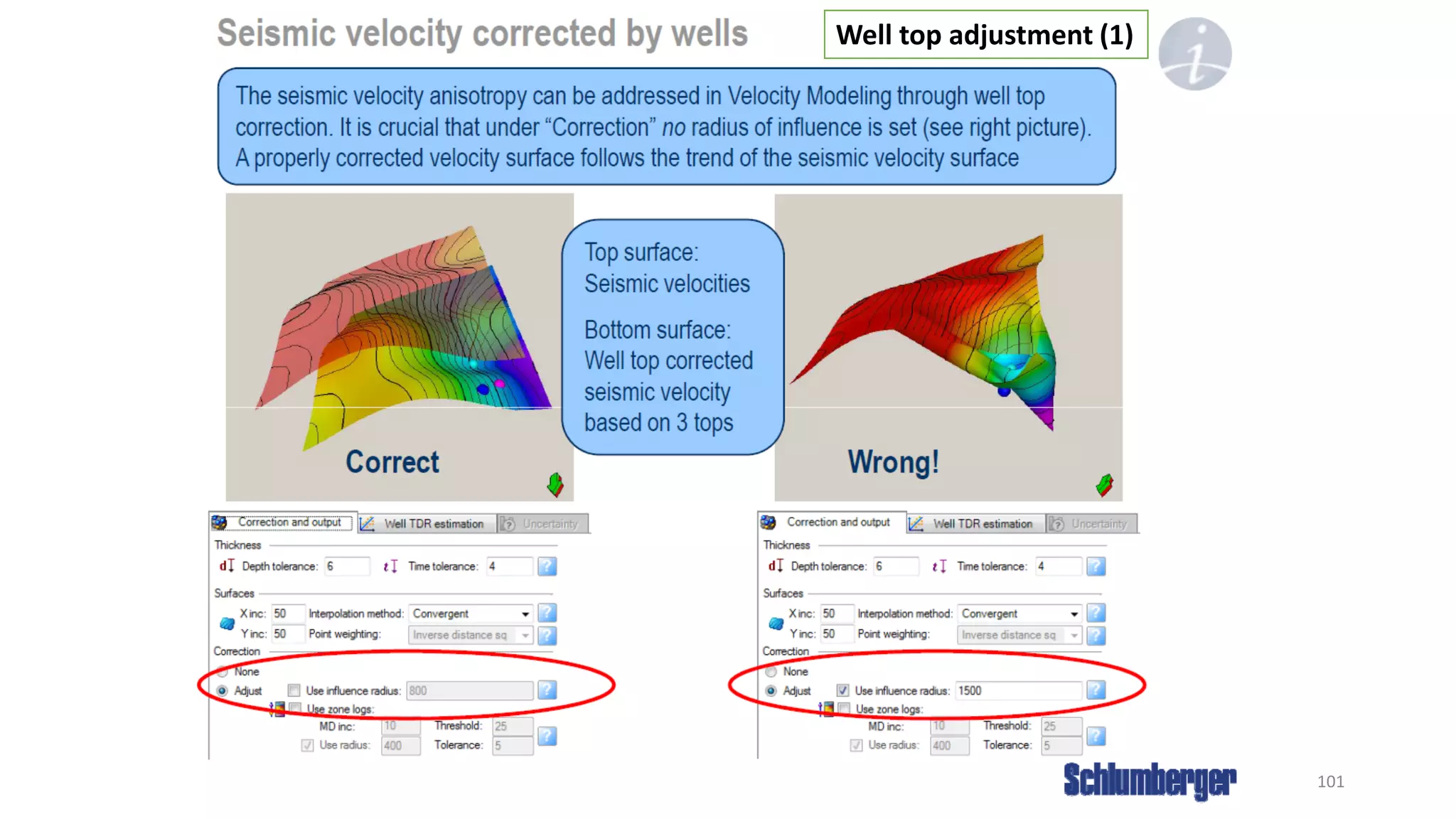

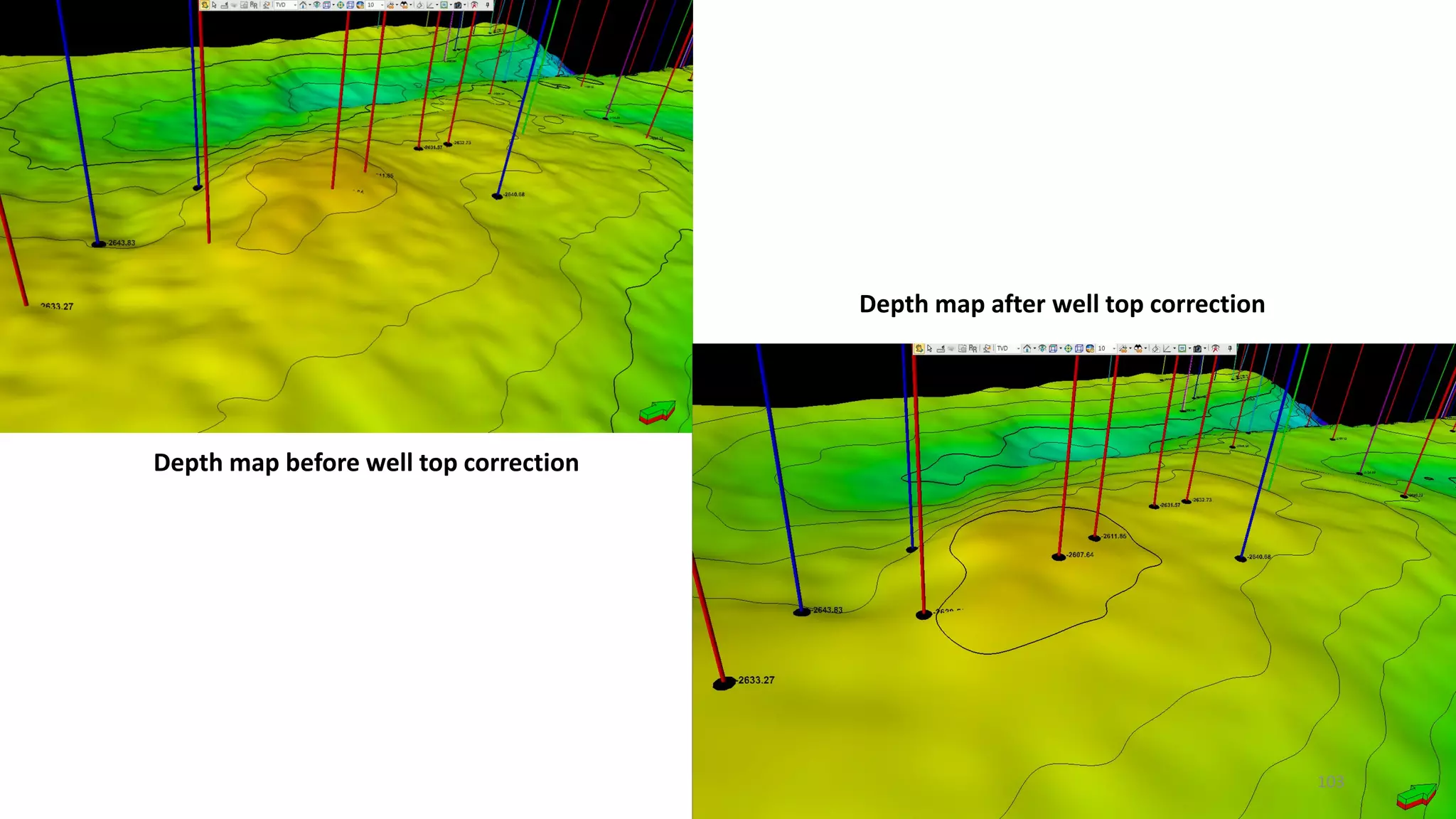

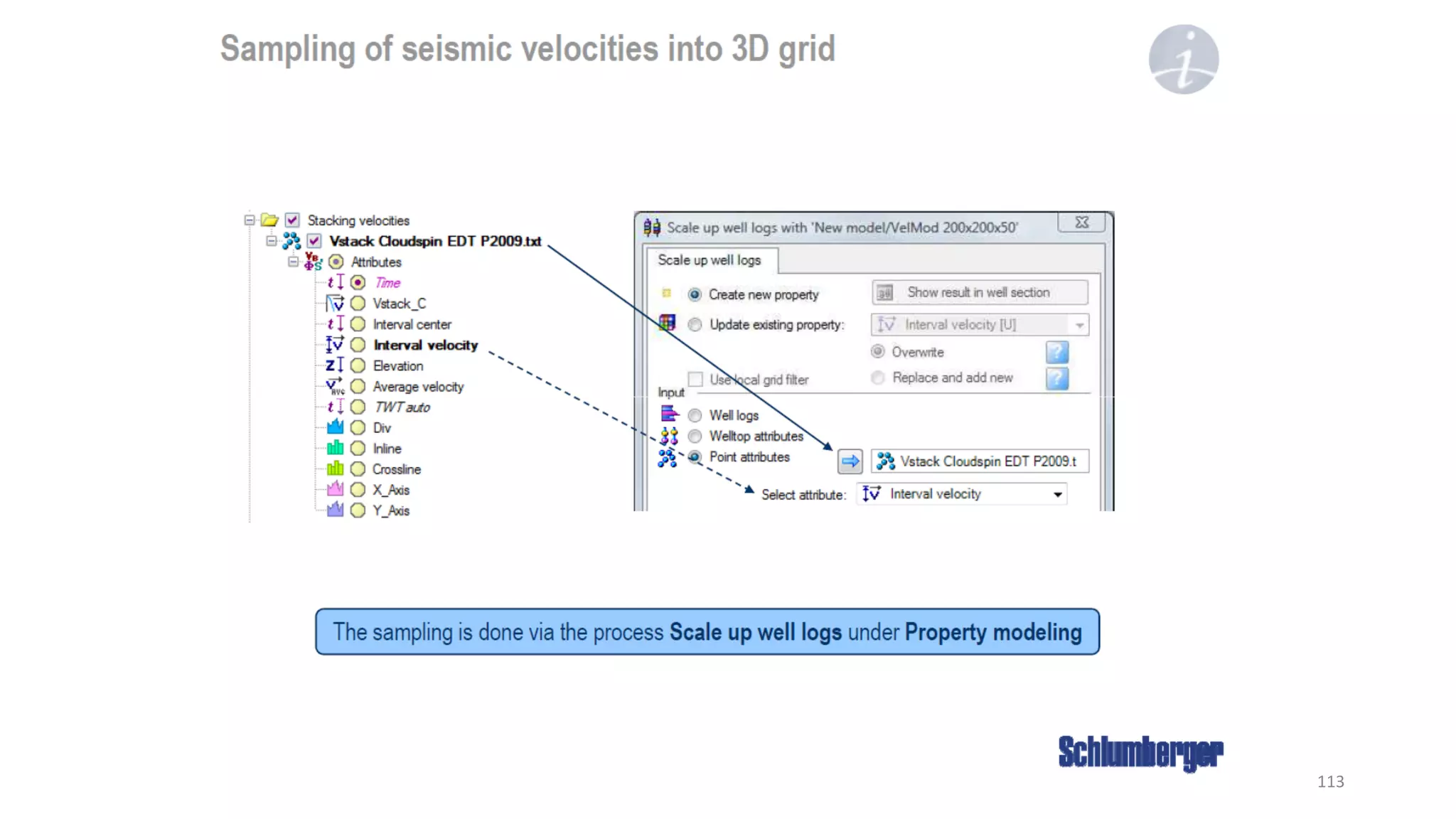

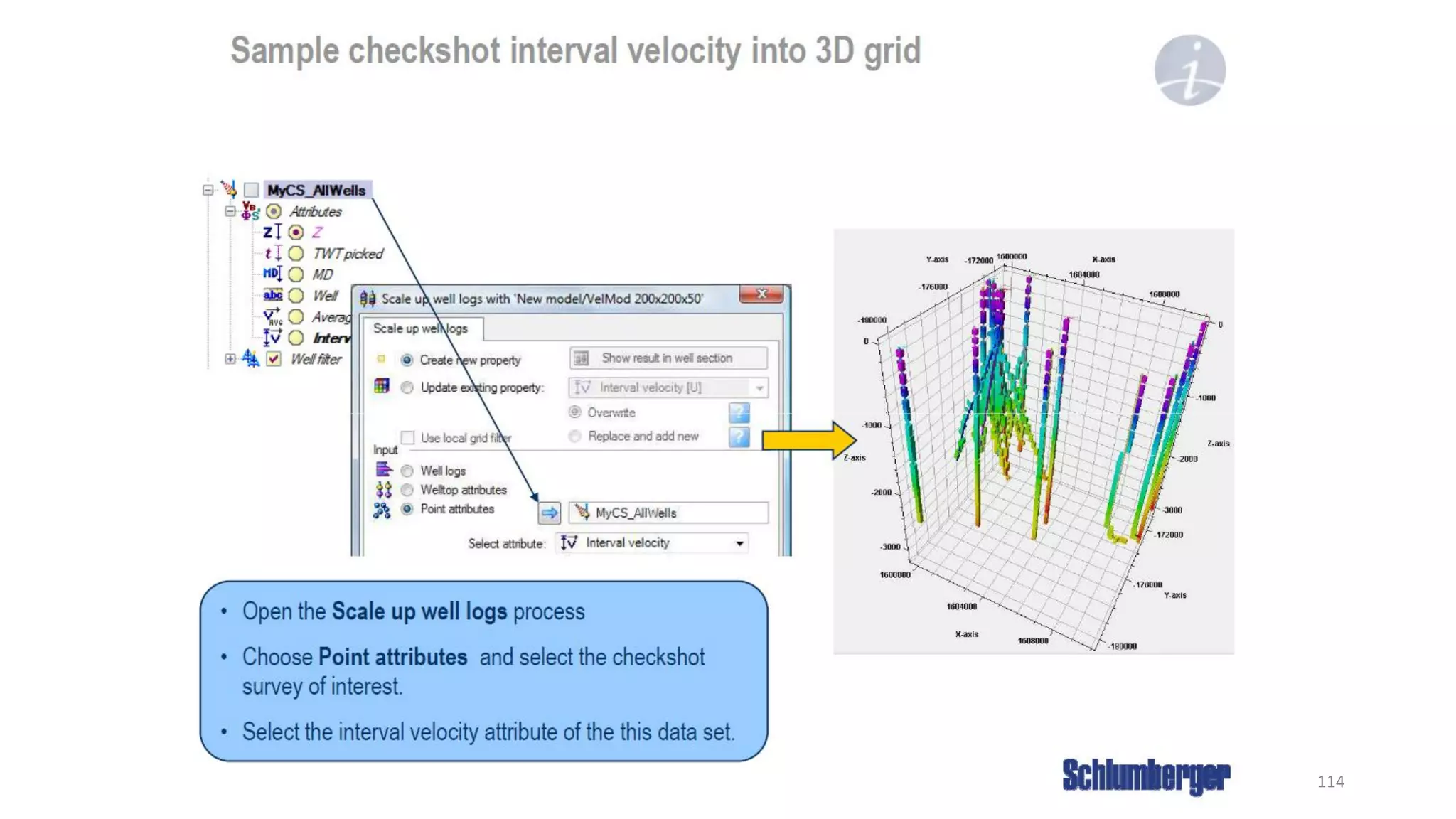

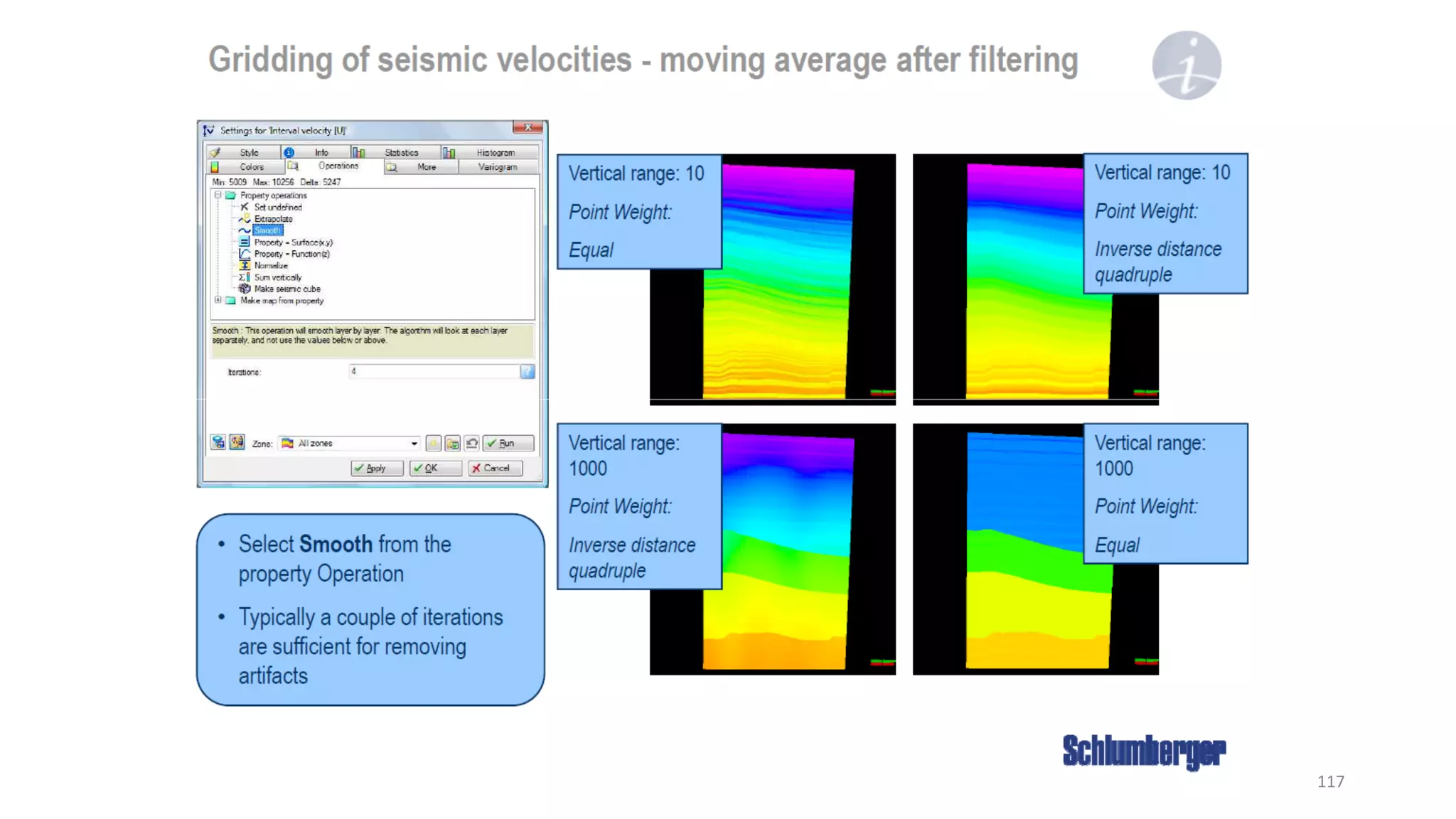

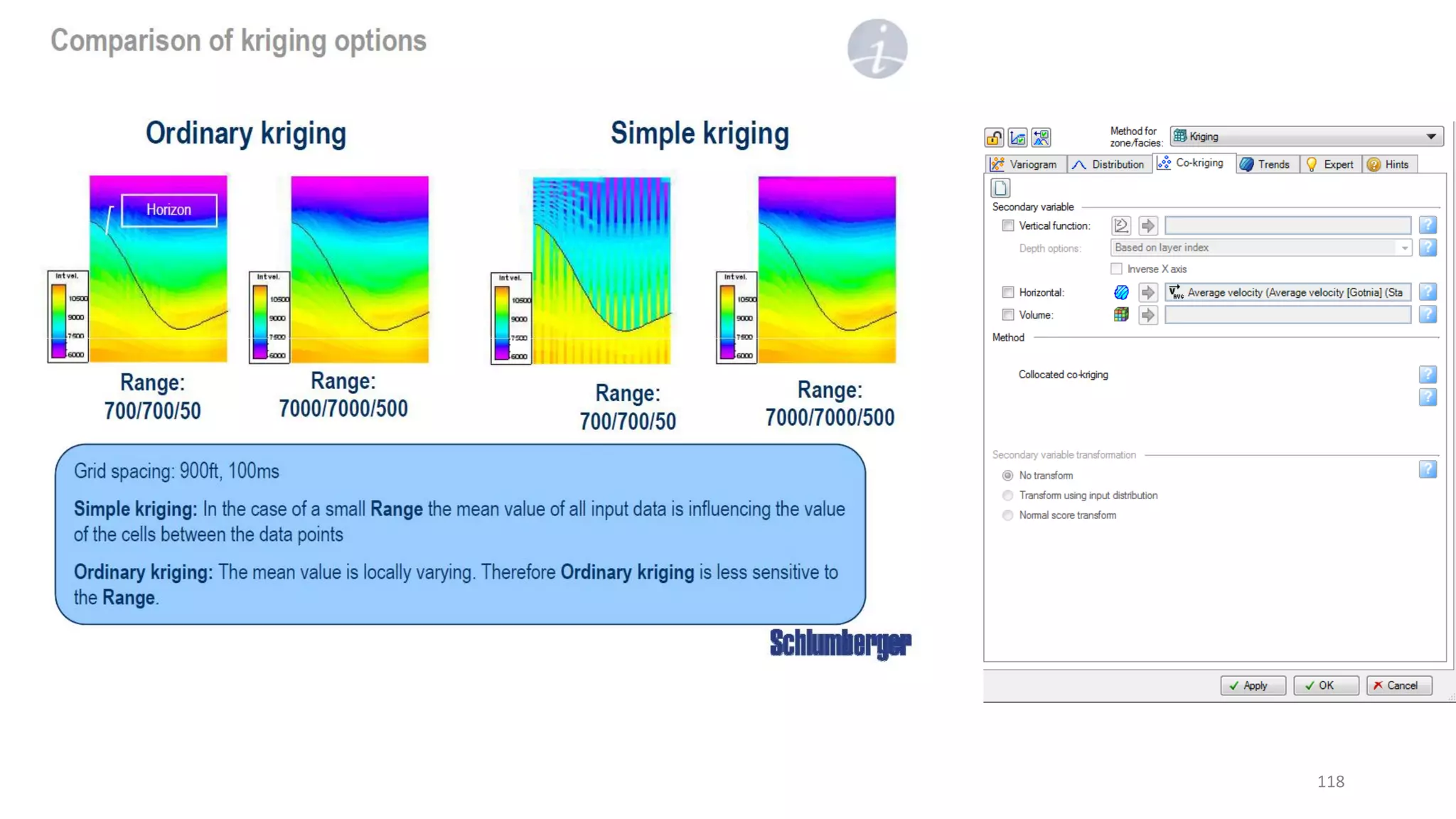

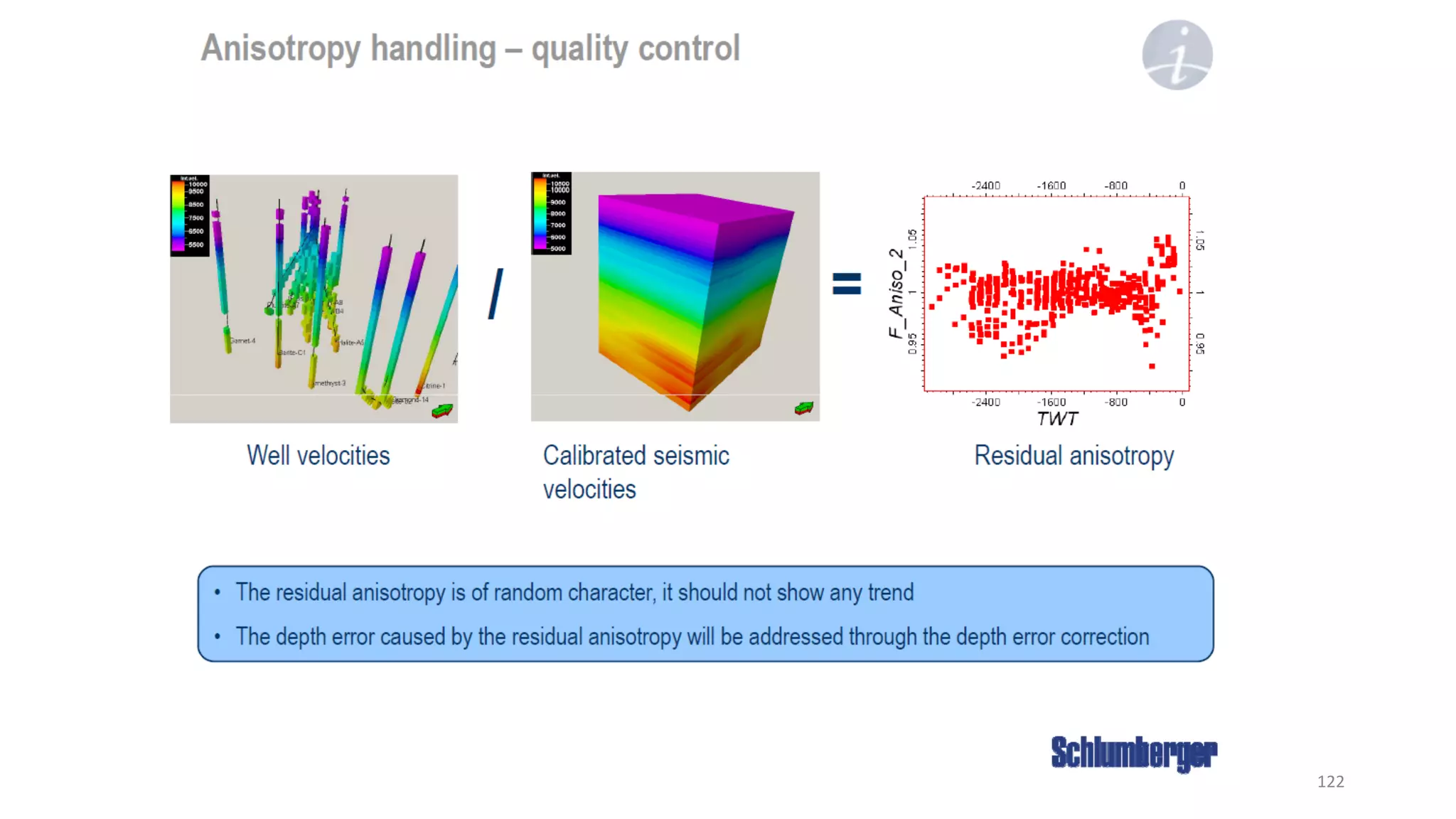

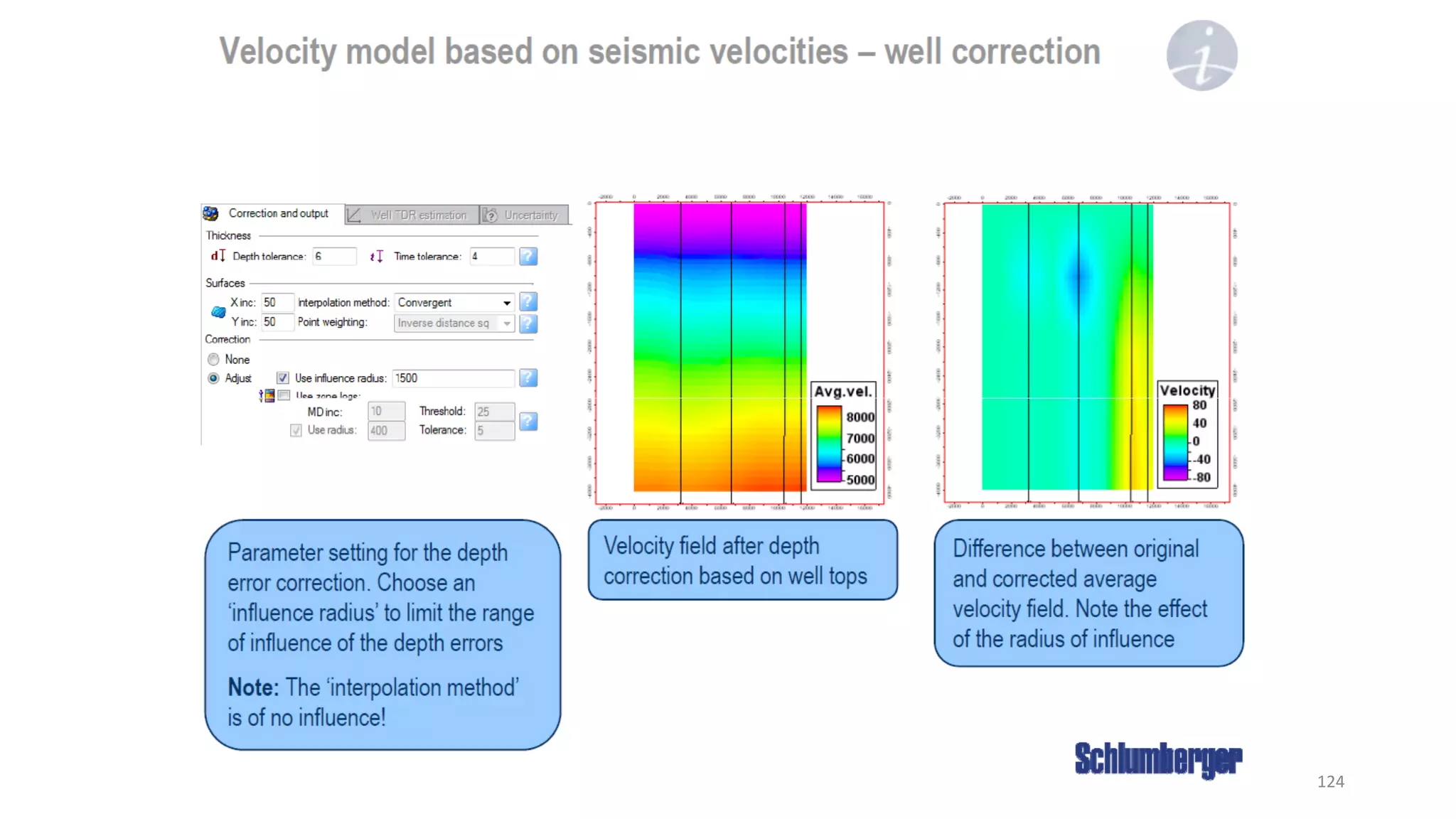

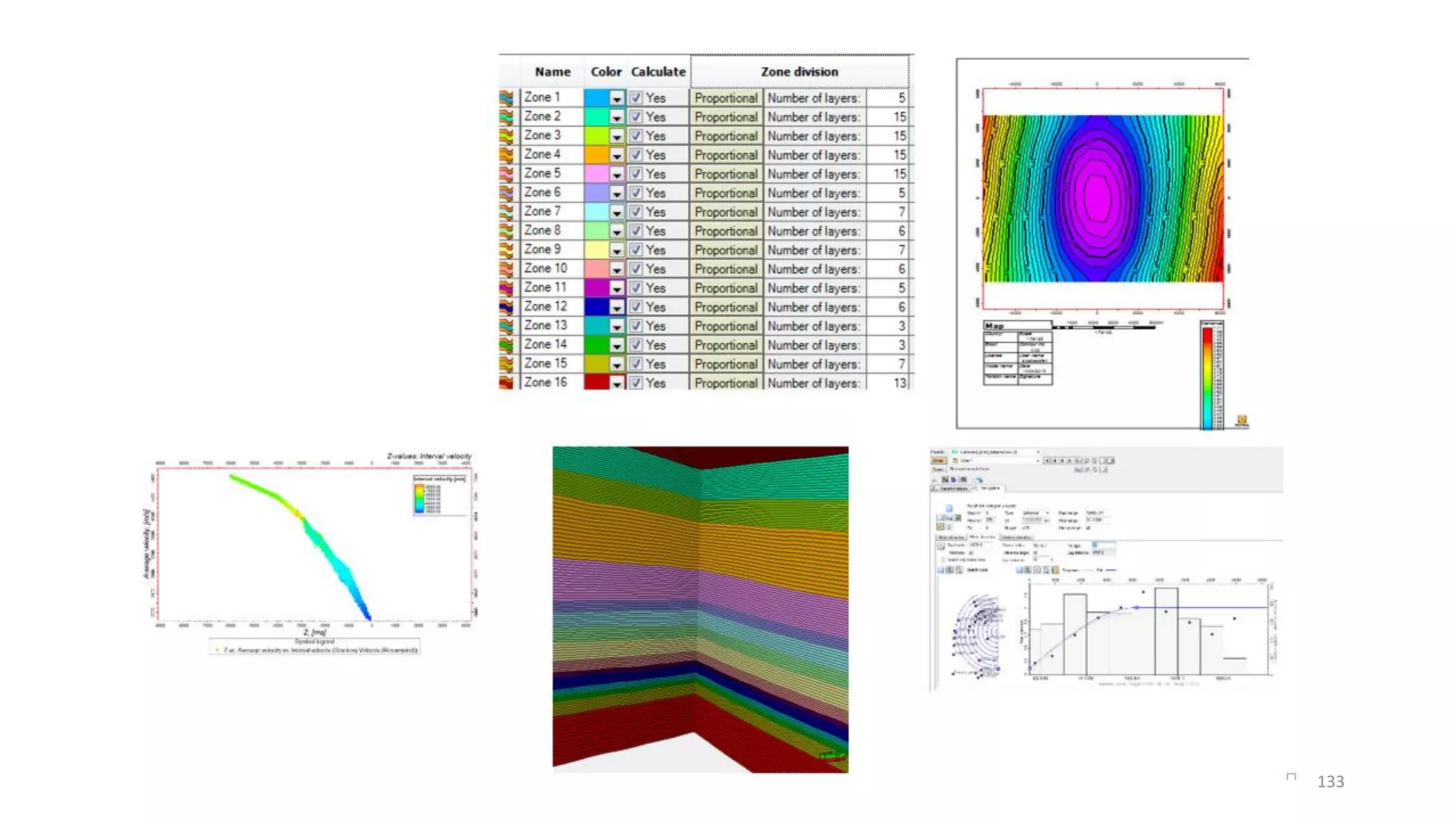

2. It provides steps for incorporating well velocity data, seismic stacking velocities, and time-depth curves to create interval velocity surfaces. Quality control steps are described for horizon and fault interpretation before velocity modeling.
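One common way to turn seismic stacking velocities into interval velocities is the Dix equation. The sketch below is illustrative only and is not taken from the slides or from Petrel itself. It assumes the stacking velocities are a reasonable approximation of RMS velocities, that times are two-way times in seconds measured from the datum, and that the function name `dix_interval_velocities` is hypothetical.

```python
import math


def dix_interval_velocities(times_s, v_rms):
    """Convert RMS velocities picked at increasing two-way times into
    interval velocities, one per layer, using the Dix equation.

    Assumption: stacking velocities are used as a stand-in for RMS velocities.
    """
    if len(times_s) != len(v_rms):
        raise ValueError("times_s and v_rms must have the same length")

    v_int = []
    prev_t, prev_v = 0.0, 0.0  # the first layer starts at the datum (t = 0)
    for t, v in zip(times_s, v_rms):
        dt = t - prev_t
        if dt <= 0:
            raise ValueError("times must be strictly increasing and positive")
        numerator = v * v * t - prev_v * prev_v * prev_t
        if numerator < 0:
            # Happens when the RMS picks are physically inconsistent.
            raise ValueError("RMS velocities imply a negative squared interval velocity")
        v_int.append(math.sqrt(numerator / dt))
        prev_t, prev_v = t, v
    return v_int


# Two picks: 2000 m/s at 1.0 s and 2500 m/s at 2.0 s.
interval = dix_interval_velocities([1.0, 2.0], [2000.0, 2500.0])
```

The first interval velocity always equals the first RMS velocity, because the first layer extends from the datum to the first pick. Noisy picks can make the numerator negative, which is one reason stacking velocities are usually smoothed and calibrated against well velocities before they are used.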

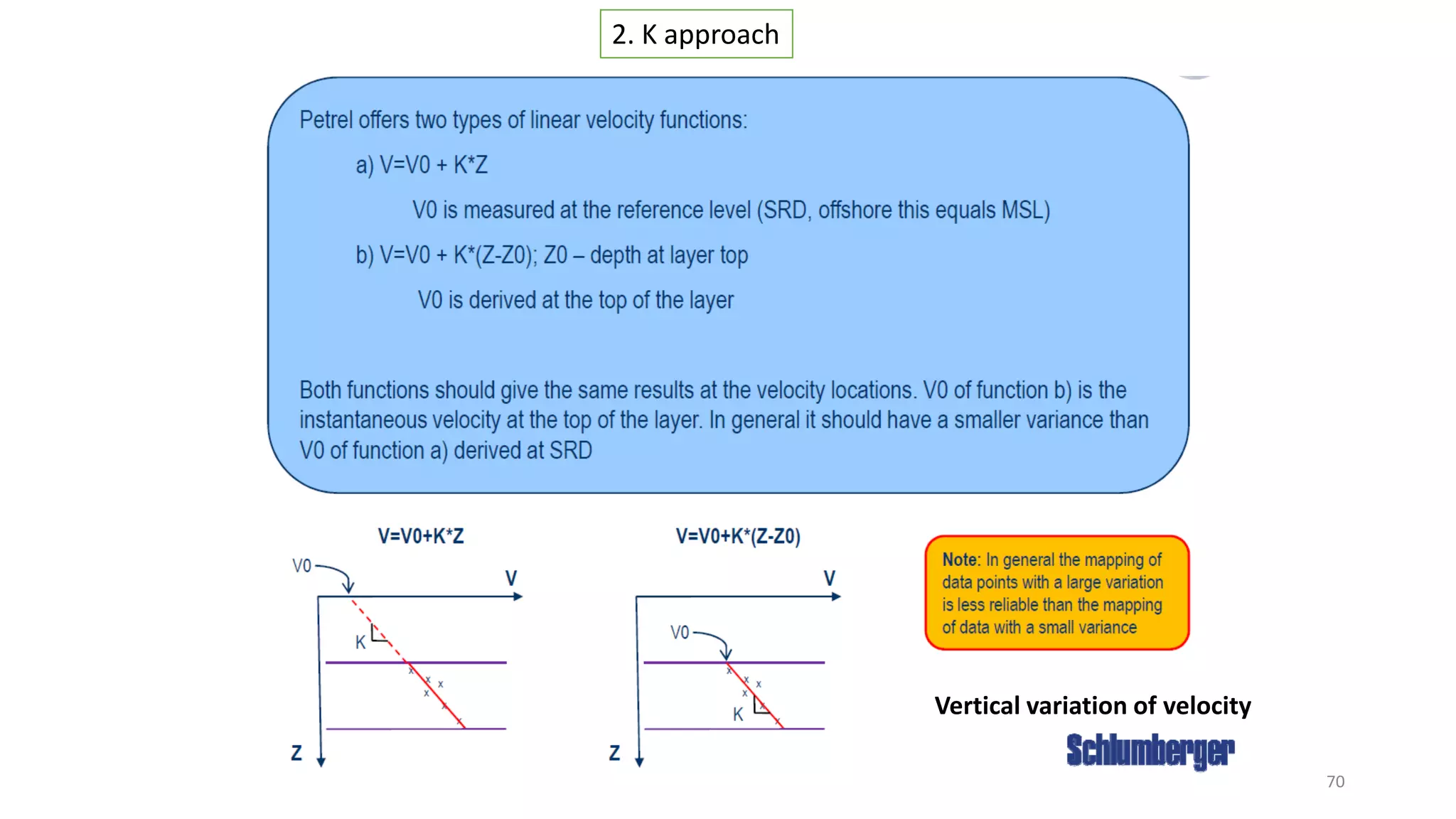

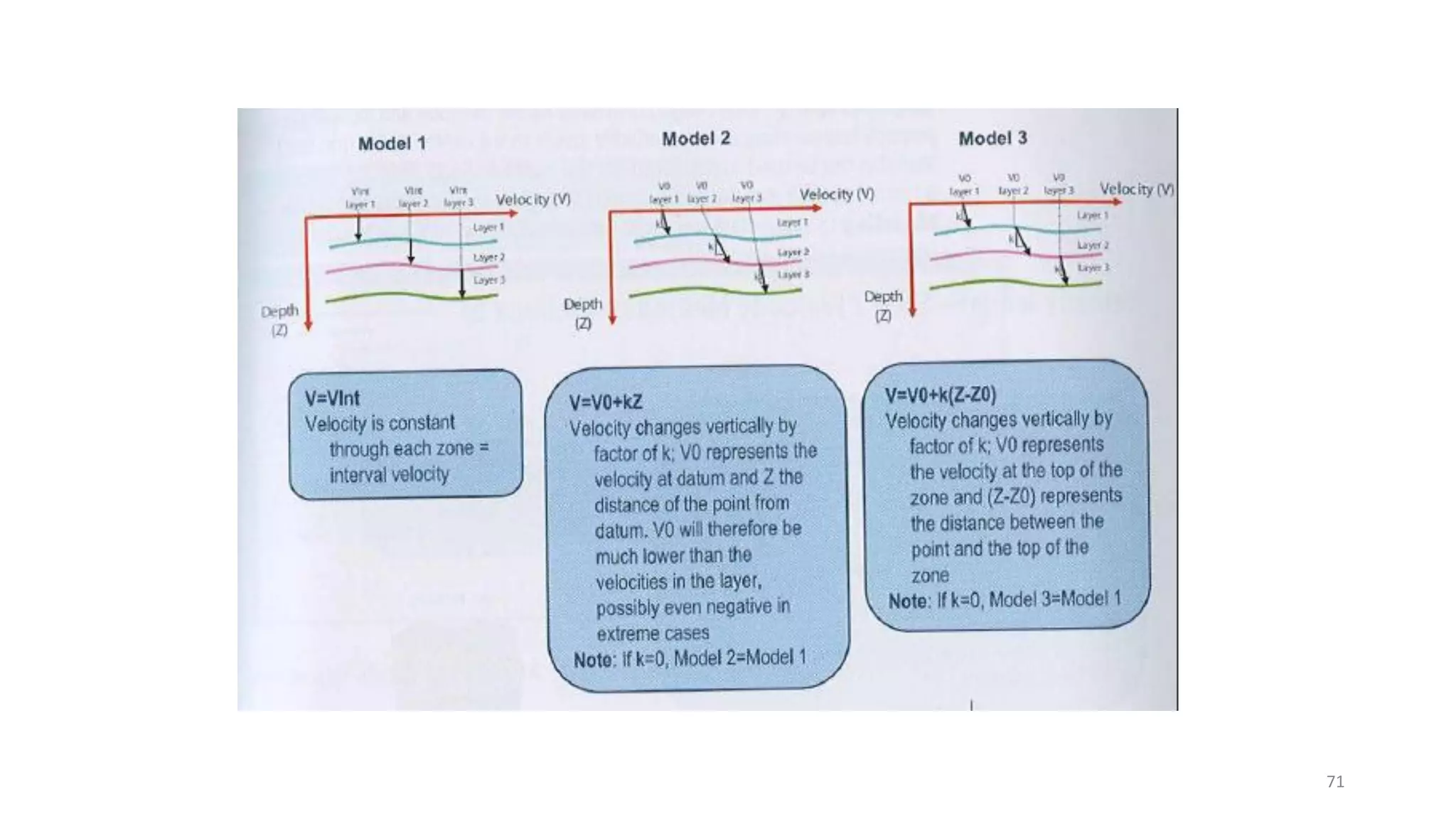

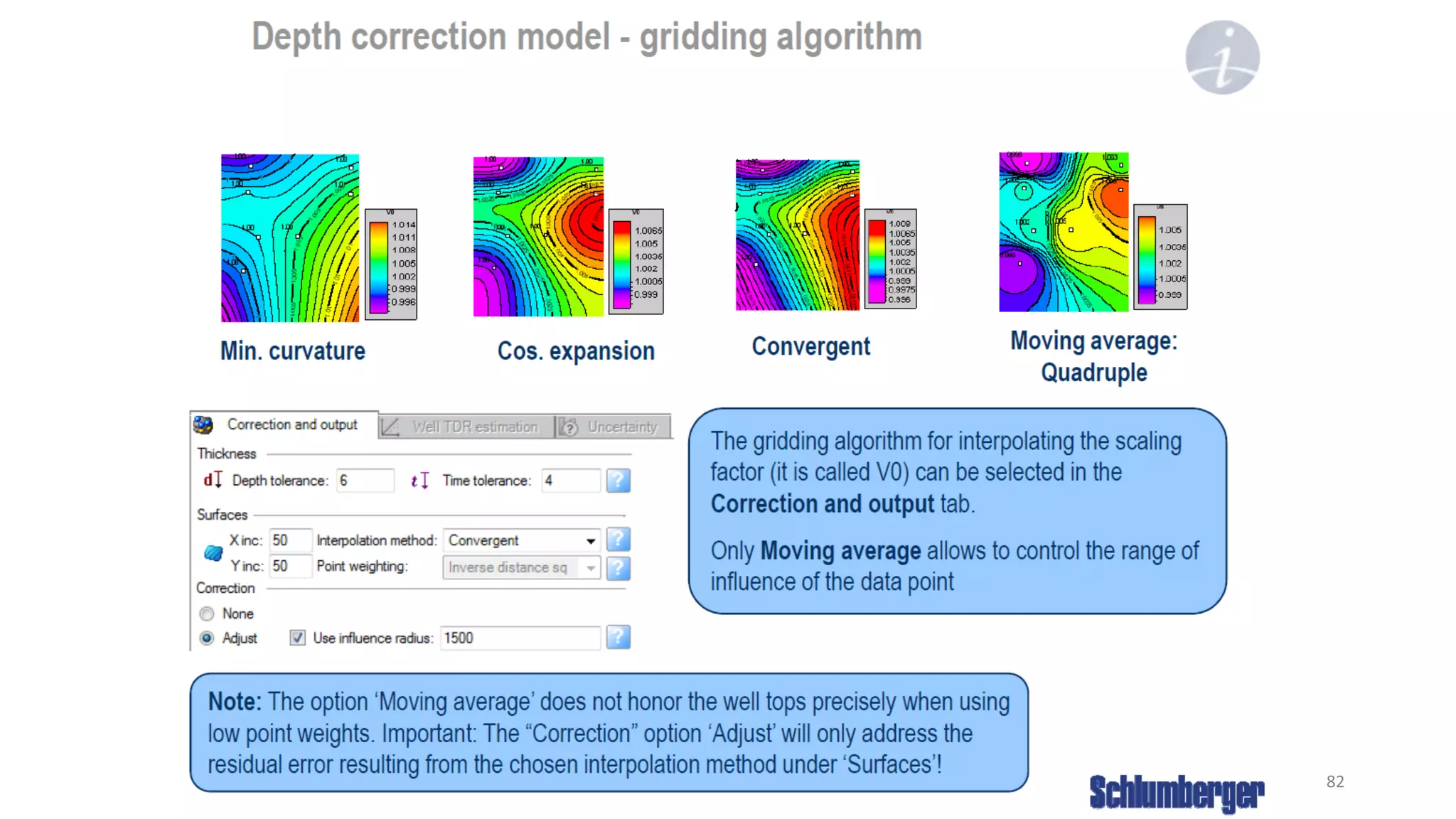

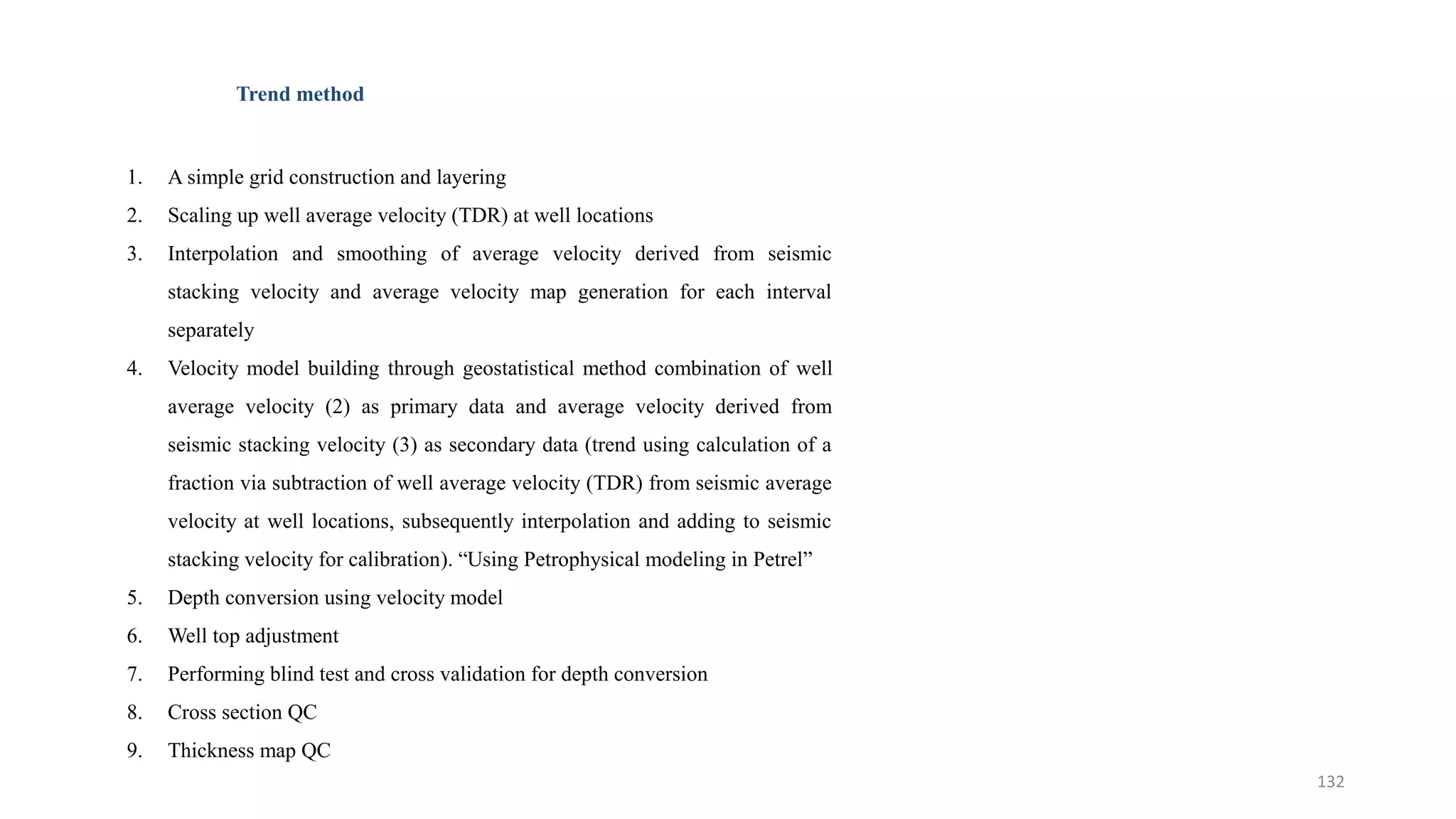

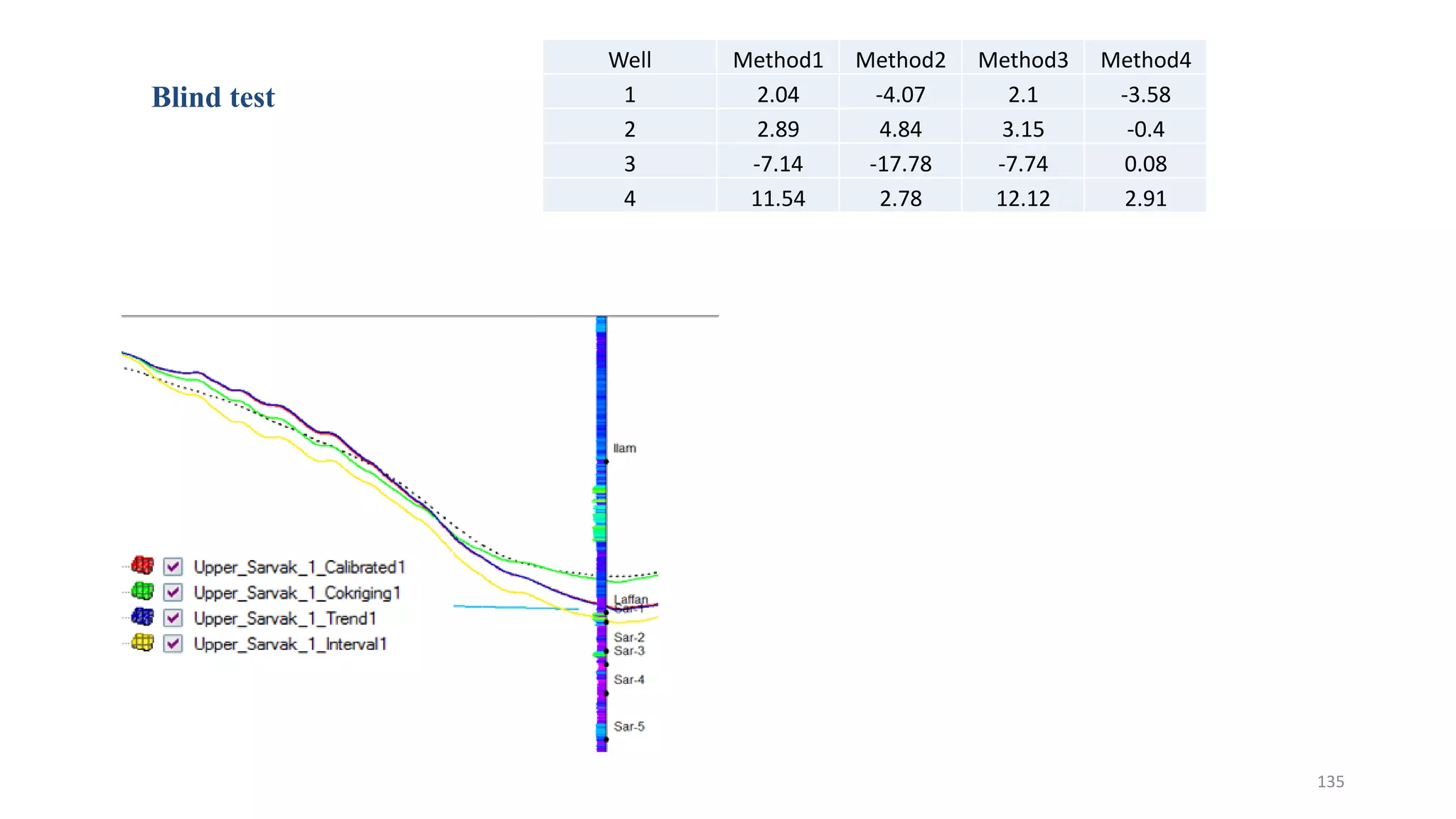

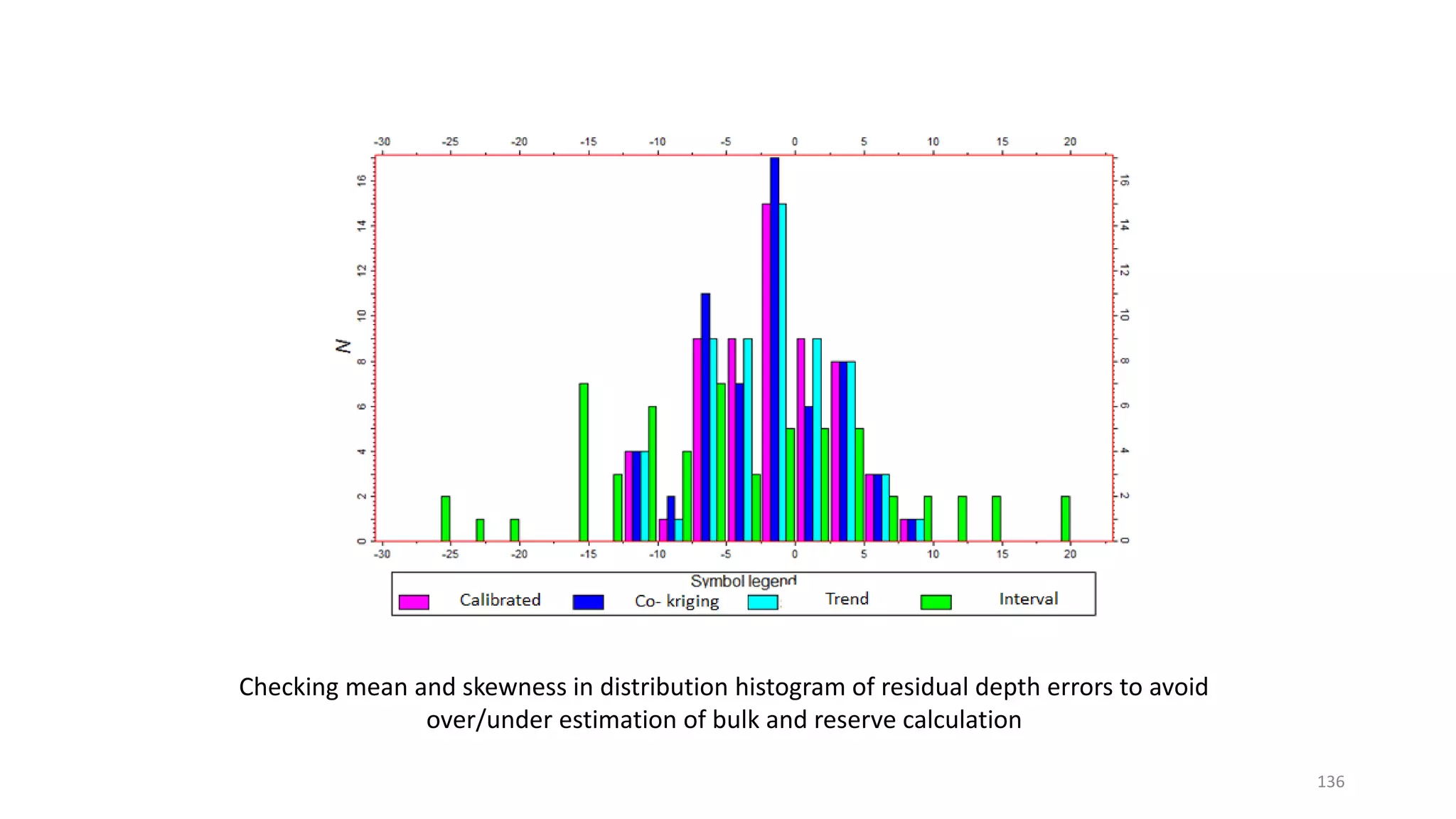

3. Examples are given of different velocity modeling methods in Petrel and their applications, including calibrated co-kriging, trend modeling, and layer cake approaches. Metrics for evaluating velocity model accuracy, such as well tie errors, are also discussed.
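To make the layer cake approach and the well tie error metric concrete, here is a minimal sketch under stated assumptions. It is not the Petrel workflow. It assumes one constant interval velocity per layer, two-way times in seconds, depths in metres, and it defines the well tie error as predicted depth minus well marker depth. The function names and the sample values are hypothetical.

```python
import math


def layer_cake_depths(horizon_twt_s, interval_velocities_ms, datum_depth_m=0.0):
    """Depth-convert horizons layer by layer.

    Each layer adds v * (two-way time thickness) / 2 to the running depth.
    """
    if len(horizon_twt_s) != len(interval_velocities_ms):
        raise ValueError("need one interval velocity per horizon")

    depths = []
    depth = datum_depth_m
    prev_twt = 0.0
    for twt, velocity in zip(horizon_twt_s, interval_velocities_ms):
        depth += velocity * (twt - prev_twt) / 2.0  # halve two-way time
        depths.append(depth)
        prev_twt = twt
    return depths


def well_tie_errors(predicted_depths_m, marker_depths_m):
    """Return per-horizon residuals, their mean (bias) and their RMS."""
    if len(predicted_depths_m) != len(marker_depths_m) or not marker_depths_m:
        raise ValueError("need matching, non-empty depth lists")

    residuals = [p - m for p, m in zip(predicted_depths_m, marker_depths_m)]
    n = len(residuals)
    mean_error = sum(residuals) / n
    rms_error = math.sqrt(sum(r * r for r in residuals) / n)
    return residuals, mean_error, rms_error


# Hypothetical horizons at 1.0 s and 2.0 s with 2000 and 3000 m/s layers.
predicted = layer_cake_depths([1.0, 2.0], [2000.0, 3000.0])
residuals, mean_error, rms_error = well_tie_errors(predicted, [990.0, 2520.0])
```

A mean error far from zero points to a systematic bias in the velocity model, while a large RMS error with a small mean suggests scatter between wells. Reporting both gives a fuller picture than either number alone.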