The document outlines the fundamentals of sound in the context of a media literacy course, detailing aspects such as sound characteristics, recording techniques, and audio editing. It emphasizes the importance of quality sound production and provides an overview of relevant technology, including microphones and sound cards. The course aims to equip learners with the skills necessary for effective sound management in media contexts, including radio and digital platforms.

![FUNDAMENTALS OF SOUND MODULE, ADVANCED COURSE OF MEDIA LITERACY

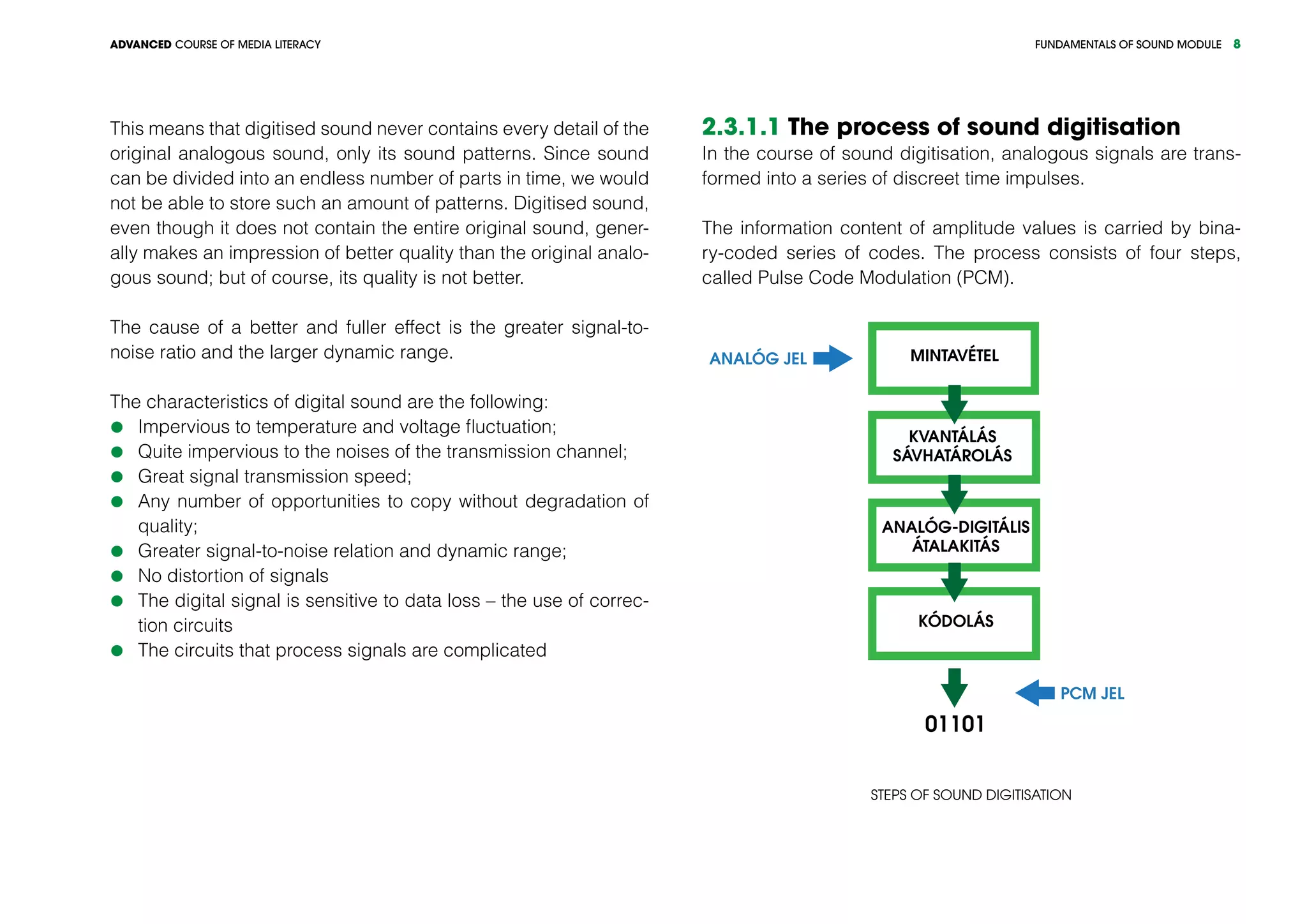

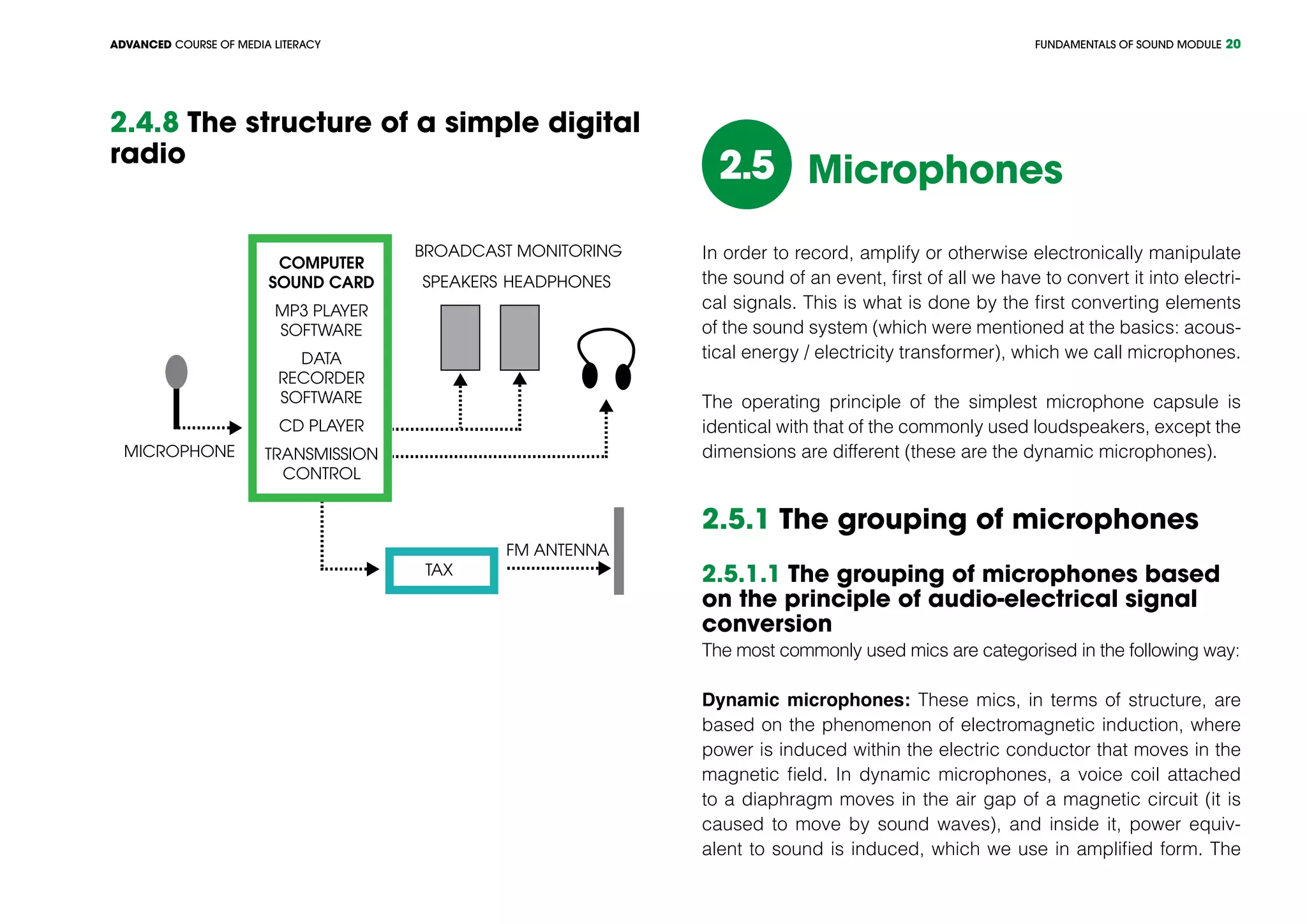

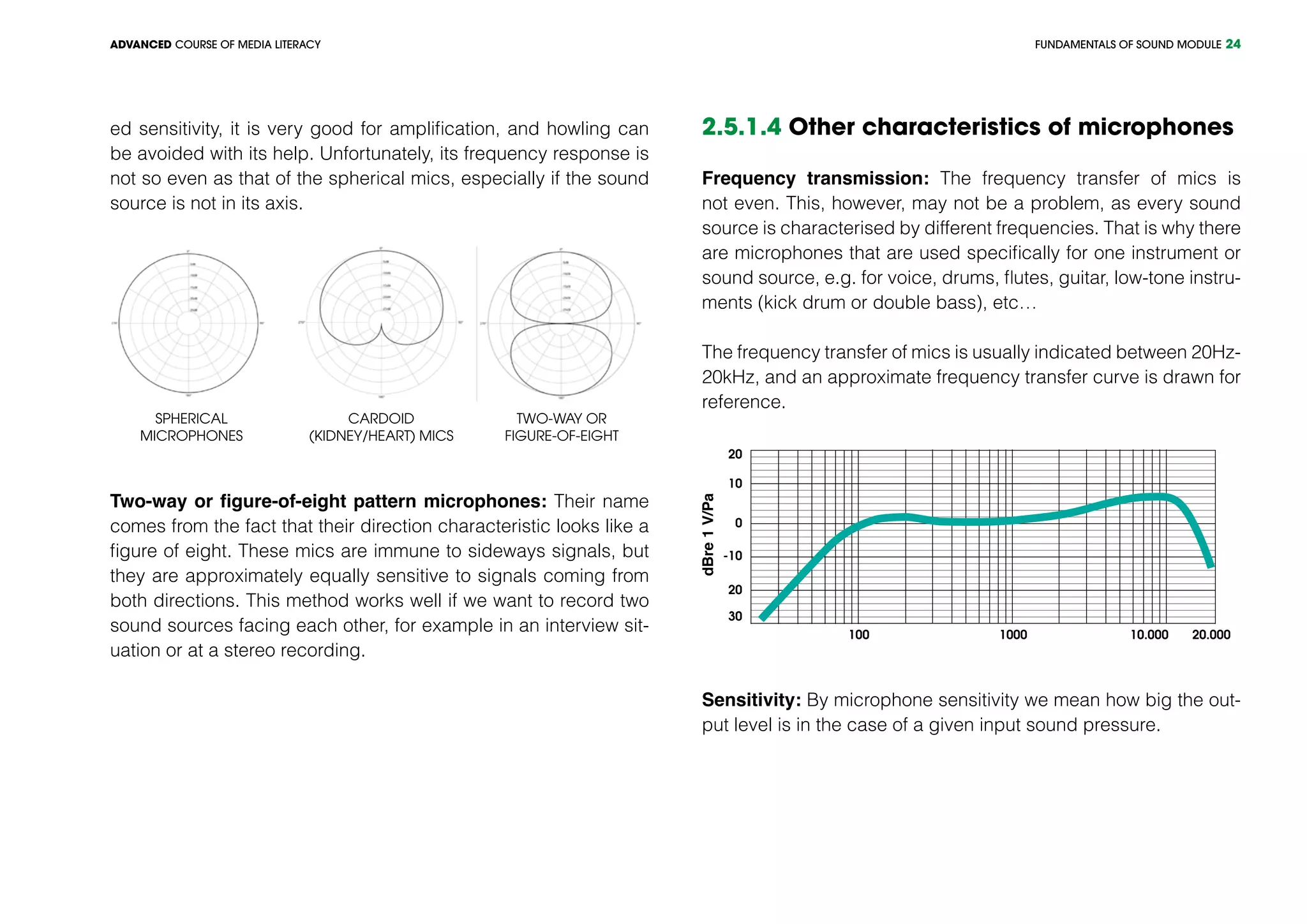

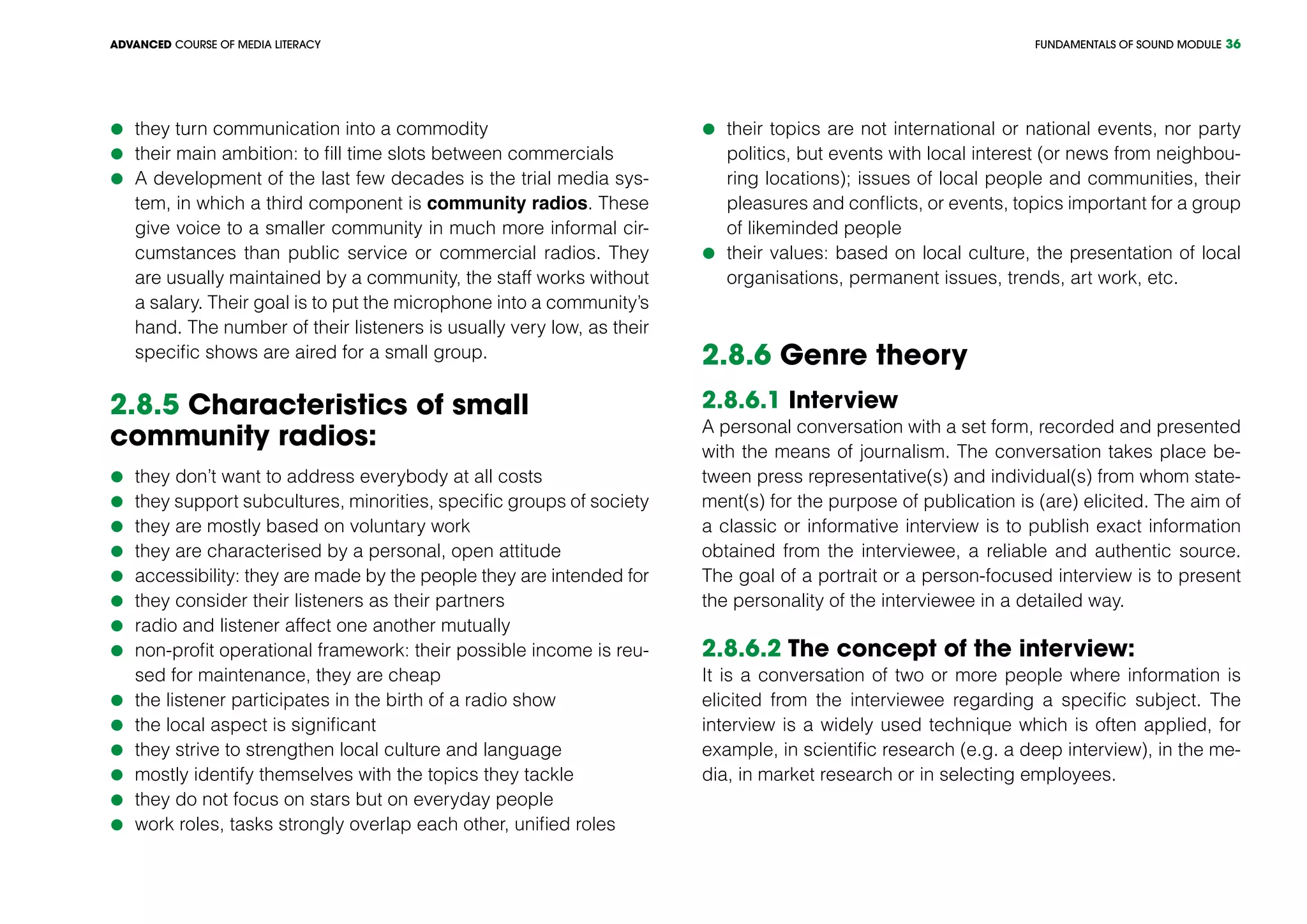

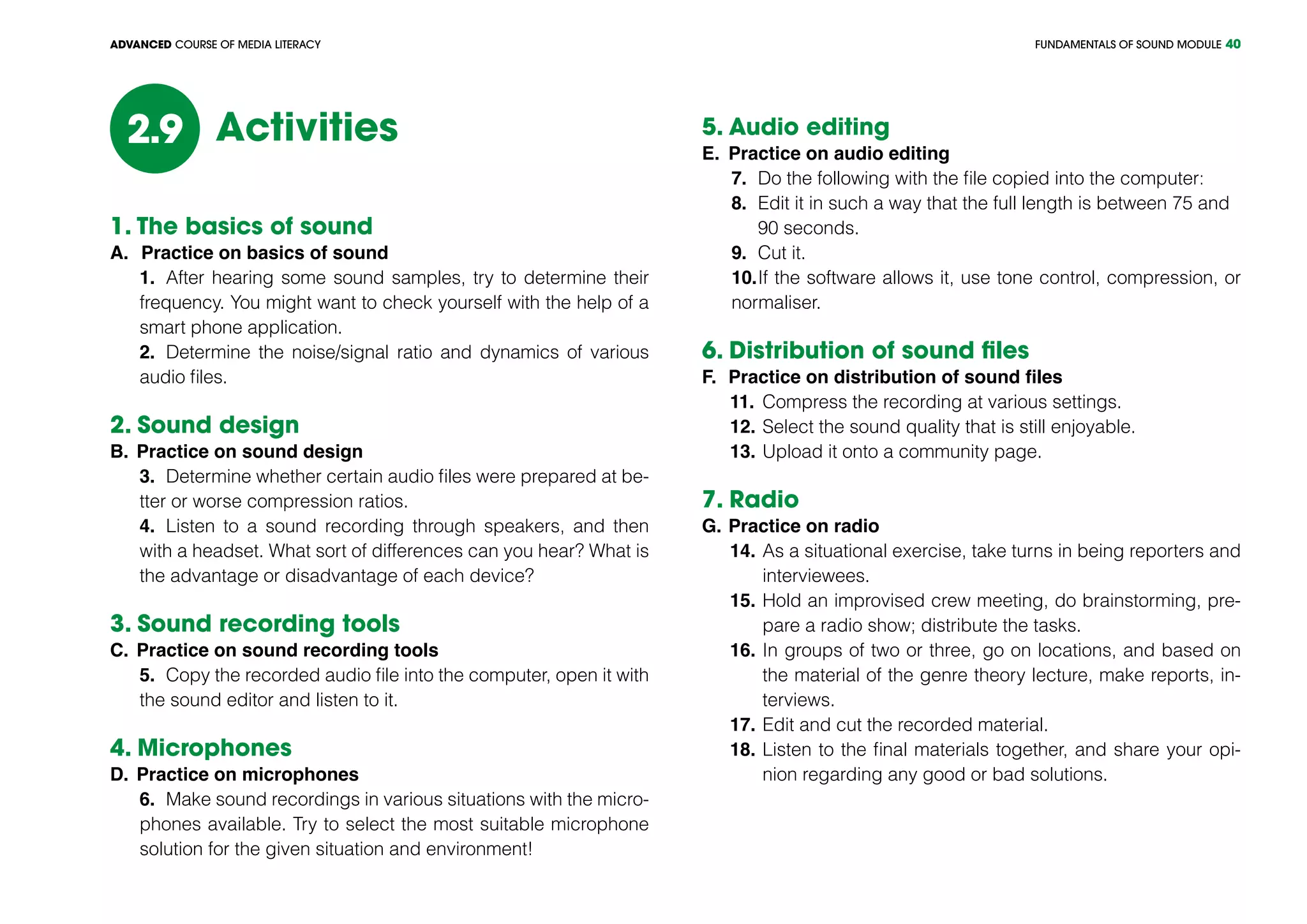

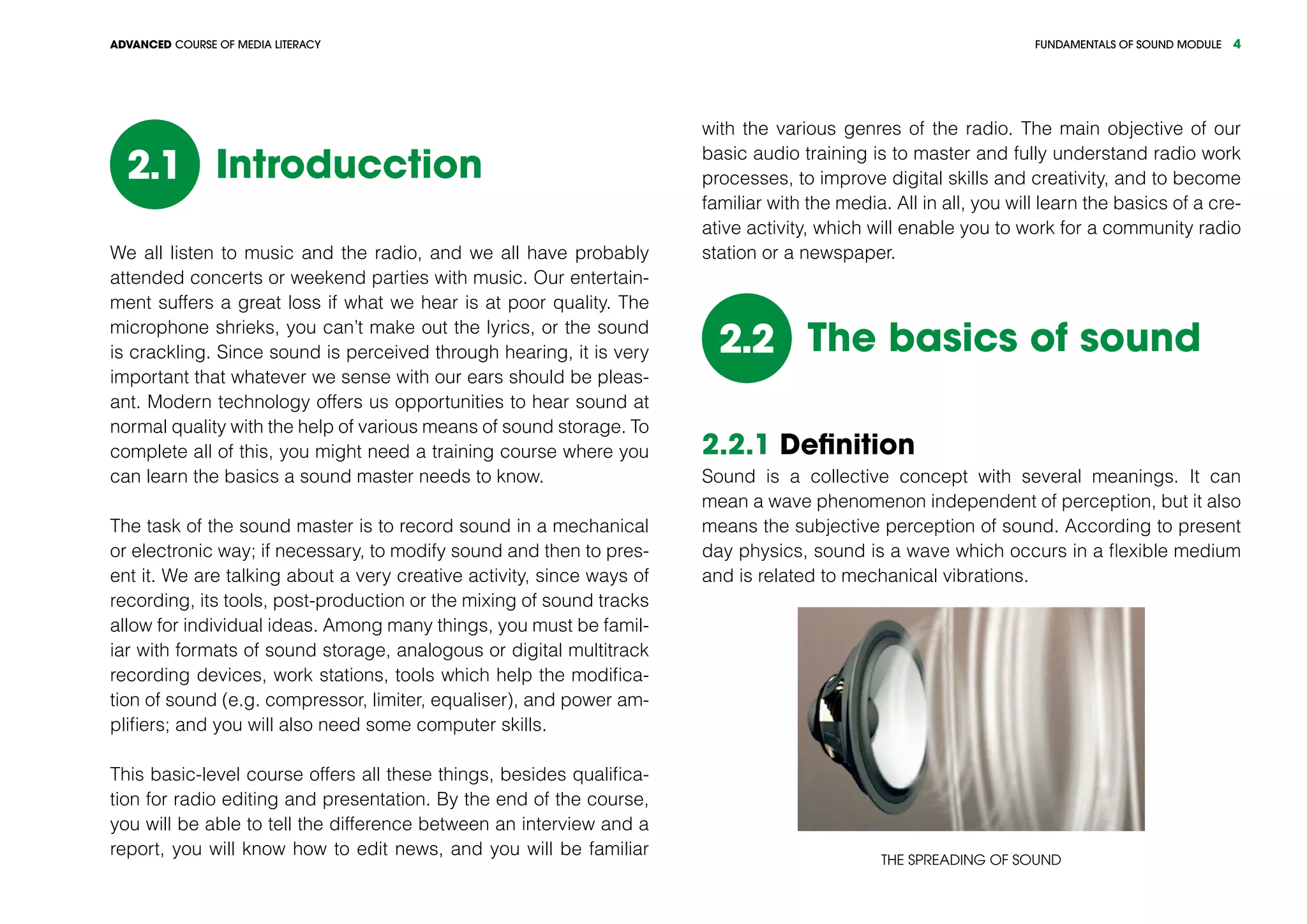

| dB(SPL) | Source (with distance) |
|---|---|
| 194 | Theoretical limit of sound waves at 1 atmosphere of pressure |
| 180 | Rocket engine from 30 m; the explosion of the Krakatoa volcano from 160 km (100 miles) in the air[1] |
| 150 | Jet engine from 30 m |
| 140 | Gunshot from 1 m |
| 120 | Pain threshold; train horn from 10 m |
| 110 | Accelerating motorcycle from 5 m; chainsaw from 1 m |
| 100 | Pneumatic hammer from 2 m; inside a disco |
| 90 | Noisy workshop; heavy truck from 1 m |
| 80 | Vacuum cleaner from 1 m; pavement of a busy street in heavy traffic |
| 70 | Heavy traffic from 5 m |
| 60 | Inside an office or restaurant |
| 50 | Inside a quiet restaurant |
| 40 | Residential area at night |
| 30 | Theatre, in complete silence |
| 10 | Human breathing from 3 m |
| 0 | Human hearing threshold (for healthy ears); the sound of a mosquito’s flight from 3 m |
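The dB(SPL) values above are on a logarithmic scale. As a rough sketch (not taken from the course material), the standard relation is level = 20 · log10(p / p0), where p is the sound pressure and p0 = 20 µPa is the usual reference pressure in air. The function names `spl_db` and `pressure_from_spl` are hypothetical, chosen only for this illustration.

```python
import math

# Assumption: the standard reference pressure for dB(SPL) in air, 20 micropascals.
P_REF = 20e-6


def spl_db(pressure_pa: float) -> float:
    """Convert a sound pressure in pascals to a level in dB(SPL)."""
    if pressure_pa <= 0:
        raise ValueError("pressure must be positive")
    return 20 * math.log10(pressure_pa / P_REF)


def pressure_from_spl(level_db: float) -> float:
    """Convert a level in dB(SPL) back to a sound pressure in pascals."""
    return P_REF * 10 ** (level_db / 20)


if __name__ == "__main__":
    # A pressure equal to the reference sits at the hearing threshold row.
    print(round(spl_db(20e-6)))
    # A pressure of 1 atmosphere (101 325 Pa) lands at the top row of the table.
    print(round(spl_db(101_325)))
    # Every 20 dB step corresponds to a tenfold change in sound pressure.
    print(pressure_from_spl(120) / pressure_from_spl(100))
```

Under these assumptions, a sound pressure of 1 atmosphere works out to roughly 194 dB, which is why the table treats that value as the upper limit, and each 20 dB step between rows means ten times the sound pressure.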

2.3 Sound design

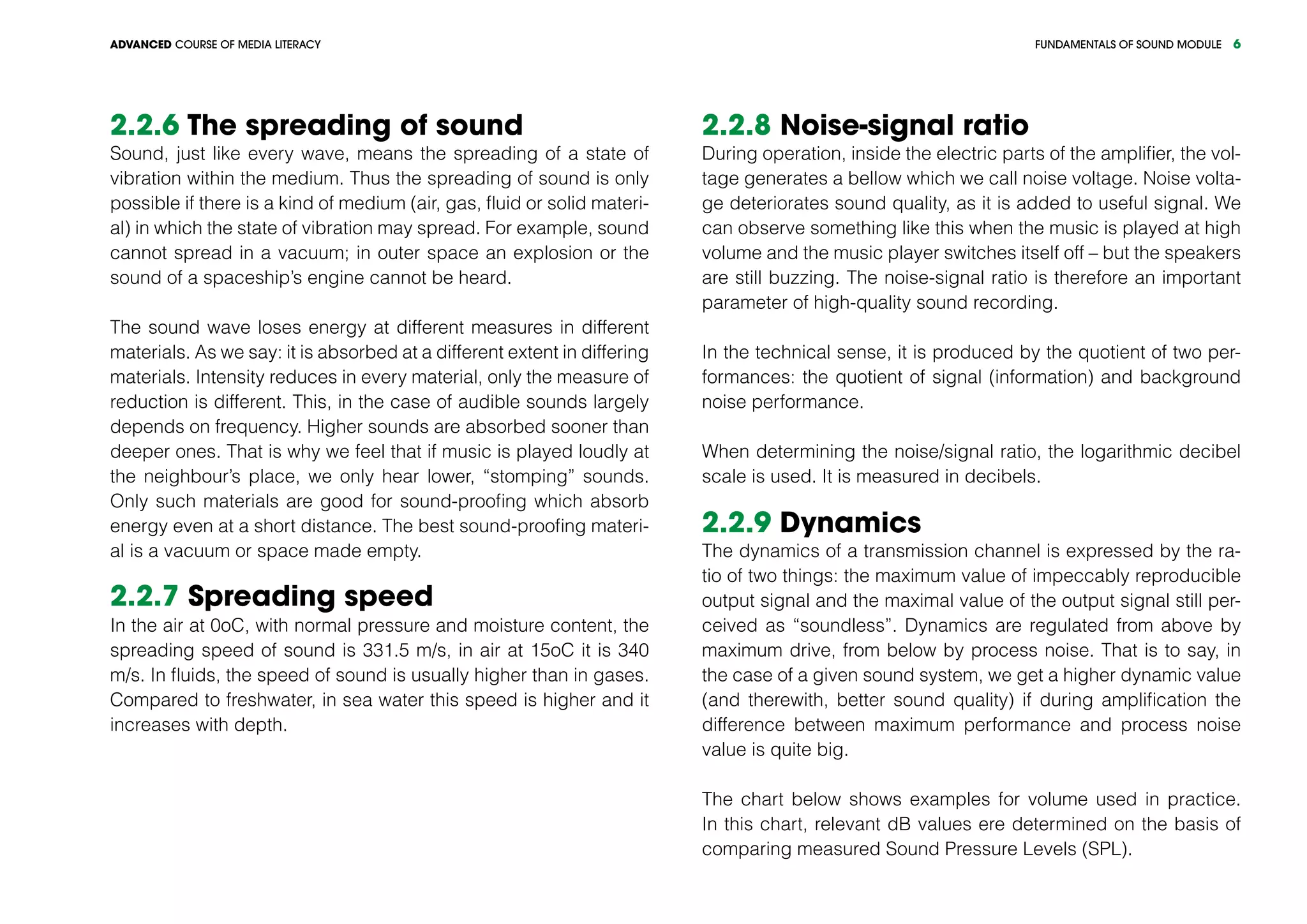

2.3.1 Analogue and digital signals

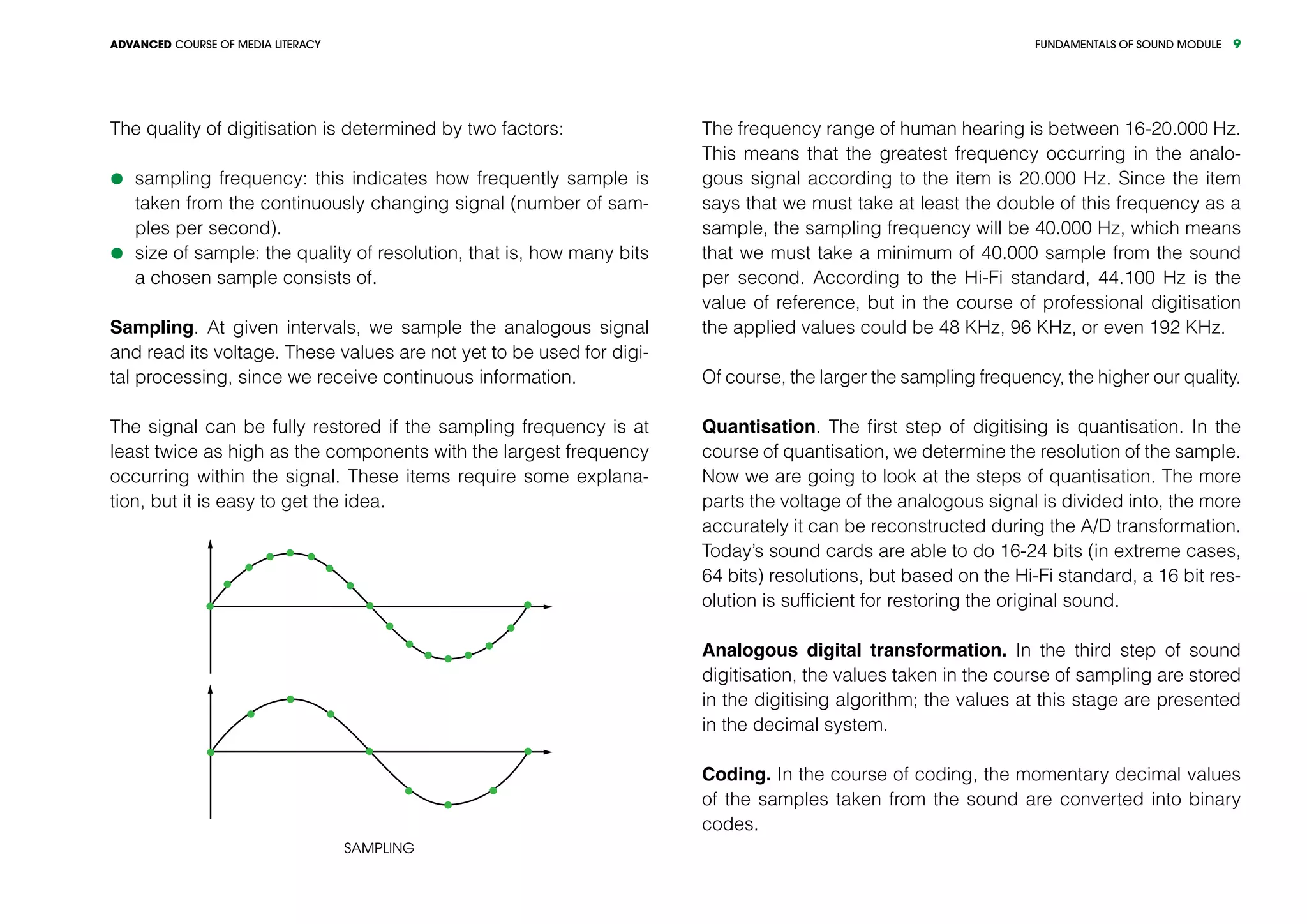

Analogue signals vary continuously in both time and amplitude.

A digital signal, by contrast, consists of a series of discrete impulses rather than a continuous waveform.

The digitisation (blue) of the analogue signal (green)](https://image.slidesharecdn.com/tmabasicmodulesounden-150413090455-conversion-gate01/75/Fundamentals-of-sound-module-basic-level-7-2048.jpg)
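The step from a continuous signal to a series of discrete values can be sketched in a few lines. The example below is only an illustration under simple assumptions: the signal is a 1 Hz sine wave with values between -1 and 1, it is measured at a fixed sample rate, and each measurement is rounded to the nearest of 2^bits evenly spaced levels. The names `digitise` and `analogue_signal` are hypothetical and do not come from the course material.

```python
import math


def digitise(signal, duration_s, sample_rate_hz, bits):
    """Sample a continuous signal and round each sample to a discrete level.

    `signal` is a function of time (seconds) returning values in [-1, 1].
    The result is a list of integer codes in the range 0 .. 2**bits - 1.
    """
    levels = 2 ** bits
    n_samples = int(duration_s * sample_rate_hz)
    codes = []
    for n in range(n_samples):
        t = n / sample_rate_hz                      # discrete point in time
        value = max(-1.0, min(1.0, signal(t)))      # clip to the valid range
        # Map [-1, 1] onto 0 .. levels - 1 and round to the nearest level.
        codes.append(round((value + 1.0) / 2.0 * (levels - 1)))
    return codes


def analogue_signal(t):
    """A 1 Hz sine wave standing in for the continuous (green) signal."""
    return math.sin(2 * math.pi * 1.0 * t)


if __name__ == "__main__":
    # Eight samples per second at 3 bits gives a coarse, stepped version
    # of the sine wave, similar in spirit to the blue curve in the figure.
    print(digitise(analogue_signal, duration_s=1.0, sample_rate_hz=8, bits=3))
```

Raising the sample rate gives more points in time, and raising the number of bits gives finer amplitude steps; both bring the digital version closer to the original waveform.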