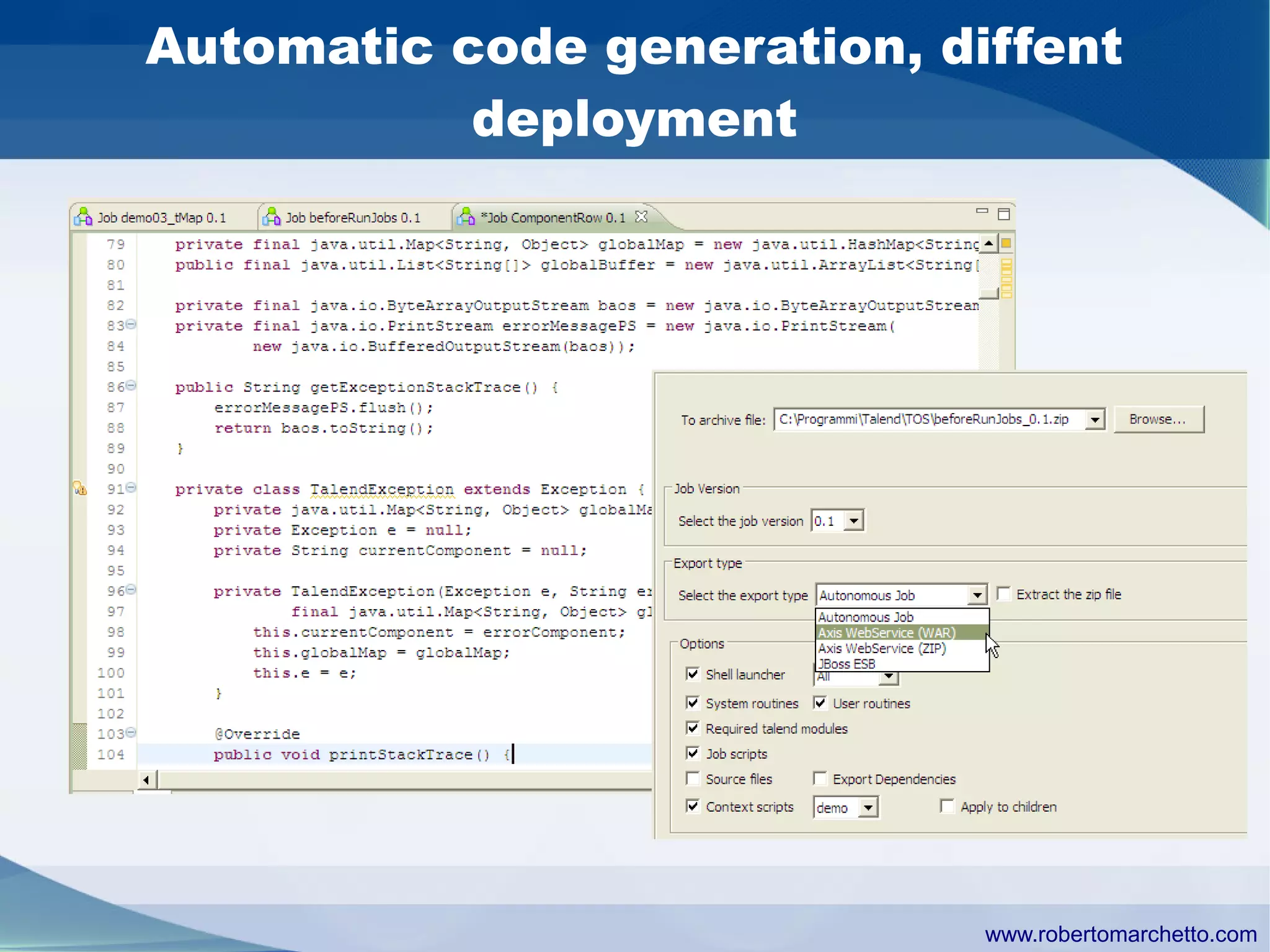

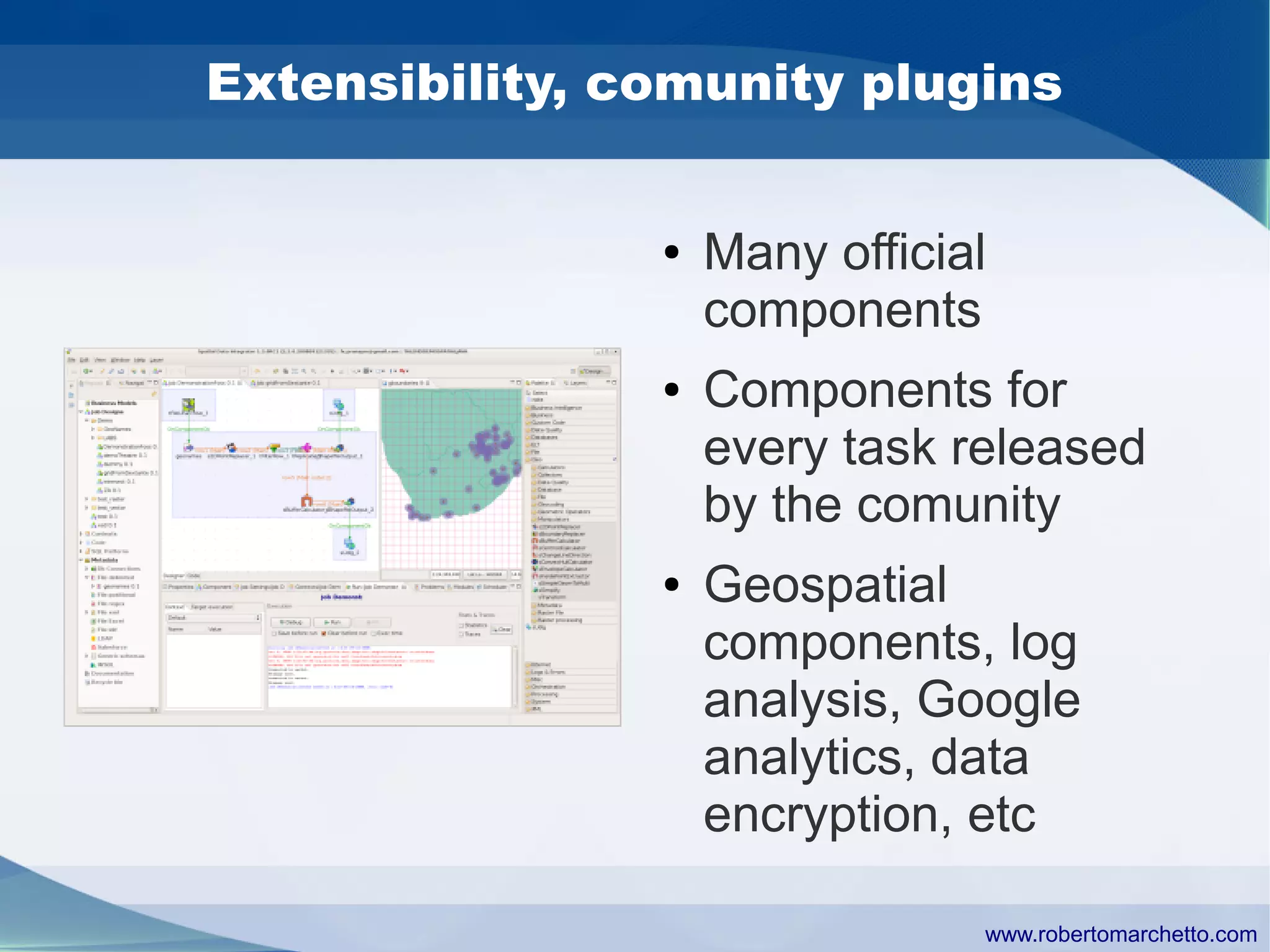

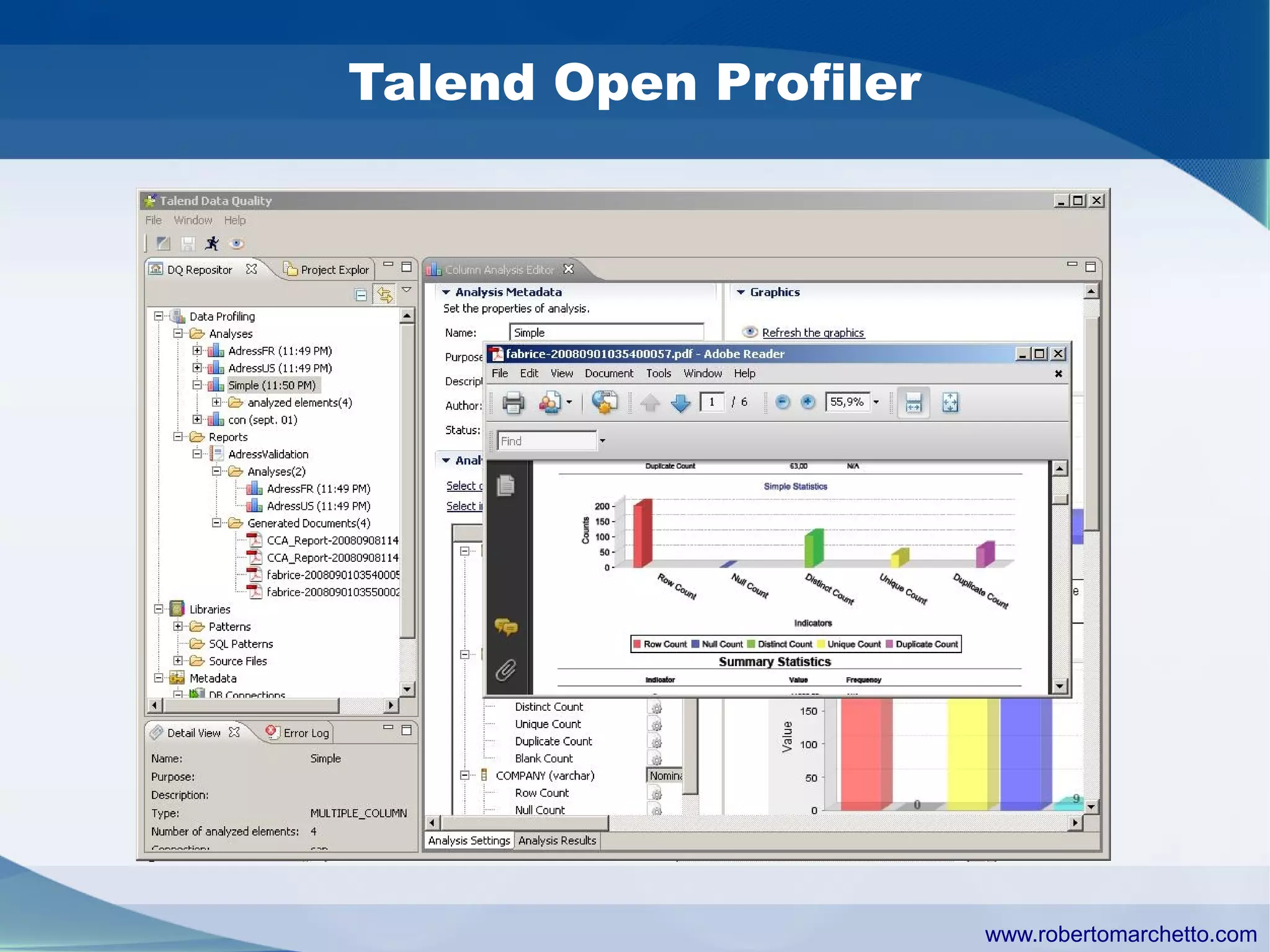

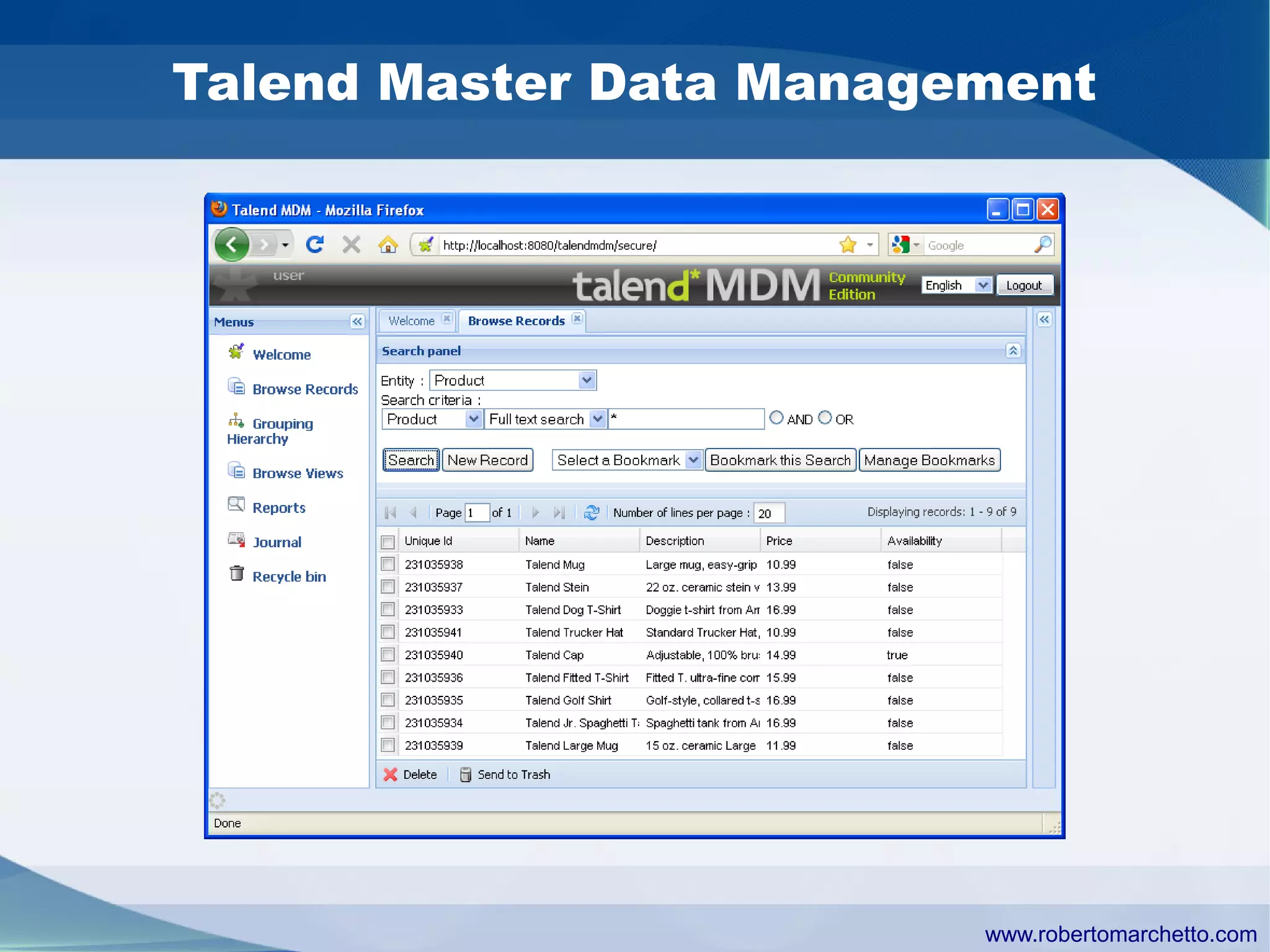

Talend provides data integration and data management software. Its focus is combining data from different sources into a unified view for users. Its open-source tool, Talend Open Studio, lets users visually design procedures that extract, transform, and load (ETL) data between various databases and file types. Talend also offers features for data quality, storage optimization, master data management, and reporting.
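
As a rough illustration of the extract, transform, and load pattern described above, the sketch below moves a few rows from CSV text into a database table using only the Python standard library. It is not Talend's API or the code Talend Open Studio generates. The sample data, the `payments` table, and the function names `extract`, `transform`, `load`, and `run_etl` are all hypothetical, chosen only to show the three stages.

```python
import csv
import io
import sqlite3

# Hypothetical sample input standing in for a source file.
SAMPLE_CSV = "id,name,amount\n1, alice ,10.50\n2,Bob,n/a\n3,carol,7\n"


def extract(text):
    """Extract: read raw rows from CSV text as dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: normalize names and drop rows whose amount is not numeric."""
    cleaned = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # skip rows that fail this simple quality check
        cleaned.append((int(row["id"]), row["name"].strip().title(), amount))
    return cleaned


def load(conn, records):
    """Load: write the cleaned records into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS payments "
        "(id INTEGER PRIMARY KEY, name TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO payments VALUES (?, ?, ?)", records)
    conn.commit()


def run_etl(text=SAMPLE_CSV):
    """Run the three stages against an in-memory SQLite database."""
    conn = sqlite3.connect(":memory:")
    load(conn, transform(extract(text)))
    return conn.execute(
        "SELECT id, name, amount FROM payments ORDER BY id"
    ).fetchall()


if __name__ == "__main__":
    for record in run_etl():
        print(record)
```

In a visual tool, each of these functions would roughly correspond to a component on the design canvas: an input component, a mapping or filtering component, and an output component wired together in sequence.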