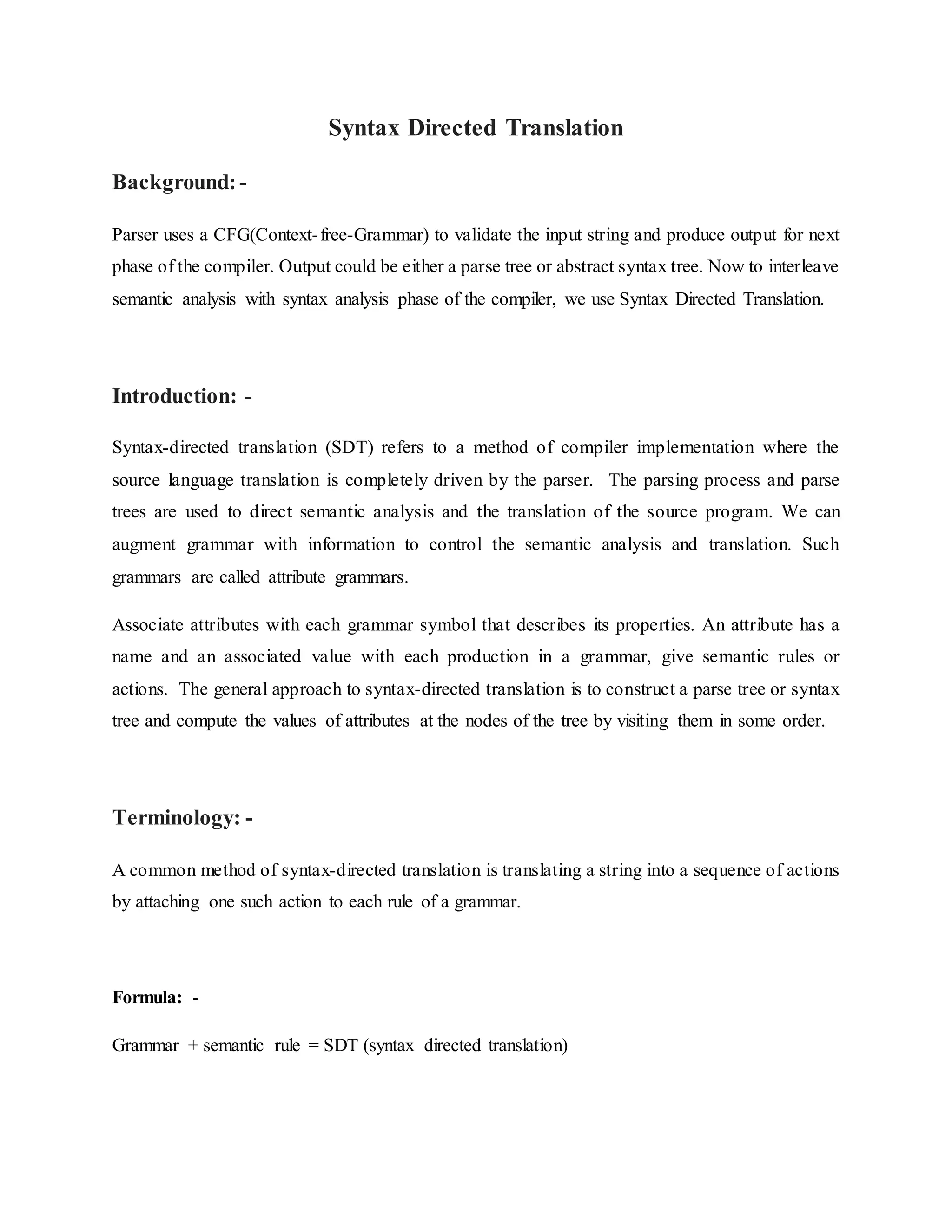

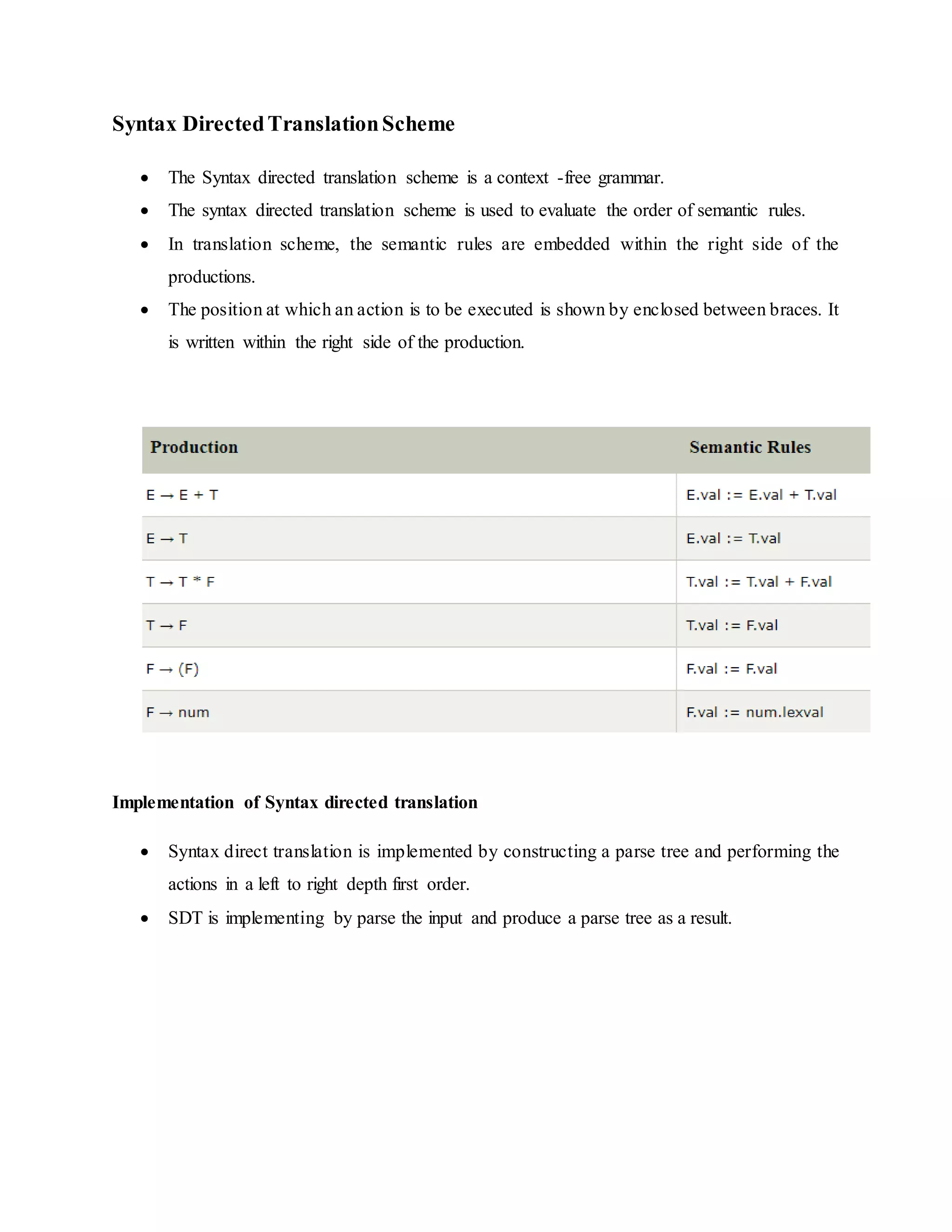

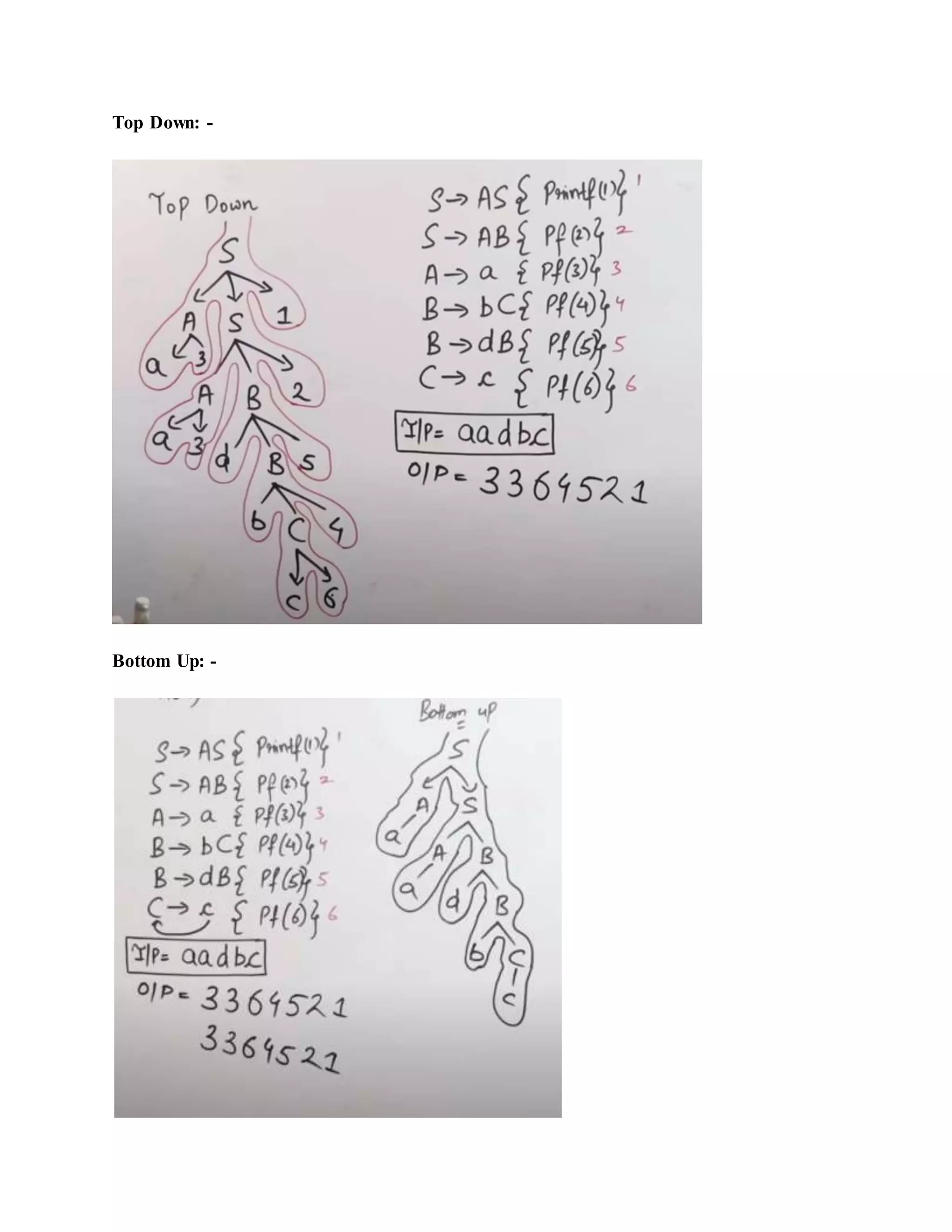

Syntax-directed translation (SDT) is a compiler implementation technique in which translation is driven by the parser, with the structure of the parse tree guiding semantic analysis. It augments a context-free grammar with attributes and semantic rules, so that attribute values are computed during parsing. SDT simplifies translation, but it has limitations; for example, semantic actions that read or update global data can be difficult to manage.
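The following is a minimal sketch, not taken from the original text, of how attributes and semantic rules can be evaluated during parsing. It is a recursive-descent evaluator for a small arithmetic grammar, and it gives each nonterminal a synthesized attribute `val`. The class name `Evaluator` and its methods are illustrative assumptions. Recursive descent cannot handle left-recursive rules such as E → E + T, so those rules are rewritten as loops. The parse tree is also never built as a data structure; it exists only implicitly in the call stack.

```python
import re

# Hypothetical example grammar with semantic rules (synthesized attribute `val`):
#   E -> T { + T }     E.val = sum of the T.val values
#   T -> F { * F }     T.val = product of the F.val values
#   F -> num | ( E )   F.val = int(num) | E.val


class Evaluator:
    def __init__(self, text: str) -> None:
        # Tokens are runs of digits or single non-space characters;
        # unexpected characters are rejected later by the parser.
        self.tokens = re.findall(r"\d+|\S", text)
        self.pos = 0

    def peek(self):
        return self.tokens[self.pos] if self.pos < len(self.tokens) else None

    def eat(self, expected=None):
        tok = self.peek()
        if tok is None or (expected is not None and tok != expected):
            raise SyntaxError(f"expected {expected!r}, got {tok!r}")
        self.pos += 1
        return tok

    def parse(self) -> int:
        val = self.expr()
        if self.peek() is not None:
            raise SyntaxError(f"unexpected token {self.peek()!r}")
        return val

    def expr(self) -> int:
        # E -> T { + T }: semantic rule E.val = E1.val + T.val (left-associative)
        val = self.term()
        while self.peek() == "+":
            self.eat("+")
            val = val + self.term()
        return val

    def term(self) -> int:
        # T -> F { * F }: semantic rule T.val = T1.val * F.val
        val = self.factor()
        while self.peek() == "*":
            self.eat("*")
            val = val * self.factor()
        return val

    def factor(self) -> int:
        # F -> ( E ): semantic rule F.val = E.val
        if self.peek() == "(":
            self.eat("(")
            val = self.expr()
            self.eat(")")
            return val
        # F -> num: semantic rule F.val = integer value of the token
        tok = self.eat()
        if not tok.isdecimal():
            raise SyntaxError(f"expected a number, got {tok!r}")
        return int(tok)
```

For example, `Evaluator("2 + 3 * 4").parse()` returns 14, because the grammar gives `*` higher precedence than `+`. The attribute values depend only on the children of each node, so no global data is needed here. A translator that tracks declarations in a shared symbol table would not have this property.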