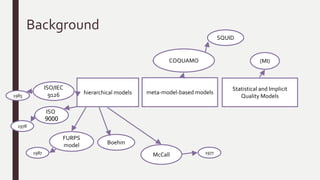

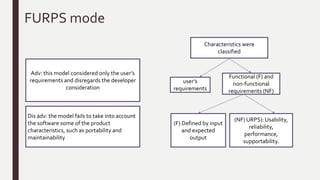

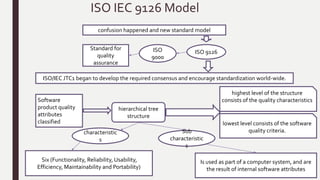

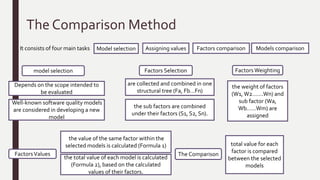

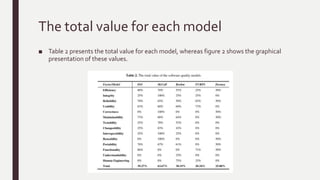

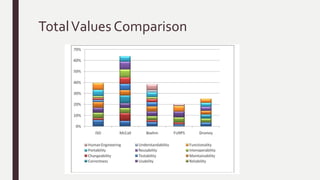

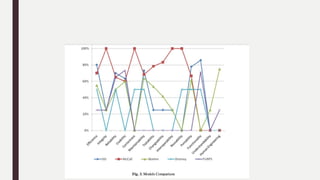

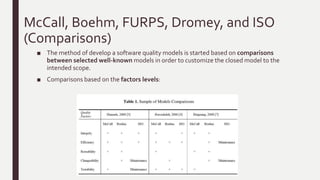

The document presents a comparative study of various software quality models, including McCall, Boehm, FURPS, Dromey, and ISO/IEC 9126, outlining their advantages and disadvantages. It proposes a formal comparison method based on mathematical evaluation to assess strengths and weaknesses and aid in creating standardized software quality metrics. The study also emphasizes the importance of defining clear evaluation criteria and the relationships between quality factors and software characteristics.

![Introduction

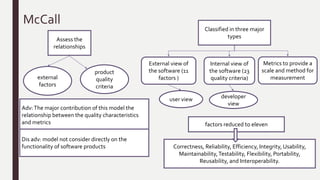

■ The McCall model [2], developed in 1976-77, is one of the oldest software quality models. It started with 55 quality characteristics that have an important influence on quality, and called them "factors".

■ The 55 quality factors were condensed into eleven main factors in order to simplify the model.

■ The quality of software products was defined according to three major perspectives:

– product revision (ability to undergo changes),

– product transition (adaptability to new environments), and

– product operations (operational characteristics).

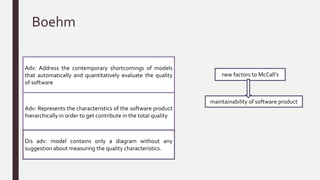

■ The Boehm model [4] was based on the McCall model; Boehm defined a second set of quality factors.

■ SPARDAT is a commercial quality model that was developed in a banking environment. The model classifies three significant factors: applicability, maintainability, and adaptability.](https://image.slidesharecdn.com/presentation1-170521145141/85/Software-Quality-Models-A-Comparative-Study-paper-5-320.jpg)
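The McCall structure described above (eleven factors grouped under three perspectives) can be sketched as a small lookup table. This is only an illustrative sketch: the slides give the three perspectives and the count of eleven, but not the factor names, so the names below are an assumption taken from the commonly cited form of the McCall model. `MCCALL_MODEL`, `perspective_of`, and `factor_count` are hypothetical names introduced here.

```python
# McCall's three perspectives, as listed in the slide text.
# ASSUMPTION: the factor names under each perspective come from the commonly
# cited form of the model; the slides only state that there are eleven.
MCCALL_MODEL = {
    "product revision": ["maintainability", "flexibility", "testability"],
    "product transition": ["portability", "reusability", "interoperability"],
    "product operations": [
        "correctness",
        "reliability",
        "efficiency",
        "integrity",
        "usability",
    ],
}


def perspective_of(factor: str) -> str:
    """Return the perspective that a given quality factor belongs to."""
    name = factor.strip().lower()
    for perspective, factors in MCCALL_MODEL.items():
        if name in factors:
            return perspective
    raise KeyError(f"unknown McCall factor: {factor!r}")


def factor_count() -> int:
    """Total number of main factors across all three perspectives."""
    return sum(len(factors) for factors in MCCALL_MODEL.values())
```

A table like this makes it straightforward to set models side by side, for example by checking which of SPARDAT's three factors (applicability, maintainability, adaptability) have a same-named counterpart among McCall's.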