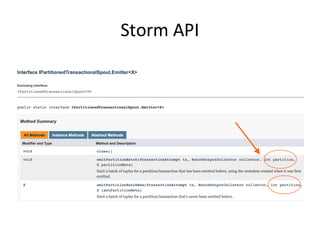

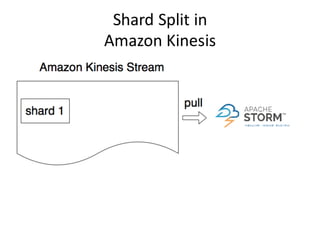

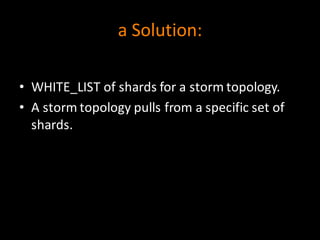

Apache Storm is a stream processing framework for handling real-time data from sources such as Apache Kafka or Amazon Kinesis. When an Amazon Kinesis stream is resharded, that is, when its data is repartitioned into new shards, the partition metadata that Storm relies on becomes invalid. One way to address this is to define a whitelist of shards for each Storm topology, so that individual topologies are unaffected when shards are added to or removed from the stream.
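
The whitelist idea can be illustrated with a minimal sketch. It is not the Storm or Kinesis API: the topology names, the shard IDs, the `SHARD_WHITELISTS` mapping, and the `shards_for_topology` helper are all hypothetical, and the list of live shards is assumed to have been fetched from the stream elsewhere. The sketch only shows that a topology restricted to a fixed set of shards keeps the same assignment when new shards appear.

```python
from typing import Dict, FrozenSet, Iterable, List

# Hypothetical per-topology whitelists; names and shard IDs are illustrative only.
SHARD_WHITELISTS: Dict[str, FrozenSet[str]] = {
    "clicks-topology": frozenset({"shardId-000000000000", "shardId-000000000001"}),
    "orders-topology": frozenset({"shardId-000000000002"}),
}


def shards_for_topology(topology: str, live_shards: Iterable[str]) -> List[str]:
    """Return the live shards that the given topology is allowed to read, sorted."""
    allowed = SHARD_WHITELISTS.get(topology, frozenset())
    return sorted(shard for shard in live_shards if shard in allowed)


# Shards listed for the stream before a (hypothetical) resharding operation.
shards_before = [
    "shardId-000000000000",
    "shardId-000000000001",
    "shardId-000000000002",
]

# After the resharding, two new shards appear alongside the existing ones.
shards_after = shards_before + ["shardId-000000000003", "shardId-000000000004"]

# The whitelisted assignment is identical before and after the change.
clicks_before = shards_for_topology("clicks-topology", shards_before)
clicks_after = shards_for_topology("clicks-topology", shards_after)
```

In this sketch the new shards are ignored until someone adds them to a whitelist, so picking them up is an explicit configuration change and not a side effect of the resharding itself.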