Embed presentation

Download to read offline

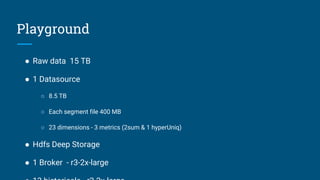

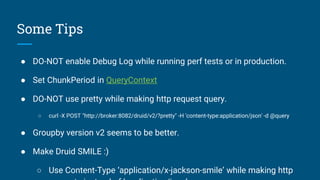

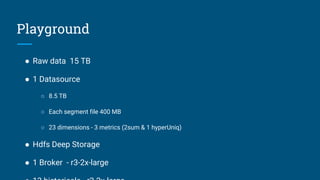

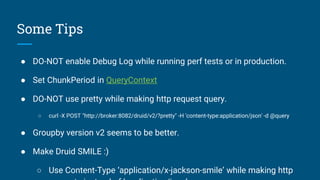

The document provides performance tips for using Druid with a setup involving 15 TB of raw data and a single datasource of 8.5 TB. Key recommendations include not enabling debug logs during performance tests, optimizing query context settings, and utilizing the 'application/x-jackson-smile' content type for better efficiency. The author also notes the effectiveness of groupby version v2 for improved performance.