Soham Patra_13000120121.pdf

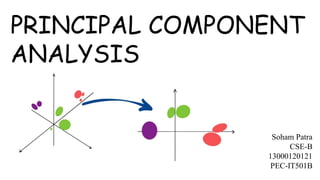

- 2. What is Principal Component Analysis?

  PCA is an unsupervised learning algorithm used for dimensionality reduction in machine learning.

  Unsupervised learning: discovering patterns in a data set without human help, for example clustering.

  Dimensionality reduction comes in two types:

  1. Feature elimination: removing some of the features to reduce the feature space.
  2. Feature extraction: creating new features, each of which is a combination of the old features.
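
The difference between the two types can be sketched in a few lines of Python. The rows, the helper names `eliminate` and `extract`, and the weights are illustrative assumptions; in particular the weights are arbitrary placeholders, not values produced by PCA.

```python
# Toy rows: (time complexity, space complexity, lines of code); illustrative values.
rows = [(9, 7, 7), (8, 8, 8), (6, 9, 7)]

def eliminate(row, keep):
    """Feature elimination: keep only the features at the given indices."""
    return tuple(row[i] for i in keep)

def extract(row, weights):
    """Feature extraction: each new feature is a weighted sum of all old features."""
    return tuple(sum(w * x for w, x in zip(ws, row)) for ws in weights)

# Drop the third feature entirely.
eliminated = [eliminate(r, keep=(0, 1)) for r in rows]

# Build two new features from three; placeholder weights, not PCA output.
weights = [(0.5, 0.5, 0.0), (0.0, 0.5, 0.5)]
extracted = [extract(r, weights) for r in rows]

print(eliminated)
print(extracted)
```

PCA is a feature-extraction method: it chooses the weights from the data instead of fixing them by hand.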

- 3. What is Principal Component Analysis?

  PCA converts correlated features into a set of uncorrelated features by means of an orthogonal transformation. These new features are called principal components. It is a technique for drawing out strong patterns from a dataset by reducing the number of variables.

  The PCA algorithm is based mainly on two mathematical concepts:

  1. Variance and covariance
  2. Eigenvalues and eigenvectors
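
For reference, the two concepts can be written out as follows. The notation is an assumption, since the slides do not define it: $x_i, y_i$ are the $n$ observed values of two features, $\bar{x}, \bar{y}$ their means, $C$ a square matrix, $\mathbf{v}$ an eigenvector and $\lambda$ its eigenvalue.

```latex
\operatorname{Var}(x) = \frac{1}{n-1}\sum_{i=1}^{n}(x_i-\bar{x})^2,
\qquad
\operatorname{Cov}(x,y) = \frac{1}{n-1}\sum_{i=1}^{n}(x_i-\bar{x})(y_i-\bar{y})

C\,\mathbf{v} = \lambda\,\mathbf{v}
\quad\text{with } \lambda \text{ a root of } \det(C-\lambda I) = 0
```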

- 4. Example

  Let's consider a dataset in which we compare 6 algorithms for solving a problem on the basis of time complexity, space complexity and lines of code:

  | | Algo 1 | Algo 2 | Algo 3 | Algo 4 | Algo 5 | Algo 6 |
  |---|---|---|---|---|---|---|
  | Time Complexity | 9 | 8 | 6 | 3 | 3 | 1 |
  | Space Complexity | 7 | 8 | 9 | 5 | 3 | 4 |
  | Lines of Code | 7 | 8 | 7 | 2 | 4 | 2 |

  [Figure: plot of the six data points, labelled 1 to 6]

- 5. Standardize the Data

  To standardize the data (here this means centering it), we find the mean vector and subtract it from each point:

  - Mean for Time Complexity = (9+8+6+3+3+1) / 6 = 5
  - Mean for Space Complexity = (7+8+9+5+3+4) / 6 = 6
  - Mean for Lines of Code = (7+8+7+2+4+2) / 6 = 5

  Mean vector = (5, 6, 5)

  So the new data set looks like this:

  | | Algo 1 | Algo 2 | Algo 3 | Algo 4 | Algo 5 | Algo 6 |
  |---|---|---|---|---|---|---|
  | Time Complexity | 4 | 3 | 1 | -2 | -2 | -4 |
  | Space Complexity | 1 | 2 | 3 | -1 | -3 | -2 |
  | Lines of Code | 2 | 3 | 2 | -3 | -1 | -3 |

  [Figure: plot of the centered data points, labelled 1 to 6]
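
The centering step can be sketched in plain Python. The dictionary keys and the helper name `center` are assumptions made for illustration; the numbers are the ones from the example.

```python
# Dataset from the example: one entry per feature, one value per algorithm.
data = {
    "time_complexity":  [9, 8, 6, 3, 3, 1],
    "space_complexity": [7, 8, 9, 5, 3, 4],
    "lines_of_code":    [7, 8, 7, 2, 4, 2],
}

def center(values):
    """Return the mean of a feature and the values with that mean subtracted."""
    mean = sum(values) / len(values)
    return mean, [v - mean for v in values]

means = {}
centered = {}
for name, values in data.items():
    means[name], centered[name] = center(values)

print(means)
print(centered)
```

Only the mean is removed here. Full standardization would also divide each feature by its standard deviation, which the worked example does not do.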

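- 6. Finding the Covariance Matrix

  Now we form an $n \times m$ matrix $A$ from the centered data, where $n$ is the number of variables (dimensions) and $m$ is the number of points:

  $$A = \begin{pmatrix} 4 & 3 & 1 & -2 & -2 & -4 \\ 1 & 2 & 3 & -1 & -3 & -2 \\ 2 & 3 & 2 & -3 & -1 & -3 \end{pmatrix}$$

  The covariance matrix is then $C = A A^{T}$. Strictly, this product is the scatter matrix; the division by $m - 1$ that turns it into the sample covariance is applied later, to the eigenvalues.

  $$C = \begin{pmatrix} 4 & 3 & 1 & -2 & -2 & -4 \\ 1 & 2 & 3 & -1 & -3 & -2 \\ 2 & 3 & 2 & -3 & -1 & -3 \end{pmatrix} \begin{pmatrix} 4 & 1 & 2 \\ 3 & 2 & 3 \\ 1 & 3 & 2 \\ -2 & -1 & -3 \\ -2 & -3 & -1 \\ -4 & -2 & -3 \end{pmatrix} = \begin{pmatrix} 50 & 29 & 39 \\ 29 & 28 & 26 \\ 39 & 26 & 36 \end{pmatrix}$$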
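
The product $A A^{T}$ can be checked with a short pure-Python sketch. The helper name `gram` is an assumption for illustration; the last lines show the division by (number of points - 1) that yields the sample covariance matrix.

```python
# Centered data matrix A: 3 features (rows) x 6 algorithms (columns).
A = [
    [4, 3, 1, -2, -2, -4],   # time complexity
    [1, 2, 3, -1, -3, -2],   # space complexity
    [2, 3, 2, -3, -1, -3],   # lines of code
]

def gram(matrix):
    """Return matrix * matrix^T as a list of lists."""
    return [[sum(a * b for a, b in zip(row_i, row_j)) for row_j in matrix]
            for row_i in matrix]

C = gram(A)
for row in C:
    print(row)

# Dividing by (number of points - 1) gives the sample covariance matrix.
n_points = len(A[0])
sample_cov = [[c / (n_points - 1) for c in row] for row in C]
```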

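- 7. Finding the Eigenvalues

  To find the eigenvalues of an $n \times n$ matrix we need to solve the equation $\det(C - \lambda I) = 0$:

  $$C - \lambda I = \begin{pmatrix} 50 & 29 & 39 \\ 29 & 28 & 26 \\ 39 & 26 & 36 \end{pmatrix} - \begin{pmatrix} \lambda & 0 & 0 \\ 0 & \lambda & 0 \\ 0 & 0 & \lambda \end{pmatrix} = \begin{pmatrix} 50-\lambda & 29 & 39 \\ 29 & 28-\lambda & 26 \\ 39 & 26 & 36-\lambda \end{pmatrix}$$

  Expanding the determinant along the first row:

  $$(50-\lambda)\,[(28-\lambda)(36-\lambda) - 26 \cdot 26] + 29\,[26 \cdot 39 - 29\,(36-\lambda)] + 39\,[29 \cdot 26 - 39\,(28-\lambda)] = 0$$

  $$-\lambda^3 + 114\lambda^2 - 1170\lambda + 2548 = 0$$

  Solving this equation gives the roots

  $$\lambda_1 = 102.86, \qquad \lambda_2 = 8.06, \qquad \lambda_3 = 3.07$$

  Since the eigenvalue $\lambda_3$ is very small, it can be left out, and we carry on with $\lambda_1$ and $\lambda_2$.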
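
As a cross-check, the roots can be computed numerically. The sketch below does not solve the cubic by hand. It swaps in the closed-form trigonometric method for symmetric 3x3 matrices, using only the standard library. The function names are assumptions, and the method assumes the matrix is symmetric and not a multiple of the identity.

```python
import math

C = [[50, 29, 39],
     [29, 28, 26],
     [39, 26, 36]]

def det3(m):
    """Determinant of a 3x3 matrix by cofactor expansion along the first row."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def char_poly(m, lam):
    """Evaluate det(m - lam * I)."""
    shifted = [[m[i][j] - (lam if i == j else 0) for j in range(3)]
               for i in range(3)]
    return det3(shifted)

def symmetric_eigenvalues(m):
    """Eigenvalues of a symmetric 3x3 matrix, largest first."""
    q = (m[0][0] + m[1][1] + m[2][2]) / 3
    off = m[0][1] ** 2 + m[0][2] ** 2 + m[1][2] ** 2
    p = math.sqrt((sum((m[i][i] - q) ** 2 for i in range(3)) + 2 * off) / 6)
    b = [[(m[i][j] - (q if i == j else 0)) / p for j in range(3)]
         for i in range(3)]
    r = max(-1.0, min(1.0, det3(b) / 2))  # clamp against rounding error
    phi = math.acos(r) / 3
    largest = q + 2 * p * math.cos(phi)
    smallest = q + 2 * p * math.cos(phi + 2 * math.pi / 3)
    middle = 3 * q - largest - smallest  # the eigenvalues sum to the trace
    return [largest, middle, smallest]

eigenvalues = symmetric_eigenvalues(C)
print([round(v, 2) for v in eigenvalues])
print([char_poly(C, v) for v in eigenvalues])  # each should be close to 0
```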

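- 8. Finding the Eigenvectors

  Let's find the first eigenvector by solving the equation $C\mathbf{x} = \lambda_1 \mathbf{x}$:

  $$\begin{pmatrix} 50 & 29 & 39 \\ 29 & 28 & 26 \\ 39 & 26 & 36 \end{pmatrix} \begin{pmatrix} x \\ y \\ z \end{pmatrix} = 102.86 \begin{pmatrix} x \\ y \\ z \end{pmatrix}$$

  This gives us three equations:

  $$\begin{aligned} -52.86\,x + 29\,y + 39\,z &= 0 \quad \text{(eq1)} \\ 29\,x - 74.86\,y + 26\,z &= 0 \quad \text{(eq2)} \\ 39\,x + 26\,y - 66.86\,z &= 0 \quad \text{(eq3)} \end{aligned}$$

  Let $z = 1$. Then

  $$\begin{aligned} \text{eq1} &\Rightarrow -52.86\,x + 29\,y + 39 = 0 \quad \text{(eq4)} \\ \text{eq2} &\Rightarrow 29\,x - 74.86\,y + 26 = 0 \quad \text{(eq5)} \end{aligned}$$

  Eliminating the constant term between eq4 and eq5 gives $y = 0.682\,x$ (eq6). Substituting eq6 into eq4:

  $$-52.86\,x + 29 \cdot 0.682\,x + 39 = 0 \;\Rightarrow\; x = 1.178$$

  and hence $y \approx 0.803$.

  Therefore the eigenvector for the eigenvalue 102.86 is (1.178, 0.803, 1). This is also known as Principal Component 1 (PC1).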
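
The same hand calculation (fix z = 1, then solve the first two equations for x and y) can be sketched with Cramer's rule. The function name `eigenvector_with_z_one` is hypothetical, and the approach assumes the z component of the eigenvector is nonzero.

```python
C = [[50, 29, 39],
     [29, 28, 26],
     [39, 26, 36]]

def eigenvector_with_z_one(matrix, lam):
    """Solve the first two rows of (matrix - lam*I) v = 0 for x, y with z = 1."""
    a1, b1, c1 = matrix[0][0] - lam, matrix[0][1], matrix[0][2]
    a2, b2, c2 = matrix[1][0], matrix[1][1] - lam, matrix[1][2]
    det = a1 * b2 - a2 * b1
    # Cramer's rule for  a1*x + b1*y = -c1  and  a2*x + b2*y = -c2.
    x = (-c1 * b2 + c2 * b1) / det
    y = (-a1 * c2 + a2 * c1) / det
    return (x, y, 1.0)

lam1 = 102.86  # rounded eigenvalue from the worked example
pc1 = eigenvector_with_z_one(C, lam1)
print(pc1)

# The third equation was not used, so it serves as a consistency check.
# It is only approximately zero because lam1 is rounded.
eq3_residual = C[2][0] * pc1[0] + C[2][1] * pc1[1] + (C[2][2] - lam1) * pc1[2]
print(eq3_residual)
```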

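- 9. Finding the Eigenvectors

  Let's find the second eigenvector by solving the equation $C\mathbf{x} = \lambda_2 \mathbf{x}$:

  $$\begin{pmatrix} 50 & 29 & 39 \\ 29 & 28 & 26 \\ 39 & 26 & 36 \end{pmatrix} \begin{pmatrix} x \\ y \\ z \end{pmatrix} = 8.06 \begin{pmatrix} x \\ y \\ z \end{pmatrix}$$

  This gives us three equations:

  $$\begin{aligned} 41.94\,x + 29\,y + 39\,z &= 0 \quad \text{(eq1)} \\ 29\,x + 19.94\,y + 26\,z &= 0 \quad \text{(eq2)} \\ 39\,x + 26\,y + 27.94\,z &= 0 \quad \text{(eq3)} \end{aligned}$$

  Let $z = 1$. Then

  $$\begin{aligned} \text{eq1} &\Rightarrow 41.94\,x + 29\,y + 39 = 0 \quad \text{(eq4)} \\ \text{eq2} &\Rightarrow 29\,x + 19.94\,y + 26 = 0 \quad \text{(eq5)} \end{aligned}$$

  Eliminating the constant term between eq4 and eq5 gives $y = -1.714\,x$ (eq6). Substituting eq6 into eq4:

  $$41.94\,x + 29 \cdot (-1.714)\,x + 39 = 0 \;\Rightarrow\; x = 5.021$$

  and hence $y \approx -8.605$.

  Therefore the eigenvector for the eigenvalue 8.06 is (5.021, -8.605, 1). This is also known as Principal Component 2 (PC2).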
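
One way to sanity-check the two eigenvectors is to confirm that $C\mathbf{x} \approx \lambda\mathbf{x}$ holds for each, and that the two vectors are nearly orthogonal, as eigenvectors of a symmetric matrix should be. The sketch below uses the rounded values from the worked example, so the results are small but not exactly zero. The helper names are assumptions.

```python
C = [[50, 29, 39],
     [29, 28, 26],
     [39, 26, 36]]

# Rounded eigenpairs as reported in the worked example.
pc1 = (102.86, (1.178, 0.803, 1.0))
pc2 = (8.06, (5.021, -8.605, 1.0))

def residual(matrix, lam, vec):
    """Largest absolute entry of matrix*vec - lam*vec (0 for an exact eigenpair)."""
    product = [sum(m * v for m, v in zip(row, vec)) for row in matrix]
    return max(abs(p - lam * v) for p, v in zip(product, vec))

def dot(u, v):
    """Dot product of two vectors of equal length."""
    return sum(a * b for a, b in zip(u, v))

res1 = residual(C, *pc1)
res2 = residual(C, *pc2)
overlap = dot(pc1[1], pc2[1])  # close to 0 when the vectors are orthogonal
print(res1, res2, overlap)
```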

- 10. Getting the new axes

  To get the axes of the new 2D plot we need the unit vectors along the principal components:

  $$\text{Unit vector along PC1} = \frac{(1.178,\ 0.803,\ 1)}{\sqrt{(1.178)^2 + (0.803)^2 + (1)^2}} = (0.676,\ 0.461,\ 0.574)$$

  $$\text{Unit vector along PC2} = \frac{(5.021,\ -8.605,\ 1)}{\sqrt{(5.021)^2 + (-8.605)^2 + (1)^2}} = (0.501,\ -0.859,\ 0.099)$$
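
The normalization can be sketched as follows, starting from the two eigenvectors found in the worked example. The helper name `normalize` is an assumption.

```python
import math

def normalize(vec):
    """Scale a vector to unit length."""
    length = math.sqrt(sum(x * x for x in vec))
    return tuple(x / length for x in vec)

unit_pc1 = normalize((1.178, 0.803, 1.0))
unit_pc2 = normalize((5.021, -8.605, 1.0))

print([round(x, 3) for x in unit_pc1])
print([round(x, 3) for x in unit_pc2])
```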

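- 11. Plotting the Data on the new graph

  Each new coordinate is the dot product of a data point (TC, SC, LOC) with the unit vector along the corresponding principal component.

  | | TC | SC | LOC | PC1 (0.676, 0.461, 0.574) | PC2 (0.501, -0.859, 0.099) |
  |---|---|---|---|---|---|
  | Algo 1 | 9 | 7 | 7 | 13.329 | -0.811 |
  | Algo 2 | 8 | 8 | 8 | 13.688 | -2.072 |
  | Algo 3 | 6 | 9 | 7 | 12.223 | -4.032 |
  | Algo 4 | 3 | 5 | 2 | 5.481 | -2.594 |
  | Algo 5 | 3 | 3 | 4 | 5.707 | -0.678 |
  | Algo 6 | 1 | 4 | 2 | 3.668 | -2.737 |

  [Figure: the six algorithms plotted against the PC1 and PC2 axes, points labelled 1 to 6]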
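
The projection onto the new axes is a dot product per point and per axis. The sketch below assumes the unit vectors (0.676, 0.461, 0.574) and (0.501, -0.859, 0.099) obtained when normalizing the two eigenvectors, and projects the raw data points as in the worked example. The helper name `project` is an assumption.

```python
# Raw data points (time complexity, space complexity, lines of code).
points = {
    "Algo 1": (9, 7, 7), "Algo 2": (8, 8, 8), "Algo 3": (6, 9, 7),
    "Algo 4": (3, 5, 2), "Algo 5": (3, 3, 4), "Algo 6": (1, 4, 2),
}

# Unit vectors along PC1 and PC2, rounded to three decimals.
unit_pc1 = (0.676, 0.461, 0.574)
unit_pc2 = (0.501, -0.859, 0.099)

def project(point, axis):
    """Coordinate of a point along a unit axis (dot product)."""
    return sum(p * a for p, a in zip(point, axis))

coords = {name: (project(p, unit_pc1), project(p, unit_pc2))
          for name, p in points.items()}

for name, (c1, c2) in coords.items():
    print(f"{name}: PC1 = {c1:.3f}, PC2 = {c2:.3f}")
```

Projecting the centered points instead would shift every coordinate by the same constant along each axis and leave the relative positions unchanged.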

- 12. Variation in PCA

  The variation for each PC is determined by dividing its eigenvalue by (sample size - 1):

  - Variation for PC1 = 102.86 / 5 = 20.572
  - Variation for PC2 = 8.06 / 5 = 1.612
  - Variation for PC3 = 3.07 / 5 = 0.614

  Total variation = 22.798

  Percentage variation:

  - PC1 = 20.572 / 22.798 × 100 % = 90.24 %
  - PC2 = 1.612 / 22.798 × 100 % = 7.07 %
  - PC3 = 0.614 / 22.798 × 100 % = 2.69 %

  [Figure: scree plot of the percentage variation (0 to 100) for PC 1, PC 2 and PC 3]
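
The share of variation per component follows directly from the eigenvalues. This minimal sketch uses the rounded eigenvalues and the sample size of 6 from the worked example; the variable names are assumptions.

```python
eigenvalues = [102.86, 8.06, 3.07]  # rounded values from the worked example
n_points = 6                        # sample size

# Variation of each component: eigenvalue / (sample size - 1).
variances = [lam / (n_points - 1) for lam in eigenvalues]
total = sum(variances)
percentages = [100 * v / total for v in variances]

for i, (v, pct) in enumerate(zip(variances, percentages), start=1):
    print(f"PC{i}: variation = {v:.3f}, share = {pct:.2f} %")
```

The factor 1 / (sample size - 1) cancels in the percentages, so the shares can also be computed from the eigenvalues alone.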

- 13. Application

  Principal component analysis is a widely used unsupervised learning method for dimensionality reduction. It is used for:

  1. Finding hidden patterns in high-dimensional data, as in finance, data mining and psychology
  2. Image compression
  3. Noise cancellation

- 14. Advantages and Disadvantages

  Advantages:

  1. Less misleading data means model accuracy improves.
  2. Fewer dimensions mean less computation, and less data means that algorithms train faster.
  3. Less data means less storage space is required.
  4. It removes redundant features and noise.
  5. Dimensionality reduction helps us visualize higher-dimensional data in 2D or 3D.

  Disadvantages:

  1. Dimensionality reduction loses some information, which can affect the performance of subsequent training algorithms.
  2. It can be computationally intensive.
  3. The transformed features are often hard to interpret, so the independent variables become less interpretable.
