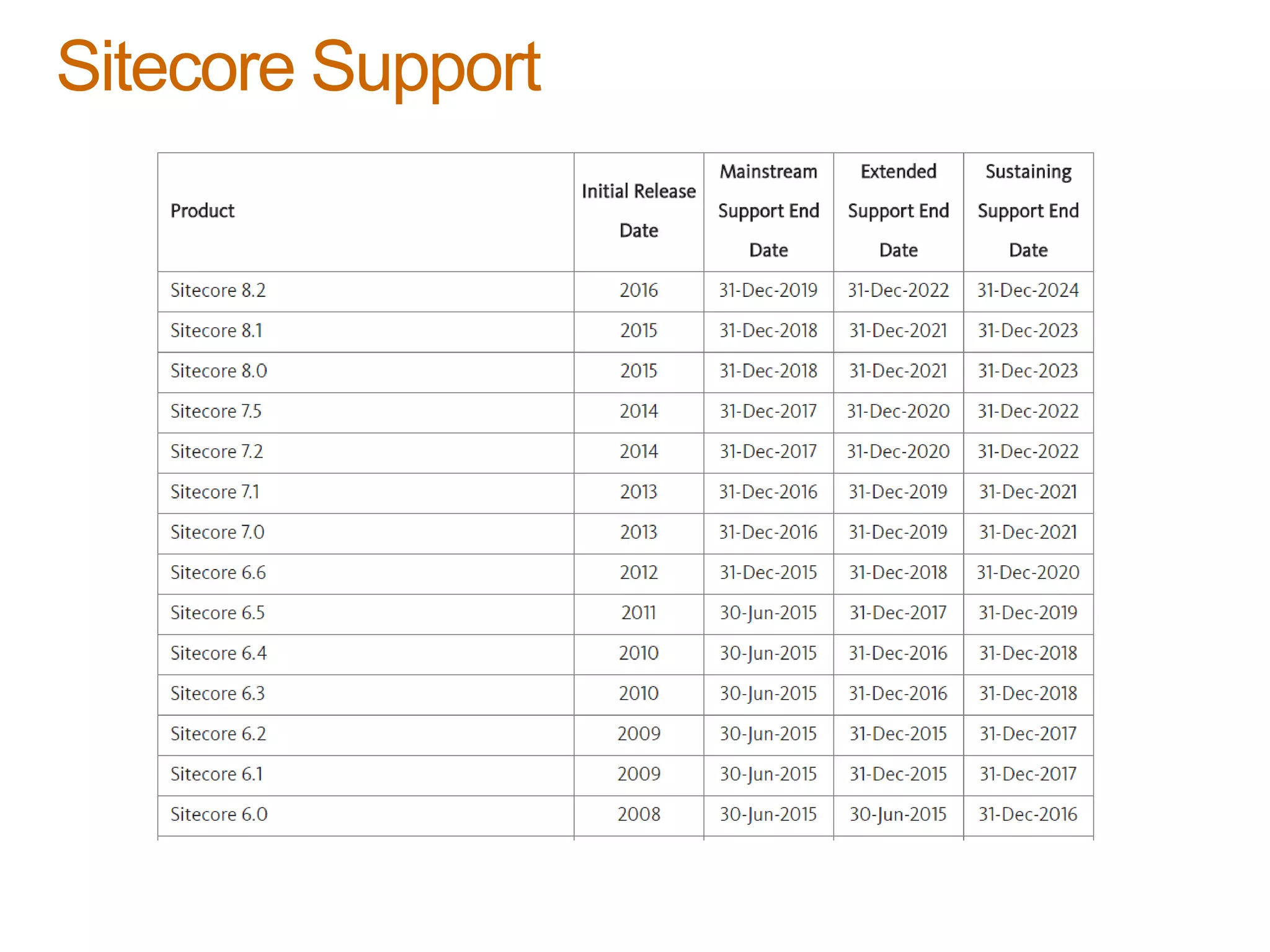

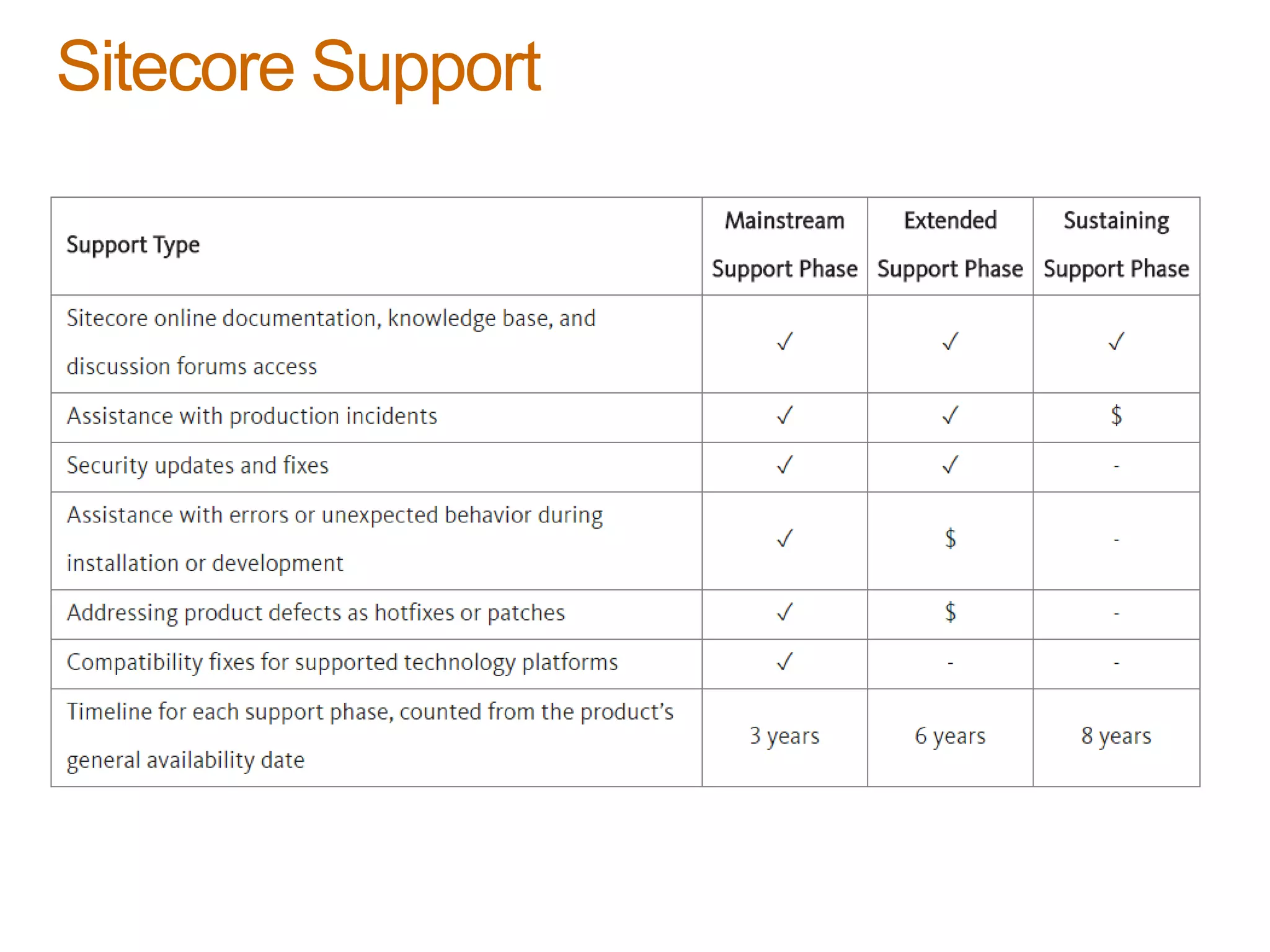

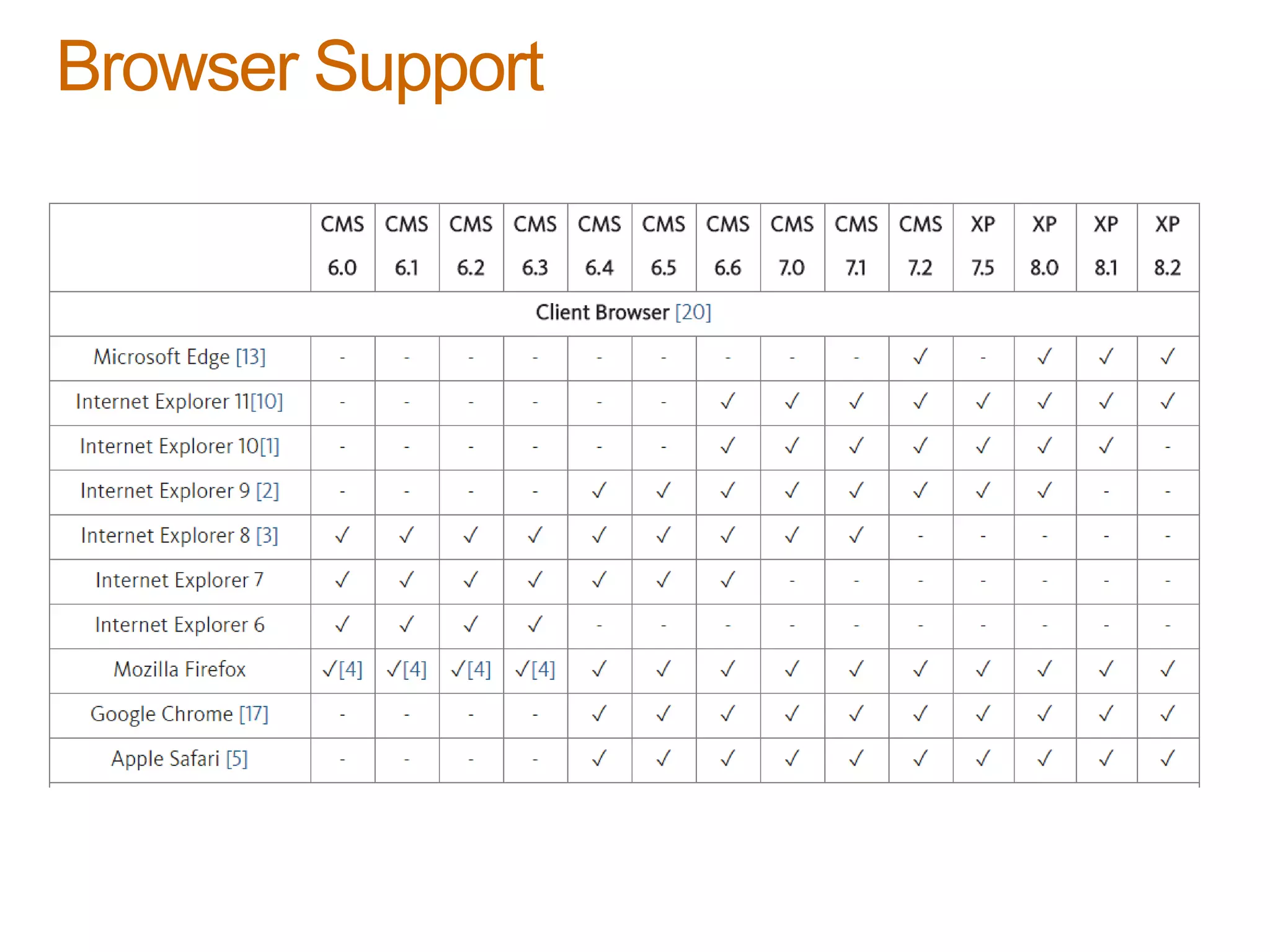

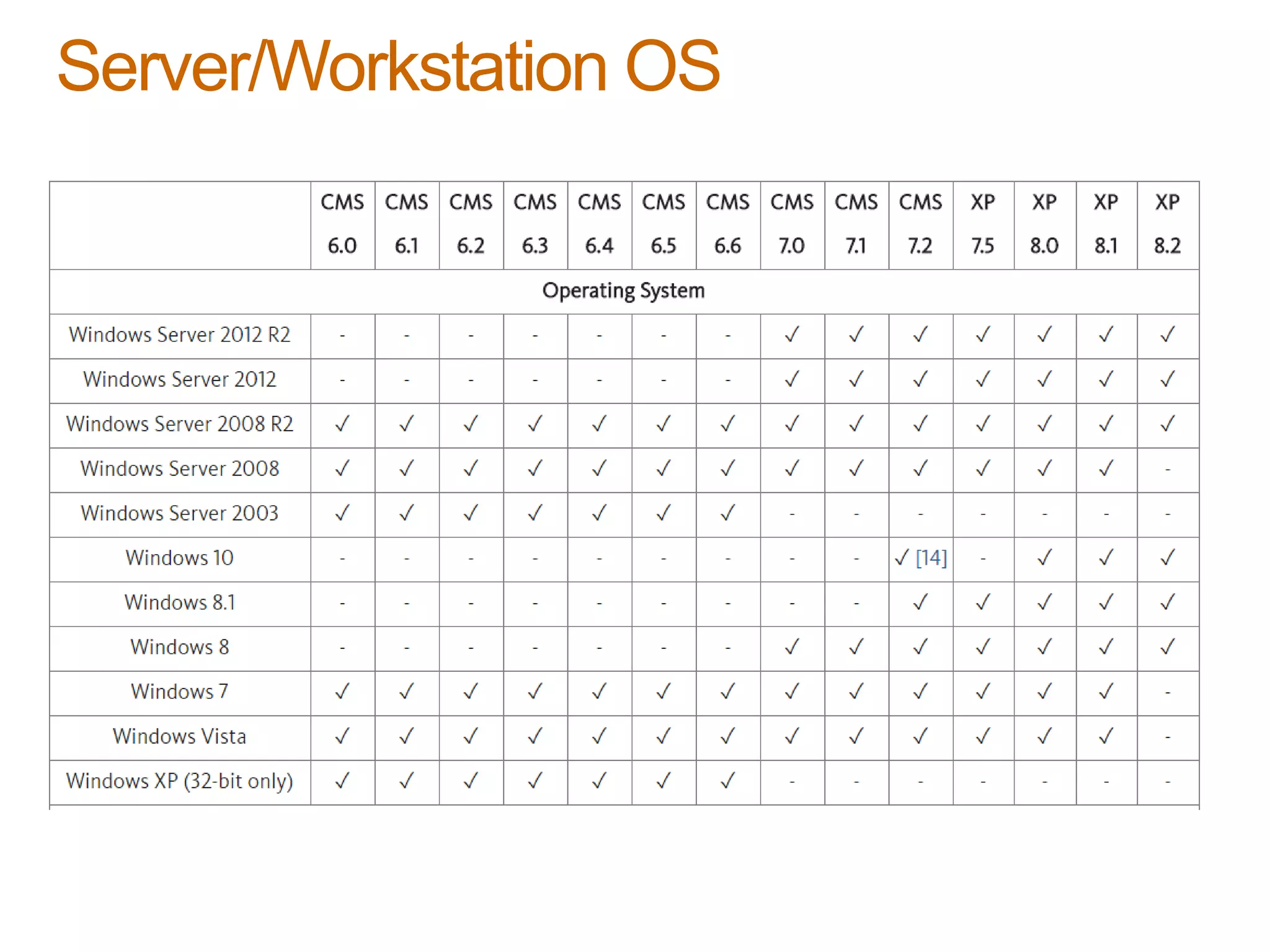

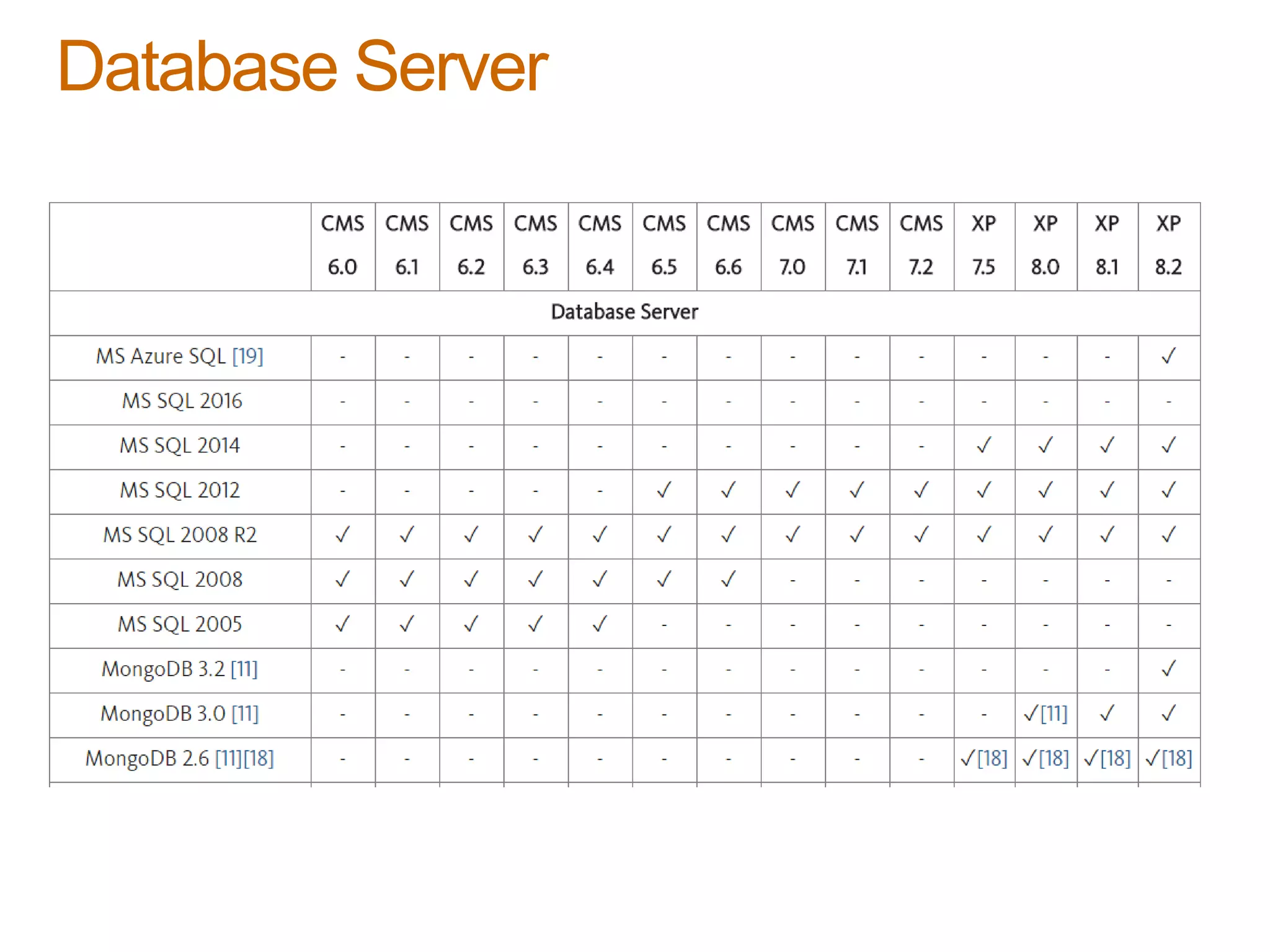

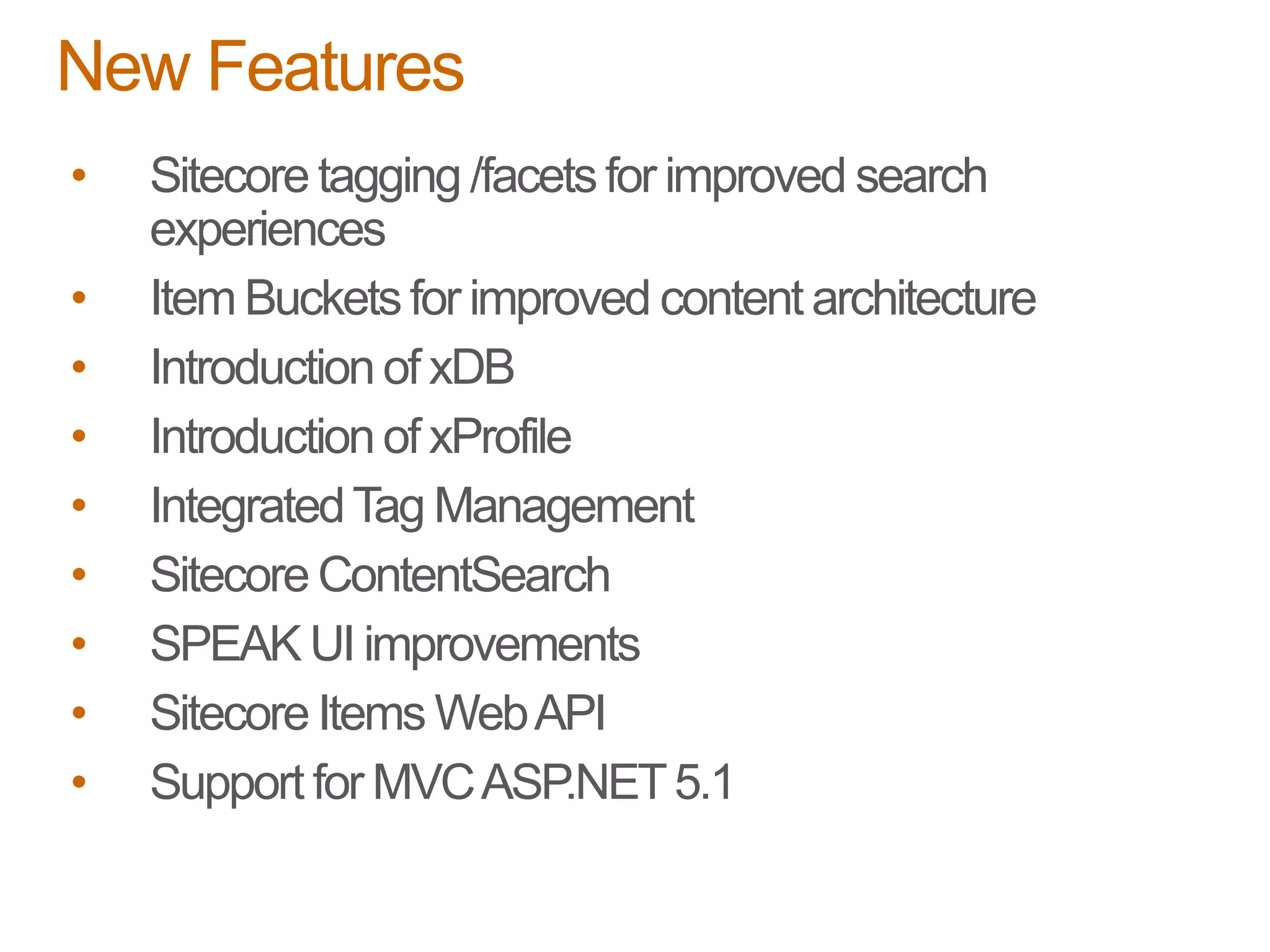

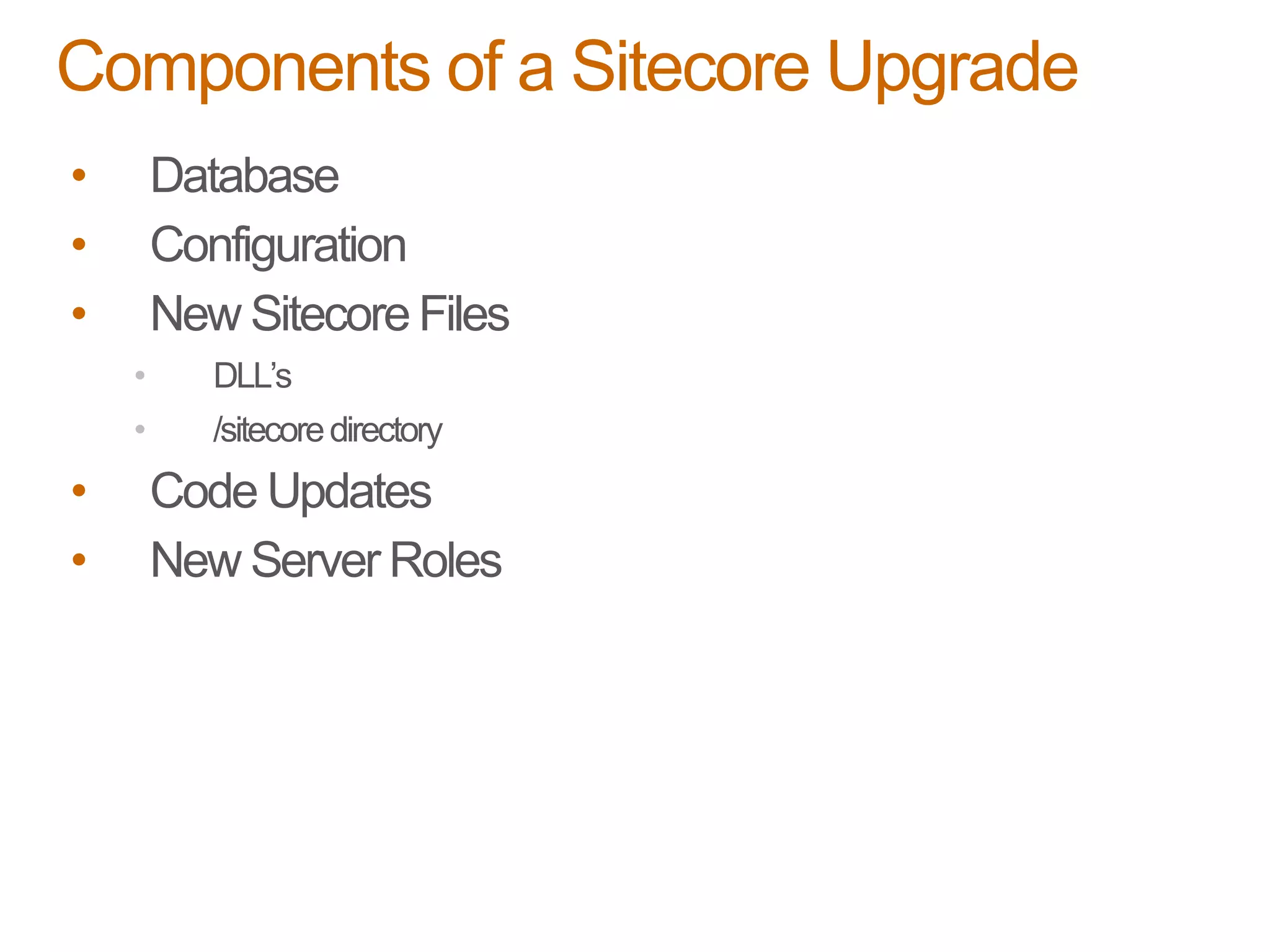

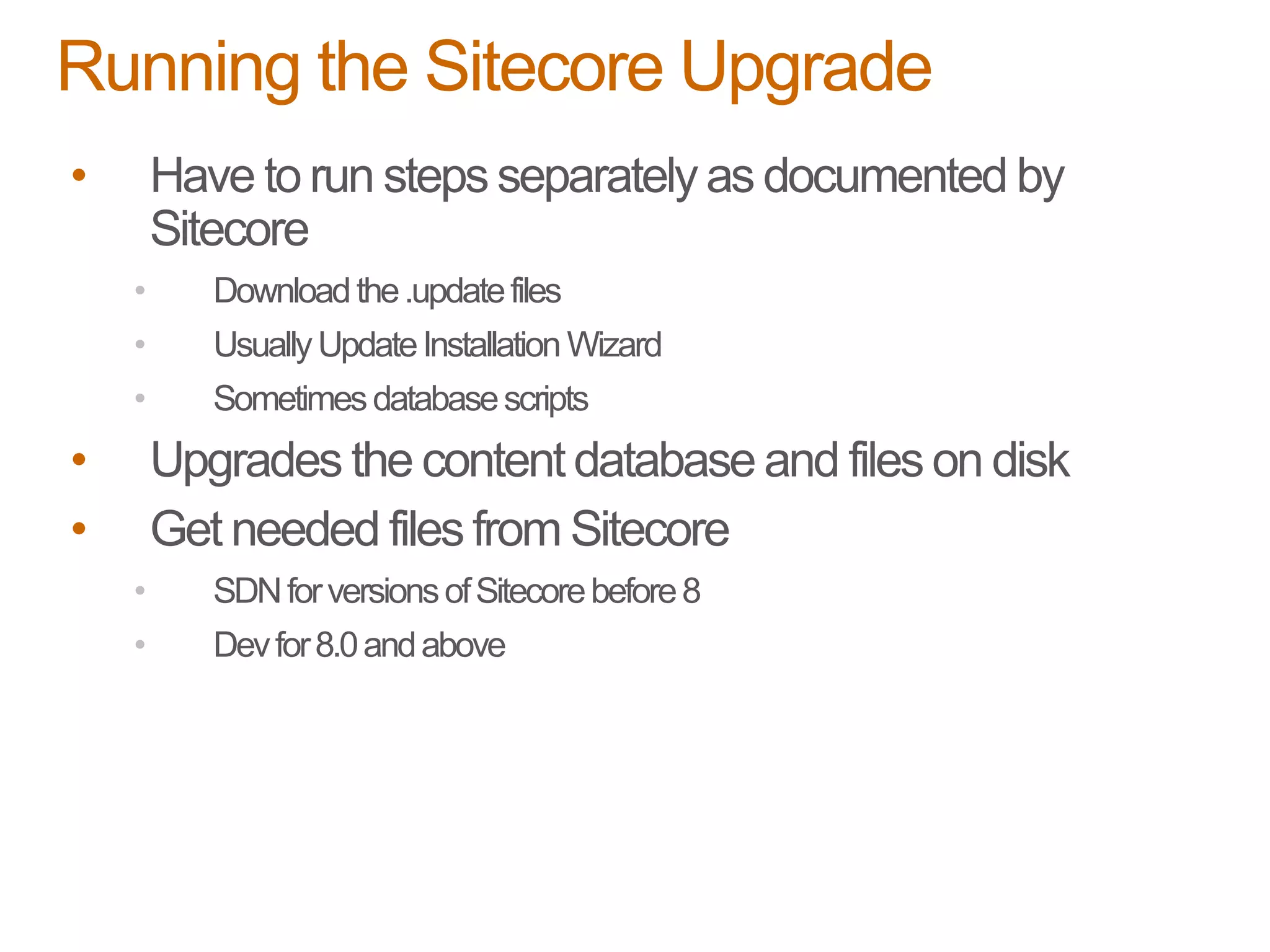

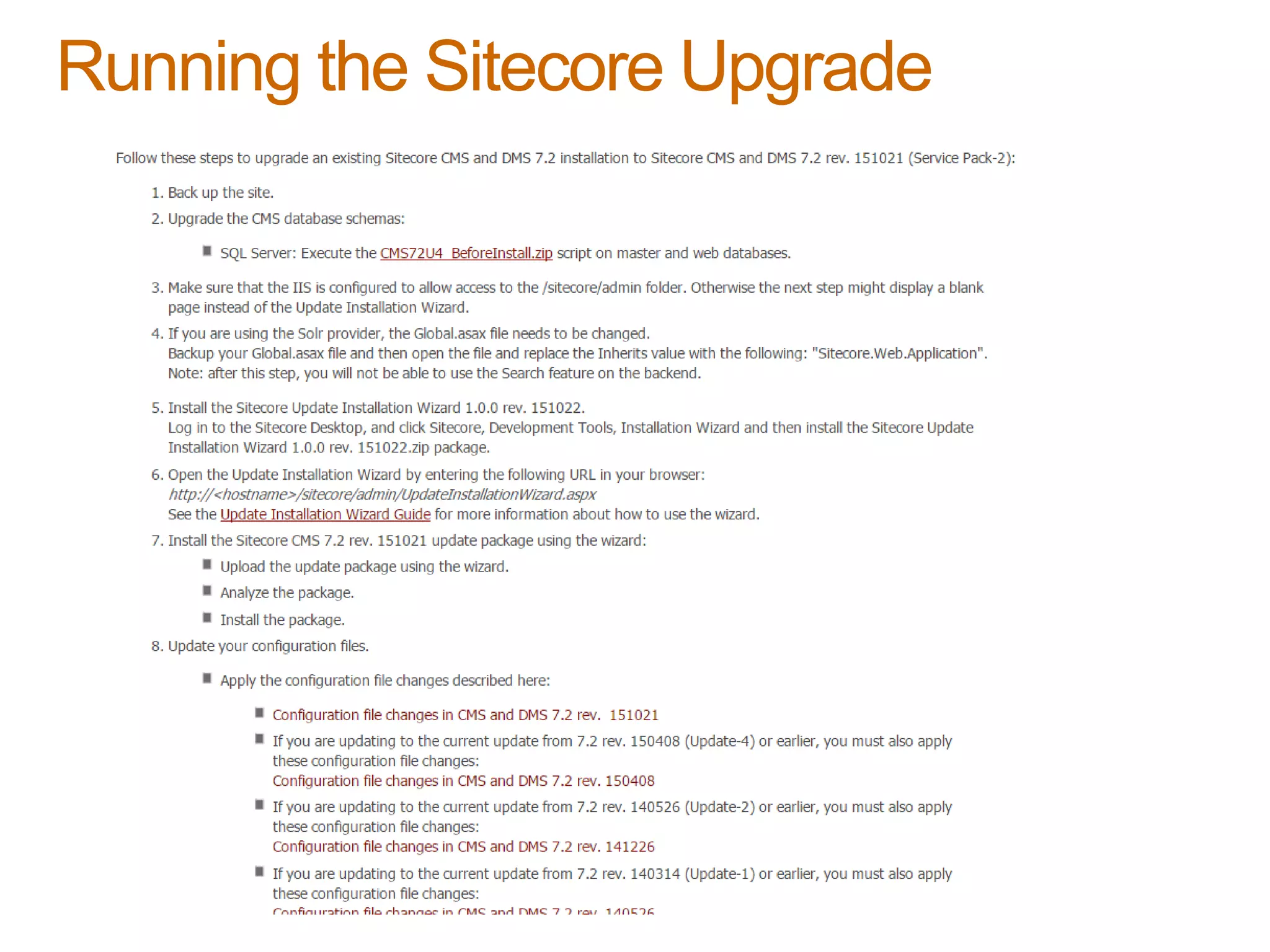

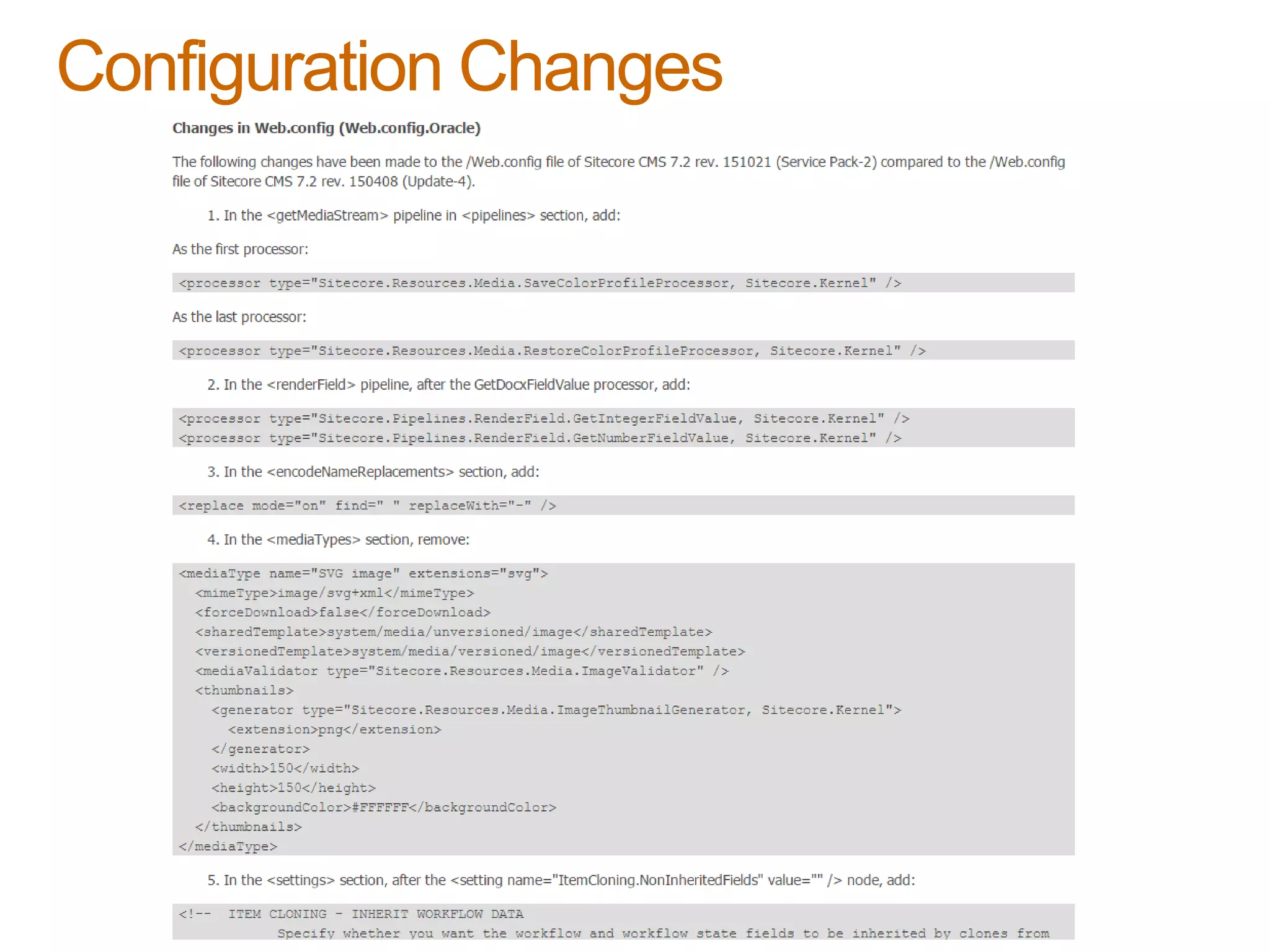

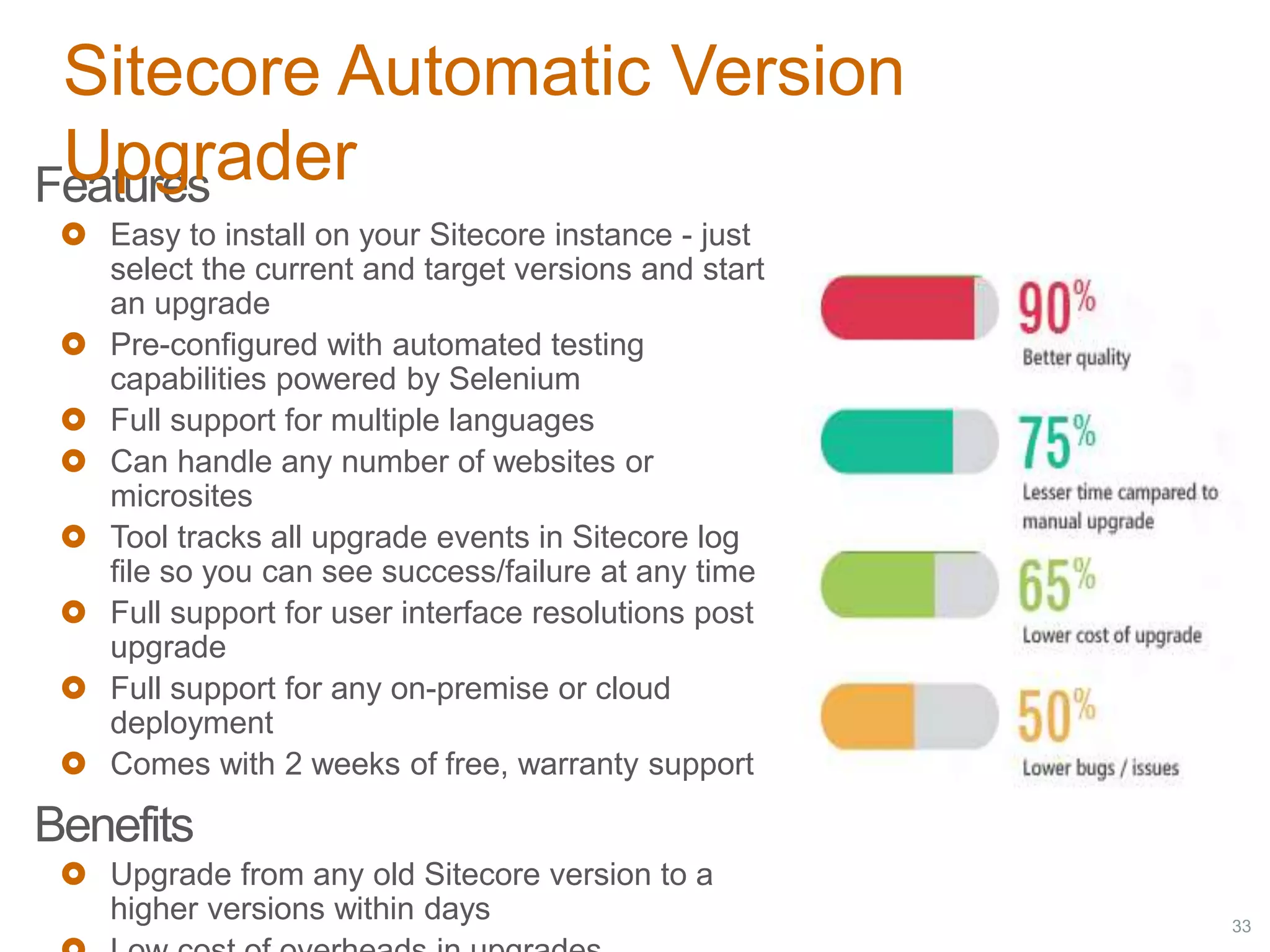

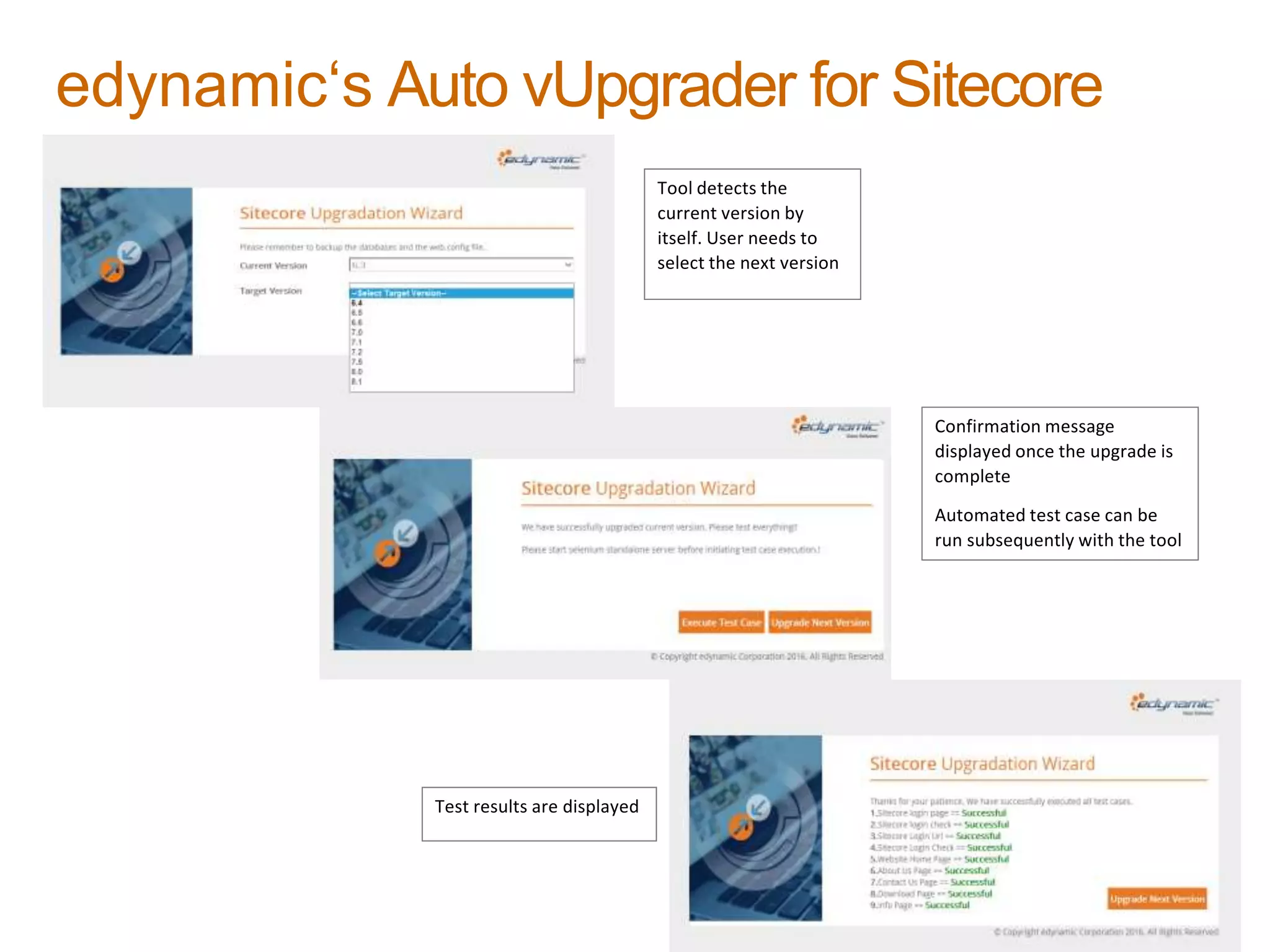

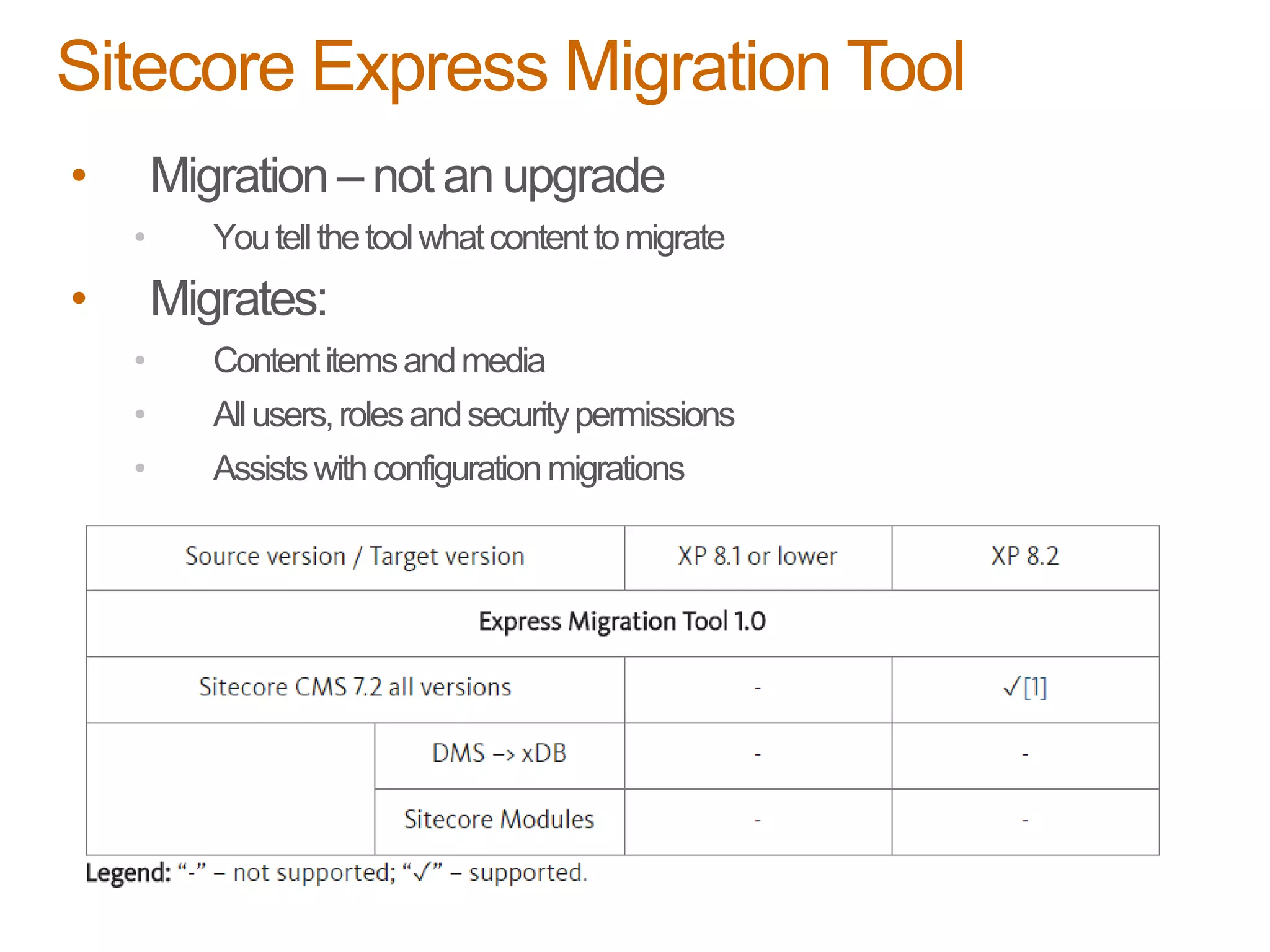

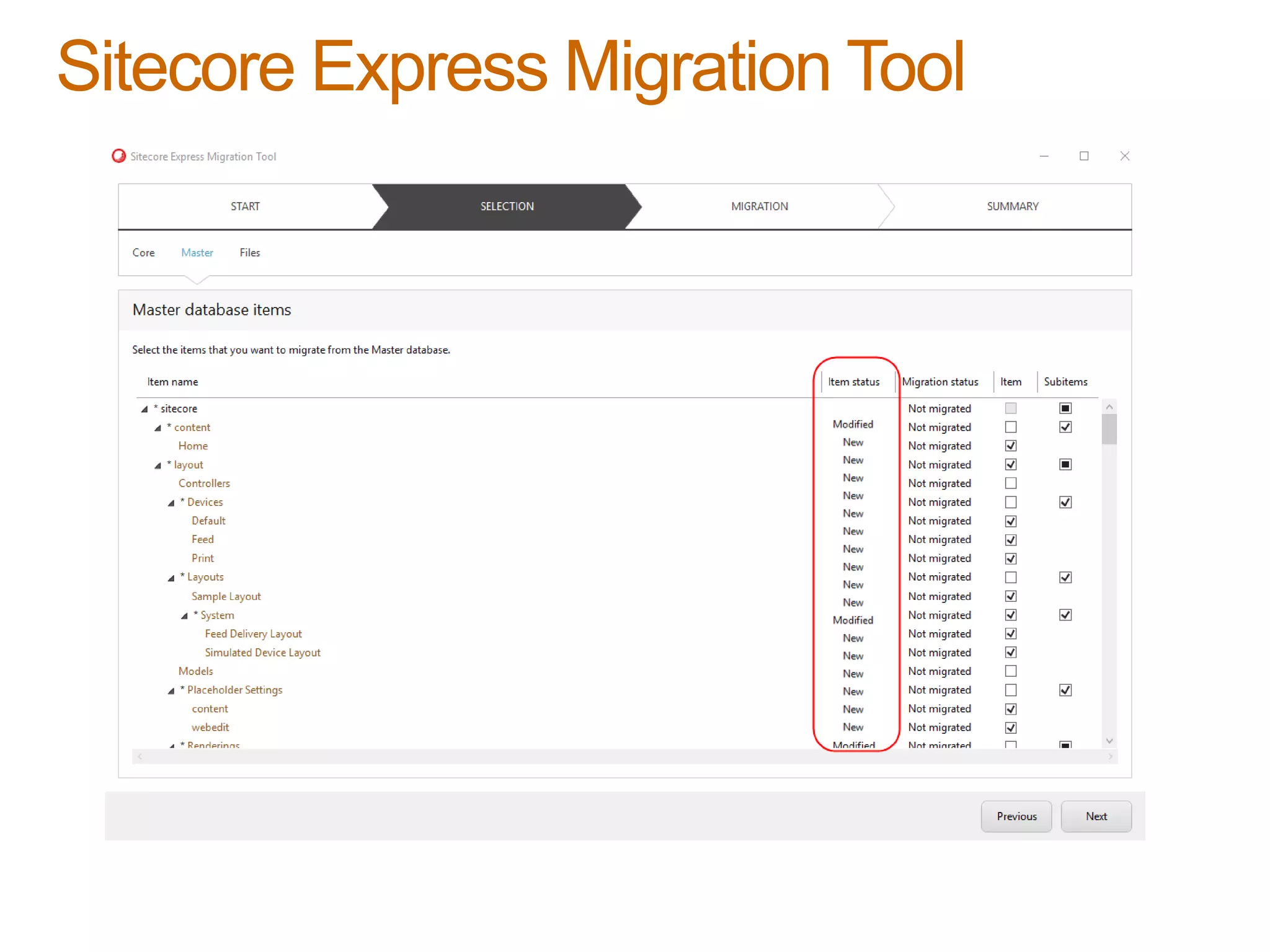

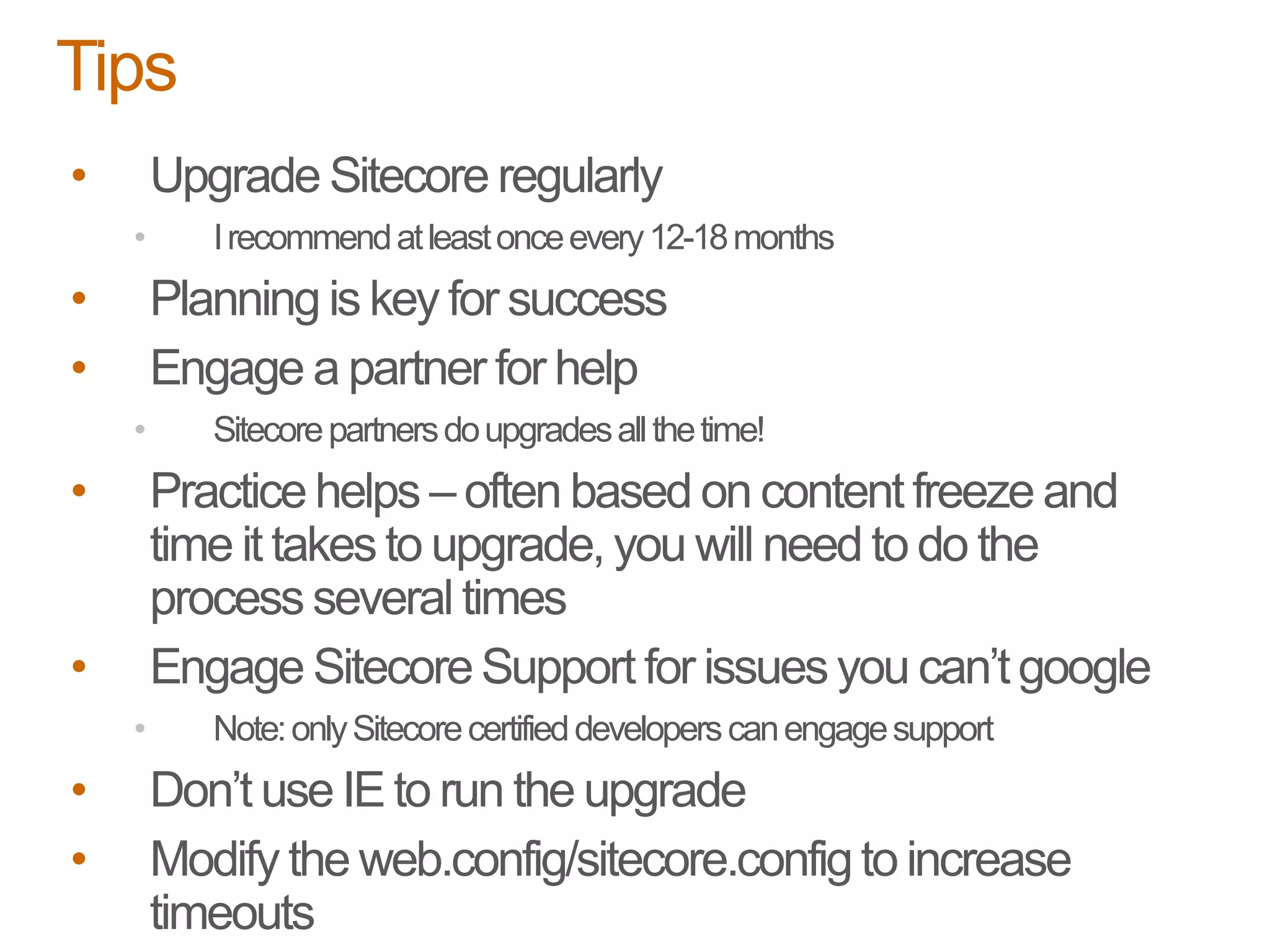

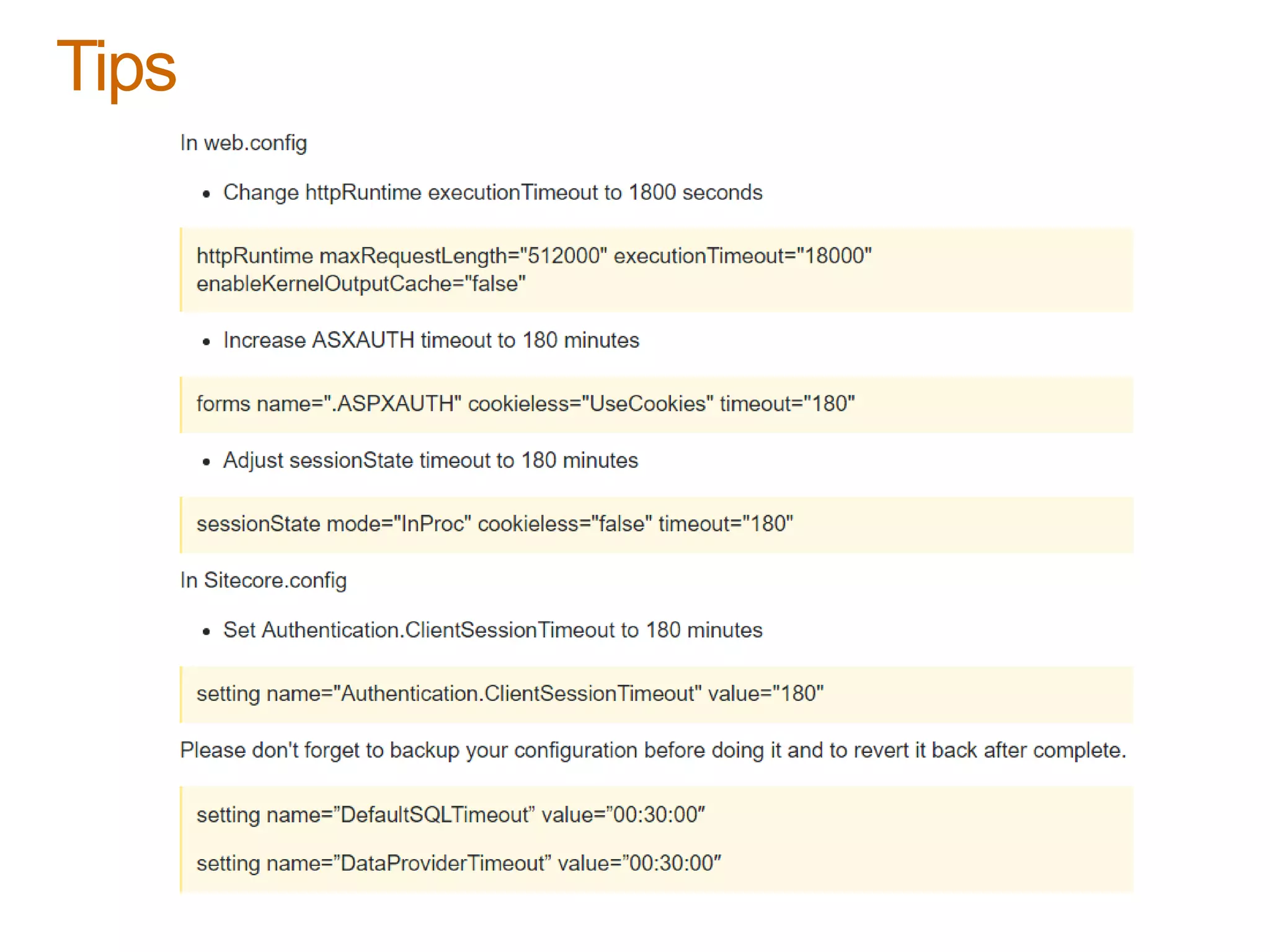

The document presents best practices for upgrading Sitecore and explains why staying on a current version matters: continued support, compatibility with current browsers, and access to new features. It outlines the components and steps of an upgrade, including planning, testing, and configuration changes, and covers tools that automate parts of the process. It also presents a case study of a successful rapid upgrade of multiple sites from Sitecore 6.6 to 8.1 using eDynamic's upgrade utility.