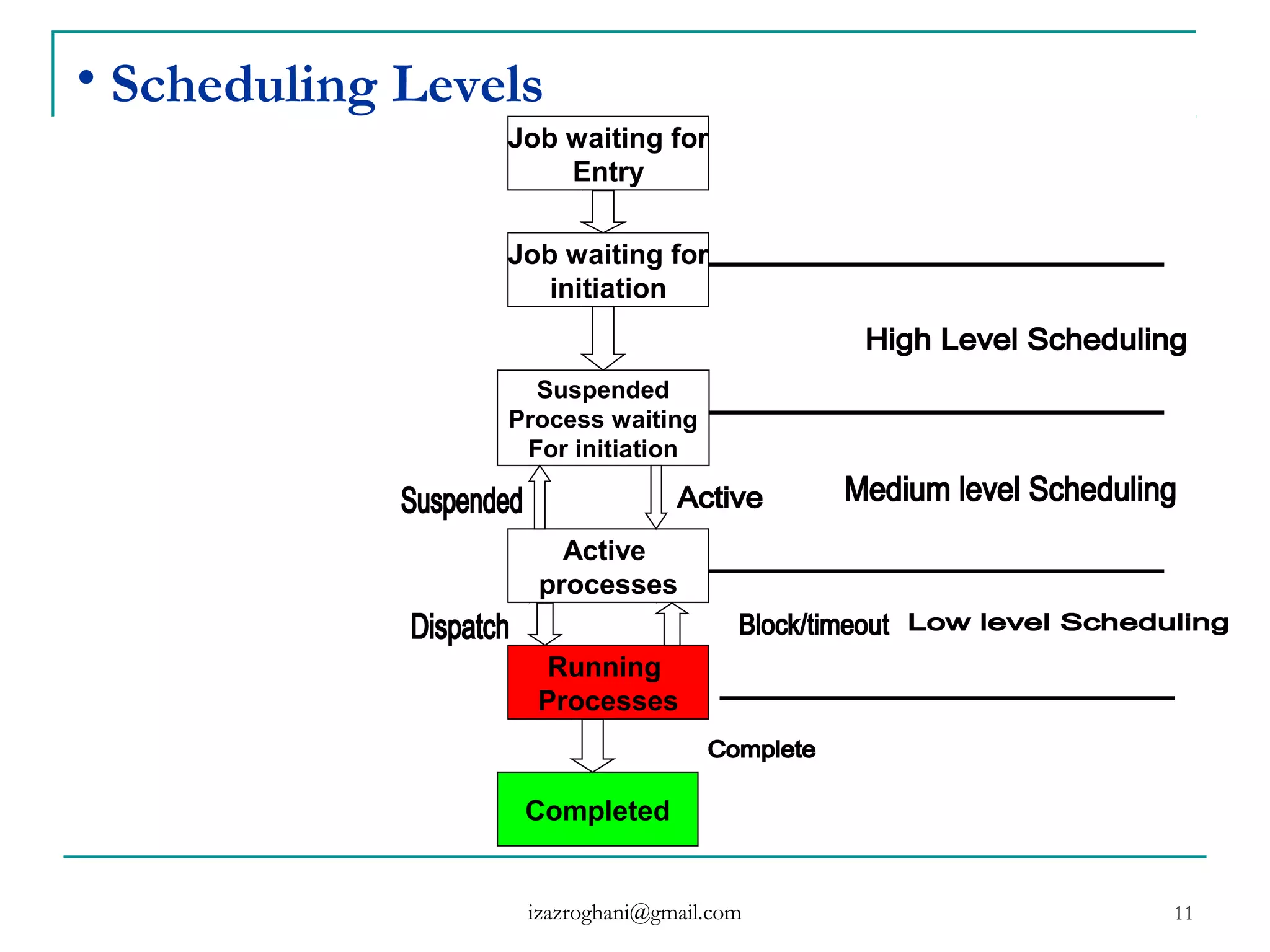

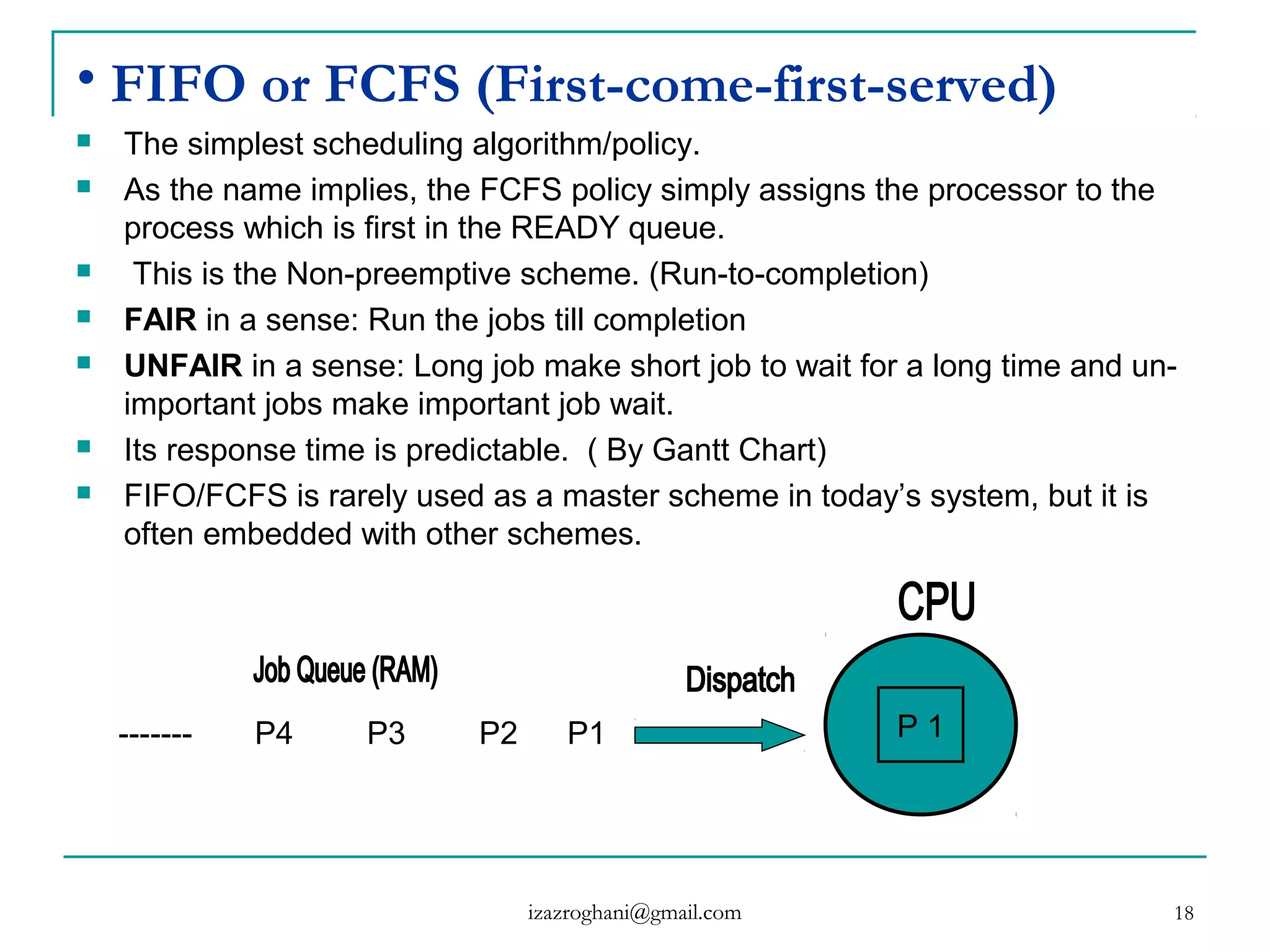

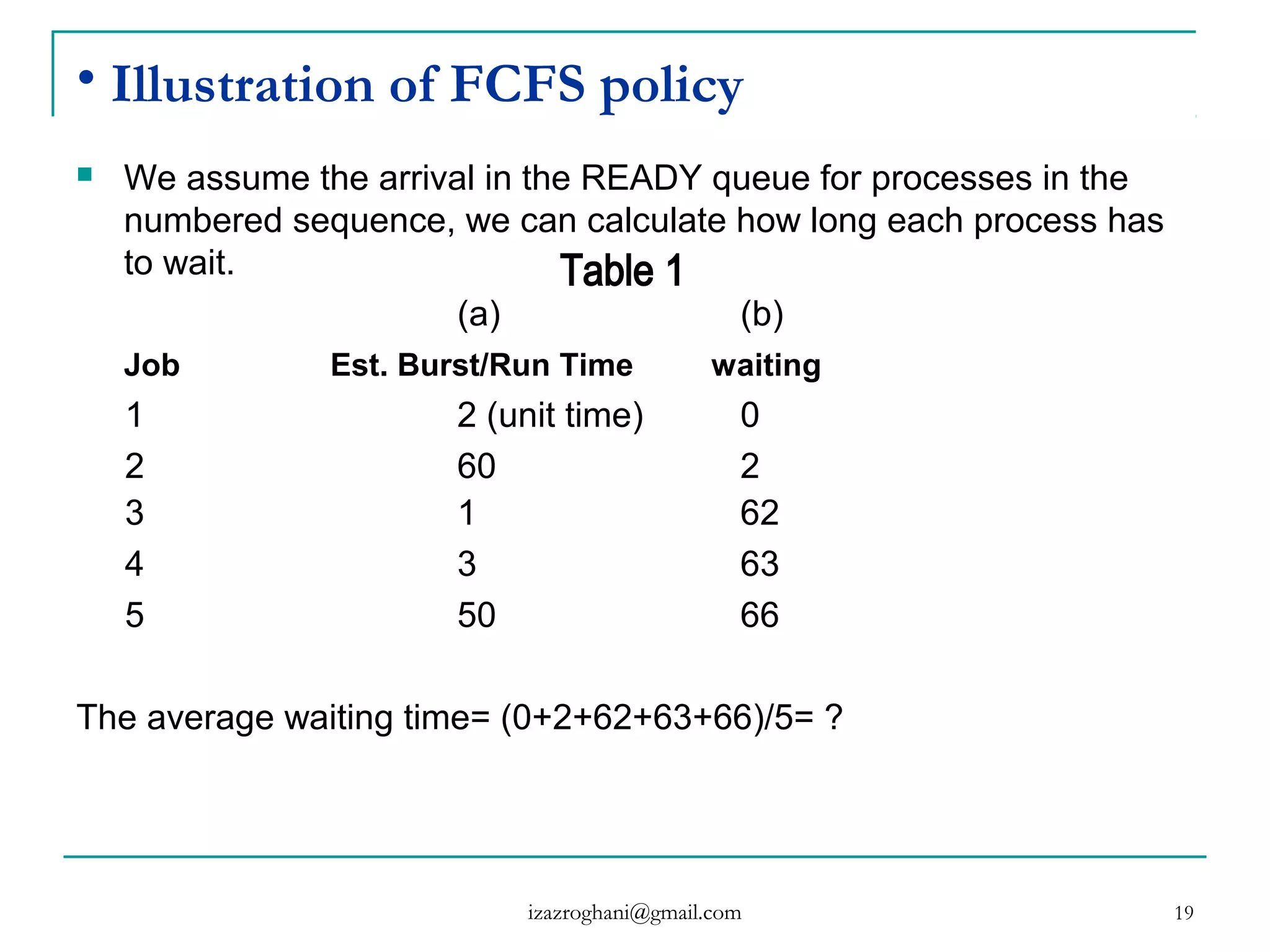

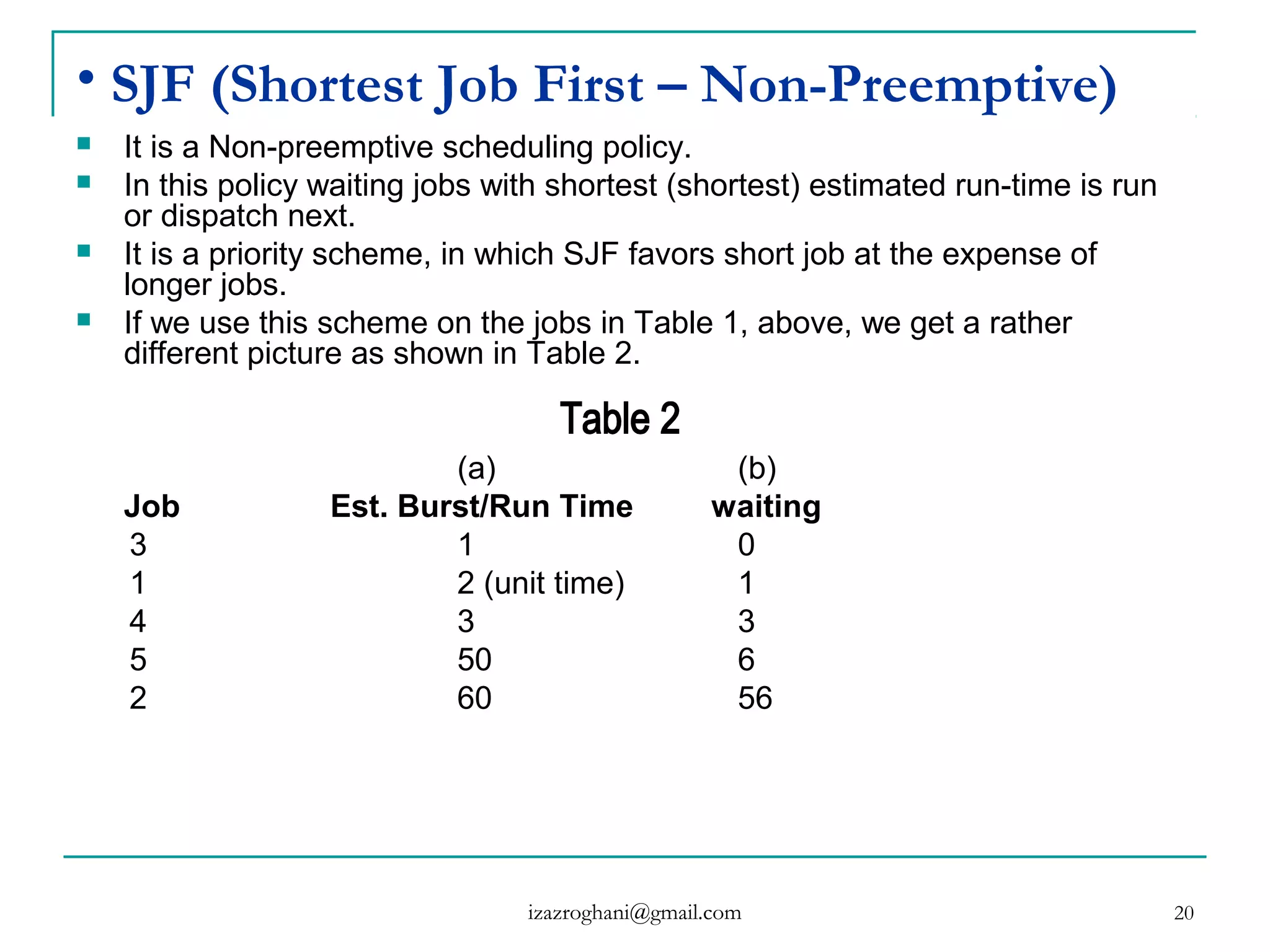

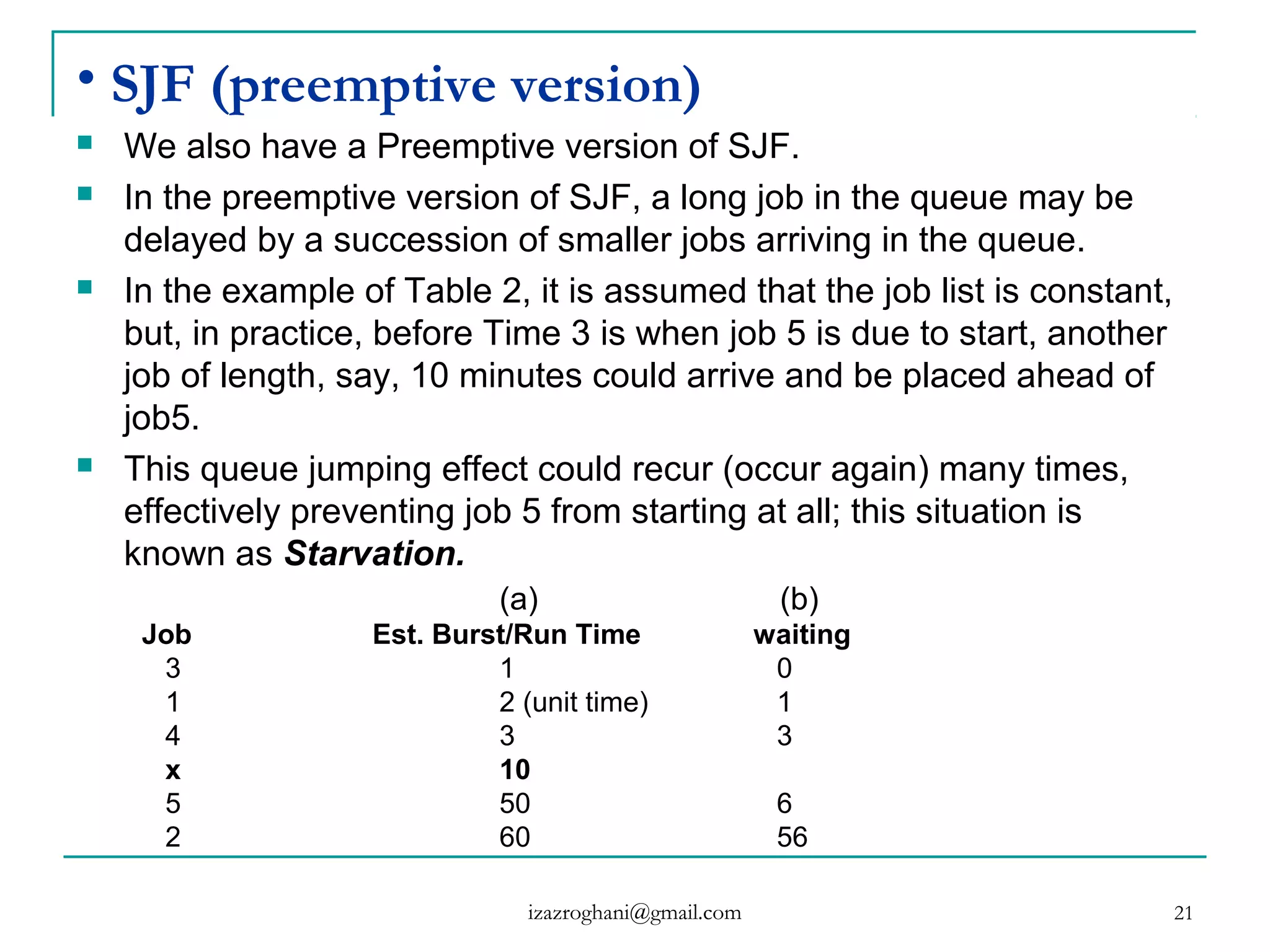

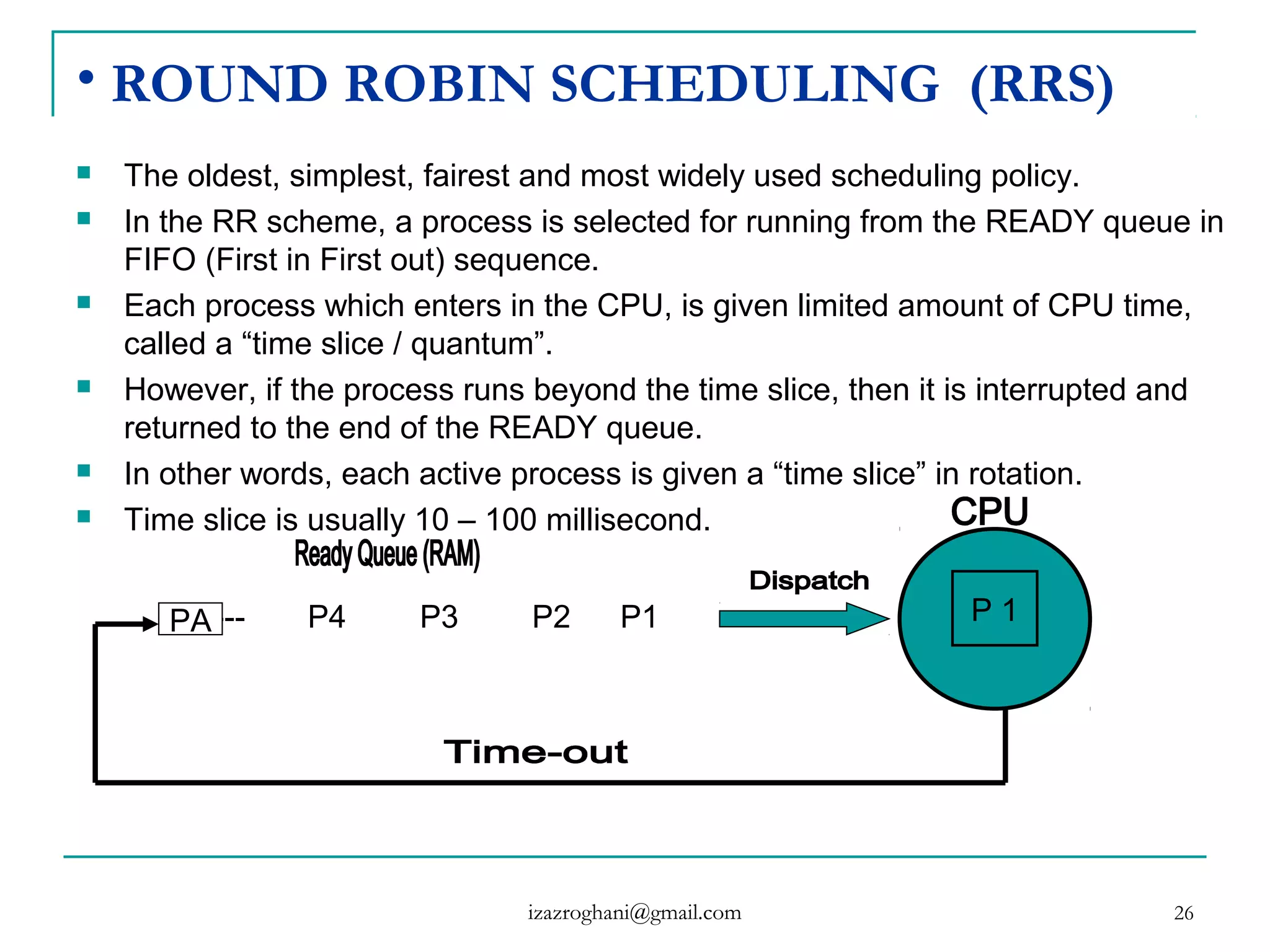

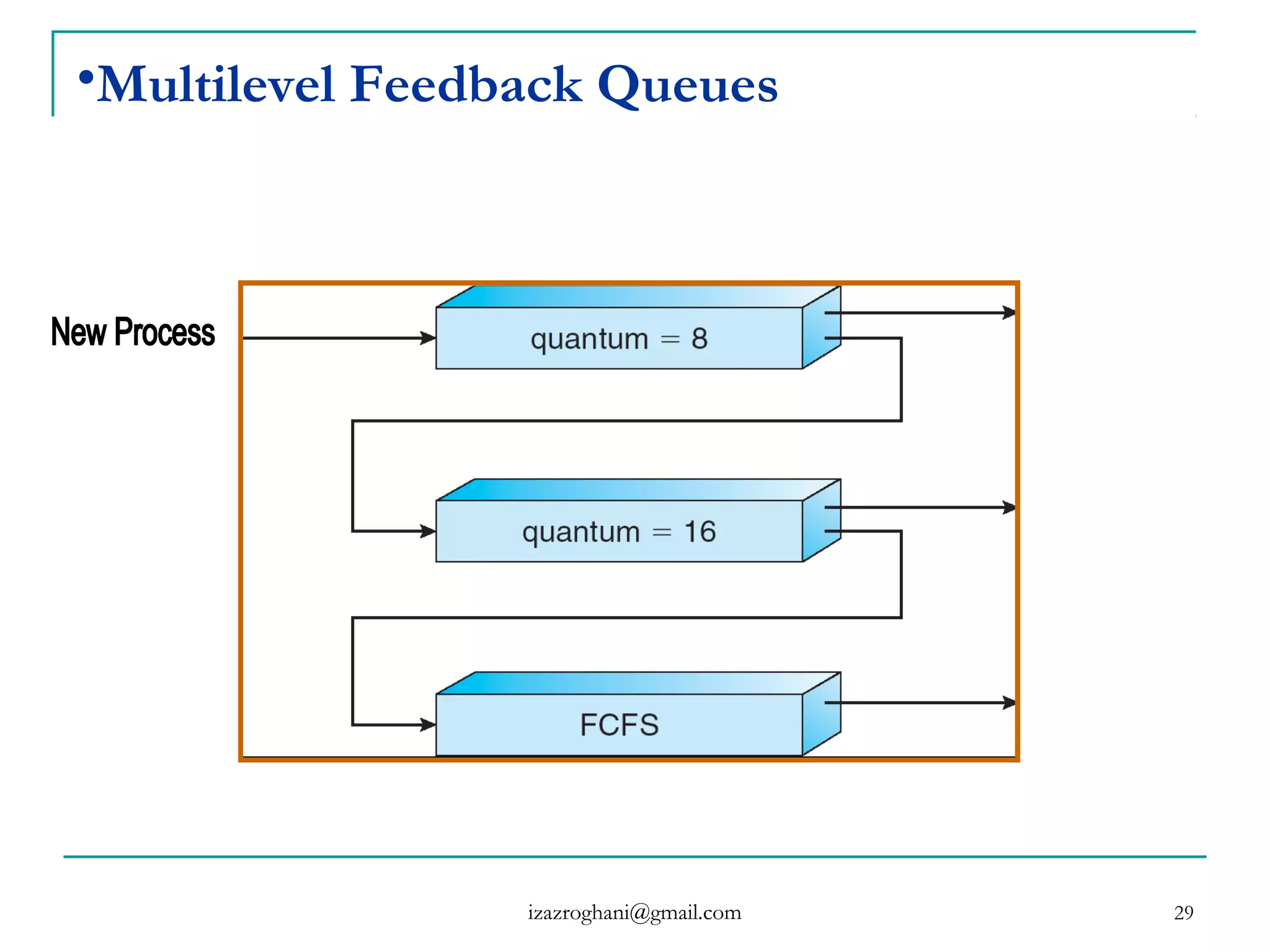

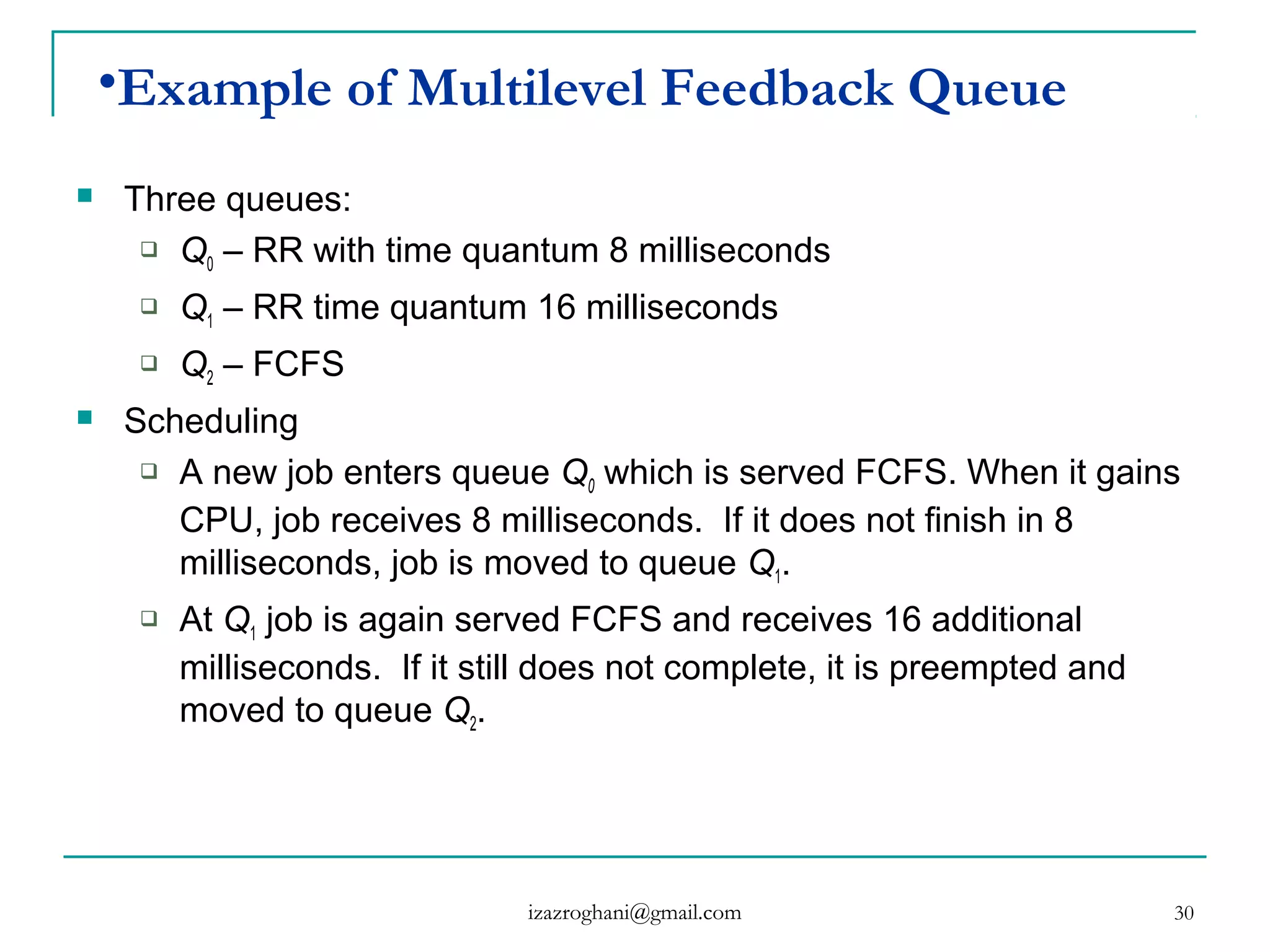

This document covers core concepts in CPU scheduling. It begins by defining scheduling and explaining that the CPU needs a mechanism for allocating its time fairly among competing processes. It then covers scheduling levels (high, intermediate, low), scheduling types (preemptive vs. non-preemptive), scheduling objectives, and algorithms including first-come, first-served (FCFS), shortest job first (SJF), priority scheduling, and round robin. It also provides examples and comparisons of these scheduling techniques.
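
To make the comparison between the algorithms named above concrete, here is a minimal Python sketch that computes per-process waiting times under FCFS, non-preemptive SJF, and round robin. It is an illustration under simplifying assumptions, not the document's own example: all processes are assumed to arrive at time 0, context-switch cost is ignored, and the function names, the burst times `[24, 3, 3]`, and the quantum of 4 are hypothetical choices made for this sketch.

```python
from collections import deque


def fcfs_waiting_times(bursts):
    """FCFS: run processes in list order; each waits for all earlier ones."""
    waits, clock = [], 0
    for burst in bursts:
        waits.append(clock)
        clock += burst
    return waits


def sjf_waiting_times(bursts):
    """Non-preemptive SJF: shortest burst first, ties kept in list order."""
    order = sorted(range(len(bursts)), key=lambda i: bursts[i])
    waits, clock = [0] * len(bursts), 0
    for i in order:
        waits[i] = clock
        clock += bursts[i]
    return waits


def round_robin_waiting_times(bursts, quantum):
    """Round robin: each process runs for at most `quantum` units per turn."""
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    remaining = list(bursts)
    finish = [0] * len(bursts)
    queue = deque(range(len(bursts)))
    clock = 0
    while queue:
        i = queue.popleft()
        run = min(quantum, remaining[i])
        clock += run
        remaining[i] -= run
        if remaining[i] > 0:
            queue.append(i)      # not finished: go to the back of the queue
        else:
            finish[i] = clock    # record completion time
    # With arrival at time 0, waiting time = completion time - burst time.
    return [finish[i] - bursts[i] for i in range(len(bursts))]


def average(values):
    return sum(values) / len(values)


if __name__ == "__main__":
    bursts = [24, 3, 3]  # hypothetical CPU burst times
    for name, waits in [
        ("FCFS", fcfs_waiting_times(bursts)),
        ("SJF", sjf_waiting_times(bursts)),
        ("Round robin (q=4)", round_robin_waiting_times(bursts, 4)),
    ]:
        print(f"{name}: waits={waits}, average={average(waits):.2f}")
```

On this workload, FCFS makes the two short processes wait behind the long one, SJF minimizes the average wait by running the short processes first, and round robin falls in between while giving every process a turn within a bounded time.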