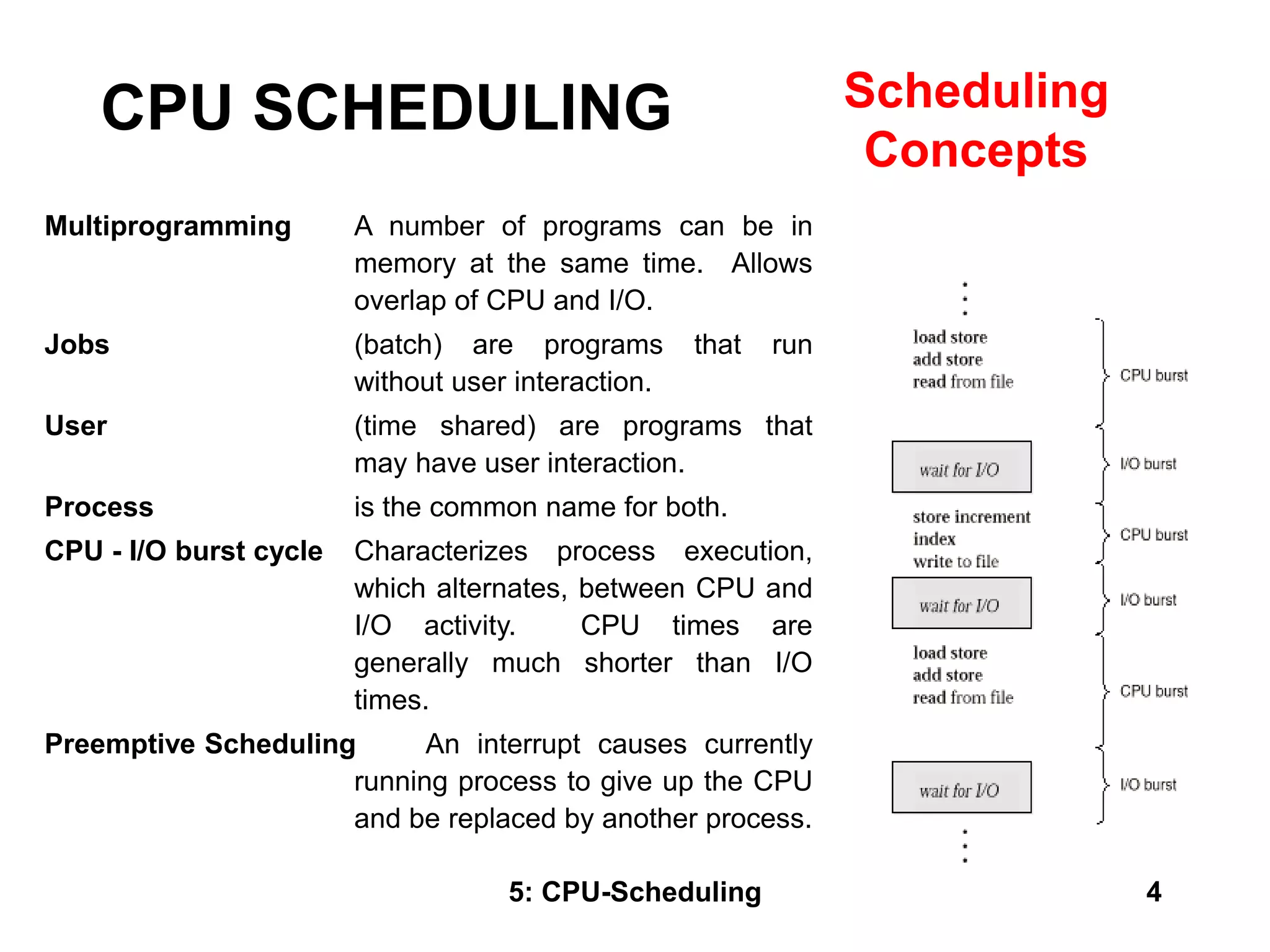

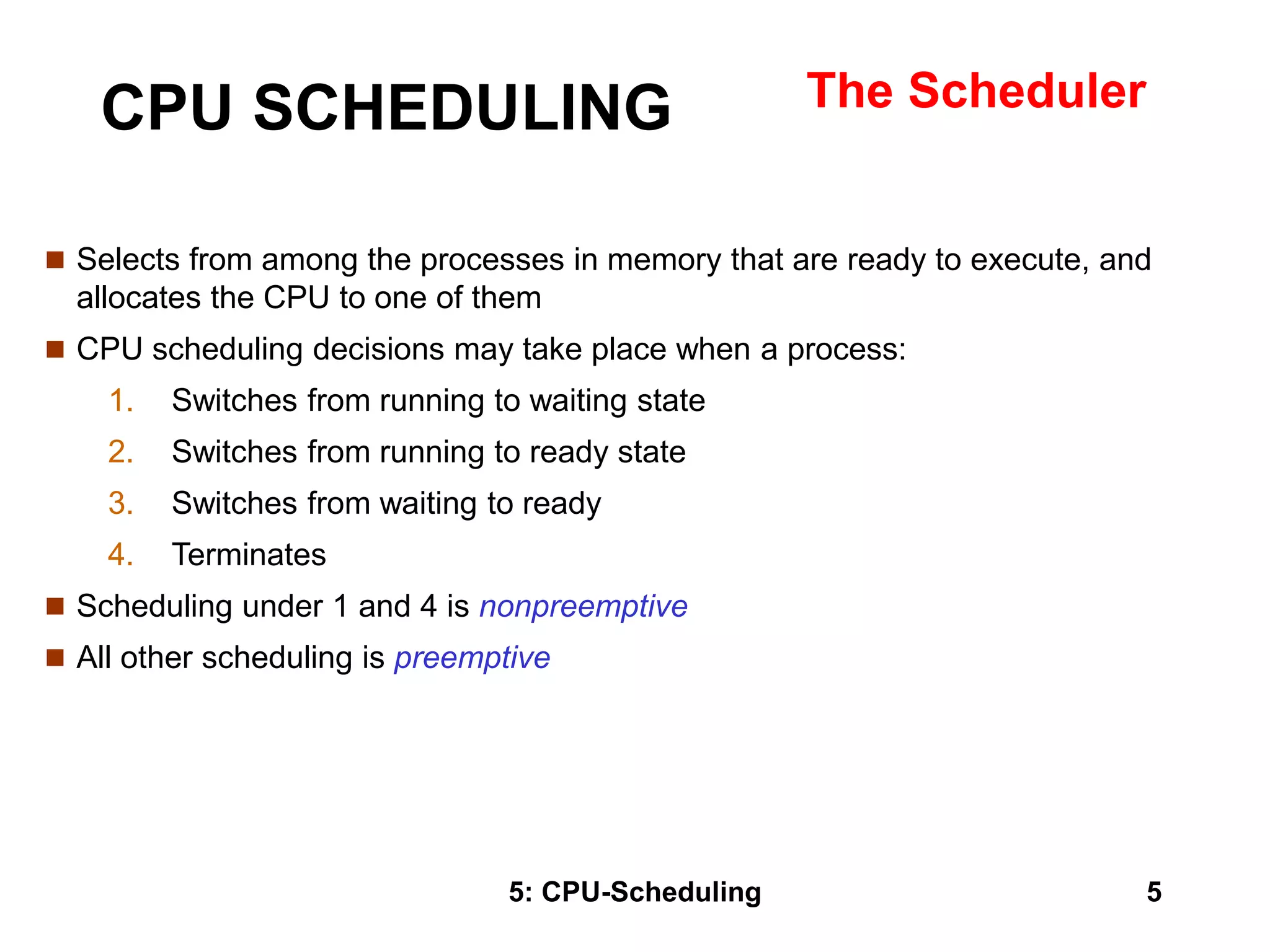

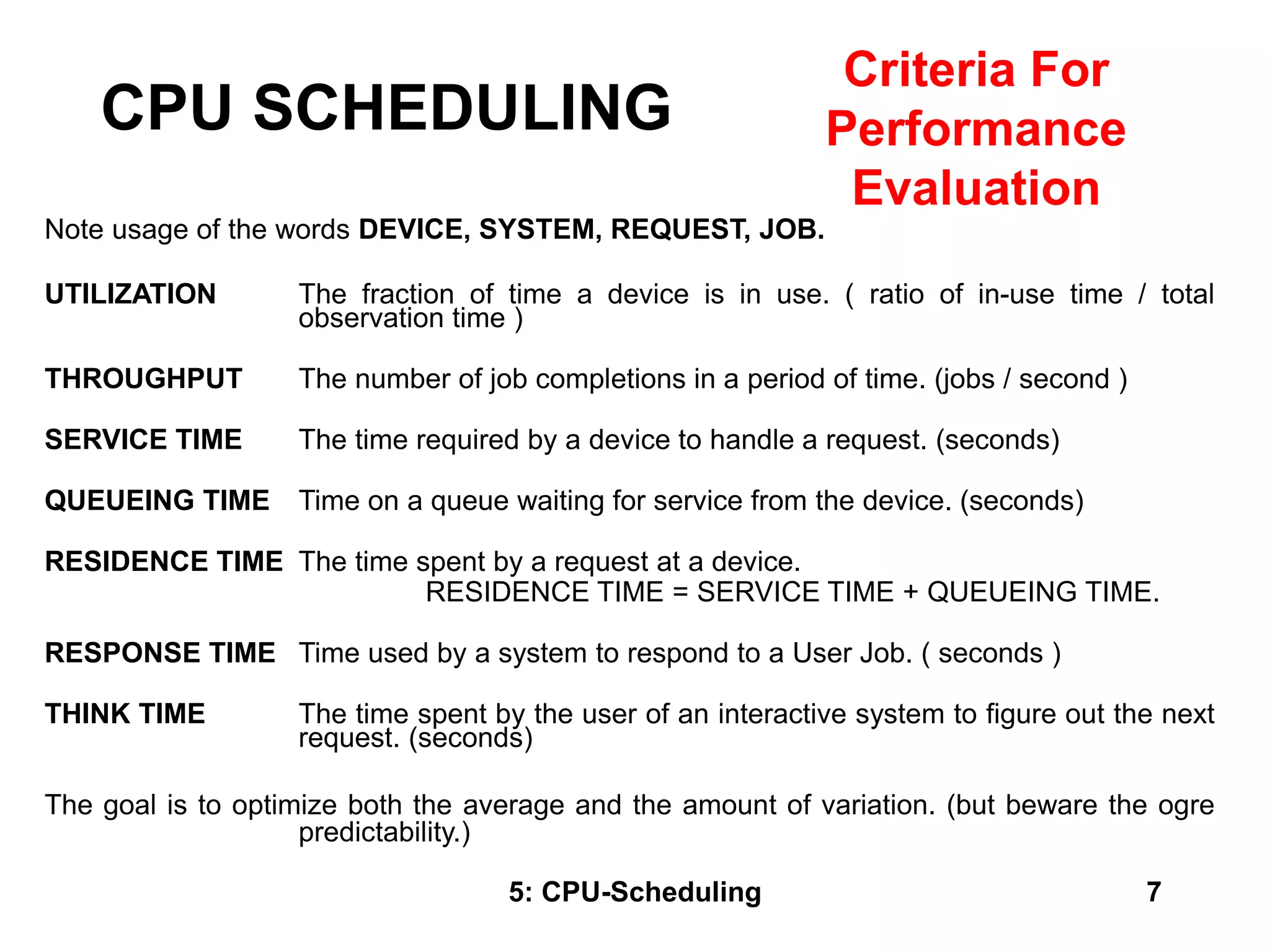

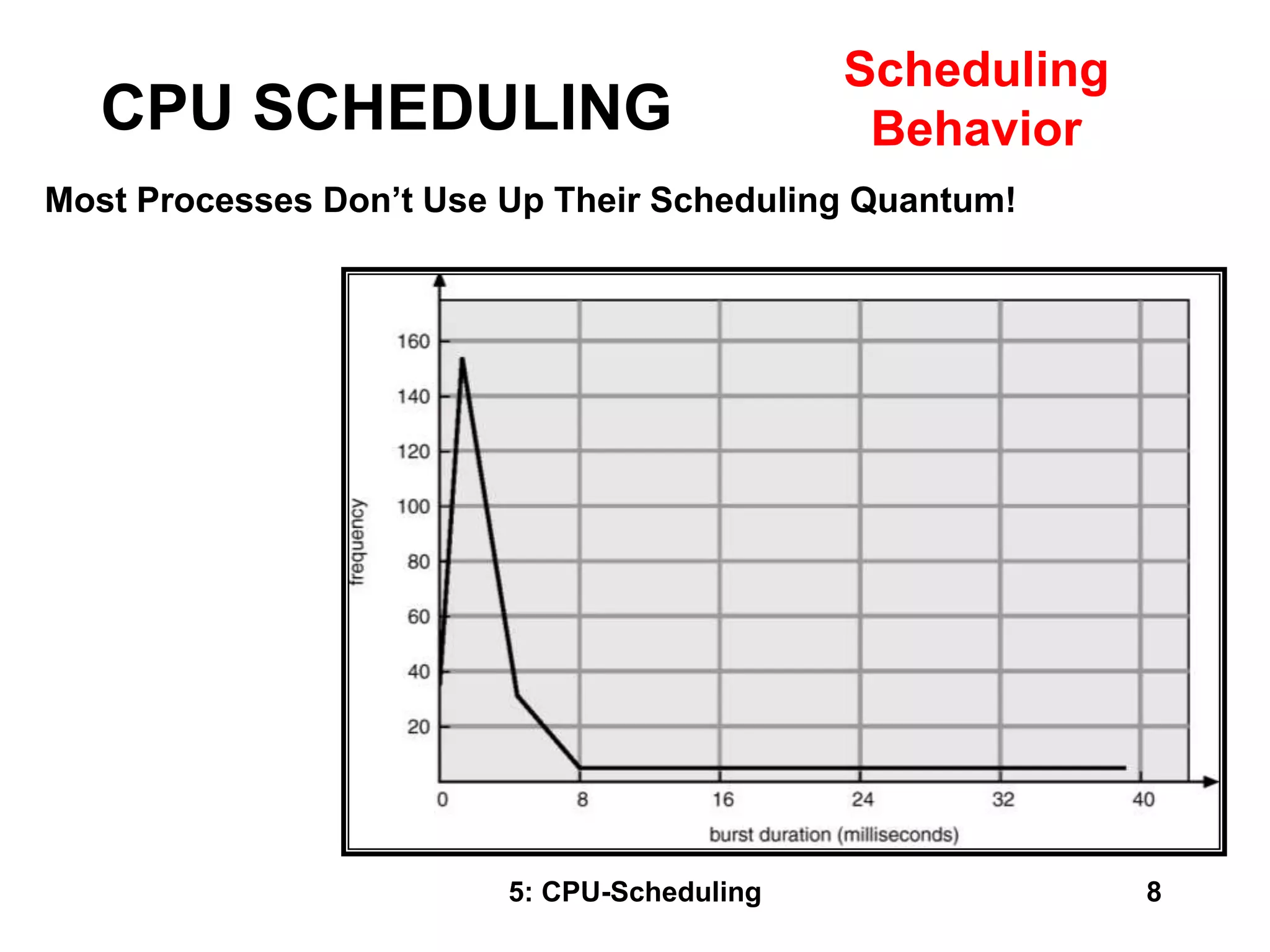

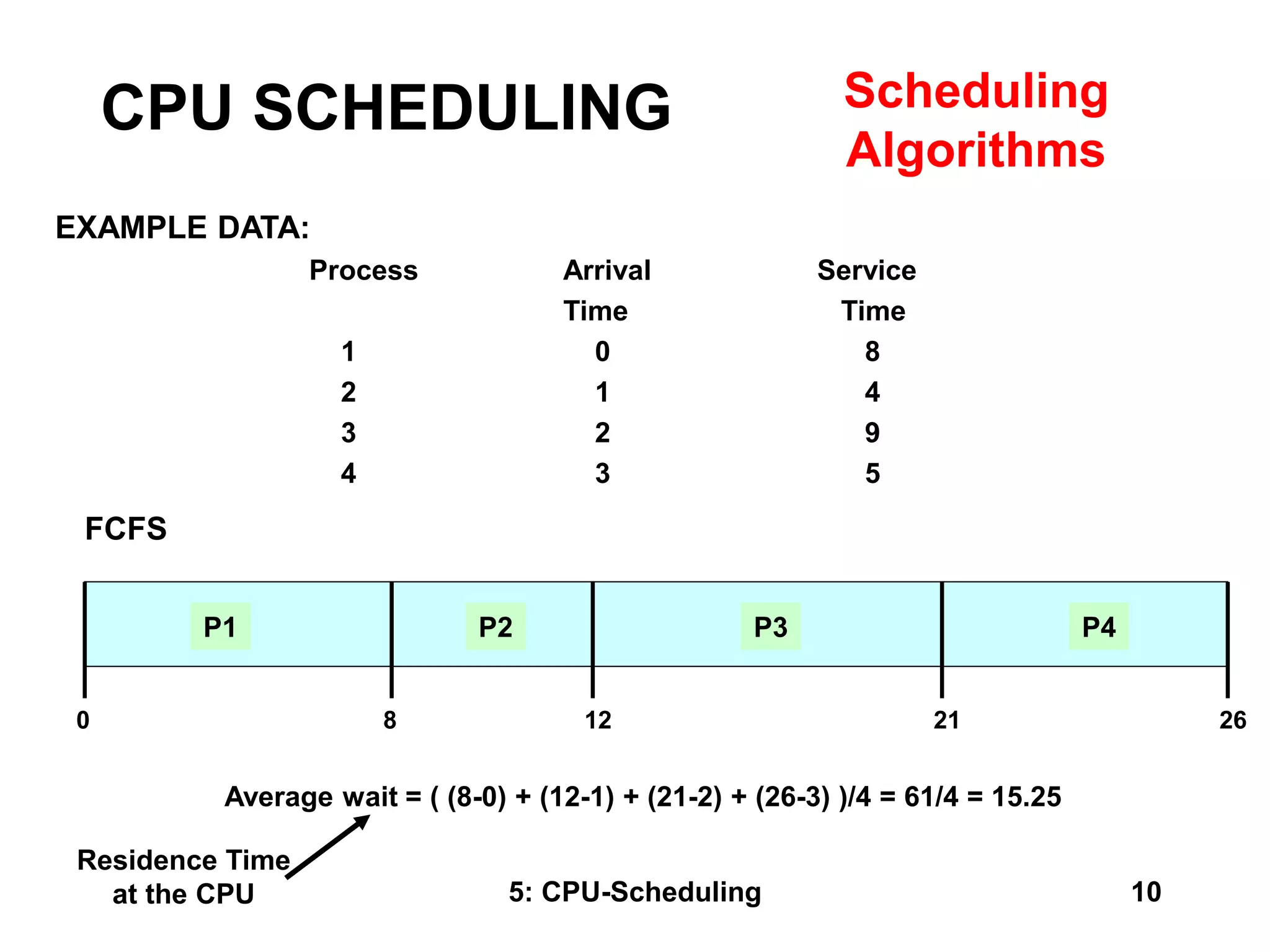

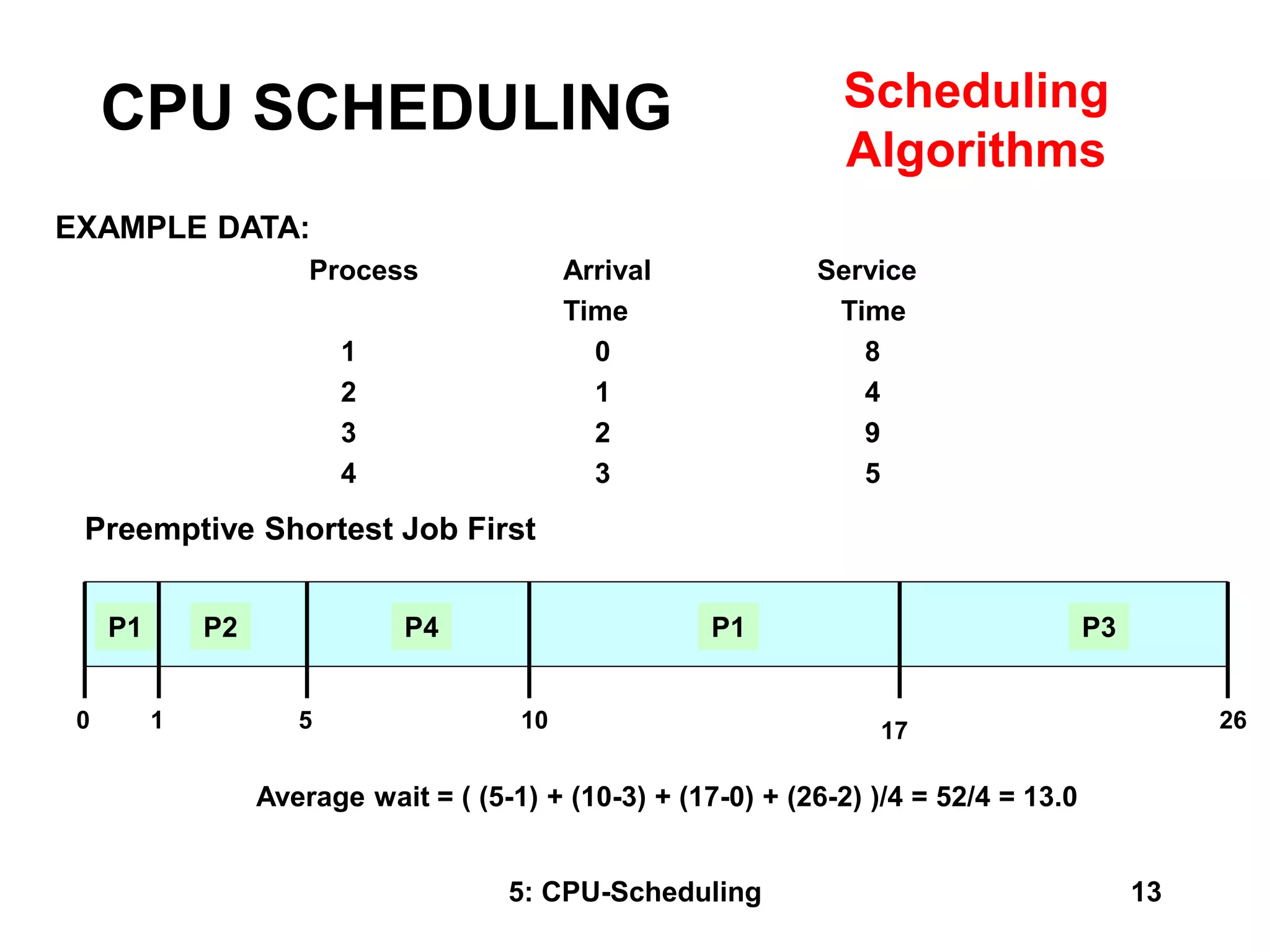

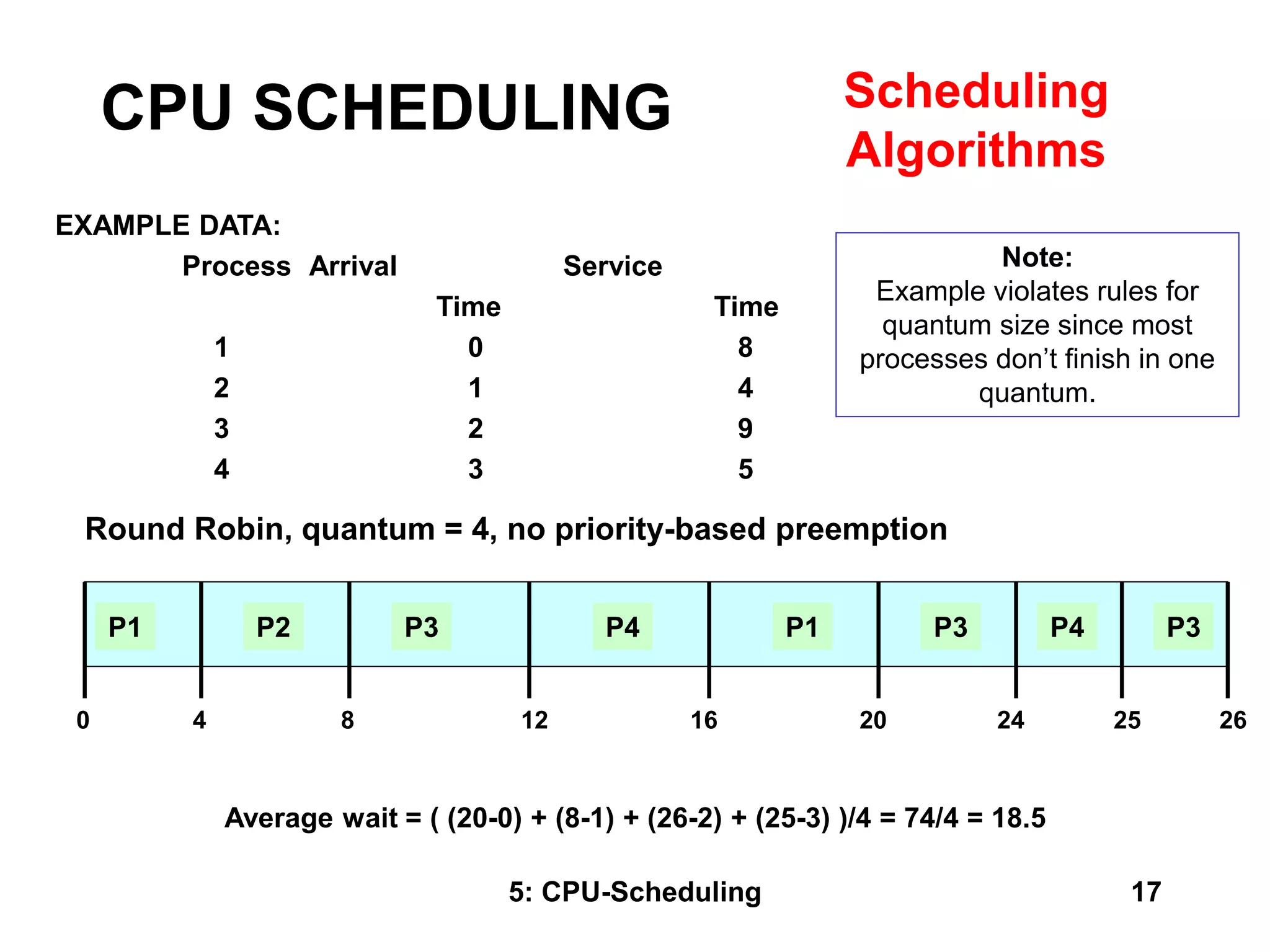

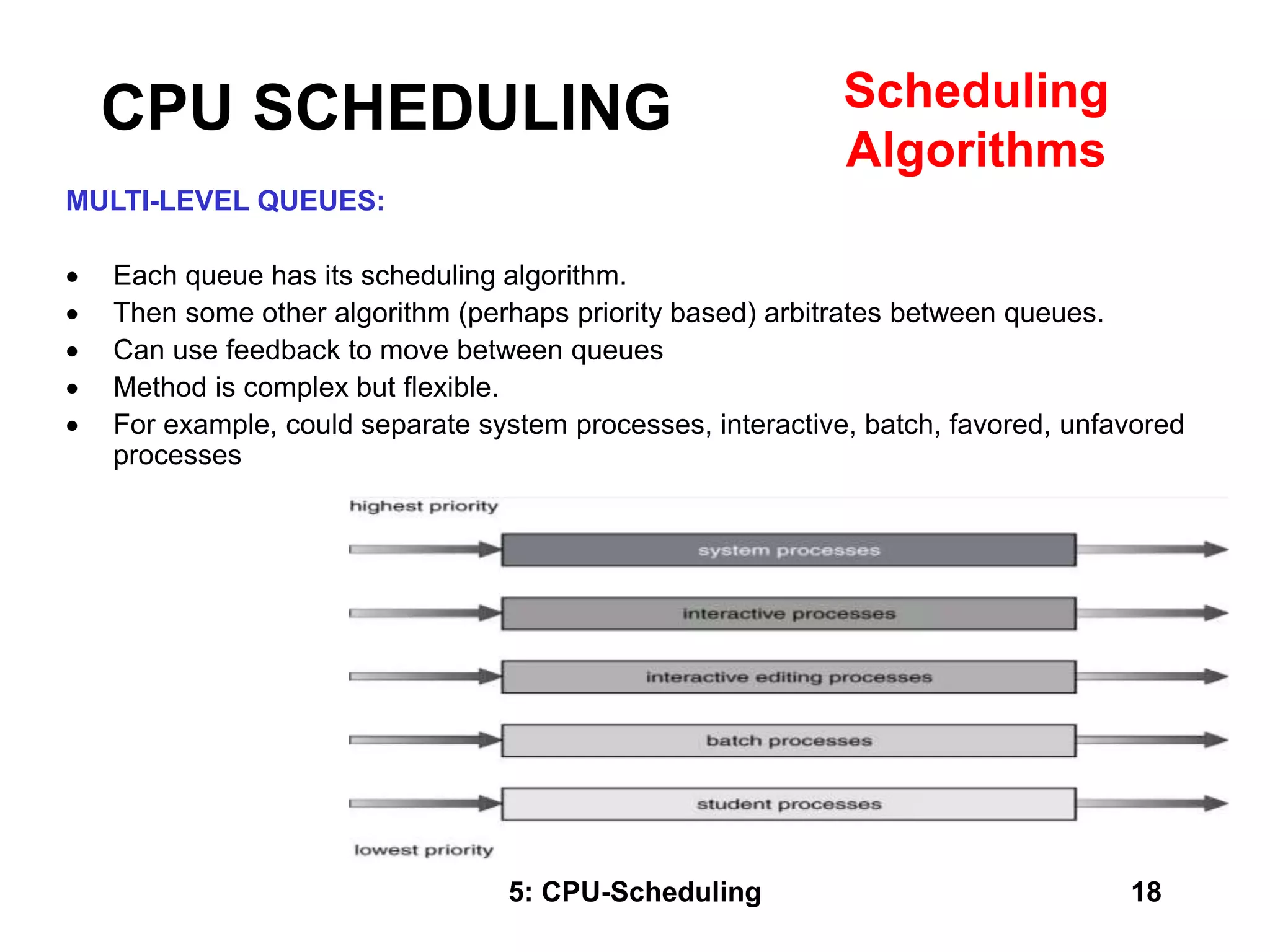

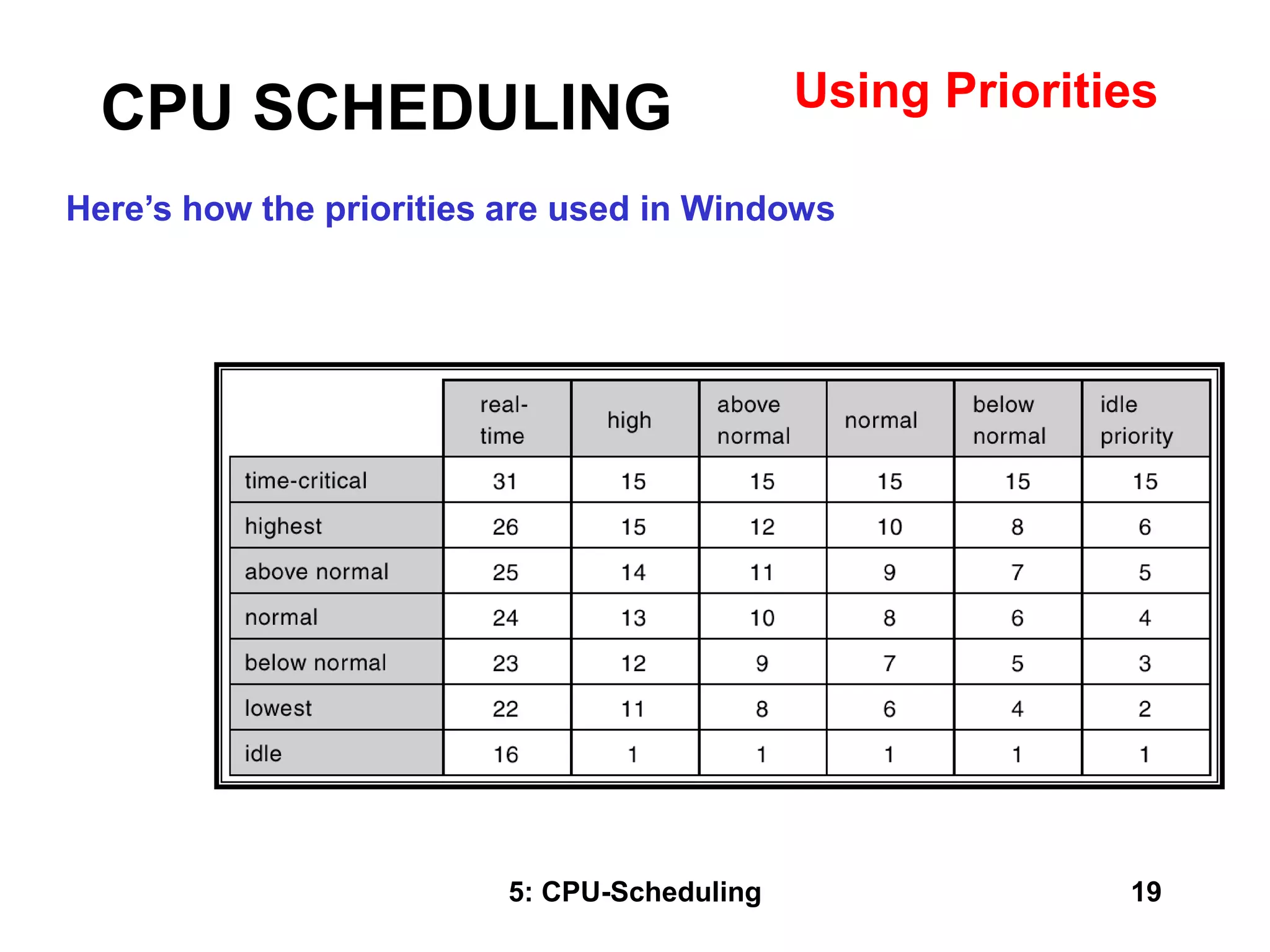

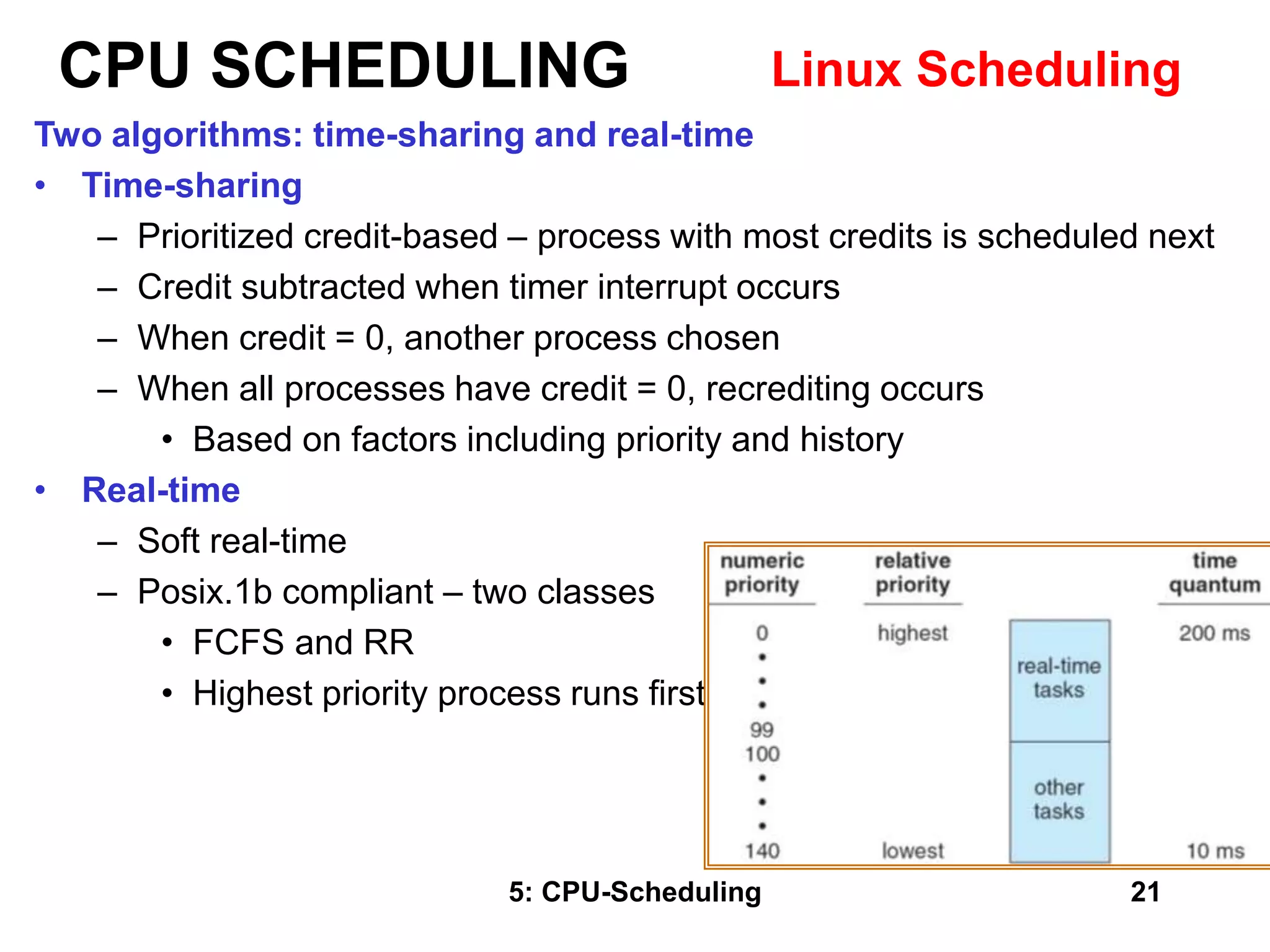

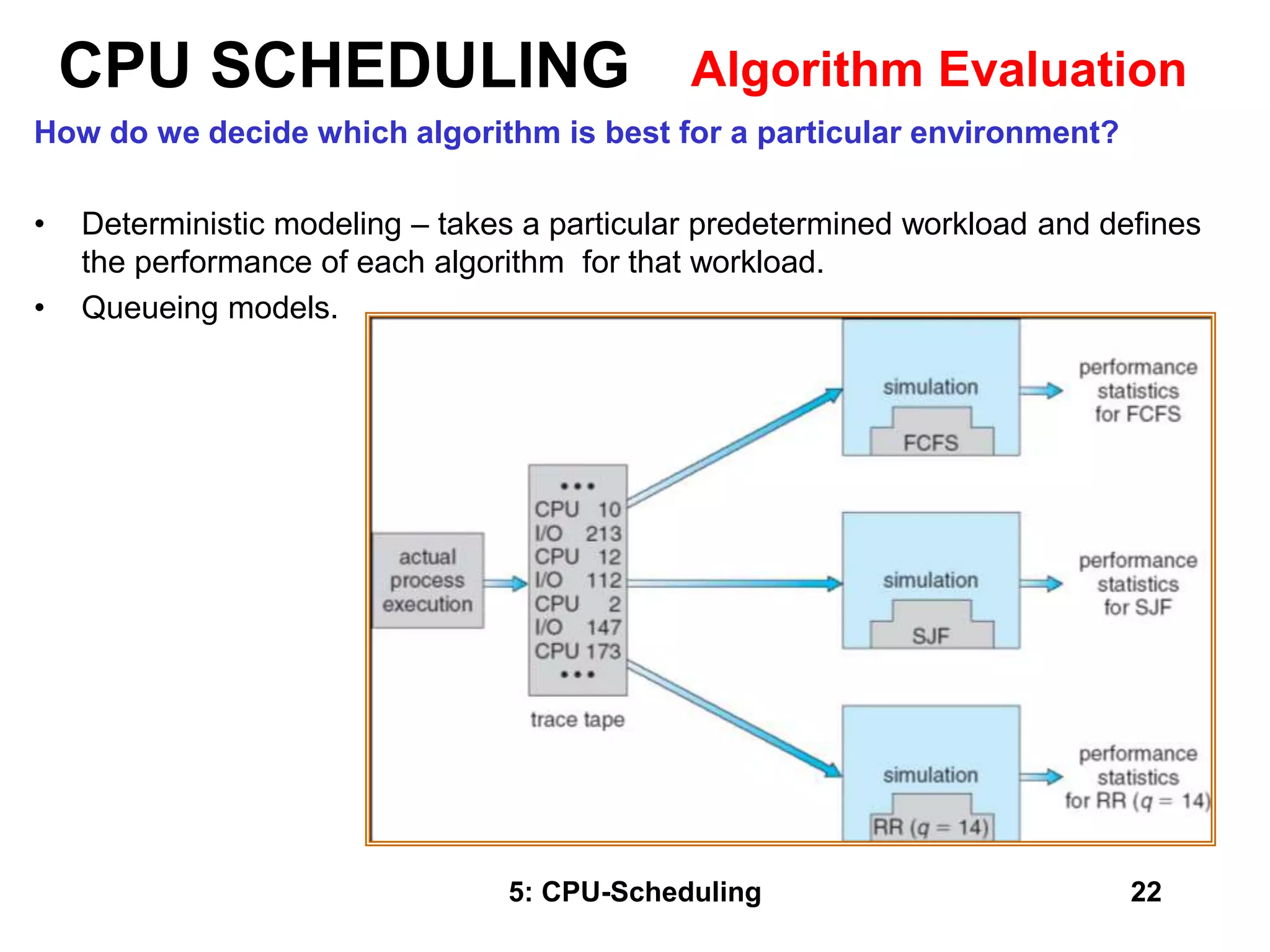

The document covers CPU scheduling in operating systems, focusing on the design of efficient scheduling algorithms that allocate the CPU fairly among competing processes. It discusses scheduling concepts, scheduling criteria, and specific algorithms, including first-come, first-served (FCFS), shortest job first (SJF), priority scheduling, and round-robin (RR). It also outlines the challenges of scheduling on multiprocessor systems and the implications of these strategies for real-time environments.
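
To make the difference between some of the algorithms named above concrete, here is a minimal sketch that computes per-job waiting time under FCFS, non-preemptive SJF, and round-robin. It is an illustration and is not taken from the slides: the function names, the burst times `[24, 3, 3]`, and the quantum of 4 are all assumptions chosen for the example. It also assumes that every job arrives at time 0 and that context switches cost nothing.

```python
from collections import deque


def fcfs_waits(bursts):
    """FCFS: jobs run in list order; each job waits for all jobs before it."""
    waits, clock = [], 0
    for burst in bursts:
        waits.append(clock)
        clock += burst
    return waits


def sjf_waits(bursts):
    """Non-preemptive SJF: shortest burst runs first; waits are in input order."""
    order = sorted(range(len(bursts)), key=lambda i: bursts[i])
    waits, clock = [0] * len(bursts), 0
    for i in order:
        waits[i] = clock
        clock += bursts[i]
    return waits


def rr_waits(bursts, quantum):
    """Round-robin: each job runs for at most `quantum` time units per turn."""
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    remaining = list(bursts)
    queue = deque(range(len(bursts)))
    waits, clock = [0] * len(bursts), 0
    while queue:
        i = queue.popleft()
        run = min(quantum, remaining[i])
        clock += run
        remaining[i] -= run
        if remaining[i] > 0:
            queue.append(i)  # not finished: go to the back of the ready queue
        else:
            # With arrival at t=0, waiting time = completion time - burst time.
            waits[i] = clock - bursts[i]
    return waits


def average(values):
    return sum(values) / len(values)


if __name__ == "__main__":
    bursts = [24, 3, 3]  # hypothetical CPU burst times, all arriving at t=0
    print("FCFS:", fcfs_waits(bursts), average(fcfs_waits(bursts)))
    print("SJF: ", sjf_waits(bursts), average(sjf_waits(bursts)))
    print("RR:  ", rr_waits(bursts, 4), average(rr_waits(bursts, 4)))
```

For this workload the average waiting time is 17 under FCFS, 3 under SJF, and about 5.67 under round-robin with a quantum of 4. This illustrates why the order in which jobs are served matters even when the total work is identical.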