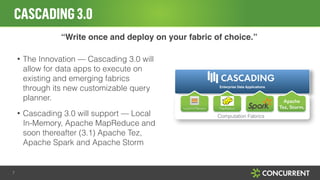

The document discusses log file analytics and large-scale log processing using Logstash and Cascading for big data applications. It highlights the advantages of using Cascading for developing data applications and integrates it with Logstash for enhanced log processing capabilities. Additionally, it emphasizes the importance of operational visibility and application management using the Driven architecture.

![WHAT IS LOG FILE ANALYTICS?

• Making sense of large amounts of [semi|un]structured data

• What type of log file data?

‣ Syslog

‣ Web log files (Apache, Nginix, WebTrends, Omniture)

‣ POS transactions

‣ Advertising impressions (Doubleclick DART, OpenX, Atlas)

‣ Twitter firehose (yes, it’s a log file!)

• Anything with a timestamp and data

2](https://image.slidesharecdn.com/scalablelogprocessingwithcascadingv3-141031134353-conversion-gate02/85/Elasticsearch-Cascading-for-Scalable-Log-Processing-2-320.jpg)

![DEMO: WORD COUNT EXAMPLE WITH CASCADING

String docPath = args[ 0 ];

String wcPath = args[ 1 ];

Properties properties = new Properties();

AppProps.setApplicationJarClass( properties, Main.class );

HadoopFlowConnector flowConnector = new HadoopFlowConnector( properties );

9

configuration

integration

// create source and sink taps

Tap docTap = new Hfs( new TextDelimited( true, "t" ), docPath );

Tap wcTap = new Hfs( new TextDelimited( true, "t" ), wcPath );

processing

// specify a regex to split "document" text lines into token stream

Fields token = new Fields( "token" );

Fields text = new Fields( "text" );

RegexSplitGenerator splitter = new RegexSplitGenerator( token, "[ [](),.]" );

// only returns "token"

Pipe docPipe = new Each( "token", text, splitter, Fields.RESULTS );

// determine the word counts

Pipe wcPipe = new Pipe( "wc", docPipe );

wcPipe = new GroupBy( wcPipe, token );

wcPipe = new Every( wcPipe, Fields.ALL, new Count(), Fields.ALL );

scheduling

// connect the taps, pipes, etc., into a flow definition

FlowDef flowDef = FlowDef.flowDef().setName( "wc" )

.addSource( docPipe, docTap )

.addTailSink( wcPipe, wcTap );

// create the Flow

Flow wcFlow = flowConnector.connect( flowDef ); // <<-- Unit of Work

wcFlow.complete(); // <<-- Runs jobs on Cluster](https://image.slidesharecdn.com/scalablelogprocessingwithcascadingv3-141031134353-conversion-gate02/85/Elasticsearch-Cascading-for-Scalable-Log-Processing-9-320.jpg)