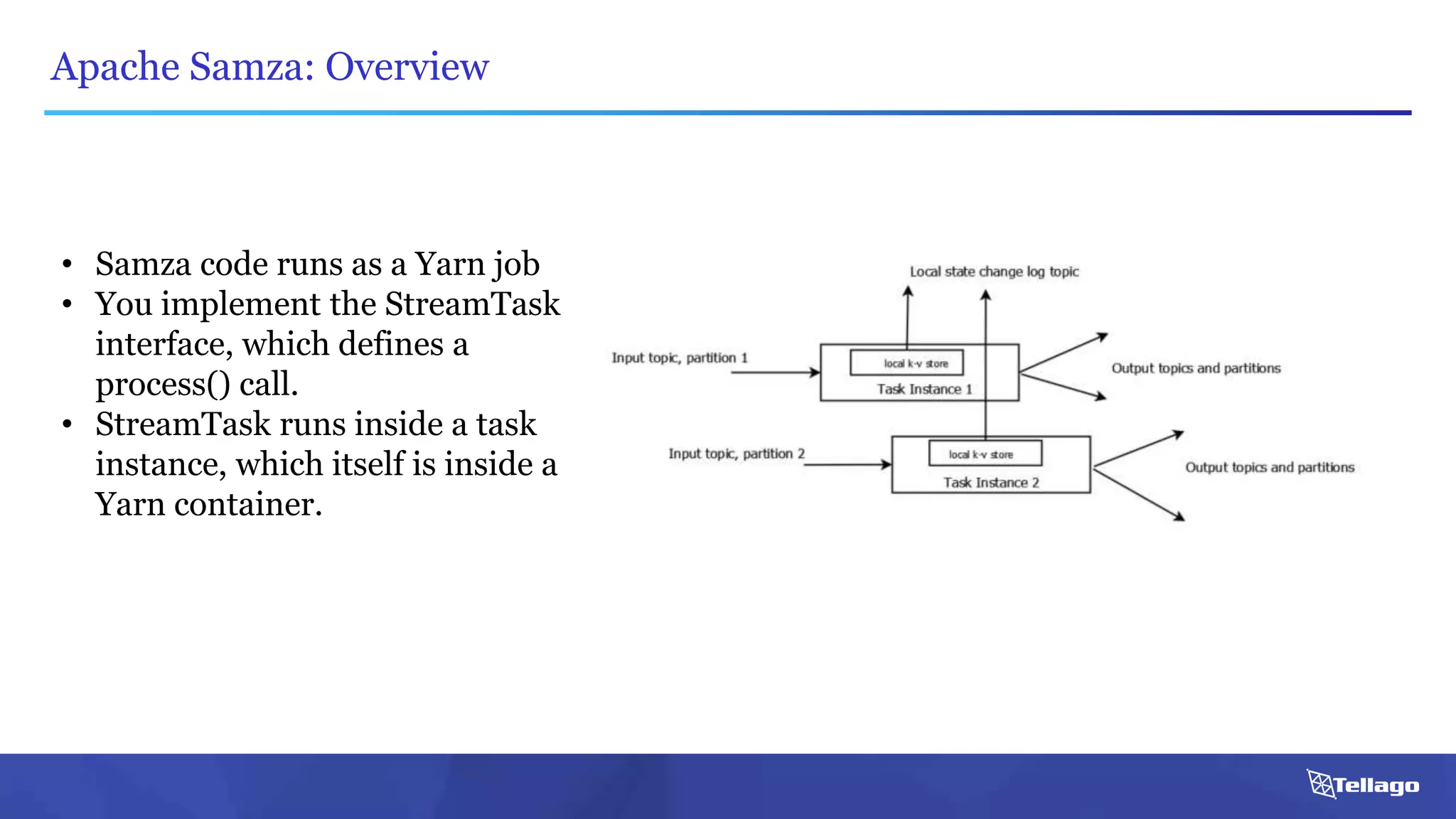

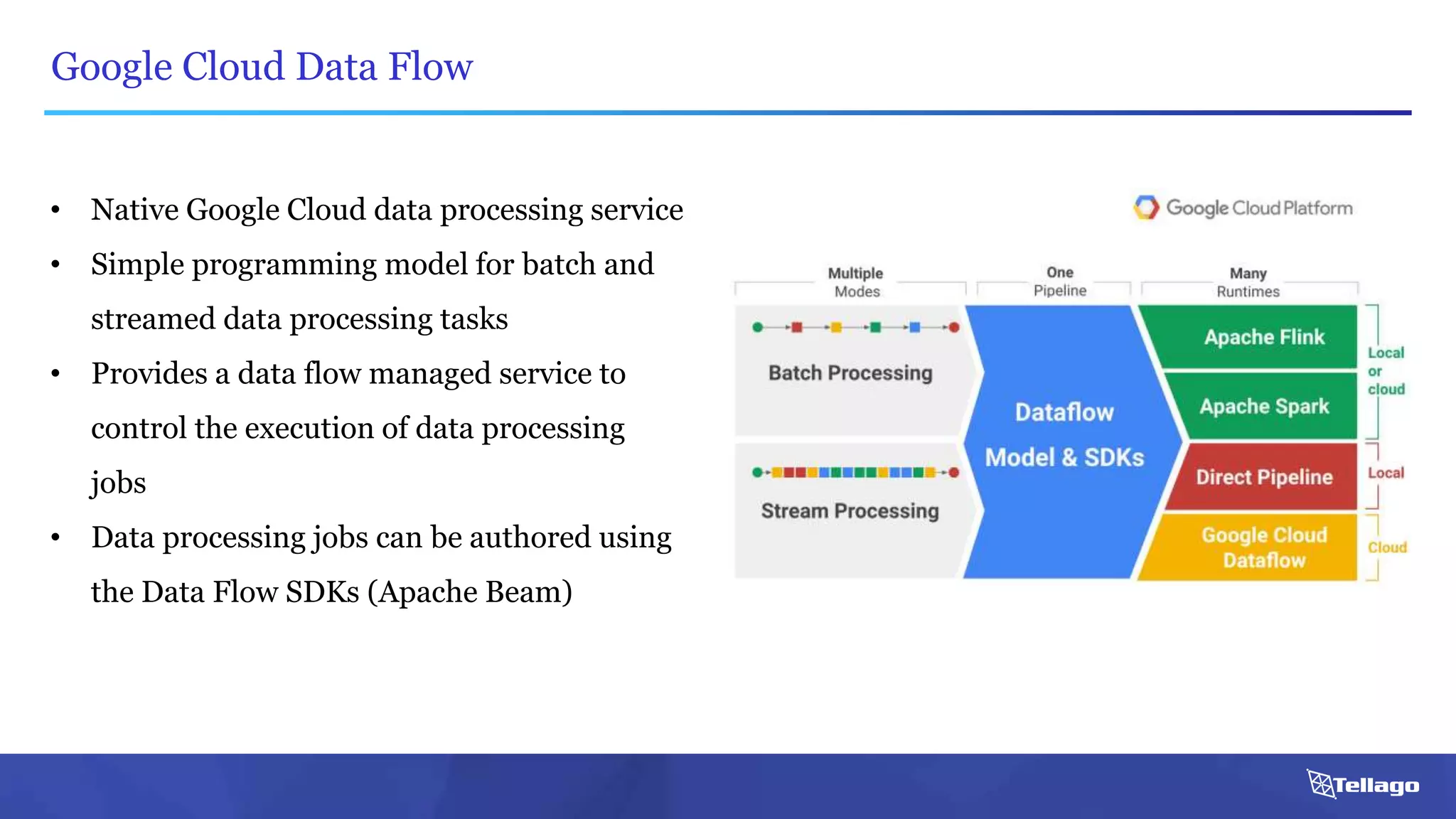

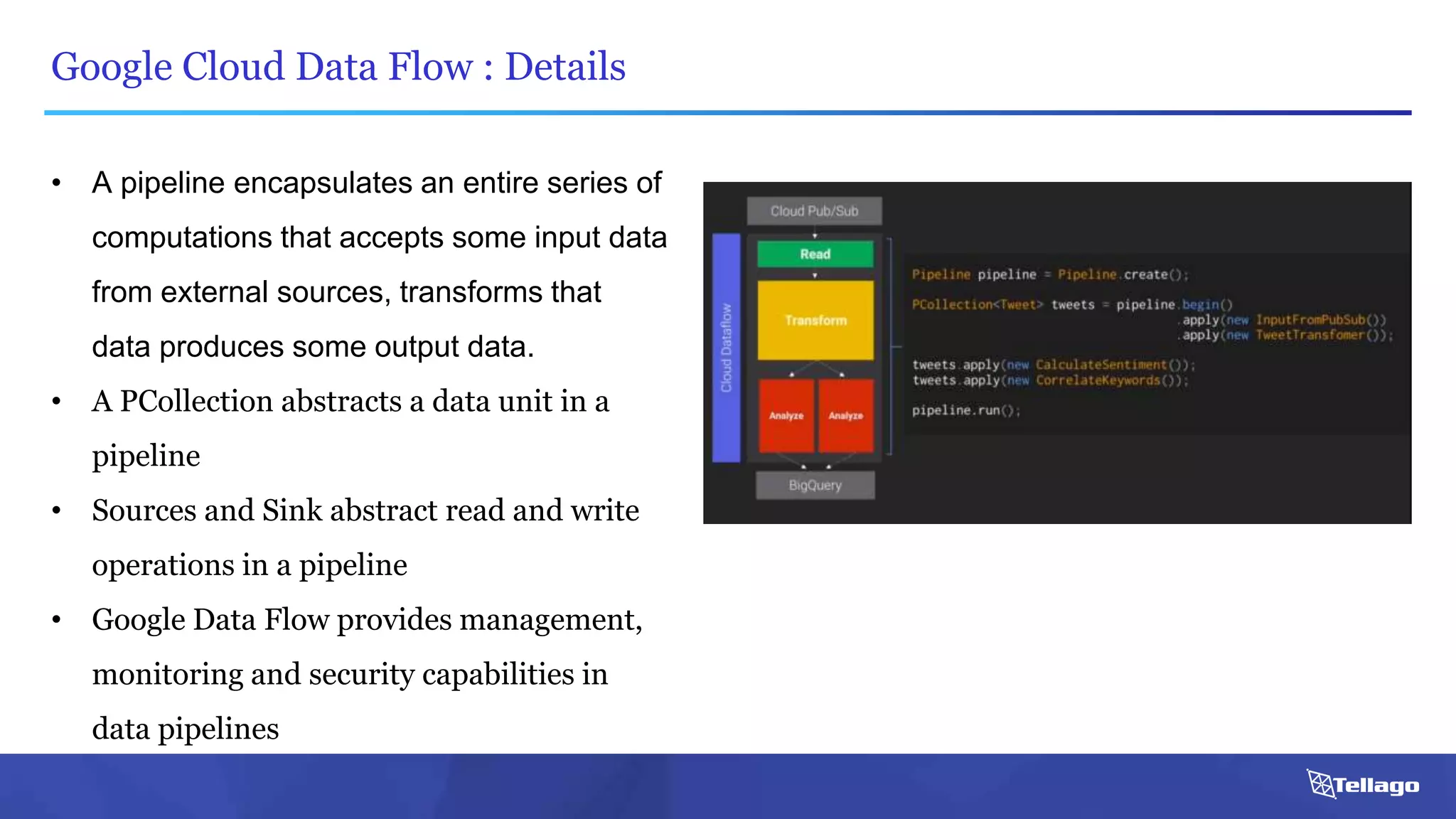

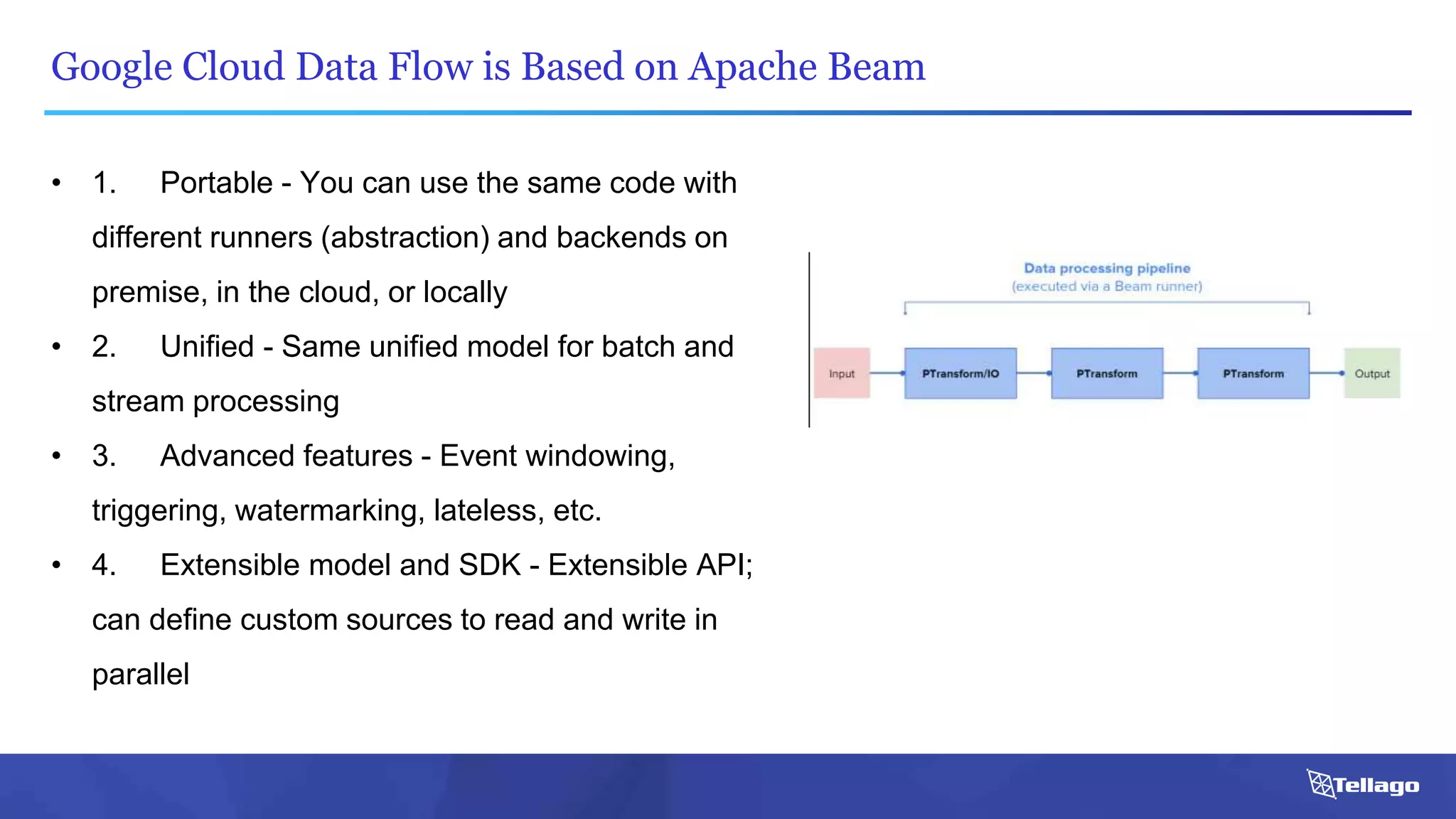

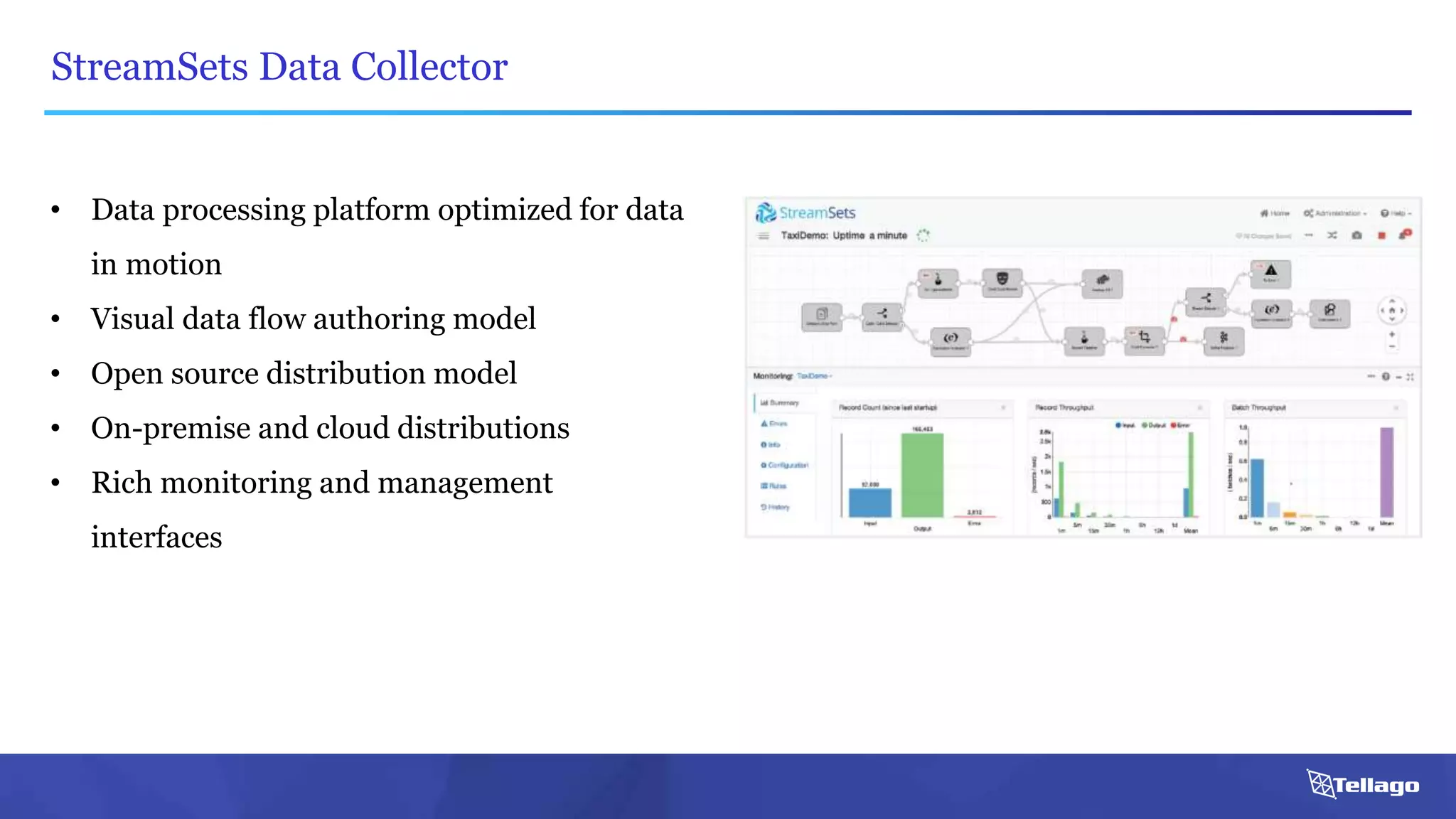

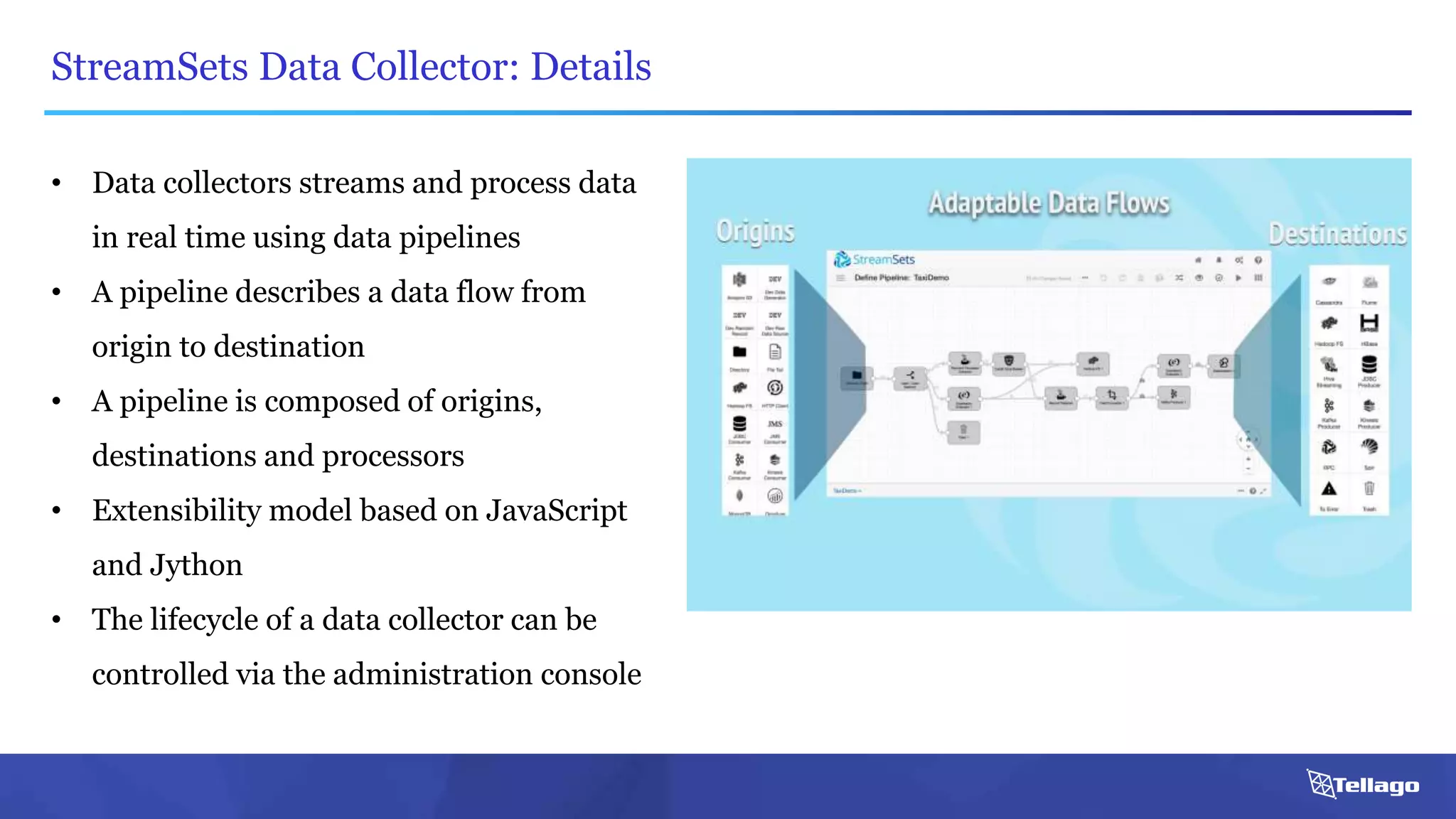

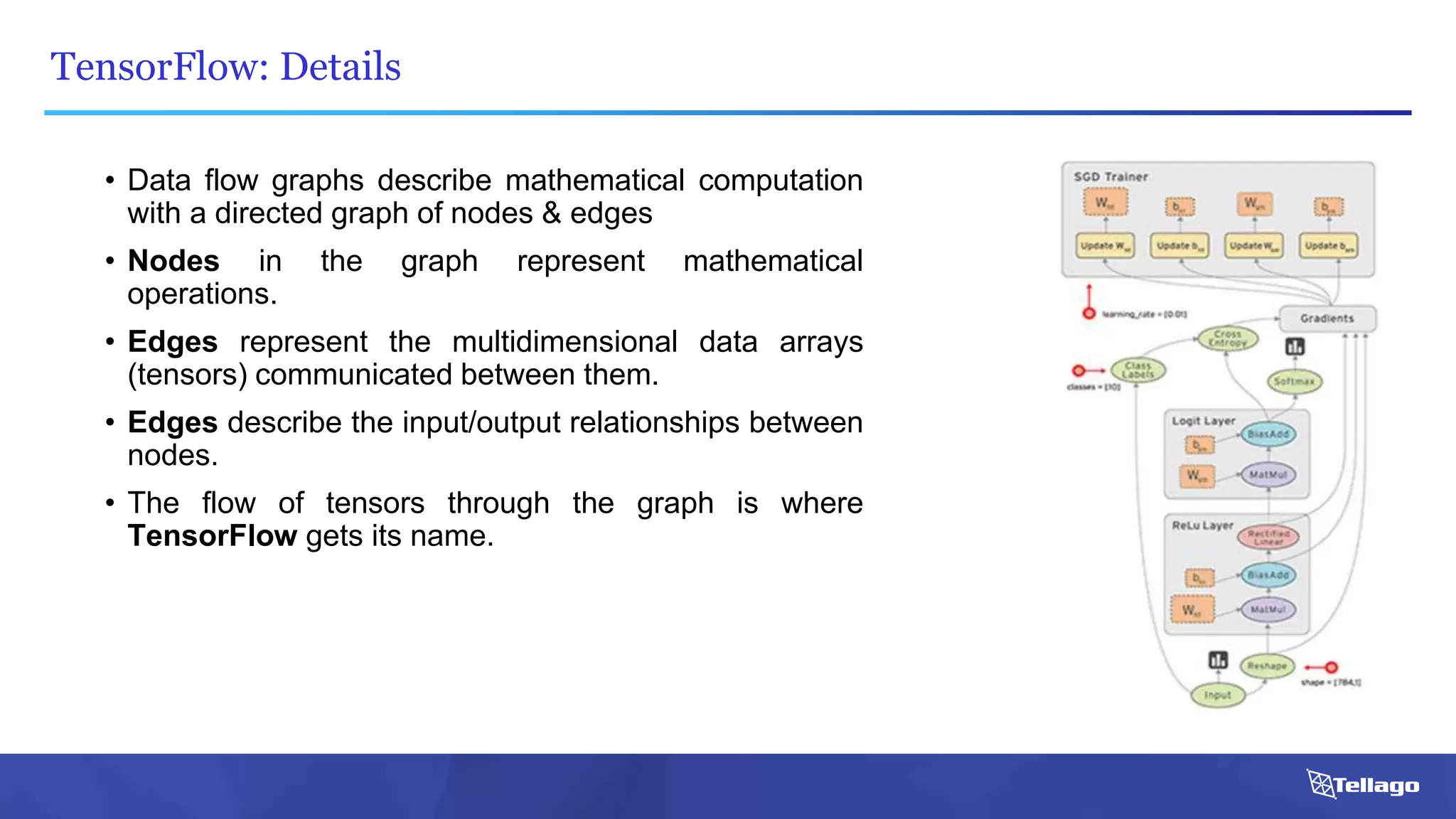

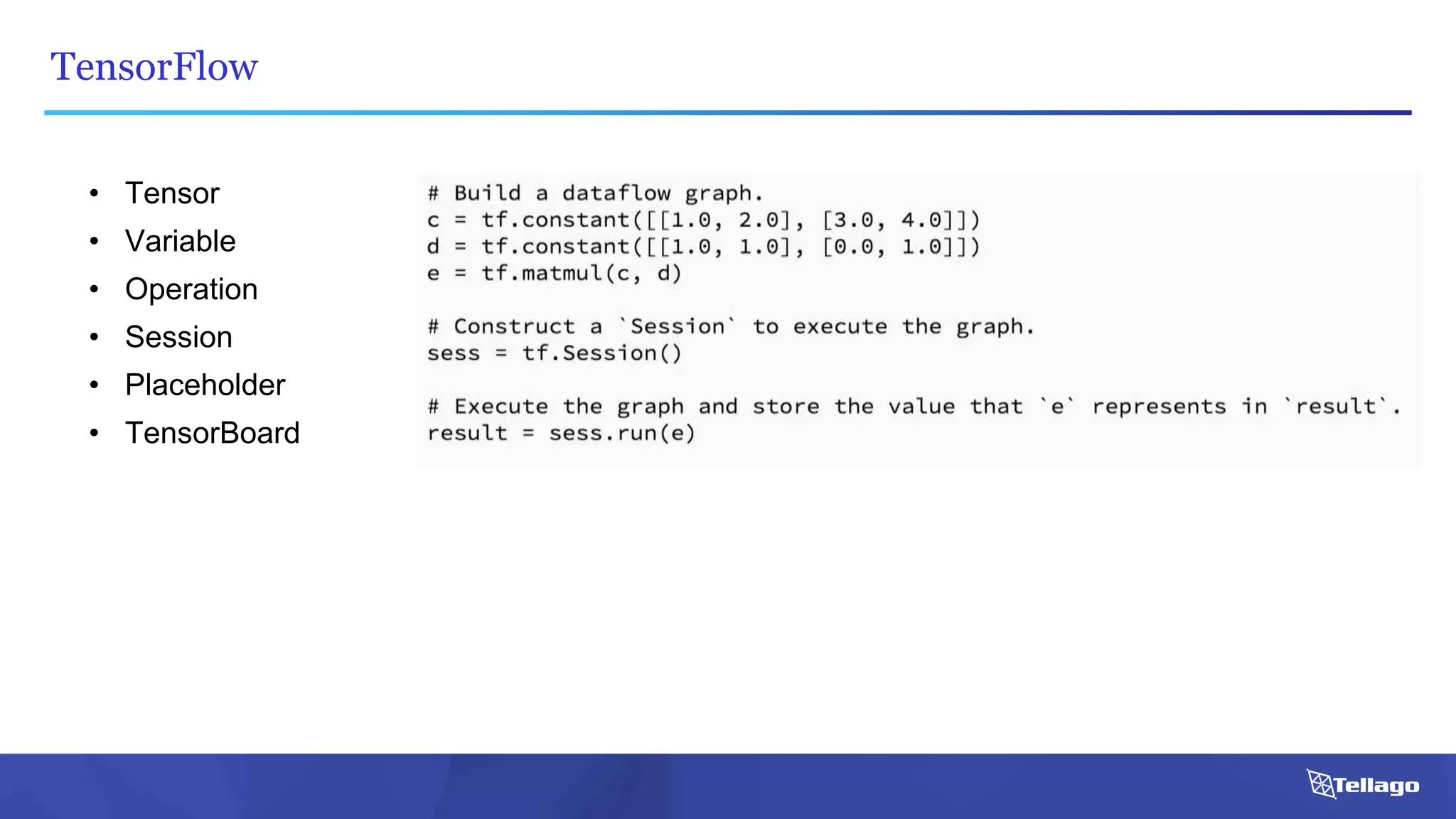

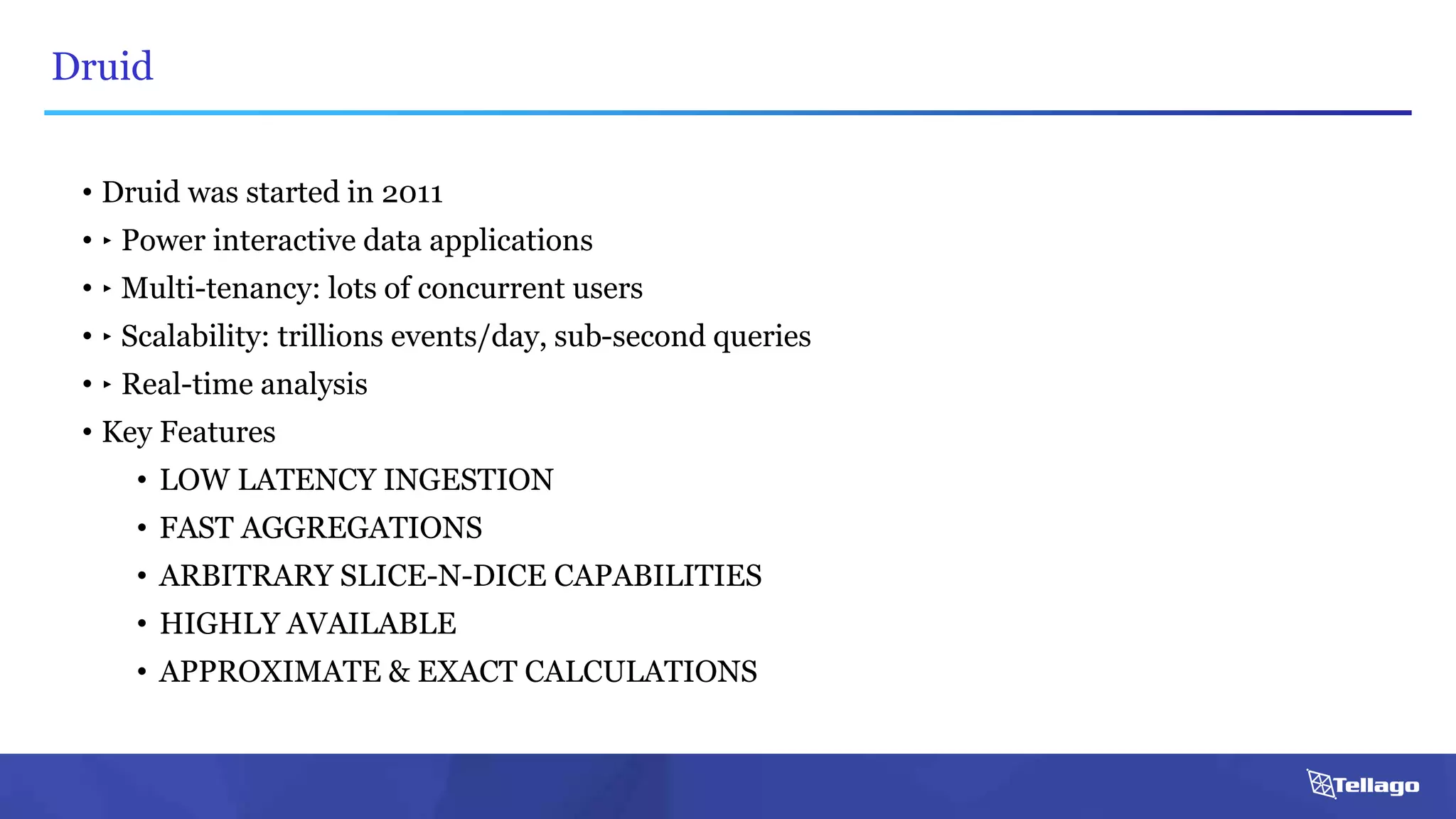

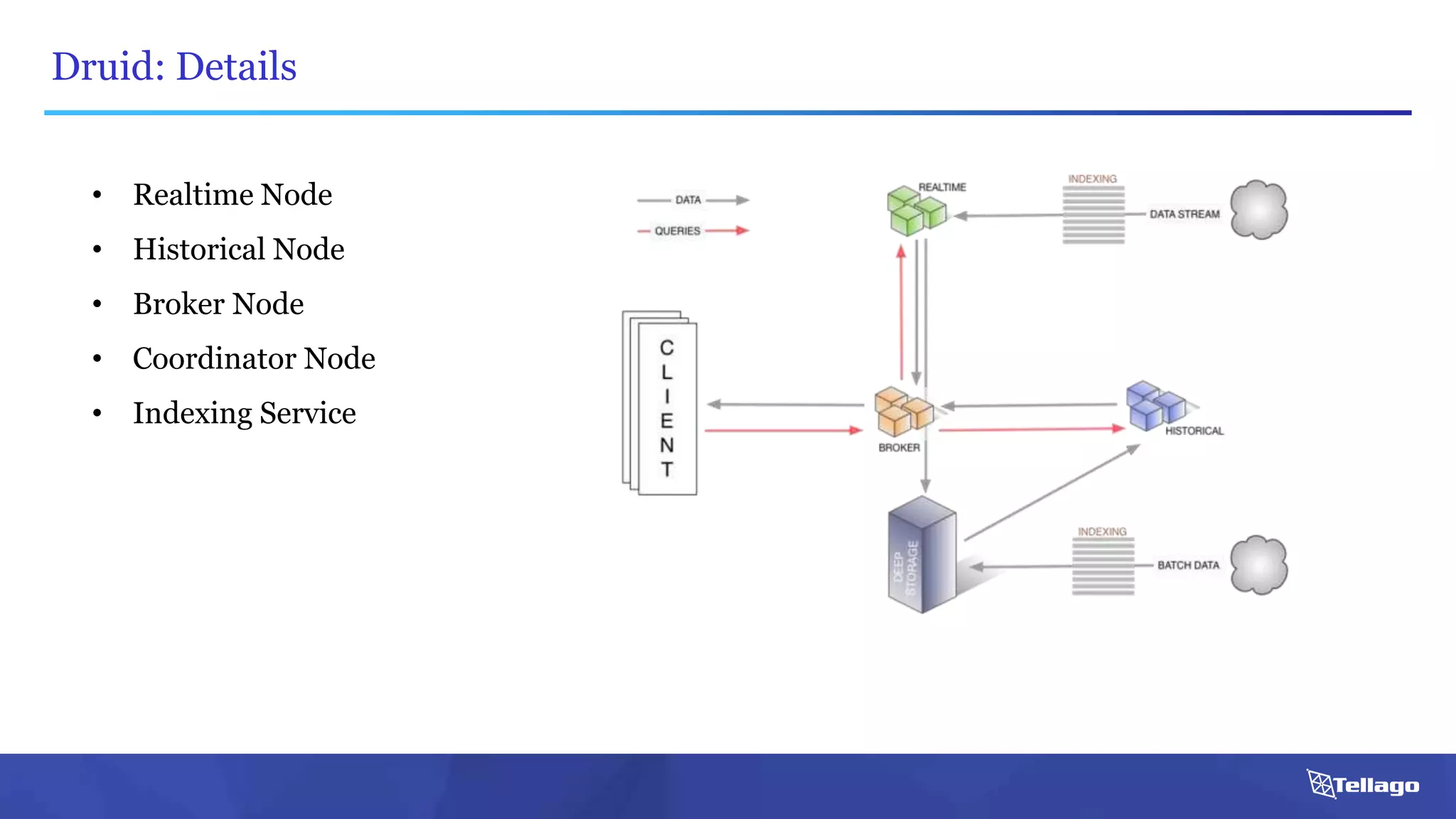

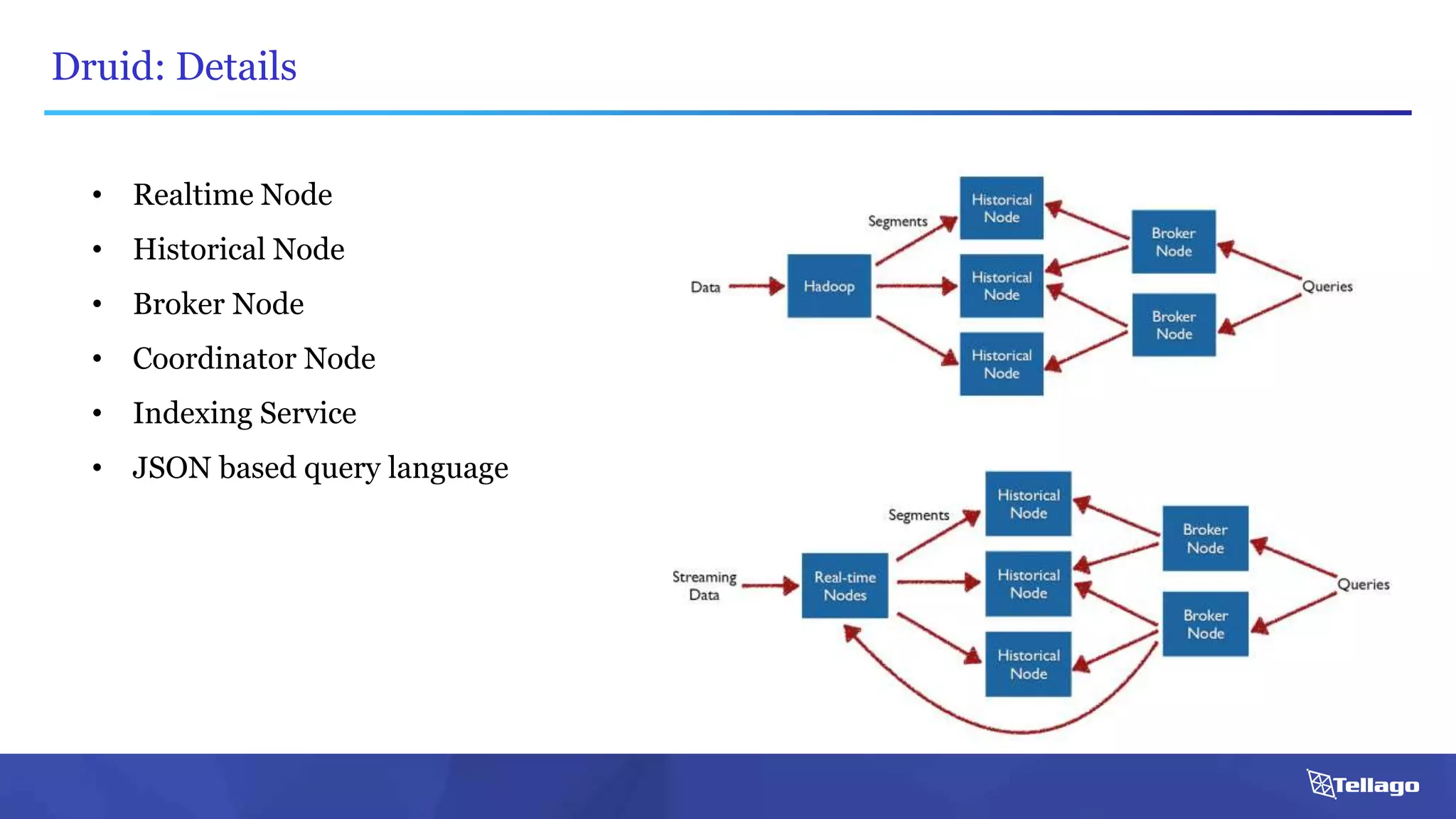

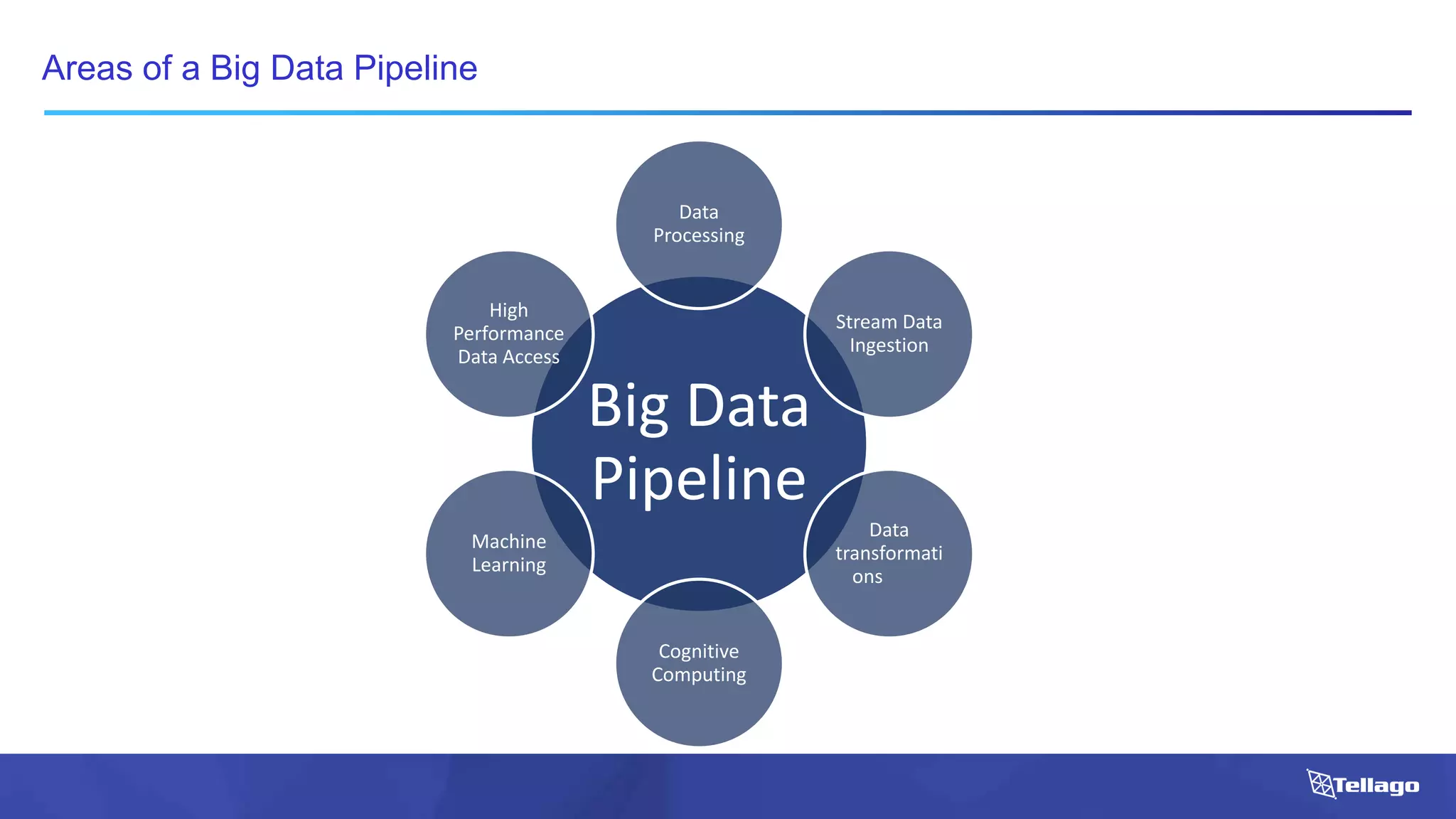

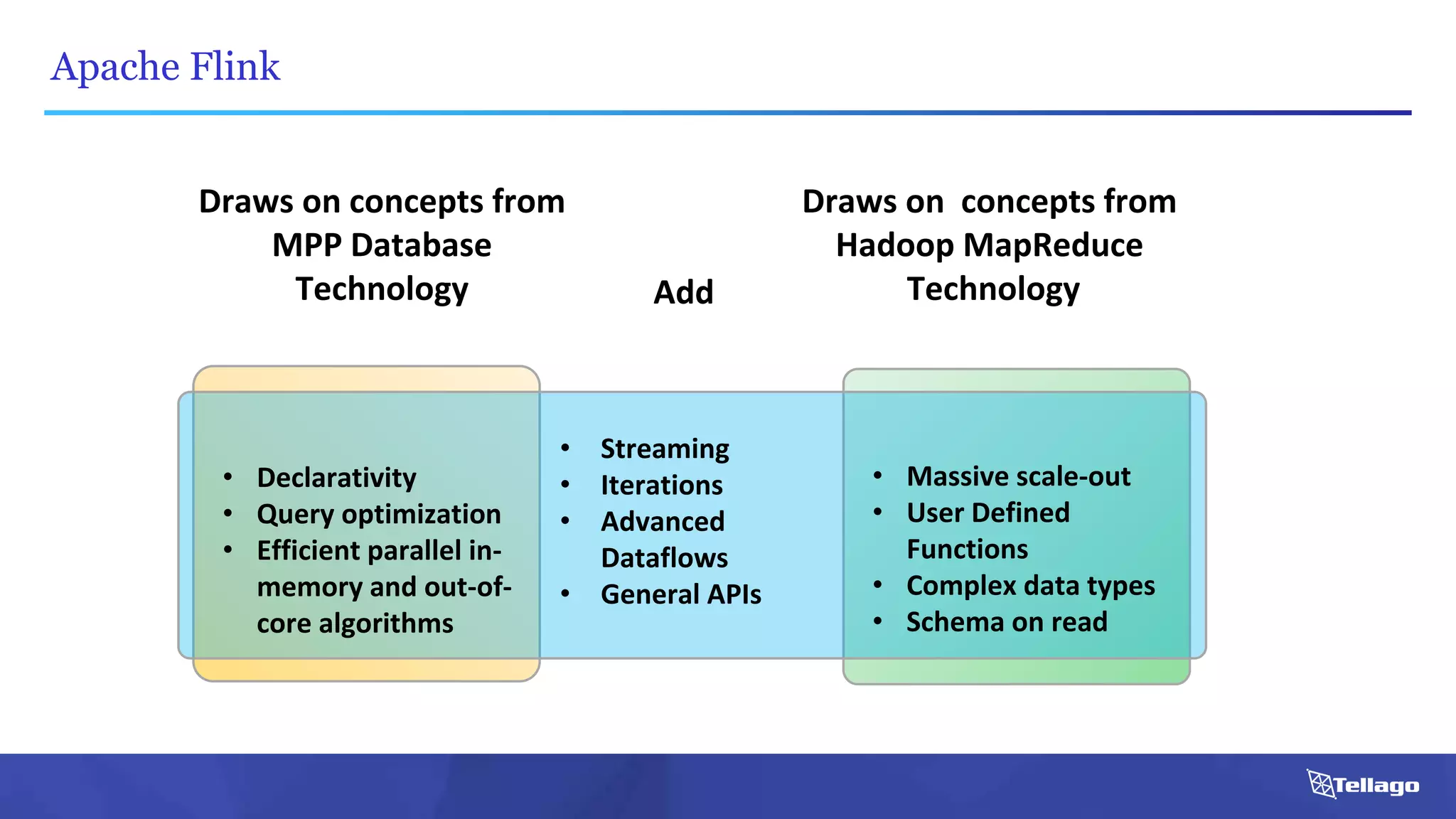

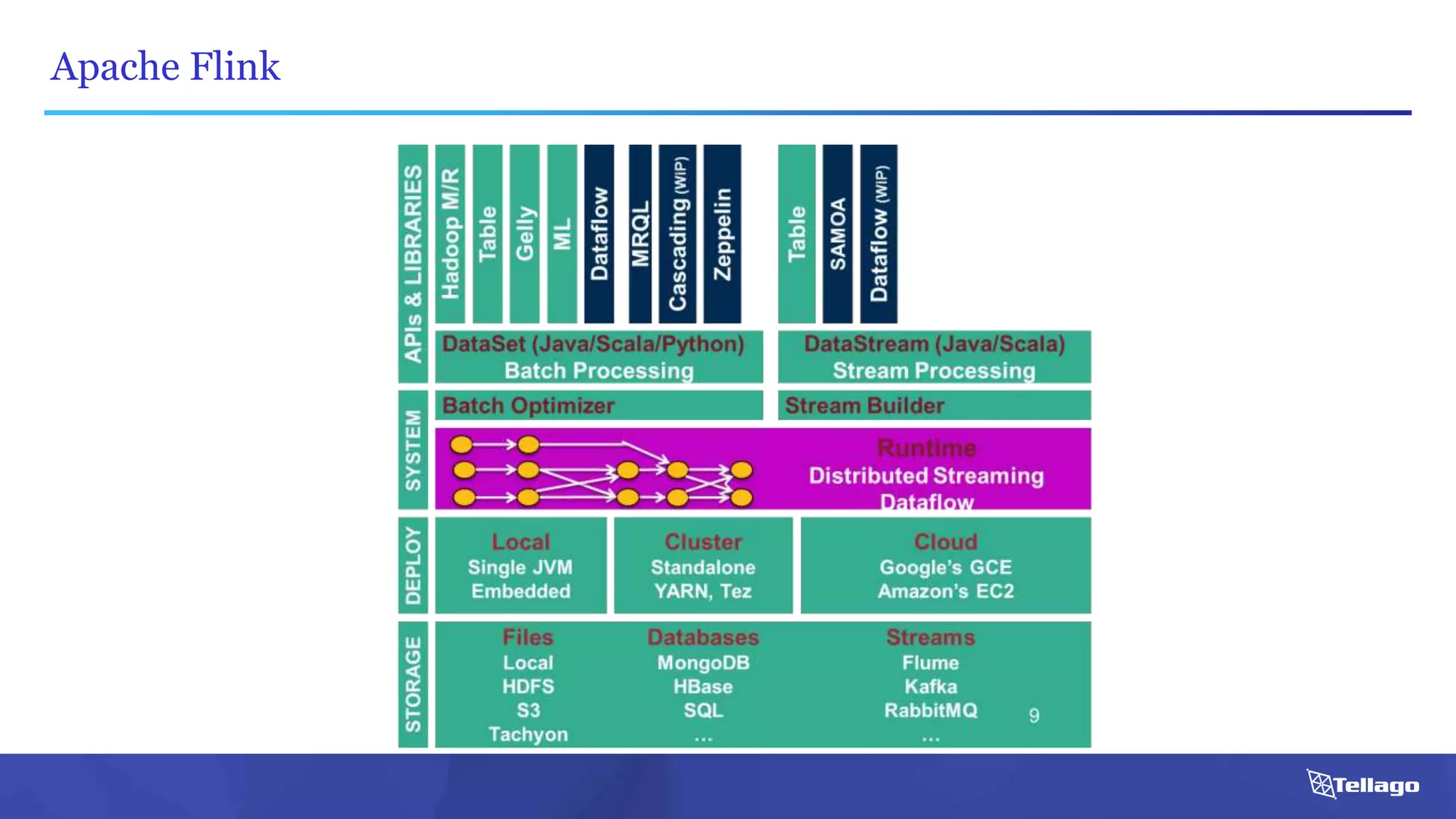

This document introduces several big data technologies that are less well known than established solutions such as Hadoop and Spark. It discusses Apache Flink for stream processing; Apache Samza for processing real-time data from Kafka; Google Cloud Dataflow, a managed service for batch and stream data processing; and StreamSets Data Collector for collecting and processing data in real time. It also covers machine learning technologies such as TensorFlow, which expresses computations as dataflow graphs, and cognitive computing services from Microsoft. The aim is to think beyond traditional stacks and to learn from companies that build pipelines at scale.

![Apache Flink: An Example](https://image.slidesharecdn.com/bigdatatechnologiesyoudidntknowaboutpublic-160331190552/75/10-Big-Data-Technologies-you-Didn-t-Know-About-15-2048.jpg)

Apache Flink: An Example

    case class Word (word: String, frequency: Int)

DataStream API (streaming):

    val lines: DataStream[String] = env.fromSocketStream(...)

    lines.flatMap {line => line.split(" ")
        .map(word => Word(word,1))}
      .window(Time.of(5,SECONDS)).every(Time.of(1,SECONDS))
      .groupBy("word").sum("frequency")
      .print()

DataSet API (batch):

    val lines: DataSet[String] = env.readTextFile(...)

    lines.flatMap {line => line.split(" ")
        .map(word => Word(word,1))}
      .groupBy("word").sum("frequency")
      .print()
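The two snippets on this slide differ only in the windowing step: the batch version counts words over the whole input, while the streaming version counts them over a 5-second window that is re-evaluated every second. The sketch below imitates that difference with plain Scala collections so it can be run without a cluster. It is not the Flink API. The object name `WordCountSketch`, the functions `batchCount` and `slidingCounts`, the per-line arrival timestamps, and the window boundary convention (each window covers the interval `(end - size, end]`) are all assumptions made for illustration, and Flink's real window alignment and time semantics may differ.

```scala
// Plain Scala collections only; this is NOT the Flink API.
// It mimics what the two slide snippets compute so the semantics are easy to inspect.
object WordCountSketch {
  final case class Word(word: String, frequency: Int)

  // Batch case: split every line into words and sum one count per occurrence.
  def batchCount(lines: Seq[String]): Map[String, Int] =
    lines
      .flatMap(_.split(" "))
      .filter(_.nonEmpty)
      .map(w => Word(w, 1))
      .groupBy(_.word)
      .map { case (w, ws) => w -> ws.map(_.frequency).sum }

  // Streaming case, emulated: each line carries a hypothetical arrival time in seconds.
  // A window of `sizeSec` seconds is evaluated every `slideSec` seconds, so
  // consecutive windows overlap and one word can be counted in several of them.
  def slidingCounts(
      timedLines: Seq[(Long, String)],
      sizeSec: Long = 5,
      slideSec: Long = 1
  ): Seq[(Long, Map[String, Int])] = {
    if (timedLines.isEmpty) Seq.empty
    else {
      val first = timedLines.map(_._1).min
      val last = timedLines.map(_._1).max
      // Window ends run from the first arrival to the last one;
      // each window covers the interval (end - sizeSec, end].
      (first to last by slideSec).map { end =>
        val inWindow = timedLines.collect {
          case (t, line) if t > end - sizeSec && t <= end => line
        }
        end -> batchCount(inWindow)
      }
    }
  }

  def main(args: Array[String]): Unit = {
    // Batch: one result for the whole input.
    println(batchCount(Seq("to be or", "not to be")))

    // Streaming (emulated): one result per window end.
    val timed = Seq(0L -> "to be", 2L -> "or not", 6L -> "to be")
    slidingCounts(timed).foreach { case (end, counts) =>
      println(s"window ending at ${end}s: $counts")
    }
  }
}
```

Because the window is longer than the slide interval, the line arriving at second 2 contributes to several consecutive results. That overlap is what the `.window(...).every(...)` pair on the slide expresses.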