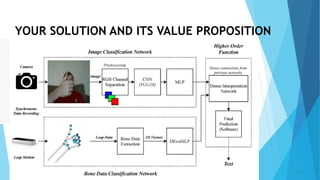

The document proposes a project titled "Real-time Sign Language Translation using Computer Vision and Machine Learning". The project aims to develop a system that translates American Sign Language (ASL) gestures into spoken language accurately and in real time. The proposed work involves surveying current techniques, collecting a dataset, developing a computer vision and machine learning model, integrating the model into a real-time system, testing and refining that system, and deploying it to facilitate communication for deaf individuals.
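
To make the integration step more concrete, the following is a minimal sketch of how per-frame predictions might be turned into a stable stream of recognized signs. It is an illustration under stated assumptions, not the proposal's design: the names `translate_stream` and `toy_classifier`, the `window` and `min_votes` parameters, and the use of majority voting over a sliding window are all hypothetical, and the classifier is a stand-in for whatever trained model the project would produce. Feature extraction from video and speech synthesis are left out.

```python
from collections import Counter, deque
from typing import Callable, Iterable, Iterator, Optional, Sequence

# Hypothetical representation: one feature vector per video frame.
Frame = Sequence[float]


def translate_stream(
    frames: Iterable[Frame],
    classify: Callable[[Frame], str],
    window: int = 5,
    min_votes: int = 3,
) -> Iterator[str]:
    """Yield a label each time the majority label over recent frames changes.

    Majority voting over a sliding window is an assumed smoothing strategy;
    it suppresses single-frame misclassifications at the cost of some latency.
    """
    recent: deque = deque(maxlen=window)  # last `window` per-frame labels
    last_emitted: Optional[str] = None
    for frame in frames:
        recent.append(classify(frame))
        label, votes = Counter(recent).most_common(1)[0]
        # Emit only when the label is well supported and differs from the
        # label emitted most recently.
        if votes >= min_votes and label != last_emitted:
            last_emitted = label
            yield label


def toy_classifier(frame: Frame) -> str:
    # Stand-in for a trained model: thresholds the first feature value.
    return "HELLO" if frame[0] < 0.5 else "THANKS"


if __name__ == "__main__":
    # Four "HELLO" frames, two noisy frames, then five "THANKS" frames.
    frames = [[0.1]] * 4 + [[0.9]] + [[0.1]] + [[0.9]] * 5
    print(list(translate_stream(frames, toy_classifier)))
    # prints ['HELLO', 'THANKS']
```

In this sketch a larger `window` makes the output steadier but slower to react, which is the kind of trade-off the testing and improvement phase would need to tune.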