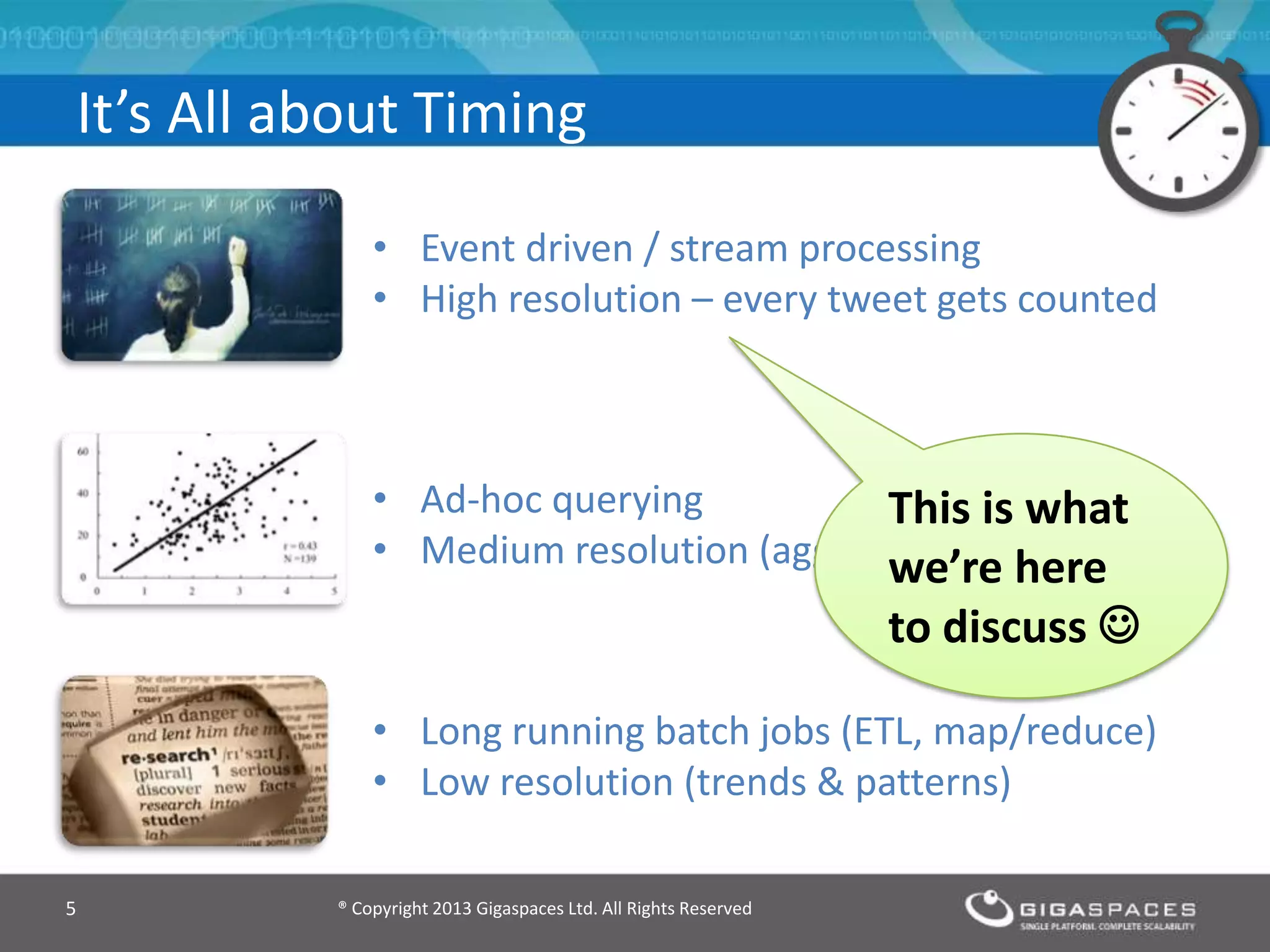

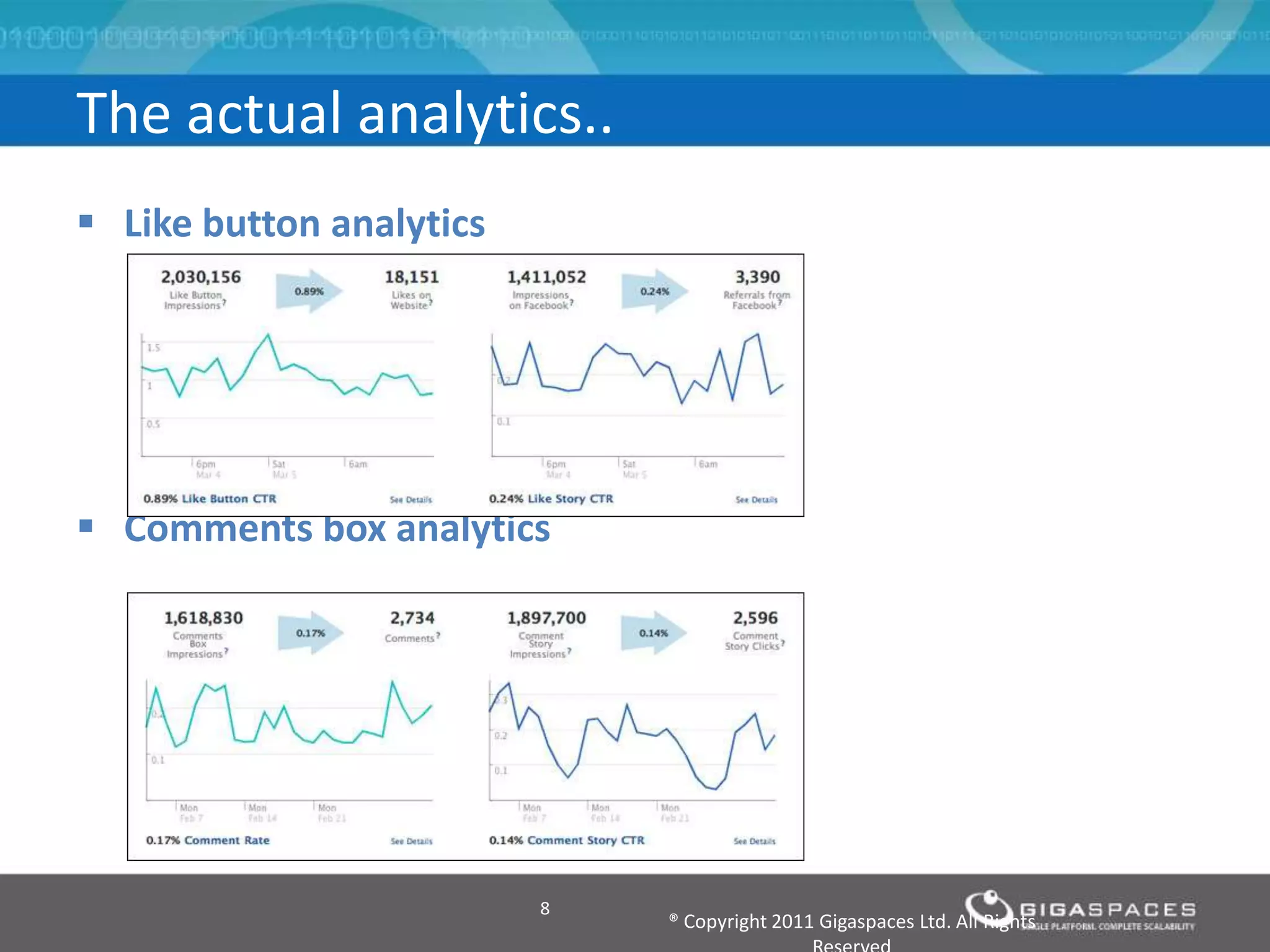

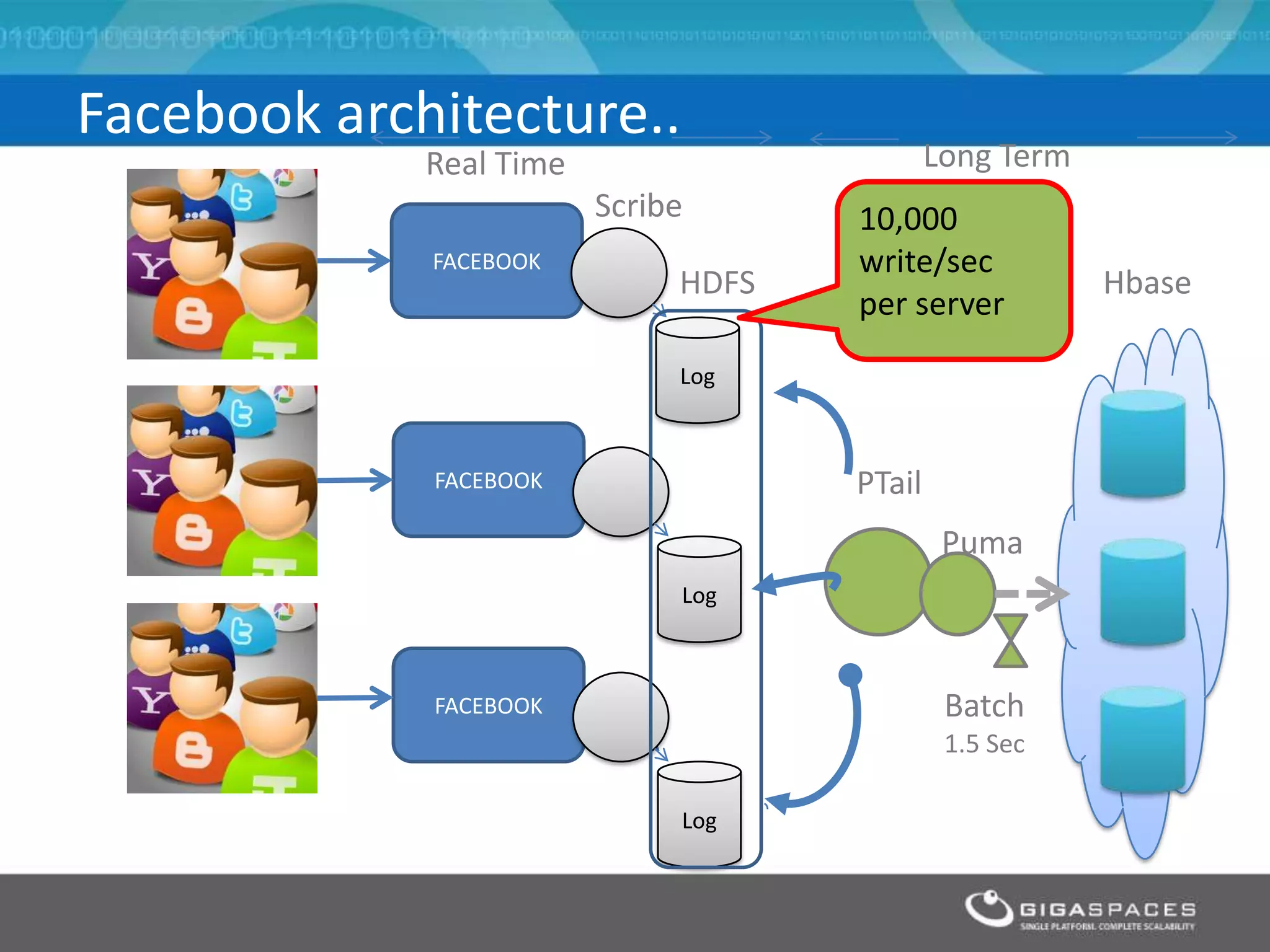

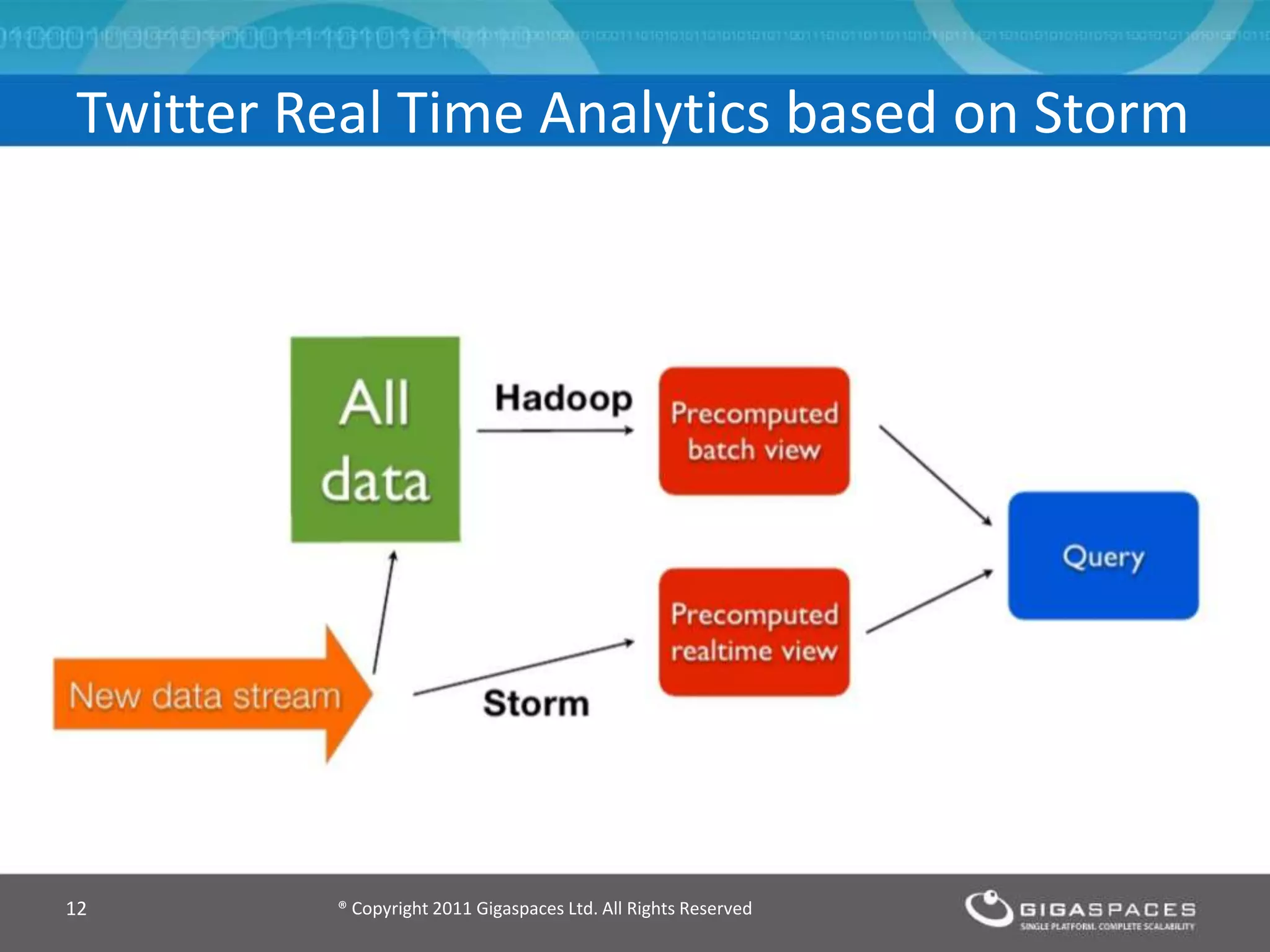

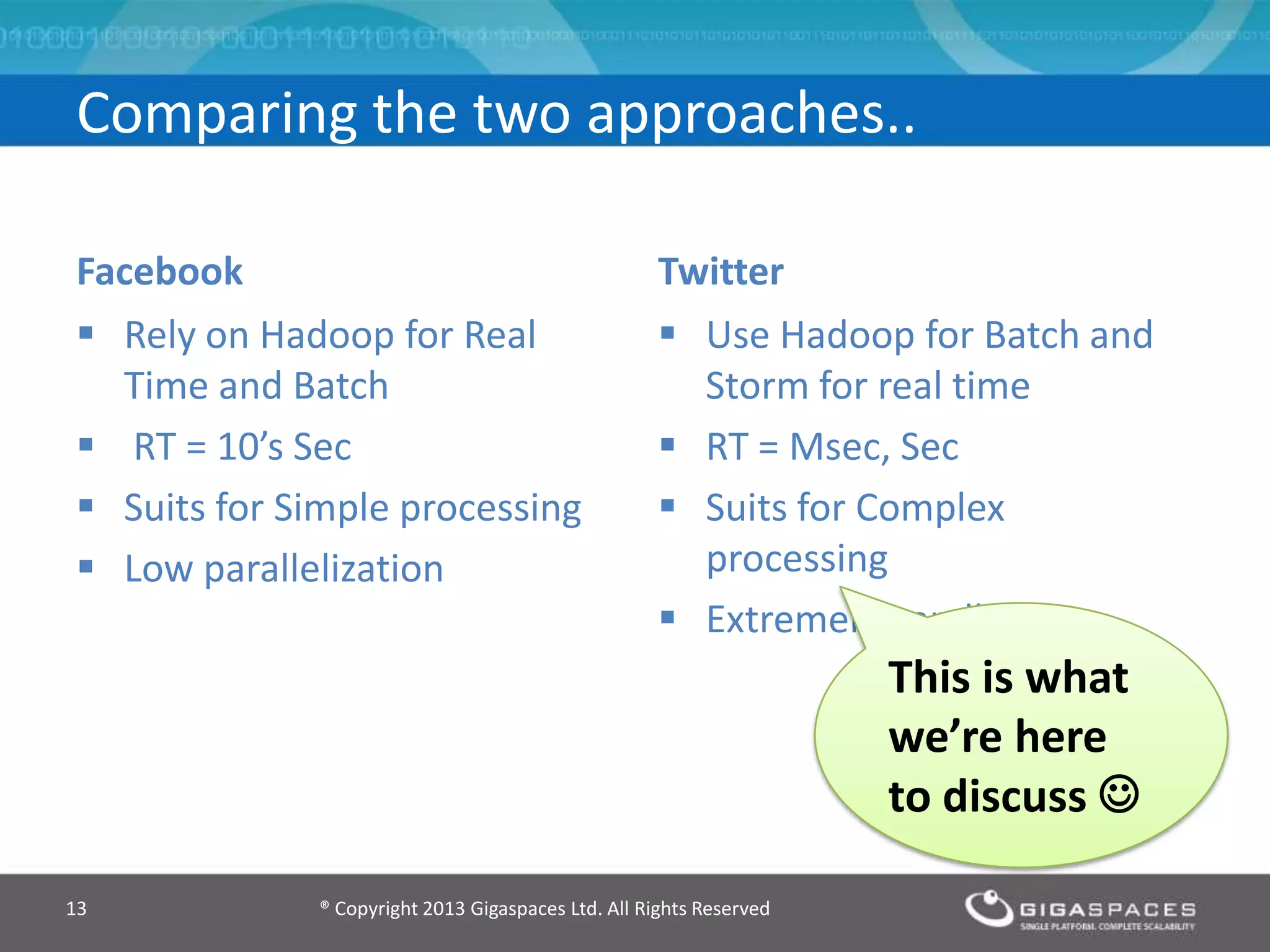

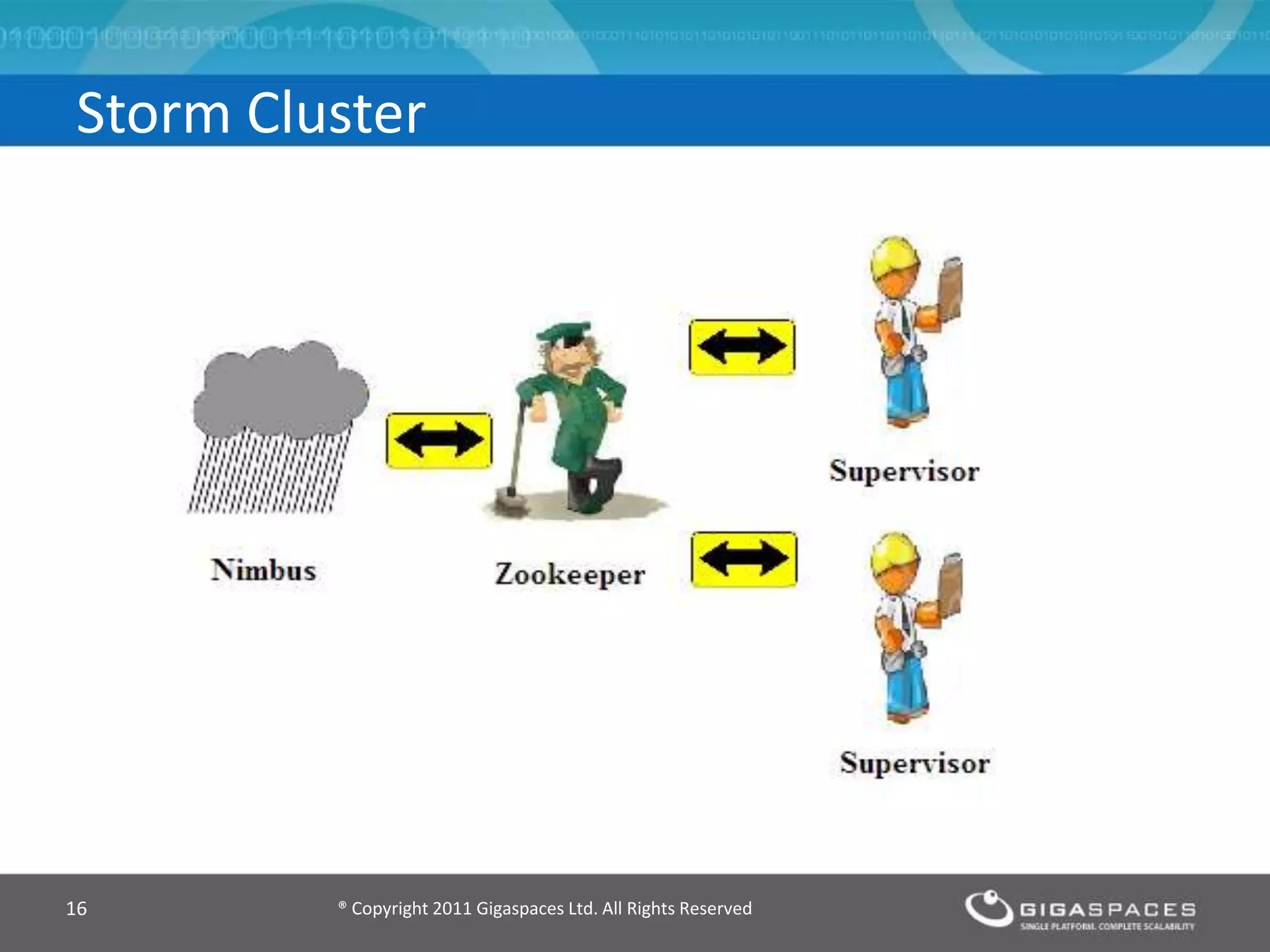

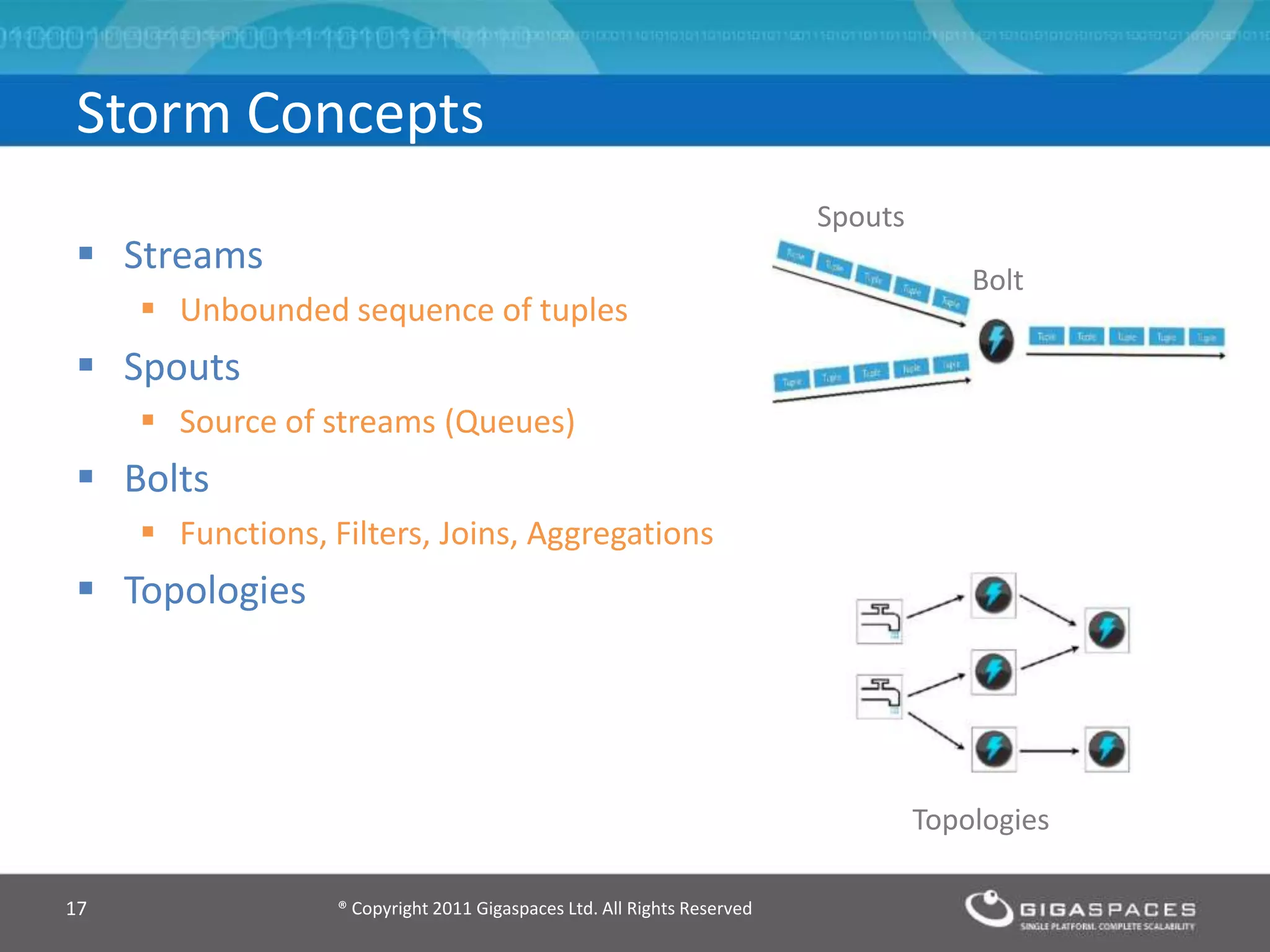

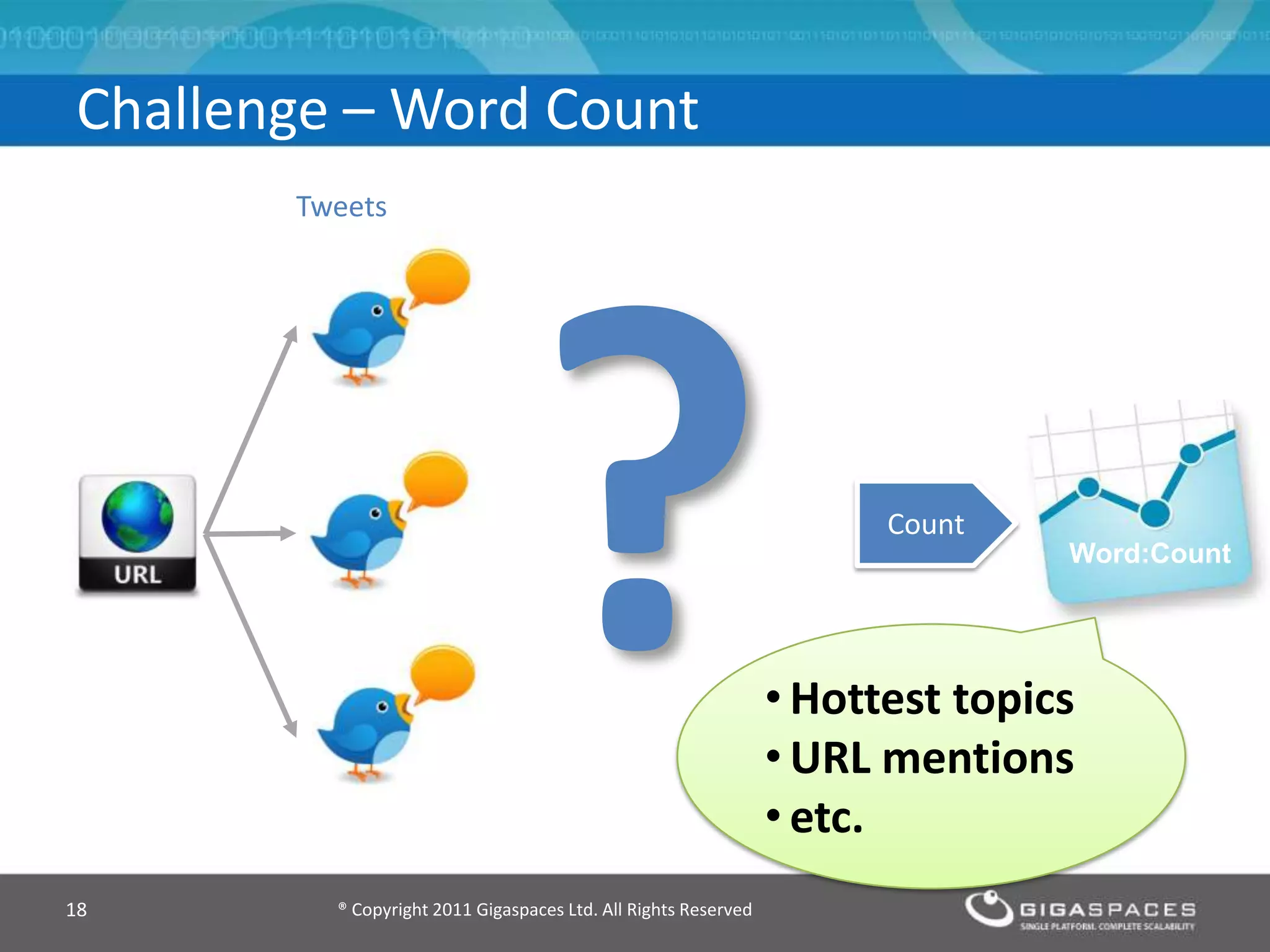

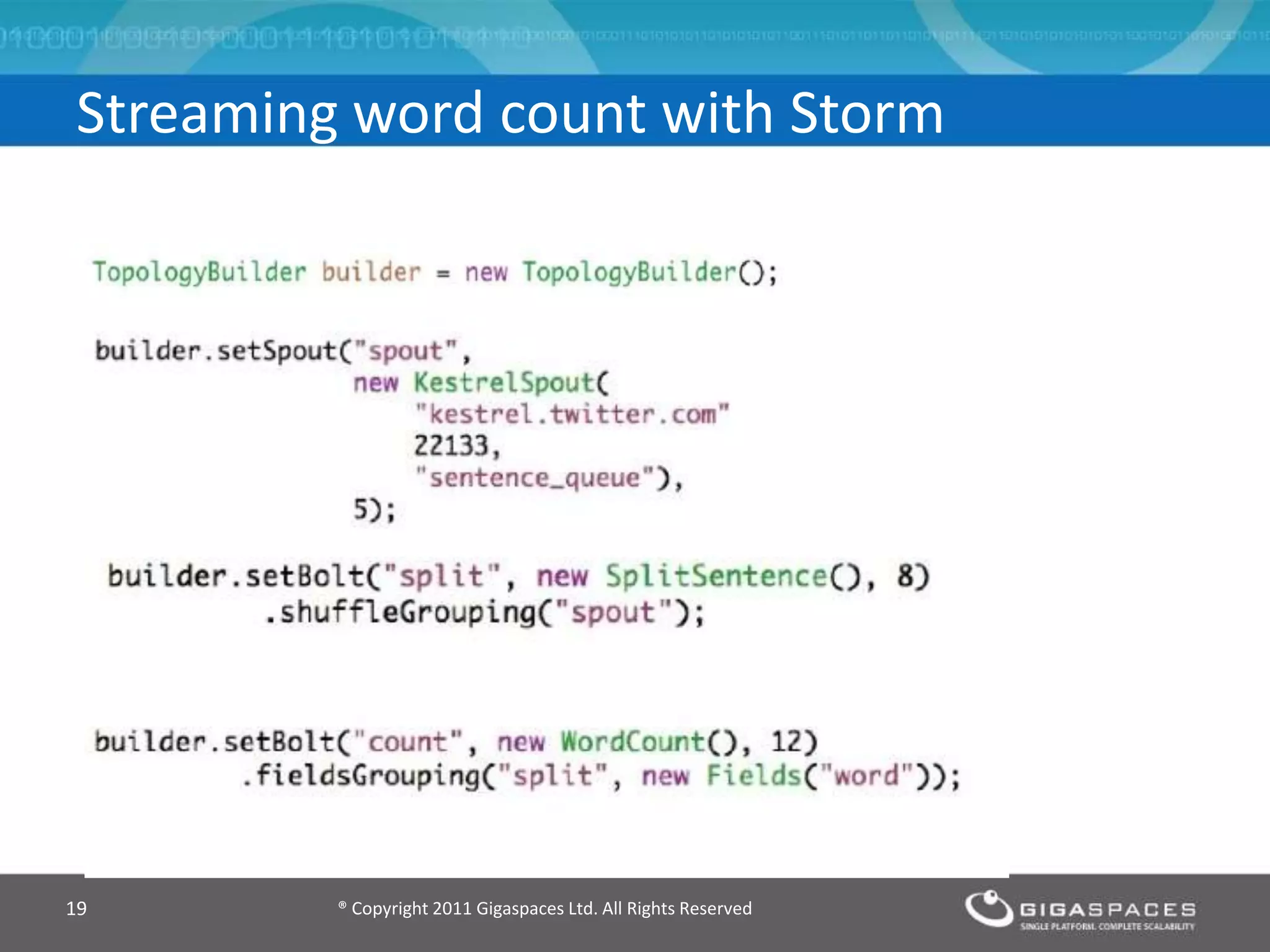

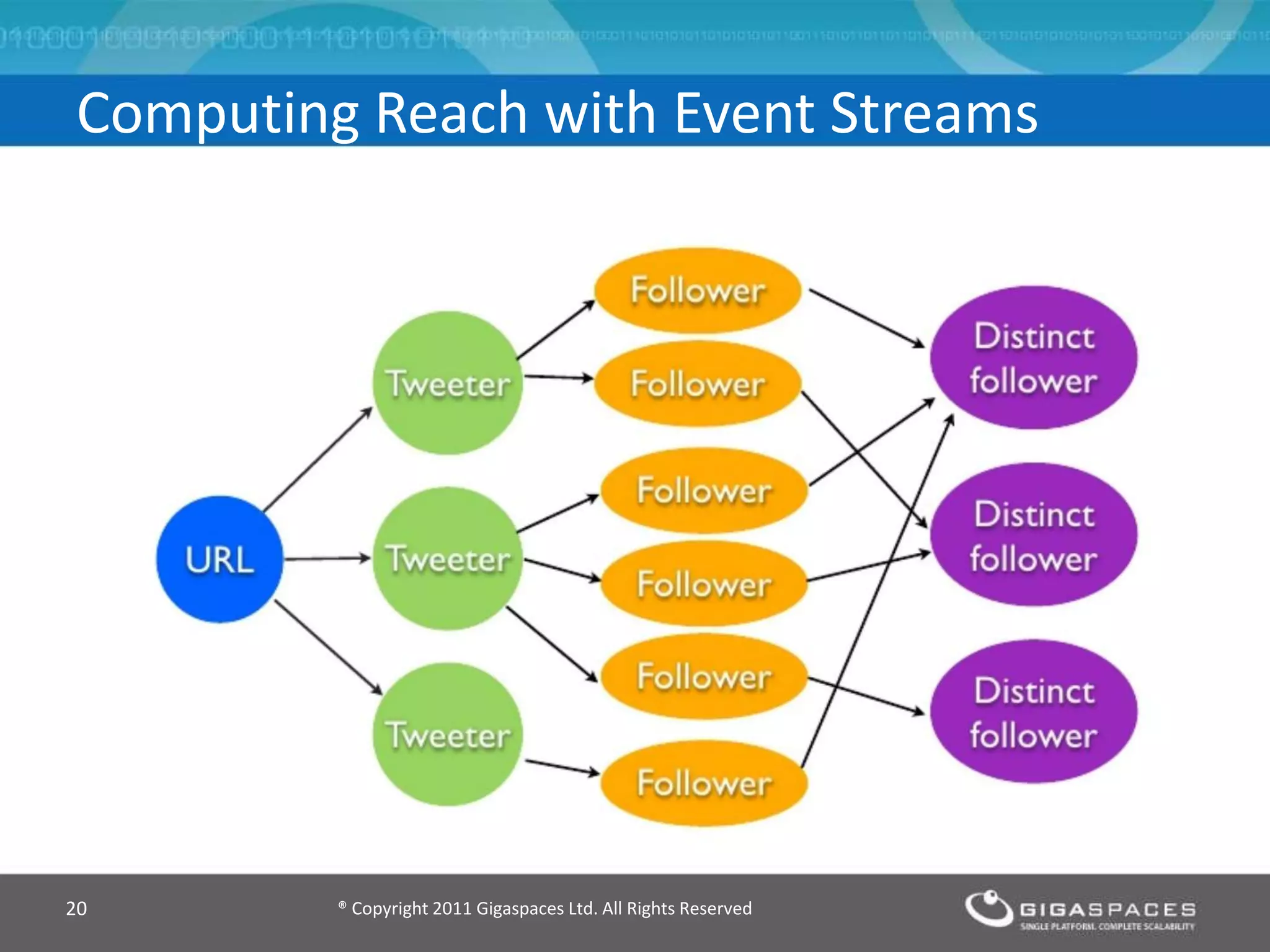

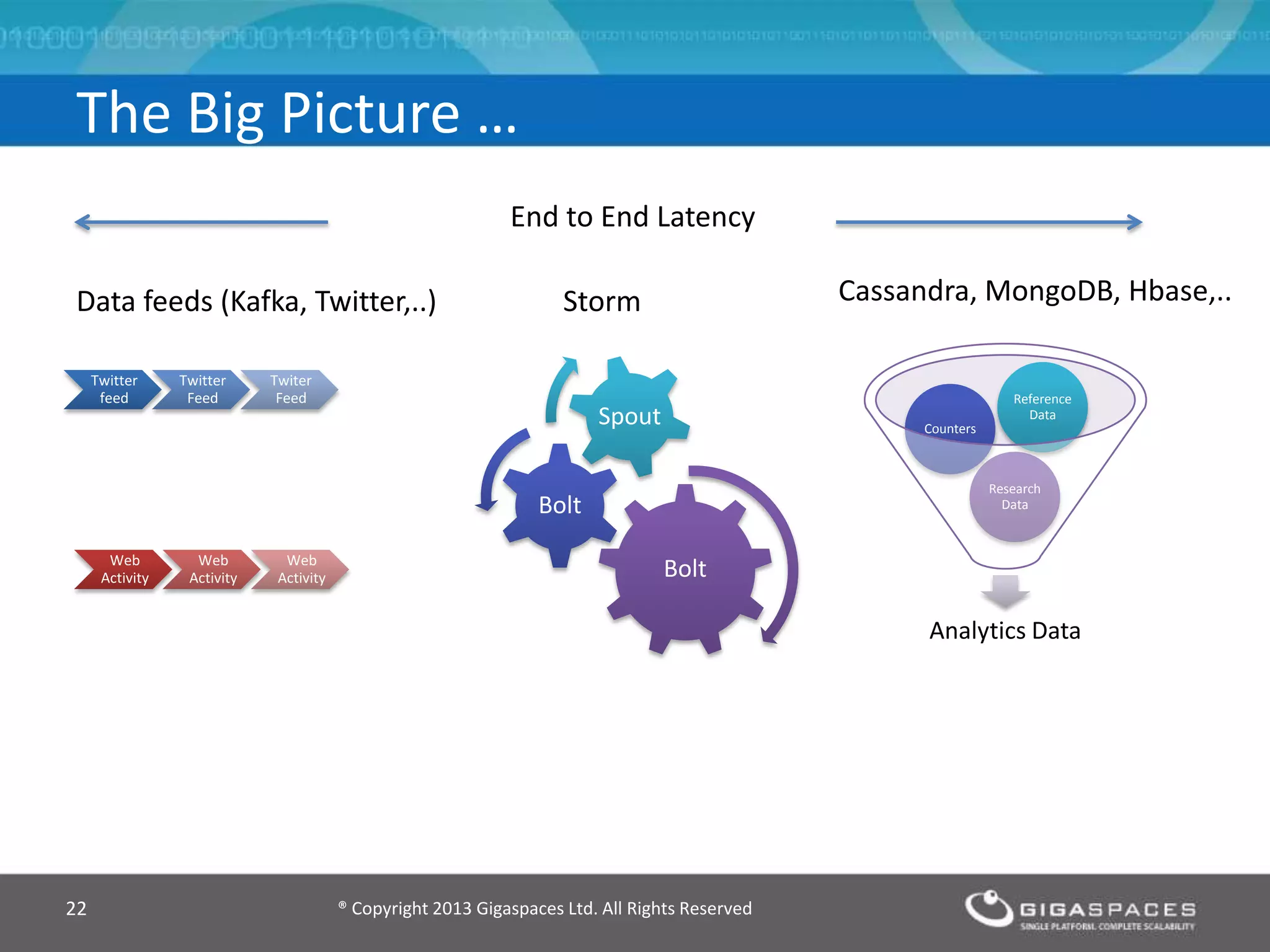

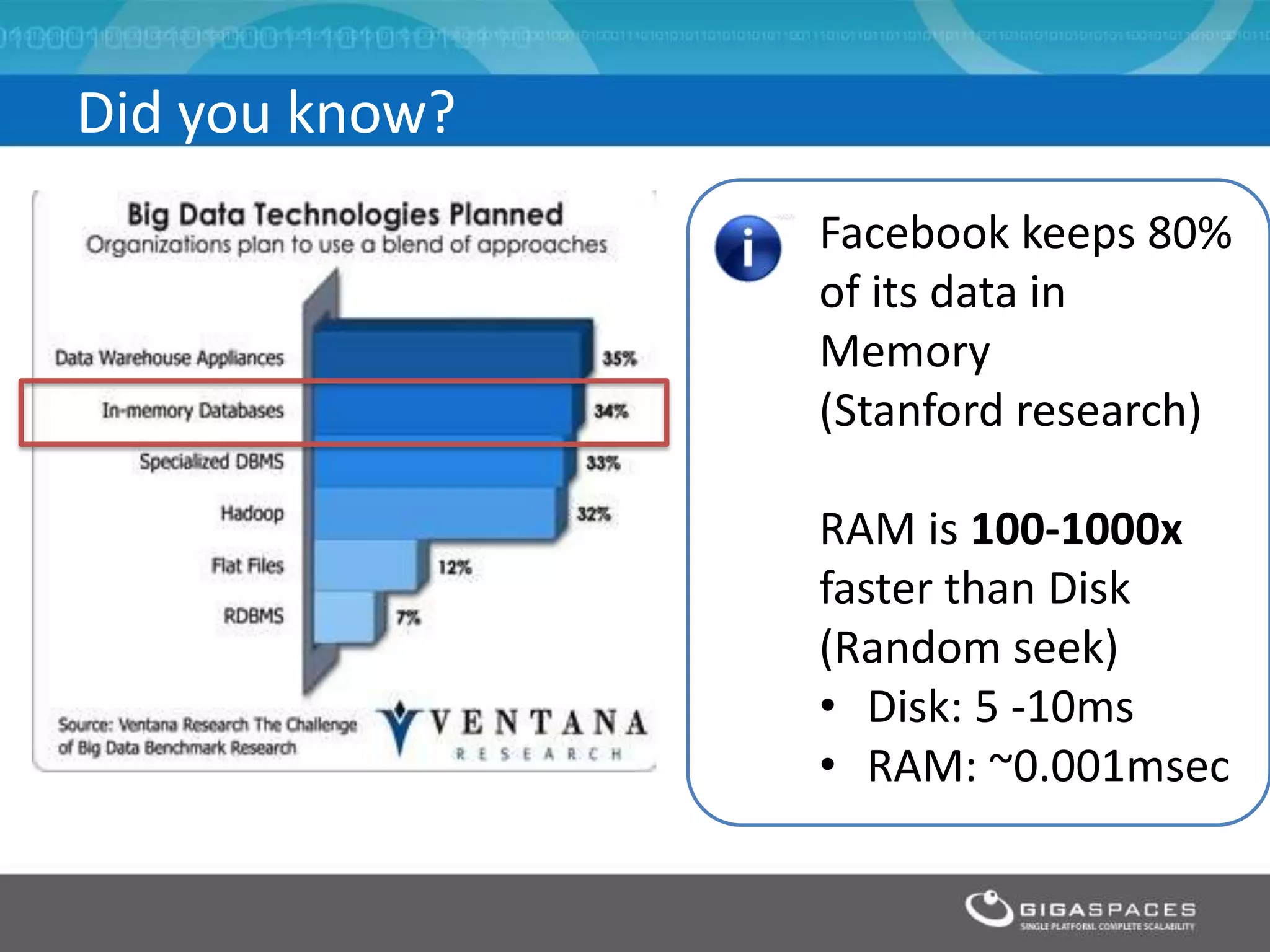

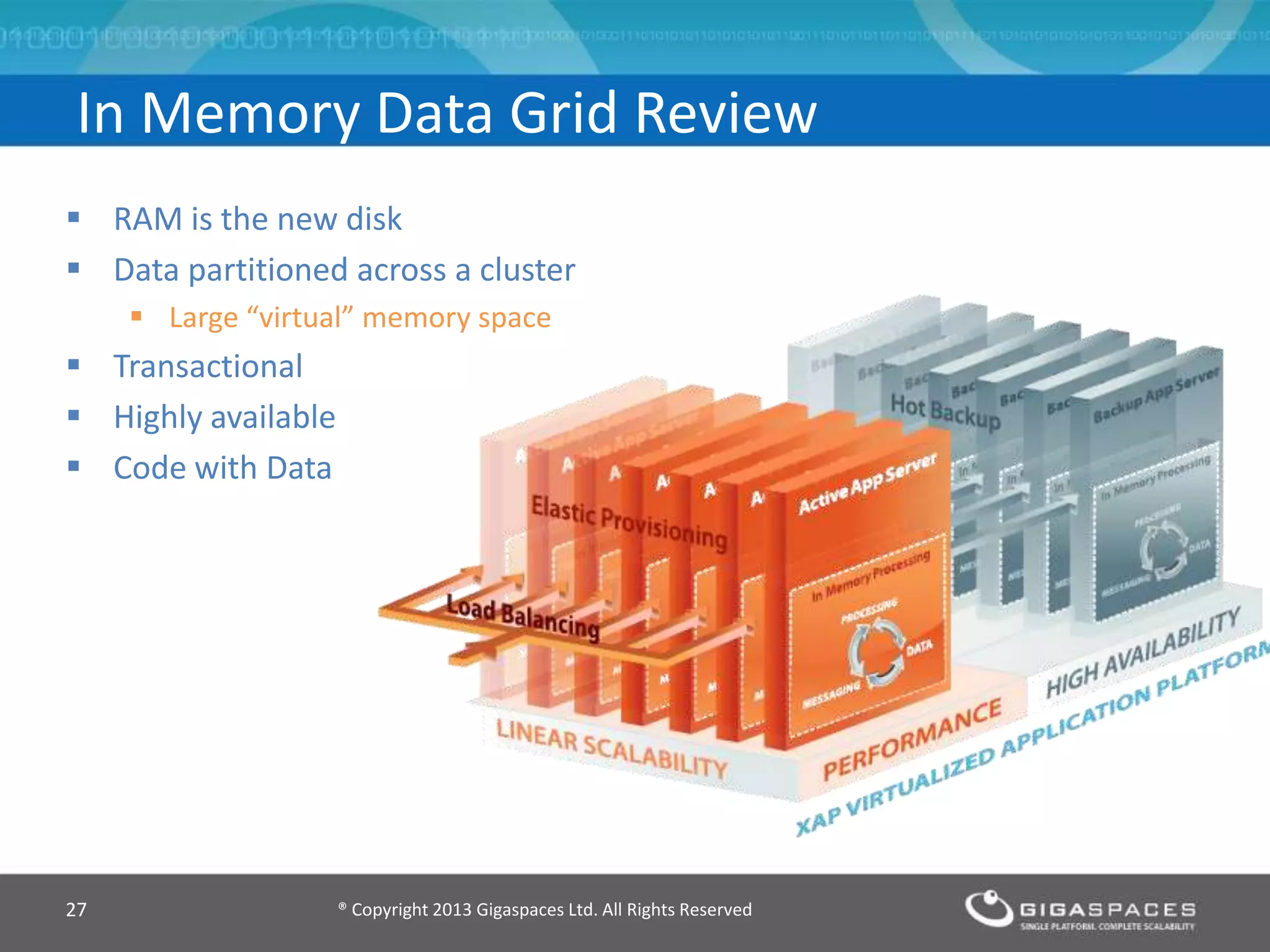

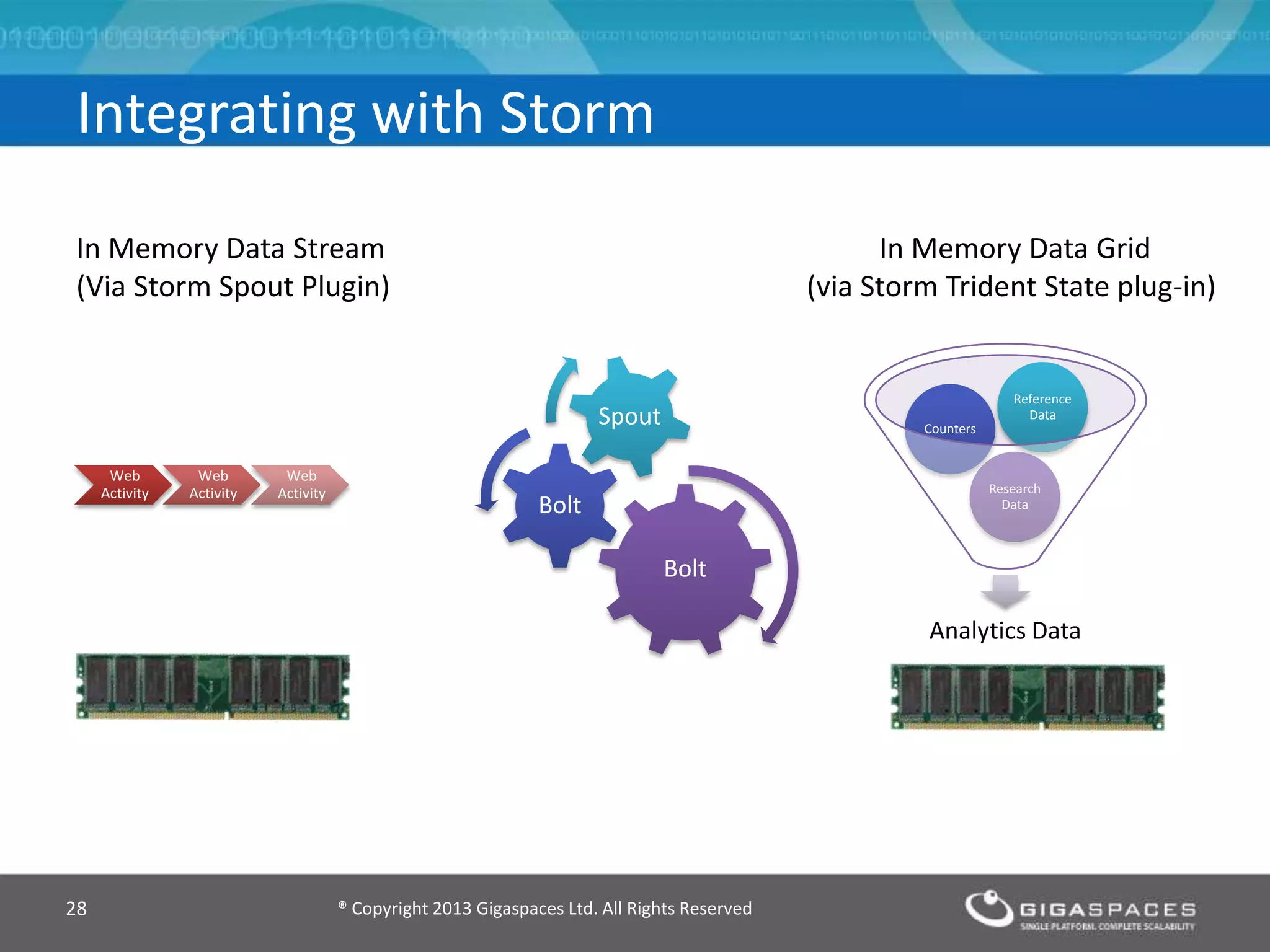

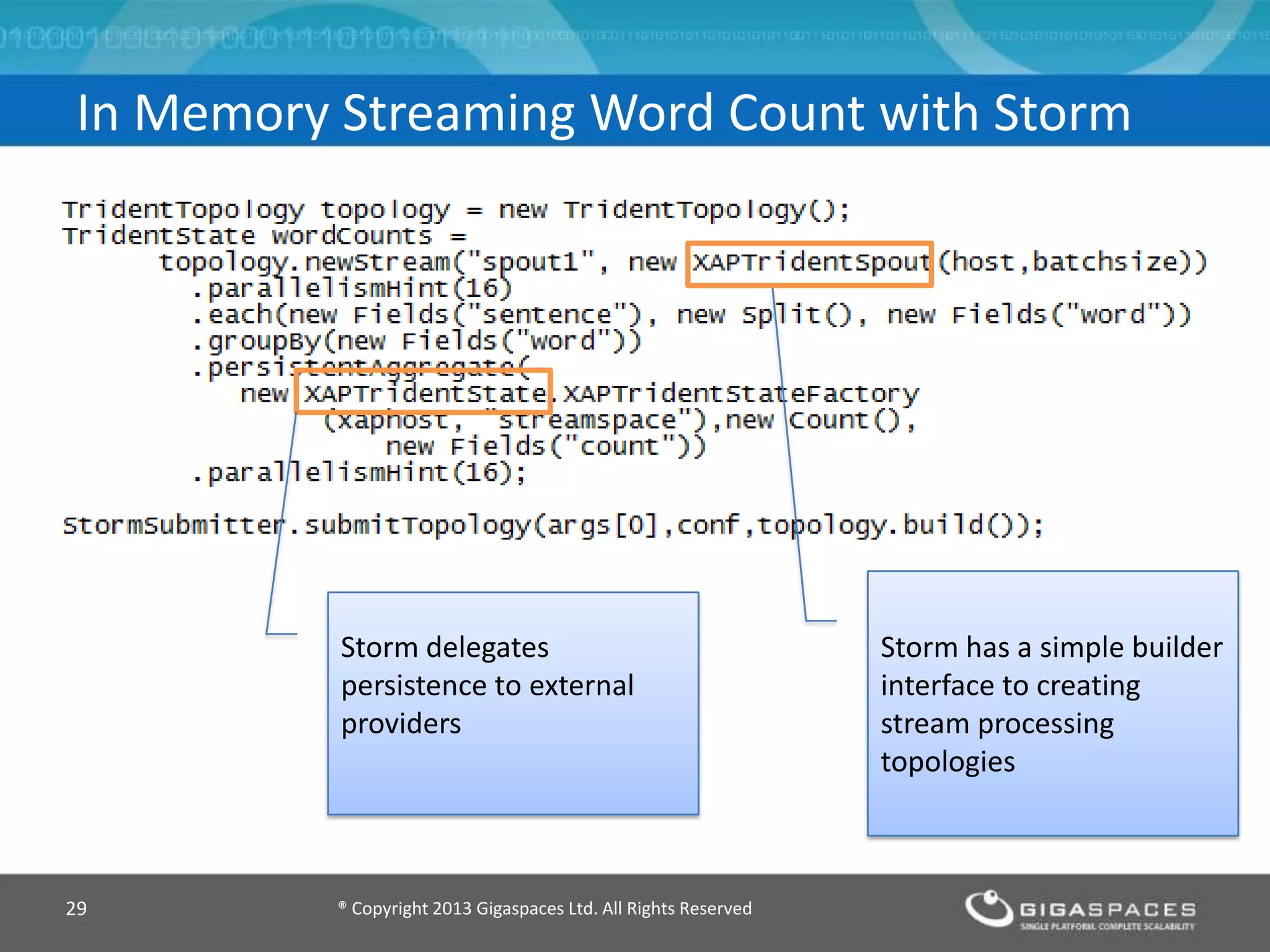

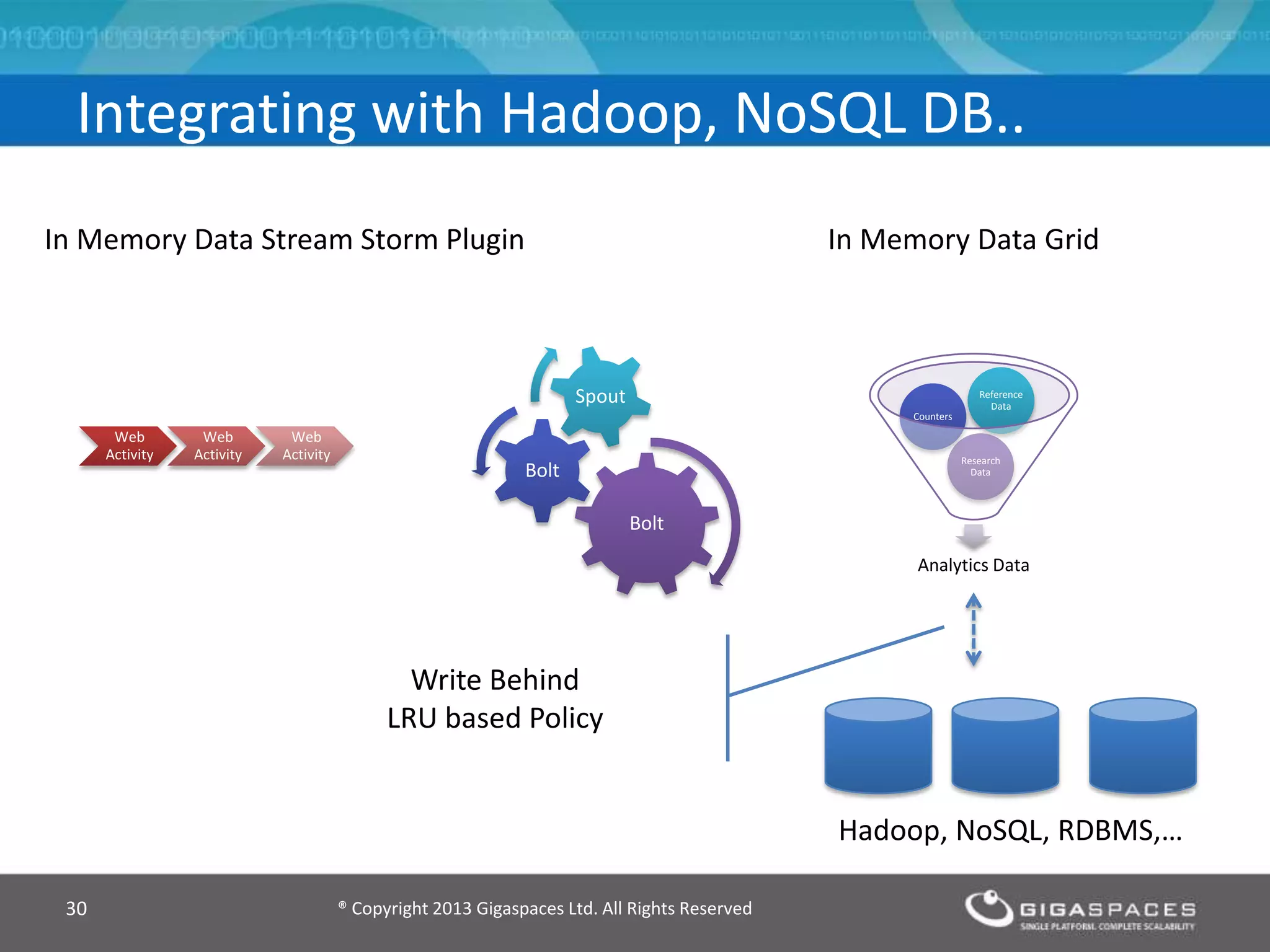

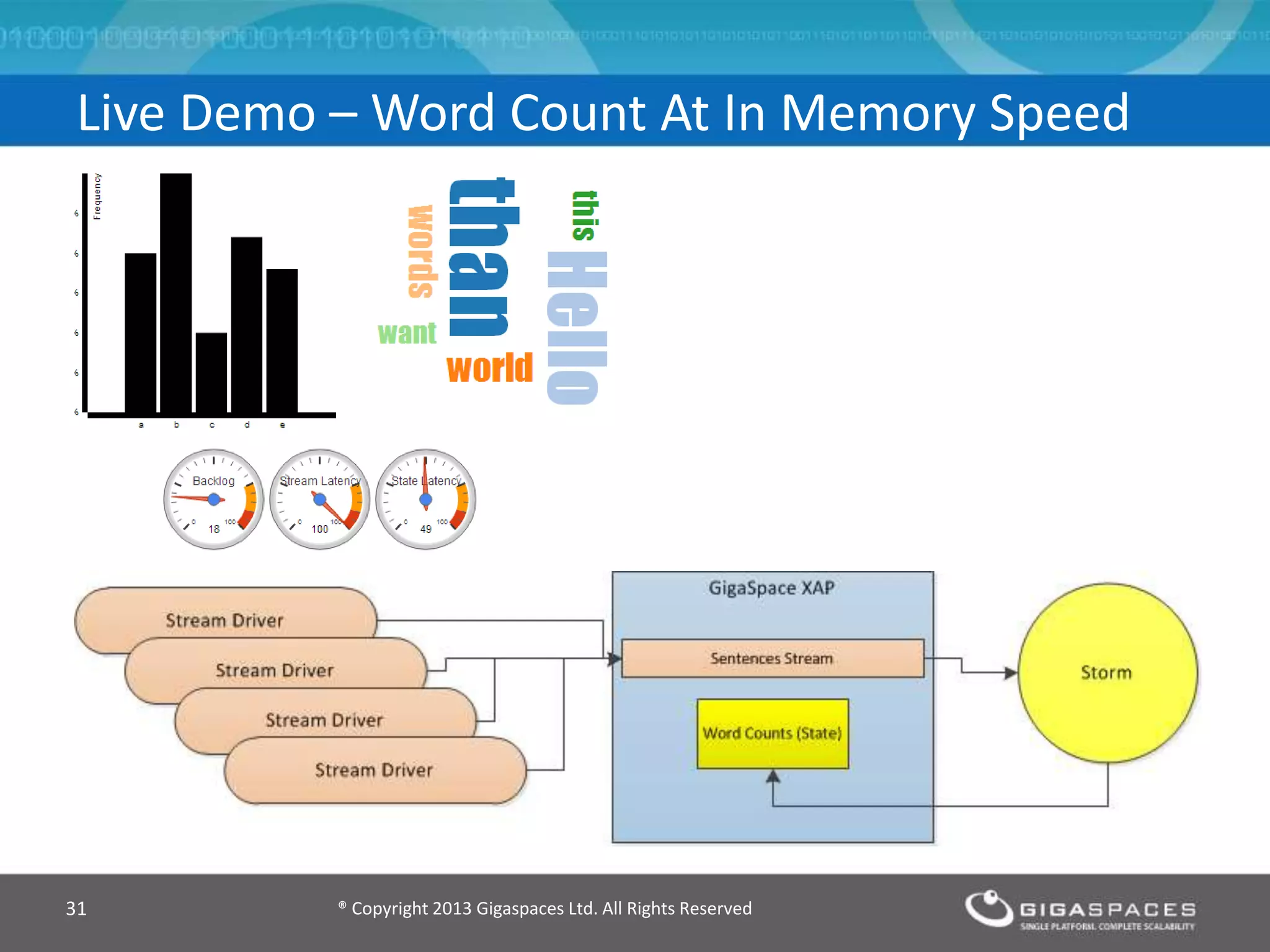

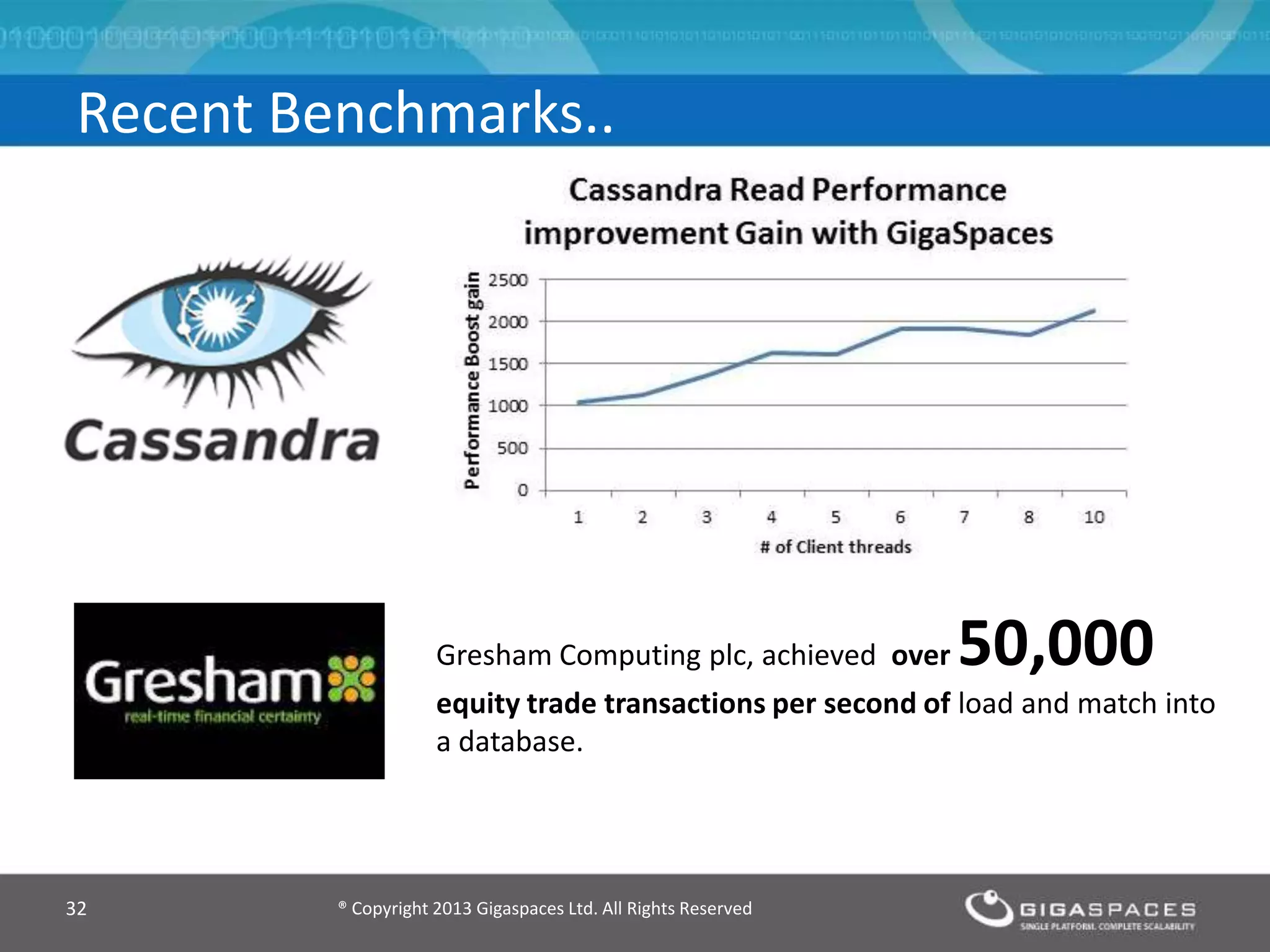

The document discusses real-time big data analytics using Storm, Cassandra, and in-memory computing, highlighting applications in fields such as social media and financial services. It contrasts the real-time analytics approaches of Facebook and Twitter, emphasizing Storm's capacity for high-speed processing of streaming data. It also presents the advantages of in-memory data processing and explores how Storm integrates with different data sources and storage systems.