Qm0021 statistical process control

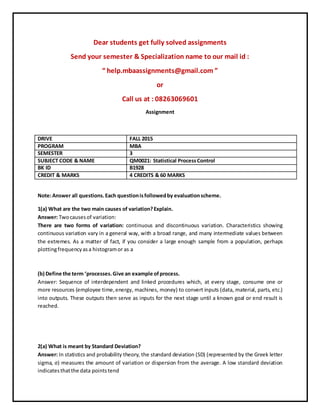

Dear students, get fully solved assignments. Send your semester and specialization name to our mail ID, help.mbaassignments@gmail.com, or call us at 08263069601.

Recommended

Qm0021 statistical process control

Dear students get fully solved assignments by professionals

Send your semester & Specialization name to our mail id :

stuffstudy5@gmail.com

or

call us at : 098153-33456

Qm0021 statistical process control

Dear students get fully solved assignments

Send your semester & Specialization name to our mail id :

“ help.mbaassignments@gmail.com ”

or

Call us at : 08263069601

Parametric estimation of construction cost using combined bootstrap and regre...

The document discusses a method for estimating construction costs using a combined bootstrap and regression technique. It involves using historical project data to develop a regression model relating cost to key parameters. A bootstrap resampling method is then used to generate multiple simulated datasets from the original. Regression analysis is performed on each resampled dataset to calculate coefficients and develop a cost range estimate that captures uncertainty. This allows integrating probabilistic and parametric estimation methods while requiring fewer assumptions than traditional statistical techniques. The goal is to provide more accurate conceptual cost estimates early in projects when design information is limited.

Quantitative Risk Assessment - Road Development Perspective

This document outlines an approach for quantitative risk assessment in road transport infrastructure projects using stochastic analysis with triangular distributions. It discusses determining the combined influence of parameters like project cost and traffic on economic indicators. Traditionally, risks from cost and traffic changing from base cases are analyzed separately using triangular distributions defined by minimum, most likely and maximum limits. The document proposes a method to analyze the combined influence of both parameters varying simultaneously using bivariate distributions and conditional probabilities.

Experimental design

This document provides an overview of experimental design, response surface analysis, and optimization. It discusses key terminology like factors, responses, and treatments. Full factorial designs are explained as a way to systematically vary factor levels to estimate main and interaction effects. Response surfaces and metamodels like regression analysis are introduced as tools to approximate simulation responses. Response surface methodology is outlined as a sequential approach combining metamodeling and optimization. Examples help illustrate concepts like estimating effects from a full factorial design. Situations with many factors and terminology in experimental design are also covered at a high level.

Managerial Economics (Chapter 5 - Demand Estimation)

This document provides an overview of demand estimation and regression analysis. It discusses how demand estimation is an essential process that informs various business decisions. Regression analysis uses statistical techniques to model the relationship between a dependent variable (e.g. demand) and independent variables (e.g. price, income). Simple regression uses one independent variable, while multiple regression uses more variables. Ordinary least squares is used to estimate the coefficients in the regression equation. These coefficients represent the impact of each independent variable on demand and can be used to forecast demand under different scenarios.

The Sample Average Approximation Method for Stochastic Programs with Integer ...

The document describes a sample average approximation method for solving stochastic programs with integer recourse. It approximates the expected recourse cost function using a sample average based on a sample of scenarios. It shows that as the sample size increases, the solution to the sample average approximation problem converges exponentially fast to the optimal solution of the true stochastic program. It also describes statistical and deterministic techniques for validating candidate solutions. Preliminary computational results applying this method are also mentioned.

Adaptive response surface by kriging using pilot points for structural reliab...

Structural reliability analysis aims to compute the probability of failure by considering system uncertainties. However, this approach may require very time-consuming computation and becomes impracticable for complex structures, especially when complex computer analysis and simulation codes such as the finite element method are involved. Approximation methods are widely used to build simplified approximations, or metamodels, that provide a surrogate model of the original codes. The most popular surrogate model is the response surface methodology, which typically employs a second-order polynomial approximation using least-squares regression techniques. Several authors have used response surface methods in reliability analysis. However, another approximation method, based on the kriging approach, has been successfully applied in the field of deterministic optimization. Few studies have treated the use of kriging approximation in reliability analysis and reliability-based design optimization. In this paper, the kriging approximation is used as an alternative to the traditional response surface method to approximate the performance function of the reliability analysis. The main objective of this work is to develop an efficient global approximation while controlling the computational cost and maintaining accurate prediction. A pilot point method is proposed for the kriging approximation in order to increase its prior predictivity; the pilot points are good candidates for numerical simulation. In other words, the predictive quality of the initial kriging approximation is improved by adding adaptive information called “pilot points” in areas where the kriging variance is maximum. This methodology allows for efficient modeling of highly non-linear responses, while the number of simulations is reduced compared to the Latin hypercube approach. Numerical examples show the efficiency and the interest of the proposed method.

IJPR (2015) A Distance-based Methodology for Increased Extraction Of Informat...

Nicky Campbell-Allen

This document describes a new methodology for incorporating information from the roof matrices in Quality Function Deployment (QFD) studies. The roof matrices contain correlations between customer requirements (voice of customers) and technical characteristics, but existing methods for including this information in QFD analyses have limitations. The proposed new methodology uses the Manhattan Distance Measure to integrate roof matrix correlation data into the final weightings of technical characteristics. This provides a more consistent way to select technical characteristics by identifying those that are negatively or positively correlated. The methodology is demonstrated using a published QFD case study.

The Use of ARCH and GARCH Models for Estimating and Forecasting Volatility-ru...

This document discusses volatility modeling using ARCH and GARCH models. It first provides background on ARCH and GARCH models, noting they were developed to model characteristics of financial time series data like volatility clustering and fat tails. It then describes the specific ARCH and GARCH models that will be used in the study, including the ARCH, GARCH, EGARCH, GJR, APARCH, IGARCH, FIGARCH and FIAPARCH models. The document aims to apply these models to daily stock index data from the IMKB 100 to analyze and forecast volatility, and better understand risk in the Turkish market.

Lecture slides stats1.13.l20.air

This document summarizes a lecture on binary logistic regression. It begins with an overview of binary logistic regression, noting that it is used to predict a binary categorical outcome variable from predictor variables that may be continuous or categorical. The second segment provides an example using data from mock jury research, with the outcome being a death penalty verdict and predictors being jurors' beliefs. Key outputs of binary logistic regression are explained such as regression coefficients, odds ratios, Wald tests, and measures of model fit and classification success.

Study on Evaluation of Venture Capital Based on Interactive Projection Algorithm

International Journal of Business and Management Invention (IJBMI) is an international journal intended for professionals and researchers in all fields of Business and Management. IJBMI publishes research articles and reviews across the whole field of Business and Management, including new teaching methods, assessment, validation, and the impact of new technologies, and it will continue to provide information on the latest trends and developments in this ever-expanding subject. Papers are selected for publication through double peer review to ensure originality, relevance, and readability. The articles published in the journal can be accessed online.

PRIORITIZING THE BANKING SERVICE QUALITY OF DIFFERENT BRANCHES USING FACTOR A...

In recent years, India’s service industry has been developing rapidly. The objective of the study is to explore the dimensions of customer-perceived service quality in the context of the Indian banking industry. In order to categorize customer needs into quality dimensions, Factor Analysis (FA) has been carried out on customer responses obtained through a questionnaire survey. The Analytic Hierarchy Process (AHP) is employed to determine the weights of the banking service quality dimensions. The priority structure of the quality dimensions gives bank management a basis for allocating resources effectively to achieve greater customer satisfaction. The Technique for Order of Preference by Similarity to Ideal Solution (TOPSIS) is used to obtain the final ranking of the different branches.

Real time clustering of time series

This document discusses methods for clustering time series data in a way that allows the cluster structure to change over time. It begins by introducing the problem and defining relevant terms. It then provides spectral clustering as a preliminary benchmark approach before exploring an alternative method using triangular potentials within a graphical model framework. The document presents the proposed method and provides illustrative examples and discussion of extensions.

GARCH

1) This paper proposes an adaptive quasi-maximum likelihood estimation approach for GARCH models when the distribution of volatility data is unspecified or heavy-tailed.

2) The approach works by using a scale parameter ηf to identify the discrepancy between the wrongly specified innovation density and the true innovation density.

3) Simulation studies and an application show that the adaptive approach gains better efficiency compared to other methods, especially when the innovation error is heavy-tailed.

essay

1) The document analyzes the relationship between stock-commodity correlation and business cycles from 1991-2014 using regression analysis. It finds the stock-commodity correlation is positively related to periods of economic weakness, as evidenced by a positive relationship with default spread.

2) Regression models show stock-commodity correlation is serially correlated and has a negative relationship with default spread, indicating higher correlation during recessions. However, the effect of real GDP growth and inflation on correlation is unclear.

3) In conclusion, the findings are consistent with prior research that stock-commodity correlation increases during economic downturns, when firms adjust behaviors and investor pessimism rises.

cian mcdonnell- aluminium prices fyp

This document discusses time series analysis and forecasting of aluminium prices from January 2012 to December 2015. It begins with an introduction to time series concepts and components. It then examines using multiple linear regression and Box-Jenkins methods to model and forecast aluminium prices. Regression analysis found aluminium futures prices highly correlated with prices, but production was not significant. Box-Jenkins is discussed as flexible but identification techniques are difficult and long-term forecasts become straight lines. The document aims to accurately model and forecast future aluminium prices.

A Fuzzy Arithmetic Approach for Perishable Items in Discounted Entropic Order...

This paper applies a fuzzy arithmetic approach to the system cost for perishable items with instant deterioration in the discounted entropic order quantity model. The traditional crisp system cost overlooks the fact that some costs may be uncertain, so it is necessary to extend the system cost to treat vague costs as well. We introduce a new concept which we call entropy and show that the total payoff satisfies the optimization property. We show how special cases of this problem reduce to perfect results, and how the post-deteriorated discounted entropic order quantity model is a generalization of optimization. The model is demonstrated by analysis, which reveals important characteristics of the discounted structure. Further numerical experiments are conducted to evaluate the relative performance of the fuzzy and crisp cases in EnOQ and EOQ separately.

[M3A4] Data Analysis and Interpretation Specialization

- The document discusses testing a logistic regression model with a binary response variable (trouble paying attention in school) and multiple explanatory variables using data from the AddHealth dataset.

- A logistic regression model is created with "NOBREAKFAST" as the single explanatory variable, finding students with no breakfast are 1.37 times more likely to have trouble paying attention.

- A second model adds the variable "ENOUGHSLEEP", finding enough sleep reduces the likelihood by a factor of 0.44.

- A third full model is created to check for confounding, but findings remain consistent with no breakfast increasing the likelihood of trouble paying attention.

Qm0022 tqm tools and techniques

Dear students get fully solved assignments

Send your semester & Specialization name to our mail id :

“ help.mbaassignments@gmail.com ”

or

Call us at : 08263069601

Qm0019 foundations of quality management

This document provides information about getting fully solved assignments for the MBA semester 3 subject Foundations of Quality Management. It includes details like the semester, subject code, credit hours, and contact information to email or call for assistance. The document then provides a sample assignment question and answers related to topics in quality management, including definitions of total quality management, contributions of quality gurus, quality policy and objectives, quality audits, productivity, knowledge management, quality awards, and types of quality costs.

Presentatie proversie

A shortened version of the presentation given at the Stigas meeting on 17 November 2015 in Utrecht.

Virus y fraudes

The document discusses different types of viruses, fraud, and threats to computer security. It defines terms such as antivirus, encryption, cybercrime, cracker, email, backups, firewall, hacker, hacktivism, keylogger, phishing, ransomware, rogueware, spam, worm, malware, software, spyware, identity theft, Trojan, virus, vulnerabilities, and malicious websites.

Megharaja_Pedde_Delphi Developer 5+YearExp

This document is a resume for Megharaja Pedde seeking a software development position. It summarizes his objective, qualifications, experience, skills, education and technical projects. He has over 4 years of experience in software development using technologies like Delphi, Oracle and SQL. His most recent role is as an Associate Project at Cognizant developing applications like Stanchion for inventory management and sales tracking.

越南的中秋节 - Vietnam Moon Festival

Homework from my Mandarin class.

A brief introduction to Vietnamese culture, specifically the Vietnam Moon Festival, a national occasion.

Through this project, learners of Chinese will hopefully gain a better understanding of the Vietnam Moon Festival.

Rock shop

The document discusses the benefits of exercise for mental health. Regular physical activity can help reduce anxiety and depression and improve mood and cognitive functioning. Exercise causes chemical changes in the brain that may help protect against mental illness and improve symptoms.

Bab 2

The document discusses the benefits of exercise for both physical and mental health. Regular exercise can improve cardiovascular health, reduce symptoms of depression and anxiety, enhance mood, and boost brain health. Staying physically active aims to reap these rewards by incorporating exercise into a daily routine.

More Related Content

What's hot

IJPR (2015) A Distance-based Methodology for Increased Extraction Of Informat...

IJPR (2015) A Distance-based Methodology for Increased Extraction Of Informat...Nicky Campbell-Allen

This document describes a new methodology for incorporating information from the roof matrices in Quality Function Deployment (QFD) studies. The roof matrices contain correlations between customer requirements (voice of customers) and technical characteristics, but existing methods for including this information in QFD analyses have limitations. The proposed new methodology uses the Manhattan Distance Measure to integrate roof matrix correlation data into the final weightings of technical characteristics. This provides a more consistent way to select technical characteristics by identifying those that are negatively or positively correlated. The methodology is demonstrated using a published QFD case study.The Use of ARCH and GARCH Models for Estimating and Forecasting Volatility-ru...

This document discusses volatility modeling using ARCH and GARCH models. It first provides background on ARCH and GARCH models, noting they were developed to model characteristics of financial time series data like volatility clustering and fat tails. It then describes the specific ARCH and GARCH models that will be used in the study, including the ARCH, GARCH, EGARCH, GJR, APARCH, IGARCH, FIGARCH and FIAPARCH models. The document aims to apply these models to daily stock index data from the IMKB 100 to analyze and forecast volatility, and better understand risk in the Turkish market.

Lecture slides stats1.13.l20.air

This document summarizes a lecture on binary logistic regression. It begins with an overview of binary logistic regression, noting that it is used to predict a binary categorical outcome variable from predictor variables that may be continuous or categorical. The second segment provides an example using data from mock jury research, with the outcome being a death penalty verdict and predictors being jurors' beliefs. Key outputs of binary logistic regression are explained such as regression coefficients, odds ratios, Wald tests, and measures of model fit and classification success.

Study on Evaluation of Venture Capital Based onInteractive Projection Algorithm

International Journal of Business and Management Invention (IJBMI) is an international journal intended for professionals and researchers in all fields of Business and Management. IJBMI publishes research articles and reviews within the whole field Business and Management, new teaching methods, assessment, validation and the impact of new technologies and it will continue to provide information on the latest trends and developments in this ever-expanding subject. The publications of papers are selected through double peer reviewed to ensure originality, relevance, and readability. The articles published in our journal can be accessed online.

PRIORITIZING THE BANKING SERVICE QUALITY OF DIFFERENT BRANCHES USING FACTOR A...

In recent years, India’s service industry is developing rapidly. The objective of the study is to explore the

dimensions of customer perceived service quality in the context of the Indian banking industry. In order to

categorize the customer needs into quality dimensions, Factor analysis (FA) has been carried out on

customer responses obtained through questionnaire survey. Analytic Hierarchy Process (AHP) is employed

to determine the weights of the banking service quality dimensions. The priority structure of the quality

dimensions provides an idea for the Banking management to allocate the resources in an effective manner

to achieve more customer satisfaction. Technique for Order Preference Similarity to Ideal Solution

(TOPSIS) is used to obtain final ranking of different branches.

Real time clustering of time series

This document discusses methods for clustering time series data in a way that allows the cluster structure to change over time. It begins by introducing the problem and defining relevant terms. It then provides spectral clustering as a preliminary benchmark approach before exploring an alternative method using triangular potentials within a graphical model framework. The document presents the proposed method and provides illustrative examples and discussion of extensions.

GARCH

1) This paper proposes an adaptive quasi-maximum likelihood estimation approach for GARCH models when the distribution of volatility data is unspecified or heavy-tailed.

2) The approach works by using a scale parameter ηf to identify the discrepancy between the wrongly specified innovation density and the true innovation density.

3) Simulation studies and an application show that the adaptive approach gains better efficiency compared to other methods, especially when the innovation error is heavy-tailed.

essay

1) The document analyzes the relationship between stock-commodity correlation and business cycles from 1991-2014 using regression analysis. It finds the stock-commodity correlation is positively related to periods of economic weakness, as evidenced by a positive relationship with default spread.

2) Regression models show stock-commodity correlation is serially correlated and has a negative relationship with default spread, indicating higher correlation during recessions. However, the effect of real GDP growth and inflation on correlation is unclear.

3) In conclusion, the findings are consistent with prior research that stock-commodity correlation increases during economic downturns, when firms adjust behaviors and investor pessimism rises.

cian mcdonnell- aluminium prices fyp

This document discusses time series analysis and forecasting of aluminium prices from January 2012 to December 2015. It begins with an introduction to time series concepts and components. It then examines using multiple linear regression and Box-Jenkins methods to model and forecast aluminium prices. Regression analysis found aluminium futures prices highly correlated with prices, but production was not significant. Box-Jenkins is discussed as flexible but identification techniques are difficult and long-term forecasts become straight lines. The document aims to accurately model and forecast future aluminium prices.

A Fuzzy Arithmetic Approach for Perishable Items in Discounted Entropic Order...

This paper uses fuzzy arithmetic approach to the system cost for perishable items with instant deterioration for the discounted entropic order quantity model. Traditional crisp system cost observes that some costs may belong to the uncertain factors. It is necessary to extend the system cost to treat also the vague costs. We introduce a new concept which we call entropy and show that the total payoff satisfies the optimization property. We show how special case of this problem reduce to perfect results, and how post deteriorated discounted entropic order quantity model is a generalization of optimization. It has been imperative to demonstrate this model by analysis, which reveals important characteristics of discounted structure. Further numerical experiments are conducted to evaluate the relative performance between the fuzzy and crisp cases in EnOQ and EOQ separately.

[M3A4] Data Analysis and Interpretation Specialization![[M3A4] Data Analysis and Interpretation Specialization](data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7)

![[M3A4] Data Analysis and Interpretation Specialization](data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7)

- The document discusses testing a logistic regression model with a binary response variable (trouble paying attention in school) and multiple explanatory variables using data from the AddHealth dataset.

- A logistic regression model is created with "NOBREAKFAST" as the single explanatory variable, finding students with no breakfast are 1.37 times more likely to have trouble paying attention.

- A second model adds the variable "ENOUGHSLEEP", finding enough sleep reduces the likelihood by a factor of 0.44.

- A third full model is created to check for confounding, but findings remain consistent with no breakfast increasing the likelihood of trouble paying attention.

What's hot (11)

IJPR (2015) A Distance-based Methodology for Increased Extraction Of Informat...

The Use of ARCH and GARCH Models for Estimating and Forecasting Volatility-ru...

Study on Evaluation of Venture Capital Based on Interactive Projection Algorithm

PRIORITIZING THE BANKING SERVICE QUALITY OF DIFFERENT BRANCHES USING FACTOR A...

A Fuzzy Arithmetic Approach for Perishable Items in Discounted Entropic Order...

[M3A4] Data Analysis and Interpretation Specialization

Viewers also liked

Qm0022 tqm tools and techniques

Dear students get fully solved assignments

Send your semester & Specialization name to our mail id :

“ help.mbaassignments@gmail.com ”

or

Call us at : 08263069601

Qm0019 foundations of quality management

This document provides information about getting fully solved assignments for the MBA semester 3 subject Foundations of Quality Management. It includes details like the semester, subject code, credit hours, and contact information to email or call for assistance. The document then provides a sample assignment question and answers related to topics in quality management, including definitions of total quality management, contributions of quality gurus, quality policy and objectives, quality audits, productivity, knowledge management, quality awards, and types of quality costs.

Presentatie proversie

A shortened version of the presentation given at the Stigas meeting on 17 November 2015 in Utrecht.

Virus y fraudes

The document discusses different types of viruses, fraud, and threats to computer security. It defines terms such as antivirus, encryption, cybercrime, cracker, email, backups, firewall, hacker, hacktivism, keylogger, phishing, ransomware, rogueware, spam, worm, malware, software, spyware, identity theft, trojan, virus, vulnerabilities, and malicious websites.

Megharaja_Pedde_Delphi Developer 5+YearExp

This document is a resume for Megharaja Pedde seeking a software development position. It summarizes his objective, qualifications, experience, skills, education and technical projects. He has over 4 years of experience in software development using technologies like Delphi, Oracle and SQL. His most recent role is as an Associate Project at Cognizant developing applications like Stanchion for inventory management and sales tracking.

越南的中秋节 - Vietnam Moon Festival

Homework from my Mandarin class.

A brief introduction to Vietnamese culture, specifically the Vietnam Moon Festival, a national festive occasion.

Through this project, Chinese-learning audiences may hopefully gain a better understanding of the Vietnam Moon Festival.

Rock shop

The document discusses the benefits of exercise for mental health. Regular physical activity can help reduce anxiety and depression and improve mood and cognitive functioning. Exercise causes chemical changes in the brain that may help protect against mental illness and improve symptoms.

Bab 2

The document discusses the benefits of exercise for both physical and mental health. Regular exercise can improve cardiovascular health, reduce symptoms of depression and anxiety, enhance mood, and boost brain health. Staying physically active aims to reap these rewards by incorporating exercise into a daily routine.

Application Whitelisting - Complementing Threat centric with Trust centric se...

This document discusses application whitelisting as a security control that can complement traditional threat-centric security approaches. It notes that application whitelisting works on a principle of default deny by only allowing approved applications to run, whereas traditional antivirus uses a default allow approach. The document outlines challenges with traditional antivirus, including its inability to keep up with the exponential growth of malware. It advocates for implementing application whitelisting to prevent both known and unknown threats from executing. Key considerations for implementation include scope, stakeholder engagement, approval processes, and change management. The document argues that application whitelisting can significantly reduce malware incidents when implemented effectively.

Jit y manufactura esbelta 3

It is a philosophy that defines how a production system should be optimized. It involves delivering raw materials or components to the production line so that they arrive “just in time”, as they are needed.

Jit y manufactura esbelta 5

It is a philosophy that defines how a production system should be optimized. It involves delivering raw materials or components to the production line so that they arrive “just in time”, as they are needed.

Cosmétologie : Les impacts des biotechnologies en cosmétique.

Journal "Pharmacien demain" no. 26 - ALEE-

Biotechnologies are industries that employ techniques using living organisms (micro-organisms, animals, or plants), generally after modification of their genetic characteristics, for the industrial manufacture of biological or chemical compounds such as medicines and industrial raw materials, or for the improvement of agricultural production, such as transgenic plants and/or animals, or GMOs (genetically modified organisms).

Biotechnology is thus used in the pharmaceutical, agricultural, and chemical industries, but also in the cosmetics industry.

Indeed, biotechnology is a new source of innovation for cosmetology. Even though it is still somewhat more expensive than techniques derived from conventional chemistry, biotechnology has been gaining momentum for about fifteen years.

As in the pharmaceutical industry, biotechnology is able to synthesize molecules that will potentially have transcutaneous biological activity, where heavy chemistry does not always succeed.

At Solabia, several dozen tonnes of molecules are manufactured each year at costs compatible with the market, using micro-organisms placed in a fermenter. This process is not yet affordable for manufacturing very large quantities of molecules intended for high-volume markets such as biofuels or chemicals, but it has a very promising future!

reseauprosante.fr

Jit y manufactura esbelta 4

It is a philosophy that defines how a production system should be optimized. It involves delivering raw materials or components to the production line so that they arrive “just in time”, as they are needed.

Bt0075, rdbms and my sql

This document provides instructions for students to obtain fully solved assignments. It tells students to send their semester and specialization name to the email address "help.mbaassignments@gmail.com" or call the phone number 08263069601 to receive solved assignments. It provides this contact information to help students complete their coursework.

Mit401 data warehousing and data mining

Dear students get fully solved assignments

Send your semester & Specialization name to our mail id :

help.mbaassignments@gmail.com

or

call us at : 08263069601

Mbf403 crm and it in banking

This document provides information about obtaining fully solved assignments for the SMU BBA Spring 2014 semester. Students are instructed to send their semester and specialization name to the provided email address or call the given phone number to receive assistance with assignments. Details are provided about available assignments in subjects like CRM and IT in Banking, SAP, business process reengineering, e-cheques in India, data mining applications in banks, and information system security requirements for banks.

Viewers also liked (20)

Application Whitelisting - Complementing Threat centric with Trust centric se...

Cosmétologie : Les impacts des biotechnologies en cosmétique.

Similar to Qm0021 statistical process control

Qm0021 statistical process control

Dear students get fully solved assignments

Send your semester & Specialization name to our mail id :

“ help.mbaassignments@gmail.com ”

or

Call us at : 08263069601

Operations Management VTU BE Mechanical 2015 Solved paper

The document provides information about operations management concepts including scientific management, productivity, ABC analysis, economic order quantity, and materials requirements planning. It defines each concept and provides examples to illustrate how they are applied. Scientific management aims to improve efficiency through systematic analysis of work processes. Productivity is a measure of output per unit of input. ABC analysis categorizes inventory items based on their value and usage to determine appropriate control methods. Economic order quantity and ordering cycle determine optimal replenishment amounts and frequencies to minimize total inventory costs. Materials requirements planning is a technique to plan material needs at different production levels based on a product structure tree.
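The economic order quantity mentioned in this summary is commonly computed with the classic square-root formula, Q* = sqrt(2DS/H). The sketch below illustrates that textbook formula only and is not taken from the solved paper. It assumes constant demand and no stock-outs, and the demand, ordering cost, and holding cost values are hypothetical.

```python
import math

def economic_order_quantity(annual_demand, order_cost, holding_cost):
    """Classic EOQ: Q* = sqrt(2 * D * S / H)."""
    if annual_demand <= 0 or order_cost <= 0 or holding_cost <= 0:
        raise ValueError("all inputs must be positive")
    return math.sqrt(2.0 * annual_demand * order_cost / holding_cost)

def total_annual_cost(quantity, annual_demand, order_cost, holding_cost):
    """Annual ordering cost plus annual holding cost for a given order size."""
    ordering = annual_demand / quantity * order_cost
    holding = quantity / 2.0 * holding_cost
    return ordering + holding

# Hypothetical inputs: units per year, cost per order, holding cost per unit per year.
D, S, H = 1200.0, 100.0, 6.0

q_star = economic_order_quantity(D, S, H)
orders_per_year = D / q_star            # ordering frequency
cost_at_q_star = total_annual_cost(q_star, D, S, H)
```

At the optimum the annual ordering cost equals the annual holding cost, which is why ordering either more or less than `q_star` raises the total.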

QM0012- STATISTICAL PROCESS CONTROL AND PROCESS CAPABILITY

This document provides information about a fully solved assignment for students. It lists the semester, specialization, subject code, name, credits, and marks. It also provides 6 questions related to statistical process control and process capability. For each question, it provides the evaluation scheme and space to write the answer. The questions cover topics like Pareto charts, scatter diagrams, Poisson distribution, hypothesis testing, analysis of variance, attribute control charts, and the methodology for statistical process control implementation.

CCP_SEC2_ Cost Estimating

A CCP is an experienced practitioner with advanced knowledge and technical expertise to apply the broad principles and best practices of Total Cost Management (TCM) in the planning, execution and management of any organizational project or program. CCPs also demonstrate the ability to research and communicate aspects of TCM principles and practices to all levels of project or program stakeholders, both internally and externally.

ENTROPY-COST RATIO MAXIMIZATION MODEL FOR EFFICIENT STOCK PORTFOLIO SELECTION...

This paper introduces a new stock portfolio selection model in a non-stochastic environment. Following the principle of maximum entropy, a new entropy-cost ratio function is introduced as the objective function. The uncertain returns, risks and dividends of the securities are considered as interval numbers. Along with the objective function, eight different types of constraints are used in the model to convert it into a pragmatic one. Three different models have been proposed by defining the future financial market optimistically, pessimistically and in the combined form to model the portfolio selection problem. To illustrate the effectiveness and tractability of the proposed models, these are tested on a set of data from Bombay Stock Exchange (BSE). The solution has been done by genetic algorithm.

An Empirical Investigation Of The Arbitrage Pricing Theory

The study empirically tests the Arbitrage Pricing Theory (APT) developed by Ross in 1976 using daily stock return data from 1962-1972. It finds:

1) Factor analysis identifies 5 factors that explain stock returns within industry groups, supporting the APT.

2) Cross-sectional regressions show factor loadings can explain expected stock returns, as the APT predicts.

3) Adding total return variance to the regressions does not eliminate the explanatory power of factor loadings, supporting the APT over alternatives.

4) Tests across industry groups find no evidence factor structures differ, as the APT assumes consistent factors across stocks.

Mb0040 statistics for management

Dear students get fully solved assignments

Send your semester & Specialization name to our mail id :

help.mbaassignments@gmail.com

or

call us at : 08263069601

Modeling & Simulation Lecture Notes

FellowBuddy.com is an innovative platform that brings students together to share notes, exam papers, study guides, project reports and presentation for upcoming exams.

We connect Students who have an understanding of course material with Students who need help.

Benefits:-

# Students can catch up on notes they missed because of an absence.

# Underachievers can find peer developed notes that break down lecture and study material in a way that they can understand

# Students can earn better grades, save time and study effectively

Our Vision & Mission – Simplifying Students Life

Our Belief – “The great breakthrough in your life comes when you realize it, that you can learn anything you need to learn; to accomplish any goal that you have set for yourself. This means there are no limits on what you can be, have or do.”

Like Us - https://www.facebook.com/FellowBuddycom

© Charles T. Diebold, Ph.D., 73013. All Rights Reserved. Pa.docx

© Charles T. Diebold, Ph.D., 7/30/13. All Rights Reserved. Page 1 of 21

Logistic Regression Tutorial:

RSCH-8250 Advanced Quantitative Reasoning

Charles T. Diebold, Ph.D.

July 30, 2013

How to cite this document:

Diebold, C. T. (2013, July 30). Logistic regression tutorial: RSCH-8250 advanced quantitative reasoning.

Available from [email protected]

Table of Contents

Assignment and Tutorial Introduction

Specific Assignment Instructions & Expectations

Example APA Table

SPSS Assignment Screenshots and Output

Becoming Familiar with the Variables

Descriptive Statistics: Dichotomous & Categorical Variables

Descriptive Statistics: Metric Variables

Binary Logistic Regression

Sorting by Participant Number Prior to Analysis

Screenshots Specifying the Logistic Regression

Logistic Regression Assignment Output

References and Recommended Reading

Appendix: Example of Additional APA Tables for Logistic Regression

Odds Ratio Tutorial:

RSCH-8250 Advanced Quantitative Reasoning

Assignment and Tutorial Introduction

This tutorial is intended to assist RSCH-8250 students in completing the Week 9 application assignment. I recommend that you use this tutorial as your first line of instruction on producing the needed output and on what to focus on from the output. You should also re-review my odds ratio tutorial from last week.

In the textbook’s companion website, Field provides elaborate interpretation of key parts of the output that I recommend you study even though some of his output is inconsistent with the hierarchical logistic that .

Techniques in marketing research

This document provides an overview and categorization of various marketing research techniques. It separates the techniques into mature techniques that have been used for some time, such as correlation analysis and regression analysis, and modern techniques that are newer, such as decision trees, dynamic programming, and technological forecasting. For several of the techniques, a brief explanation of the approach is given. The overall purpose is to familiarize management with the key research tools used by researchers.

dimensional_analysis.pptx

The document discusses dimensional analysis and Buckingham Pi theorem. It begins by defining dimensions, units, and fundamental vs. derived dimensions. It then discusses dimensional homogeneity and uses examples to show how dimensional analysis can be used to identify non-dimensional parameters and reduce the number of variables in equations. The Buckingham Pi theorem is introduced as a method to systematically create dimensionless pi terms from physical variables. Steps of the theorem and examples applying it are provided. Overall, the document provides an overview of dimensional analysis and Buckingham Pi theorem as tools for understanding relationships between physical quantities and reducing complexity in experimental modeling.

Qm0012 statistical process control and process capability

This document provides fully solved assignments for the MBA semester 3 course "QM0012 - Statistical Process Control and Process Capability". It includes 6 questions related to statistical process control, cause and effect diagrams, control charts, experimental design, process capability, and acceptance sampling. Students can send their semester and specialization details to the provided email or call the phone number to receive the solved assignments. The questions cover topics such as the meaning and differences between statistical quality control and statistical process control, explaining the structure and construction of control charts with examples, guidelines for experimental design and acceptance sampling, and more.

Demand forecasting

1. Demand forecasting is used to estimate future demand for products over specific time periods and is important for planning operations.

2. Demand can be categorized by the type of goods (consumer vs capital) and time period (short, medium, long term). Quantitative forecasting techniques include trend projection methods like time series analysis and regression.

3. Techniques like ARIMA combine moving averages and autoregressive methods to model trends and differences in time series data. Regression analysis uses statistical methods to model relationships between demand and influencing factors.

Week08.pdf

This document discusses static and dynamic models, deterministic and stochastic models, and various methods for studying systems with uncertainty. Deterministic models use differential equations to exactly predict outcomes, while stochastic models use random variables and can only compute probabilities. Numerical methods and simulation are introduced as ways to study more complex systems. Simulation models represent real systems and allow experiments to be performed faster and safer. Monte Carlo methods and discrete event simulation are discussed as techniques for simulation.

www.elsevier.comlocatecompstrucComputers and Structures .docx

www.elsevier.com/locate/compstruc

Computers and Structures 85 (2007) 235–243

On the treatment of uncertainties in structural mechanics and analysis q

G.I. Schuëller *

Institute of Engineering Mechanics, Leopold-Franzens University Innsbruck, Technikerstr. 13, 6020 Innsbruck, Austria

Received 9 August 2006; accepted 31 October 2006

Available online 22 December 2006

Abstract

In this paper the need for a rational treatment of uncertainties in structural mechanics and analysis is reasoned. It is shown that the traditional deterministic conception can be easily extended by applying statistical and probabilistic concepts. The so-called Monte Carlo simulation procedure is the key for those developments, as it allows the straightforward use of the currently used deterministic analysis procedures.

A numerical example exemplifies the methodology. It is concluded that uncertainty analysis may ensure robust predictions of variability, model verification, safety assessment, etc.

© 2006 Elsevier Ltd. All rights reserved.

Keywords: Uncertainty; Monte Carlo simulation; Finite elements; Response variability; Model verification; Robustness

1. Introduction

Structural mechanics analysis up to this date, generally is still based on a deterministic conception. Observed variations in loading conditions, material properties, geometry, etc. are taken into account by either selecting extremely high, low or average values, respectively, for representing the parameters. Hence, this way, uncertainties inherent in almost every analysis process are considered just intuitively.

Observations and measurements of physical processes, however, show not only variability, but also random characteristics. Statistical and probabilistic procedures provide a sound framework for a rational treatment of analysis of these uncertainties. Moreover there are various types of uncertainties to be dealt with. While the uncertainties in mechanical modeling can be reduced as additional knowledge becomes available, the physical or intrinsic uncertainties, e.g. of environmental loading, can not. Furthermore, the entire spectrum of uncertainties is also not known. In reality, neither the true model nor the model parameters are deterministically known. Assuming that by finite element (FE) procedures structures and continua can be represented reasonably well the question of the effect of the discretization still remains. It is generally expected, that an increase in the size of the structural models, in terms of degrees of freedom, will increase the level of realism of the model. Comparisons with measurements, however, clearly show that this expect.

0045-7949/$ - see front matter © 2006 Elsevier Ltd. All rights reserved.

doi:10.1016/j.compstruc.2006.10.009

q Plenary Keynote Lecture presented at the 3rd MIT Conference on Computational Fluid and Solid Mechanics, Boston, MA, USA, June 14–17, 2005.

* Tel.: +43 512 507 6841; fax: +43 512 507 2905.

E-mail address: [email protected]

revision-notes-introductory-econometrics-lecture-1-11.pdf

Econometrics uses economic theory and statistical tools to quantify economic relationships and answer questions about economic data. An econometric model includes both a systematic component based on economic theory and an error term that represents unpredictable factors. Most economic data comes from non-experimental sources and is in time-series, cross-sectional, or panel form. The goal of econometrics is statistical inference like estimating parameters, predicting outcomes, and testing hypotheses using sample data. Econometric models incorporate probability distributions, random variables, and concepts like the mean, variance, and normal distribution to analyze economic data statistically.

Kinetic bands versus Bollinger Bands

This research paper demonstrates the invention of the kinetic bands, based on Romanian mathematician and statistician Octav Onicescu’s kinetic energy, also known as “informational energy”, where we use historical data of foreign exchange currencies or indexes to predict the trend displayed by a stock or an index and whether it will go up or down in the future. Here, we explore the imperfections of the Bollinger Bands to determine a more sophisticated triplet of indicators that predict the future movement of prices in the Stock Market. An Extreme Gradient Boosting Modelling was conducted in Python using historical data set from Kaggle, the historical data set spanning all current 500 companies listed. An invariable importance feature was plotted. The results displayed that Kinetic Bands, derived from (KE) are very influential as features or technical indicators of stock market trends. Furthermore, experiments done through this invention provide tangible evidence of the empirical aspects of it. The machine learning code has low chances of error if all the proper procedures and coding are in play. The experiment samples are attached to this study for future references or scrutiny.

Homework 21. Complete Chapter 3, Problem #1 under Project.docx

Homework 2

1. Complete Chapter 3, Problem #1 under “Project: Statistical Analysis in Inverse Problems Using Simulated Data” on pages 58–59 of B&T. Use the same initial conditions as before from Chapter 2.

2. Consider the logistic population growth model

$\dot{x} = ax - bx^2, \quad x(0) = x_0.$

Let $K = a/b$. We will examine the model for the $q = (a, b, x_0)$ parameter vectors

(i) $q = (0.5, 0.1, 0.1) \Rightarrow K = 5$ (relatively flat curve),

(ii) $q = (0.7, 0.04, 0.1) \Rightarrow K = 17.5$ (moderately sloped curve),

(iii) $q = (0.8, 0.01, 0.1) \Rightarrow K = 80$ (relatively steep curve).

Define the regions $R_0$, $R_1$, and $R_2$ as follows:

• $R_0$ is the region where $t \in [0, 2]$,

• $R_1$ is the region where $t \in (2, 12]$,

• $R_2$ is the region where $t \in (12, 16]$.

For each parameter vector $q$:

(a) Let $n = 15$. For $i = 0$, sample $n$ points from region $R_i$, distributed uniformly over the interval. Find the $q_{OLS}$ optimized parameters for 3 different initial guesses that are far from the true solution. (You can use the same initial guesses for all regions and all $q$ parameter vectors). Calculate $J(q_{OLS})$ where $J$ is the cost function of the least squares criterion. Calculate $\hat{K} = \hat{a}/\hat{b}$. Include all results in a table.

For the optimal $q_{OLS}$ with the lowest cost $J(q_{OLS})$, plot the solution curve for the true solution and the estimated solution on the same plot with the sampled data points. How do the results compare to the true solution? Determine the standard errors and confidence intervals. Are the true parameters contained within the confidence interval?

Then repeat for $i = 1$. Then repeat for $i = 2$.

(b) Repeat problem (a) with $n = 50$.

(c/d) Repeat problems (a) and (b), but sampling from a uniform distribution over the entire region $t \in [0, 16]$ instead of a single $R_i$ region.

(e) When sampling from only region $R_i$, does increasing the sample size improve the results? How does this vary for $i = 0, 1, 2$? What if you sample over all three regions?
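The logistic model in problem 2 has a well-known closed-form solution, which makes it easy to check the stated values of K and to generate sample points from a single region. The sketch below is a starting point under stated assumptions, not the assignment's solution: the function names, the fixed random seed, and the choice to return noise-free model values are all hypothetical, and the least squares fit itself is left out.

```python
import math
import random

def carrying_capacity(a, b):
    """K = a / b, the limiting population of x' = a*x - b*x**2."""
    return a / b

def logistic_solution(t, a, b, x0):
    """Closed-form solution x(t) = K*x0 / (x0 + (K - x0) * exp(-a*t))."""
    K = carrying_capacity(a, b)
    return K * x0 / (x0 + (K - x0) * math.exp(-a * t))

# Time regions from the problem statement (endpoint openness is ignored here,
# since a continuous uniform draw hits an endpoint with probability zero).
REGIONS = {0: (0.0, 2.0), 1: (2.0, 12.0), 2: (12.0, 16.0)}

def sample_region(i, n, q, seed=0):
    """Draw n uniformly distributed times in region R_i and evaluate the model.

    Returns noise-free (t, x(t)) pairs; adding observation noise is left out.
    """
    rng = random.Random(seed)
    lo, hi = REGIONS[i]
    times = sorted(rng.uniform(lo, hi) for _ in range(n))
    a, b, x0 = q
    return [(t, logistic_solution(t, a, b, x0)) for t in times]

# The three parameter vectors q = (a, b, x0) from the problem statement.
Q_VECTORS = [(0.5, 0.1, 0.1), (0.7, 0.04, 0.1), (0.8, 0.01, 0.1)]
K_VALUES = [carrying_capacity(a, b) for a, b, _ in Q_VECTORS]

points_r0 = sample_region(0, 15, Q_VECTORS[0])
```

Sampling only from one region explores only part of the curve, which is presumably why the problem asks how estimates change across the three regions.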

MATHEMATICAL AND EXPERIMENTAL MODELING OF PHYSICAL AND BIOLOGICAL PROCESSES

TEXTBOOKS in MATHEMATICS

Series Editor: Denny Gulick

PUBLISHED TITLES

COMPLEX VARIABLES: A PHYSICAL APPROACH WITH APPLICATIONS AND MATLAB®

Steven G. Krantz

INTRODUCTION TO ABSTRACT ALGEBRA

Jonathan D. H. Smith

LINEAR ALGEBRA: A FIRST COURSE WITH APPLICATIONS

Larry E. Knop

MATHEMATICAL AND EXPERIMENTAL MODELING OF PHYSICAL AND BIOLOGICAL PROCESSES

H. T. Banks and H. T. Tran

FORTHCOMING TITLES

ENCOUNTERS WITH CHAOS AND FRACTALS

Denny Gulick

MATHEMATICAL AND EXPERIMENTAL MODELING OF PHYSICAL AND BIOLOGICAL PROCESSES

H. T. Banks

H. T. Tran

TEXTBOOKS in MATHEMATICS

CRC Press

Taylor & Francis Group

6000 Broken Sound Parkway NW, Suite 300

Boca Raton, FL 33487-2742

© 2009 by Taylor & Francis Group, LLC

CRC Press is an imprint of Taylor & Francis Group, an Informa business

No claim to original U.S. Government works

Version Date: 20130920

International Standard Book Number-13: 978-1-4200-7338-6 (eBook - PDF)

This book contains information obtained from authentic a.

Principles of design of experiments (doe)20 5-2014

This document discusses experimental design and optimization. It defines key terms like factors, responses, and residuals. It explains that experimental design is used to systematically examine problems in research, development and production. Factorial design is introduced as a method to study the effects of all factors and interactions on responses. The document provides an example experimental design to investigate if playing violent video games causes violent behavior. It outlines defining the population, randomly selecting a sample, using control and experimental conditions, measuring dependent variables, and comparing results to draw conclusions.

Six sigma statistics

This document provides an overview and examples of various statistical concepts and tools, including:

- Useful statistical measures such as mean, median, mode, range, variance, and standard deviation.

- The normal distribution and how to calculate proportions of values that fall within a certain range using normal distribution tables or Excel functions.

- Common values from the normal distribution such as what proportion of values fall within 1, 2, or 3 standard deviations of the mean.

- Six Sigma "sigma values" and how they correspond to defects per million opportunities.

- Visualization tools like histograms, Pareto charts, stem-and-leaf plots, scatter graphs, multi-vari charts, and box plots; including
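The proportions of a normal distribution that fall within 1, 2, or 3 standard deviations of the mean, mentioned in the list above, can be computed directly from the error function instead of a lookup table. This is a minimal sketch using only the Python standard library; the function names are hypothetical and it stands in for, rather than reproduces, the tables or Excel functions the document refers to.

```python
import math

def normal_cdf(x, mean=0.0, sd=1.0):
    """Cumulative probability P(X <= x) for a normal distribution."""
    return 0.5 * (1.0 + math.erf((x - mean) / (sd * math.sqrt(2.0))))

def proportion_between(lo, hi, mean=0.0, sd=1.0):
    """Proportion of values falling in the interval [lo, hi]."""
    return normal_cdf(hi, mean, sd) - normal_cdf(lo, mean, sd)

def proportion_within(k):
    """Proportion of values within k standard deviations of the mean."""
    return math.erf(k / math.sqrt(2.0))

# Roughly 68.27%, 95.45%, and 99.73% for k = 1, 2, 3.
within = {k: proportion_within(k) for k in (1, 2, 3)}
```

For a process with a given mean and standard deviation, `proportion_between(lower_limit, upper_limit, mean, sd)` gives the fraction of output expected inside the limits, assuming the output is normally distributed.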

Similar to Qm0021 statistical process control (20)

Operations Management VTU BE Mechanical 2015 Solved paper

QM0012- STATISTICAL PROCESS CONTROL AND PROCESS CAPABILITY

ENTROPY-COST RATIO MAXIMIZATION MODEL FOR EFFICIENT STOCK PORTFOLIO SELECTION...

An Empirical Investigation Of The Arbitrage Pricing Theory

© Charles T. Diebold, Ph.D., 73013. All Rights Reserved. Pa.docx

Qm0012 statistical process control and process capability

www.elsevier.comlocatecompstrucComputers and Structures .docx

revision-notes-introductory-econometrics-lecture-1-11.pdf

Homework 21. Complete Chapter 3, Problem #1 under Project.docx

Principles of design of experiments (doe)20 5-2014

Recently uploaded

Chapter 4 - Islamic Financial Institutions in Malaysia.pptx

Mohd Adib Abd Muin, Senior Lecturer at Universiti Utara Malaysia

This slide is special for master students (MIBS & MIFB) in UUM. Also useful for readers who are interested in the topic of contemporary Islamic banking.

ISO/IEC 27001, ISO/IEC 42001, and GDPR: Best Practices for Implementation and...

Denis is a dynamic and results-driven Chief Information Officer (CIO) with a distinguished career spanning information systems analysis and technical project management. With a proven track record of spearheading the design and delivery of cutting-edge Information Management solutions, he has consistently elevated business operations, streamlined reporting functions, and maximized process efficiency.

Certified as an ISO/IEC 27001: Information Security Management Systems (ISMS) Lead Implementer, Data Protection Officer, and Cyber Risks Analyst, Denis brings a heightened focus on data security, privacy, and cyber resilience to every endeavor.

His expertise extends across a diverse spectrum of reporting, database, and web development applications, underpinned by an exceptional grasp of data storage and virtualization technologies. His proficiency in application testing, database administration, and data cleansing ensures seamless execution of complex projects.

What sets Denis apart is his comprehensive understanding of Business and Systems Analysis technologies, honed through involvement in all phases of the Software Development Lifecycle (SDLC). From meticulous requirements gathering to precise analysis, innovative design, rigorous development, thorough testing, and successful implementation, he has consistently delivered exceptional results.

Throughout his career, he has taken on multifaceted roles, from leading technical project management teams to owning solutions that drive operational excellence. His conscientious and proactive approach is unwavering, whether he is working independently or collaboratively within a team. His ability to connect with colleagues on a personal level underscores his commitment to fostering a harmonious and productive workplace environment.

Date: May 29, 2024

Tags: Information Security, ISO/IEC 27001, ISO/IEC 42001, Artificial Intelligence, GDPR

-------------------------------------------------------------------------------

Find out more about ISO training and certification services

Training: ISO/IEC 27001 Information Security Management System - EN | PECB

ISO/IEC 42001 Artificial Intelligence Management System - EN | PECB

General Data Protection Regulation (GDPR) - Training Courses - EN | PECB

Webinars: https://pecb.com/webinars

Article: https://pecb.com/article

-------------------------------------------------------------------------------

For more information about PECB:

Website: https://pecb.com/

LinkedIn: https://www.linkedin.com/company/pecb/

Facebook: https://www.facebook.com/PECBInternational/

Slideshare: http://www.slideshare.net/PECBCERTIFICATION

BBR 2024 Summer Sessions Interview Training

Qualitative research interview training by Professor Katrina Pritchard and Dr Helen Williams

Your Skill Boost Masterclass: Strategies for Effective Upskilling

Your Skill Boost Masterclass: Strategies for Effective UpskillingExcellence Foundation for South Sudan

Strategies for Effective Upskilling is a presentation by Chinwendu Peace in a Your Skill Boost Masterclass organisation by the Excellence Foundation for South Sudan on 08th and 09th June 2024 from 1 PM to 3 PM on each day.PCOS corelations and management through Ayurveda.

This presentation includes basic of PCOS their pathology and treatment and also Ayurveda correlation of PCOS and Ayurvedic line of treatment mentioned in classics.

Main Java[All of the Base Concepts}.docx

This is part 1 of my Java Learning Journey. This Contains Custom methods, classes, constructors, packages, multithreading , try- catch block, finally block and more.

Pengantar Penggunaan Flutter - Dart programming language1.pptx

Pengantar Penggunaan Flutter - Dart programming language1.pptx

The Diamonds of 2023-2024 in the IGRA collection

A review of the growth of the Israel Genealogy Research Association Database Collection for the last 12 months. Our collection is now passed the 3 million mark and still growing. See which archives have contributed the most. See the different types of records we have, and which years have had records added. You can also see what we have for the future.

RPMS TEMPLATE FOR SCHOOL YEAR 2023-2024 FOR TEACHER 1 TO TEACHER 3

RPMS Template 2023-2024 by: Irene S. Rueco

Advanced Java[Extra Concepts, Not Difficult].docx![Advanced Java[Extra Concepts, Not Difficult].docx](data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7)

![Advanced Java[Extra Concepts, Not Difficult].docx](data:image/gif;base64,R0lGODlhAQABAIAAAAAAAP///yH5BAEAAAAALAAAAAABAAEAAAIBRAA7)

This is part 2 of my Java Learning Journey. This contains Hashing, ArrayList, LinkedList, Date and Time Classes, Calendar Class and more.

বাংলাদেশ অর্থনৈতিক সমীক্ষা (Economic Review) ২০২৪ UJS App.pdf

বাংলাদেশের অর্থনৈতিক সমীক্ষা ২০২৪ [Bangladesh Economic Review 2024 Bangla.pdf] কম্পিউটার , ট্যাব ও স্মার্ট ফোন ভার্সন সহ সম্পূর্ণ বাংলা ই-বুক বা pdf বই " সুচিপত্র ...বুকমার্ক মেনু 🔖 ও হাইপার লিংক মেনু 📝👆 যুক্ত ..

আমাদের সবার জন্য খুব খুব গুরুত্বপূর্ণ একটি বই ..বিসিএস, ব্যাংক, ইউনিভার্সিটি ভর্তি ও যে কোন প্রতিযোগিতা মূলক পরীক্ষার জন্য এর খুব ইম্পরট্যান্ট একটি বিষয় ...তাছাড়া বাংলাদেশের সাম্প্রতিক যে কোন ডাটা বা তথ্য এই বইতে পাবেন ...

তাই একজন নাগরিক হিসাবে এই তথ্য গুলো আপনার জানা প্রয়োজন ...।

বিসিএস ও ব্যাংক এর লিখিত পরীক্ষা ...+এছাড়া মাধ্যমিক ও উচ্চমাধ্যমিকের স্টুডেন্টদের জন্য অনেক কাজে আসবে ...

Executive Directors Chat Leveraging AI for Diversity, Equity, and Inclusion

Let’s explore the intersection of technology and equity in the final session of our DEI series. Discover how AI tools, like ChatGPT, can be used to support and enhance your nonprofit's DEI initiatives. Participants will gain insights into practical AI applications and get tips for leveraging technology to advance their DEI goals.

Hindi varnamala | hindi alphabet PPT.pdf

हिंदी वर्णमाला पीपीटी, hindi alphabet PPT presentation, hindi varnamala PPT, Hindi Varnamala pdf, हिंदी स्वर, हिंदी व्यंजन, sikhiye hindi varnmala, dr. mulla adam ali, hindi language and literature, hindi alphabet with drawing, hindi alphabet pdf, hindi varnamala for childrens, hindi language, hindi varnamala practice for kids, https://www.drmullaadamali.com

Natural birth techniques - Mrs.Akanksha Trivedi Rama University

Natural birth techniques - Mrs.Akanksha Trivedi Rama UniversityAkanksha trivedi rama nursing college kanpur.

Natural birth techniques are various type such as/ water birth , alexender method, hypnosis, bradley method, lamaze method etcRecently uploaded (20)

Qm0021 statistical process control

- 1. Assignment

  DRIVE: FALL 2015
  PROGRAM: MBA
  SEMESTER: 3
  SUBJECT CODE & NAME: QM0021: Statistical Process Control
  BK ID: B1928
  CREDIT & MARKS: 4 CREDITS & 60 MARKS

  Note: Answer all questions. Each question is followed by an evaluation scheme.

  1(a) What are the two main causes of variation? Explain.

  Answer: Two causes of variation: In statistical process control these are common (chance) causes, which are inherent in the process, and special (assignable) causes, which arise from identifiable disturbances. Variation itself takes two forms: continuous and discontinuous. Characteristics showing continuous variation vary in a general way, with a broad range and many intermediate values between the extremes. As a matter of fact, if you consider a large enough sample from a population, perhaps plotting frequency as a histogram or as a ...

  (b) Define the term 'process'. Give an example of a process.

  Answer: A process is a sequence of interdependent and linked procedures which, at every stage, consume one or more resources (employee time, energy, machines, money) to convert inputs (data, material, parts, etc.) into outputs. These outputs then serve as inputs for the next stage until a known goal or end result is reached.

  2(a) What is meant by standard deviation?

  Answer: In statistics and probability theory, the standard deviation (SD), represented by the Greek letter sigma (σ), measures the amount of variation or dispersion from the average. A low standard deviation indicates that the data points tend ...

- 2. (b) Calculate the standard deviation of the following data, which represent the number of defective products produced by a machine: 4, 2, 5, 8 and 6.

  Answer: Total numbers: 5. Mean (average): 5. Standard deviation: 2.

  3(a) Give the meaning of the following basic terminologies in probability:

  (i) Sample space

  Answer: The sample space of an experiment or random trial is the set of all possible outcomes or results of that experiment. A sample space is usually denoted using set notation, and the possible outcomes are listed as elements in the set. It is common to refer to a sample space by the labels S, Ω, or U (for "universal set").

  (ii) Mutually exclusive events

  Answer: Two events are mutually exclusive if they cannot occur at the same time. An example is tossing a coin once, which can result in either heads or tails, but not both. In the coin-tossing example, ...

  (b) Mention the properties of probability.

  Answer: Property 1: If A is an event in a sample space S, then 0 ≤ P(A) ≤ 1. Property 2: ...

  (c) Define the term 'random variable'.

  Answer: A random variable, aleatory variable or stochastic variable is a variable whose value is subject to variations due to chance. Unlike other mathematical variables, a random variable can take on a set of possible different values, each with an associated probability.
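The standard deviation in question 2(b) can be checked with a short calculation. The sketch below assumes the population form of the formula (dividing by n), which reproduces the stated answer of 2 for these data; the sample form (dividing by n − 1) is shown alongside for comparison. The variable names are illustrative only.

```python
import math

# Number of defective products from question 2(b).
defects = [4, 2, 5, 8, 6]

n = len(defects)
mean = sum(defects) / n

# Squared deviations of each observation from the mean.
squared_devs = [(x - mean) ** 2 for x in defects]

# Population form divides by n; sample form divides by n - 1.
population_sd = math.sqrt(sum(squared_devs) / n)
sample_sd = math.sqrt(sum(squared_devs) / (n - 1))

print(f"mean = {mean}")
print(f"population SD = {population_sd}")
print(f"sample SD = {sample_sd:.3f}")
```

The squared deviations are 1, 9, 0, 9 and 1, which sum to 20, so the population variance is 20 / 5 = 4 and the population standard deviation is 2. Which form the assignment intends is not stated in the text.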

- 3. 4(a) Differentiate between accuracy and precision.

  Answer: Accuracy and precision are defined in terms of systematic and random errors. The more common definition associates accuracy with systematic errors and precision with random errors. Another definition, advanced by ISO, associates trueness with systematic errors and precision with random errors, and defines accuracy as the combination of both trueness and precision. A measurement system can be ...

  (b) Write a brief note on the 'Funnel Experiment'.

  Answer: The Funnel Experiment was devised by Dr. Deming to describe the adverse effects of tampering with a process by making changes to it without first making a careful study of the possible causes of the variation in that process. In the experiment, a marble is dropped through a funnel onto a sheet of paper, which contains a target. The objective of the process is to get the marble to come to a stop as close to the target as possible. The experiment uses several methods to attempt to manipulate the funnel's location to achieve the objective.

  5. Define the terms 'process capability' and 'process stability'. Explain the Cp index and the Cpk index.

  Answer: A process is a unique combination of tools, materials, methods, and people engaged in producing a measurable output; for example, a manufacturing line for machine parts. All processes have inherent statistical variability which can be evaluated by statistical methods. The process capability is a measurable property of a process relative to the specification, expressed as a process capability index (e.g., Cpk or Cpm) or as a process performance index (e.g., Ppk or Ppm). The output of this measurement is usually illustrated by ...

  6. Give the meaning of the following:

  i) Hypothesis testing

  Answer: A statistical hypothesis is a scientific hypothesis that is testable on the basis of observing a process that is modeled via a set of random variables. A statistical hypothesis test is a method of statistical inference used for testing a statistical hypothesis. A test result is called statistically significant if it has been predicted as unlikely to have occurred by chance alone, according to a ...

  ii) Control chart

- 4. Answer: Control charts, also known as Shewhart charts (after Walter A. Shewhart) or process-behavior charts, in statistical ...

  iii) Experimental design

  Answer: In an experiment, we deliberately change one or more process variables (or factors) in order to observe the effect the changes ...

  iv) Acceptance sampling

  Answer: Acceptance sampling uses statistical sampling to determine whether to accept or reject a production lot of material. It has been a common quality control technique used in industry. It is usually done as products leave the factory, or in some cases even within the factory. Most often a producer supplies a consumer ...
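For the Cp and Cpk indices asked about in question 5, a minimal sketch using the standard textbook definitions may help: Cp = (USL − LSL) / 6σ compares the specification width with the process spread, while Cpk = min((USL − μ) / 3σ, (μ − LSL) / 3σ) also accounts for how well the process is centred. The specification limits, mean and standard deviation below are hypothetical values chosen for illustration; they do not come from the assignment.

```python
def cp(usl: float, lsl: float, sigma: float) -> float:
    """Potential capability: specification width over six standard deviations."""
    return (usl - lsl) / (6 * sigma)


def cpk(usl: float, lsl: float, mean: float, sigma: float) -> float:
    """Actual capability: distance from the mean to the nearer limit, in units of 3 sigma."""
    return min((usl - mean) / (3 * sigma), (mean - lsl) / (3 * sigma))


# Hypothetical process: all four numbers are assumptions for illustration.
lsl, usl = 9.0, 11.0      # lower and upper specification limits
mean, sigma = 10.5, 0.25  # process mean and standard deviation

cp_value = cp(usl, lsl, sigma)
cpk_value = cpk(usl, lsl, mean, sigma)

print(f"Cp  = {cp_value:.3f}")
print(f"Cpk = {cpk_value:.3f}")
```

With these assumed numbers, Cp is about 1.33 but Cpk is only about 0.67, because the process mean sits closer to the upper limit than to the lower one. Cpk can never exceed Cp, and the two are equal only when the process is centred between the limits.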