Paragon Software Case Study Partition Manager For Datacenters & Hosting Providers

•

1 like•295 views

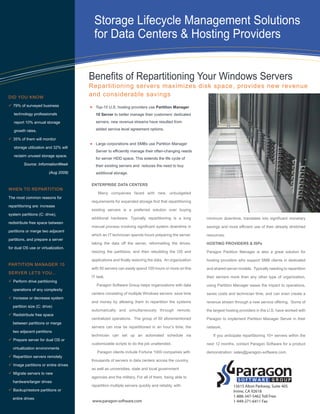

Top-10 U.S. hosting providers use Partition Manager 10 Server to better manage their customers’ dedicated servers; new revenue streams have resulted from added service level agreement options.

Recommended

BCP and DR Plan With NAS Solution

© Happiest Minds Technologies Pvt. Ltd. All Rights Reserved

Organizations today operate in a highly competitive environment. While technological advancement has helped them reach unimaginable heights, the threat of disaster has also evolved with new-age technology. In today’s fast-paced digital landscape, where 90% of the data is stored electronically, loss of important data means loss of business.

Managing clients with Dell Client Integration Pack 3.0 and Microsoft System C...

Principled Technologies

Client management is an important part of any enterprise. Employees have workstations in their offices or notebooks that travel with them around the globe, and efficient updates and remote management capabilities keep an organization’s IT assets ordered and secure. Microsoft System Center Configuration Manager 2012 can provide a robust, efficient management system for your IT infrastructure. Selecting clients that not only operate within your IT framework but also have built-in software that integrates with it seamlessly, making client management tasks even easier, is an intelligent strategy for your IT department.

In our tests, we found that Dell client management tools (Dell Client Integration Pack, Dell Client Configuration Toolkit, and Dell OpenManage Client Instrumentation) integrated in a typical SCCM 2012 environment reduced the steps it took to complete client management tasks by as much as 77 percent, and included a number of features that weren’t available with clients from HP and Lenovo.

New Features For Your Software Defined Storage

New Features in PSP2 for SANsymphony™-V10 Software-defined Storage Platform and DataCore™ Virtual SAN. New enhancements include OpenStack support, deduplication and compression, Veeam backup integration, and a random write accelerator.

VMWare Forum Winnipeg - 2012

Keynote speech and presentation by Anil Sedha along with the Postmedia team at the VMWare Forum in Winnipeg.

Data Domain Architecture

This is an explanation of how the Data Domain data deduplication architecture works for storage environments.

Availability Considerations for SQL Server

Content from 1/27/11 Corporate Webinar on Availability Considerations for SQL Server.

Elevate your e-commerce business by upgrading to the Dell EMC PowerEdge R740x...

Principled Technologies

Moving from a legacy environment can drive the number of customers you support to new heights.

What's hot

Better Backup For All Symantec Appliances NetBackup 5220 Backup Exec 3600 May...

Symantec’s latest backup appliances, NetBackup 5220 and Backup Exec 3600, now include the latest NetBackup 7.5 and Backup Exec 2012 software that Symantec announced earlier this year. The new appliances deliver on Symantec’s Better Backup for All initiative to address what Gartner has called “The Broken State of Backup.”

White Paper: MoreVRP for EMC Greenplum

MoreVRP is a database performance monitoring and acceleration tool, and offers DBAs the capability to have real-time monitoring and resource management and control.

Automated SAN Storage Tiering: Four Use Cases - Dell 8 sept 2010

Penn State slashes backup time by 80 percent

Learn how Penn State slashes backup time by 80 percent. For more information on IBM FlashSystem, visit http://ibm.co/10KodHl.

Visit http://bit.ly/KWh5Dx to 'Follow' the official Twitter handle of IBM India Smarter Computing.

TECHNICAL WHITE PAPER▶Symantec Backup Exec 2014 Blueprints - OST Powered Appl...

This Blueprint is designed to help customers who use OST technology with Backup Exec’s Deduplication Option to improve back-end storage capabilities within a complex backup environment.

Relentless Information Growth

The data deduplication technology within Backup Exec 2014 breaks down streams of backup data into “blocks.” Each data block is identified as either unique or non-unique, and a tracking database is used to ensure that only a single copy of a data block is saved to storage by that Backup Exec server. For subsequent backups, the tracking database identifies which blocks have been protected and only stores the blocks that are new or unique. For example, if five different client systems are sending backup data to a Backup Exec server and a data block is found in backup streams from all five of those client systems, only a single copy of the data block is actually stored by the Backup Exec server. This process of reducing redundant data blocks that are saved to backup storage leads to significant reduction in storage space needed for backups.
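The block-level idea described above can be illustrated with a minimal sketch. This is not Backup Exec's implementation: the `DedupStore` class, the fixed 4-byte block size, the SHA-256 fingerprint, and the in-memory dictionary standing in for the tracking database are all assumptions chosen for illustration.

```python
import hashlib


class DedupStore:
    """Toy block store that keeps one copy of each unique block (hypothetical)."""

    def __init__(self, block_size=4):
        self.block_size = block_size
        # digest -> block bytes; stands in for the "tracking database"
        self.blocks = {}

    def backup(self, stream: bytes) -> list:
        """Split a stream into fixed-size blocks and store only unseen ones.

        Returns the ordered list of digests needed to rebuild the stream.
        """
        recipe = []
        for i in range(0, len(stream), self.block_size):
            block = stream[i:i + self.block_size]
            digest = hashlib.sha256(block).hexdigest()
            if digest not in self.blocks:
                self.blocks[digest] = block  # new block: store it once
            recipe.append(digest)
        return recipe

    def restore(self, recipe) -> bytes:
        """Rebuild a stream from its list of block digests."""
        return b"".join(self.blocks[d] for d in recipe)


# Five client streams that all contain the block b"AAAA".
store = DedupStore(block_size=4)
clients = [b"AAAABBBB", b"AAAACCCC", b"AAAABBBB", b"AAAADDDD", b"AAAAEEEE"]
recipes = [store.backup(data) for data in clients]

print(len(store.blocks), "unique blocks stored for",
      sum(len(r) for r in recipes), "blocks received")
```

In this toy run the block shared by all five clients is stored only once, and each stream can still be rebuilt from its list of digests. Real products typically add variable-size chunking and persistent indexes, which are omitted here.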

Data growth has increased the need for greater investment in IT infrastructure. Data protection processes such as backup compound that growth by creating multiple copies of primary data for operational and disaster recovery. This has also made the backup infrastructure far more complex. While disk-based systems inherently offer faster restores, they can also make backup environments more complex and difficult to manage. As a result, many backup solutions struggle to manage advanced storage device capabilities such as data deduplication, replication, and the ability to write directly to tape.

Power of OpenStorage Technology (OST)

Symantec Backup Exec software and OpenStorage technology (OST) are designed to provide centrally managed, edge-to-core data protection that spans multiple sites, provides disk-to-disk-to-tape (D2D2T) functionality, and automates data movement. The OpenStorage API introduced in Backup Exec 2010 automates the movement of data between sites and storage tiers and acts as a single point of management and catalog for backup data, regardless of where it resides (remote office or corporate data center), what type of media it is stored on (disk or tape), or its age (recent backup or long-term archive), providing better control of advanced storage devices.

The OpenStorage initiative allows customers to better utilize advanced, disk-based storage solutions from qualified partners. It enables tighter integration between the backup software and storage, and greater efficiency and performance, using an easy-to-deploy, purpose-built appliance that does not have the limitations of tape emulation devices. The result is better performance and optimization, with faster backups to deduplication appliances via a third-party OST plug-in enabled by Backup Exec.

Provident Financial-Cisco

Customer Name: Provident Financial

Industry: Financial services

Location: Bradford, United Kingdom

Number of Employees: 3700

Challenge

• Reduce time and effort needed for major data centre migration

• Smoothly allocate data to specific priority tiers within new data centre structure

• Quickly migrate data to new devices

Solution

• Cisco MDS Data Mobility Manager

Results

• Reduced migration effort by 75 per cent by automating data backup, restore, and qualification processes

• Cut downtime by up to 90 per cent by carrying out migrations while services are still running

EACS Cloud Backup

EACS Cloud Backup is a fully managed, state-of-the-art offsite solution that is available for a monthly per-GB cost. This technology removes the need for unreliable tape media and human intervention. It could be your first step on the Disaster Recovery ladder or a supplementary extension to your existing Business Continuity Plan.

VDI performance comparison: Dell PowerEdge FX2 and FC430 servers with VMware ...

Principled Technologies

Replacing your legacy VDI servers with a new Intel Xeon processor E5-2650 v3-powered Dell PowerEdge FX2 solution using VMware Virtual SAN can be a great boon for your enterprise.

In the Principled Technologies (PT) labs, this space-efficient, affordable solution outperformed a legacy server and traditional SAN solution by supporting 72 percent more VDI users. Additionally, it achieved greater performance while using 91 percent less space and at a cost of only $176.52 per user.

Because it supports more users, saves space, and is affordable, an upgrade to the Intel-powered Dell PowerEdge FX2 solution using VMware Virtual SAN can be a wise move when replacing your aging infrastructure.

Viewers also liked

Introduction to Social Media for Social Change

This is a simple introduction to social media for social change that we use in our SMEX trainings. It is also available in Arabic.

Report on the 1st Felipe Fernández Romero Tournament 2015

Report on the 1st Felipe Fernández Romero under-12 tournament, a youth football tournament that is set to become a benchmark on the national youth football scene.

A tournament where what matters most are the VALUES OF YOUTH FOOTBALL, founded in memory of a leading figure in Valencian youth football: Felipe Fernández Romero.

History Workshops, IES La Madraza 2015

Workshops for sixth-year primary pupils of the Elena Martín Vivaldi school.

Newspaper of 18 September 2012

Here you can read today's newspaper in PDF format.

Have a good day!! :)

Similar to Paragon Software Case Study Partition Manager For Datacenters & Hosting Providers

Partition Manager Server Case Study Stirling Sotheby International Realty

Removing Storage Related Barriers to Server and Desktop Virtualization

An IDC Viewpoint Paper: Virtualization is among the technologies that have become increasingly attractive in the current economic climate. Organizations are implementing virtualization solutions to obtain the following benefits: Focus on efficiency and cost reduction, Simplify management and maintenance, and Improve availability and disaster recovery.

DataCore Executive Brief

10 REASONS TO ADOPT DATACORE SOFTWARE

With over 10,000 satisfied clients and more than 30,000 installations worldwide, clients in every industry sector and of every size testify to DataCore’s innovative spirit. It’s no wonder that we know precisely what it takes to deal with the challenges our clients face. We are there to assist you with a range of solutions aimed at dealing with increasing volumes of data and complex management of a disparate variety of infrastructures. This is why we rank among the top in the software-defined storage and hyper-converged infrastructure market. Whether you need to boost the performance of mission-critical applications, increase efficiency, or ensure high availability and business continuity, with DataCore you are always in control.

Lotus Admin Training Part II

Presents an overview of various design issues/decisions involved during Domino Infrastructure planning, including the factors/issues relating to the hardware infrastructure strategy in terms of server, standards, messaging, replication, security, Internet connection, etc.

Software defined storage rev. 2.0

Our new software defined storage brochure is available for download!

1cloudstar cloud store.v1.1

Headquartered in Asia with coverage across the region and beyond, 1cloudstar is a pure-play Cloud Services Provider offering cloud-related consulting and professional services. 1cloudstar brings a deep understanding of what is possible when legacy systems and cloud solutions coexist and we have a clear vision of the digital future toward which this hybrid world is leading us. We combine those insights with our traditional Enterprise IT knowledge to drive innovation and transform complex environments into high-performance engines.

Whether you’re in the early stages of evaluating how the cloud can benefit your business, or need guidance on developing a cloud strategy or integrating new cloud technology with your existing technology investments, 1cloudstar can leverage the skills and experience gained from many other enterprise cloud projects to ensure you achieve your business objectives.

1cloudstar’s unique strategic approach and engagement model, ‘1cloudstar Engage’, combined with its cloud infrastructure and application integration skills, sets the company apart from traditional technology system integrators. 1cloudstar’s team of consultants can leverage years of technology infrastructure and applications experience along with first-hand experience of public, private and hybrid cloud projects to ensure your enterprise journey to cloud is a success.

1cloudstar accelerates the cloud-powered business, helping enterprises achieve real results from cloud applications and platforms.

Run more applications without expanding your datacenter

By upgrading from the legacy solution we tested to the new Intel processor-based Dell and VMware solution, you could do 18 times the work in the same amount of space. Imagine what that performance could mean to your business: Consolidate workloads from across your company, lower your power and cooling bills, and limit datacenter expansion in the future, all while maintaining a consistent user experience—the list of potential benefits is huge.

Try running DPACK, which can help you identify bottlenecks in your environment and inform you about your current performance needs. Then consider how the consolidation ratio we proved could be helpful for your company. The Intel processor-powered Dell PowerEdge R730 solution with VMware vSphere and Dell Storage SC4020, also powered by Intel, could be the right destination for your upgrade journey.

DATA STORAGE REPLICATION aCelera and WAN Series Solution Brief

aCelera and WAN Series WAN Optimization Controllers: Accelerating storage backup, replication and recovery over the WAN, efficiently and cost-effectively.

Vizioncore Economical Disaster Recovery through Virtualization

SAP virtualization

Virtualization simply means maximizing the value of your IT resources - including hardware, software applications and infrastructure - by decoupling them from physical assets and turning them into a "pool" of resources that can be called upon and used on an on demand basis.

Virtualize Your Workforce

All Covered Cloud Virtual Infrastructure allows for greater flexibility. All Covered was recognized as Citrix Partner of the Year. We can also help with data migration projects as your business moves to the cloud.

Build your private cloud with paa s using linuxz cover story enterprise tech ...

This article has been published as a cover story of Enterprise Tech Journal Jun 2013 issue, at - http://enterprisesystemsmedia.com/article/build-your-private-cloud-with-paas-using-linux-on-system-z.

Did you know that, according to a Gartner poll, Private Cloud project deployments are increasing significantly in 2013 and nearly 90% of customers are looking at Private Cloud implementation and are either in the planning or early deployment stages? Is your shop currently looking into Private Platform as a Service (PaaS) Cloud implementation? Have you looked into what zEnterprise has to offer in this space?

This article gives insight on Private Cloud Implementation on zEnterprise and can help you learn how your organization can benefit from implementing a Dev/Test private cloud using PaaS with Linux on System z and why WebSphere Application Server (WAS) may be a perfect fit for a Dev/Test Private Cloud.

Learn how your organization can cut IT costs by deploying “self-service” for developers, using Dev/Test Private Cloud.

This article goes over which applications are a good fit for Dev/Test Private Cloud and the benefits of this implementation, such as:

• Reduce the number of Dev/Test WAS environments using dedicated resources

• Reduce hardware and software license costs

• Improve speed of upgrades

• Standardize procedures and software levels

• Let developers set up and use new environments in minutes instead of days or weeks

• Simplify WAS infrastructure management

The article also goes over the key differences in managing cloud infrastructure on distributed platforms vs. mainframes and shows how Linux on System z plays a critical role in creating PaaS clouds.

• Mainframes can scale vertically, while distributed servers can scale horizontally.

• Linux on System z has much higher densities of virtual machines per processor core compared to distributed servers.

• Cloud implementation on zEnterprise can dynamically allocate workloads to available pooled resources.

• Linux on System z leverages z/VM’s ability to virtualize CPUs and memory, as well as share those resources among many Linux guests. One IFL can run the equivalent of many distributed servers.

• Consolidation of Linux workloads on a single physical hardware server allows multiple Linux images to run on z/VM IFLs without affecting IBM software charges for existing System z general processors.

Creating a private cloud to host dev/test environments can save your company money and increase efficiency. Start small, but think big: create a cloud strategy and roadmap that involves getting leadership buy-in, building business cases and presenting them in stages within your organization, standardizing procedures, and using a fit-for-purpose approach to select pilot applications.

Connect with the author of this article, Elena Nanos, on LinkedIn at http://www.linkedin.com/pub/elena-nanos/1/a47/70a

Virtualizing Business Critical Applications

While apps may display several symptoms indicative of slow or erratic response after being virtualized, the problem boils down to contention for shared storage resources, contention that did not occur when the apps had the storage all to themselves.

These so-called “bottlenecks” occur in spurts as application requests collide randomly, resulting in spikes of sluggish, unpredictable latency. The more frequent, the greater the users’ dissatisfaction. You may recall that one of the primary reasons these business-critical apps were originally sequestered on separate physical machines was to avoid such collisions.

Storage Strategies Now - Virtualizing Business Critical Applications

HP: HP 3PAR - Storage born for the virtualized environment

Best Practices For Virtualised Share Point T02 Brendan Law Nathan Mercer

Recently uploaded

Elizabeth Buie - Older adults: Are we really designing for our future selves?

zkStudyClub - Reef: Fast Succinct Non-Interactive Zero-Knowledge Regex Proofs

This paper presents Reef, a system for generating publicly verifiable succinct non-interactive zero-knowledge proofs that a committed document matches or does not match a regular expression. We describe applications such as proving the strength of passwords, the provenance of email despite redactions, the validity of oblivious DNS queries, and the existence of mutations in DNA. Reef supports the Perl Compatible Regular Expression syntax, including wildcards, alternation, ranges, capture groups, Kleene star, negations, and lookarounds. Reef introduces a new type of automata, Skipping Alternating Finite Automata (SAFA), that skips irrelevant parts of a document when producing proofs without undermining soundness, and instantiates SAFA with a lookup argument. Our experimental evaluation confirms that Reef can generate proofs for documents with 32M characters; the proofs are small and cheap to verify (under a second).

Paper: https://eprint.iacr.org/2023/1886

Building RAG with self-deployed Milvus vector database and Snowpark Container...

This talk will give hands-on advice on building RAG applications with an open-source Milvus database deployed as a docker container. We will also introduce the integration of Milvus with Snowpark Container Services.

Enhancing adoption of Open Source Libraries. A case study on Albumentations.AI

Vladimir Iglovikov, Ph.D.

Presented by Vladimir Iglovikov:

- https://www.linkedin.com/in/iglovikov/

- https://x.com/viglovikov

- https://www.instagram.com/ternaus/

This presentation delves into the journey of Albumentations.ai, a highly successful open-source library for data augmentation.

Created out of a necessity for superior performance in Kaggle competitions, Albumentations has grown to become a widely used tool among data scientists and machine learning practitioners.

This case study covers various aspects, including:

People: The contributors and community that have supported Albumentations.

Metrics: The success indicators such as downloads, daily active users, GitHub stars, and financial contributions.

Challenges: The hurdles in monetizing open-source projects and measuring user engagement.

Development Practices: Best practices for creating, maintaining, and scaling open-source libraries, including code hygiene, CI/CD, and fast iteration.

Community Building: Strategies for making adoption easy, iterating quickly, and fostering a vibrant, engaged community.

Marketing: Both online and offline marketing tactics, focusing on real, impactful interactions and collaborations.

Mental Health: Maintaining balance and not feeling pressured by user demands.

Key insights include the importance of automation, making the adoption process seamless, and leveraging offline interactions for marketing. The presentation also emphasizes the need for continuous small improvements and building a friendly, inclusive community that contributes to the project's growth.

Vladimir Iglovikov draws on his extensive experience as a Kaggle Grandmaster and ex-Staff ML Engineer at Lyft, sharing valuable lessons and practical advice for anyone looking to enhance the adoption of their open-source projects.

Explore more about Albumentations and join the community at:

GitHub: https://github.com/albumentations-team/albumentations

Website: https://albumentations.ai/

LinkedIn: https://www.linkedin.com/company/100504475

Twitter: https://x.com/albumentations

Removing Uninteresting Bytes in Software Fuzzing

Imagine a world where software fuzzing, the process of mutating bytes in test seeds to uncover hidden and erroneous program behaviors, becomes faster and more effective. A lot depends on the initial seeds, which can significantly dictate the trajectory of a fuzzing campaign, particularly in terms of how long it takes to uncover interesting behaviour in your code. We introduce DIAR, a technique designed to speed up fuzzing campaigns by pinpointing and eliminating the uninteresting bytes in the seeds. Instead of wasting valuable resources on meaningless mutations in large, bloated seeds, DIAR removes the unnecessary bytes, streamlining the entire process.

In this work, we equipped AFL, a popular fuzzer, with DIAR and examined two critical Linux tools -- Libxml's xmllint, a tool for parsing XML documents, and Binutils' readelf, an essential debugging and security analysis command-line tool used to display detailed information about ELF (Executable and Linkable Format) files. Our preliminary results show that AFL+DIAR not only discovers new paths more quickly but also achieves higher coverage overall. This work thus showcases how starting with lean and optimized seeds can lead to faster, more comprehensive fuzzing campaigns -- and DIAR helps you find such seeds.

- These are the slides of the talk given at the IEEE International Conference on Software Testing, Verification and Validation Workshops (ICSTW 2022).

Full-RAG: A modern architecture for hyper-personalization

Mike Del Balso, CEO & Co-Founder at Tecton, presents "Full RAG," a novel approach to AI recommendation systems, aiming to push beyond the limitations of traditional models through a deep integration of contextual insights and real-time data, leveraging the Retrieval-Augmented Generation architecture. This talk will outline Full RAG's potential to significantly enhance personalization, address engineering challenges such as data management and model training, and introduce data enrichment with reranking as a key solution. Attendees will gain crucial insights into the importance of hyperpersonalization in AI, the capabilities of Full RAG for advanced personalization, and strategies for managing complex data integrations for deploying cutting-edge AI solutions.

A tale of scale & speed: How the US Navy is enabling software delivery from l...

Rapid and secure feature delivery is a goal across every application team and every branch of the DoD. The Navy’s DevSecOps platform, Party Barge, has achieved:

- Reduction in onboarding time from 5 weeks to 1 day

- Improved developer experience and productivity through actionable findings and reduction of false positives

- Maintenance of superior security standards and inherent policy enforcement with Authorization to Operate (ATO)

Development teams can ship efficiently and ensure applications are cyber ready for Navy Authorizing Officials (AOs). In this webinar, Sigma Defense and Anchore will give attendees a look behind the scenes and demo secure pipeline automation and security artifacts that speed up application ATO and time to production.

We will cover:

- How to remove silos in DevSecOps

- How to build efficient development pipeline roles and component templates

- How to deliver security artifacts that matter for ATOs (SBOMs, vulnerability reports, and policy evidence)

- How to streamline operations with automated policy checks on container images

GraphSummit Singapore | Enhancing Changi Airport Group's Passenger Experience...

Dr. Sean Tan, Head of Data Science, Changi Airport Group

Discover how Changi Airport Group (CAG) leverages graph technologies and generative AI to revolutionize their search capabilities. This session delves into the unique search needs of CAG’s diverse passengers and customers, showcasing how graph data structures enhance the accuracy and relevance of AI-generated search results, mitigating the risk of “hallucinations” and improving the overall customer journey.

GraphSummit Singapore | The Future of Agility: Supercharging Digital Transfor...

Leonard Jayamohan, Partner & Generative AI Lead, Deloitte

This keynote will reveal how Deloitte leverages Neo4j’s graph power for groundbreaking digital twin solutions, achieving a staggering 100x performance boost. Discover the essential role knowledge graphs play in successful generative AI implementations. Plus, get an exclusive look at an innovative Neo4j + Generative AI solution Deloitte is developing in-house.

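Hello everyone, how many characters does this allow? I really don't understand why 40 characters or fewer isn't permitted, but since no maximum length is stated, maybe it can take a seriously crazy number of characters? Eh, th...

Only up to 3,000 characters fit here, but I think the title can take more characters.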

GDG Cloud Southlake #33: Boule & Rebala: Effective AppSec in SDLC using Deplo...

Effective Application Security in Software Delivery lifecycle using Deployment Firewall and DBOM

The modern software delivery process (or the CI/CD process) includes many tools, distributed teams, open-source code, and cloud platforms. A constant focus on speed in releasing software to market, along with traditionally slow and manual security checks, has left gaps in continuous security, an important piece of the software supply chain. Today organizations feel more susceptible to external and internal cyber threats due to the vast attack surface in their application supply chain and the lack of end-to-end governance and risk management.

The software team must secure its software delivery process to avoid vulnerabilities and security breaches. This needs to be achieved with existing tool chains and without extensive rework of the delivery processes. This talk will present strategies and techniques for providing visibility into the true risk of the existing vulnerabilities, preventing the introduction of security issues in the software, resolving vulnerabilities in production environments quickly, and capturing the deployment bill of materials (DBOM).

Speakers:

Bob Boule

Robert Boule is a technology enthusiast with a passion for technology and making things work, along with a knack for helping others understand how things work. He has around 20 years of solution engineering experience in application security, software continuous delivery, and SaaS platforms. He is known for his dynamic presentations on CI/CD and application security integrated into the software delivery lifecycle.

Gopinath Rebala

Gopinath Rebala is the CTO of OpsMx, where he has overall responsibility for the machine learning and data processing architectures for Secure Software Delivery. Gopi also has a strong connection with our customers, leading design and architecture for strategic implementations. Gopi is a frequent speaker and well-known leader in continuous delivery and integrating security into software delivery.

Microsoft - Power Platform_G.Aspiotis.pdf

Revolutionizing Application Development with AI-powered low-code, a presentation by George Aspiotis, Sr. Partner Development Manager, Microsoft

GraphSummit Singapore | Neo4j Product Vision & Roadmap - Q2 2024

Maruthi Prithivirajan, Head of ASEAN & IN Solution Architecture, Neo4j

Get an inside look at the latest Neo4j innovations that enable relationship-driven intelligence at scale. Learn more about the newest cloud integrations and product enhancements that make Neo4j an essential choice for developers building apps with interconnected data and generative AI.

Climate Impact of Software Testing at Nordic Testing Days

My slides at Nordic Testing Days 6.6.2024

The talk discusses the climate impact and sustainability of software testing. ICT and testing must carry their part of the global responsibility to help address climate warming. We can minimize the carbon footprint, but we can also have a carbon handprint, a positive impact on the climate. Sustainability can be added to the quality characteristics and then measured continuously. Test environments can be used less, at a smaller scale, and on demand. Test techniques can be used to optimize or minimize the number of tests. Test automation can be used to speed up testing.

20240609 QFM020 Irresponsible AI Reading List May 2024

Everything I found interesting about the irresponsible use of machine intelligence in May 2024

Securing your Kubernetes cluster_ a step-by-step guide to success !

Today, after several years of existence, an extremely active community and an ultra-dynamic ecosystem, Kubernetes has established itself as the de facto standard in container orchestration. Thanks to a wide range of managed services, it has never been so easy to set up a ready-to-use Kubernetes cluster.

However, this ease of use means that the subject of security in Kubernetes is often left for later, or even neglected. This exposes companies to significant risks.

In this talk, I'll show you step-by-step how to secure your Kubernetes cluster for greater peace of mind and reliability.

Communications Mining Series - Zero to Hero - Session 1

This session provides an introduction to UiPath Communication Mining, its importance, and a platform overview. You will acquire a good understanding of the phases in Communication Mining as we go over the platform with you. Topics covered:

• Communication Mining Overview

• Why is it important?

• How can it help today’s business and the benefits

• Phases in Communication Mining

• Demo on Platform overview

• Q/A

Paragon Software Case Study Partition Manager For Datacenters & Hosting Providers

- 1. Storage Lifecycle Management Solutions for Data Centers & Hosting Providers

Benefits of Repartitioning Your Windows Servers

Repartitioning servers maximizes disk space, provides new revenue and considerable savings

Top-10 U.S. hosting providers use Partition Manager 10 Server to better manage their customers’ dedicated servers; new revenue streams have resulted from added service level agreement options.

Large corporations and SMBs use Partition Manager Server to efficiently manage their often-changing needs for server HDD space. This extends the life cycle of their existing servers and reduces the need to buy additional storage.

DID YOU KNOW

79% of surveyed business technology professionals report 10% annual storage growth rates. 35% of them will monitor storage utilization and 32% will reclaim unused storage space. Source: InformationWeek (Aug 2009)

ENTERPRISE DATA CENTERS

Many companies faced with new, unbudgeted requirements for expanded storage find that repartitioning existing servers is a preferred solution over buying additional hardware. Typically repartitioning is a long manual process involving significant system downtime in which an IT technician spends hours preparing the server, taking the data off the server, reformatting the drives, resizing the partitions, and then rebuilding the OS and applications and finally restoring the data. An organization with 50 servers can easily spend 100 hours or more on this IT task.

Paragon Software Group helps organizations with data centers consisting of multiple Windows servers save time and money by allowing them to repartition the systems automatically and simultaneously through remote, centralized operations. The group of 50 aforementioned servers can now be repartitioned in an hour’s time; the technician can set up an automated schedule via customizable scripts to do the job unattended.

Paragon clients include Fortune 1000 companies with thousands of servers in data centers across the country, as well as universities, state and local government agencies and the military. For all of them, being able to repartition multiple servers quickly and reliably, with minimum downtime, translates into significant monetary savings and more efficient use of their already stretched resources.

WHEN TO REPARTITION

The most common reasons for repartitioning are: increase system partitions (C: drive), redistribute free space between partitions or merge two adjacent partitions, and prepare a server for dual OS use or virtualization.

HOSTING PROVIDERS & ISPs

Paragon Partition Manager is also a great solution for hosting providers who support SMB clients in dedicated and shared server models. Typically needing to repartition their servers more than any other type of organization, using Partition Manager eases the impact to operations, saves costs and technician time, and can even create a revenue stream through a new service offering. Some of the largest hosting providers in the U.S. have worked with Paragon to implement Partition Manager Server in their network.

If you anticipate repartitioning 10+ servers within the next 12 months, contact Paragon Software for a product demonstration: sales@paragon-software.com.

PARTITION MANAGER 10 SERVER LETS YOU...

- Perform drive partitioning operations of any complexity

- Increase or decrease system partition size (C: drive)

- Redistribute free space between partitions or merge two adjacent partitions

- Prepare server for dual OS or virtualization environments

- Repartition servers remotely

- Image partitions or entire drives

- Migrate servers to new hardware/larger drives

- Backup/restore partitions or entire drives

15615 Alton Parkway, Suite 405, Irvine, CA 92618

www.paragon-software.com

1-888-347-5462 Toll Free

1-949-271-6411 Fax