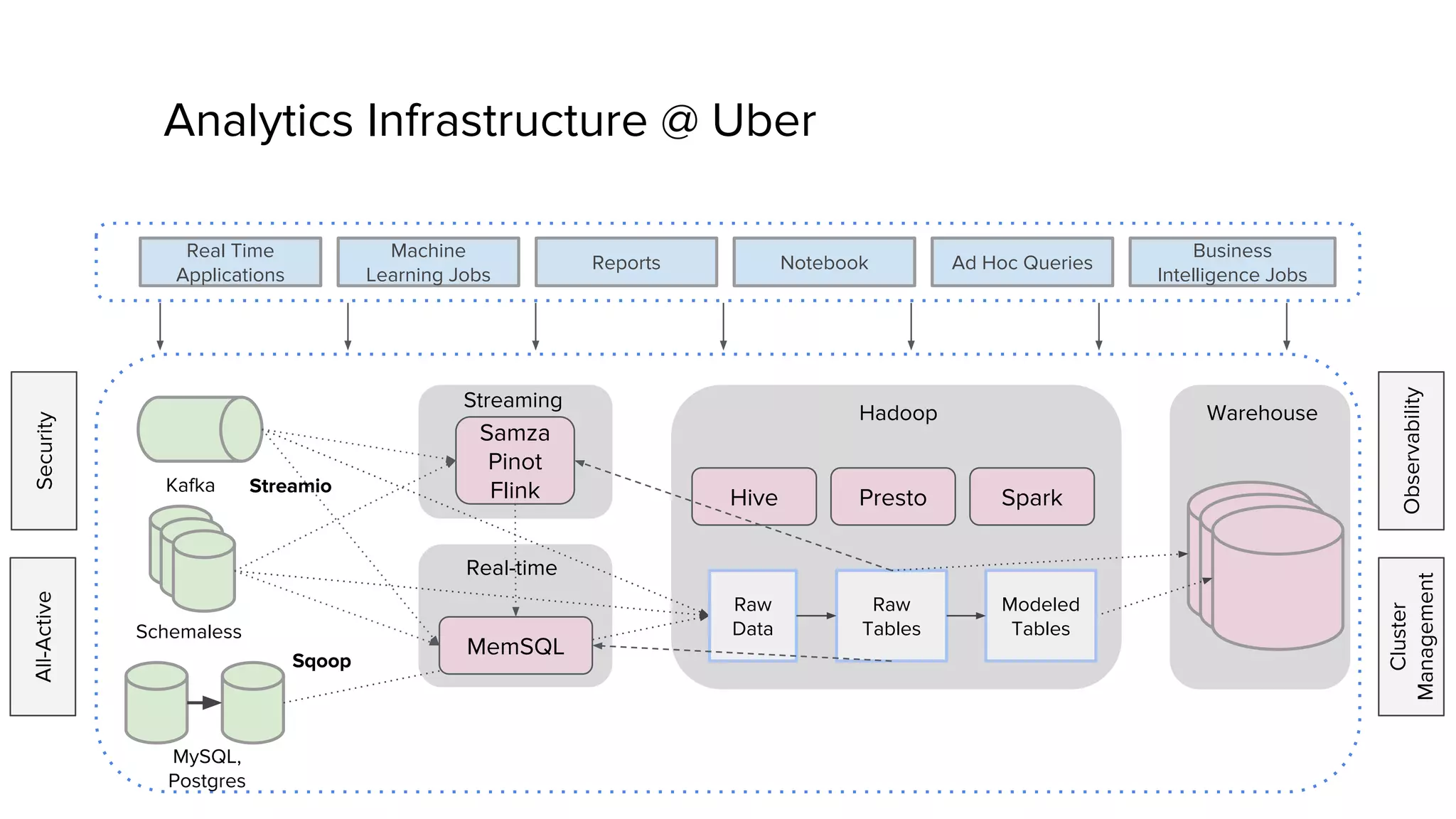

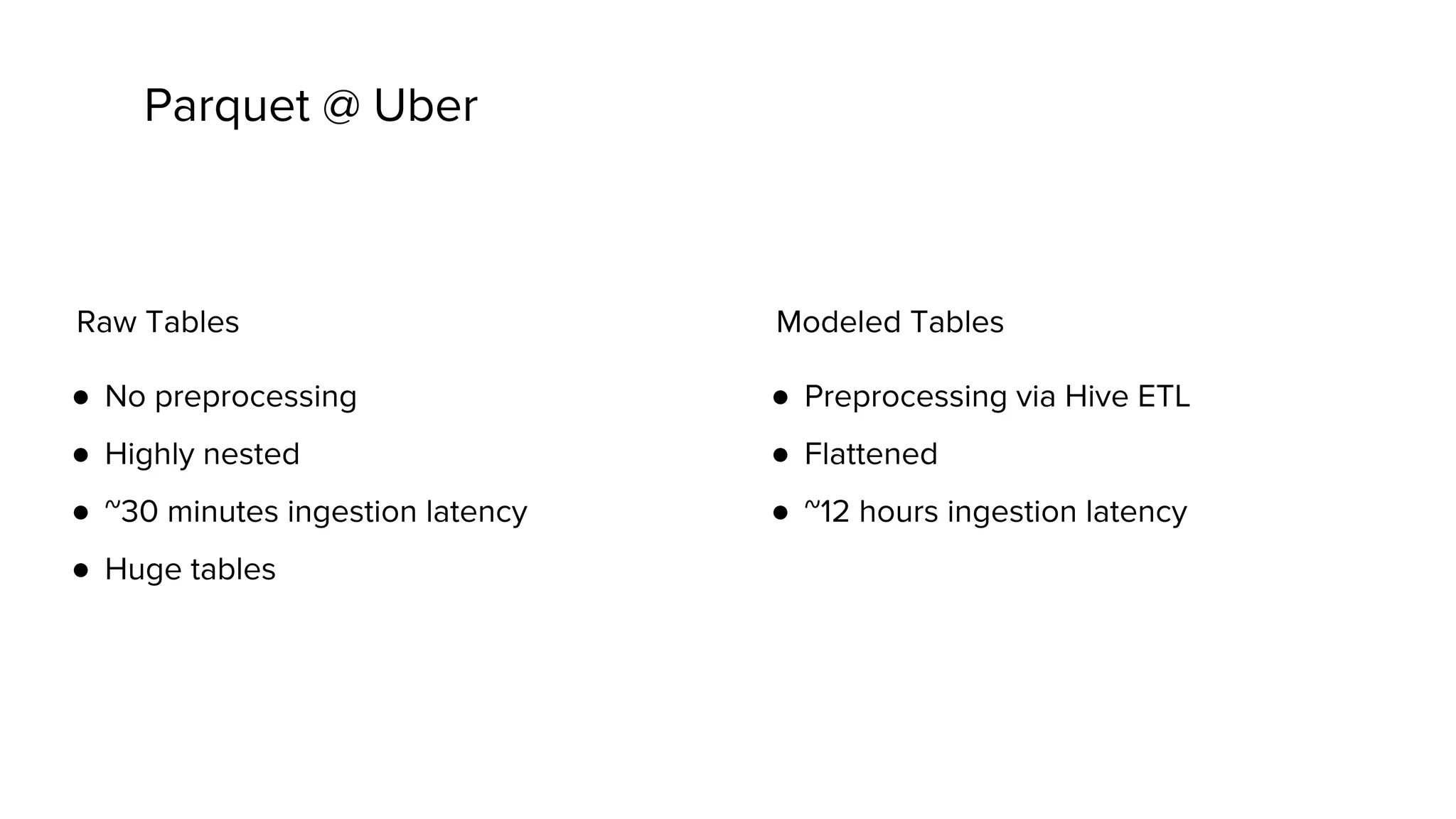

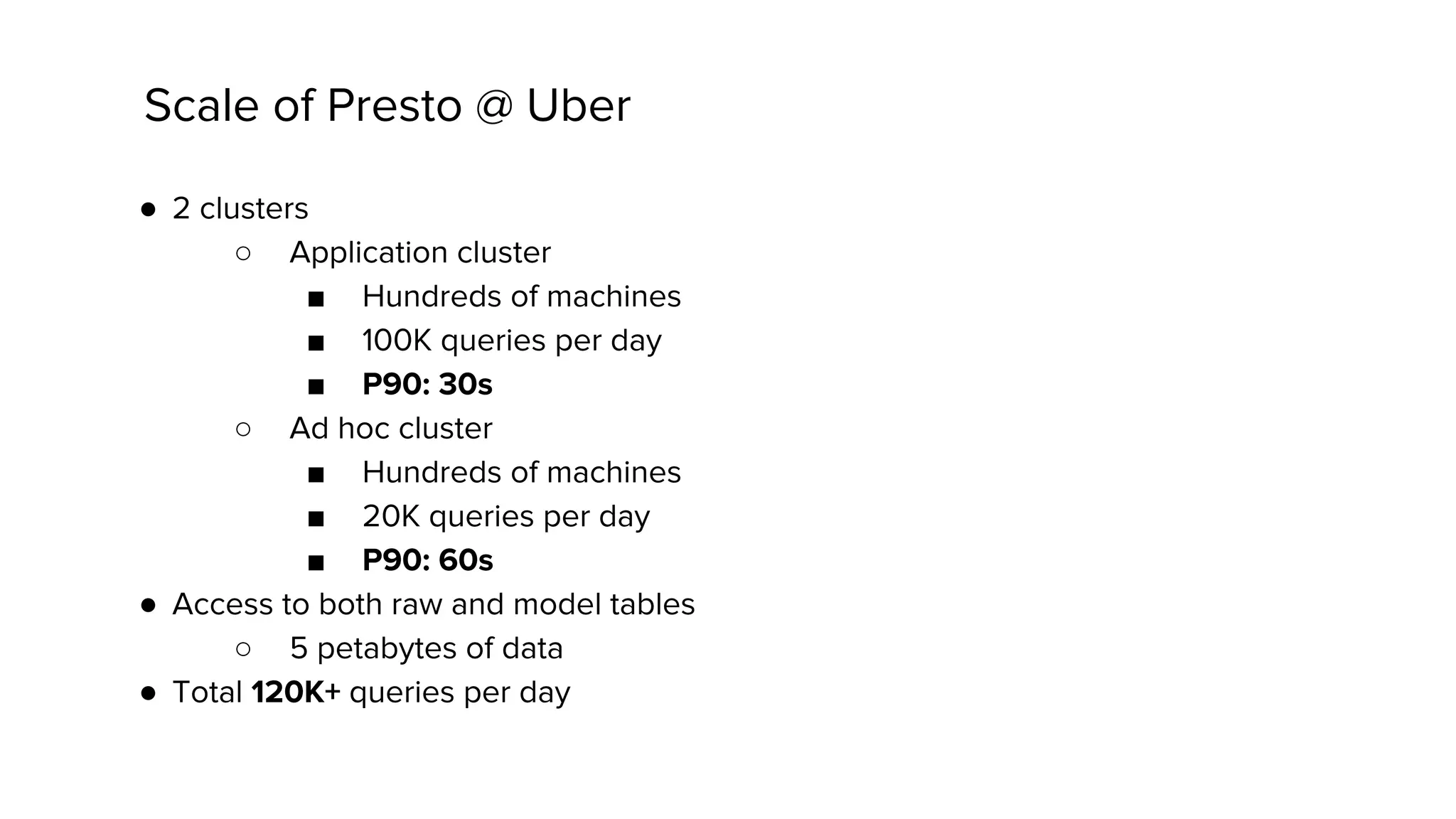

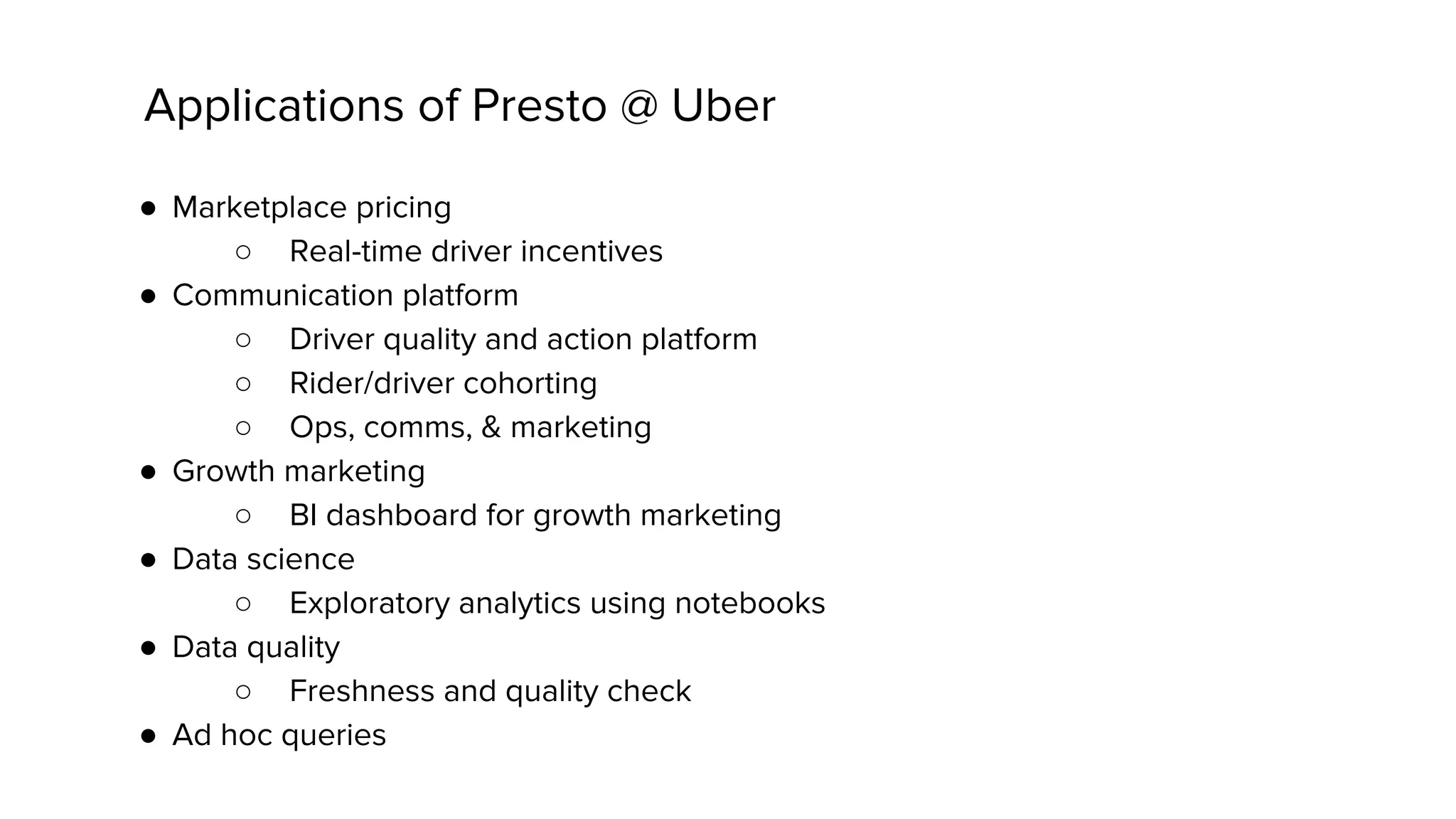

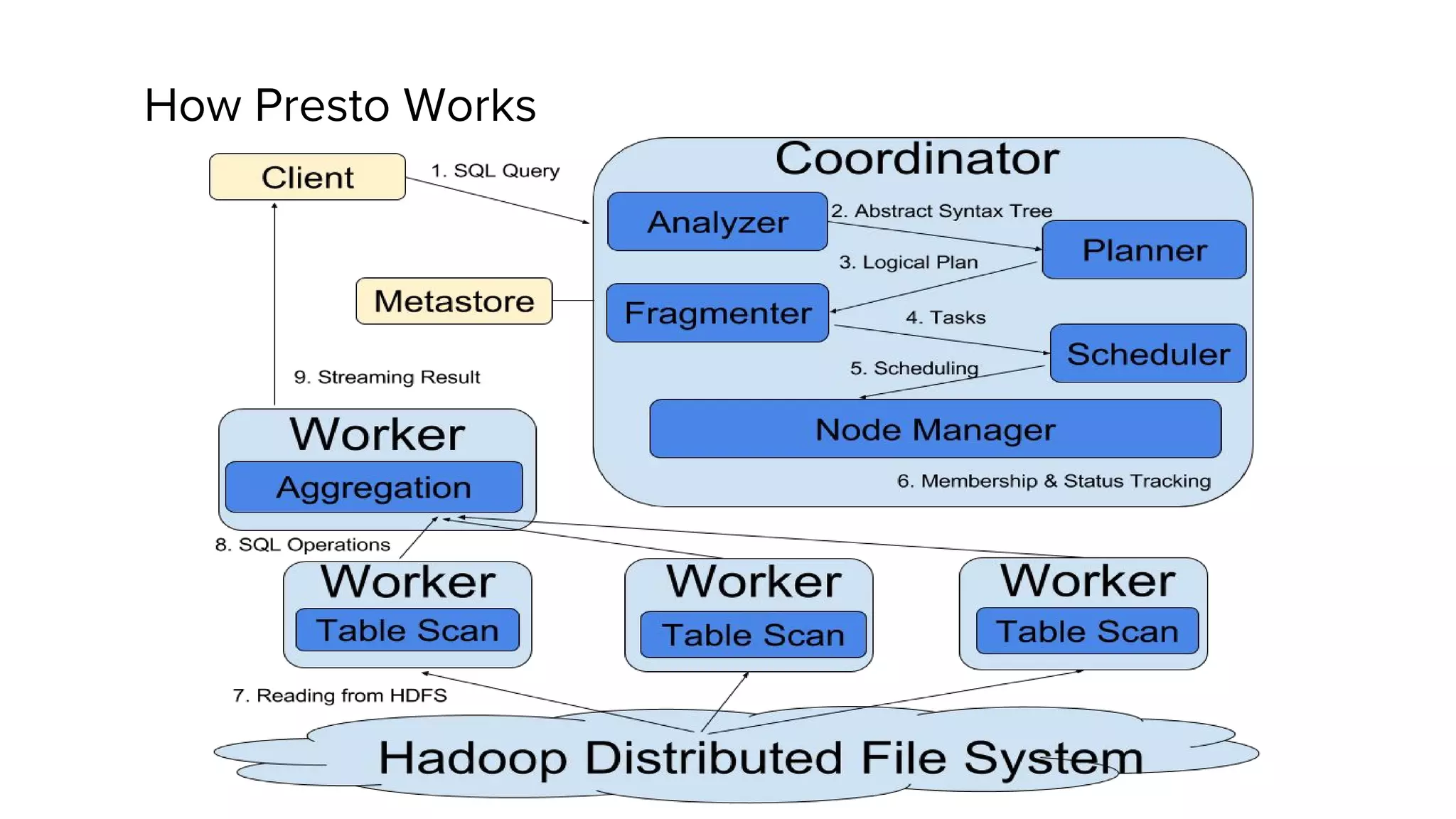

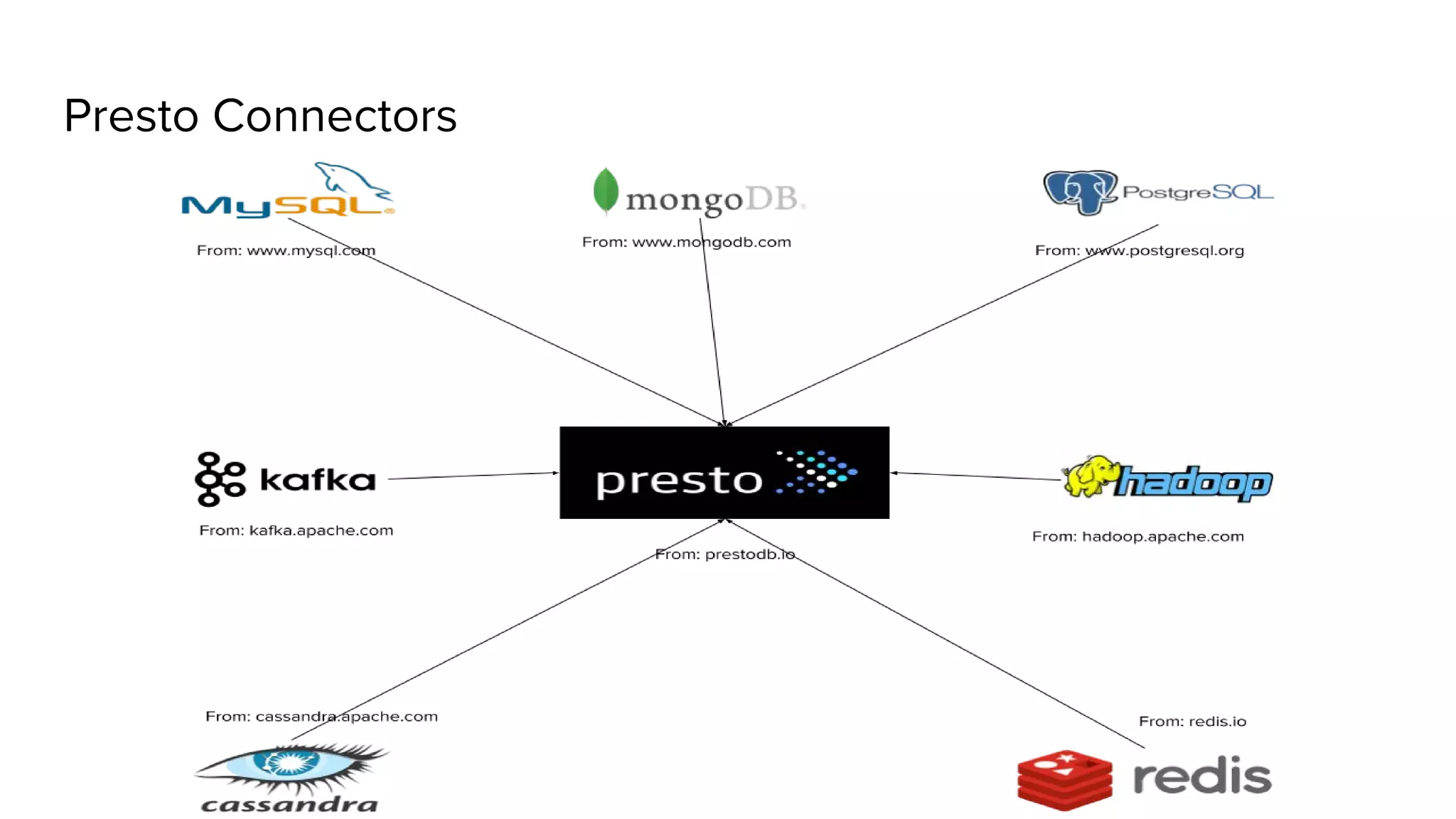

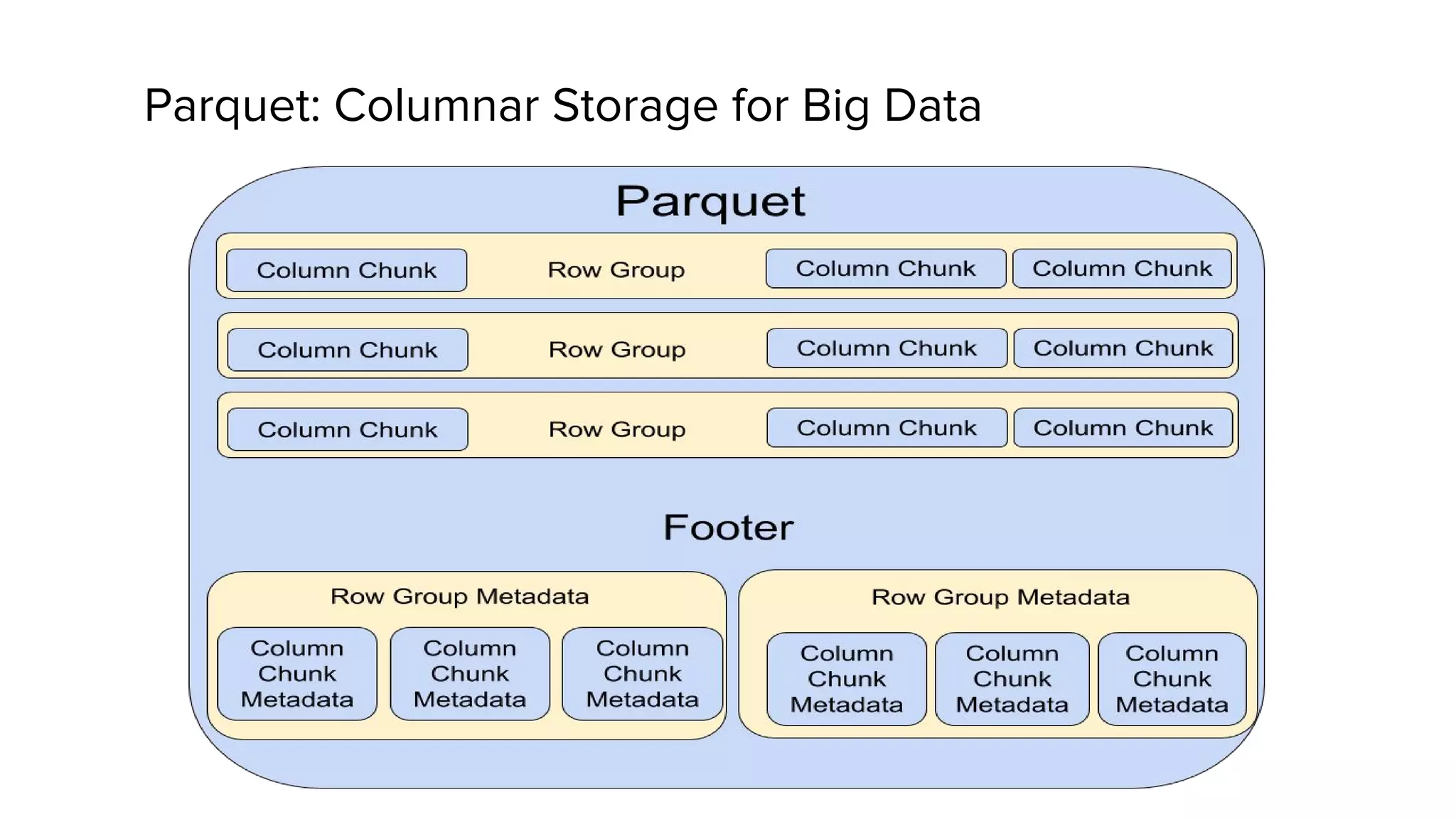

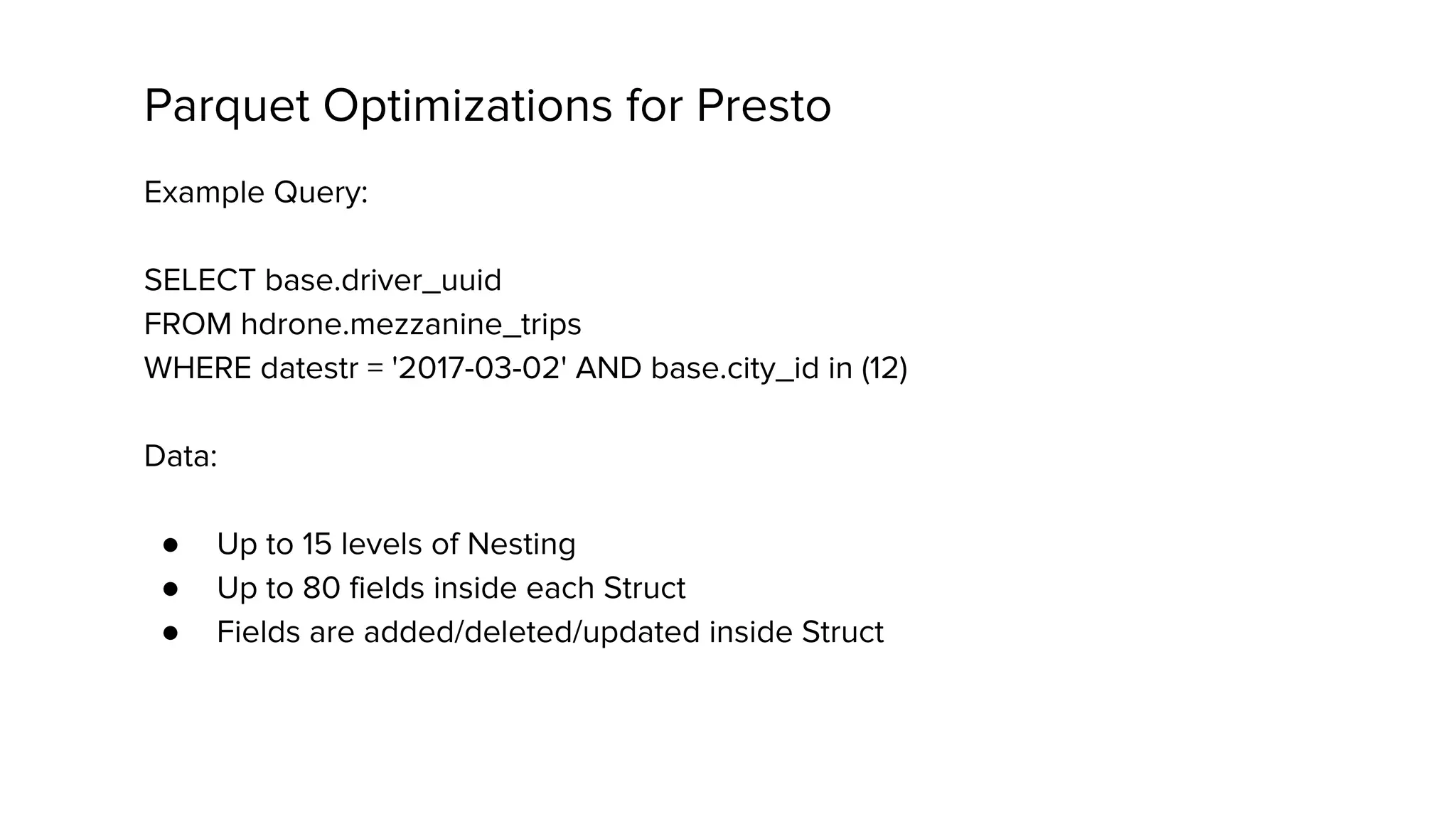

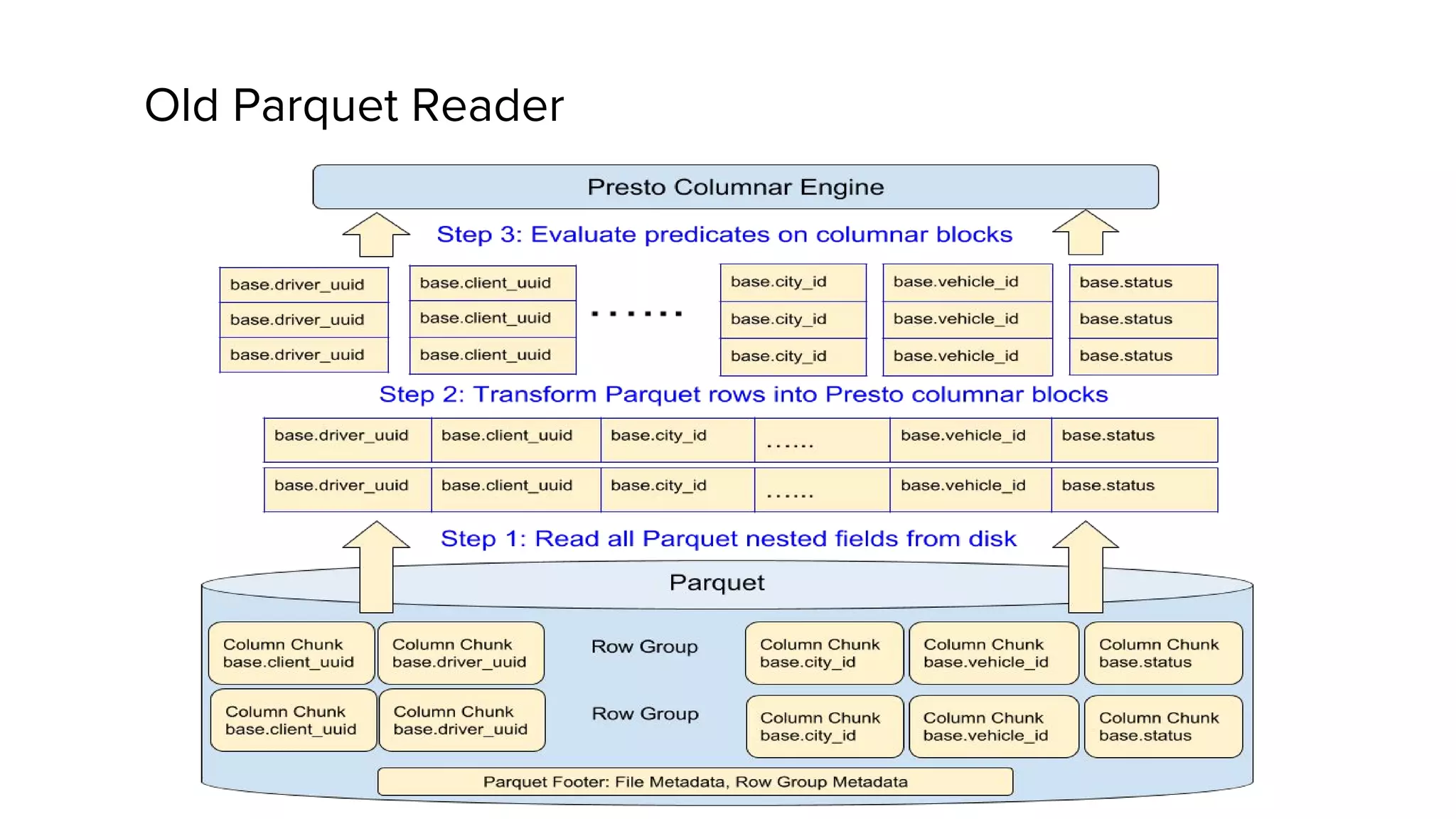

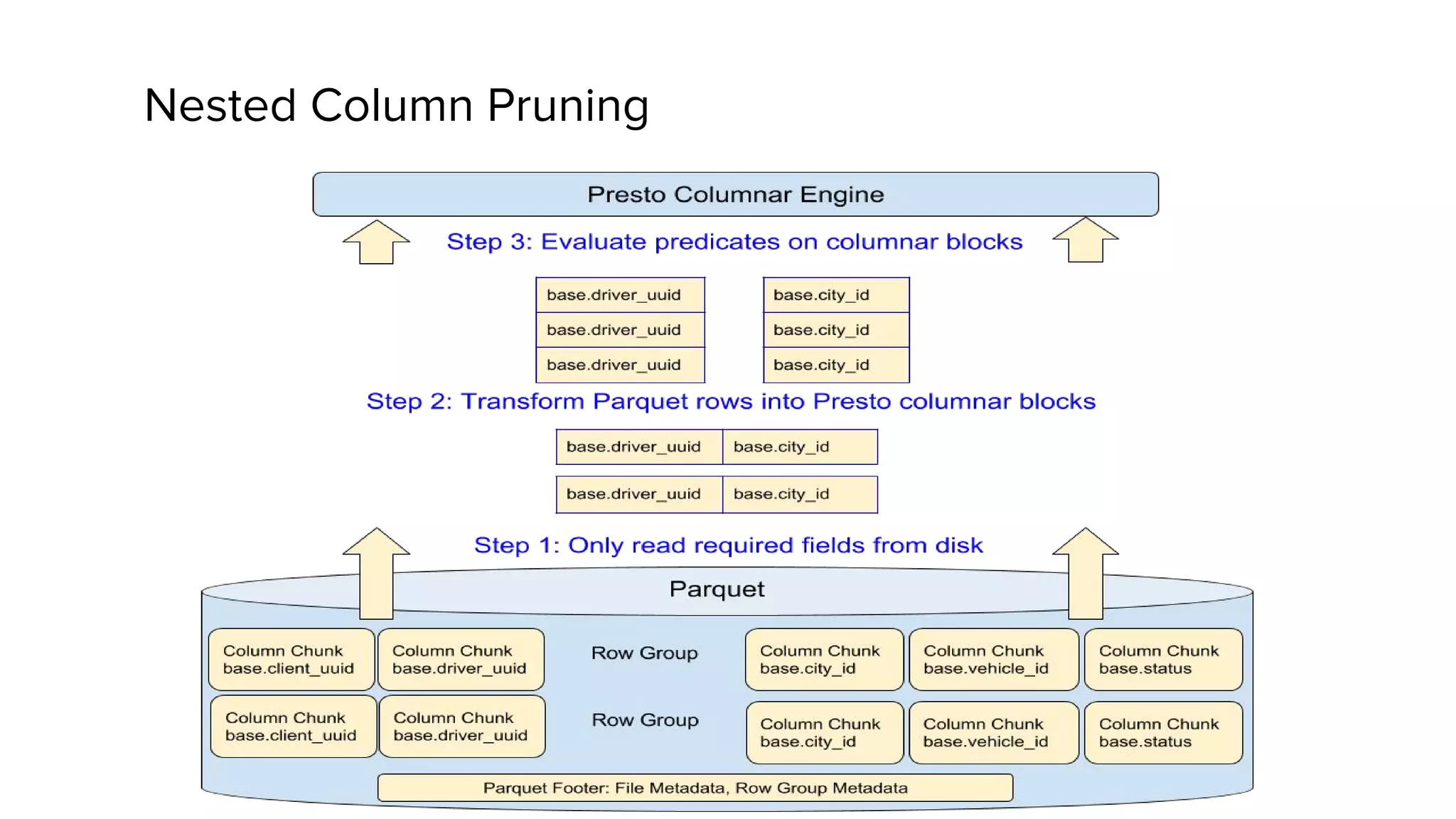

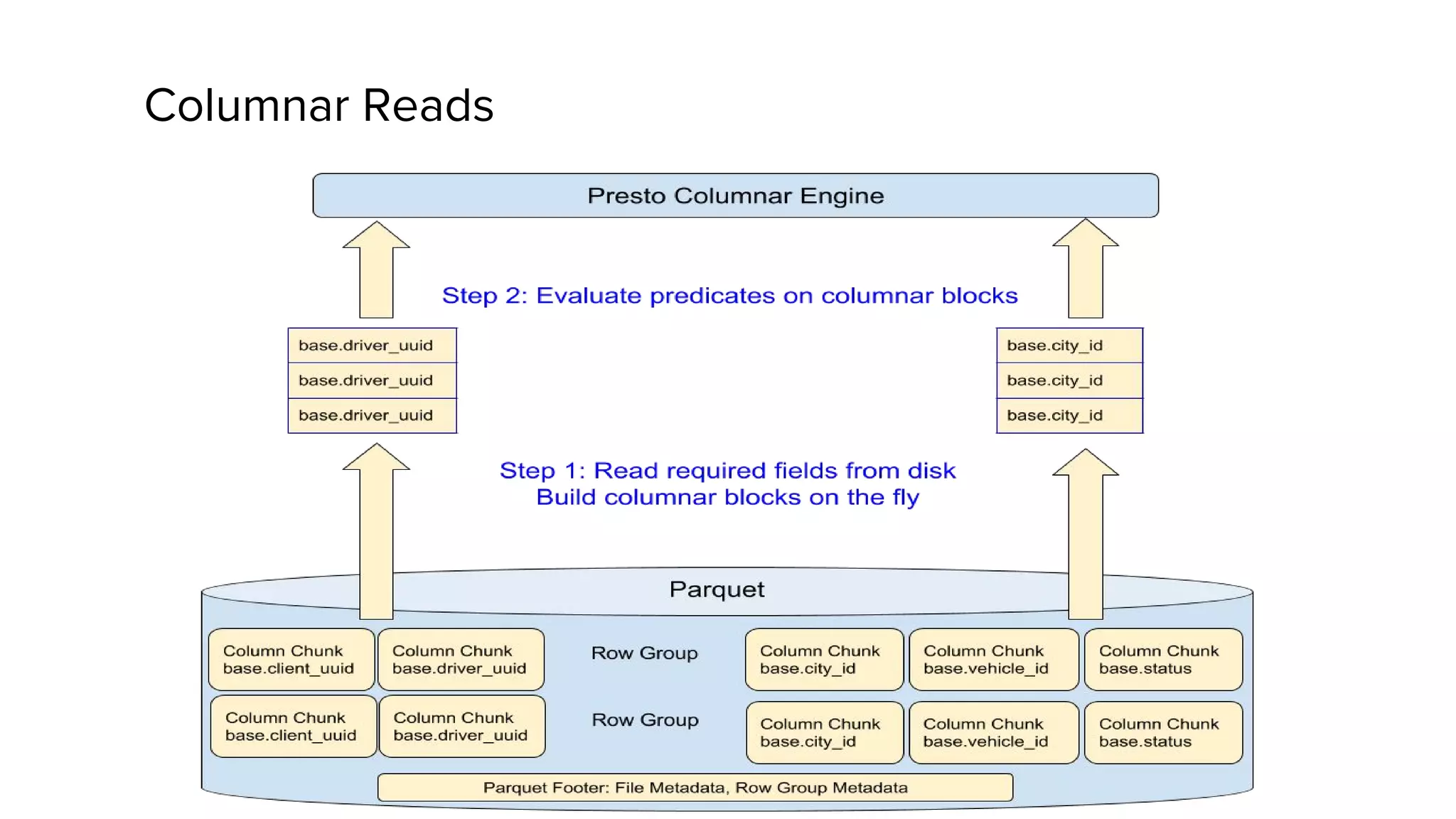

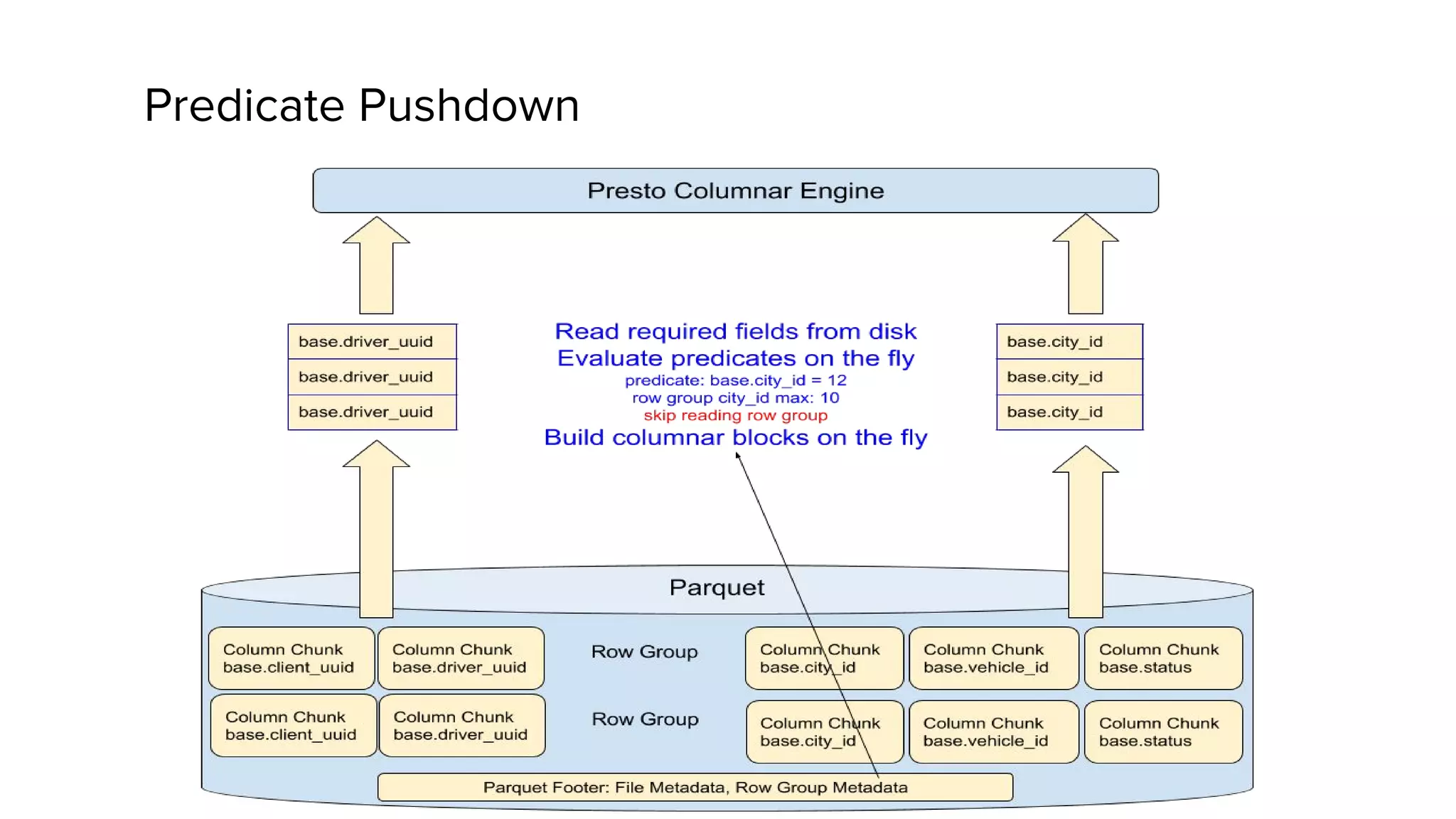

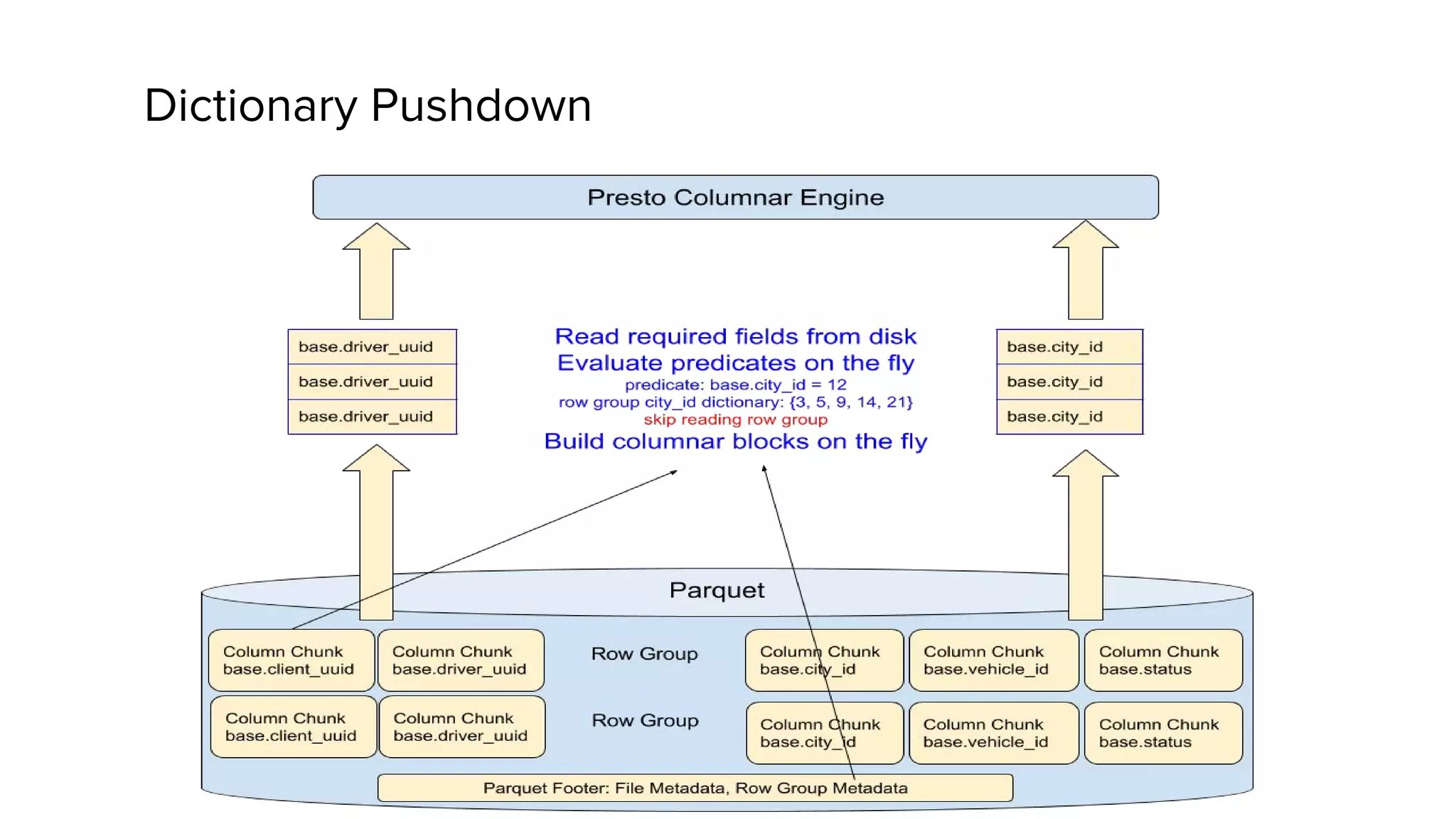

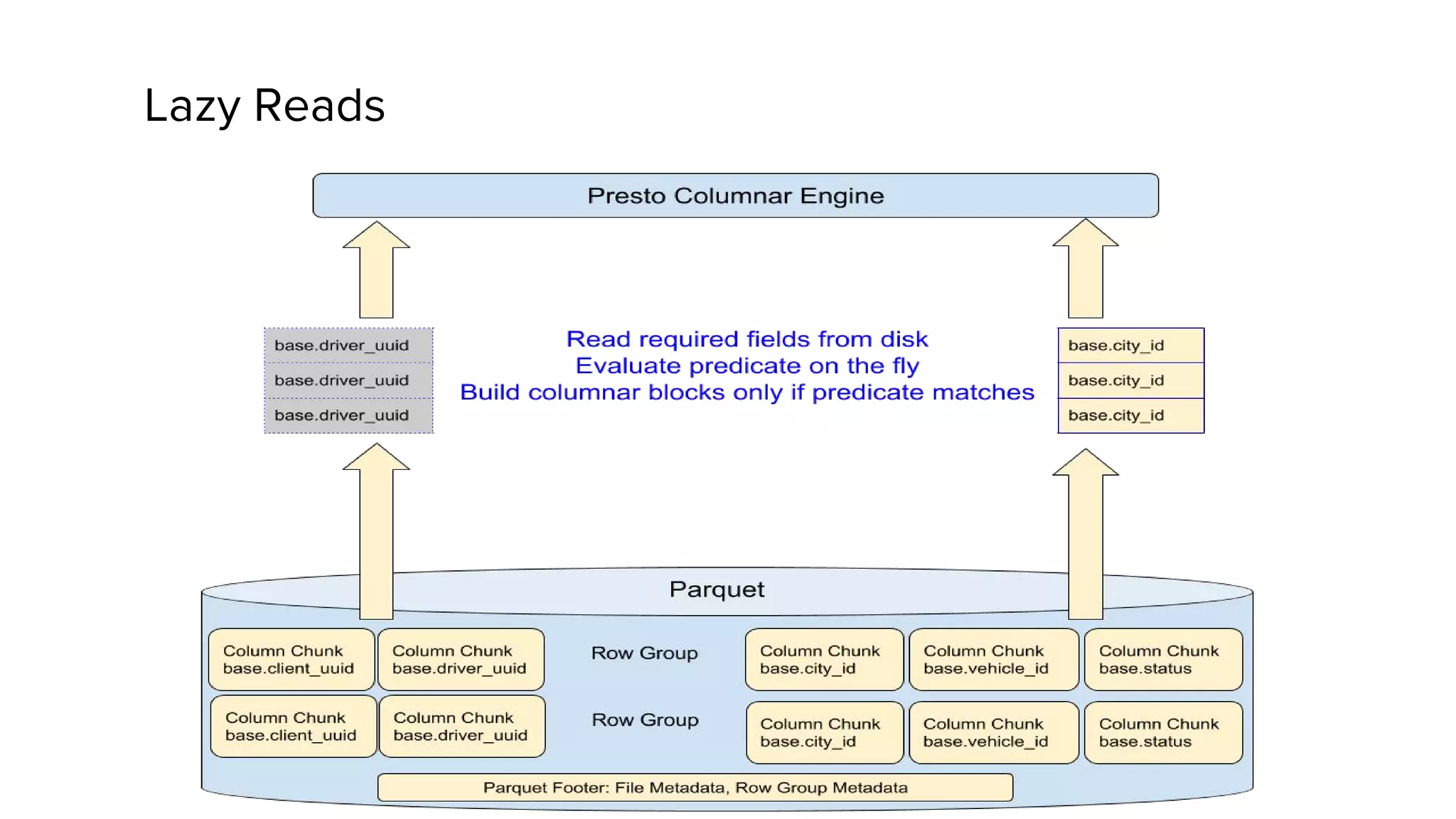

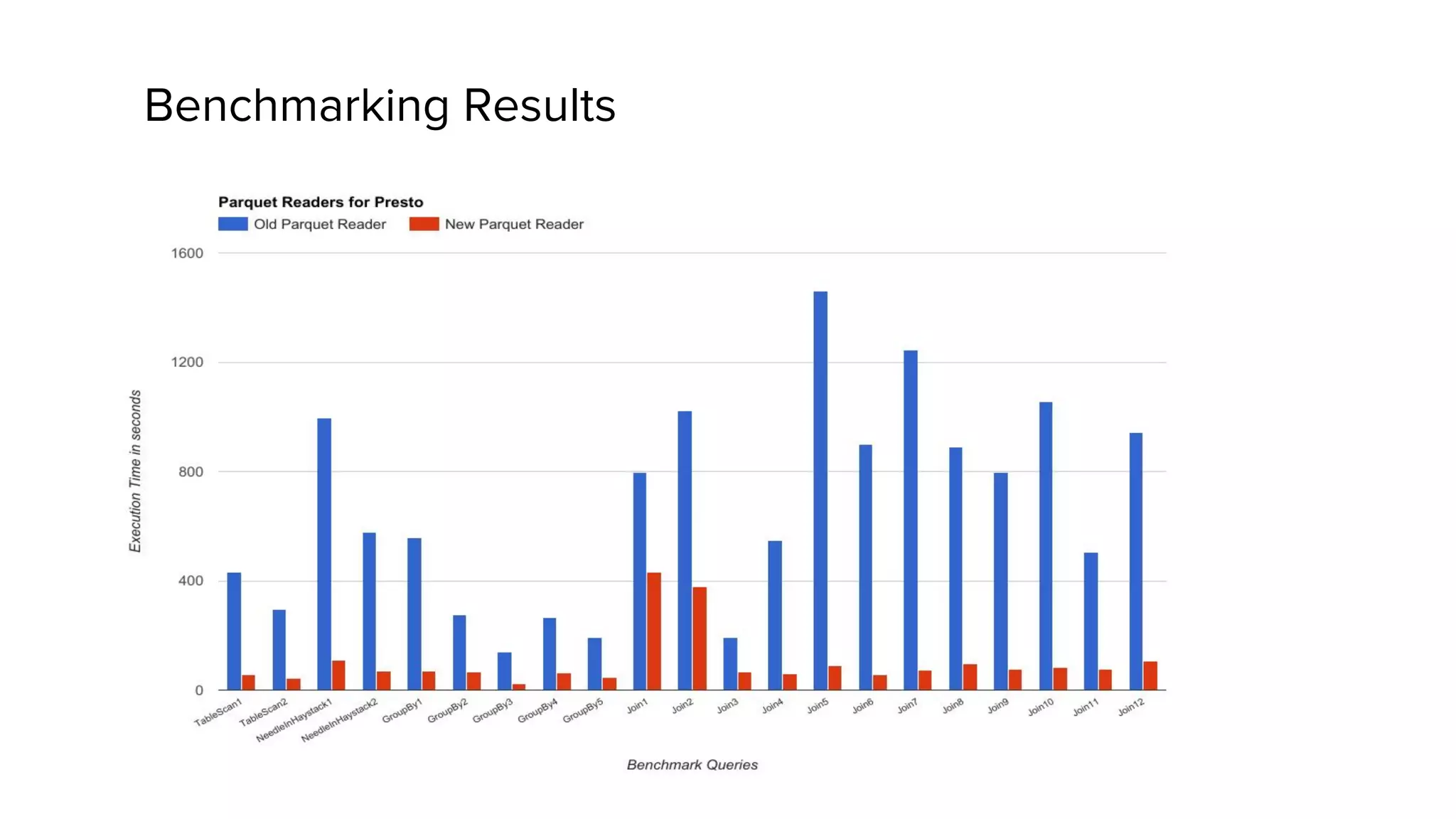

Zhenxiao Luo presented optimizations to Presto that improve its performance when querying Parquet files at Uber. Presto is an interactive SQL query engine that Uber uses to query raw and modeled data stored in Hadoop, and Parquet is a columnar storage format used at Uber. The optimizations to Presto's Parquet reader include nested column pruning, columnar reads, predicate pushdown, dictionary pushdown, and lazy reads. These optimizations improved Presto query performance by up to 20x on Parquet workloads at Uber's scale of petabytes of data and tens of thousands of queries per day.
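
To make three of the listed techniques concrete, here is a minimal pure-Python sketch of predicate pushdown, dictionary pushdown, and lazy reads for an equality filter. It is not Presto's reader and uses no Parquet library. `ColumnChunk`, `make_chunk`, `can_skip`, and `scan` are hypothetical names invented for illustration, and the in-memory layout only approximates how a columnar file keeps per-row-group statistics and dictionaries.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional, Tuple


@dataclass
class ColumnChunk:
    """Hypothetical stand-in for one column's data within a row group."""
    values: List[int]
    min_value: int                              # statistic kept with the chunk
    max_value: int                              # statistic kept with the chunk
    dictionary: Optional[FrozenSet[int]] = None  # set only if dictionary-encoded


def make_chunk(values: List[int], dictionary_encoded: bool = False) -> ColumnChunk:
    # Statistics are computed once at "write" time, so the reader can
    # consult them without decoding the values.
    return ColumnChunk(
        values=values,
        min_value=min(values),
        max_value=max(values),
        dictionary=frozenset(values) if dictionary_encoded else None,
    )


def can_skip(chunk: ColumnChunk, target: int) -> bool:
    """Decide whether a row group can be skipped for `column = target`."""
    # Predicate pushdown: the target lies outside the min/max range.
    if target < chunk.min_value or target > chunk.max_value:
        return True
    # Dictionary pushdown: the target is in range but not in the dictionary.
    if chunk.dictionary is not None and target not in chunk.dictionary:
        return True
    return False


def scan(
    row_groups: List[Tuple[ColumnChunk, List[str]]], target: int
) -> Tuple[List[str], int]:
    """Return the matching payload values and how many row groups were read."""
    matches: List[str] = []
    groups_read = 0
    for filter_chunk, payload in row_groups:
        if can_skip(filter_chunk, target):
            continue
        groups_read += 1
        # Lazy read: the payload column is touched only for rows that match.
        for i, value in enumerate(filter_chunk.values):
            if value == target:
                matches.append(payload[i])
    return matches, groups_read
```

With three row groups whose filter columns hold `[1, 2, 3]`, `[10, 20, 30]` (dictionary-encoded), and `[5, 15, 25]` (dictionary-encoded), a filter on `15` skips the first group by statistics and the second by dictionary, so only the third is decoded.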