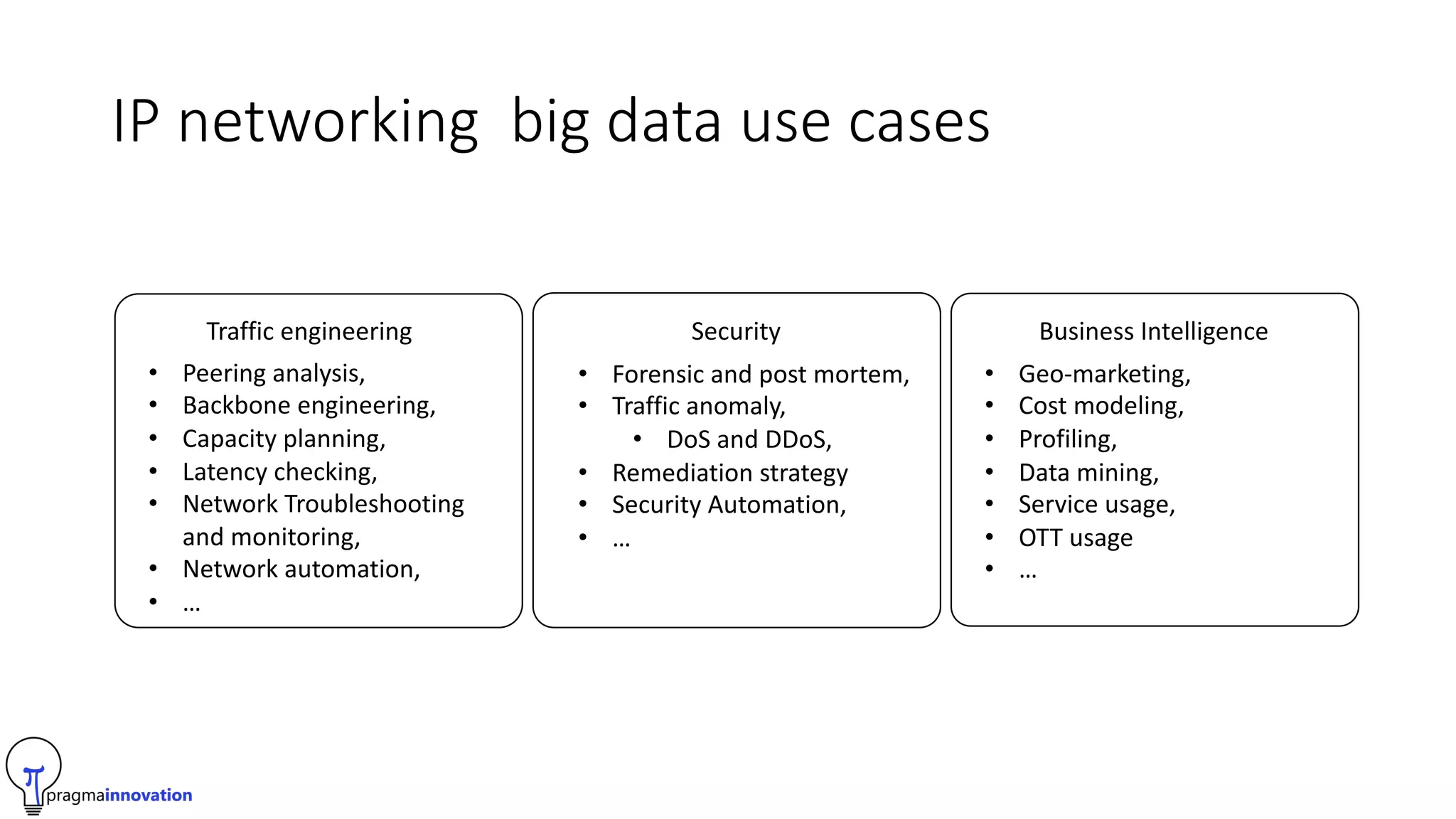

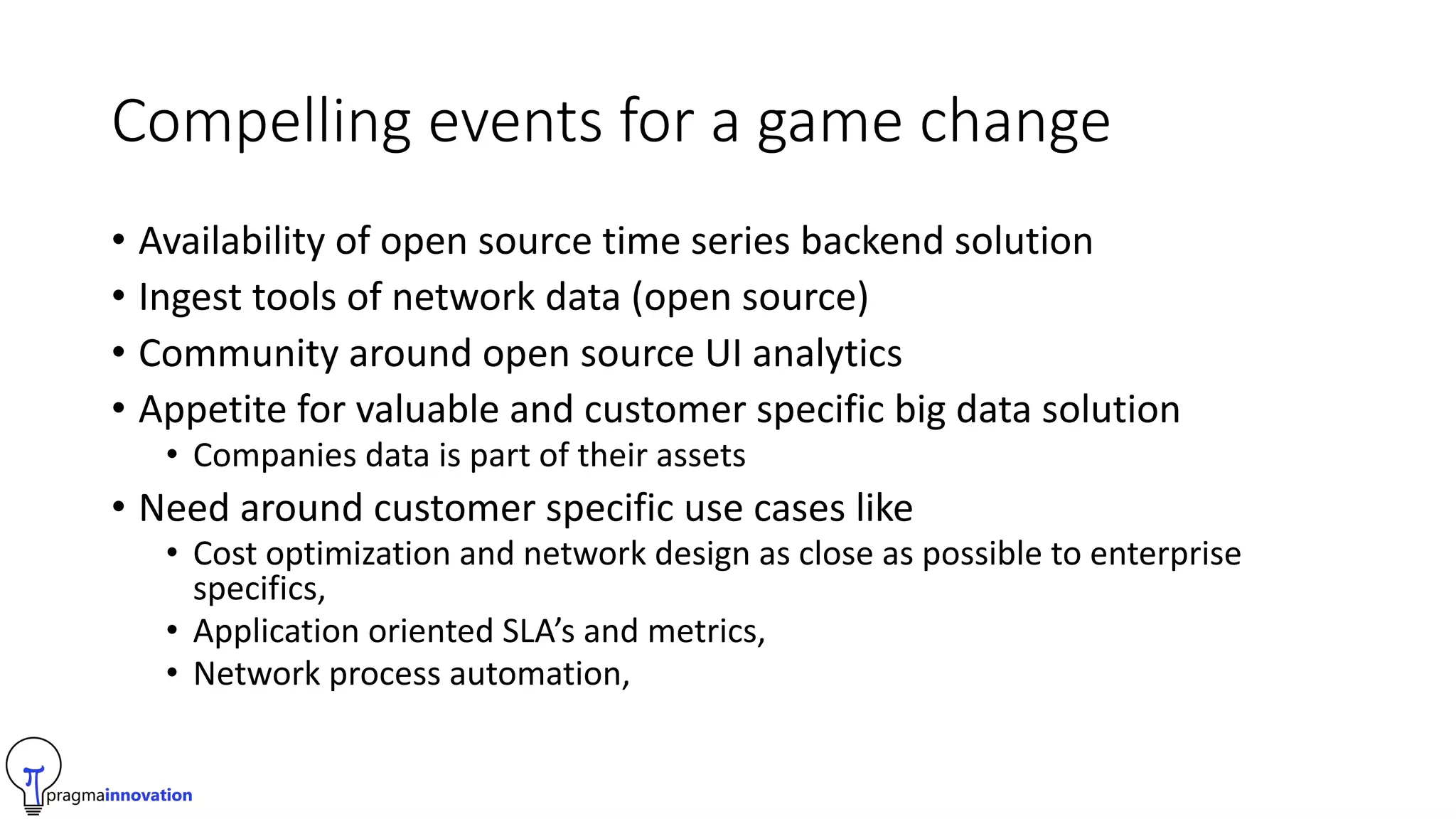

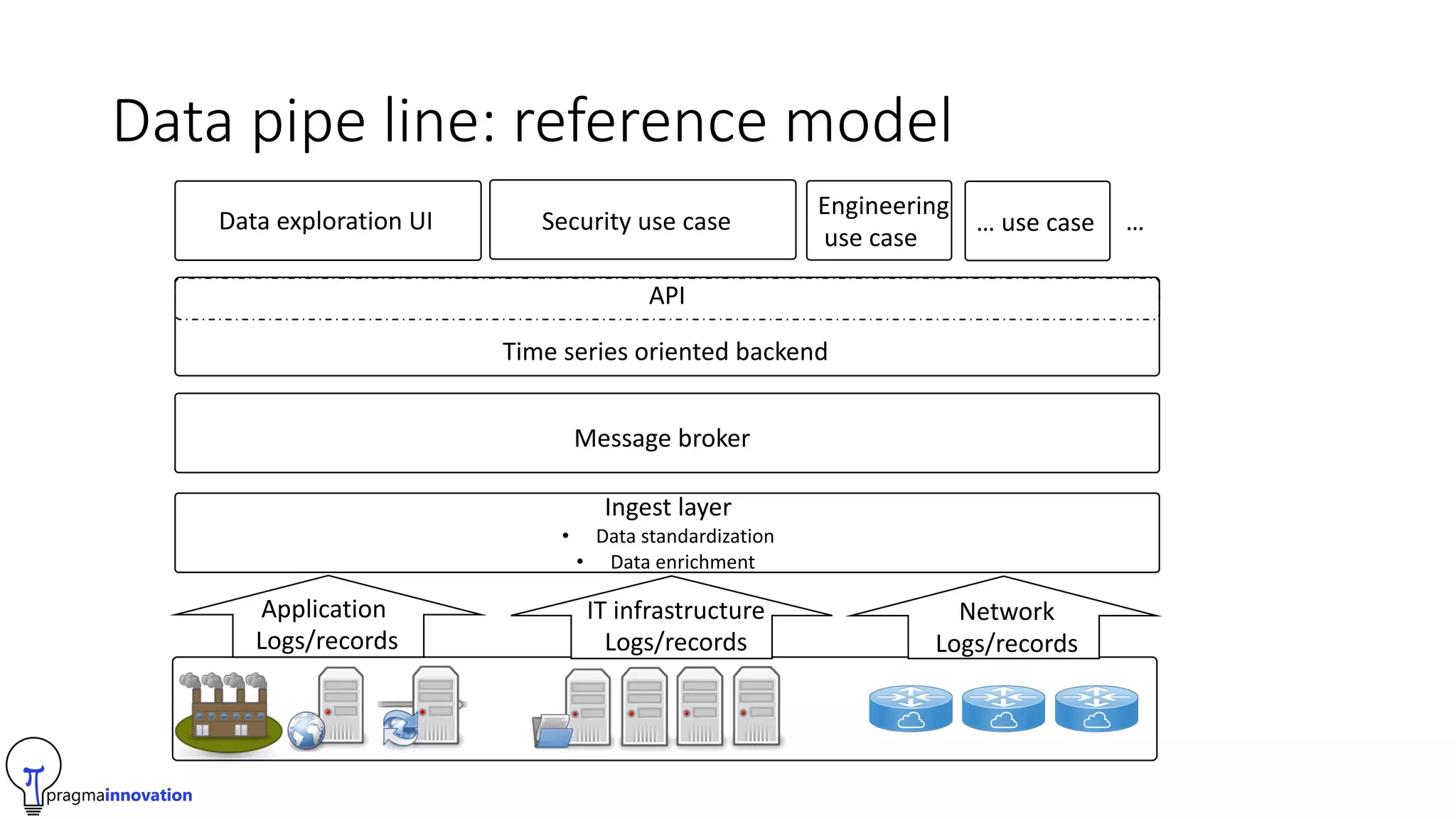

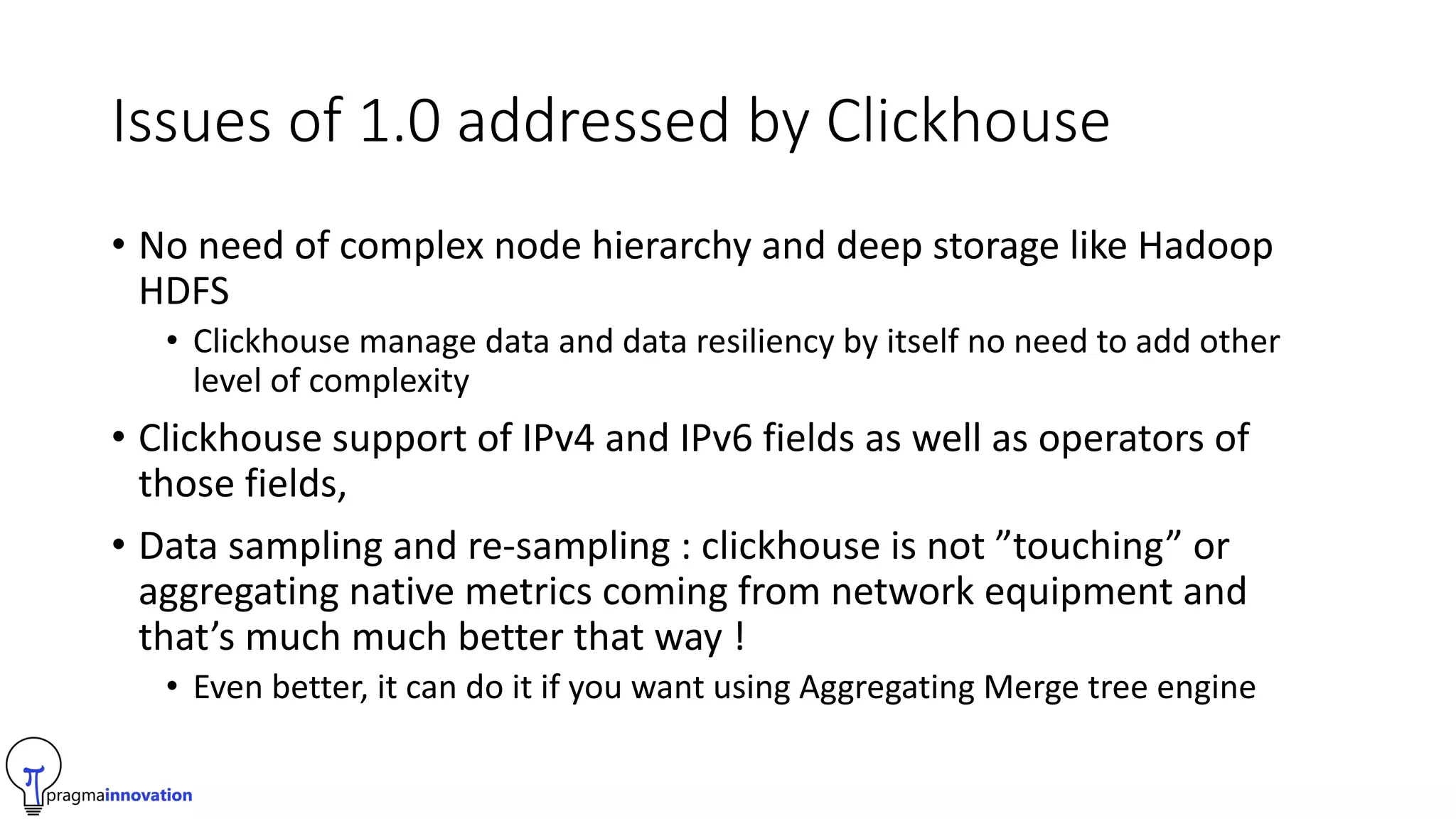

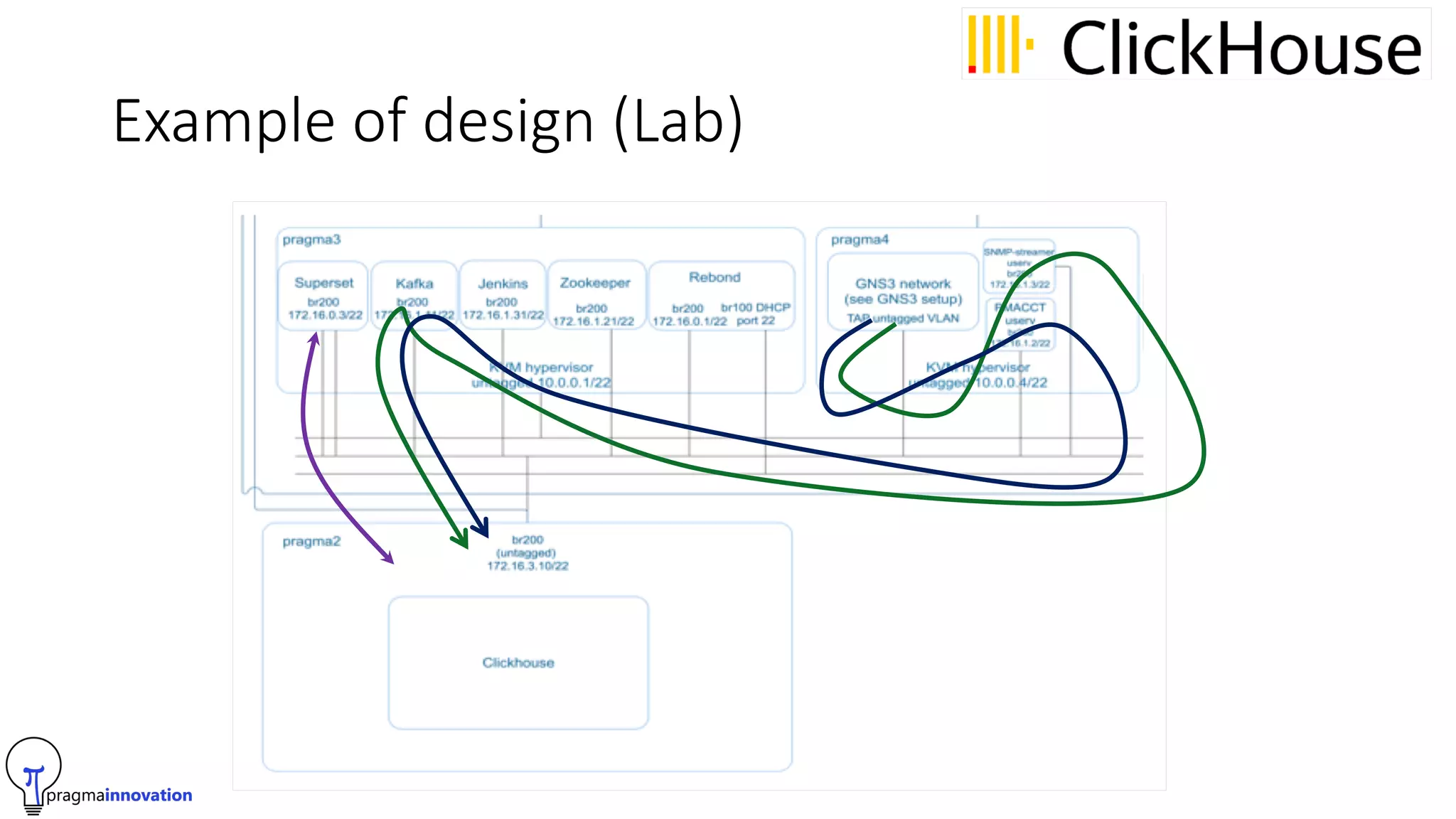

Pragma Innovation is an IT services company focused on time series data solutions. Its PASS (Pragma Analytics Software Suite) lets companies analyze, report on, and make decisions from time series network data using open-source software. It is designed for ISPs, hosting providers, and telecom companies. The solution ingests network and log data, standardizes it, enriches it using tools like GeoIP, and stores it in a time series database. Customers can then build applications on top of that data for traffic engineering, security, and business intelligence use cases. Version 2.0 of the solution addresses data sampling and IPv4/IPv6 support, and uses ClickHouse as the time series database for its performance and simplicity.
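
The standardize-and-enrich step described above can be illustrated with a minimal Python sketch. This is not PASS code: the record fields, the `FlowRow` shape, the `GEO_TABLE` lookup (a stand-in for a real GeoIP database), and the choice to scale byte counts by the sampling rate are all assumptions made for illustration. It only shows how a raw record might be turned into a uniform row that handles both IPv4 and IPv6 before being written to a time series database.

```python
import ipaddress
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical prefix-to-country table standing in for a real GeoIP database.
# The prefixes are documentation ranges, not real allocations.
GEO_TABLE = {
    ipaddress.ip_network("192.0.2.0/24"): "FR",
    ipaddress.ip_network("2001:db8::/32"): "DE",
}


@dataclass
class FlowRow:
    """Assumed shape of one standardized, enriched row."""
    ts: datetime
    src_ip: str
    ip_version: int
    country: str
    bytes_estimated: int


def lookup_country(addr):
    """Return the country code for addr, or 'ZZ' when no prefix matches."""
    for net, country in GEO_TABLE.items():
        if addr.version == net.version and addr in net:
            return country
    return "ZZ"


def normalize(raw, sampling_rate):
    """Standardize one raw record and enrich it with a country code.

    Assumption: the exporter samples 1 packet in `sampling_rate`, so the
    observed byte count is multiplied by that rate to estimate real traffic.
    """
    addr = ipaddress.ip_address(raw["src"])  # accepts IPv4 and IPv6 text
    return FlowRow(
        ts=datetime.fromtimestamp(raw["ts"], tz=timezone.utc),
        src_ip=addr.compressed,  # canonical short form for either version
        ip_version=addr.version,
        country=lookup_country(addr),
        bytes_estimated=raw["bytes"] * sampling_rate,
    )


if __name__ == "__main__":
    sample = {"ts": 0, "src": "2001:0db8:0000:0000:0000:0000:0000:0001", "bytes": 1500}
    print(normalize(sample, sampling_rate=1000))
```

Rows in this form could then be batched and inserted into the database. Storing the IP version alongside the canonical address is one simple way to let queries treat IPv4 and IPv6 traffic uniformly or separately.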