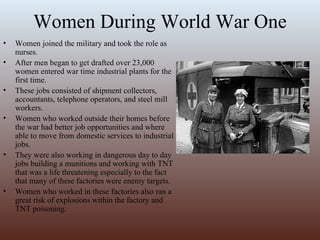

Women's roles expanded significantly during the early 20th century. During World War I, many women took jobs in factories and as nurses, often for the first time, while men were deployed. After the war, women continued to work outside the home in greater numbers. This strengthened demands for better wages and for women's suffrage. In 1920, the 19th Amendment was ratified, guaranteeing women the right to vote. During the 1920s, women gained further rights, and cultural changes challenged traditional views of women's roles, as new fashions and activities made women's lives less domestic and more focused on social life and individual expression.