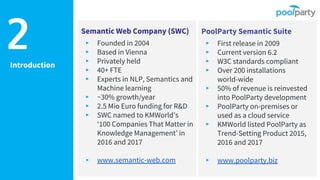

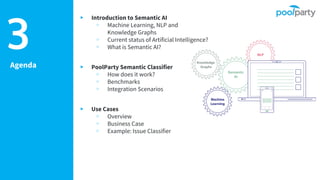

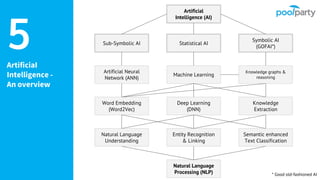

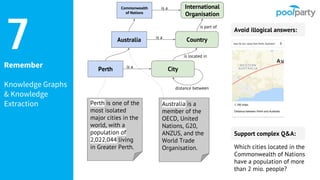

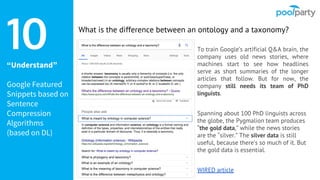

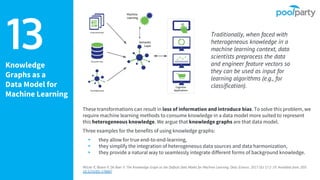

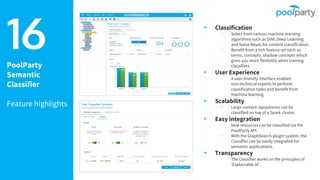

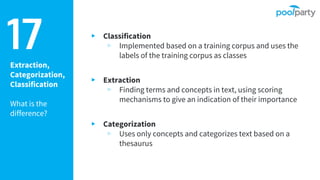

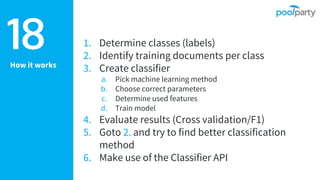

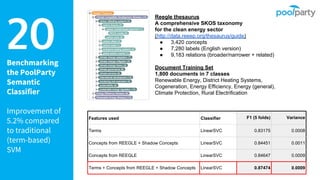

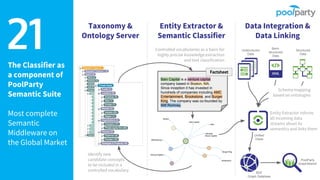

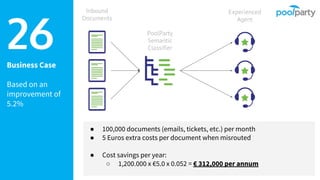

The document provides an overview of Semantic Web Company and its PoolParty Semantic Suite, which integrates machine learning, natural language processing (NLP), and knowledge graphs. It outlines the PoolParty Semantic Classifier, covering its features, performance benchmarks, and use cases such as news classification and sentiment analysis. It also discusses the relationship between artificial intelligence and semantic models and examines the business case for more efficient data processing.