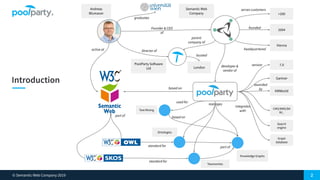

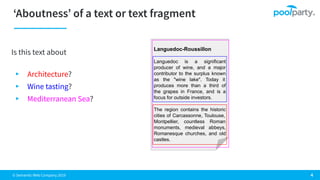

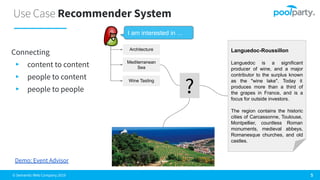

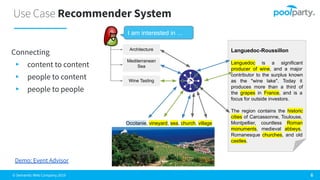

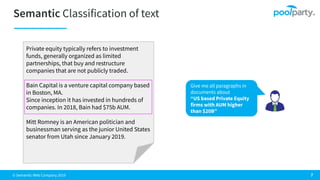

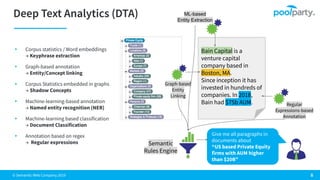

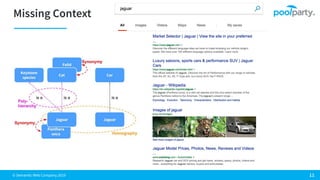

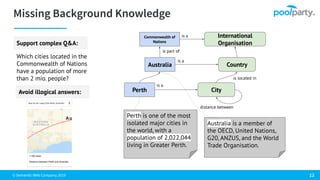

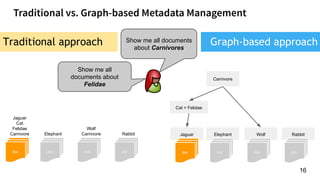

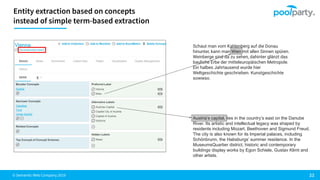

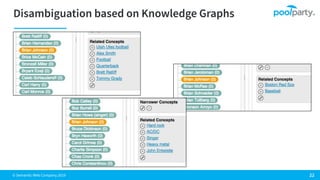

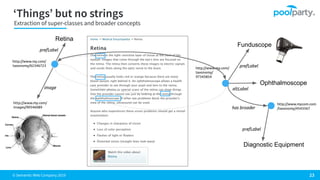

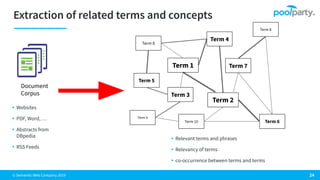

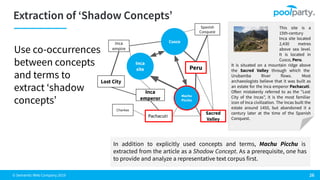

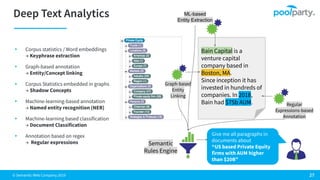

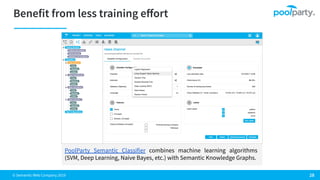

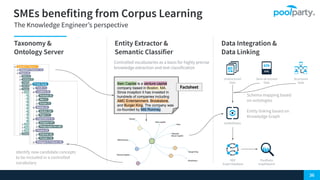

The document discusses the Semantic Web Company and its co-founder, Andreas Blumauer, highlighting software solutions such as PoolParty for knowledge graphs and text analytics. It covers topics such as private equity (particularly Bain Capital), wine production in Languedoc-Roussillon, and various applications of a recommender system that connects content and users. It also addresses challenges in text mining and semantic analysis, and offers insights into deep text analytics and machine learning techniques.