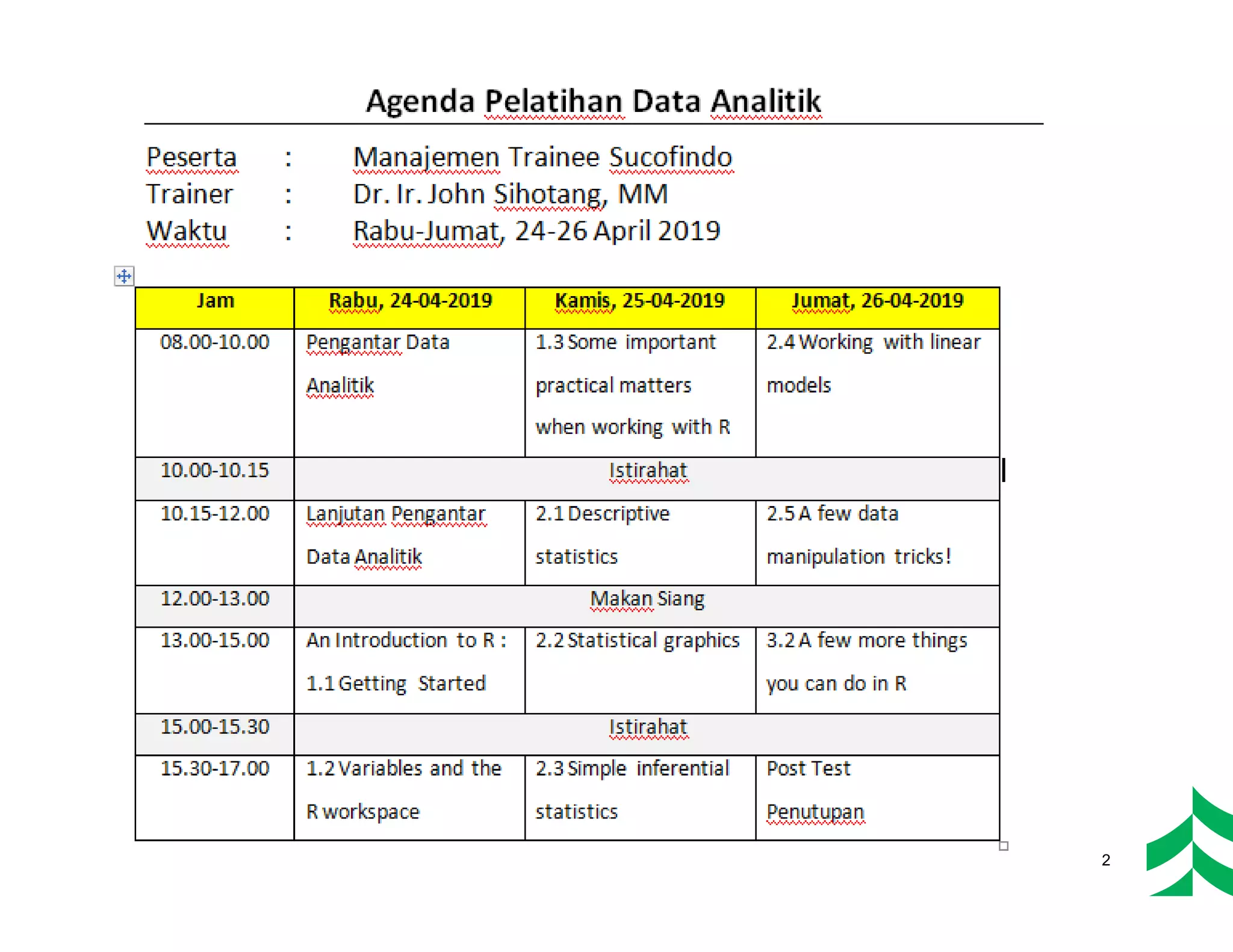

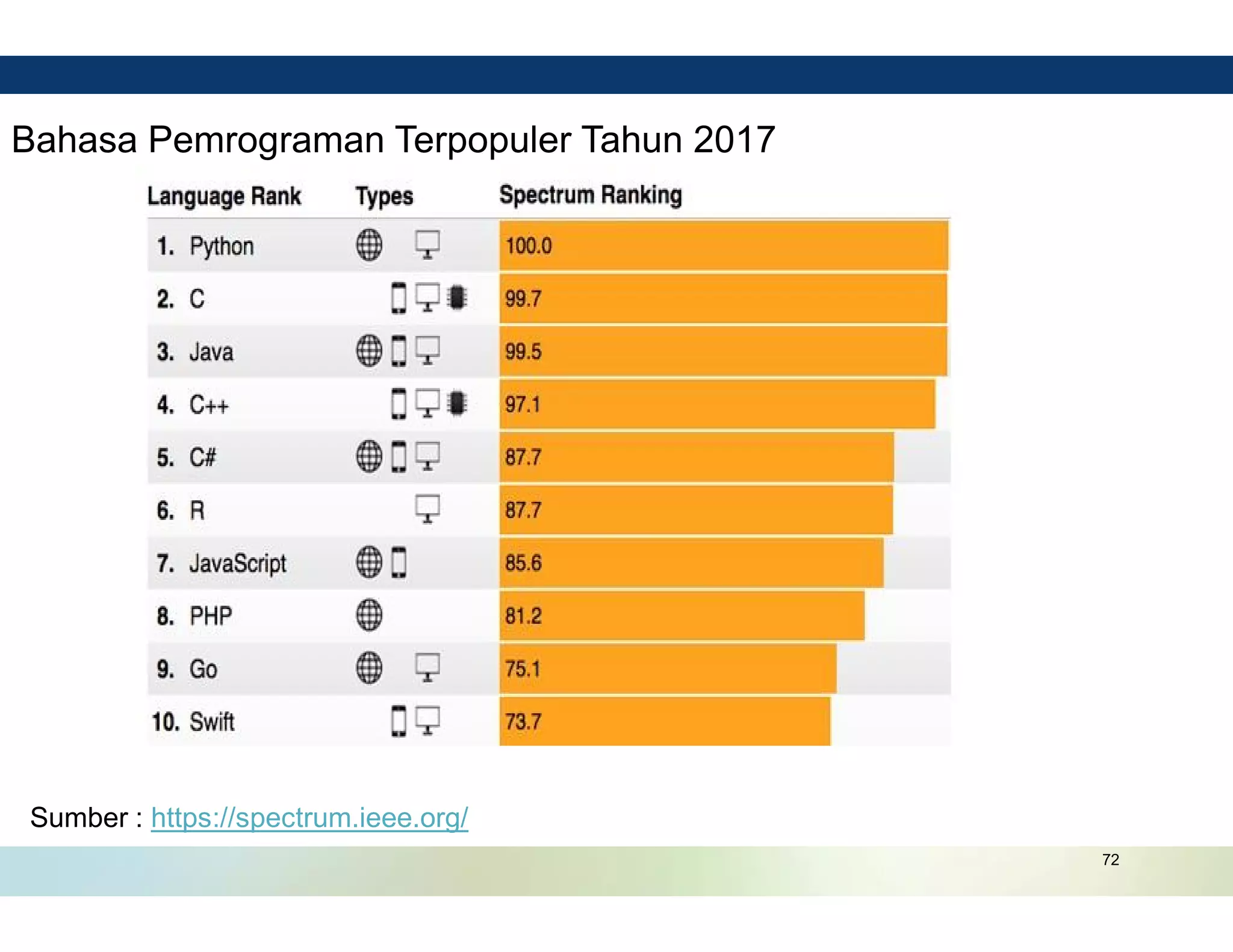

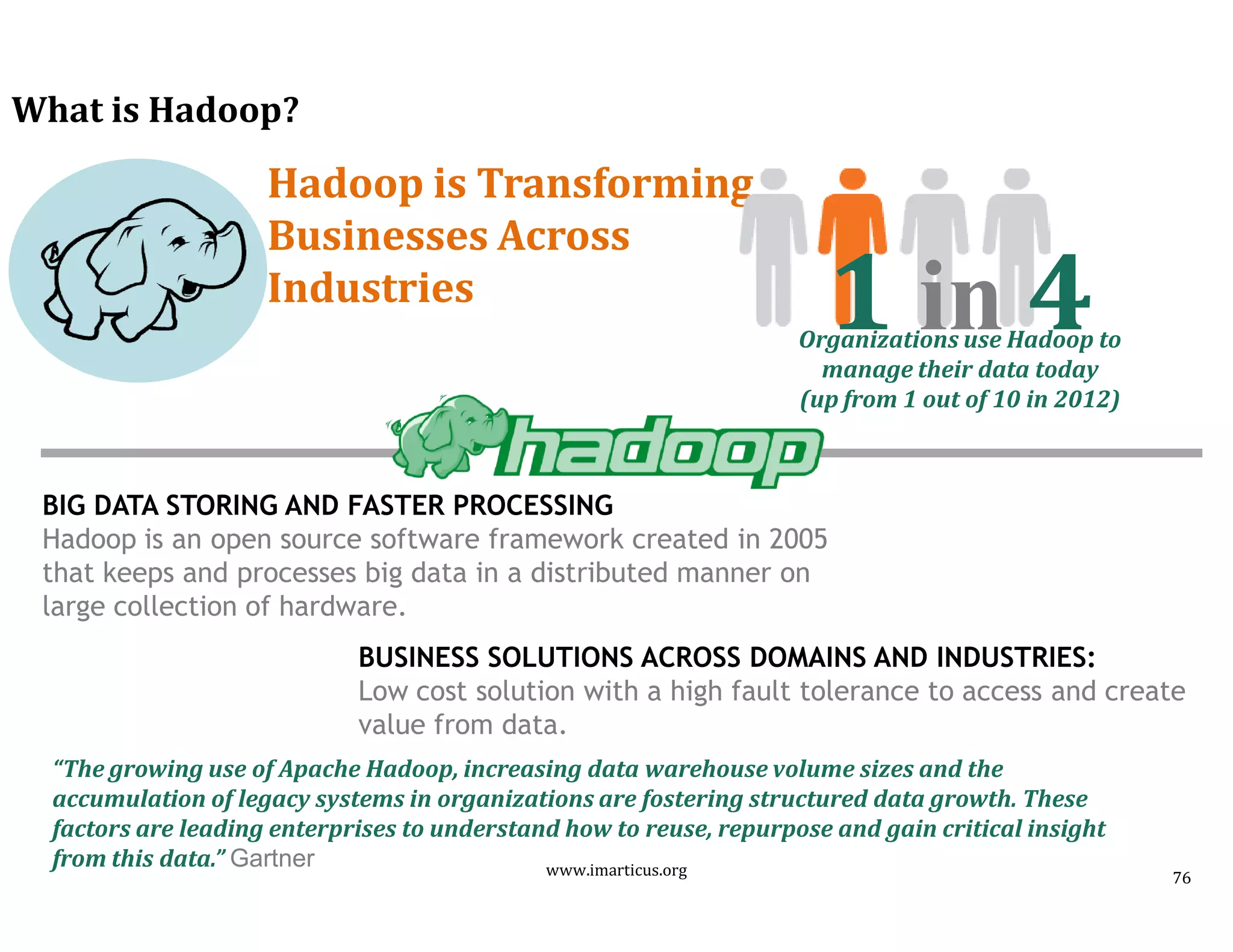

The document provides an overview of a three-day data analytics training program held in Jakarta, Indonesia, from April 24 to 26, 2019. It outlines the topics covered: a big data overview, data for business analysis, data analytics concepts, and data analytics tools. The training was led by Dr. Ir. John Sihotang and was aimed at management trainees at Sucofindo.