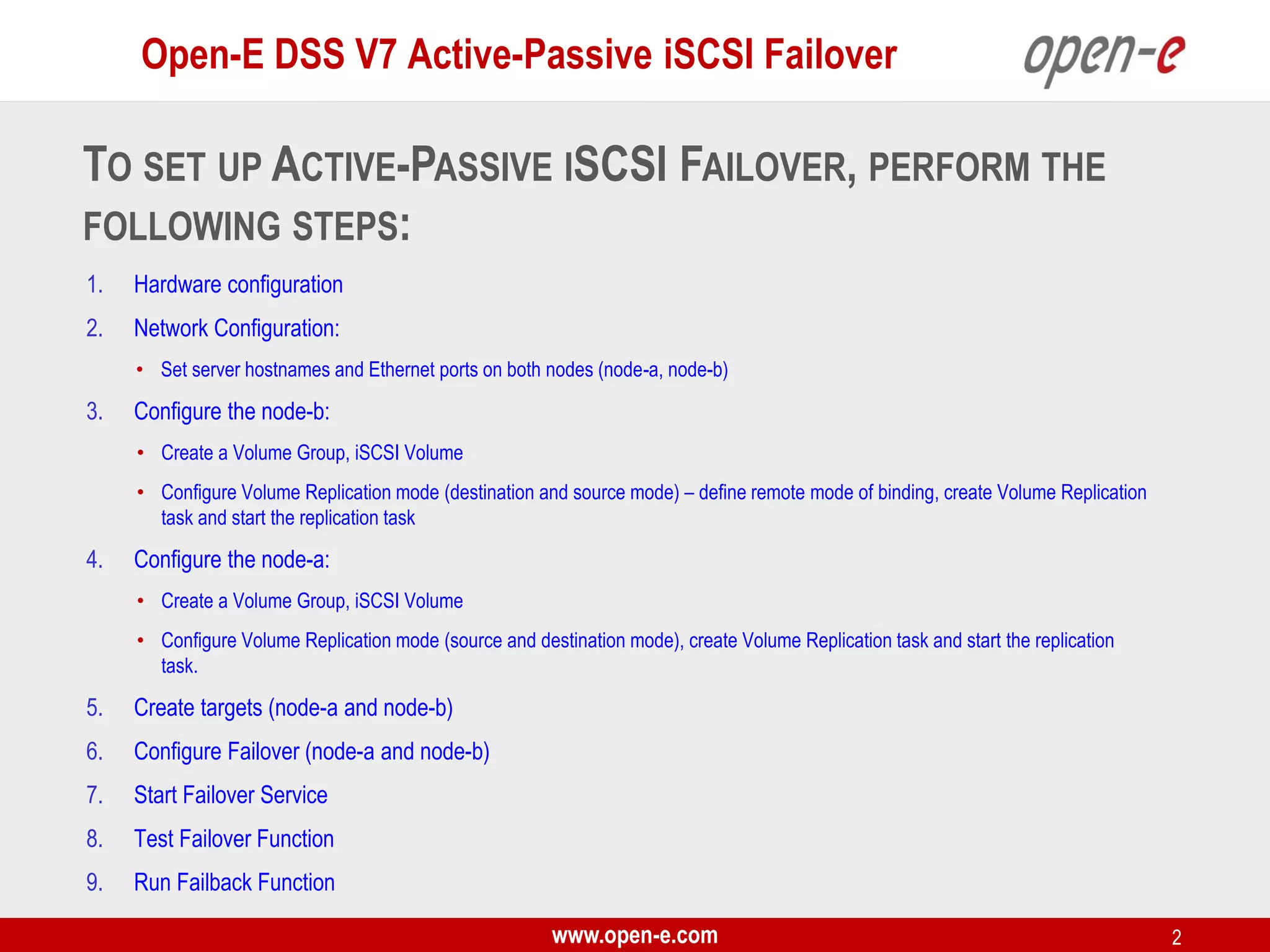

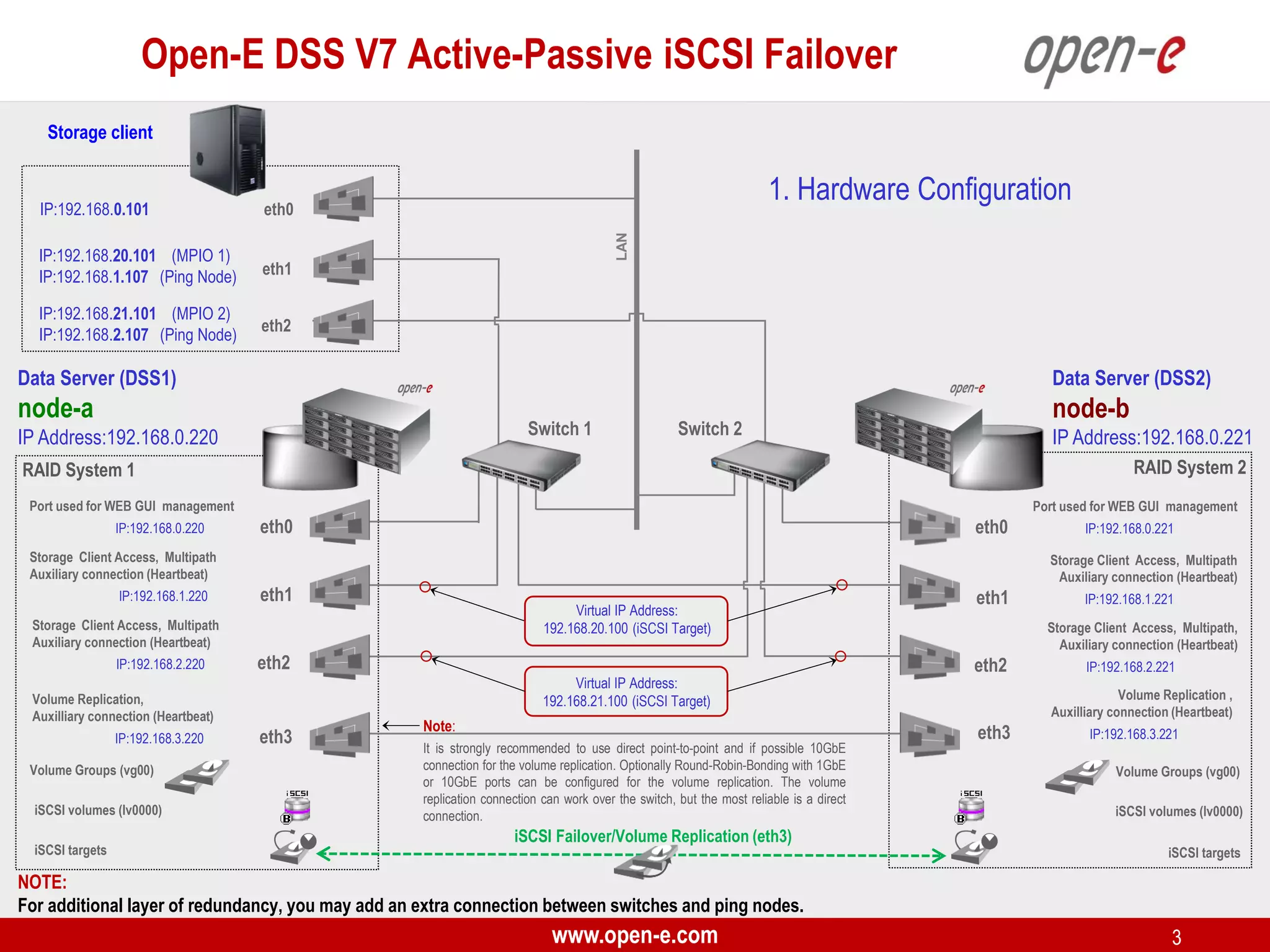

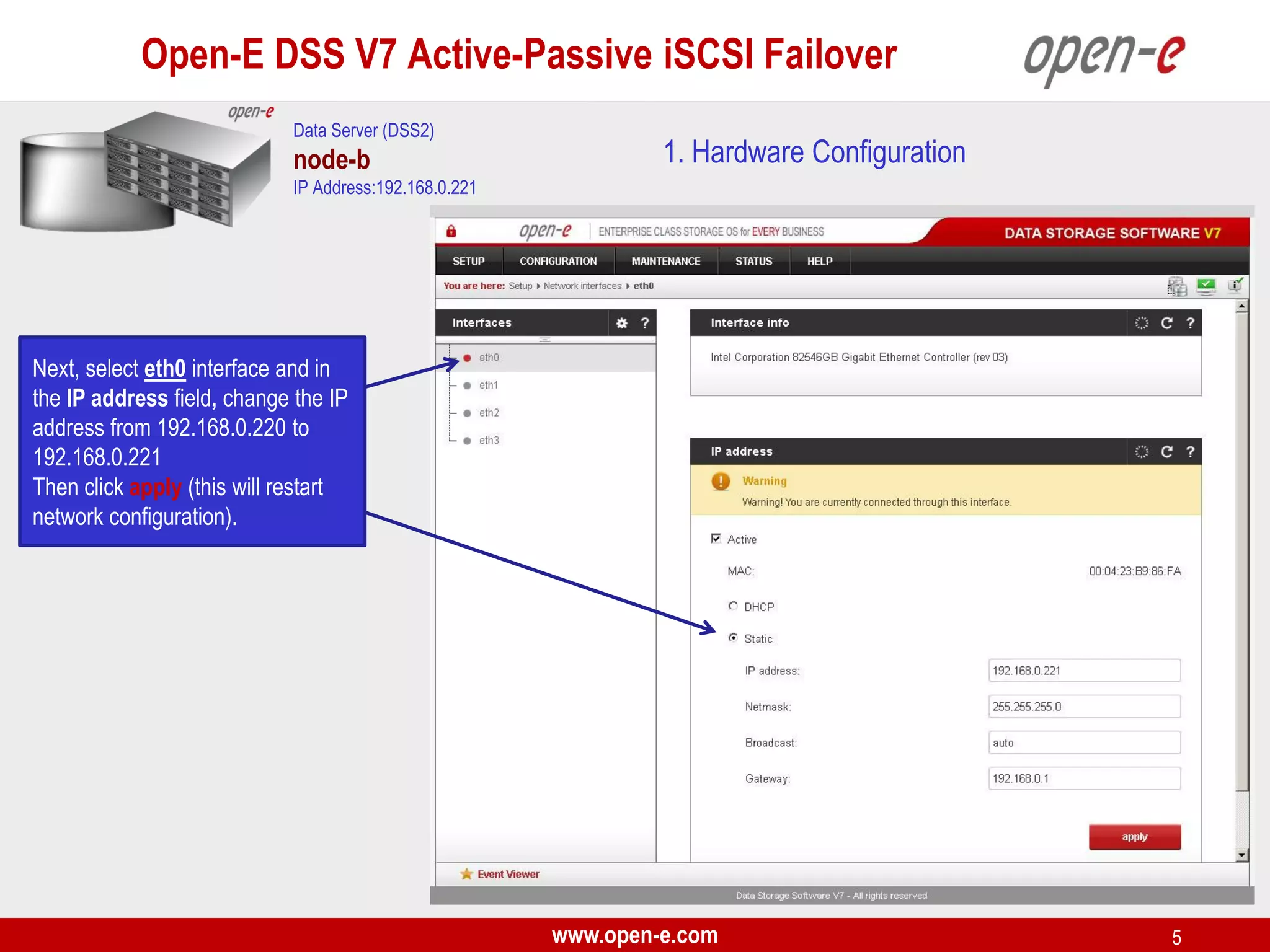

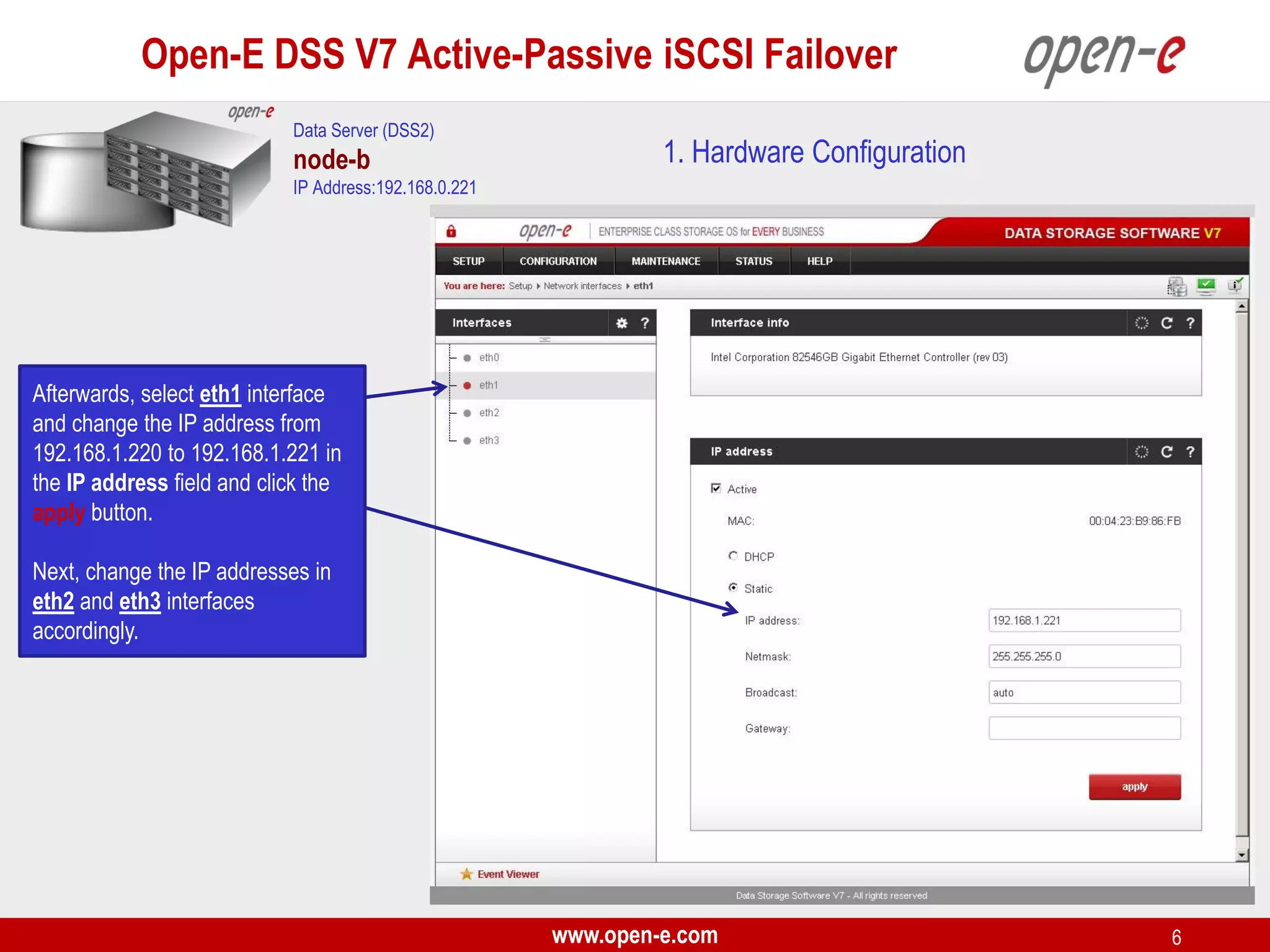

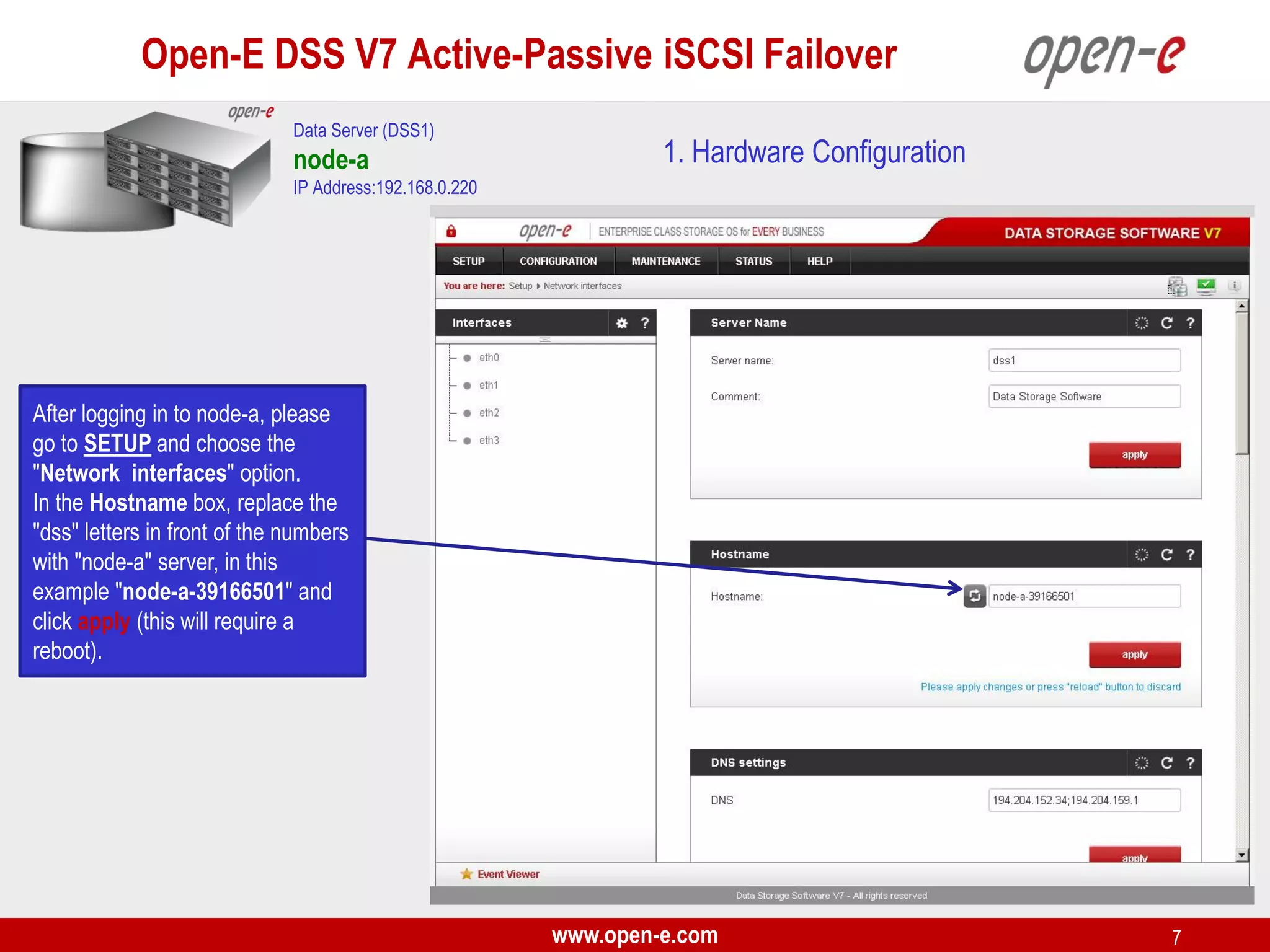

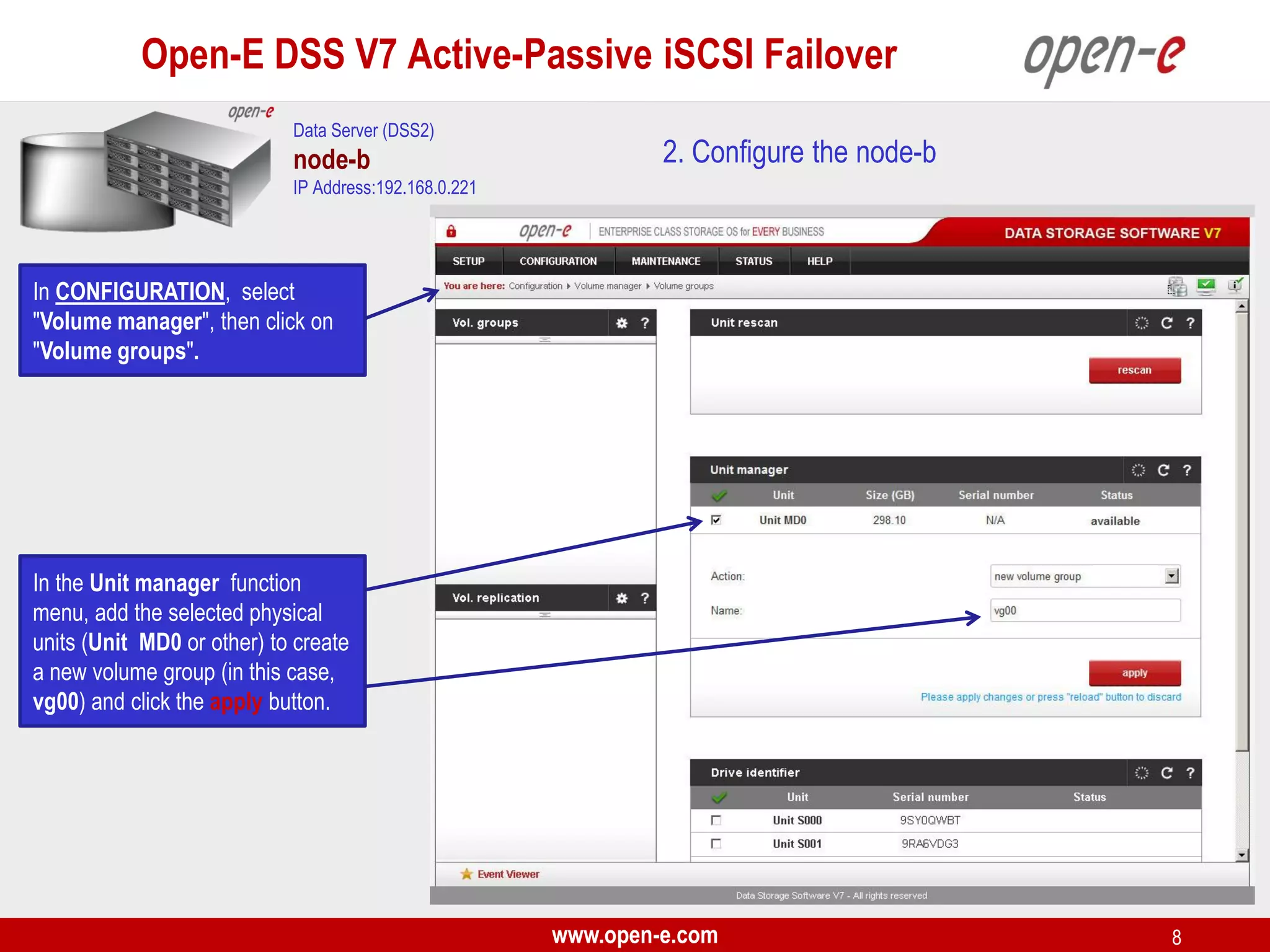

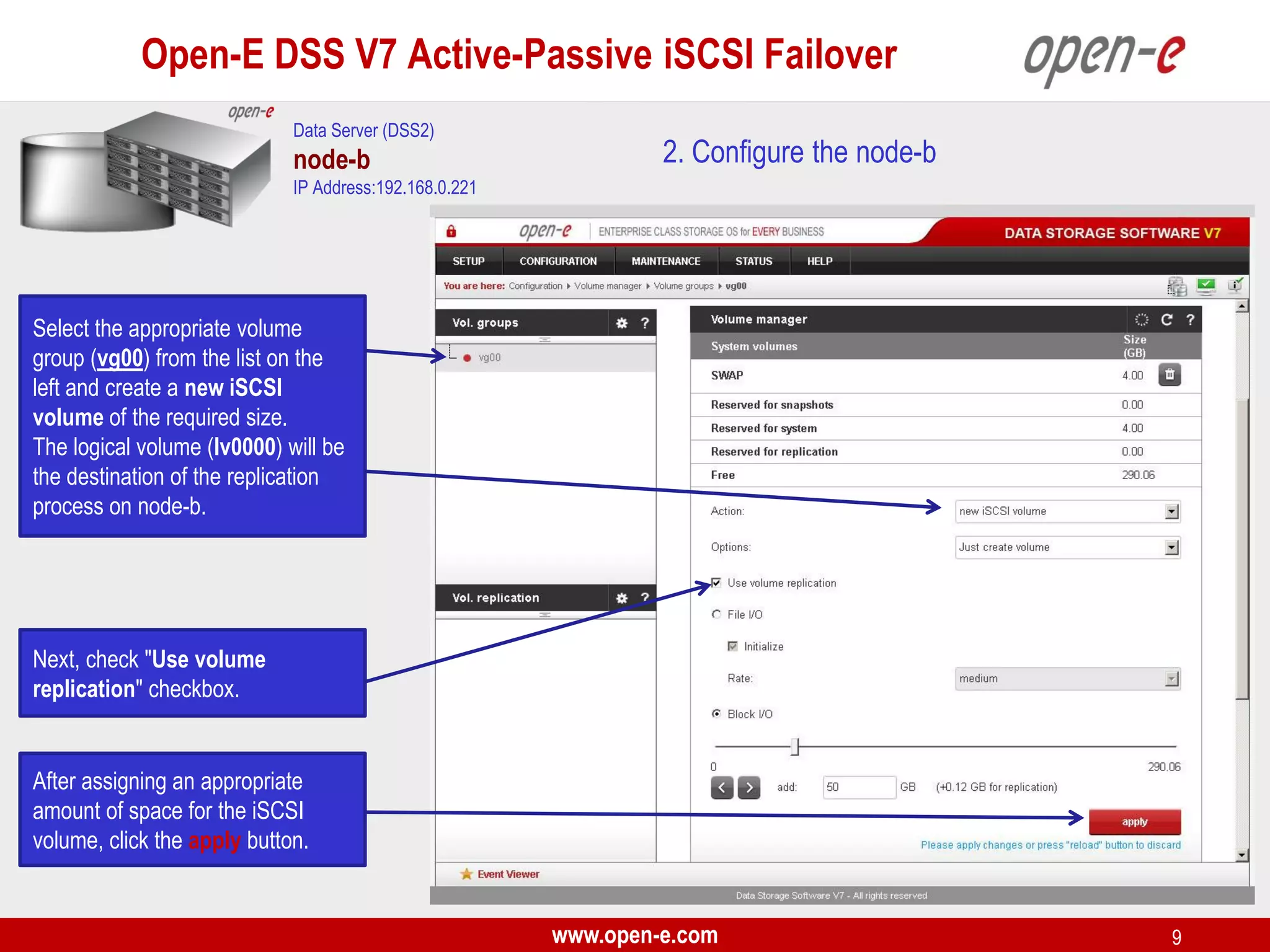

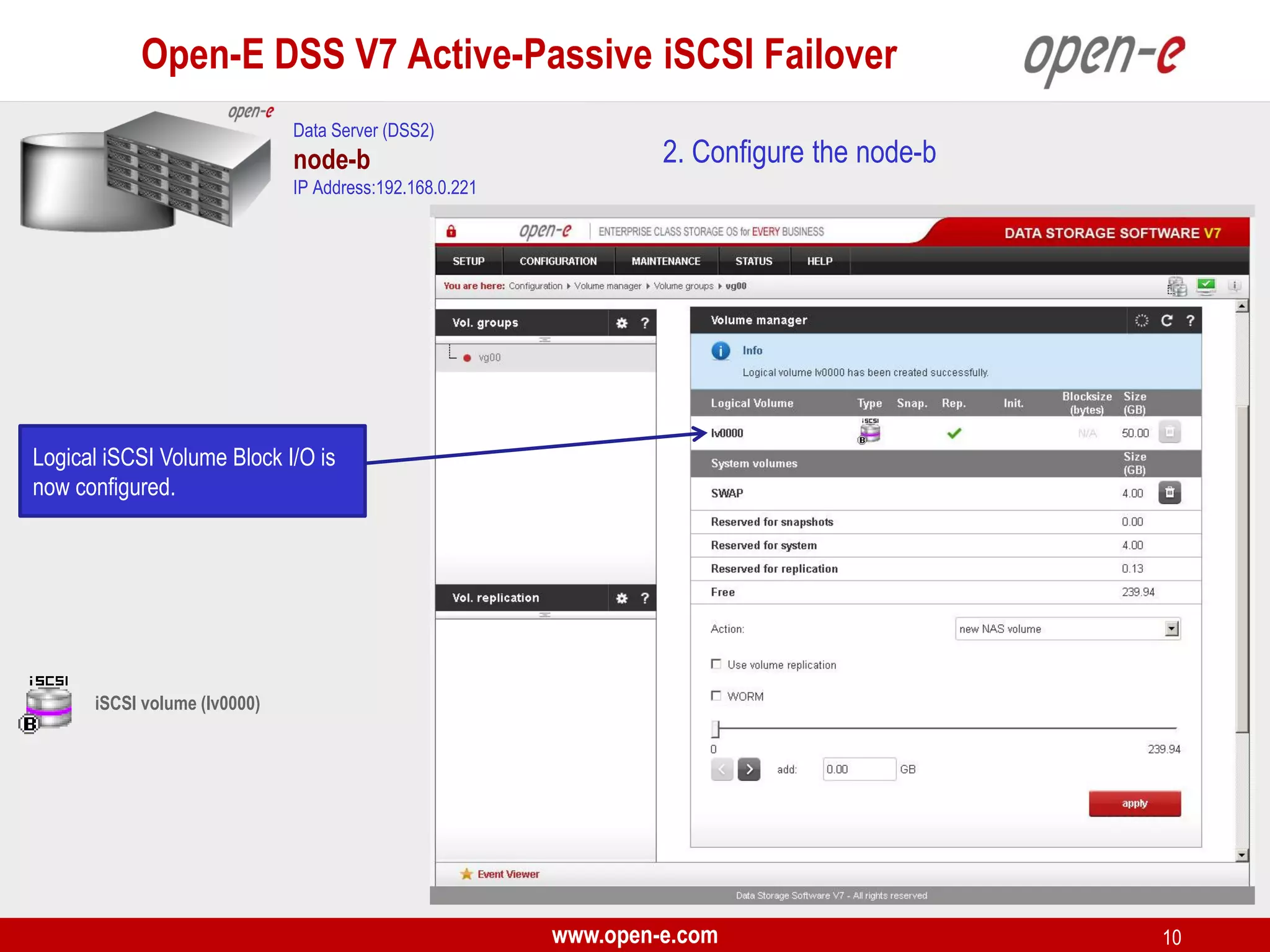

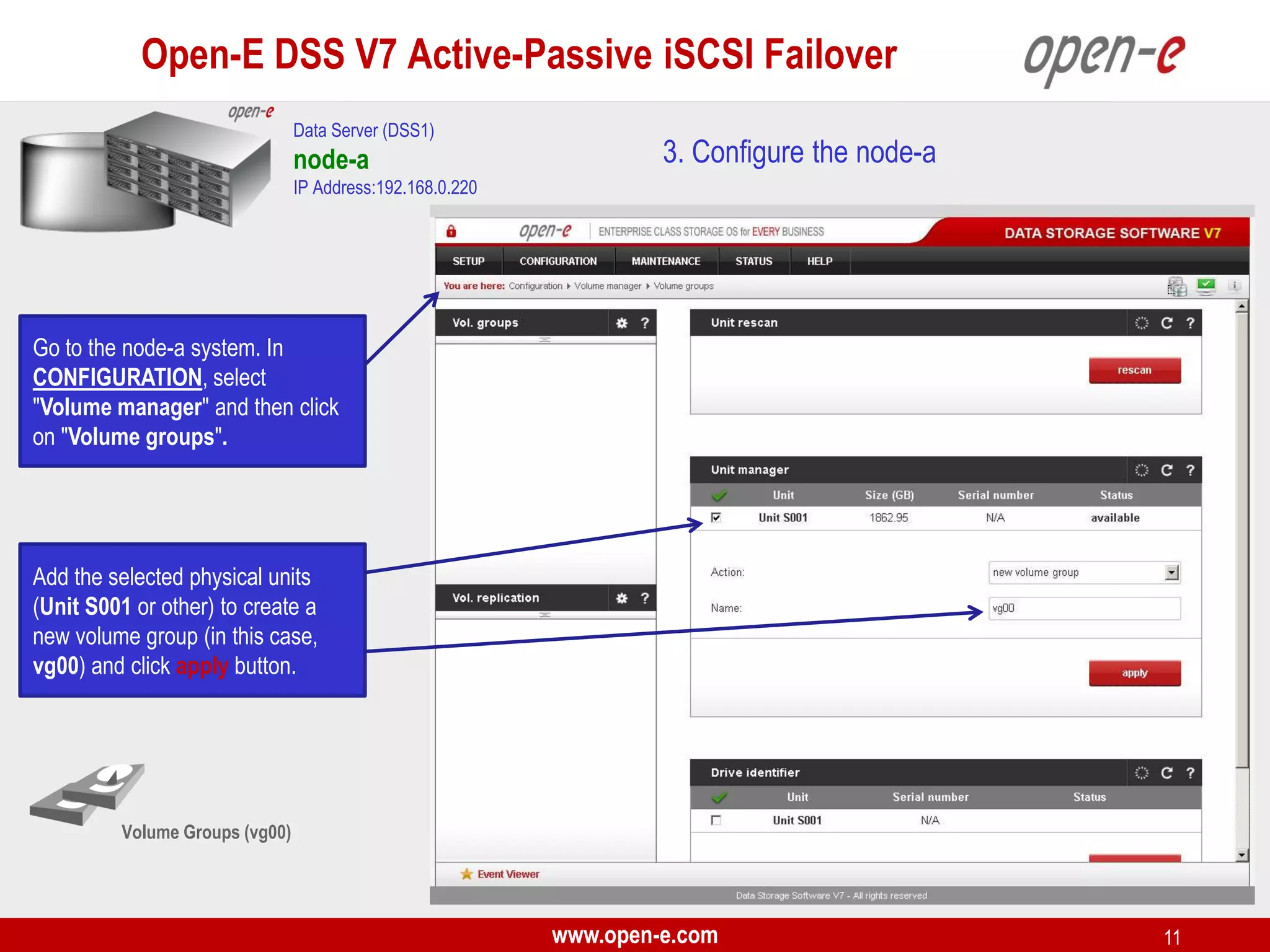

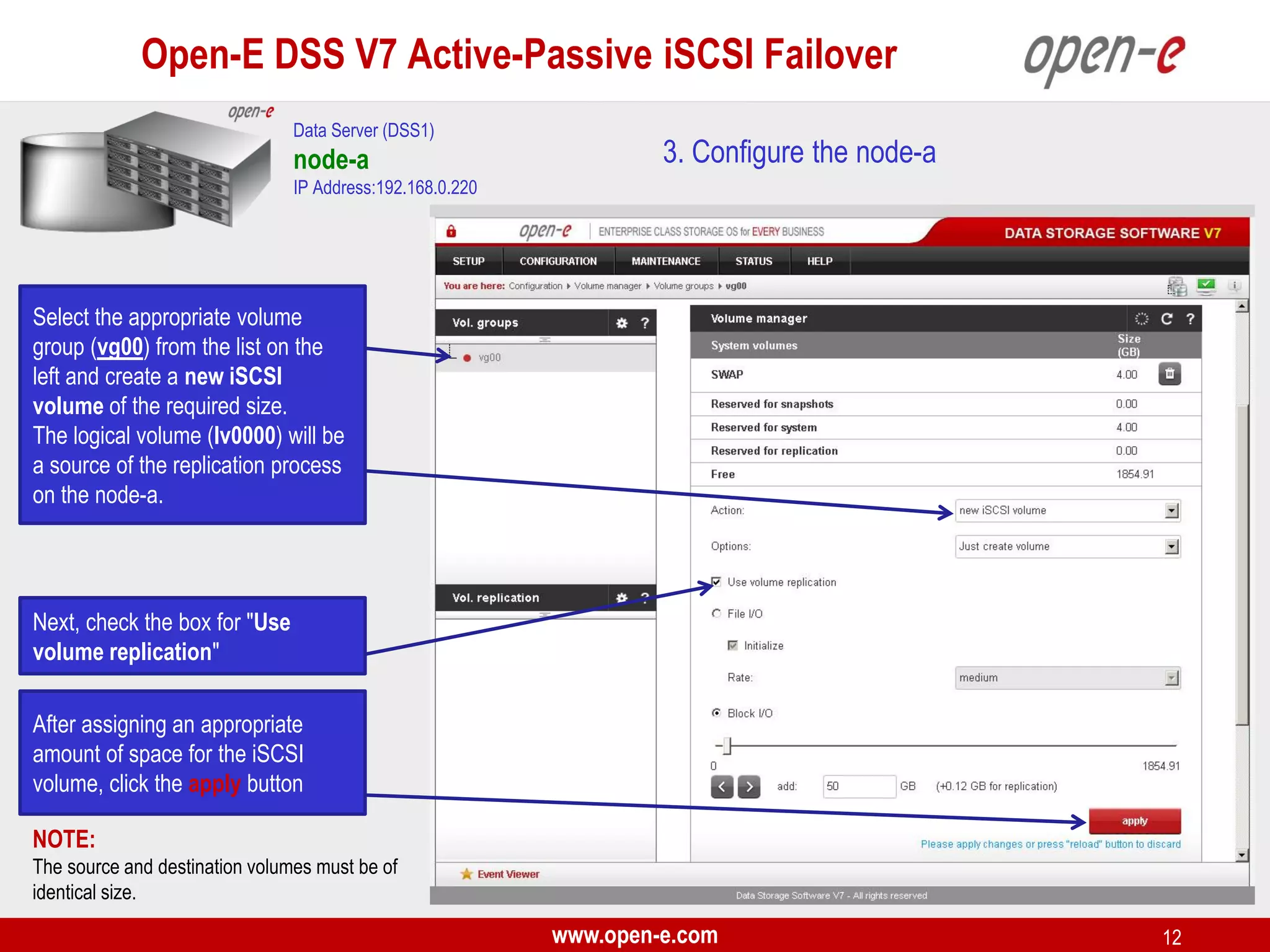

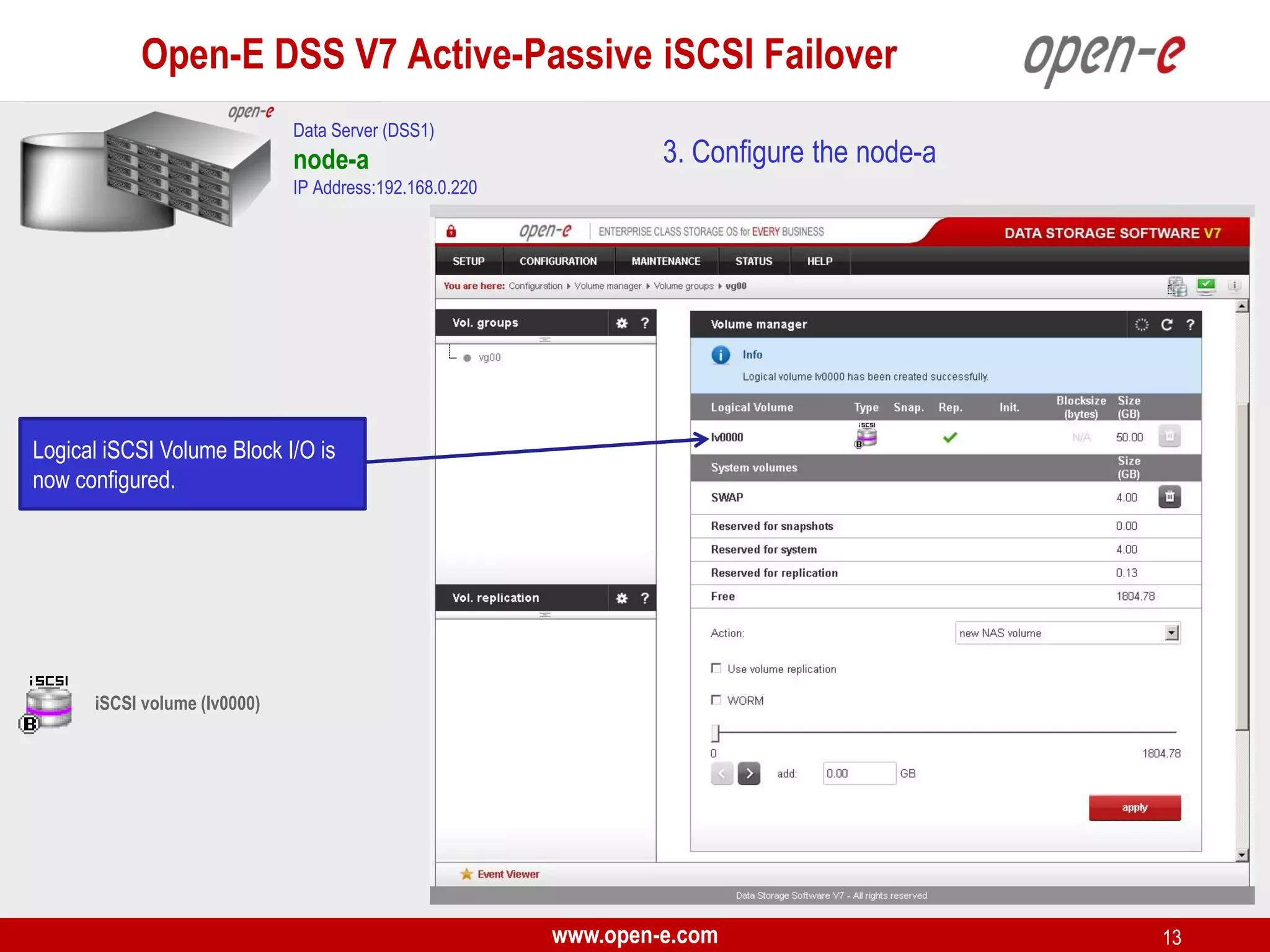

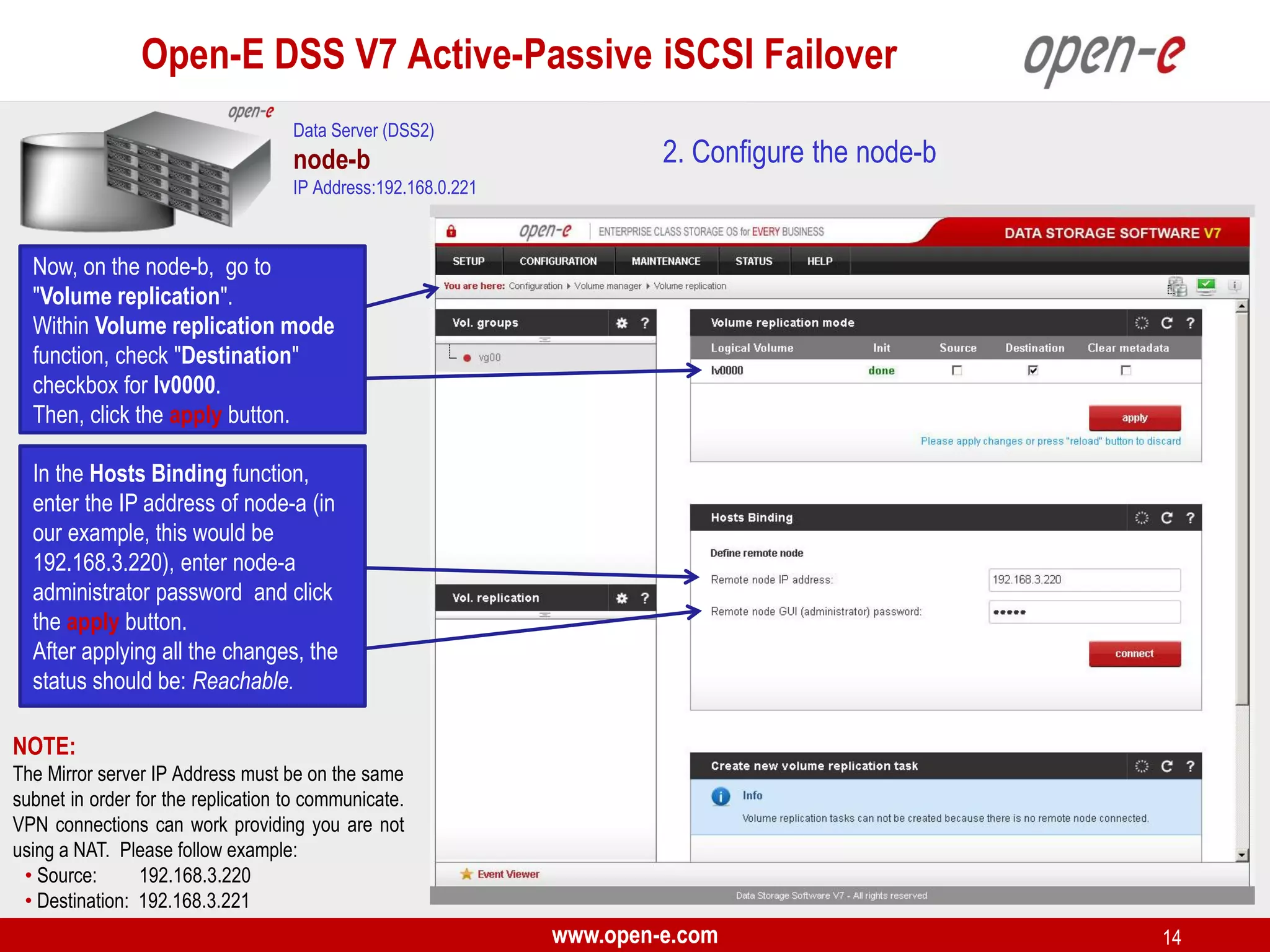

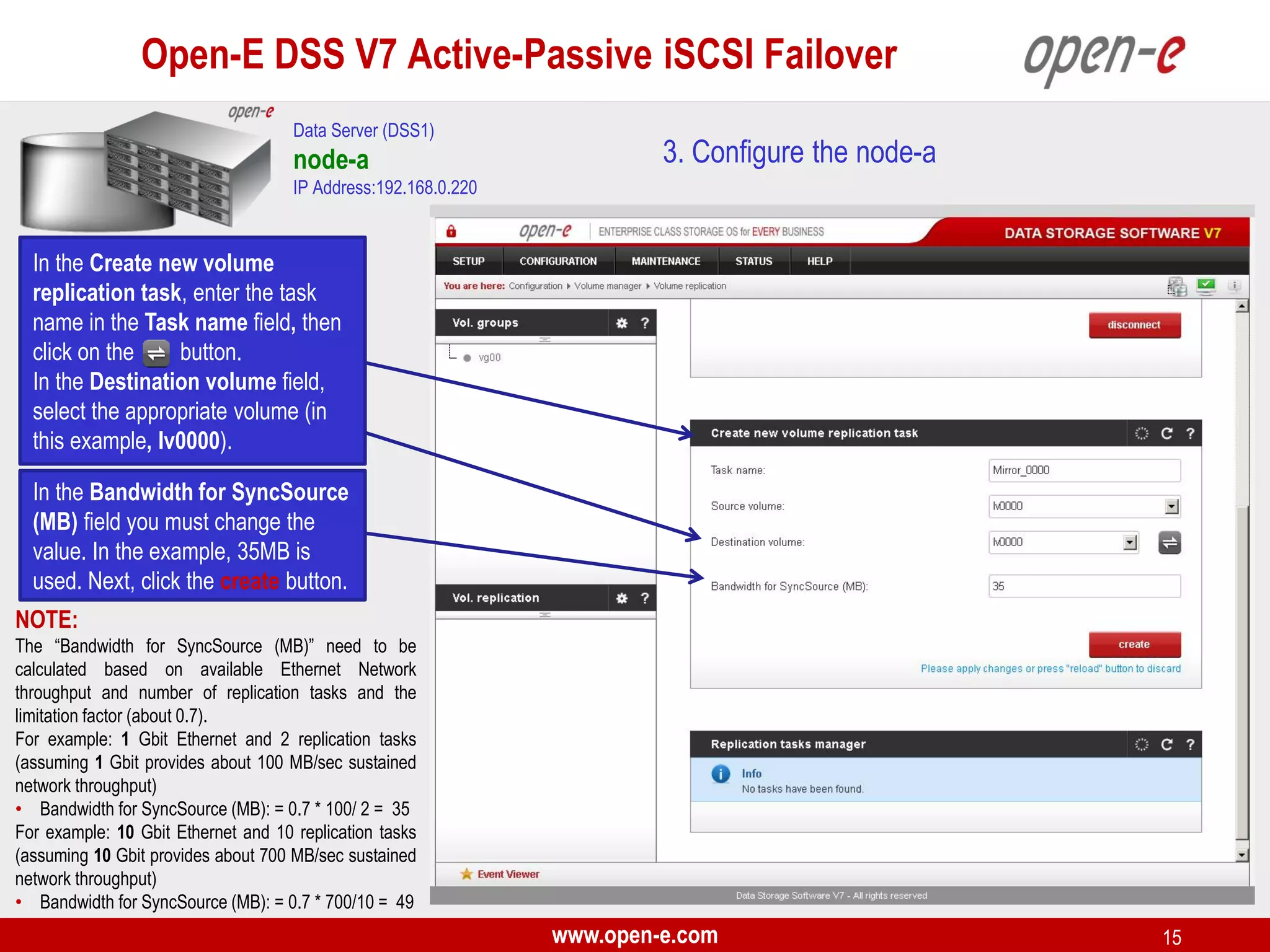

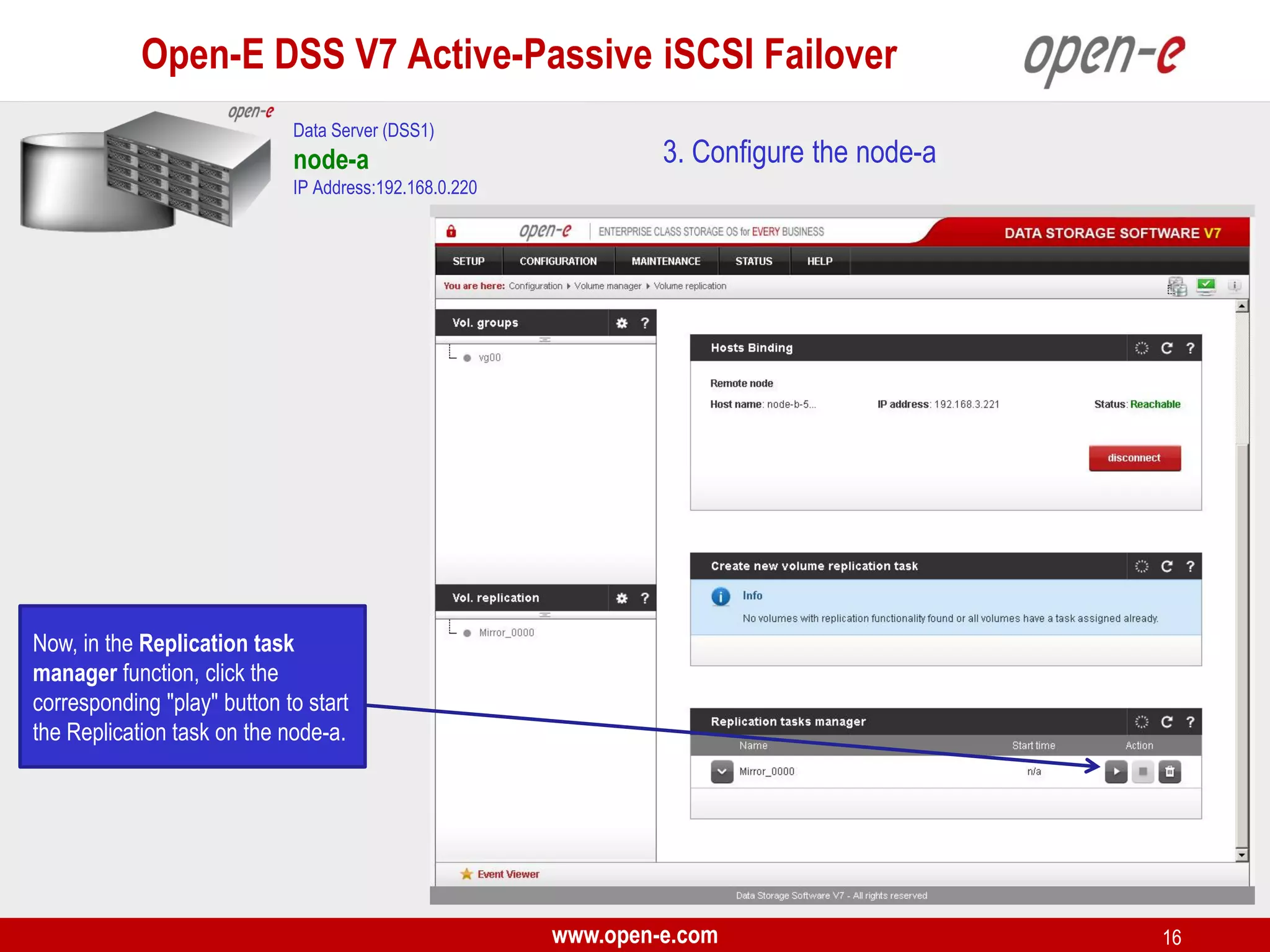

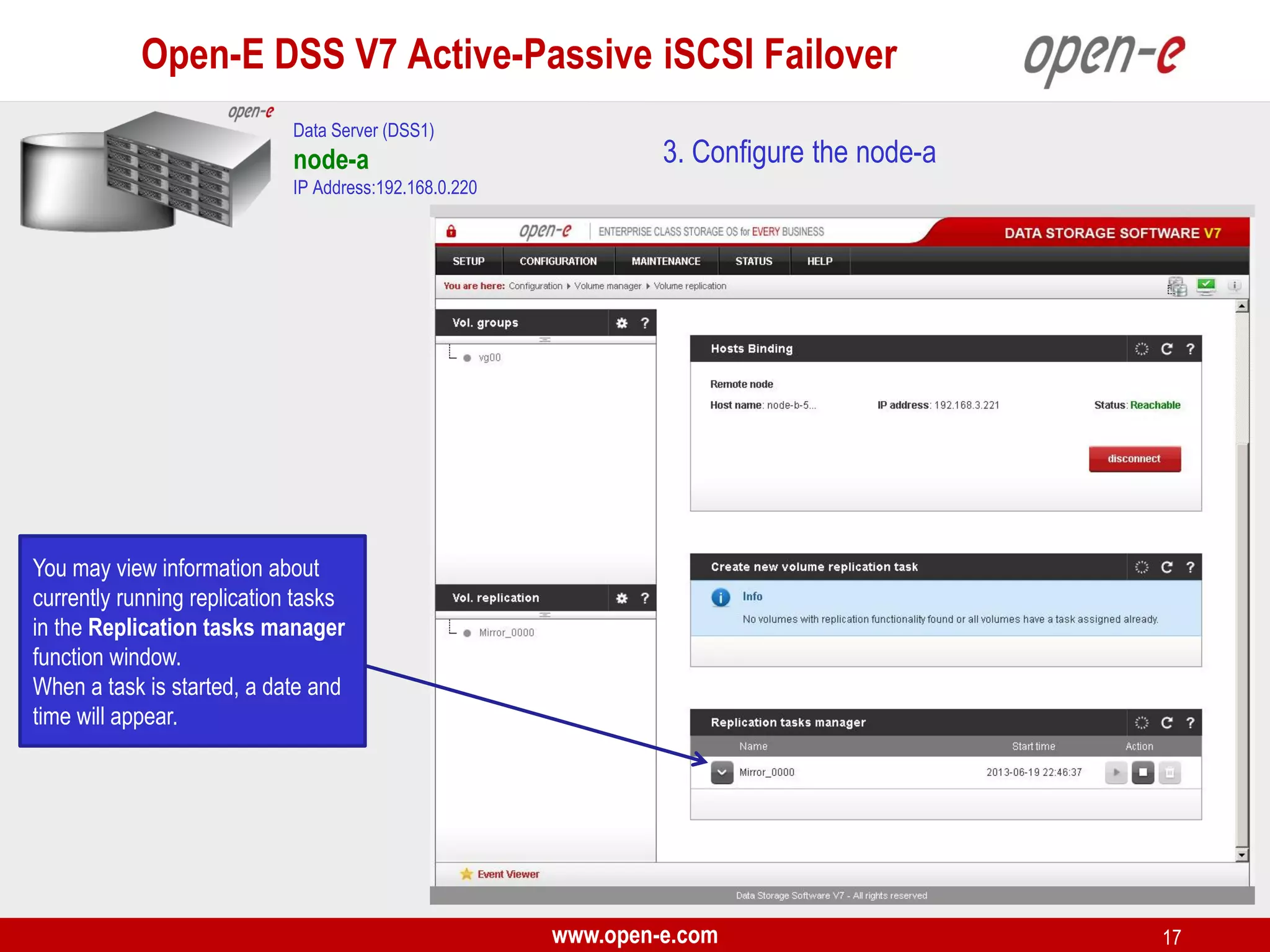

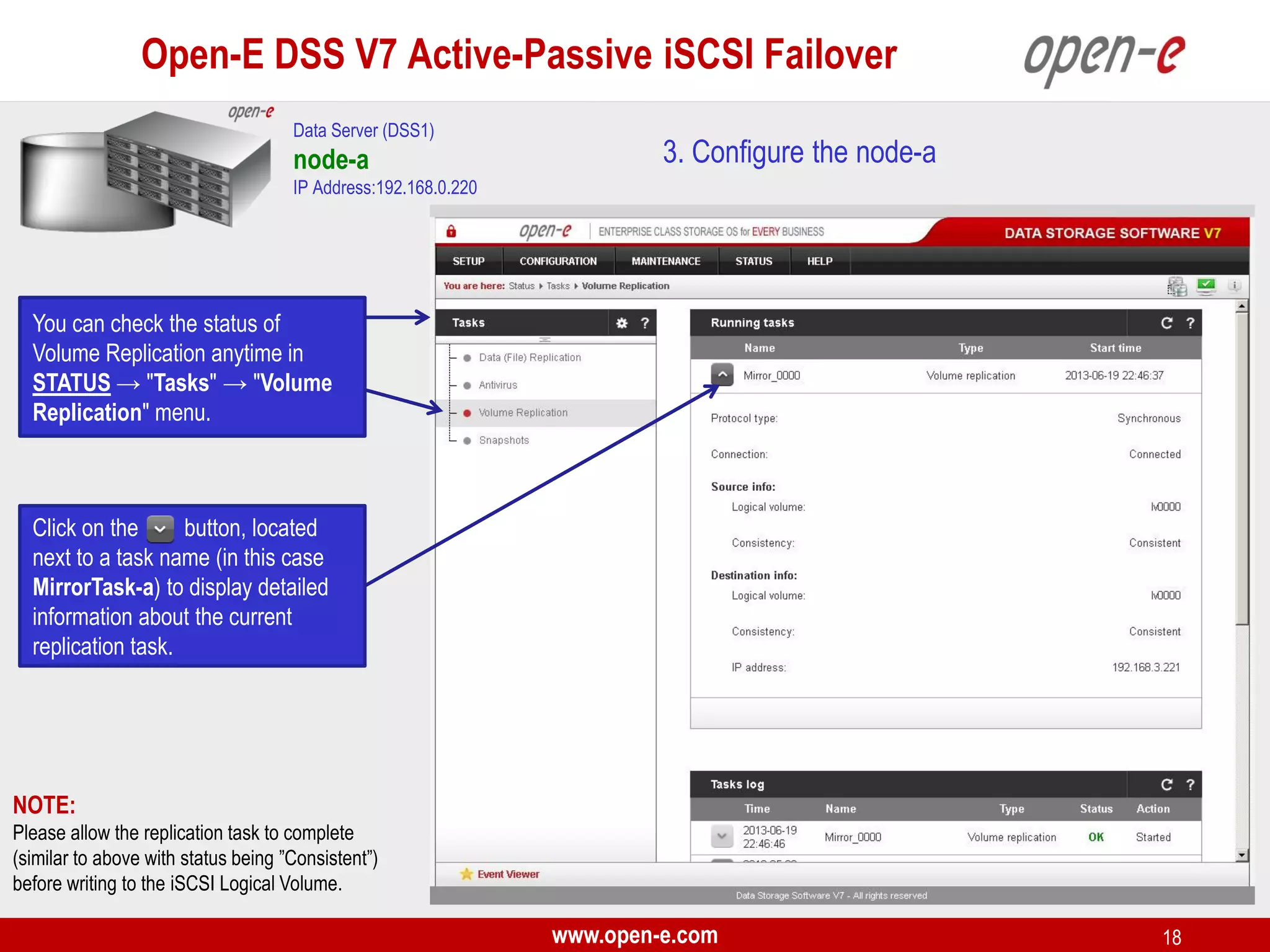

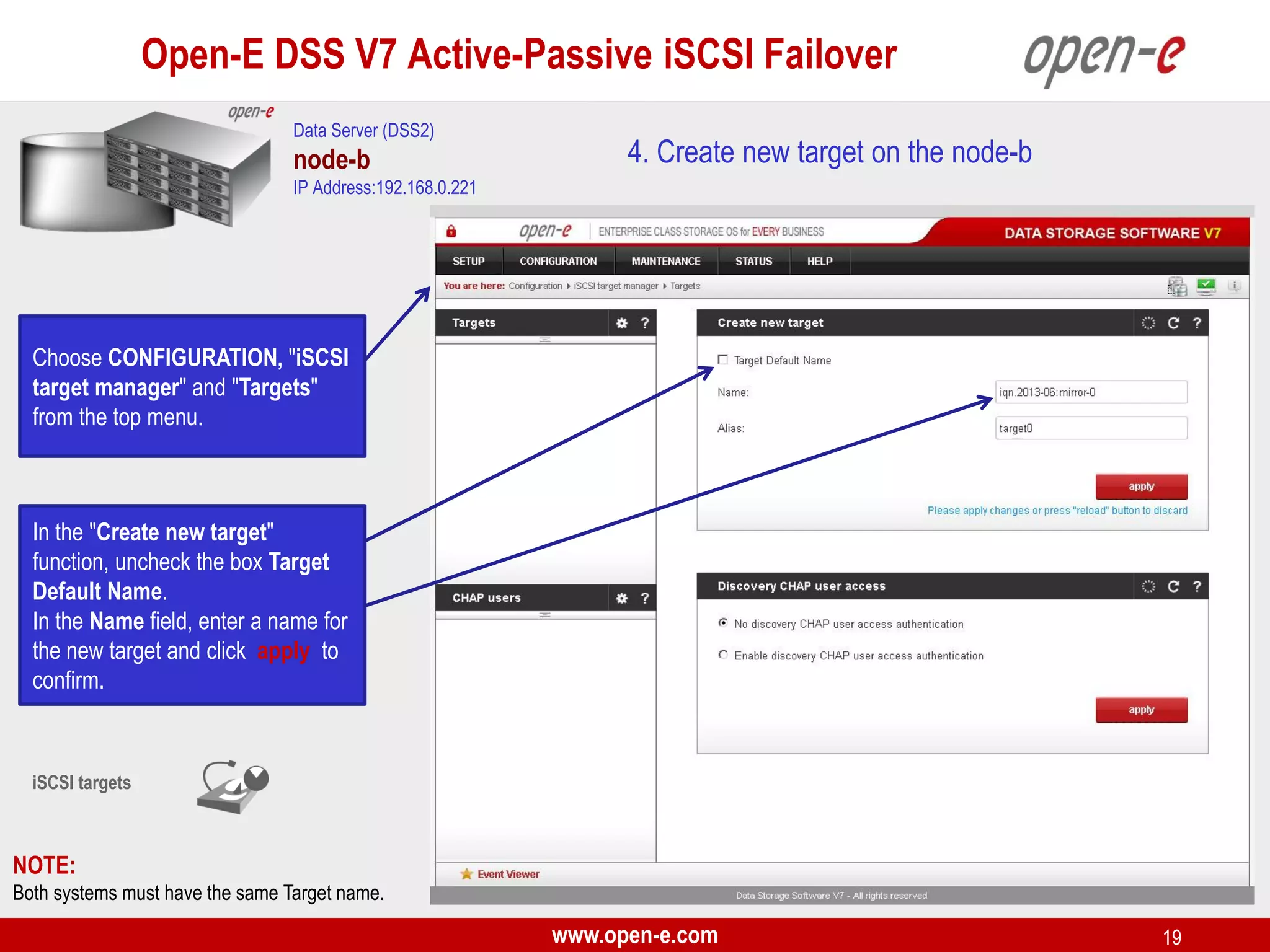

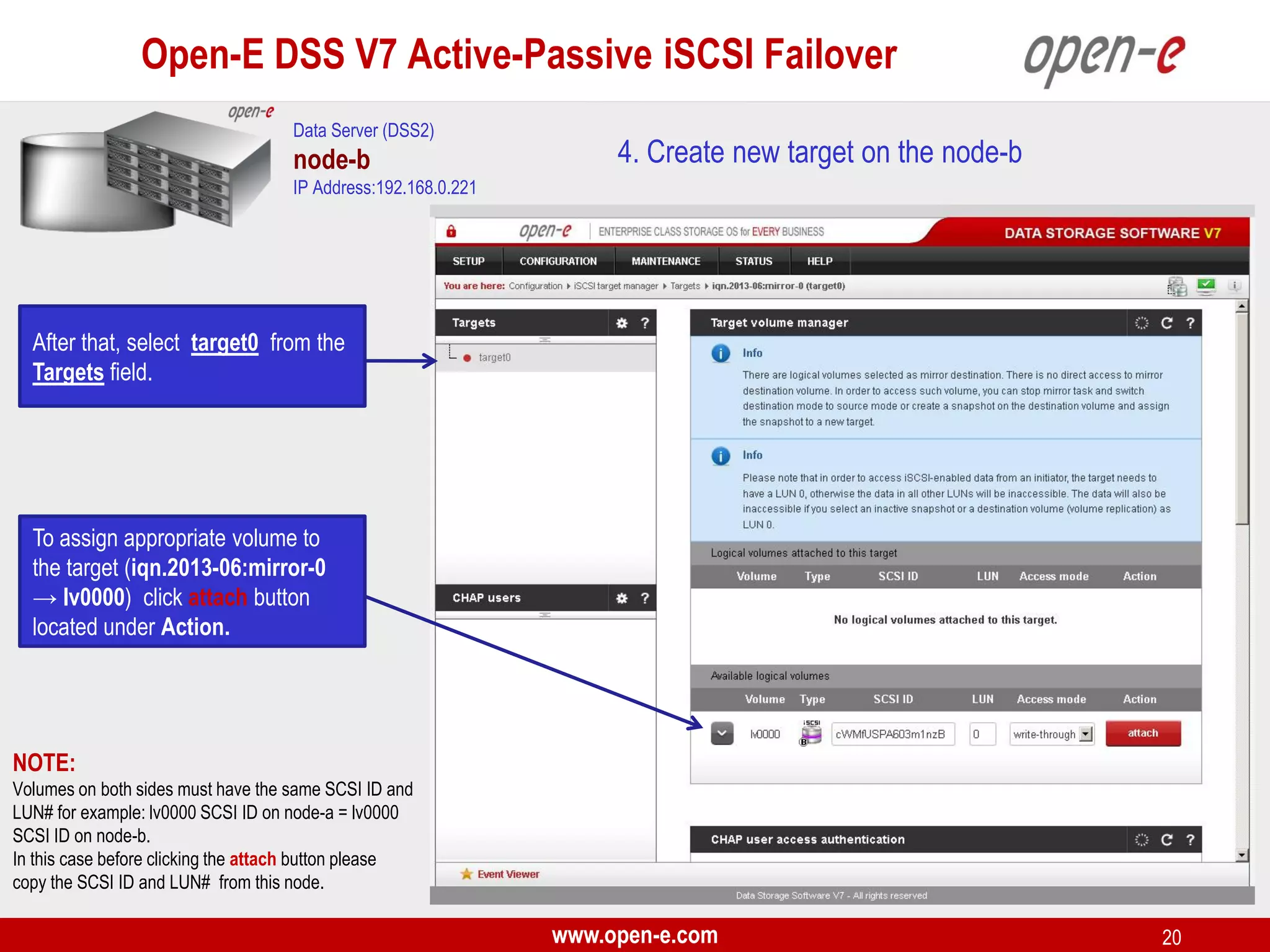

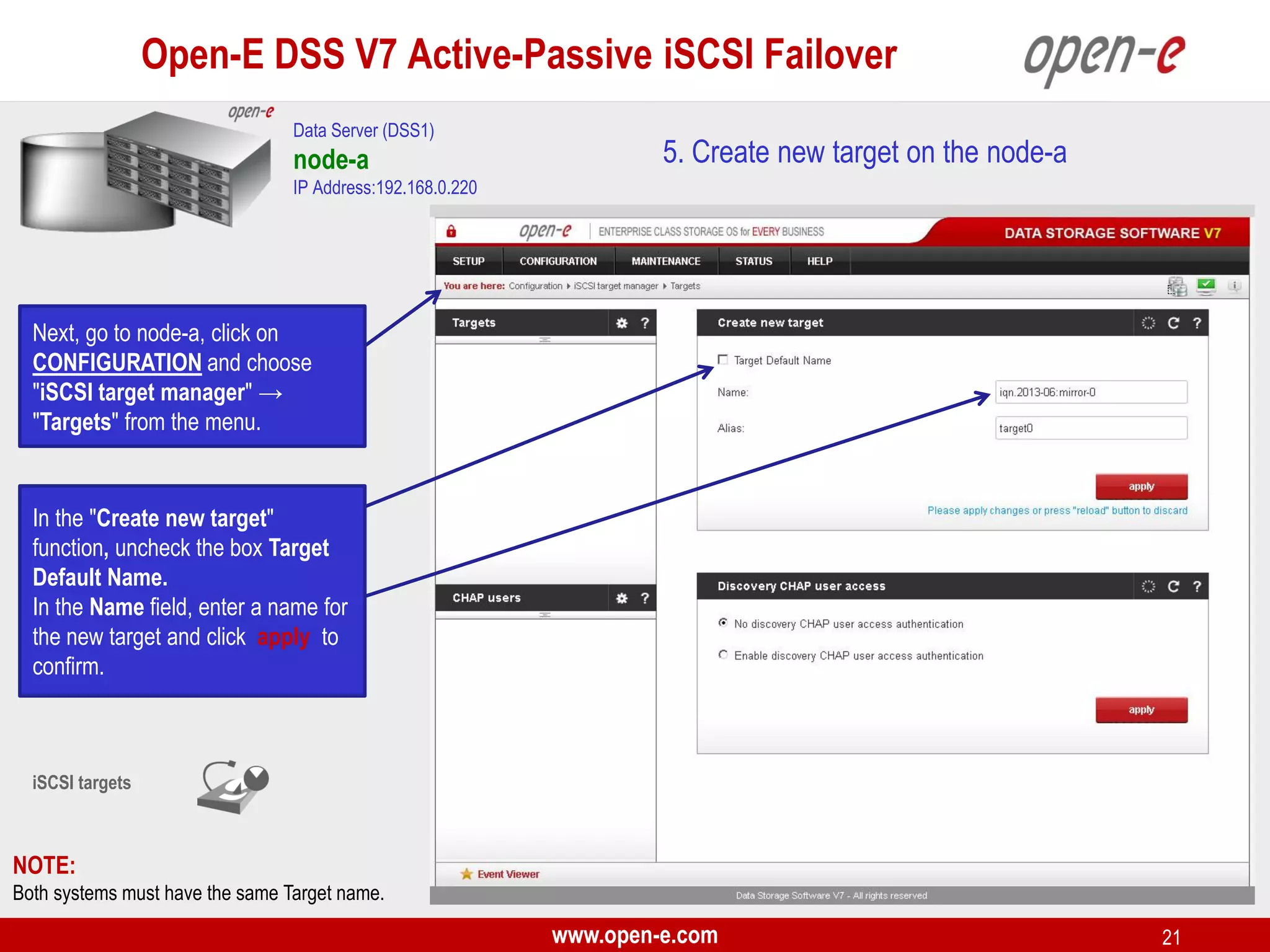

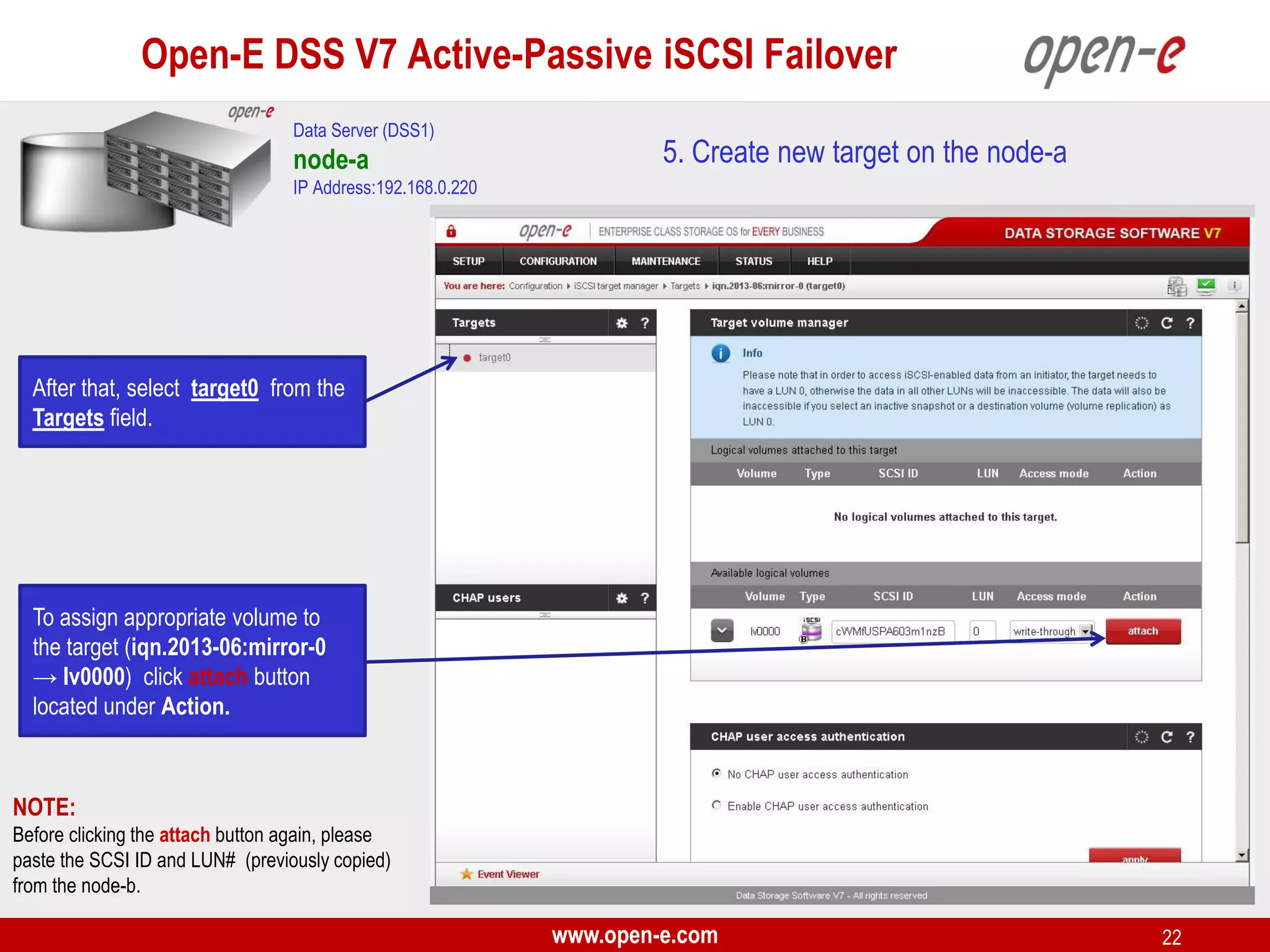

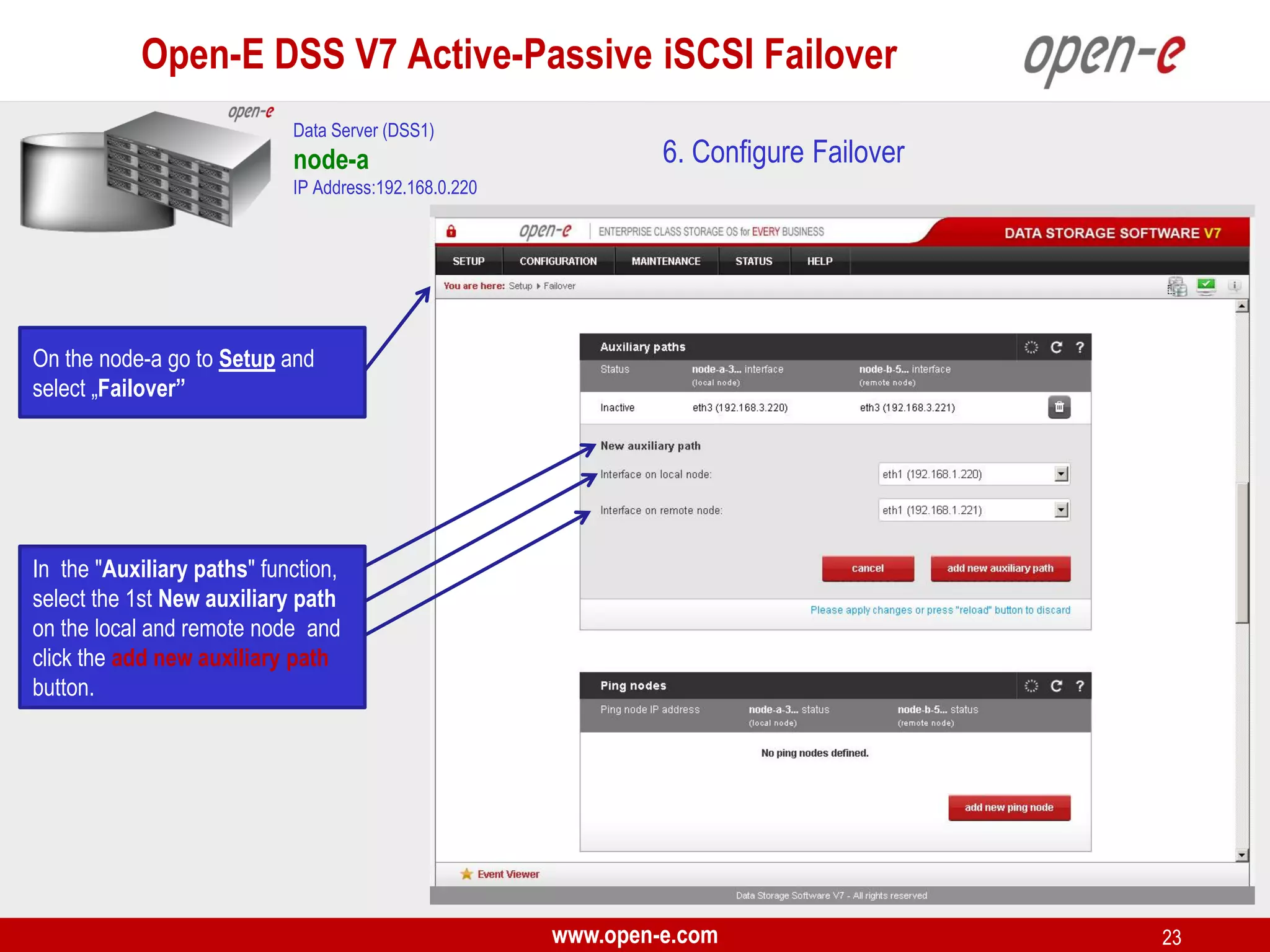

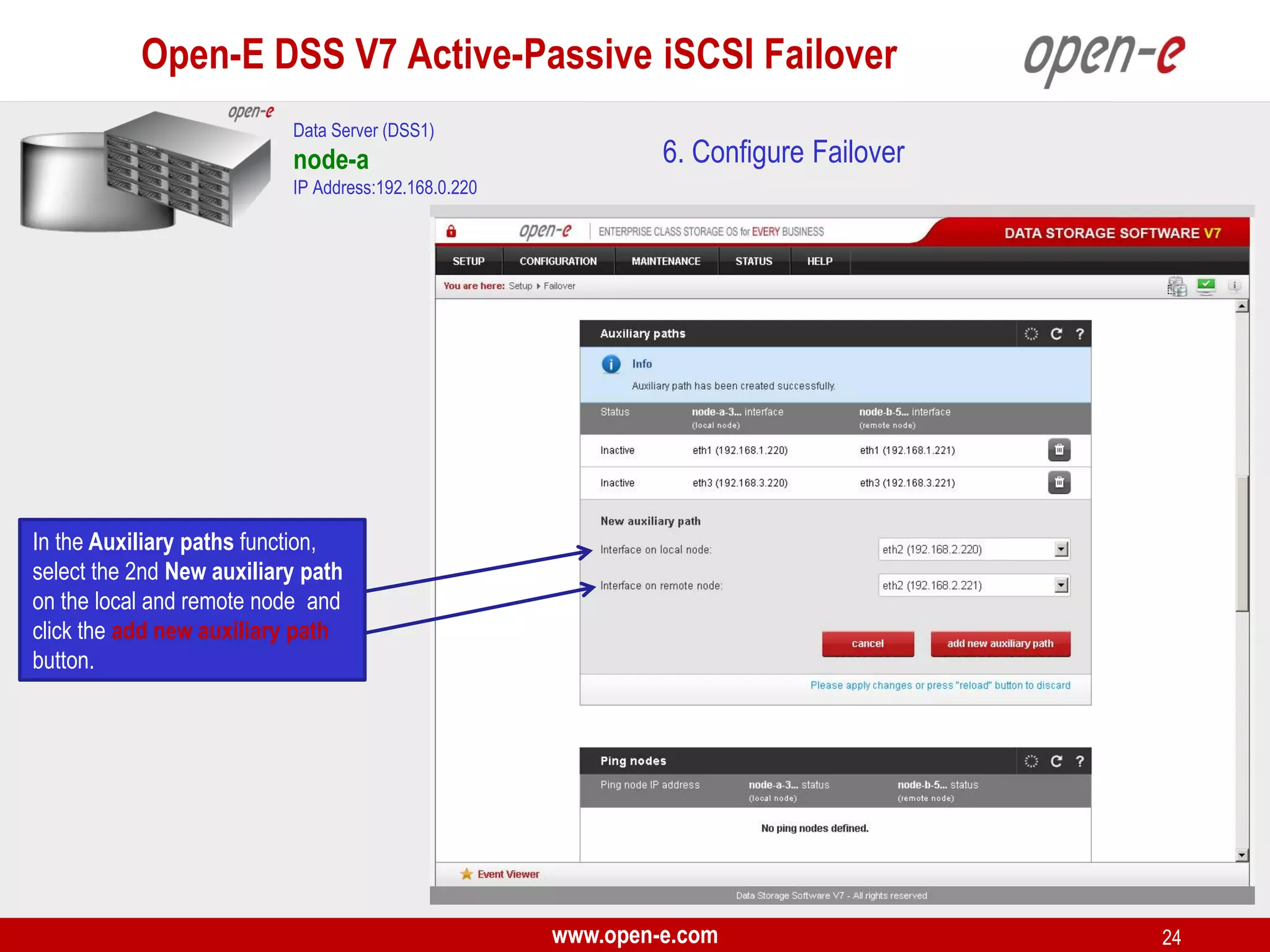

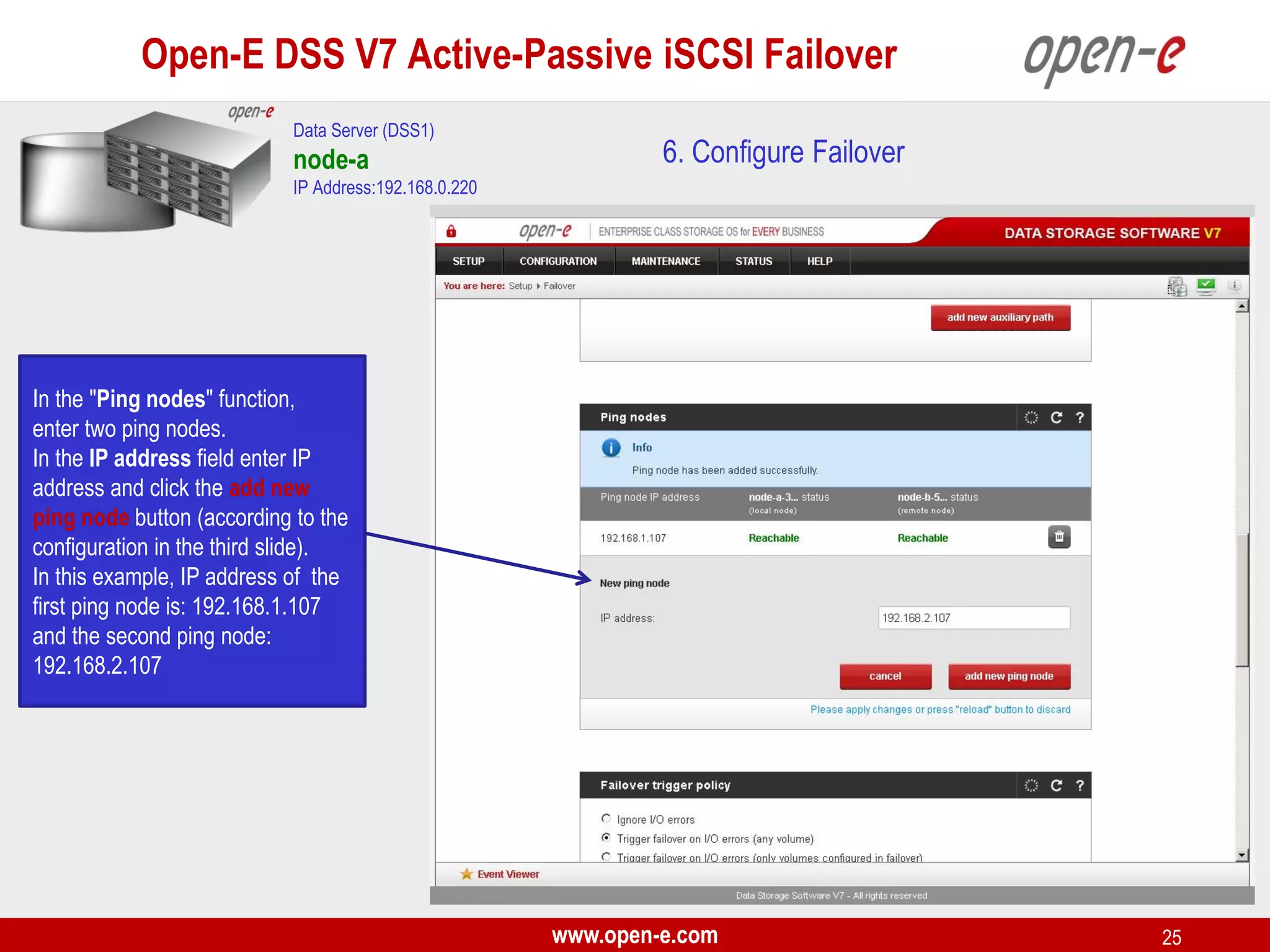

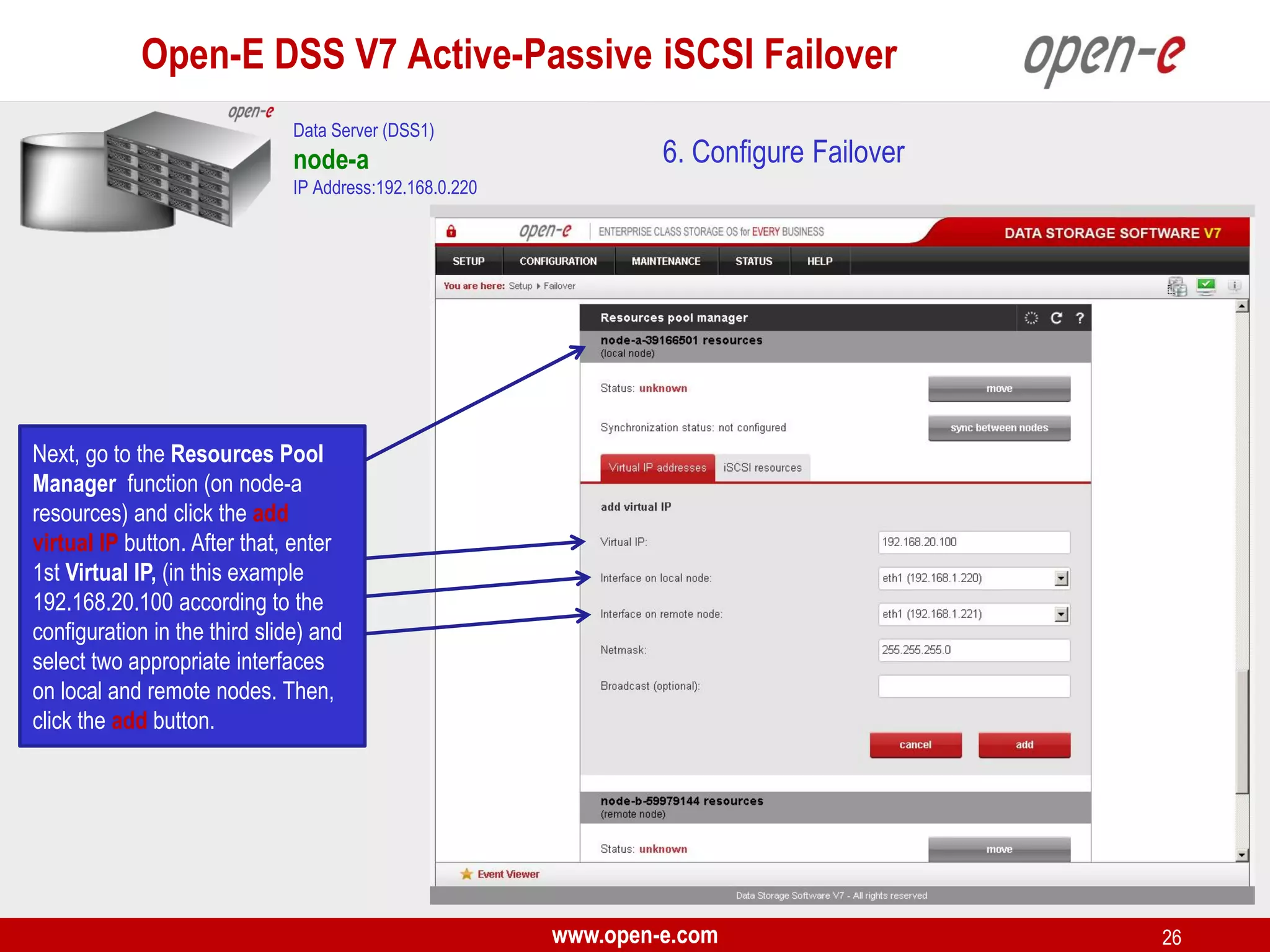

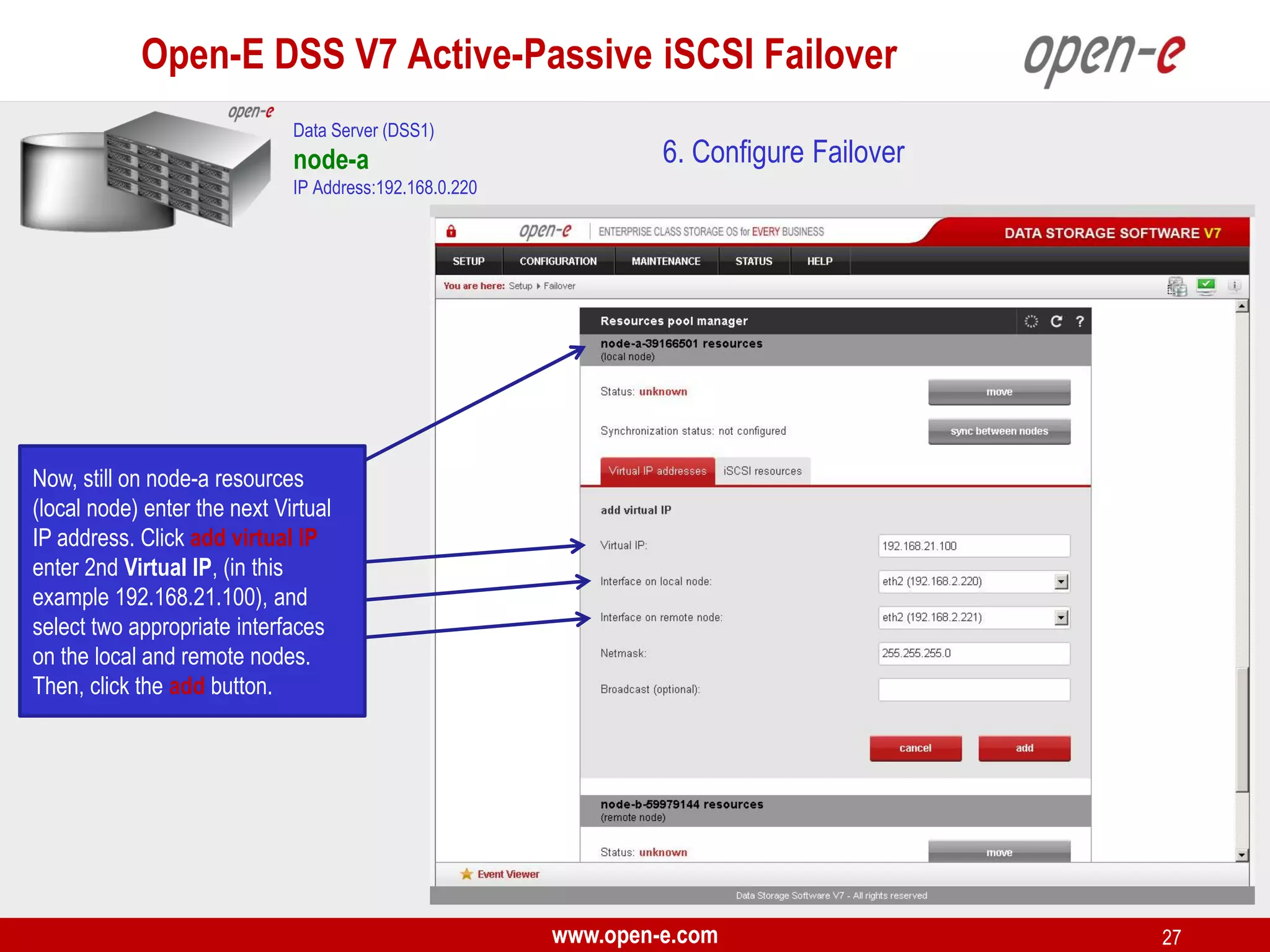

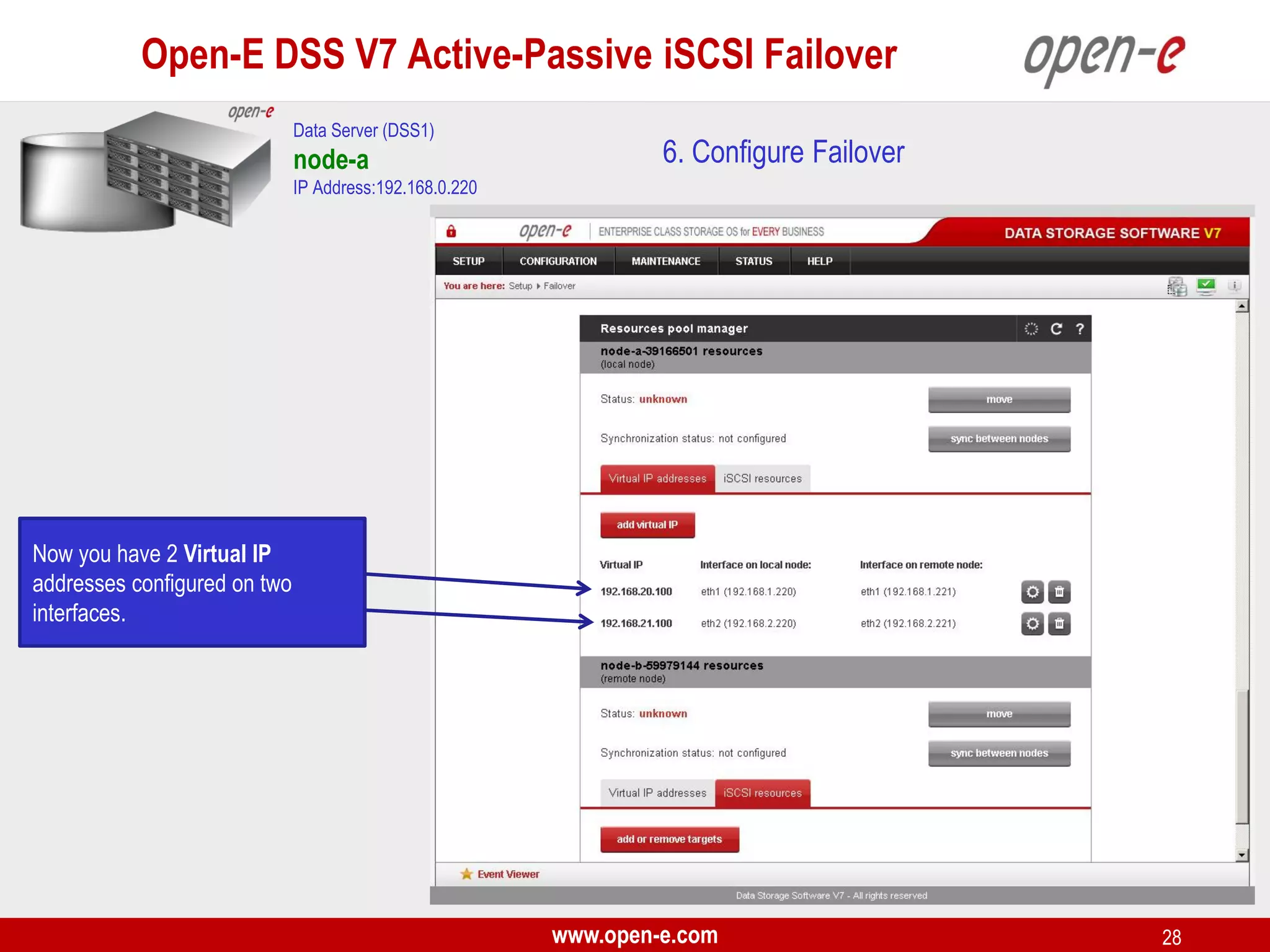

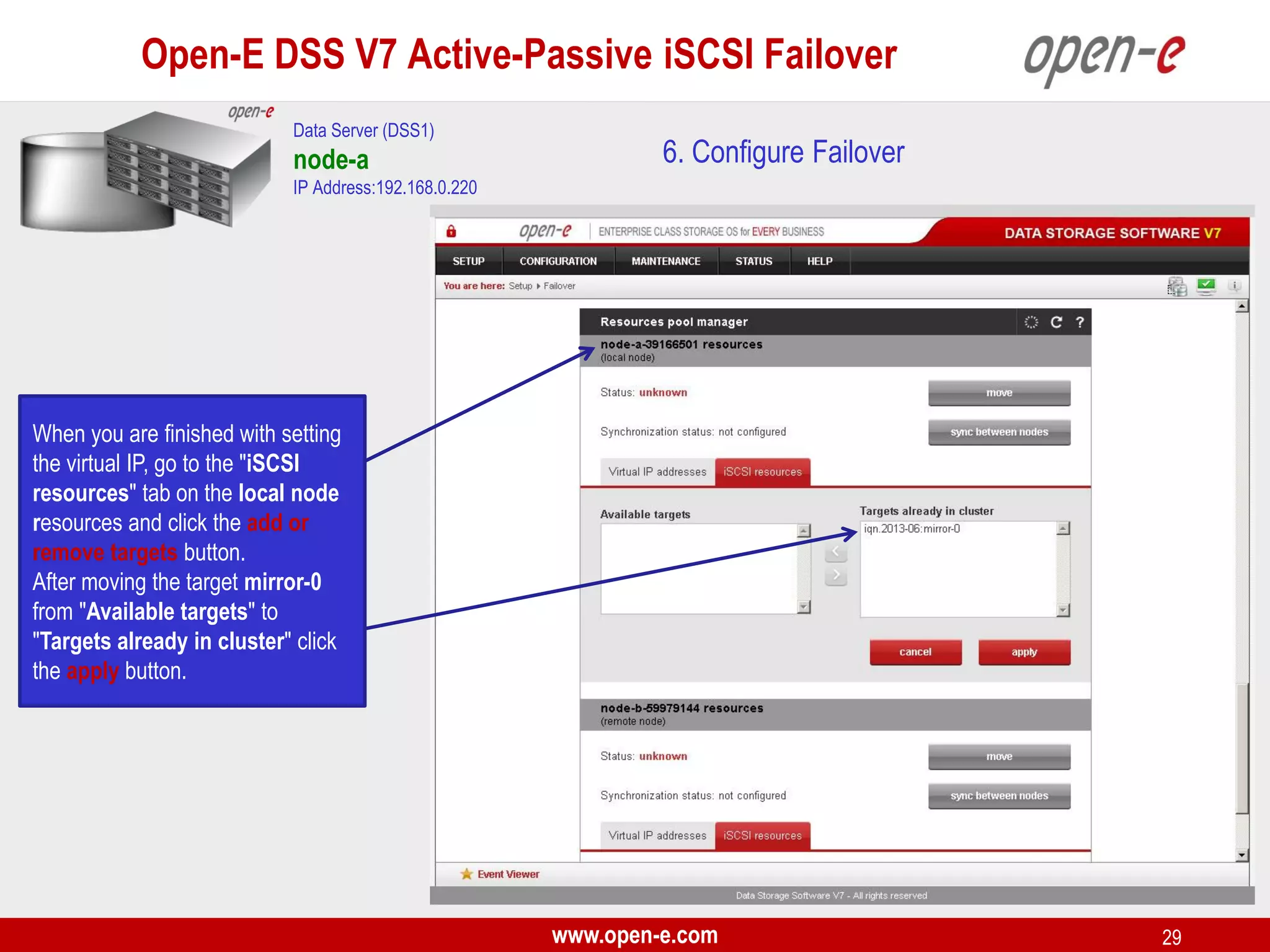

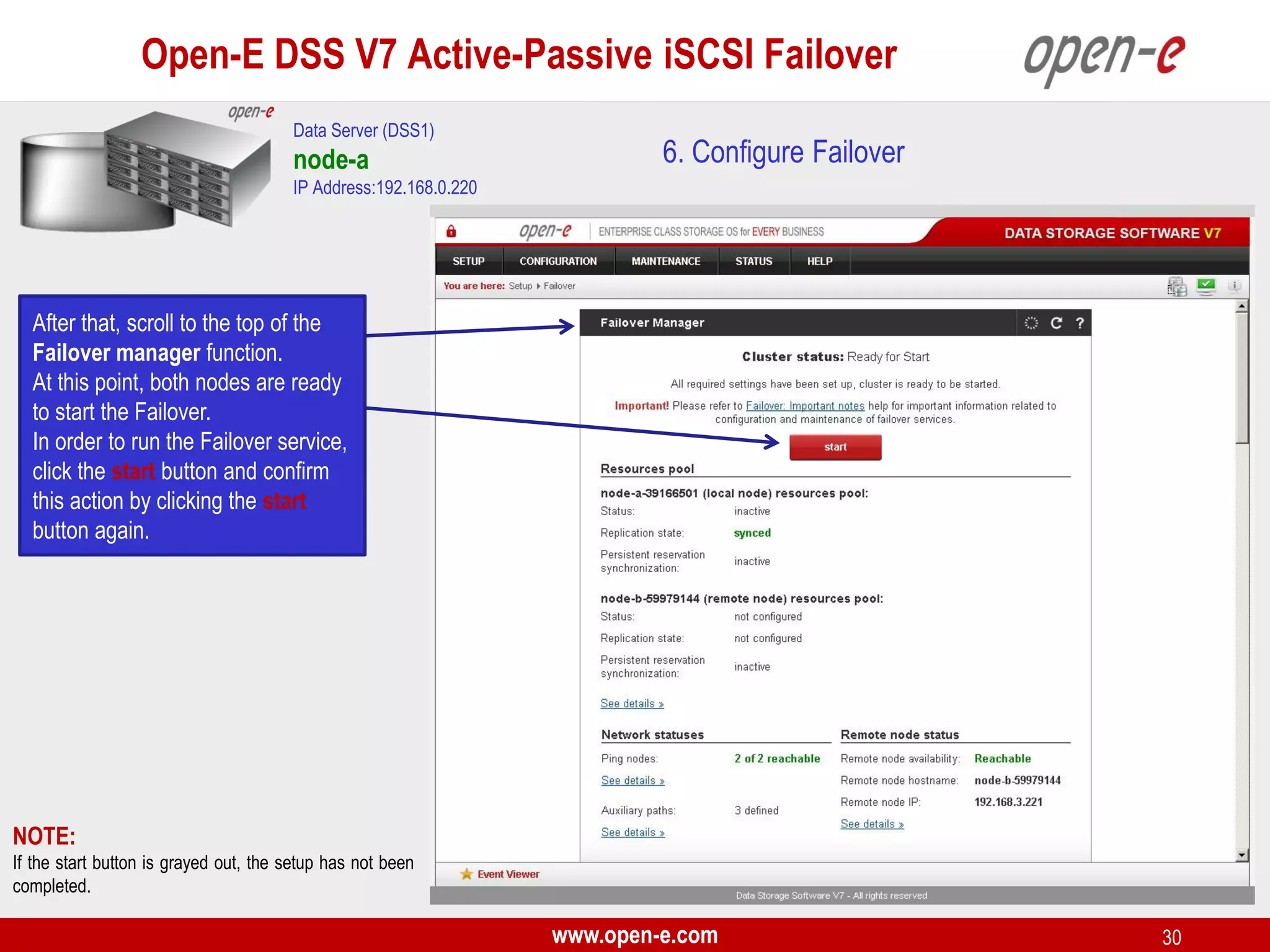

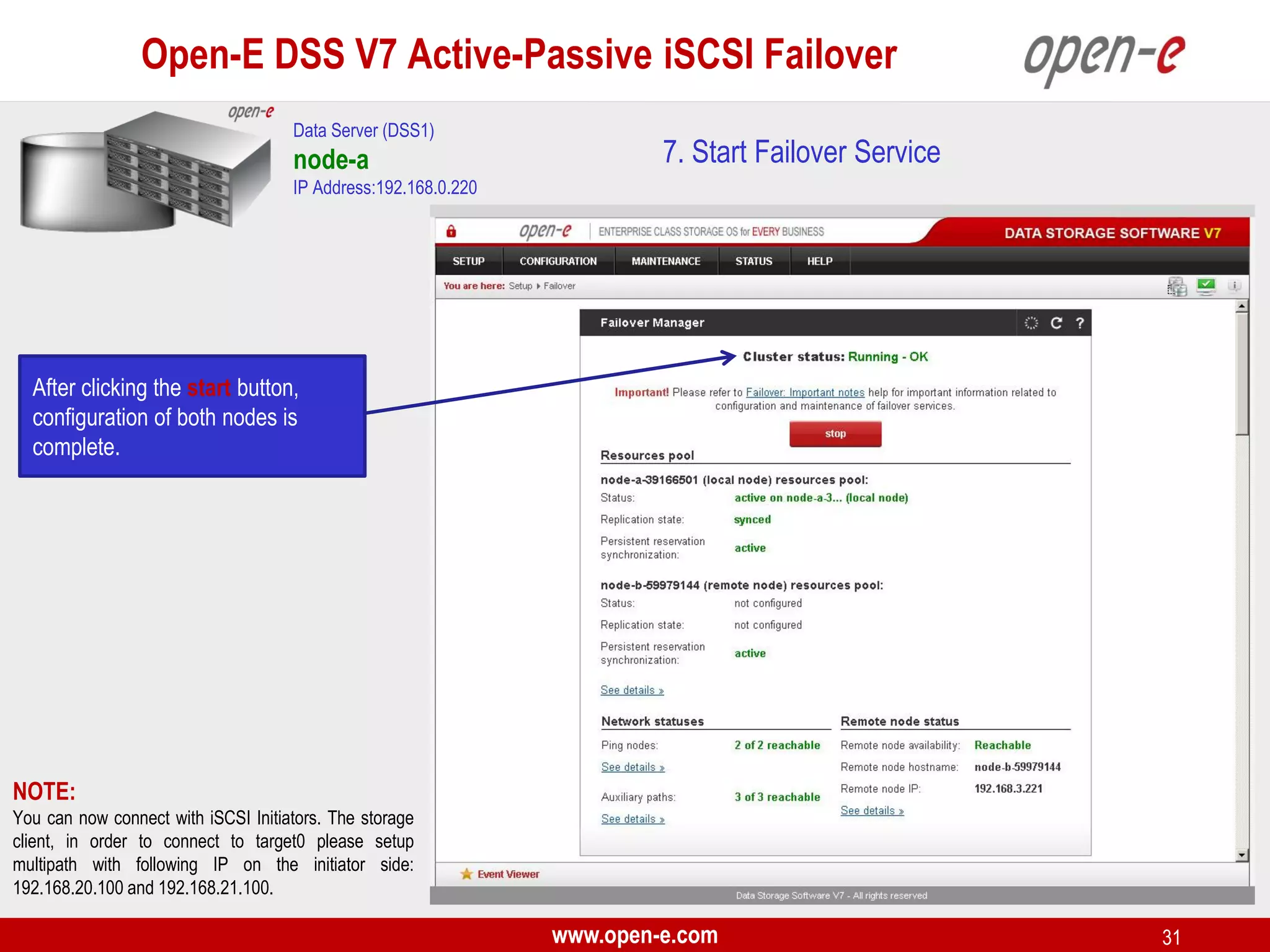

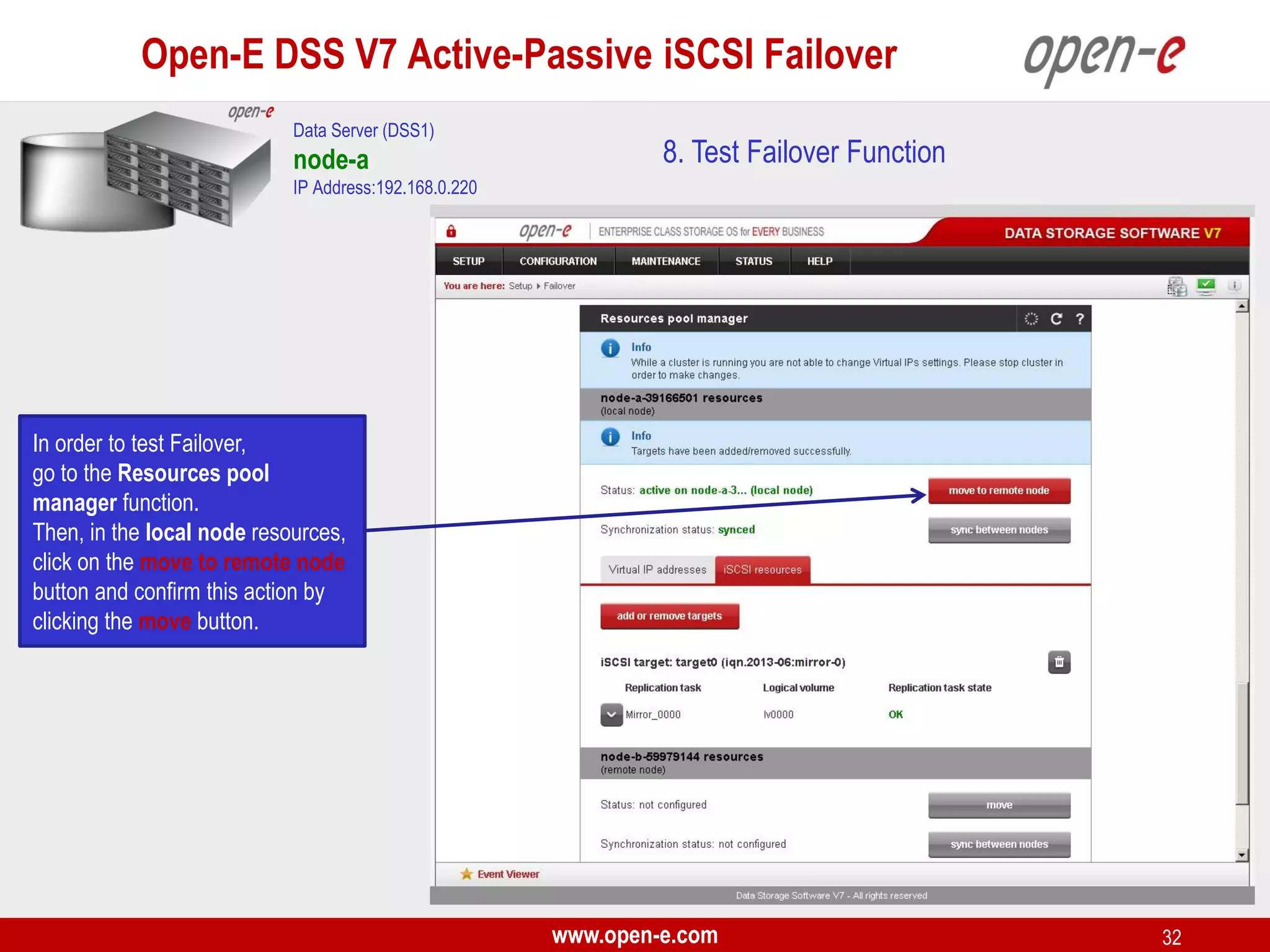

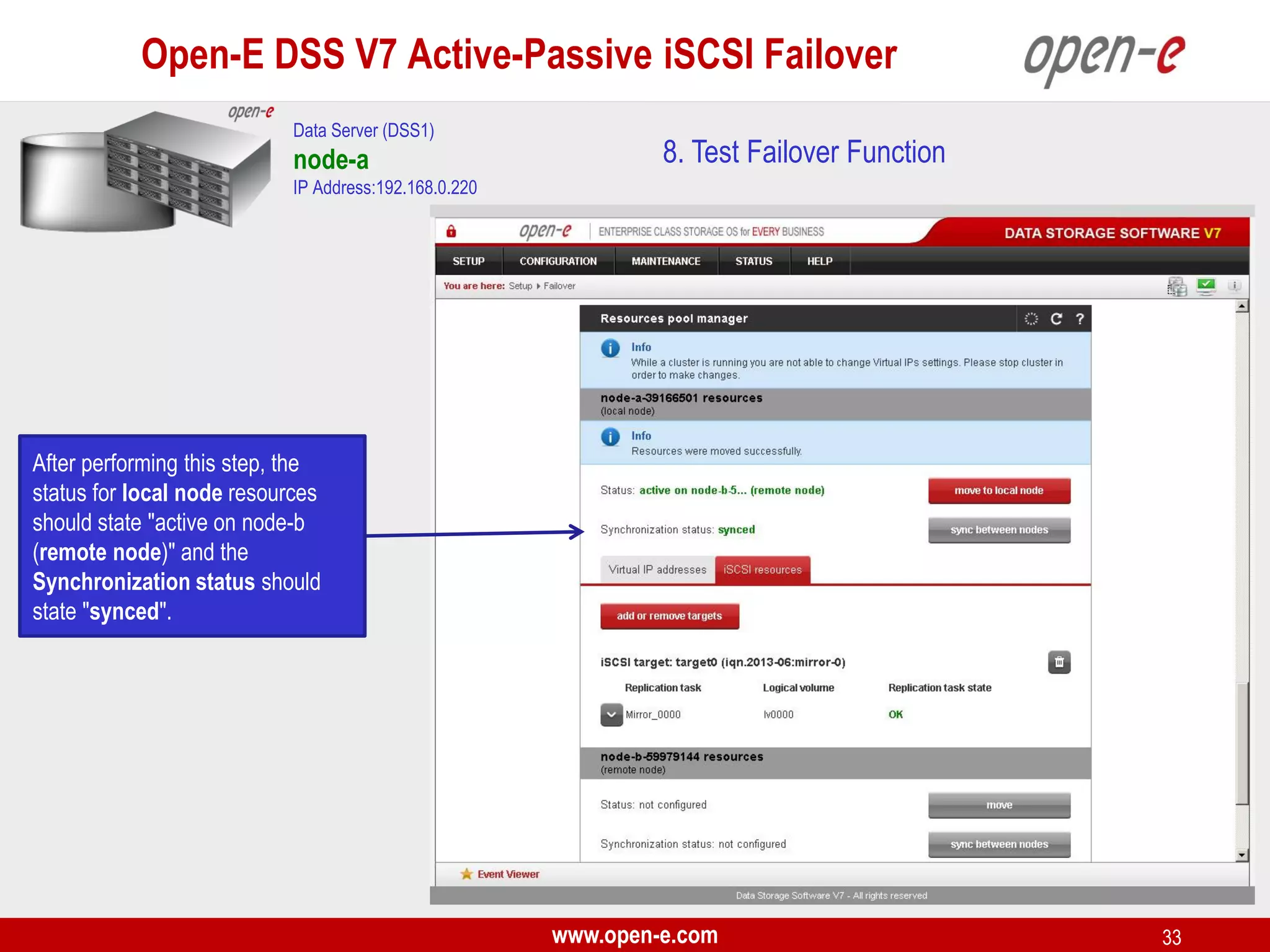

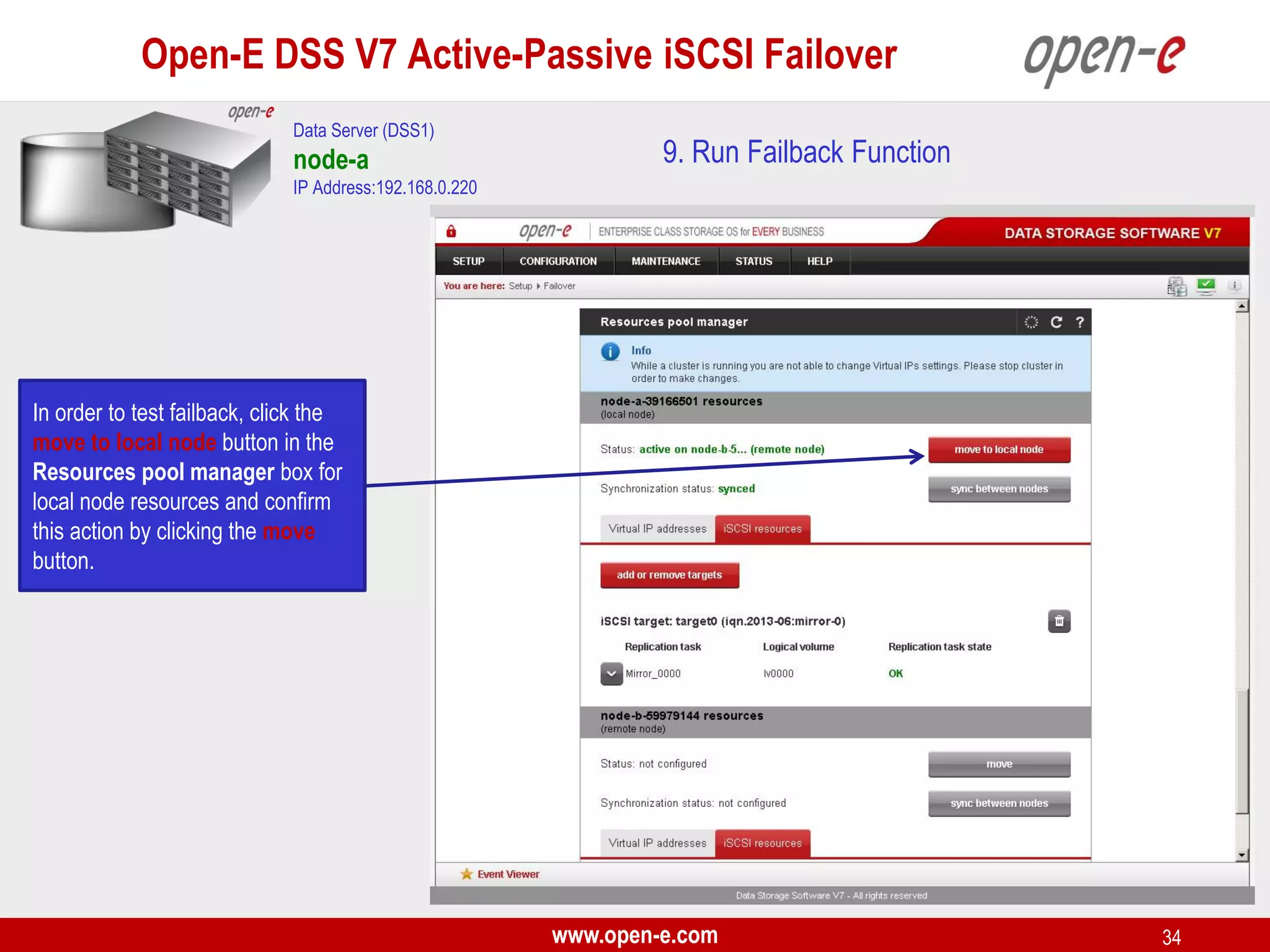

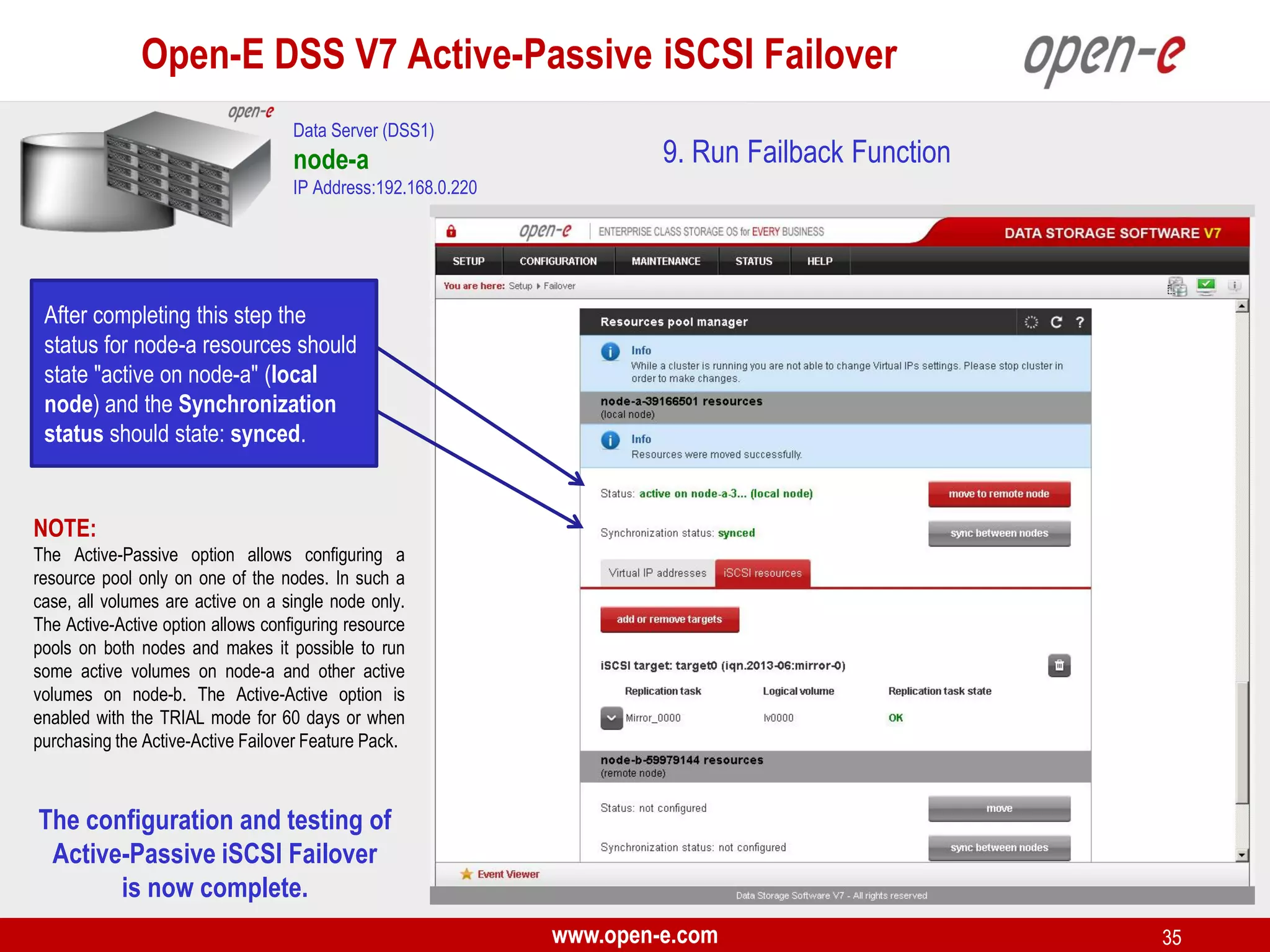

This document is a step-by-step guide to setting up active-passive iSCSI failover between two Open-E DSS V7 nodes (node-a and node-b). The steps are: 1) configure the hardware and network settings on each node; 2) create volume groups and iSCSI volumes for data replication on each node; 3) configure volume replication between the nodes; 4) create iSCSI targets on each node; 5) configure the failover settings; and 6) test the failover functionality. The key points are replicating the iSCSI volumes from the active node-a to the passive node-b, and configuring virtual IP addresses and targets on each node so that failover is seamless for clients.
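The testing step (step 6) can also be checked from a client machine. The following is a minimal sketch, not part of the Open-E procedure itself: it assumes the targets are served on the standard iSCSI TCP port 3260, and the virtual IP `192.0.2.10` is a hypothetical placeholder for whatever address is configured in the failover settings. The function names `portal_reachable` and `failover_ok` are illustrative only.

```python
import socket

# Standard iSCSI target port; assumed here, adjust if the targets use another port.
ISCSI_PORT = 3260


def portal_reachable(host: str, port: int = ISCSI_PORT, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to the iSCSI portal can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def failover_ok(reachable_before: bool, reachable_after: bool) -> bool:
    """The test passes only if the virtual IP answered both before and
    after the active node (node-a) was taken offline."""
    return reachable_before and reachable_after


if __name__ == "__main__":
    virtual_ip = "192.0.2.10"  # hypothetical virtual IP, replace with your own
    before = portal_reachable(virtual_ip)
    input("Take node-a offline, wait for failover, then press Enter... ")
    after = portal_reachable(virtual_ip)
    print("failover test passed" if failover_ok(before, after) else "failover test FAILED")
```

A successful TCP connection only shows that a portal is listening on the virtual IP. It does not confirm that the replicated volume data is consistent, so it complements rather than replaces the checks in the guide.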