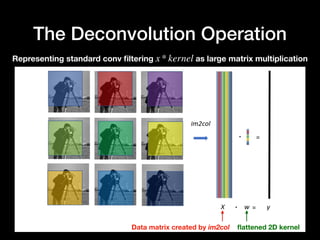

This paper proposes a method called network deconvolution to remove pixel-wise and channel-wise correlations from the inputs of convolutional layers. Rather than learning the decorrelation matrix by gradient descent, it computes the matrix from the statistics of each layer's input during training and uses it to whiten that input, removing redundancy before the convolution is applied. Experiments show that it converges faster than batch normalization and achieves better accuracy on image classification tasks. The method is inspired by the decorrelation observed in the animal visual cortex and yields sparser representations.
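The whitening step can be illustrated with a minimal NumPy sketch. It assumes that the decorrelation matrix is the inverse square root of the covariance of the im2col patch matrix, D = (Cov + εI)^(-1/2), and that the statistics come from a single input image. The helper names (`im2col`, `deconv_matrix`, `deconv_conv2d`) and the `eps` regularizer are hypothetical choices made for this example. For clarity, the sketch uses an exact eigendecomposition, whereas a practical implementation would more likely use a cheaper iterative approximation such as Newton-Schulz. It also ignores batching and inference-time statistics.

```python
import numpy as np


def im2col(x: np.ndarray, k: int) -> np.ndarray:
    """Flatten every k x k patch of x (shape (C, H, W), stride 1, no padding).

    Returns an array of shape (num_patches, C * k * k); row i * W_out + j holds
    the patch whose top-left corner is at (i, j).
    """
    _, h, w = x.shape
    rows = [
        x[:, i:i + k, j:j + k].reshape(-1)
        for i in range(h - k + 1)
        for j in range(w - k + 1)
    ]
    return np.stack(rows)


def deconv_matrix(cols: np.ndarray, eps: float = 1e-5):
    """Return the patch mean and D = (Cov + eps * I)^(-1/2) for a patch matrix."""
    mean = cols.mean(axis=0)
    centered = cols - mean
    cov = centered.T @ centered / cols.shape[0]
    # cov is symmetric, so eigh returns real eigenvalues and orthonormal eigenvectors.
    eigvals, eigvecs = np.linalg.eigh(cov + eps * np.eye(cov.shape[0]))
    # V * diag(lambda^-1/2) * V^T, built by scaling the eigenvector columns.
    d = (eigvecs * eigvals ** -0.5) @ eigvecs.T
    return mean, d


def deconv_conv2d(x: np.ndarray, weight: np.ndarray, eps: float = 1e-5) -> np.ndarray:
    """Whiten the patches of x, then apply weight of shape (C_out, C, k, k)."""
    c_out, _, k, _ = weight.shape
    cols = im2col(x, k)
    mean, d = deconv_matrix(cols, eps)
    white = (cols - mean) @ d  # decorrelated patches, covariance close to identity
    out = white @ weight.reshape(c_out, -1).T  # shape (num_patches, C_out)
    h_out, w_out = x.shape[1] - k + 1, x.shape[2] - k + 1
    return out.T.reshape(c_out, h_out, w_out)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    x = rng.normal(size=(3, 16, 16))
    x[1] += 0.9 * x[0]  # make channels 0 and 1 correlated
    w = rng.normal(size=(4, 3, 3, 3))
    print(deconv_conv2d(x, w).shape)  # (4, 14, 14)
```

In this toy input, channels 0 and 1 are deliberately correlated. A plain convolution would see that redundancy, but here the filters see patches whose sample covariance is approximately the identity.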