MongoDB performs best when documents are grouped together and data is written and indexed in a way that minimizes disk seeks. Specifically:

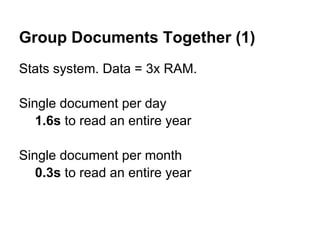

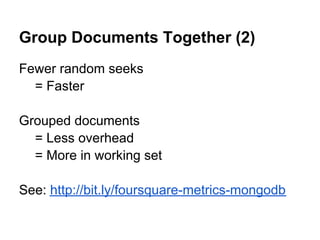

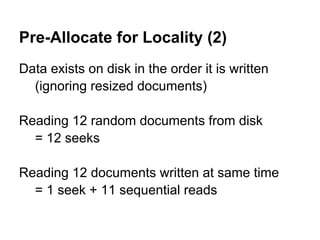

1) Group related documents together to reduce the number of random disk seeks needed to retrieve them.

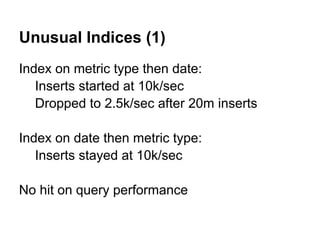

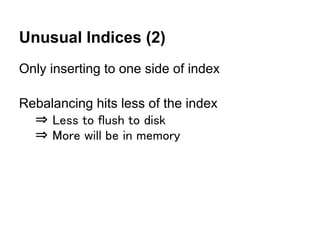

2) Create indexes on fields that data is often inserted on to improve insertion performance and keep more data in memory.

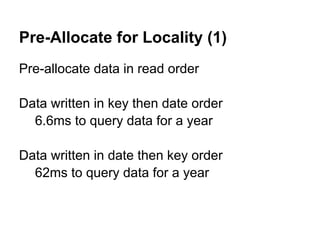

3) Pre-allocate data on disk in the order it will be read to minimize disk seeks during queries. Reading sequentially stored data requires fewer seeks than randomly accessing documents.

![[More]

MongoDB Performance

Tips

Colin Howe

@colinhowe

http://www.colinhowe.co.uk](https://image.slidesharecdn.com/moremongodbperformancetips-120423141130-phpapp02/75/More-MongoDB-Performance-Tips-1-2048.jpg)