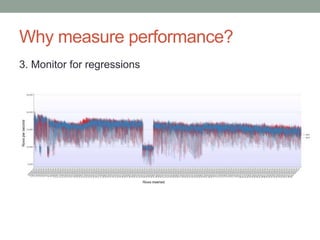

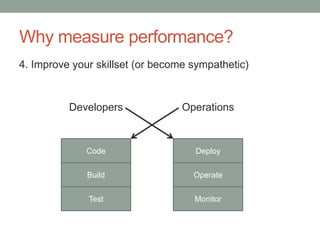

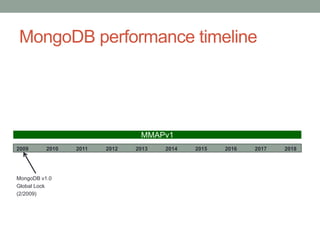

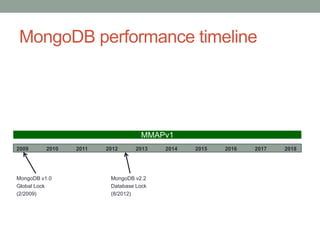

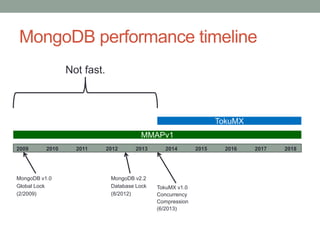

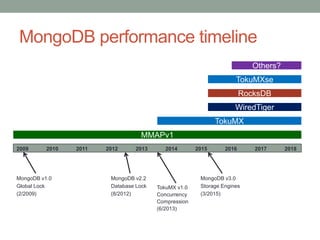

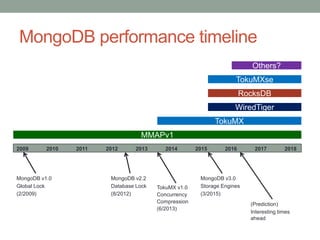

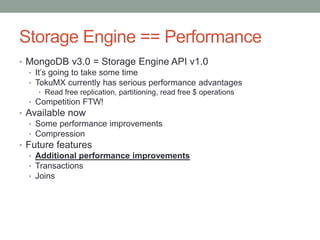

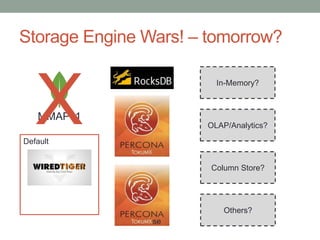

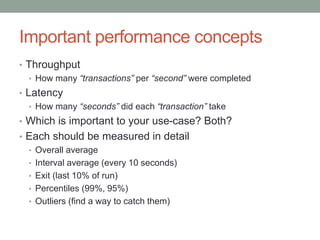

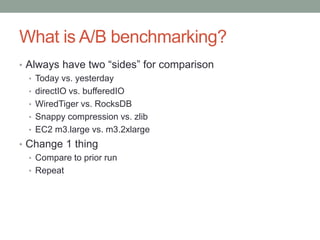

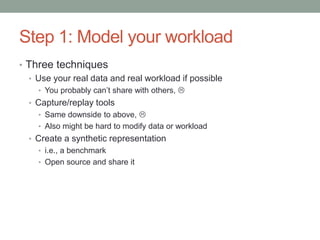

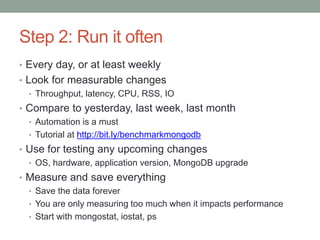

The document presents an overview of MongoDB performance, tracing the evolution of its storage engines and performance metrics from its inception in 2009 to the present. It emphasizes measuring performance through techniques such as modeling workloads and sharing benchmarks in order to optimize database usage. The author also discusses future developments and competitive dynamics in database technologies, particularly improvements in concurrency and replication mechanisms.