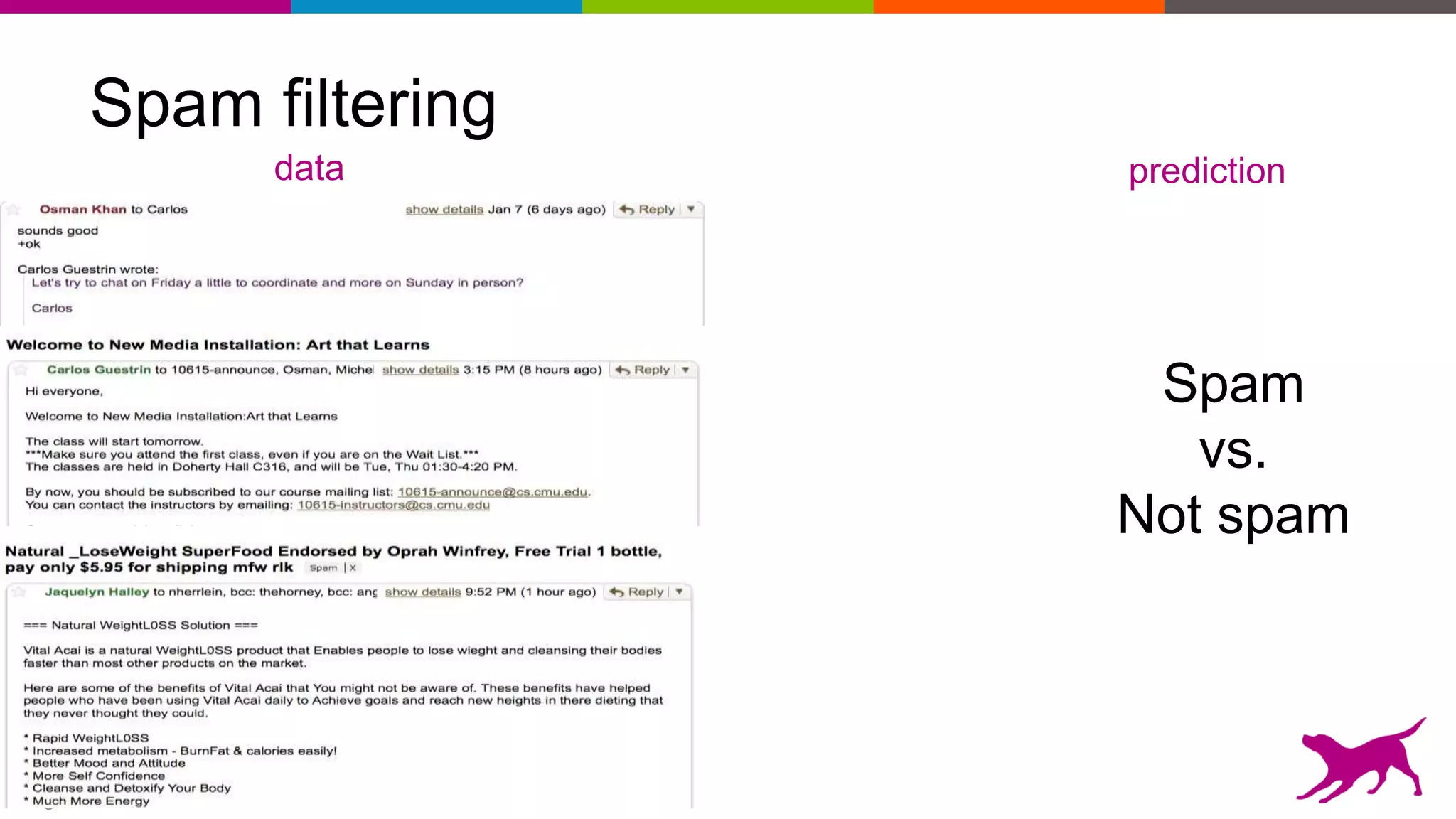

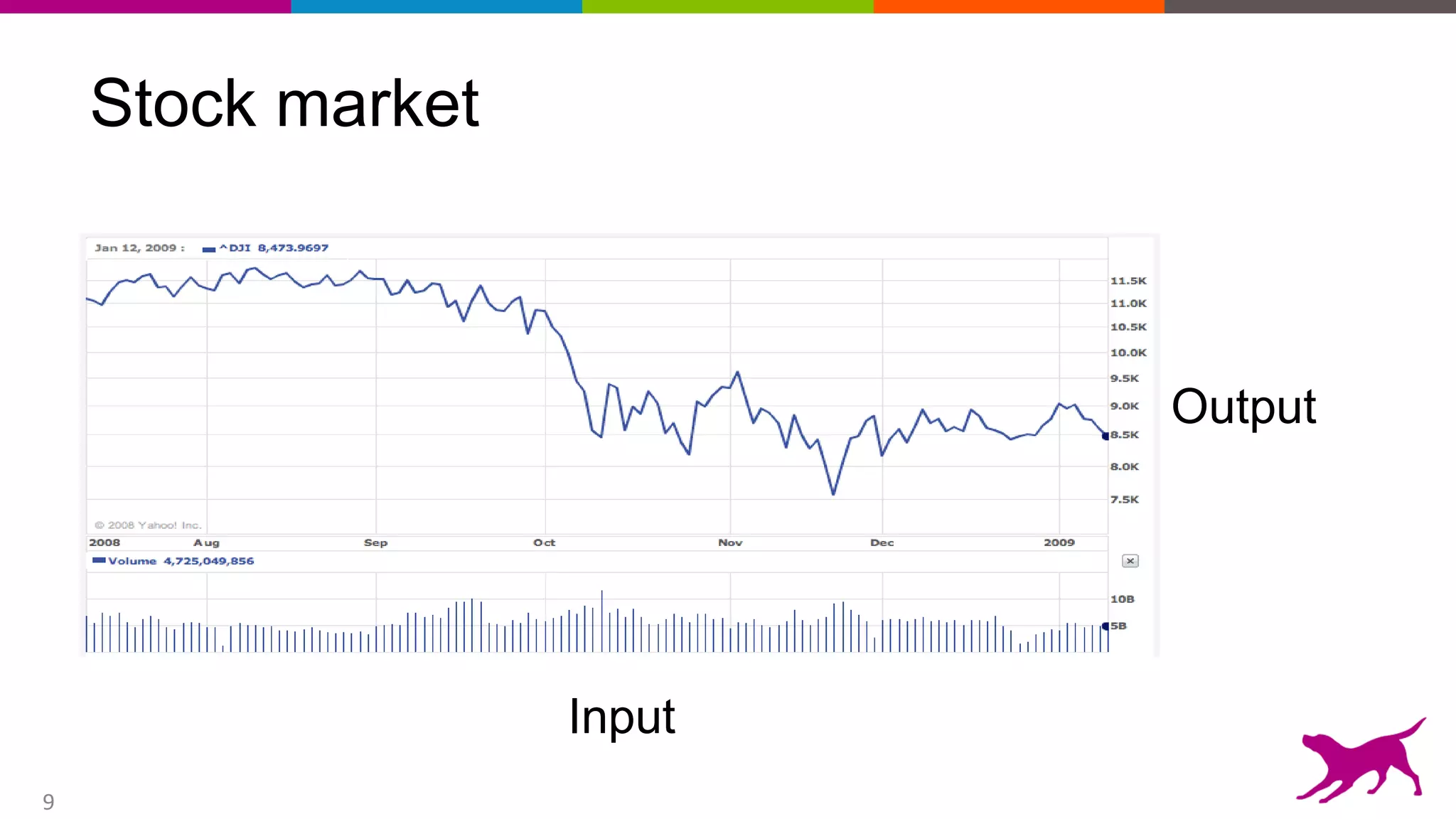

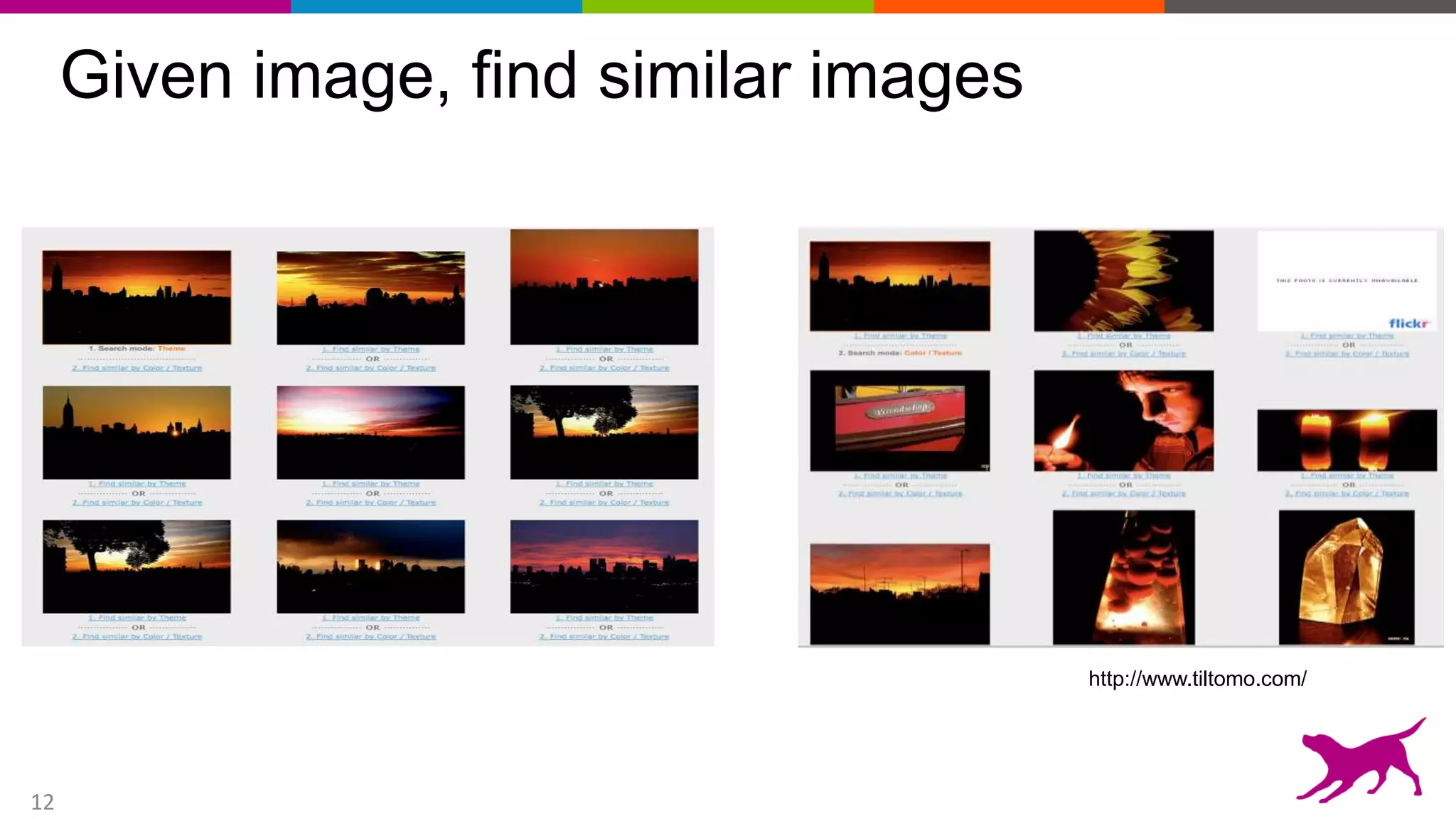

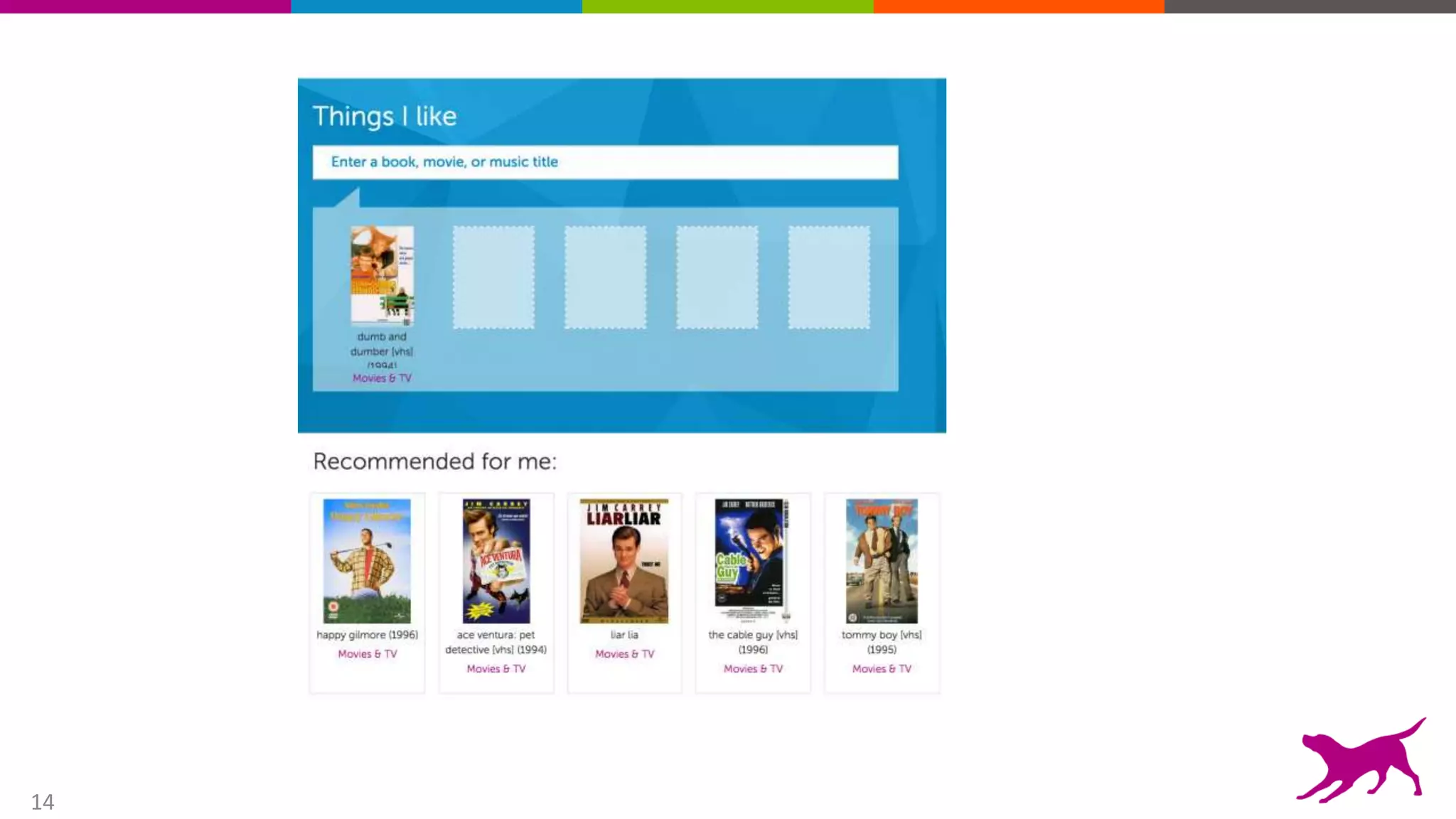

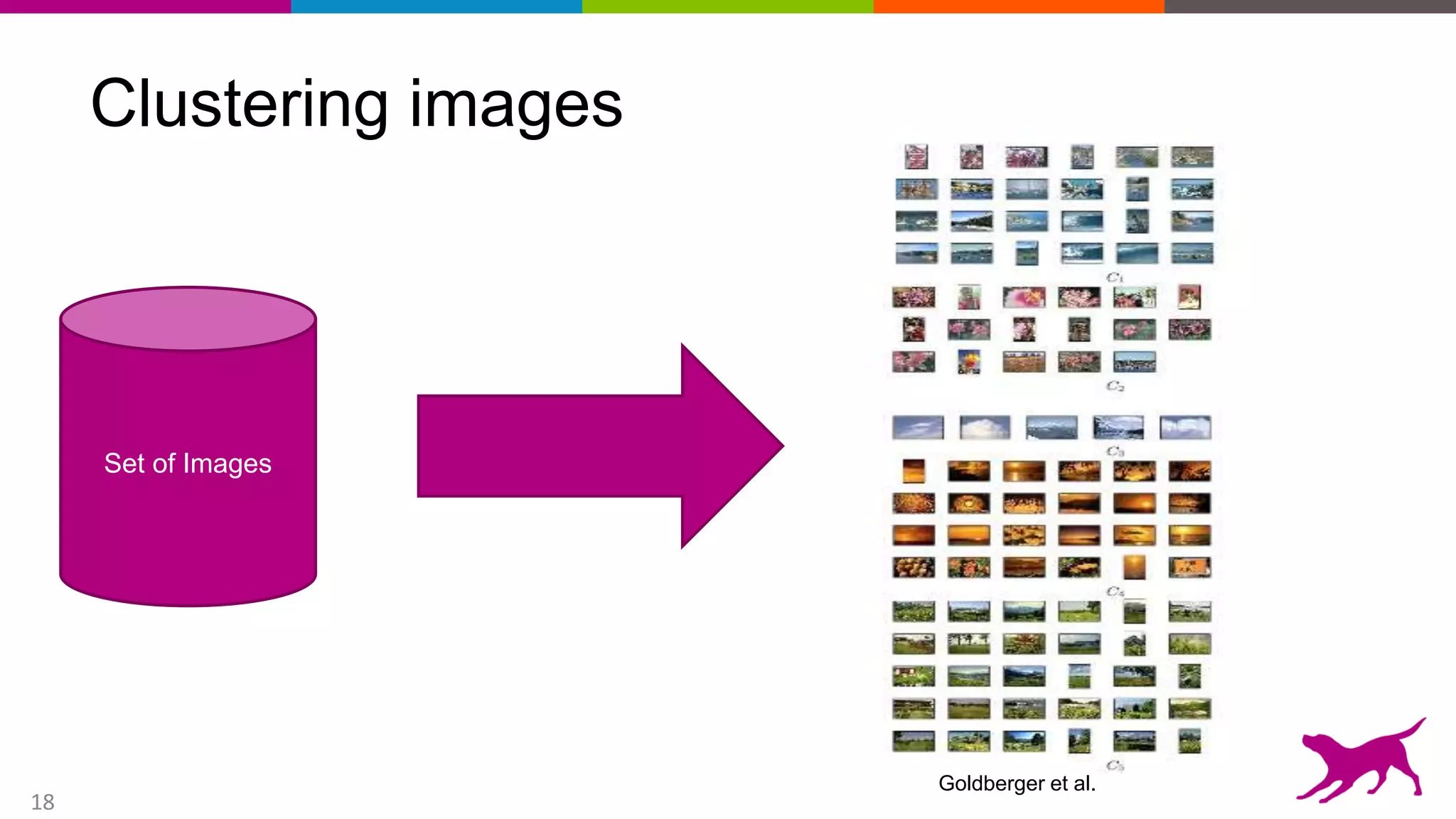

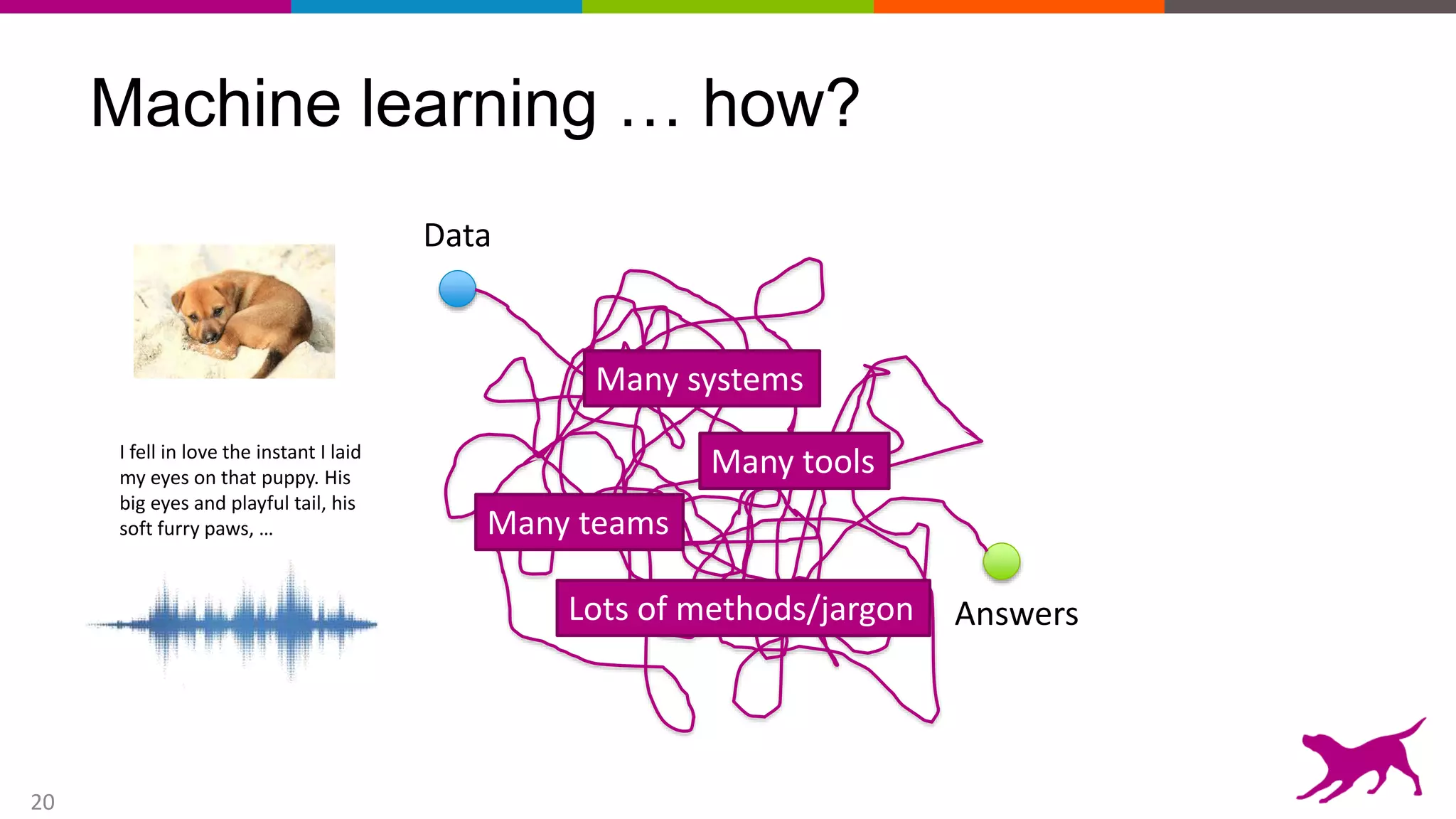

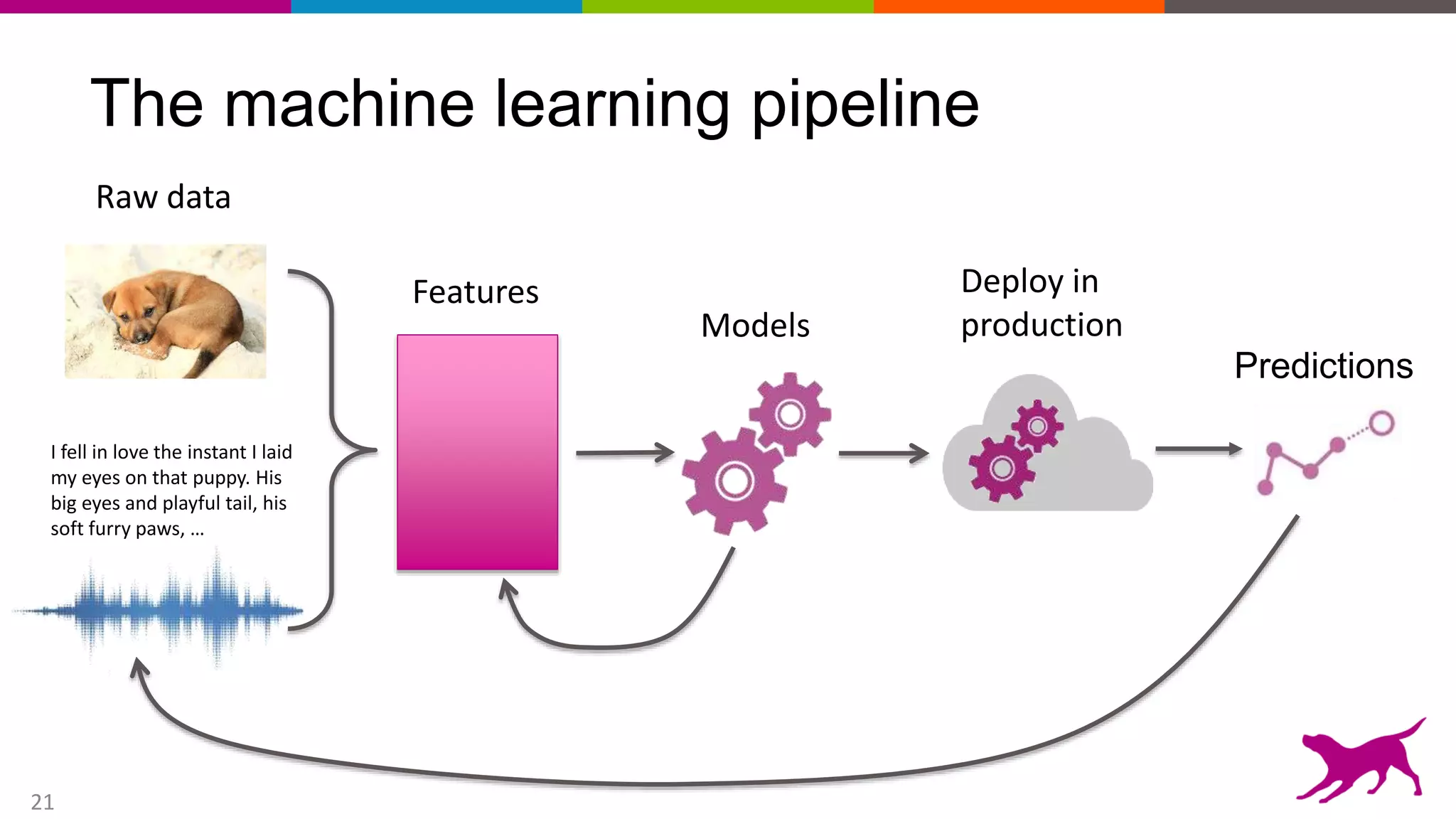

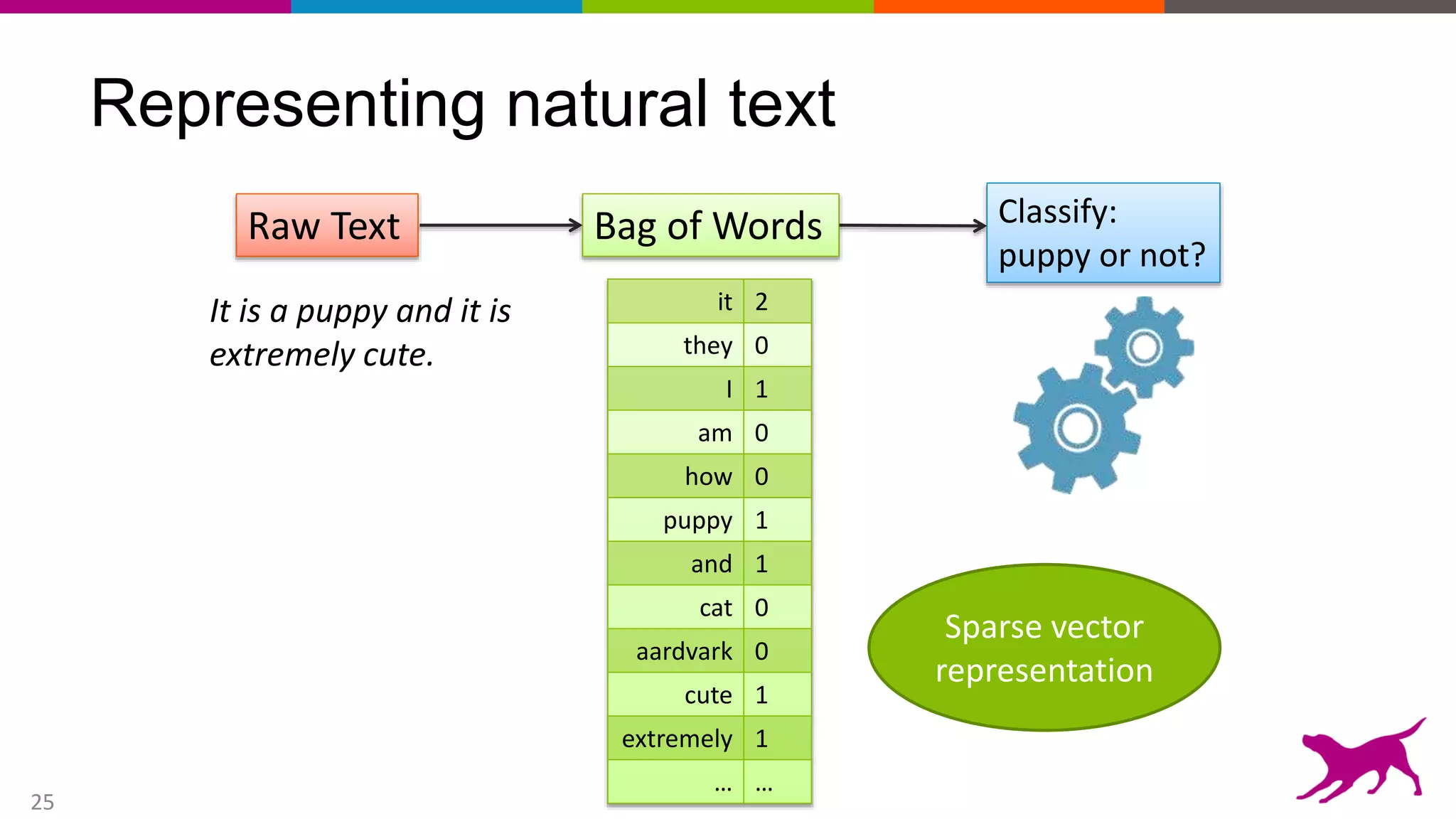

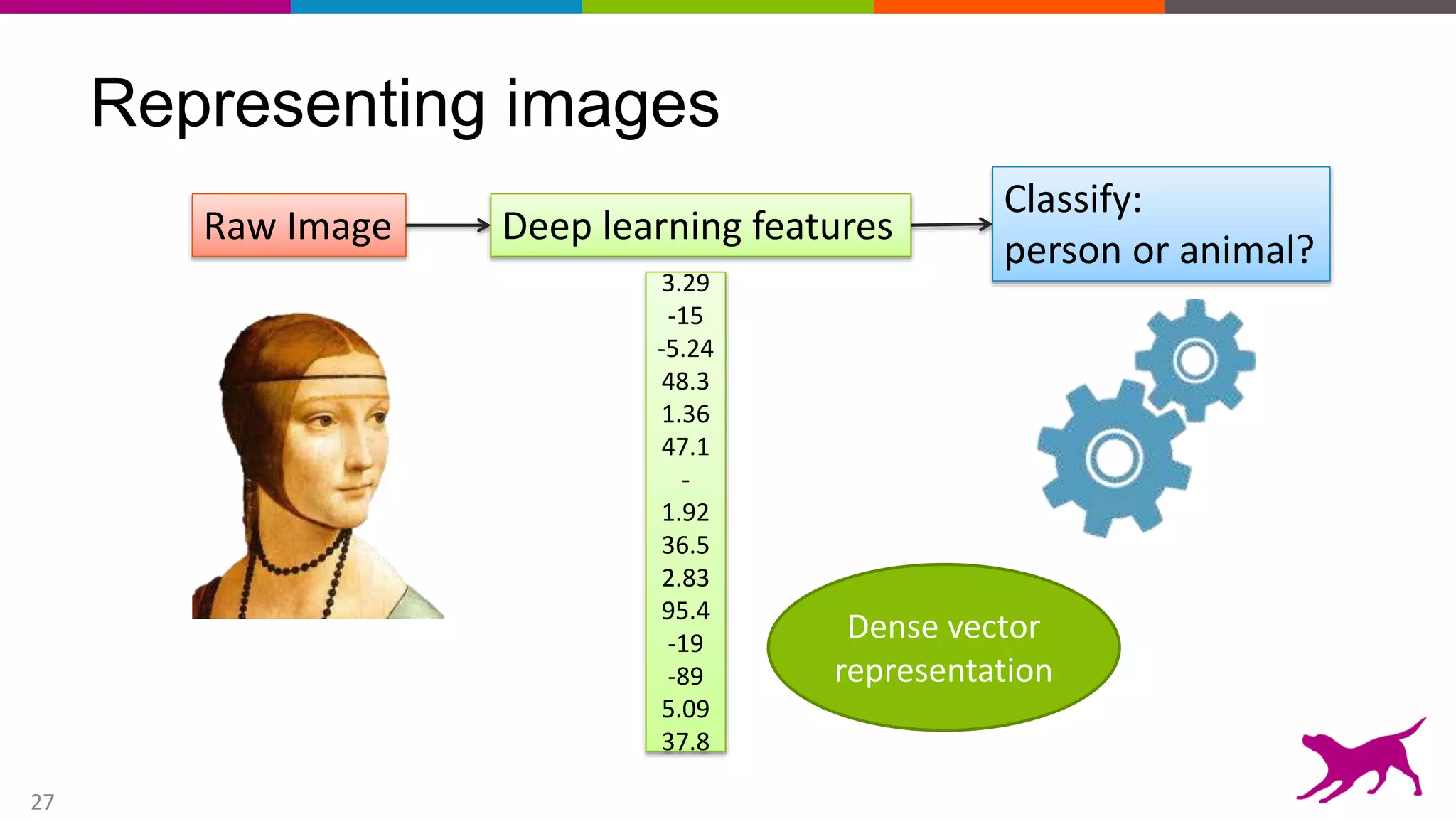

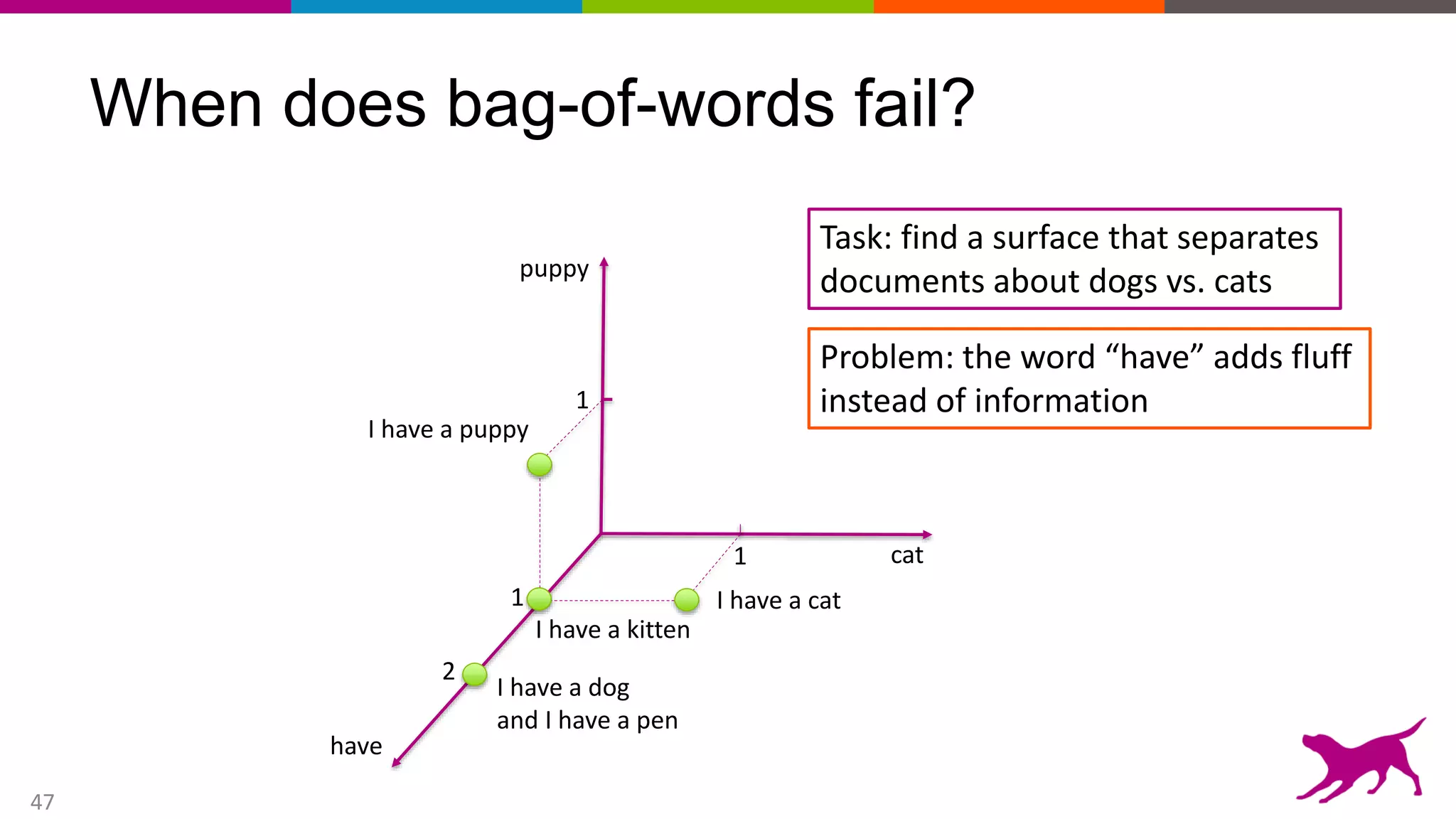

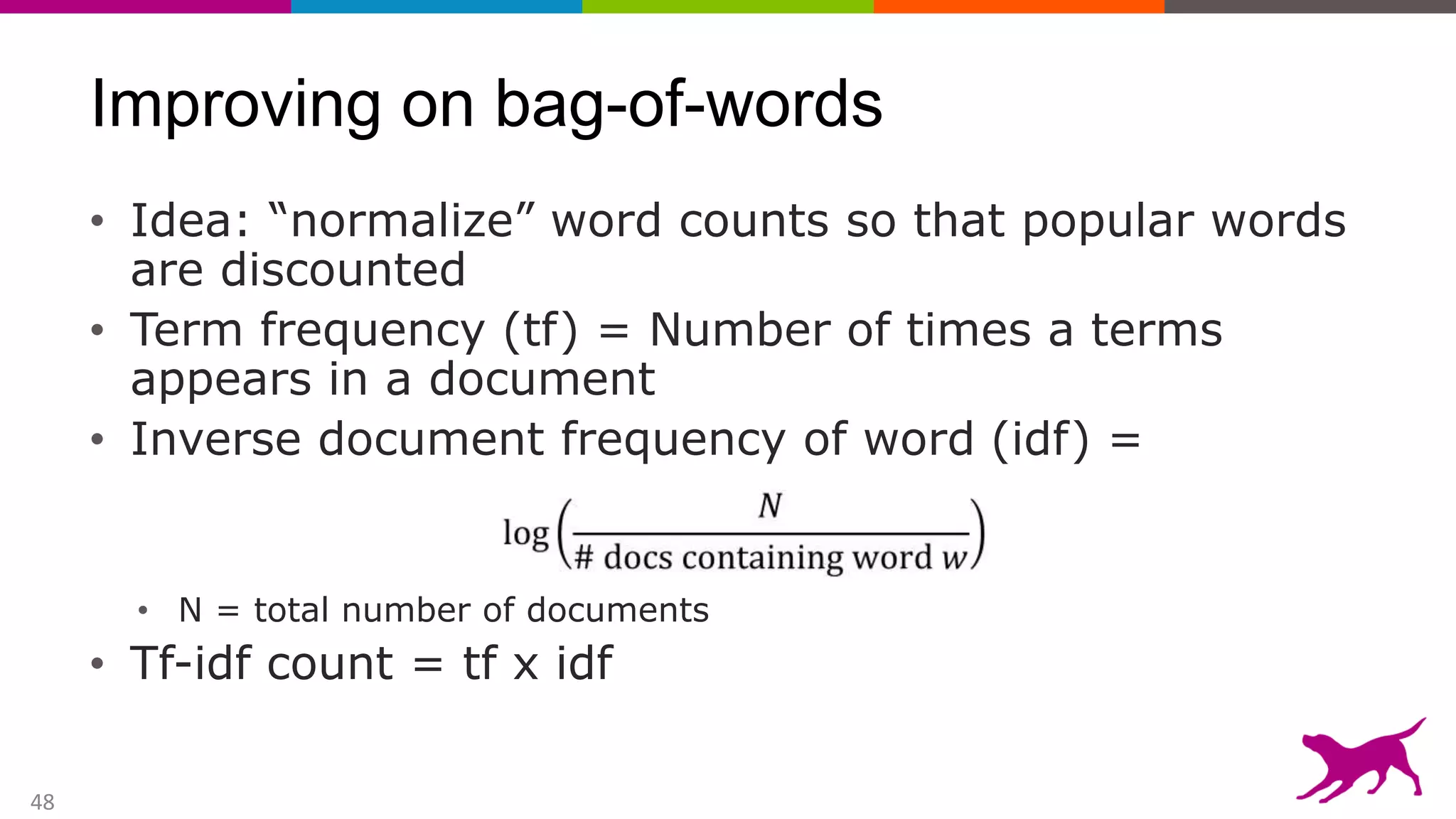

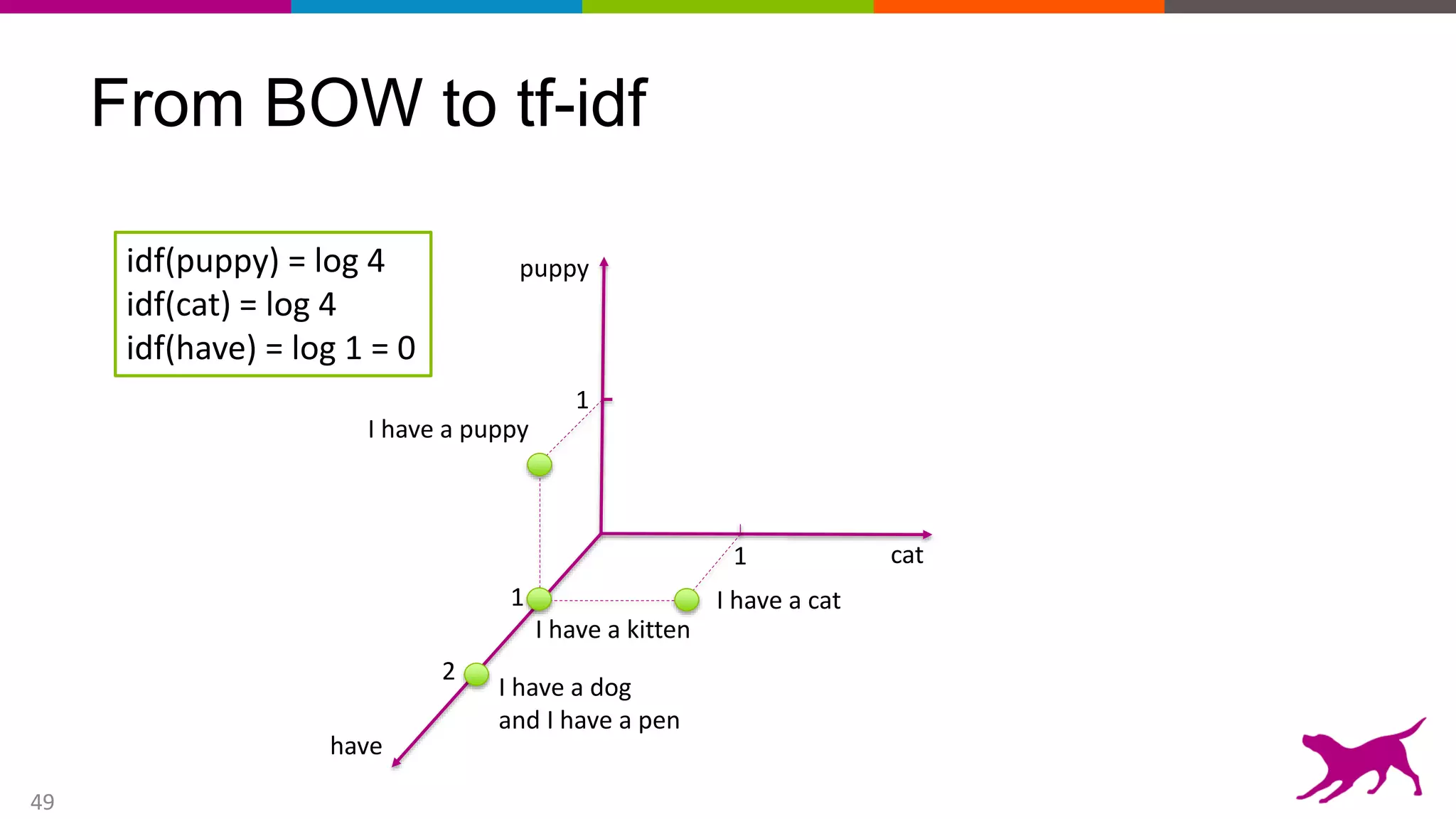

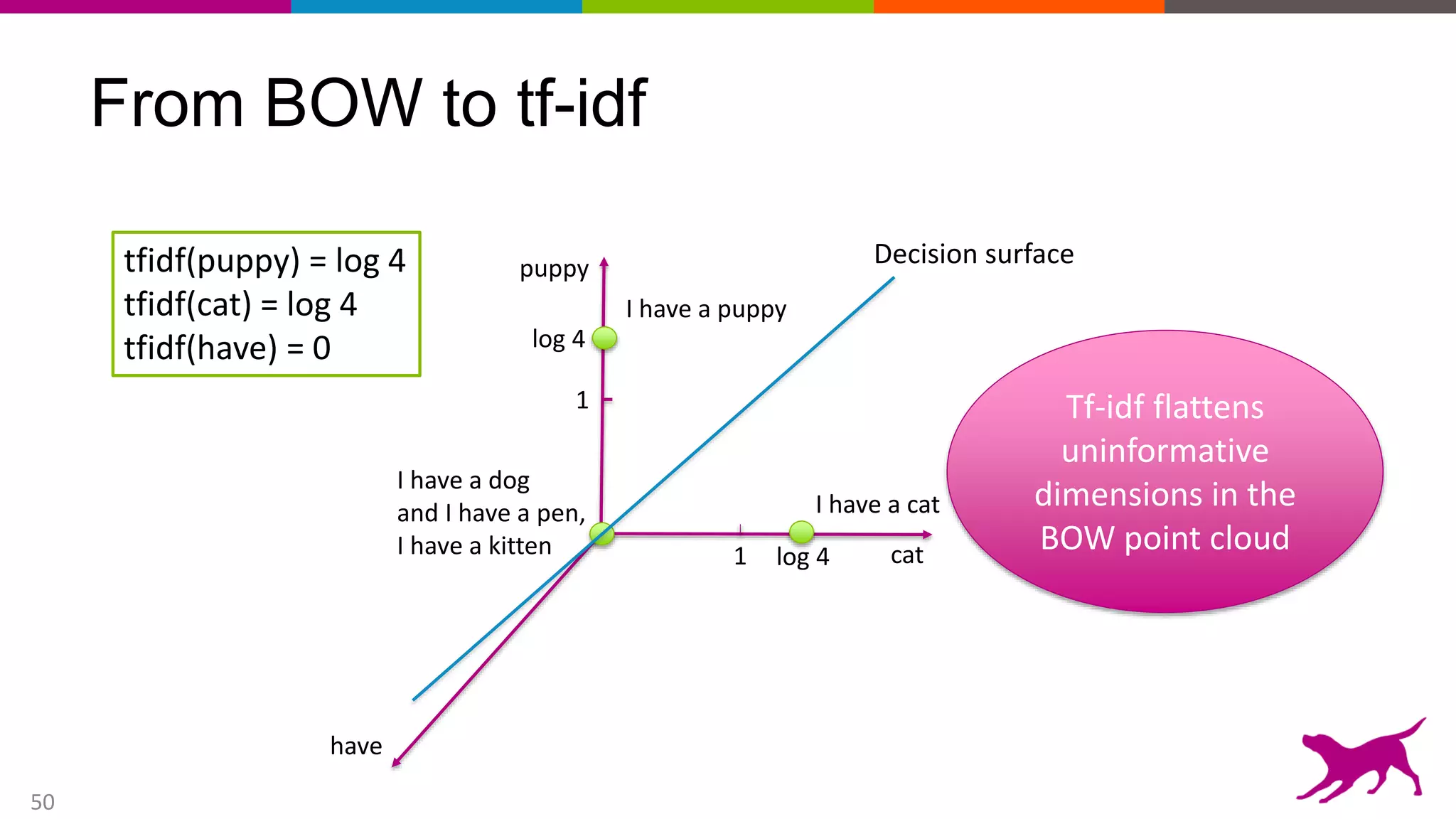

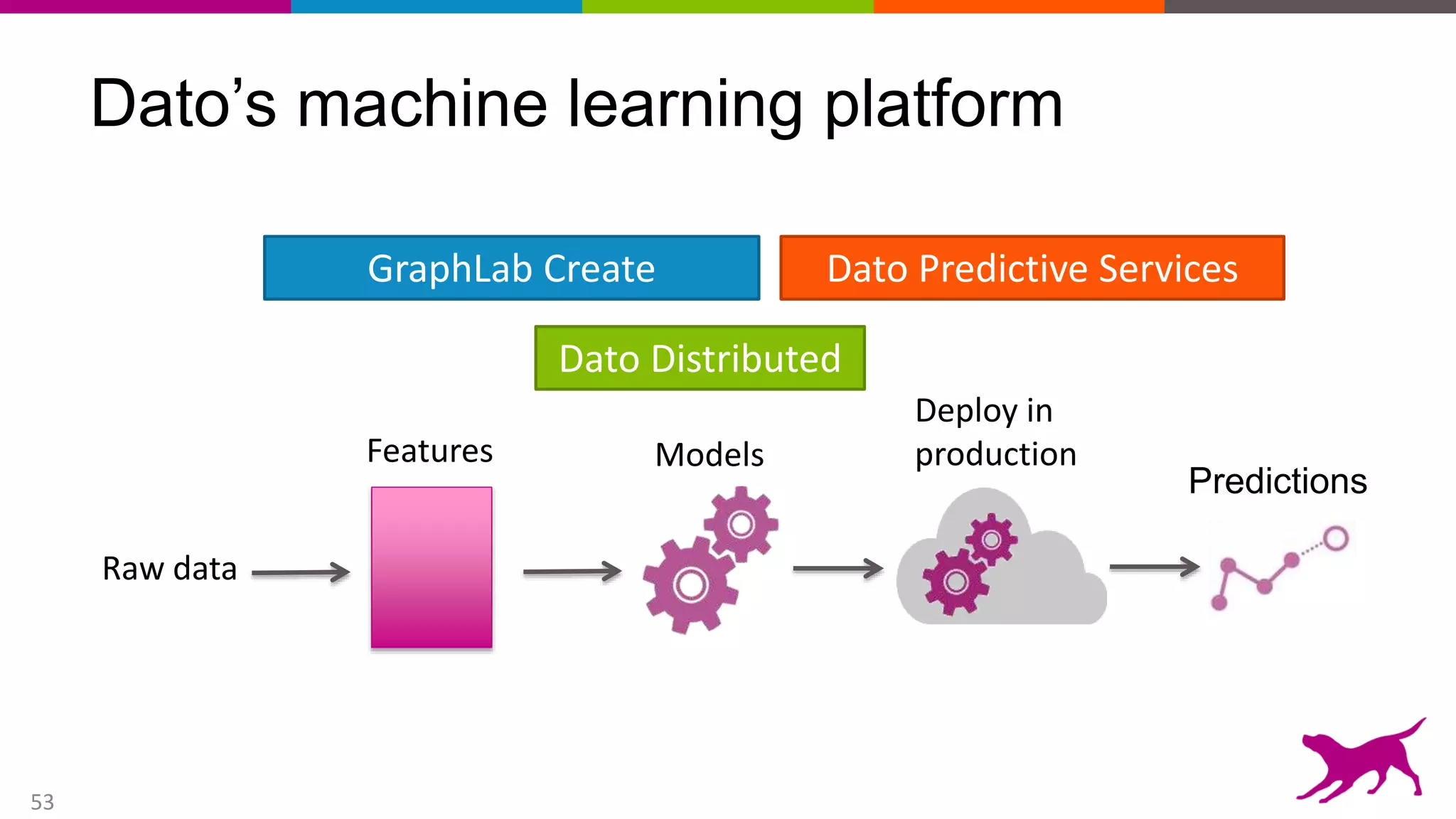

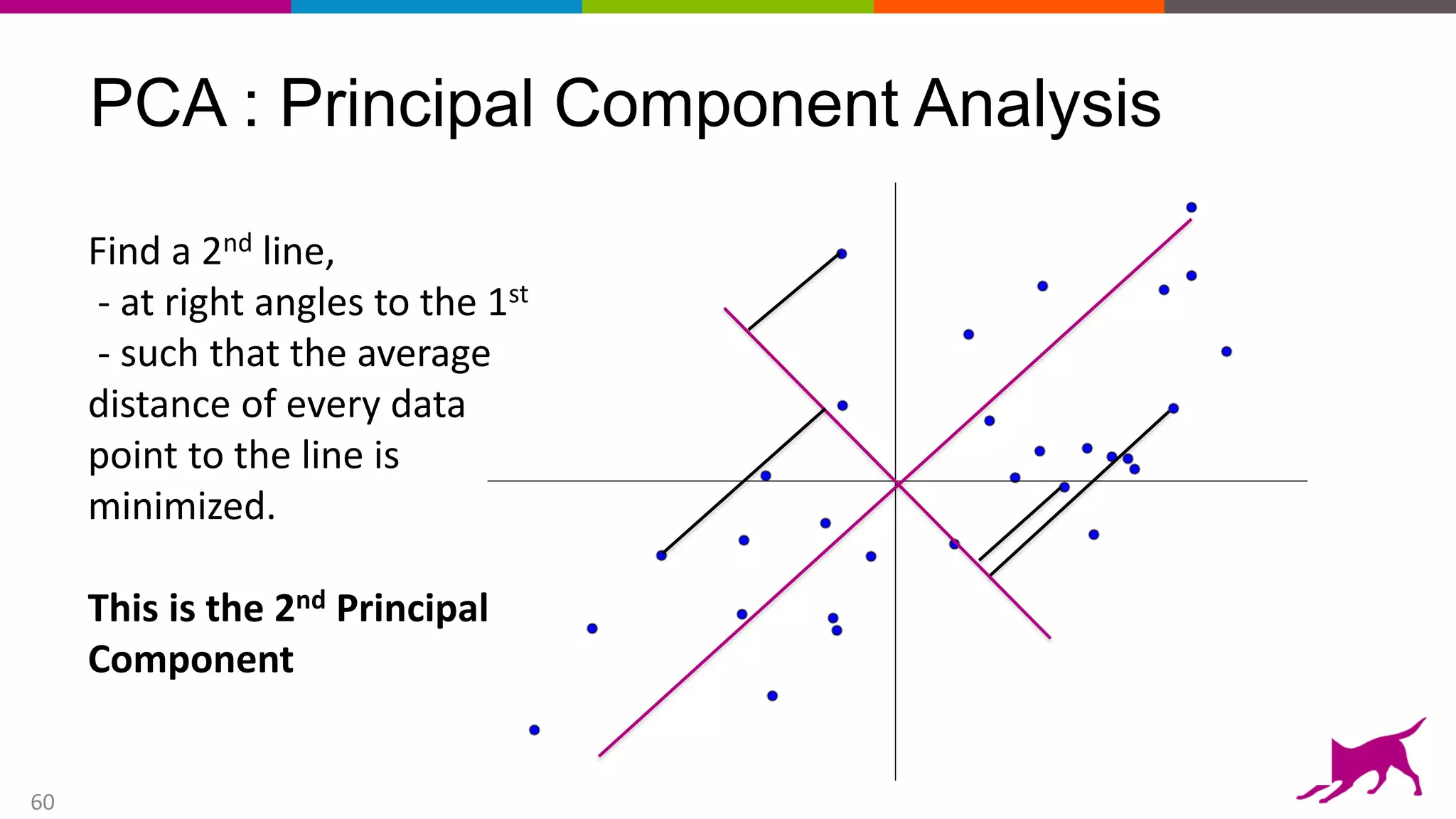

This document gives an overview of machine learning and feature engineering. It covers how machine learning is applied to classification, regression, similarity matching, and clustering, and explains feature engineering as the process of transforming raw data into numeric representations, called features, that machine learning models can use. It presents feature engineering techniques for text and images, such as bag-of-words and convolutional neural networks, and demonstrates dimensionality reduction with principal component analysis. It closes with information about upcoming machine learning tutorials and Dato's machine learning platform.
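
To make the bag-of-words idea mentioned above concrete, here is a minimal sketch in plain Python. It is not taken from the slides: the function names `build_vocabulary` and `bag_of_words`, the whitespace tokenizer, and the two sample sentences are all assumptions chosen for illustration. It shows how raw text can become a fixed-length numeric feature vector by counting word occurrences.

```python
from collections import Counter


def build_vocabulary(documents):
    """Map each distinct lowercase token to a column index (sorted order).

    Hypothetical helper: tokenization is a plain whitespace split.
    """
    tokens = {tok for doc in documents for tok in doc.lower().split()}
    return {tok: i for i, tok in enumerate(sorted(tokens))}


def bag_of_words(document, vocabulary):
    """Return a word-count vector for one document over a fixed vocabulary."""
    counts = Counter(document.lower().split())
    vector = [0] * len(vocabulary)
    for tok, n in counts.items():
        # Tokens outside the vocabulary are ignored.
        if tok in vocabulary:
            vector[vocabulary[tok]] = n
    return vector


# Illustrative sample data, not from the source.
docs = ["the cat sat", "the dog sat on the cat"]
vocab = build_vocabulary(docs)
features = [bag_of_words(d, vocab) for d in docs]

print(vocab)     # {'cat': 0, 'dog': 1, 'on': 2, 'sat': 3, 'the': 4}
print(features)  # [[1, 0, 0, 1, 1], [1, 1, 1, 1, 2]]
```

Each document becomes a vector with one entry per vocabulary word, so word order is discarded and only counts remain. Real pipelines typically add steps such as punctuation handling, stop-word removal, or TF-IDF weighting, which this sketch leaves out.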