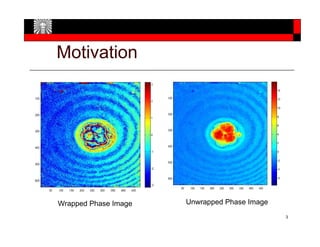

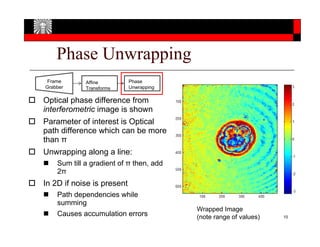

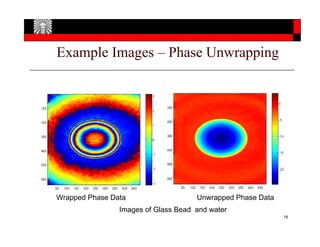

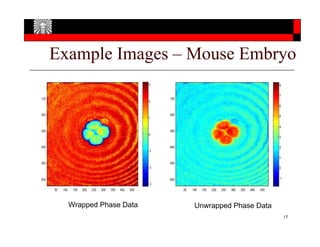

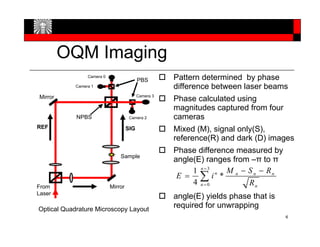

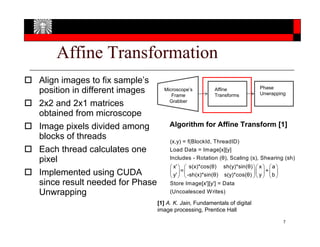

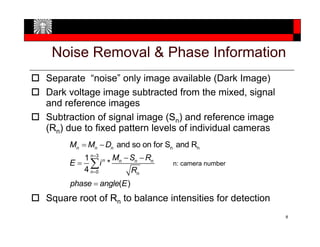

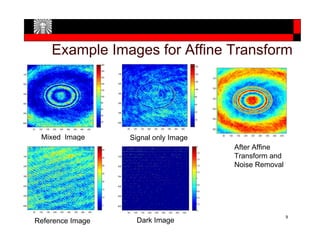

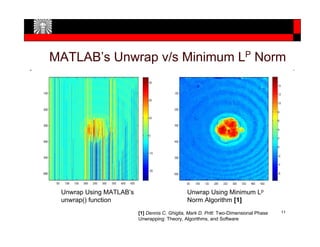

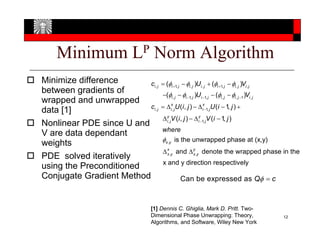

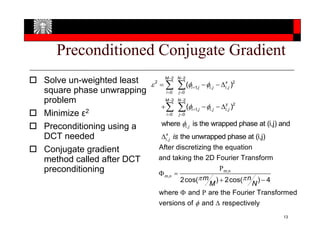

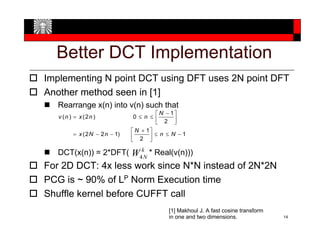

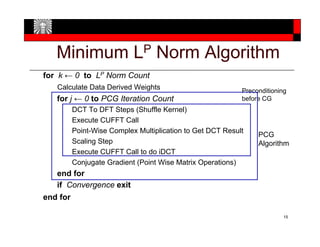

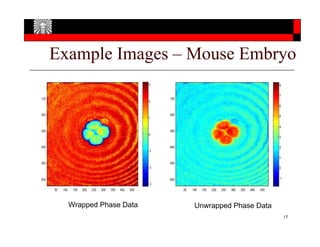

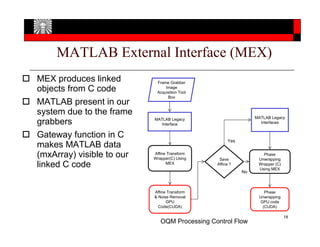

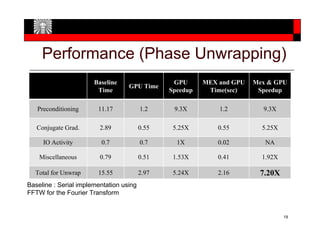

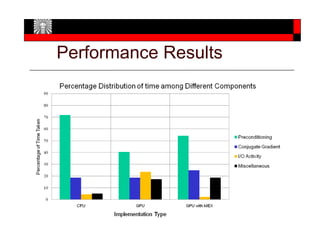

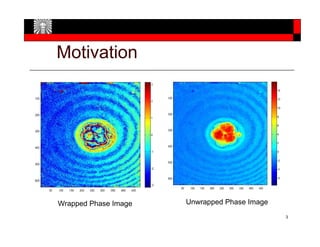

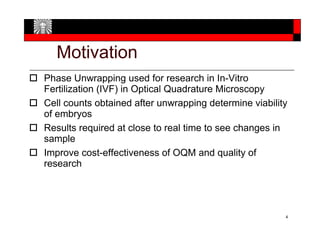

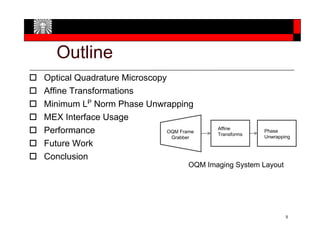

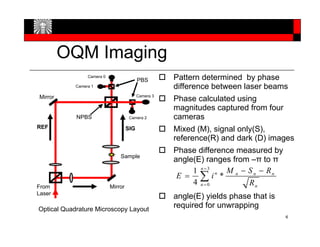

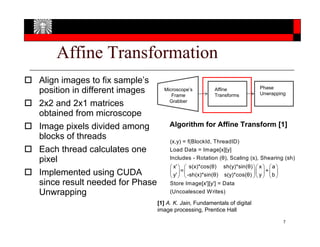

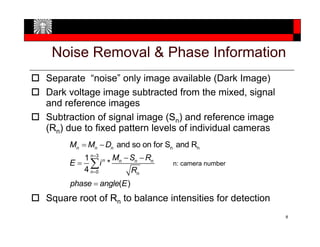

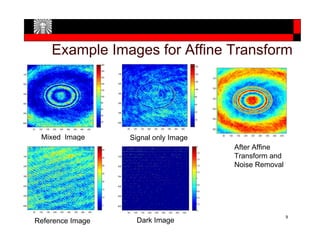

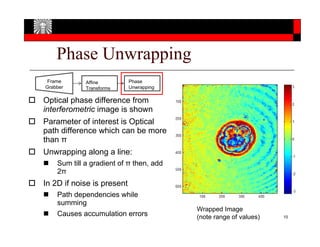

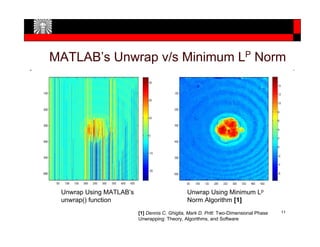

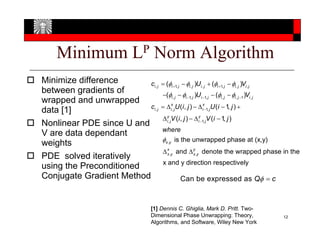

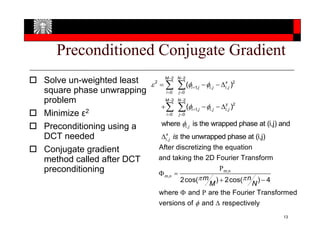

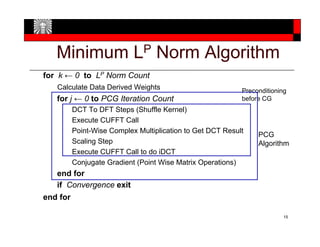

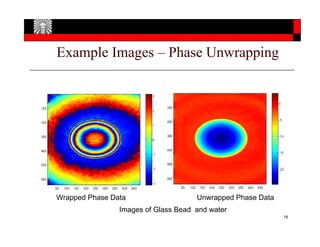

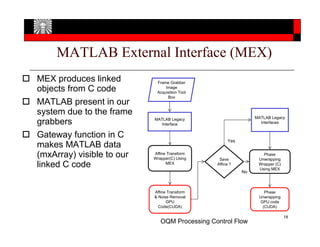

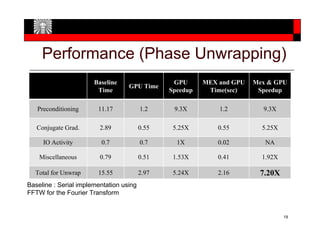

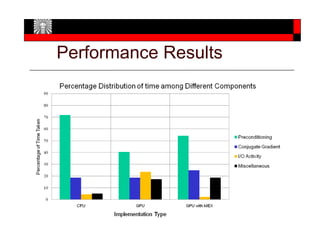

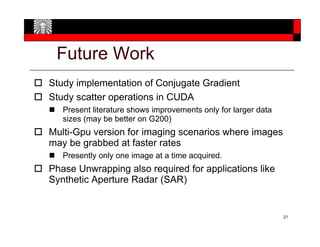

1. Phase unwrapping is used in optical quadrature microscopy to assess embryo viability: cells are counted in the unwrapped phase image. It must run at near real-time speeds so that changes in the sample can be analyzed.
2. The paper implements minimum Lp-norm phase unwrapping and affine transformations on a GPU to improve throughput and latency for optical microscopy research.
3. Performance results show a 5.24x speedup in total phase unwrapping time over a serial CPU implementation. Further optimizations, such as multi-GPU support, could raise speeds enough to handle higher image acquisition rates.
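To make the term "phase unwrapping" concrete, here is a minimal sketch in plain Python. It is not the minimum Lp-norm method the paper implements, and it does not run on a GPU. It shows the simplest one-dimensional approach (often attributed to Itoh), which integrates wrapped differences between neighboring samples and is valid only when the true phase changes by less than pi between neighbors. The function names `wrap` and `unwrap_1d` and the test signal are assumptions made for illustration.

```python
import math


def wrap(phase):
    """Wrap a phase value into the interval [-pi, pi)."""
    return (phase + math.pi) % (2 * math.pi) - math.pi


def unwrap_1d(wrapped):
    """Unwrap a 1D sequence by summing wrapped neighbor differences.

    Assumes the true phase changes by less than pi between adjacent
    samples and that the data are noise-free. This is an illustrative
    stand-in, not the minimum Lp-norm algorithm.
    """
    if not wrapped:
        return []
    out = [wrapped[0]]
    for prev, cur in zip(wrapped, wrapped[1:]):
        # The wrapped difference recovers the true local phase step.
        out.append(out[-1] + wrap(cur - prev))
    return out


# Hypothetical test signal: a phase ramp that exceeds 2*pi several times.
true_phase = [0.5 * k for k in range(20)]
measured = [wrap(p) for p in true_phase]   # what an instrument would report
recovered = unwrap_1d(measured)
```

On real two-dimensional images, noise and discontinuities make this path-following approach unreliable, which is one reason optimization-based methods such as minimum Lp-norm unwrapping are used instead, at a much higher computational cost.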