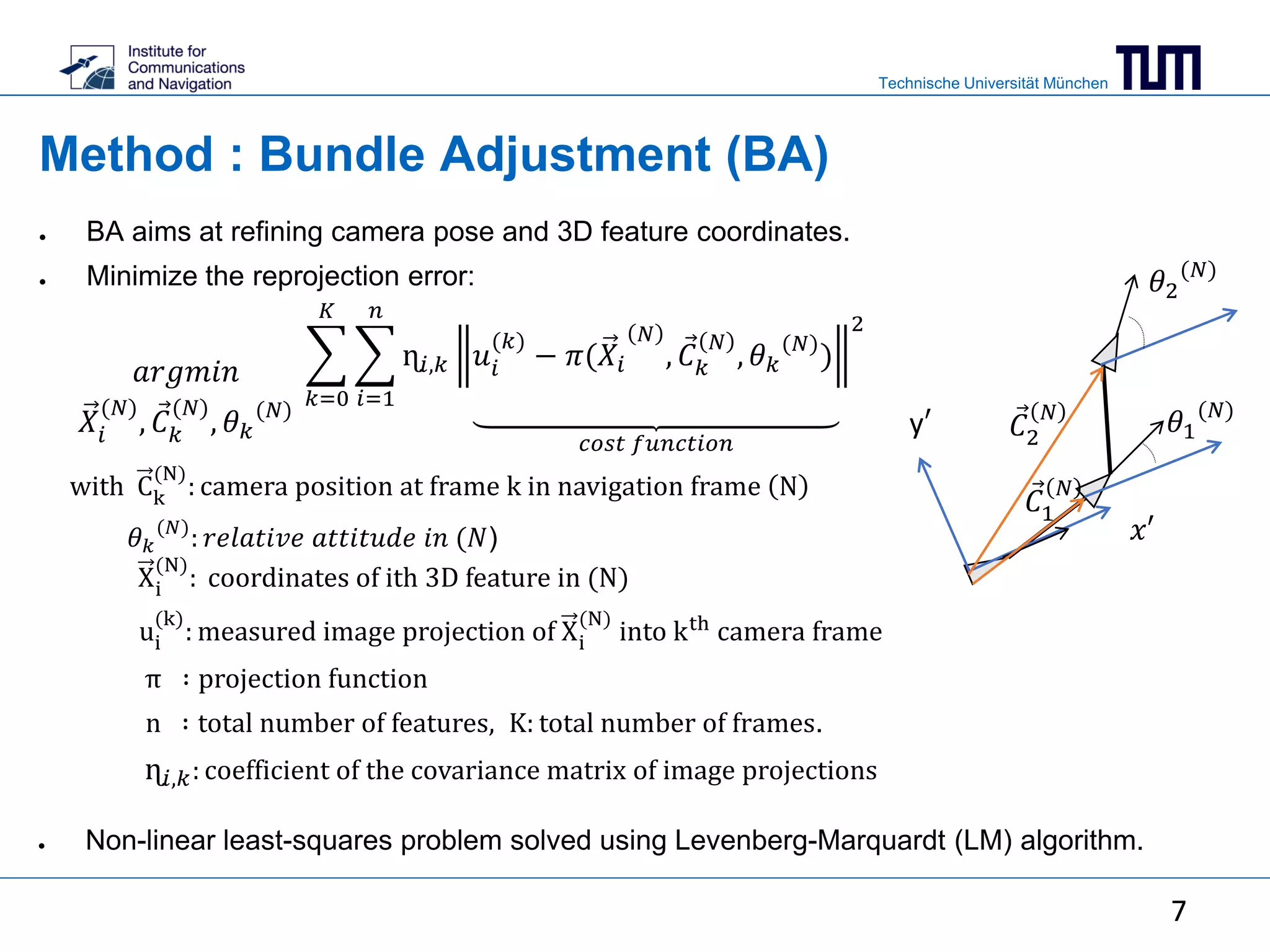

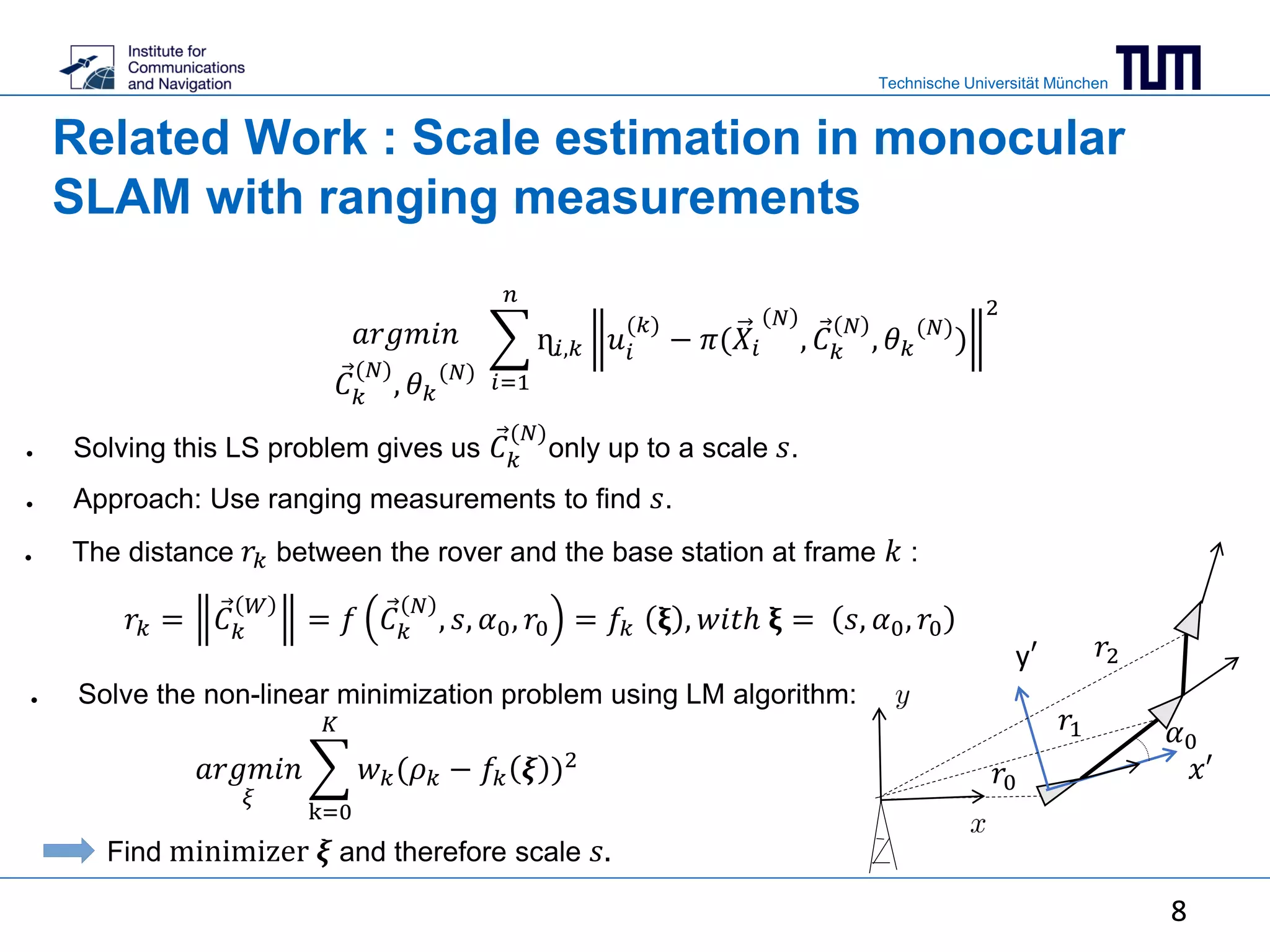

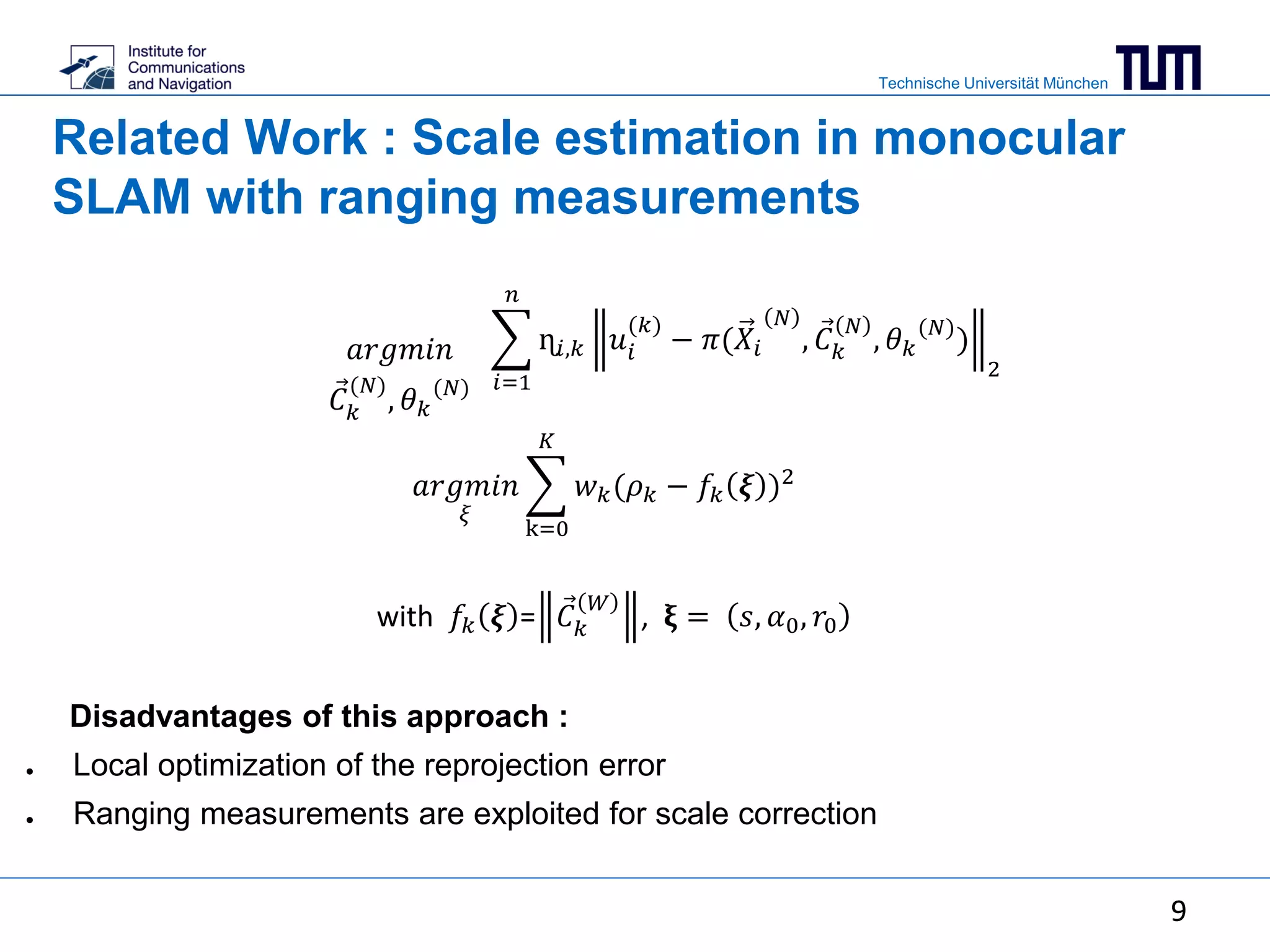

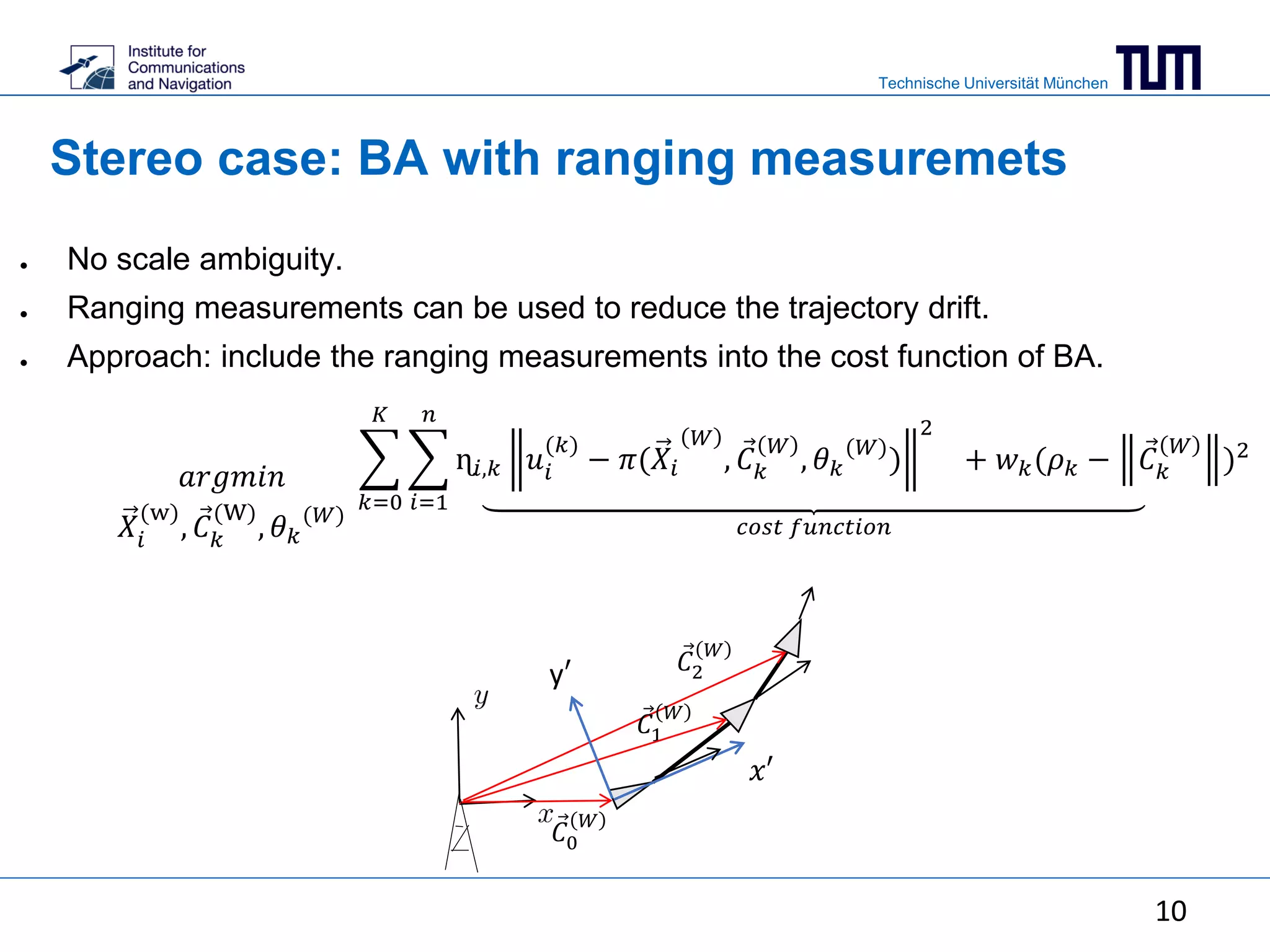

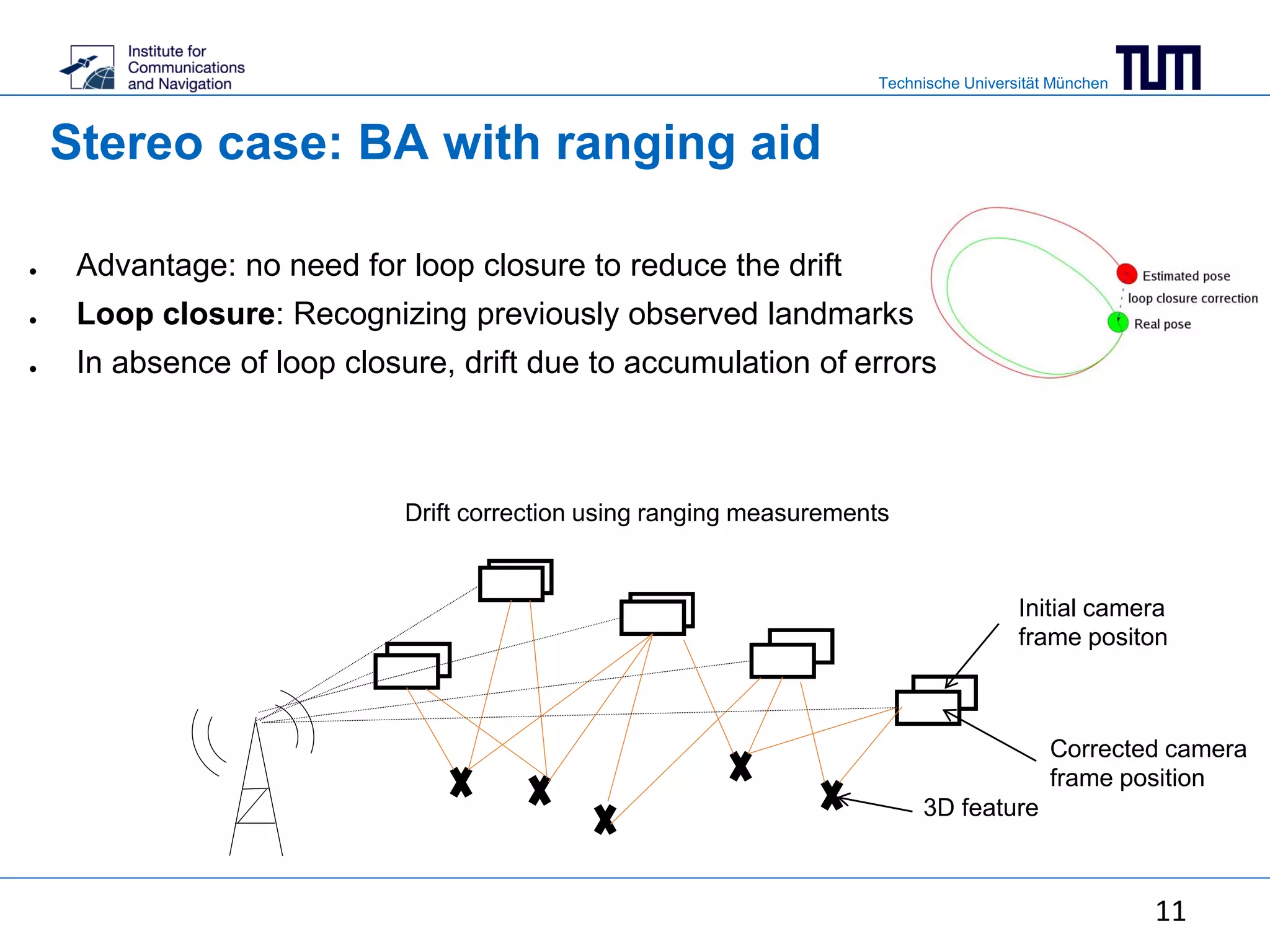

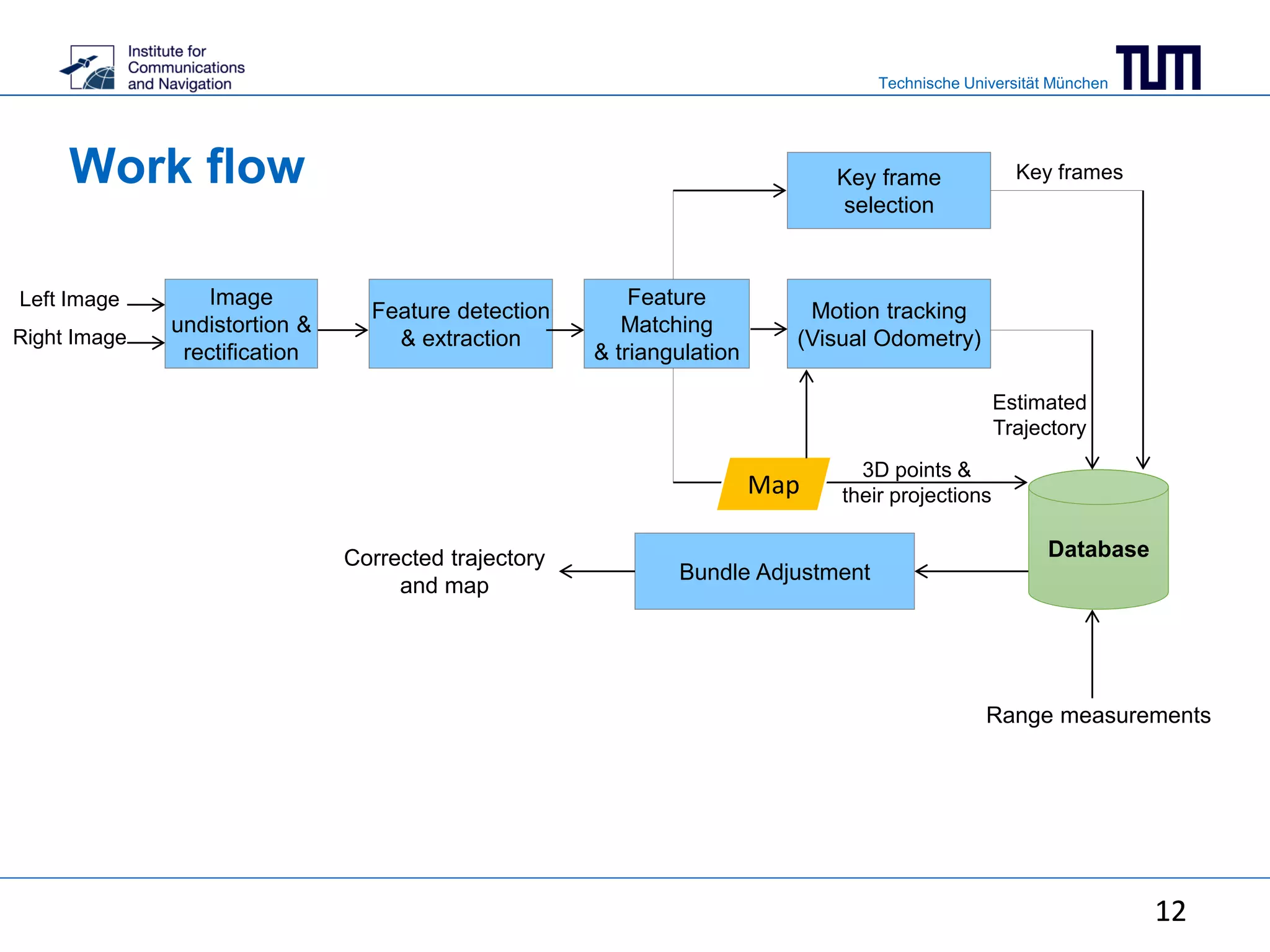

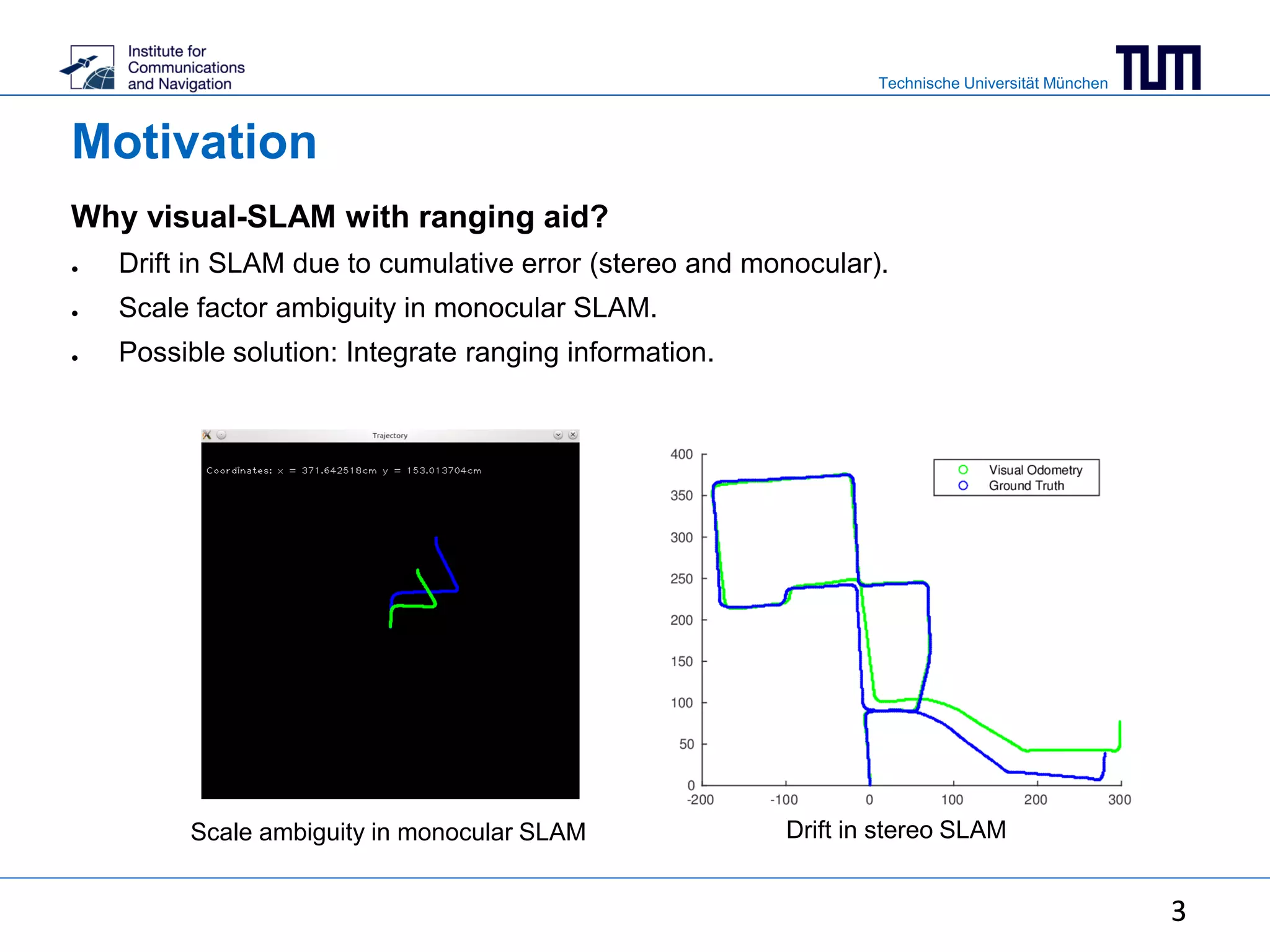

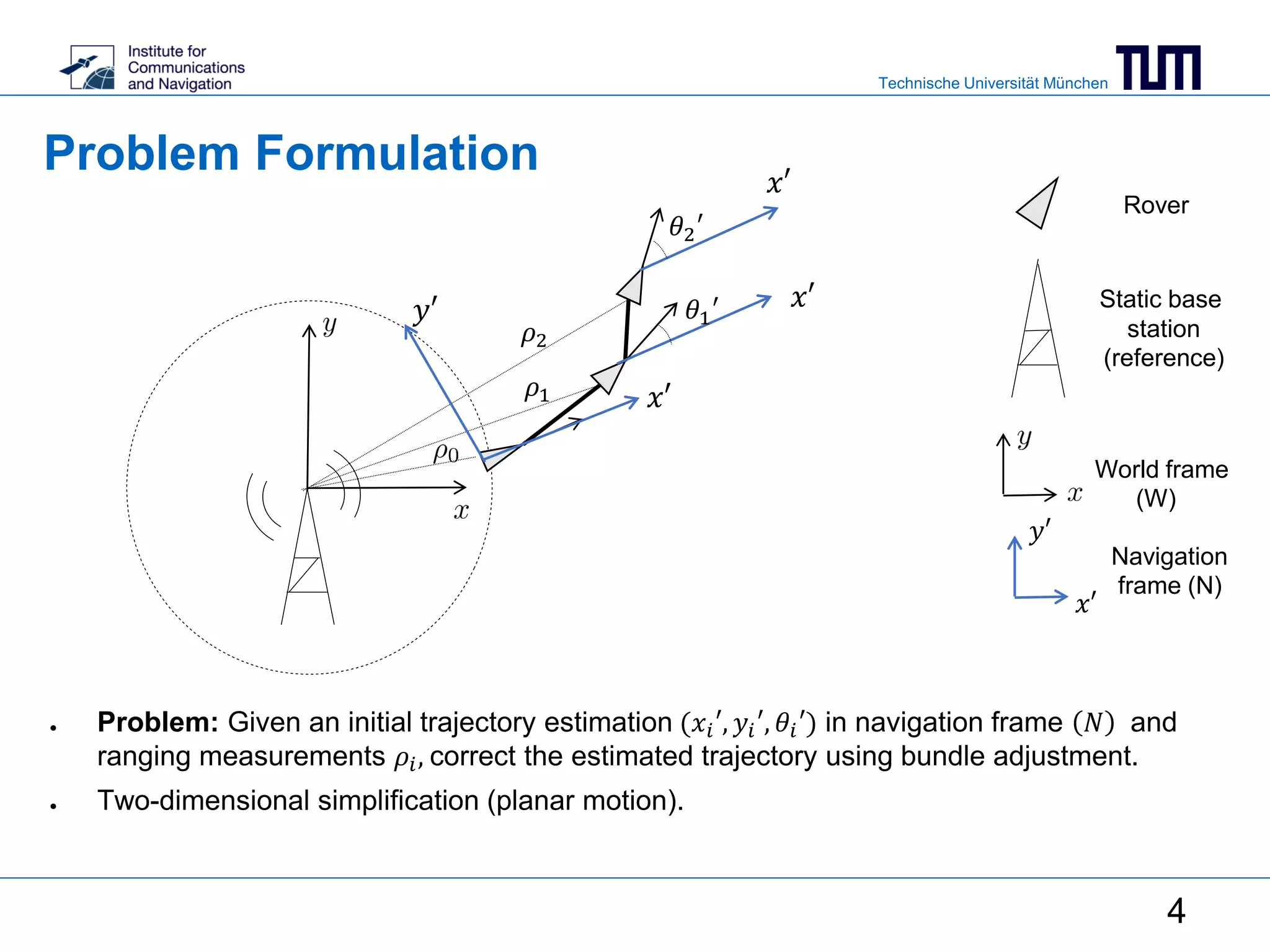

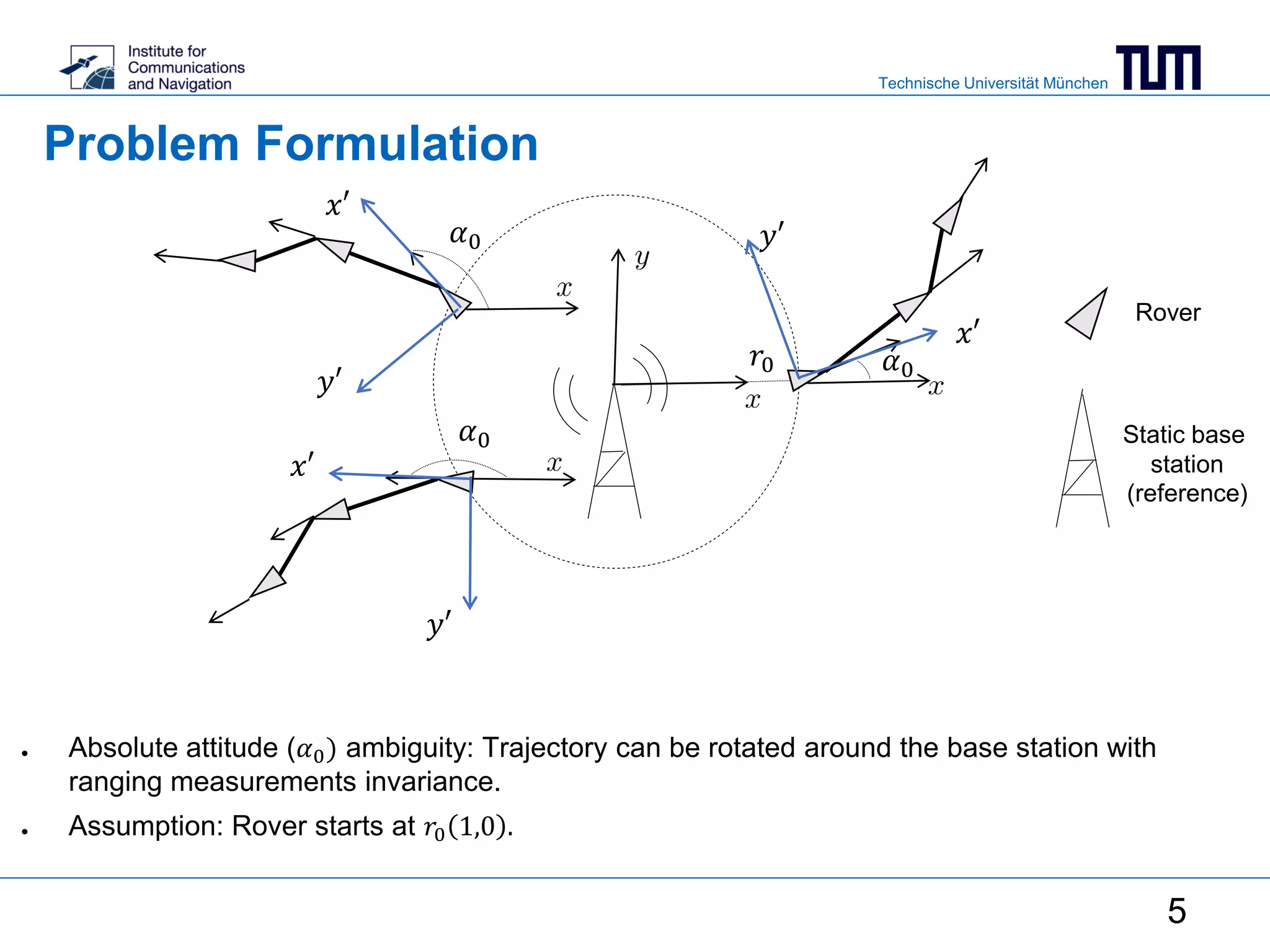

This document summarizes a master's thesis on using ranging measurements to aid monocular and stereo visual simultaneous localization and mapping (SLAM). The goal is to reduce drift in the estimated trajectory by integrating ranging measurements into bundle adjustment. In monocular SLAM, ranging resolves the scale ambiguity. In stereo SLAM, the ranging measurements are included directly in the bundle adjustment cost function. In the experiments, bundle adjustment of 3168 points over 100 frames recorded with a visual-inertial sensor reduced the reprojection error.
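The following is a minimal sketch of how a range measurement can fix the unknown monocular scale $s$. The summary does not describe the ranging setup, so this example assumes a hypothetical one: each range $d_i$ is measured from camera $i$ to an anchor at the origin of the monocular SLAM frame, which gives $d_i \approx s\,\lVert \mathbf{p}_i \rVert$. Under that assumption, least squares has a closed-form solution. The function name `estimate_scale` is illustrative and does not come from the thesis.

```python
import numpy as np


def estimate_scale(positions_unscaled, ranges):
    """Least-squares metric scale for a monocular trajectory.

    Hypothetical model: range d_i is measured from camera i to an anchor at
    the origin of the monocular SLAM frame, so d_i ≈ s * ||p_i||.
    Minimizing sum_i (d_i - s * ||p_i||)^2 gives
    s = sum_i ||p_i|| d_i / sum_i ||p_i||^2.
    """
    norms = np.linalg.norm(np.asarray(positions_unscaled, dtype=float), axis=1)
    d = np.asarray(ranges, dtype=float)
    denom = float(norms @ norms)
    if denom == 0.0:
        raise ValueError("scale is unobservable: all positions lie at the anchor")
    return float(norms @ d) / denom


# Unscaled positions from monocular SLAM and the matching range measurements.
p = [[1.0, 0.0, 0.0], [0.0, 2.0, 0.0], [3.0, 4.0, 0.0]]
d = [2.5, 5.0, 12.5]
s = estimate_scale(p, d)
print(s)  # 2.5
```

Multiplying every estimated position (and map point) by `s` expresses the trajectory in metric units under this assumed anchor model. A real system would also have to estimate the anchor position and weight each range by its noise.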

![Technische Universität München

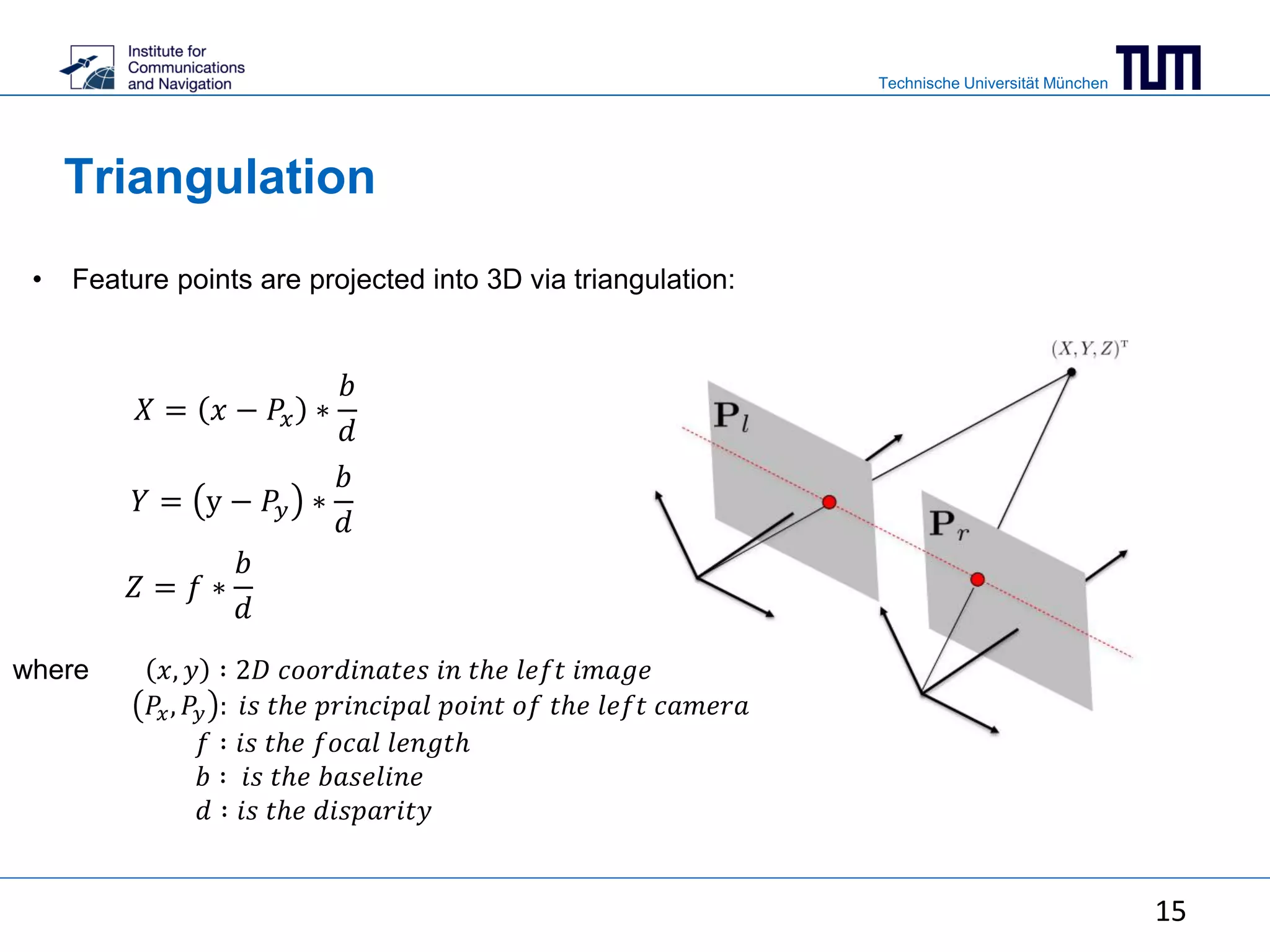

Projection of world coordinates into image

coordinates

𝑢 =

𝑥

𝑦

1

= 𝜋 𝑿, R, 𝐭 = P𝐗 = K[R 𝒕]𝐗 =

−𝑓 0 𝑃𝑥

0 −𝑓 𝑃𝑦

0 0 1

[R 𝒕]

𝑋

𝑌

𝑍

1

𝑢: ℎ𝑜𝑚𝑜𝑔𝑒𝑛𝑒𝑜𝑢𝑠 𝑖𝑚𝑎𝑔𝑒 𝑐𝑜𝑜𝑟𝑑𝑖𝑛𝑎𝑡𝑒𝑠

𝑿: 3𝐷 𝑝𝑜𝑖𝑛𝑡 𝑐𝑜𝑜𝑟𝑑𝑖𝑛𝑎𝑡𝑒𝑠

𝑅, 𝒕 ∶ 𝑟𝑜𝑡𝑎𝑡𝑖𝑜𝑛 𝑎𝑛𝑑 𝑡𝑟𝑎𝑛𝑠𝑙𝑎𝑡𝑖𝑜𝑛

𝜋: 𝑝𝑟𝑜𝑗𝑒𝑐𝑡𝑖𝑜𝑛 𝑓𝑢𝑛𝑐𝑡𝑖𝑜𝑛

𝑃: 𝑝𝑟𝑜𝑗𝑒𝑐𝑡𝑖𝑜𝑛 𝑚𝑎𝑡𝑟𝑖𝑥

𝐾: 𝑐𝑎𝑚𝑒𝑟𝑎 𝑚𝑎𝑡𝑟𝑖𝑥

𝑓: 𝑓𝑜𝑐𝑎𝑙 𝑙𝑒𝑛𝑔𝑡ℎ

(𝑃𝑥 , 𝑃𝑦): 𝑝𝑟𝑖𝑛𝑐𝑖𝑝𝑎𝑙 𝑝𝑜𝑖𝑛𝑡 𝑐𝑜𝑜𝑟𝑑𝑖𝑛𝑎𝑡𝑒𝑠

6](https://image.slidesharecdn.com/571bbdbb-cbdd-4384-a698-de5a0215c0f7-160808100042/75/mid_presentation-6-2048.jpg)
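The projection $\pi(\mathbf{X}, R, \mathbf{t}) = K[R \mid \mathbf{t}]\mathbf{X}$ and a bundle adjustment cost extended with a ranging term, as described for the stereo case, can be sketched in Python with NumPy. The $-f$ sign convention of $K$ follows the slide. The form of the ranging term (camera-centre-to-anchor distance), the `anchor` and `weight` parameters, and all function names are hypothetical illustrations, not the thesis's actual formulation.

```python
import numpy as np


def camera_matrix(f, px, py):
    """Camera matrix K with the -f sign convention used on the slide."""
    return np.array([[-f, 0.0, px],
                     [0.0, -f, py],
                     [0.0, 0.0, 1.0]])


def project(X, R, t, K):
    """pi(X, R, t): project a 3D world point to image coordinates (x, y)."""
    R = np.asarray(R, dtype=float)
    X_h = np.append(np.asarray(X, dtype=float), 1.0)    # (X, Y, Z, 1)
    P = K @ np.hstack([R, np.reshape(t, (3, 1))])       # P = K [R | t], 3x4
    u = P @ X_h                                         # homogeneous, defined up to scale
    return u[:2] / u[2]                                 # scale so the third entry is 1


def ba_cost(points, poses, observations, K, ranges=(), anchor=None, weight=1.0):
    """Squared reprojection error plus a (hypothetical) weighted ranging term.

    points:       list of 3D points X_j
    poses:        list of (R, t) per camera
    observations: list of (camera index, point index, observed (x, y))
    ranges:       list of (camera index, measured distance to `anchor`)
    """
    anchor = np.zeros(3) if anchor is None else np.asarray(anchor, dtype=float)
    cost = 0.0
    for c, j, xy in observations:
        R, t = poses[c]
        r = project(points[j], R, t, K) - np.asarray(xy, dtype=float)
        cost += float(r @ r)
    for c, d in ranges:
        R, t = poses[c]
        # Camera centre C solves R C + t = 0, so C = -R^T t.
        center = -np.asarray(R, dtype=float).T @ np.asarray(t, dtype=float)
        e = np.linalg.norm(center - anchor) - d
        cost += weight * float(e * e)
    return cost
```

This sketch only evaluates the cost. A bundle adjustment would minimize it over all camera poses and 3D points with a nonlinear least-squares solver such as Levenberg–Marquardt. The relative `weight` would normally be derived from the noise levels of the ranging and pixel measurements.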