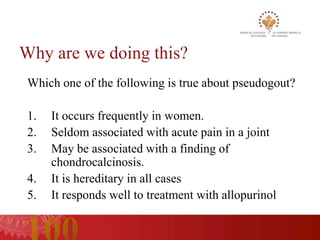

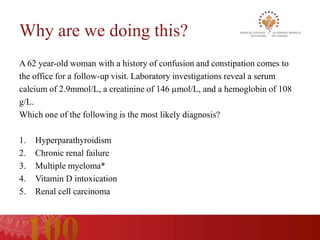

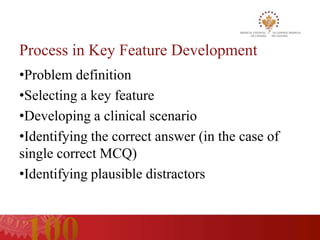

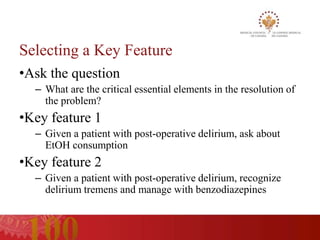

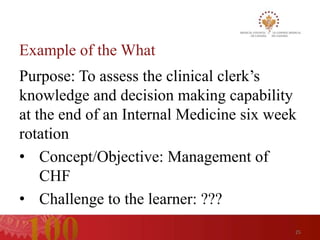

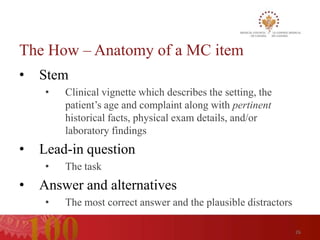

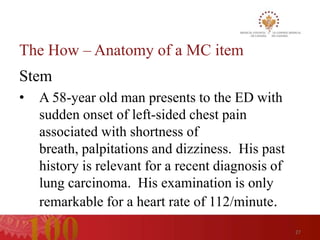

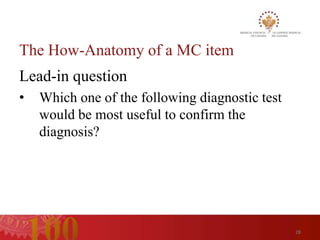

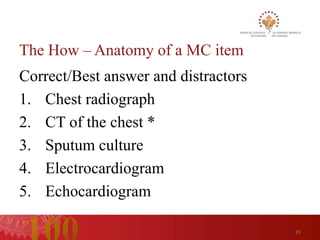

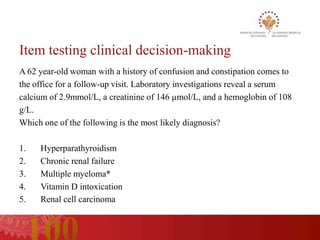

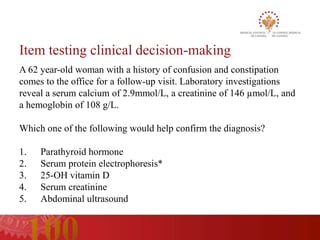

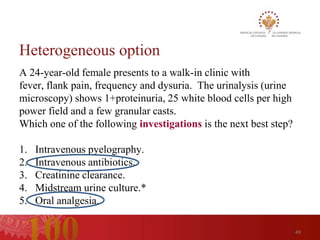

Based on the information provided, this item tests the learner's ability to make a clinical decision: diagnosing the most likely cause of the patient's presentation from the key laboratory findings in the stem. The stem gives enough relevant information to answer the question without tricking or misleading the learner. The alternatives are plausible, independent diagnoses to consider. The item follows best practices for writing multiple-choice questions that test clinical decision-making.