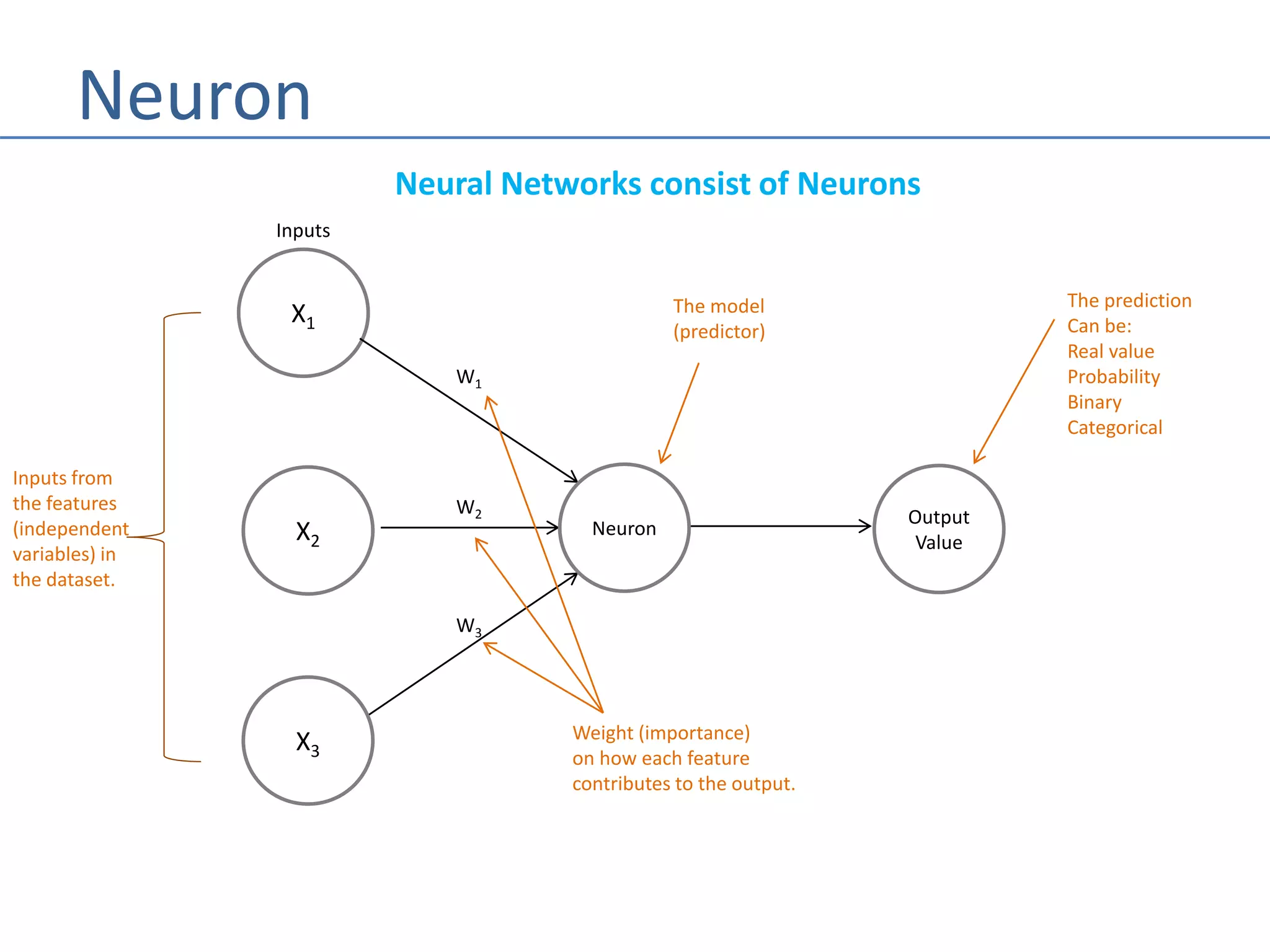

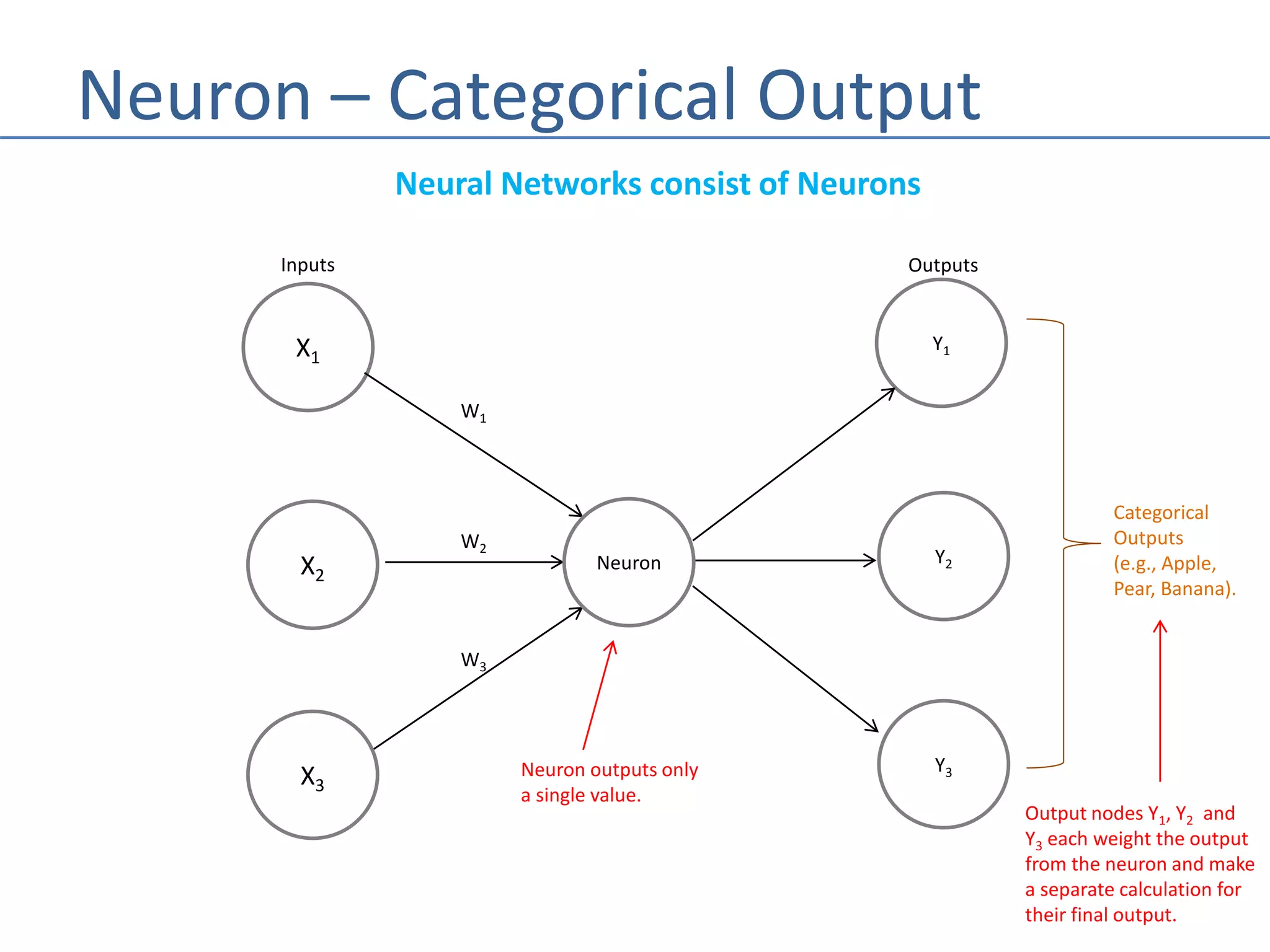

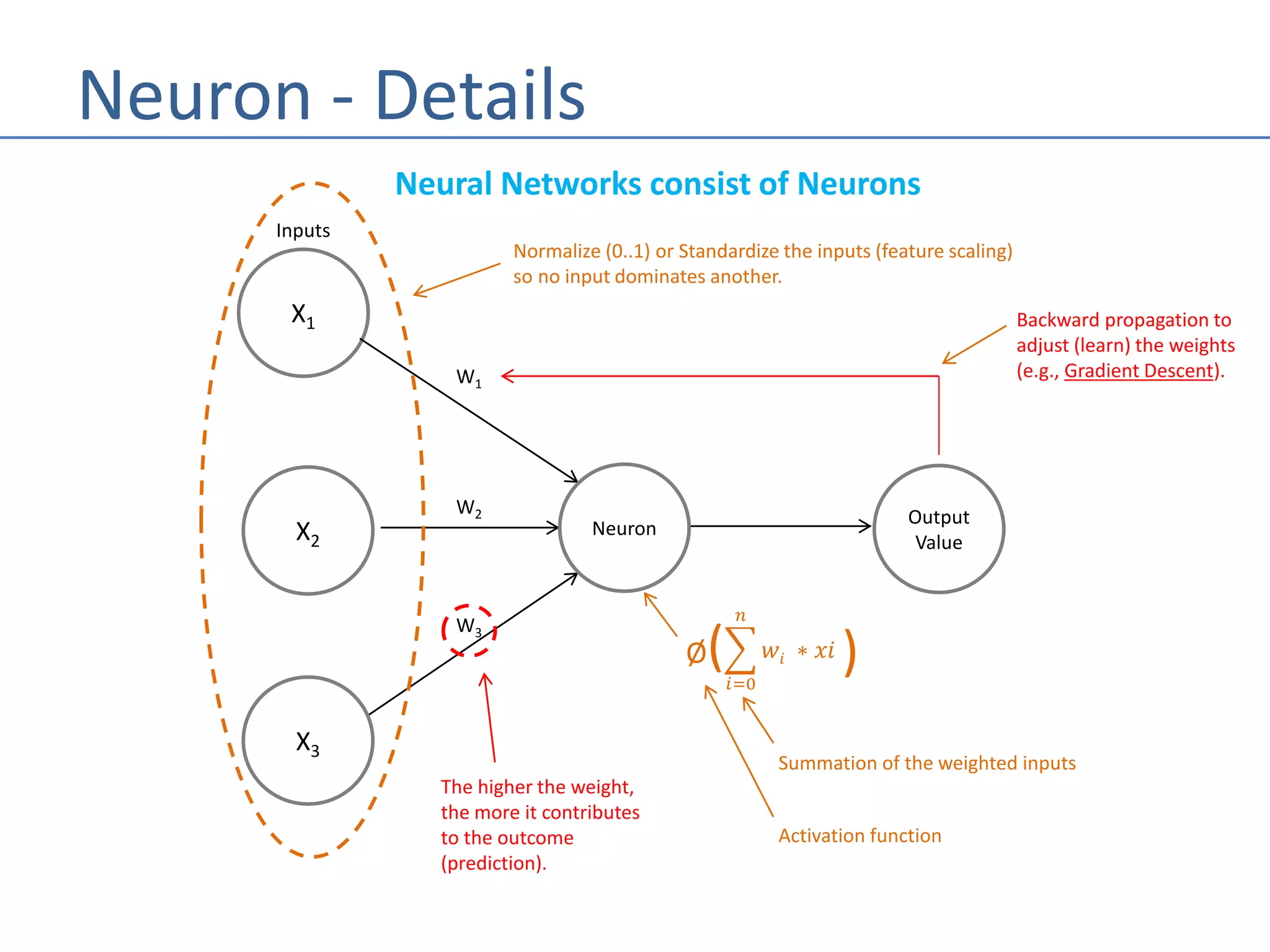

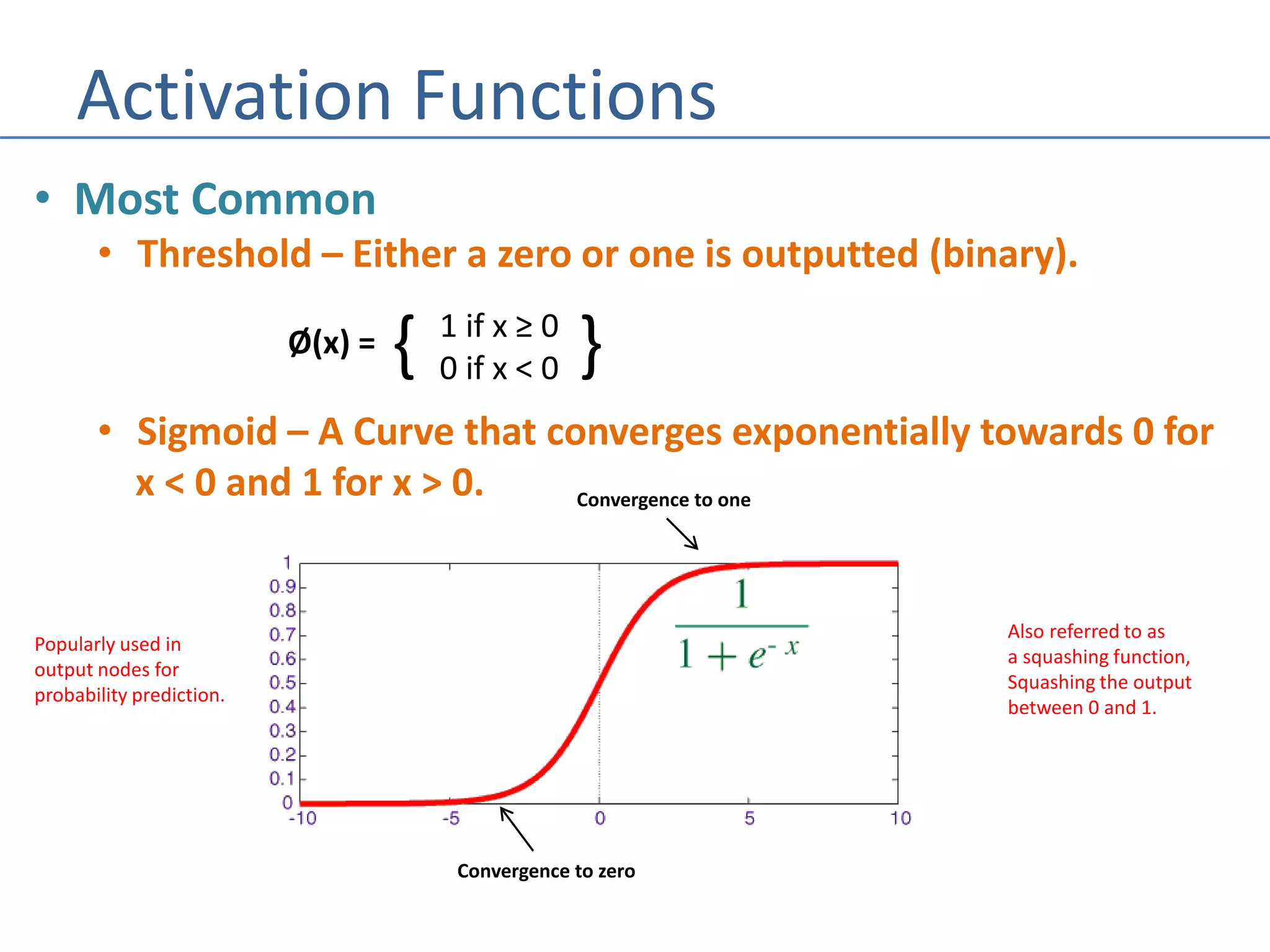

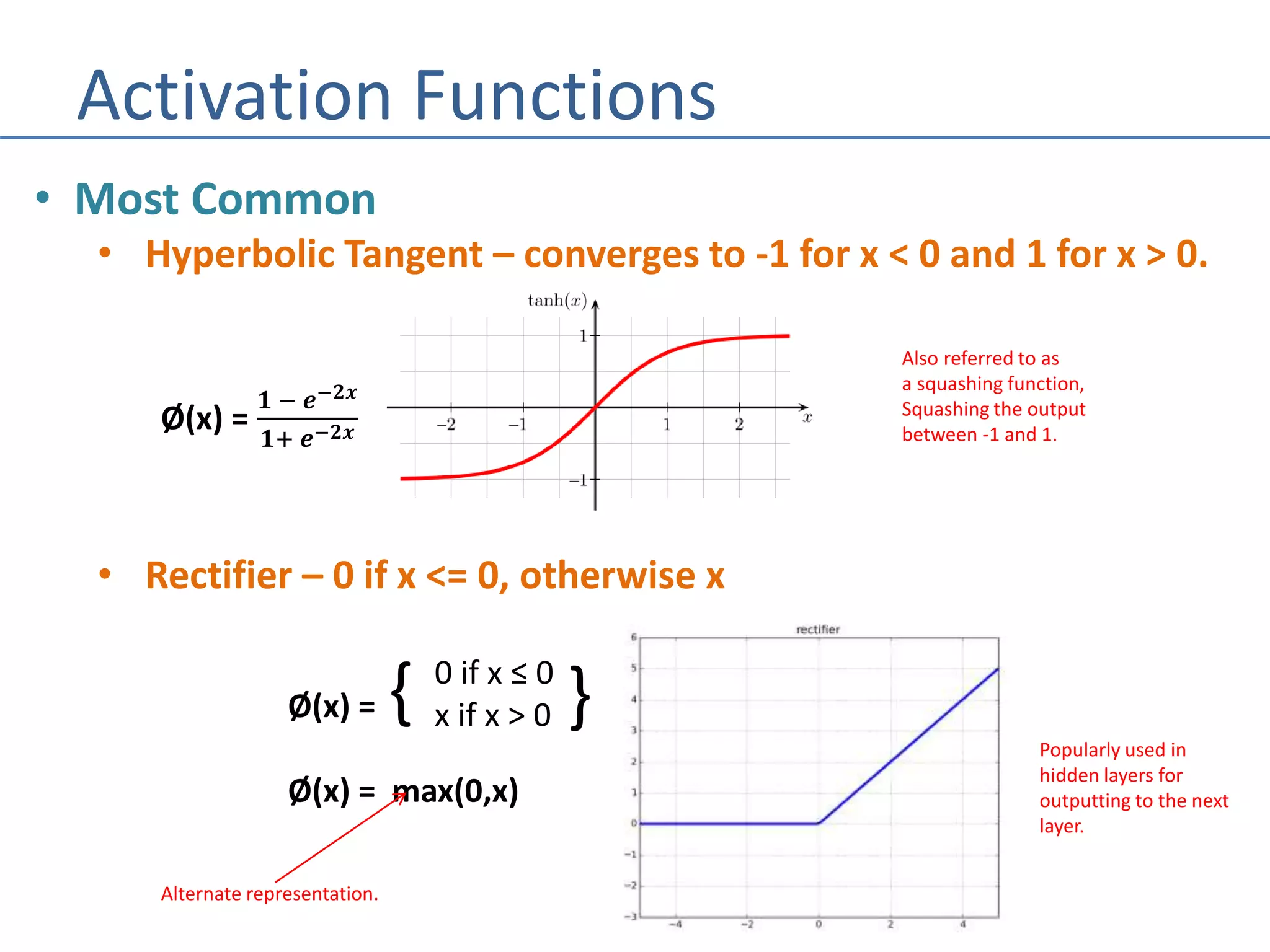

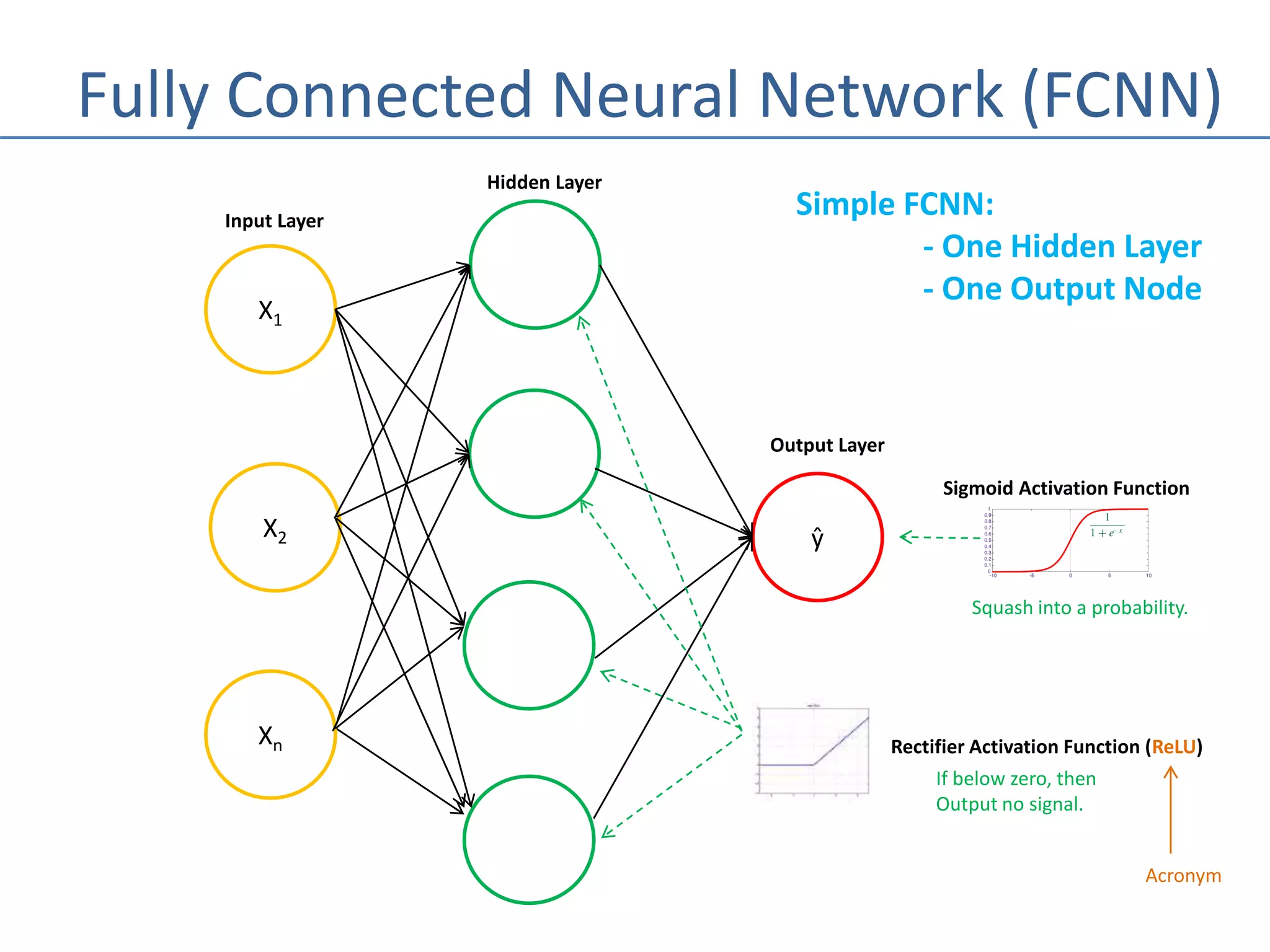

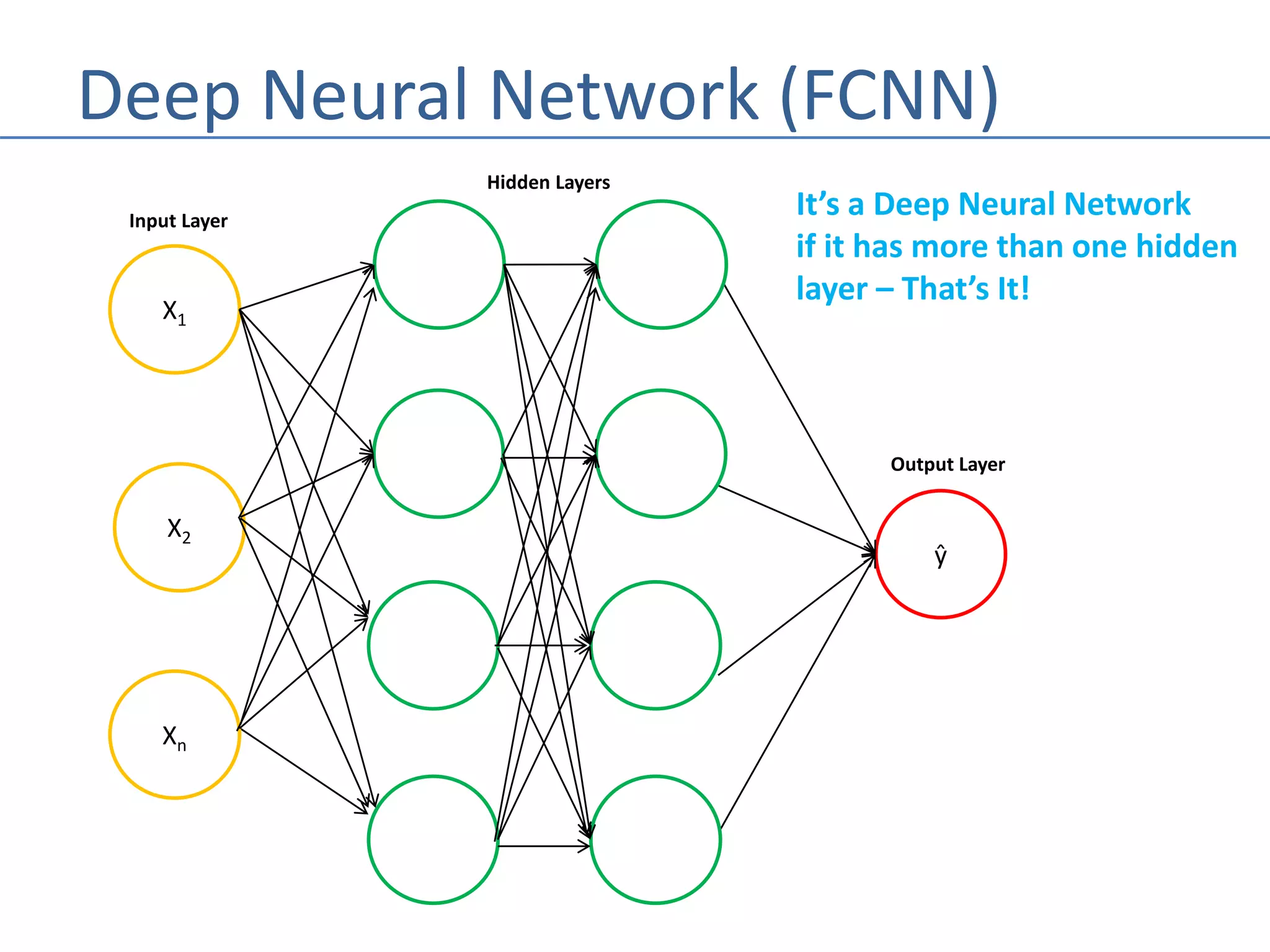

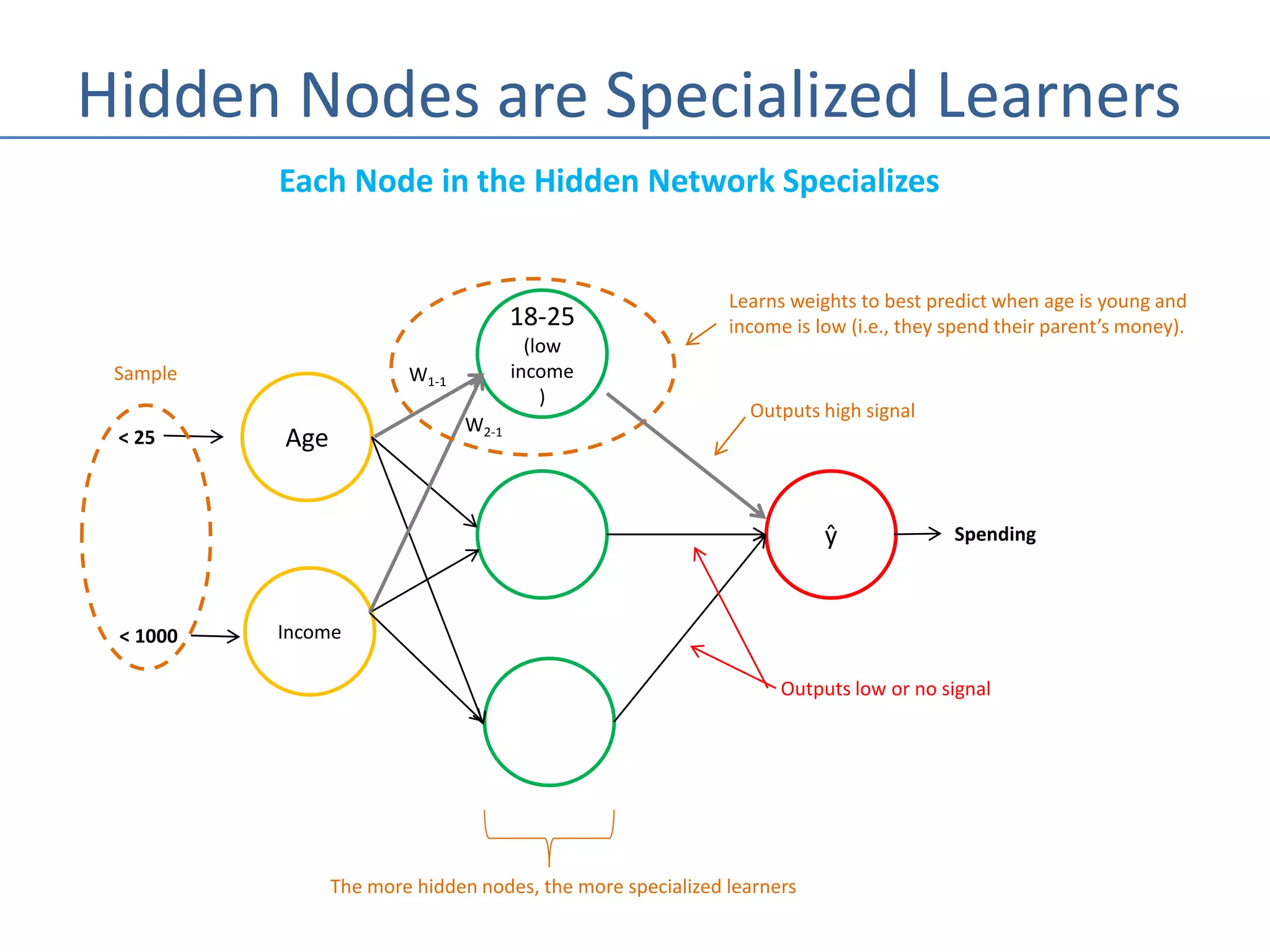

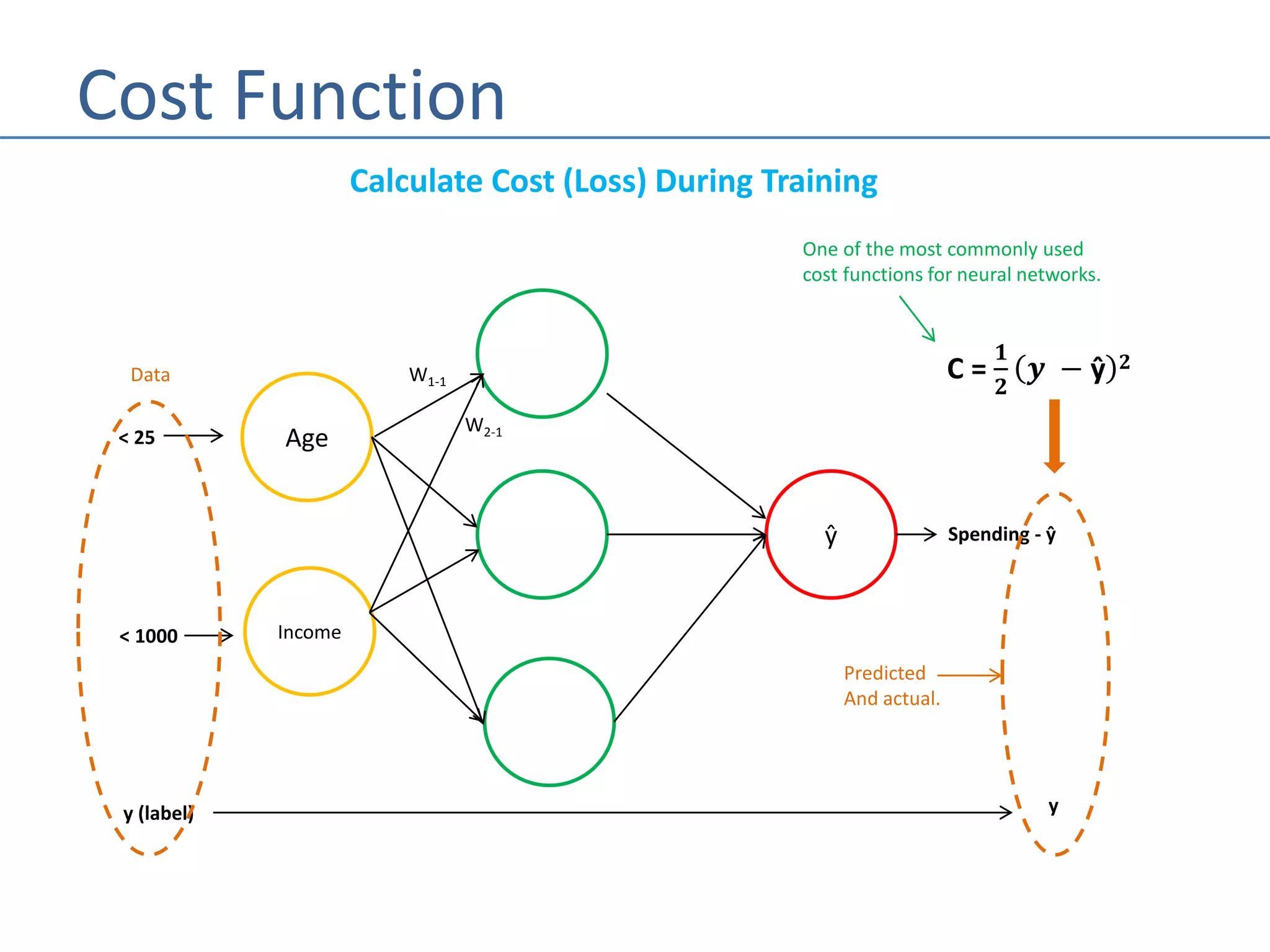

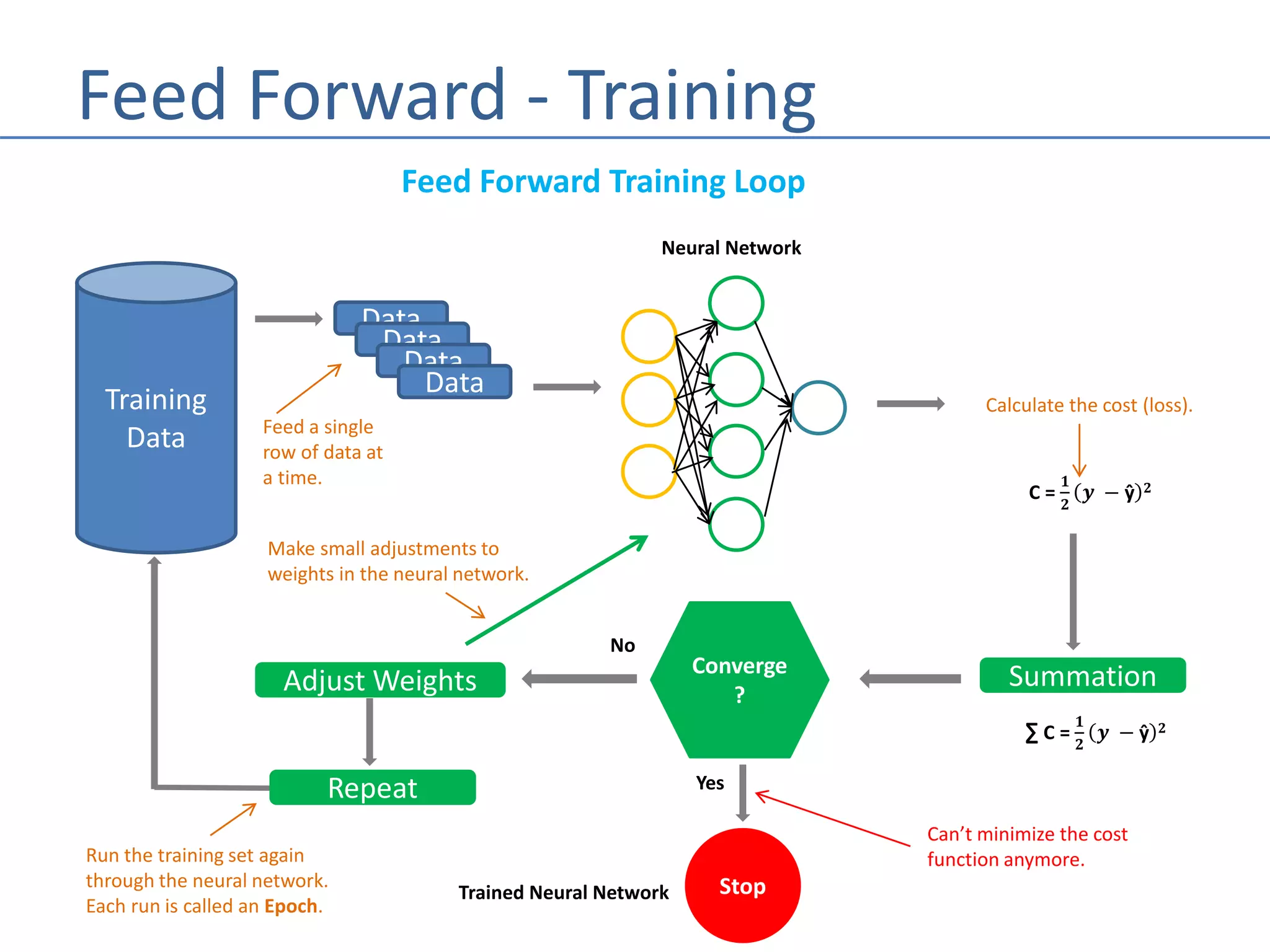

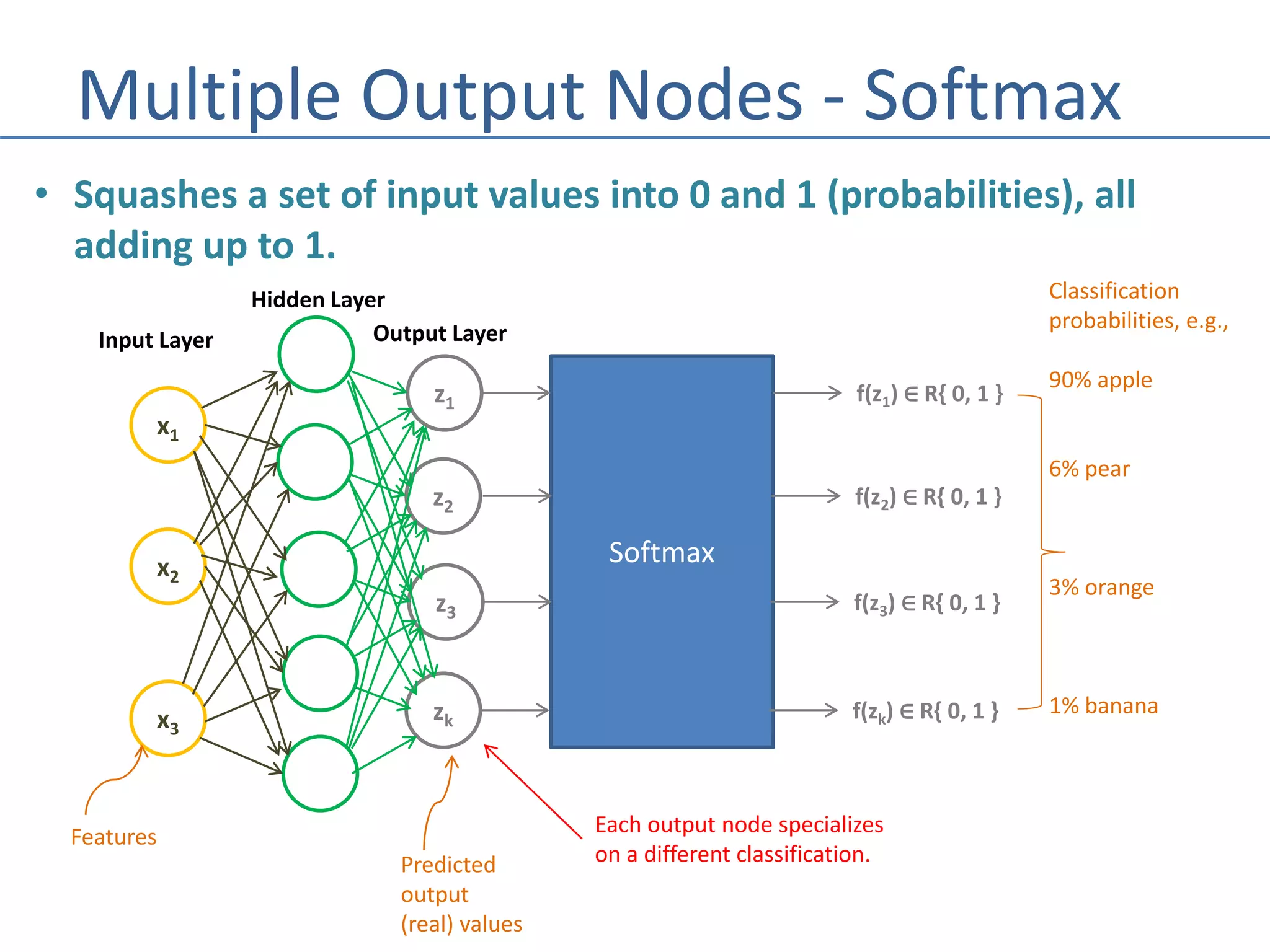

The document outlines the history and fundamentals of neural networks in machine learning, tracing their development from early experiments in the 1940s to modern techniques. It explains key concepts such as how a neuron operates, the role of activation functions, and the structure of fully connected neural networks. It also distinguishes the training phase from the prediction phase, emphasizing that prediction is efficient once the model has been trained.
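
As a rough illustration of the concepts summarized above, the following sketch shows one neuron (a weighted sum plus a bias, passed through an activation function), a fully connected layer built from such neurons, and a prediction pass through fixed weights. Everything here is an assumption made for illustration: the sigmoid activation, the function names `neuron`, `dense_layer`, and `predict`, and the weight values are not taken from the slides.

```python
import math


def sigmoid(z):
    """Sigmoid activation: squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs, weights, bias, activation=sigmoid):
    """One neuron: weighted sum of the inputs plus a bias, then an activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


def dense_layer(inputs, weight_rows, biases, activation=sigmoid):
    """Fully connected layer: every neuron receives every input."""
    return [neuron(inputs, w, b, activation) for w, b in zip(weight_rows, biases)]


def predict(inputs, layers):
    """Prediction: a single forward pass through layers whose weights are fixed."""
    out = inputs
    for weight_rows, biases in layers:
        out = dense_layer(out, weight_rows, biases)
    return out


# Hypothetical weights standing in for an already-trained model:
# 2 inputs -> 2 hidden neurons -> 1 output neuron.
layers = [
    ([[0.5, -0.5], [1.0, 1.0]], [0.0, -1.0]),
    ([[1.0, -1.0]], [0.0]),
]

print(predict([1.0, 1.0], layers))
```

Training would have to search for good weight values, typically over many passes through the data, whereas `predict` only evaluates the network once with weights that are already fixed. This is one way to read the summary's point that prediction is efficient after training.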