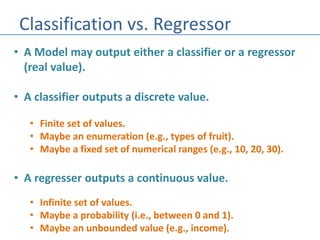

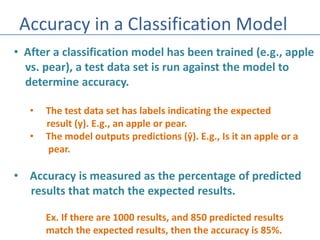

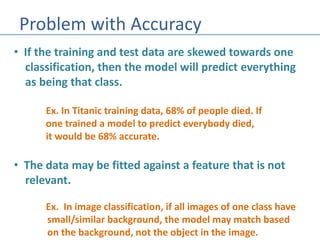

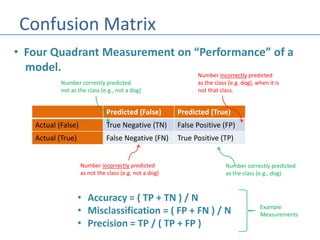

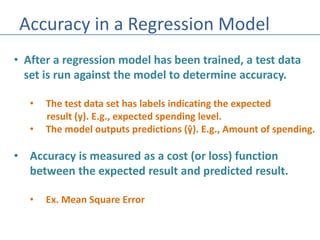

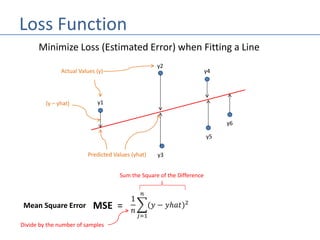

The document discusses the differences between classification and regression models in machine learning, highlighting how classifiers output discrete values while regressors provide continuous values. It explains the concept of accuracy in classification models, the associated problems, and introduces the confusion matrix as a performance measurement tool. For regression models, accuracy is evaluated using cost or loss functions such as mean square error.