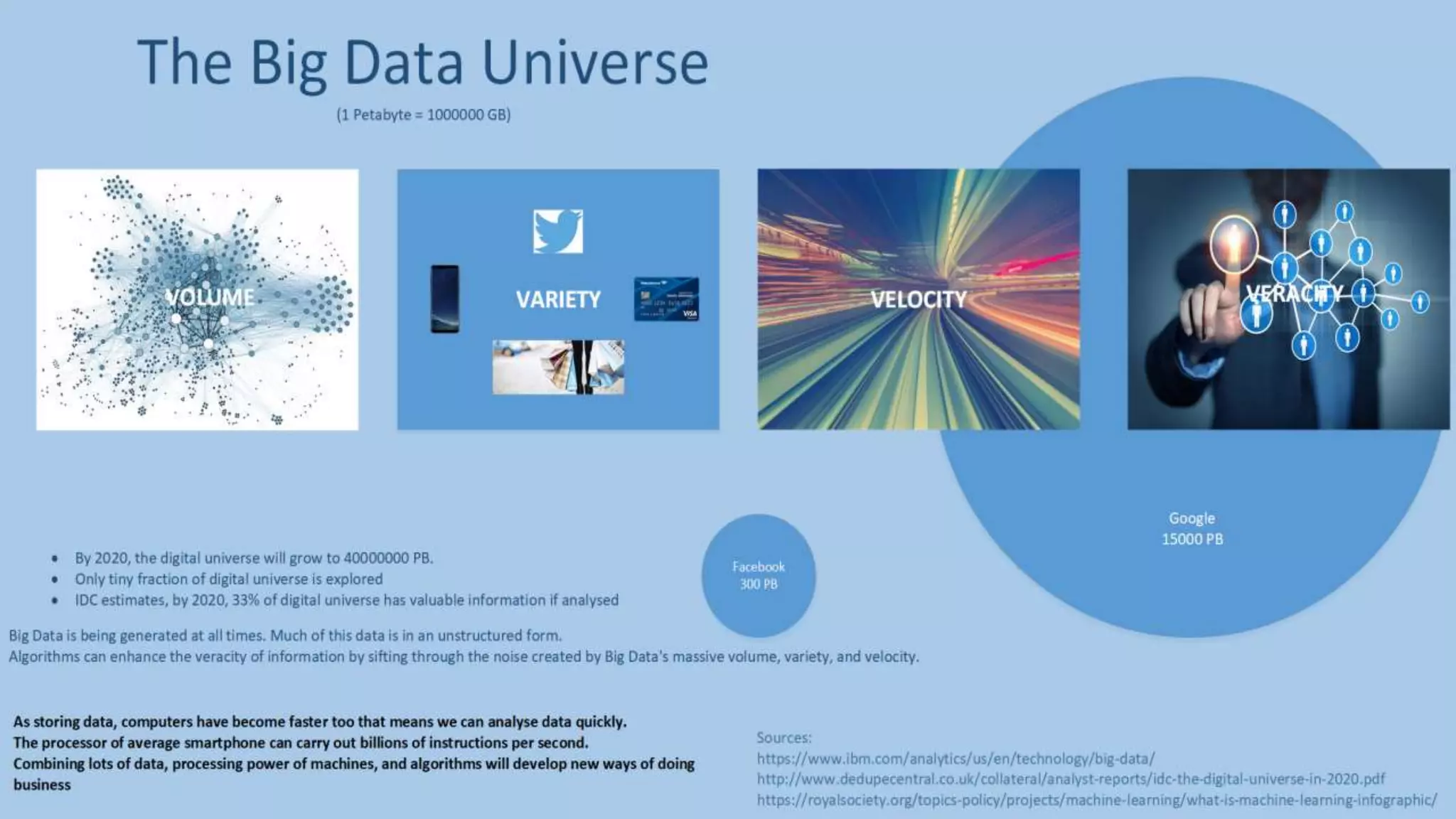

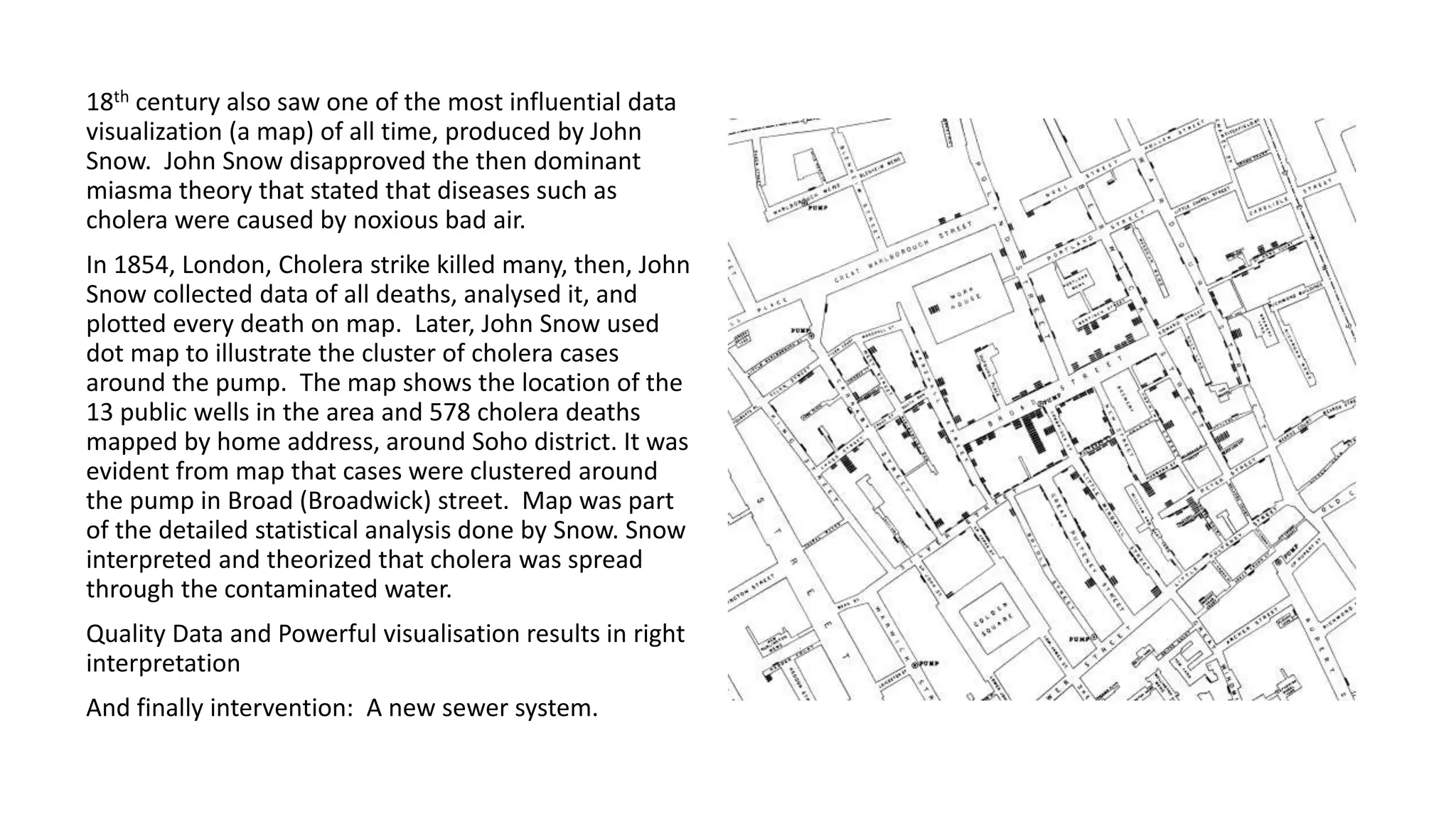

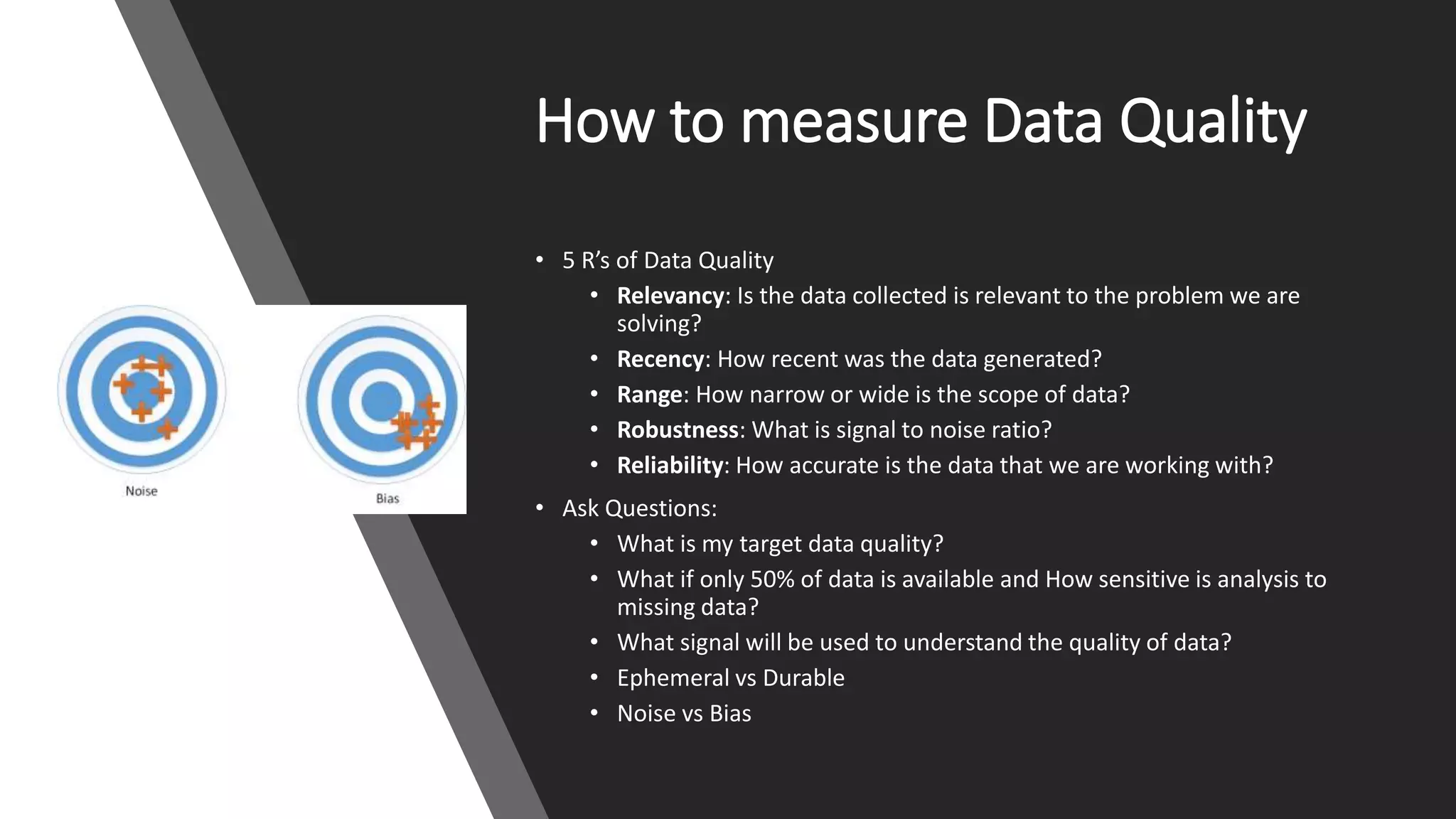

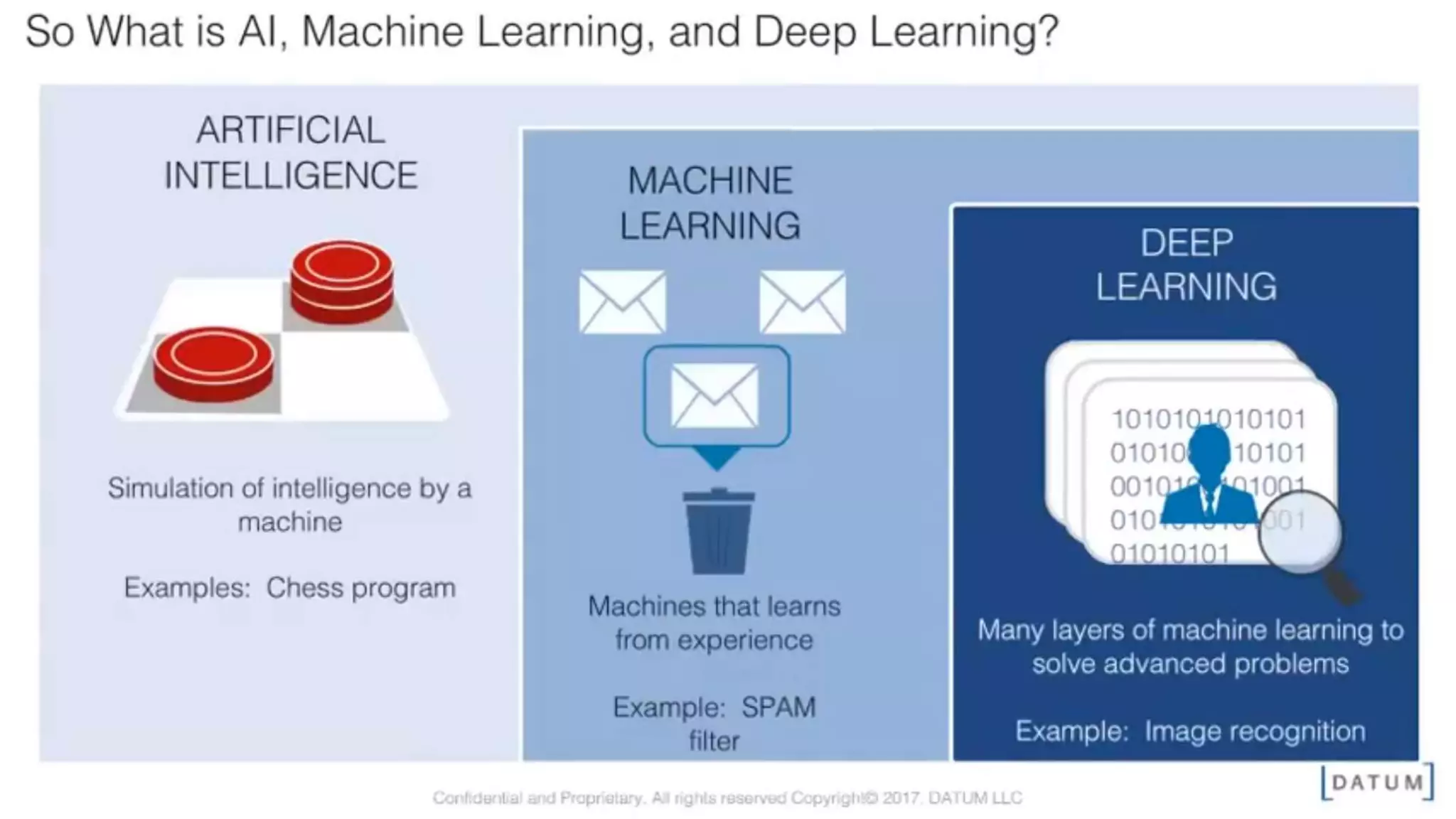

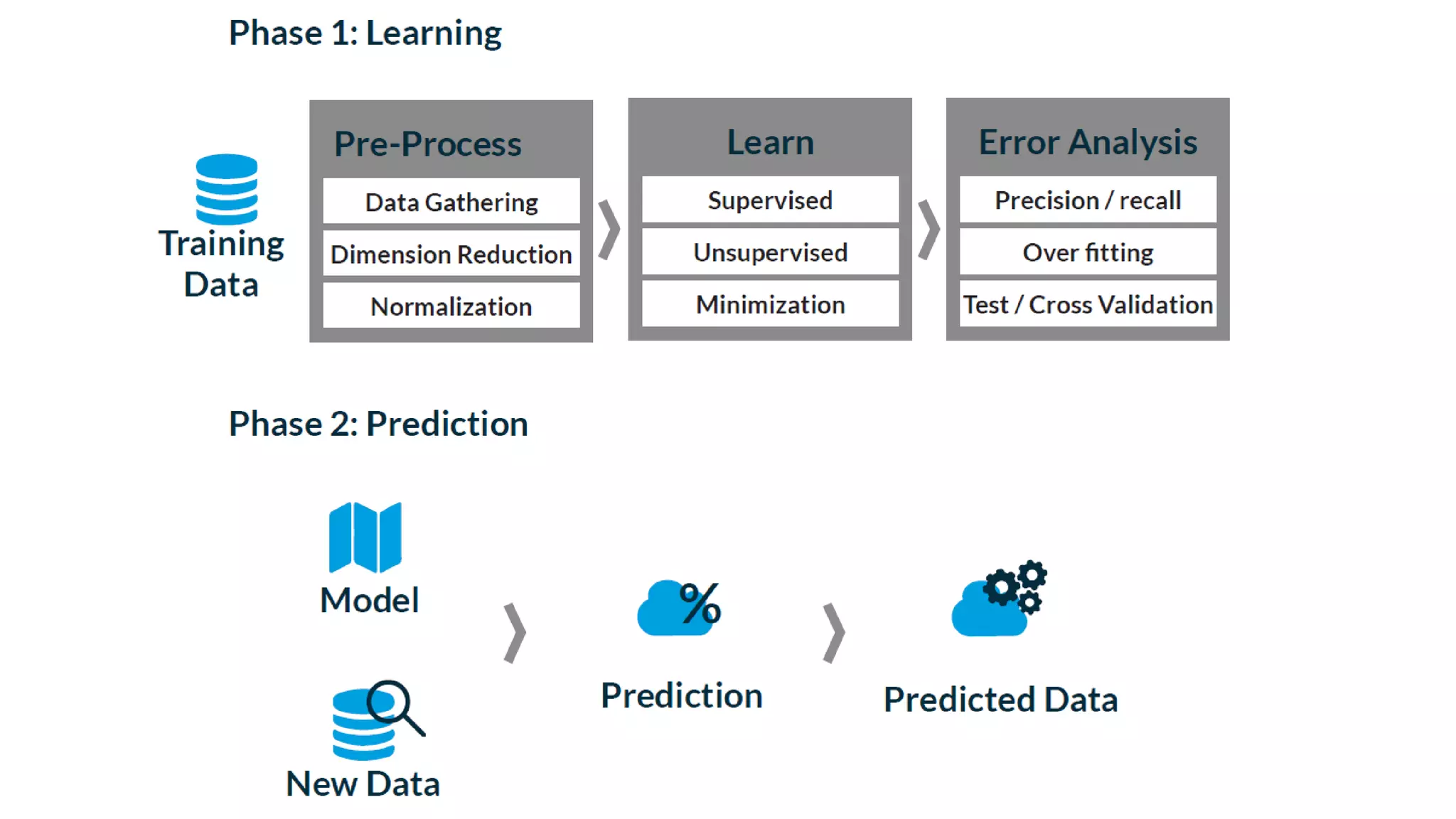

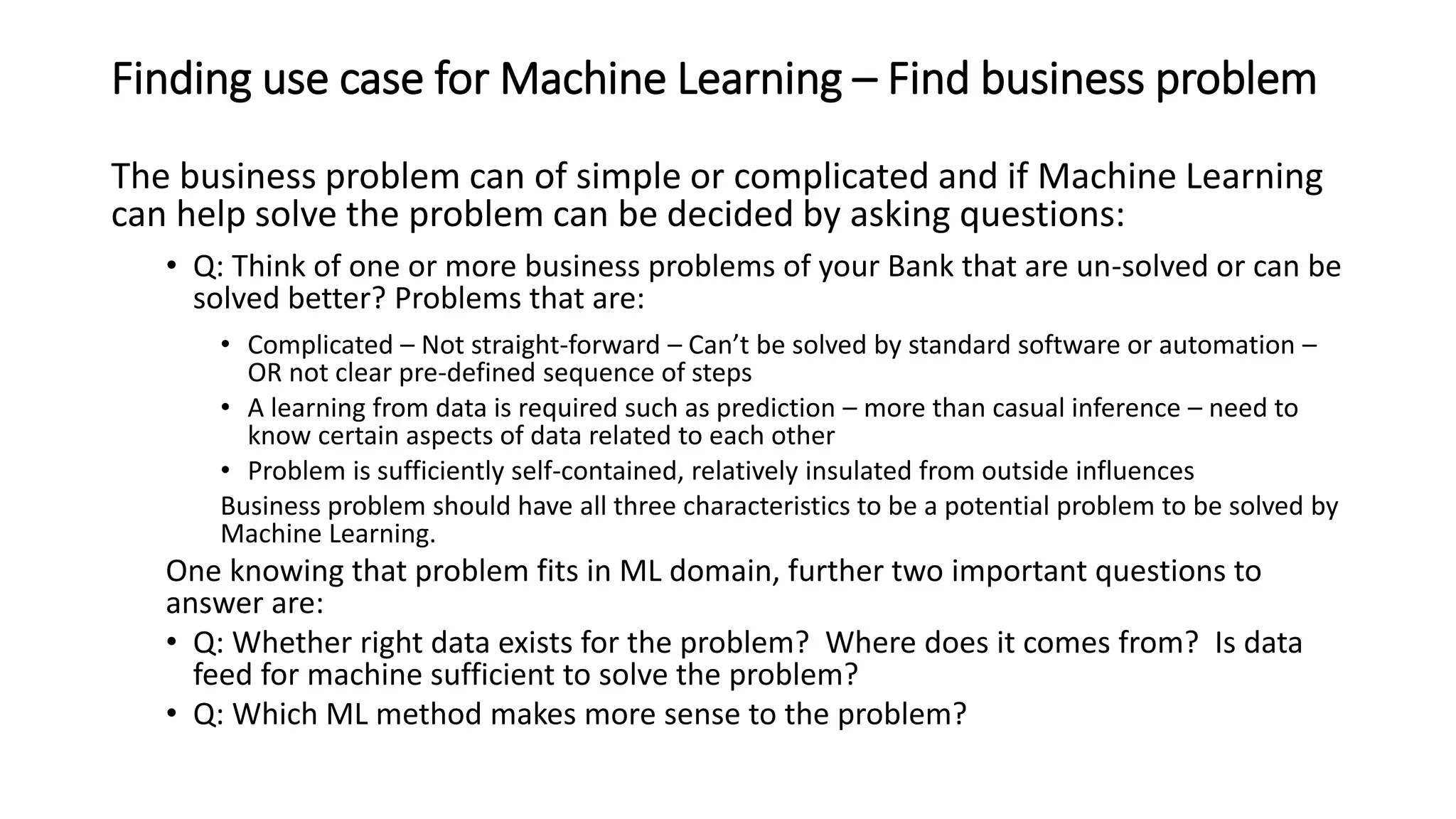

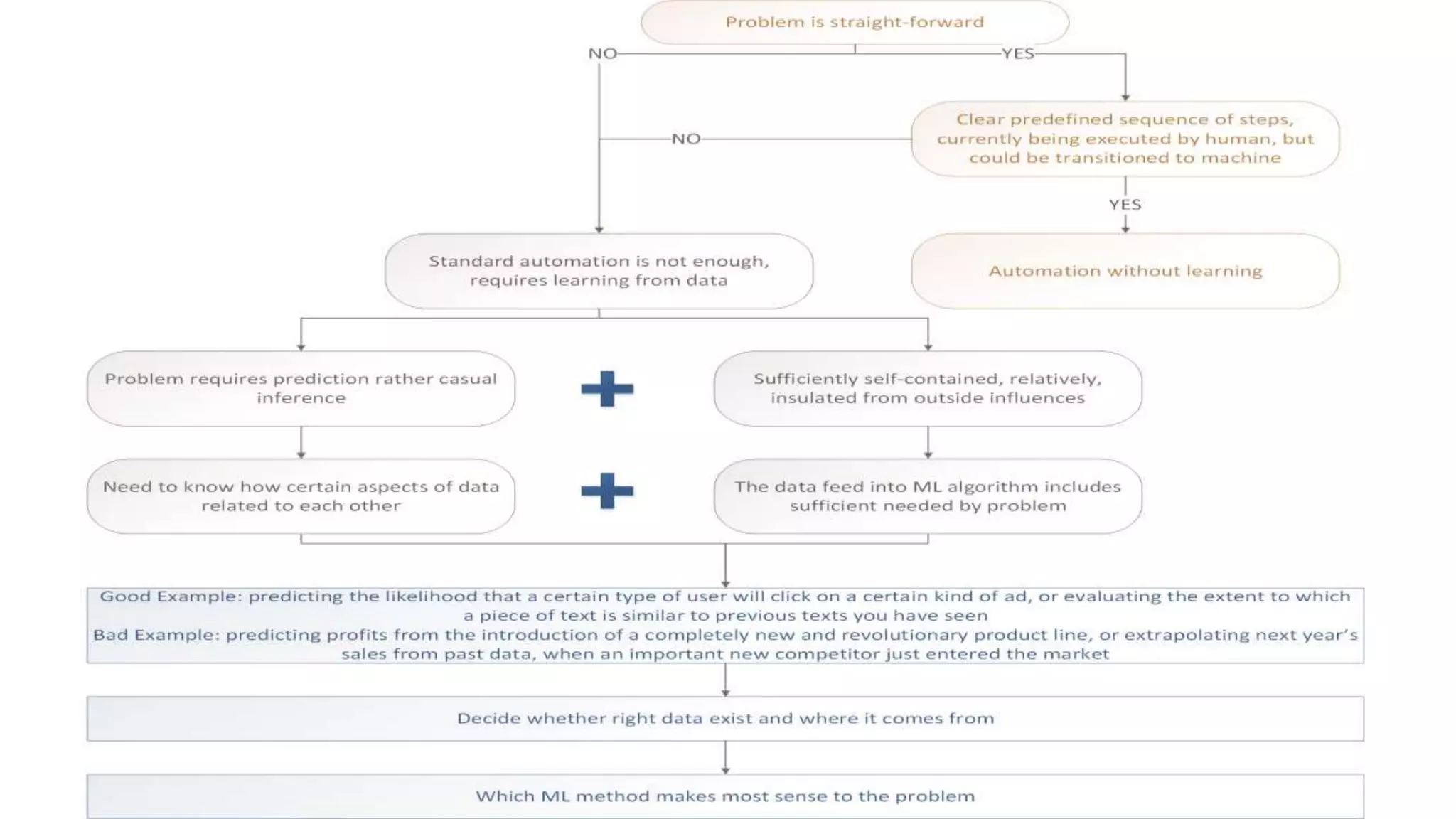

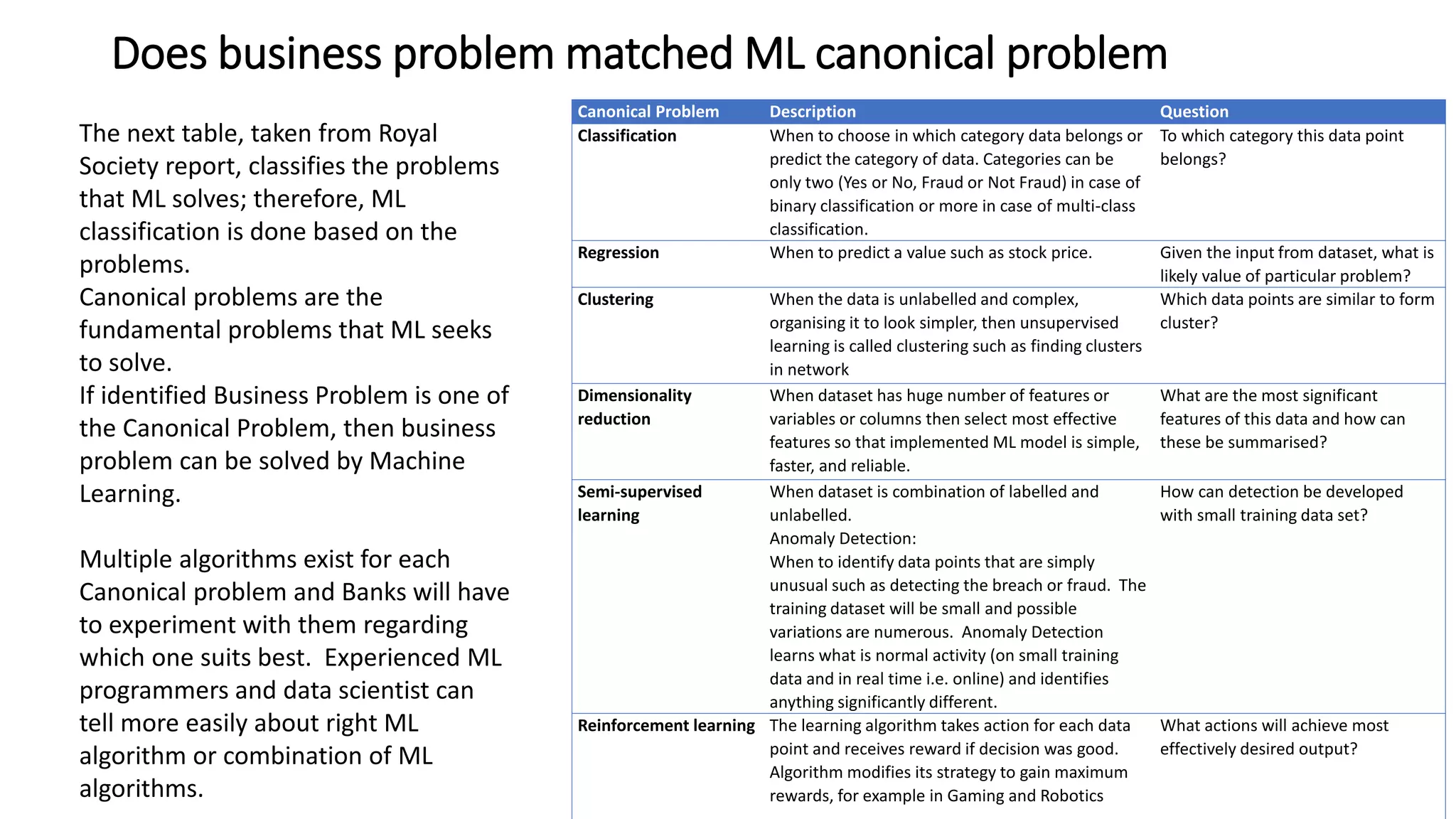

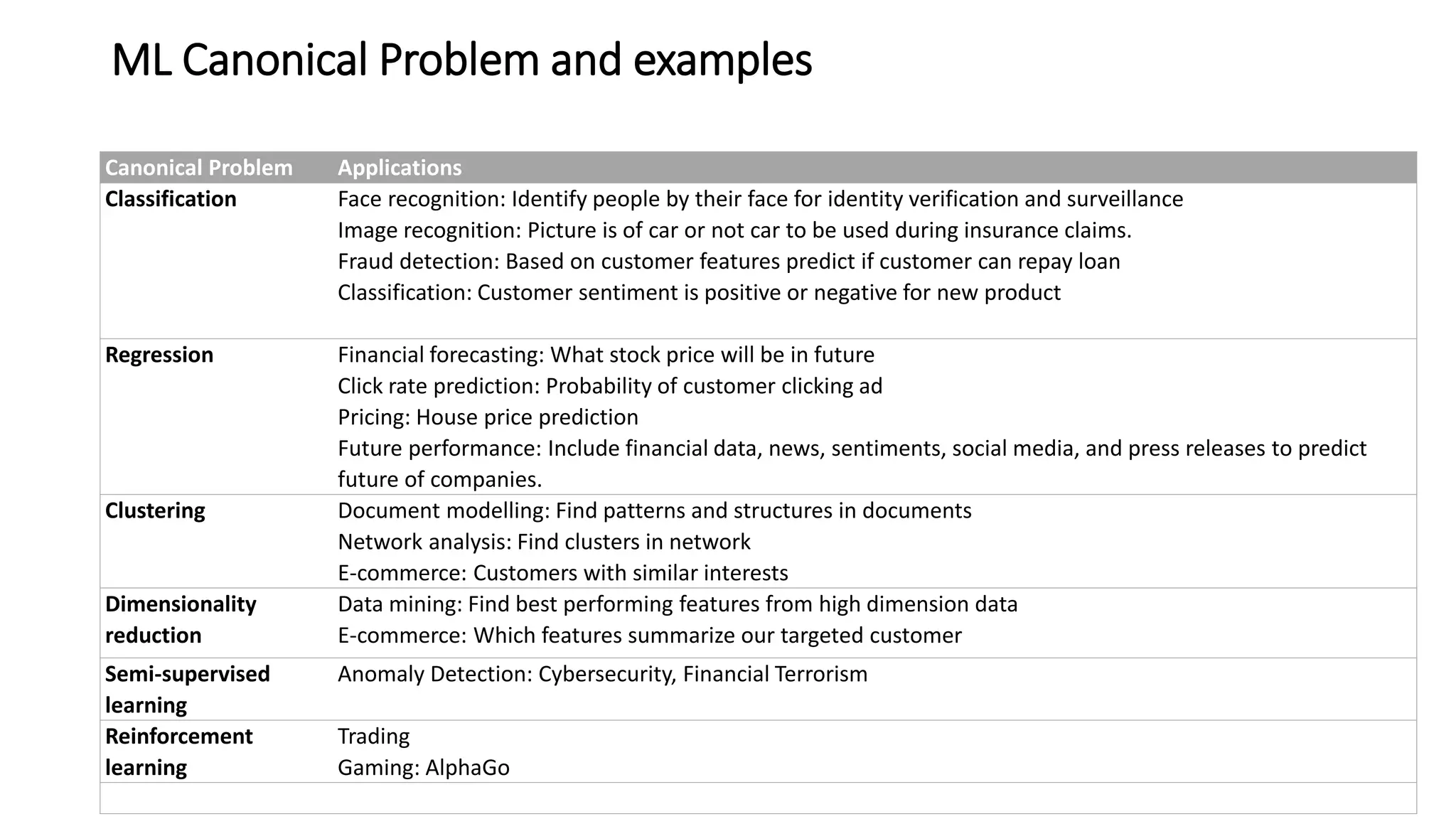

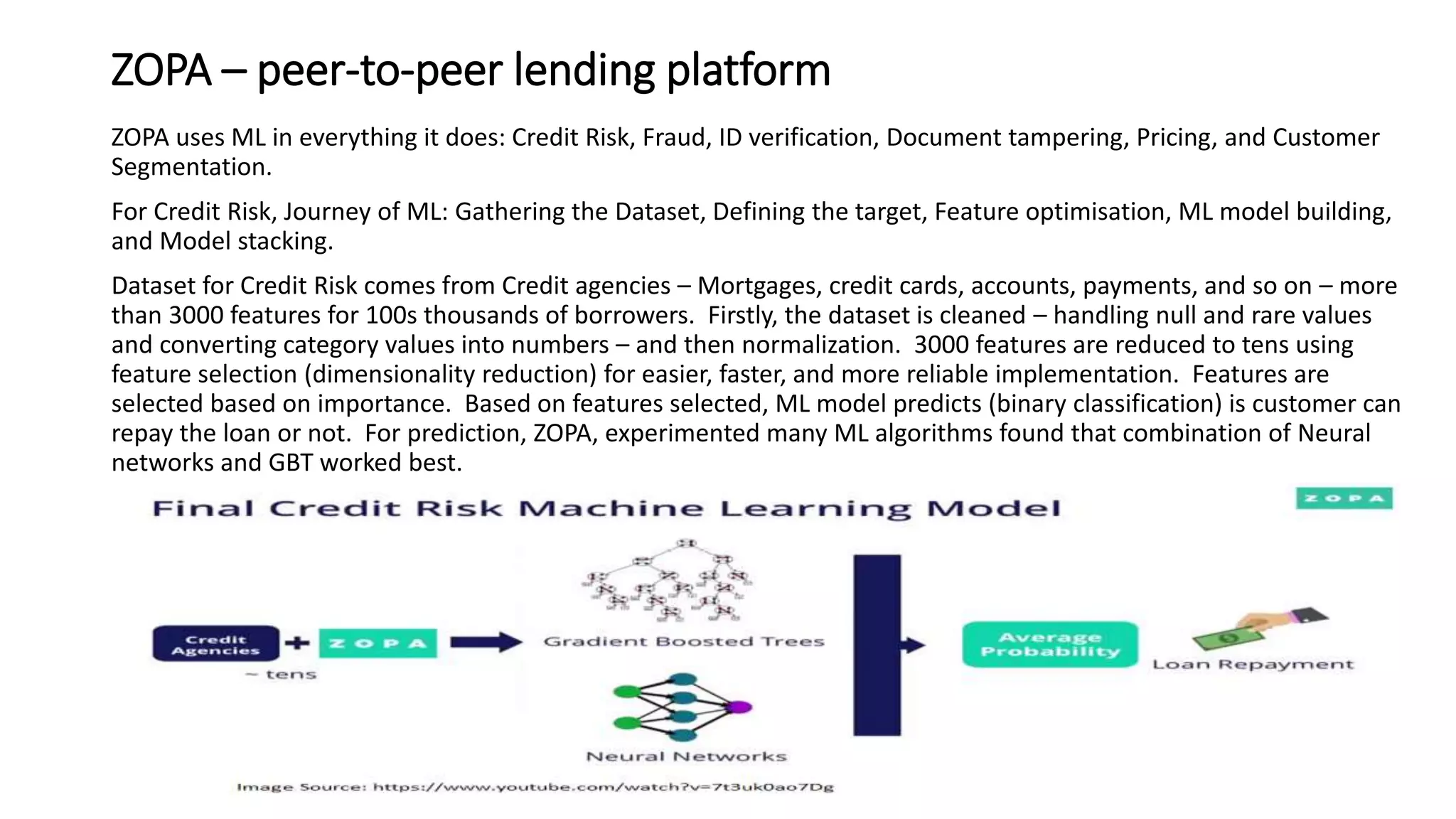

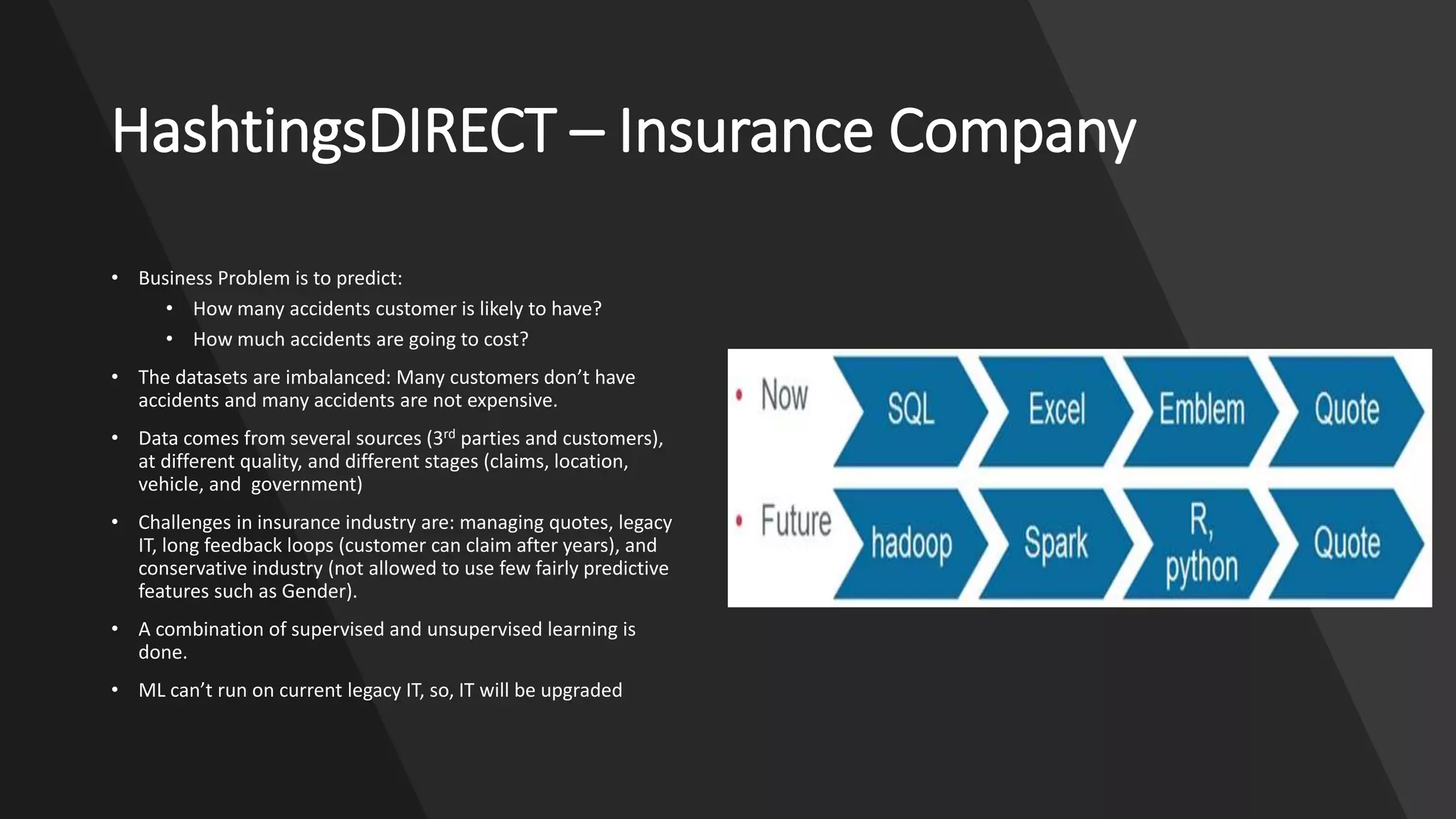

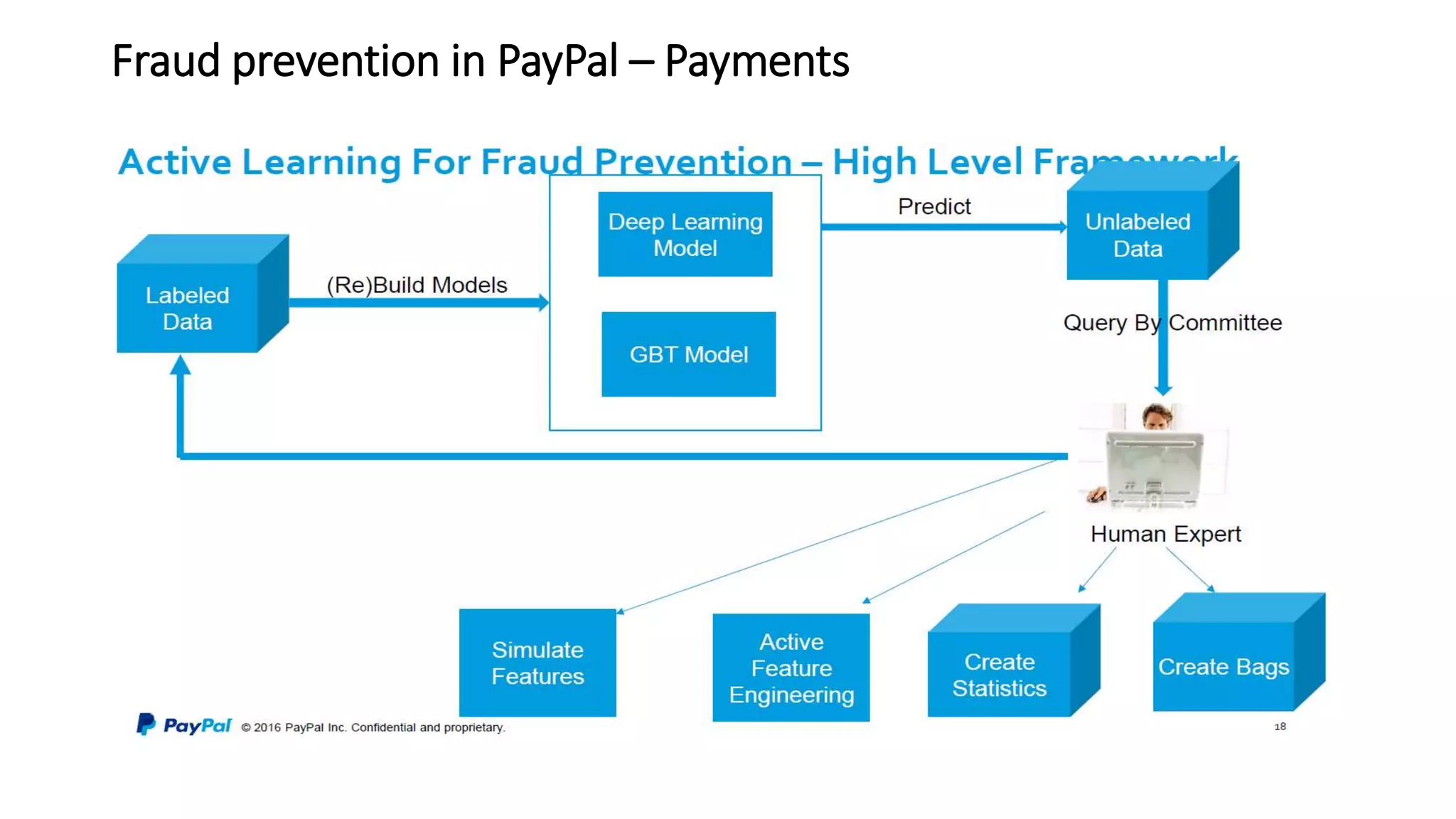

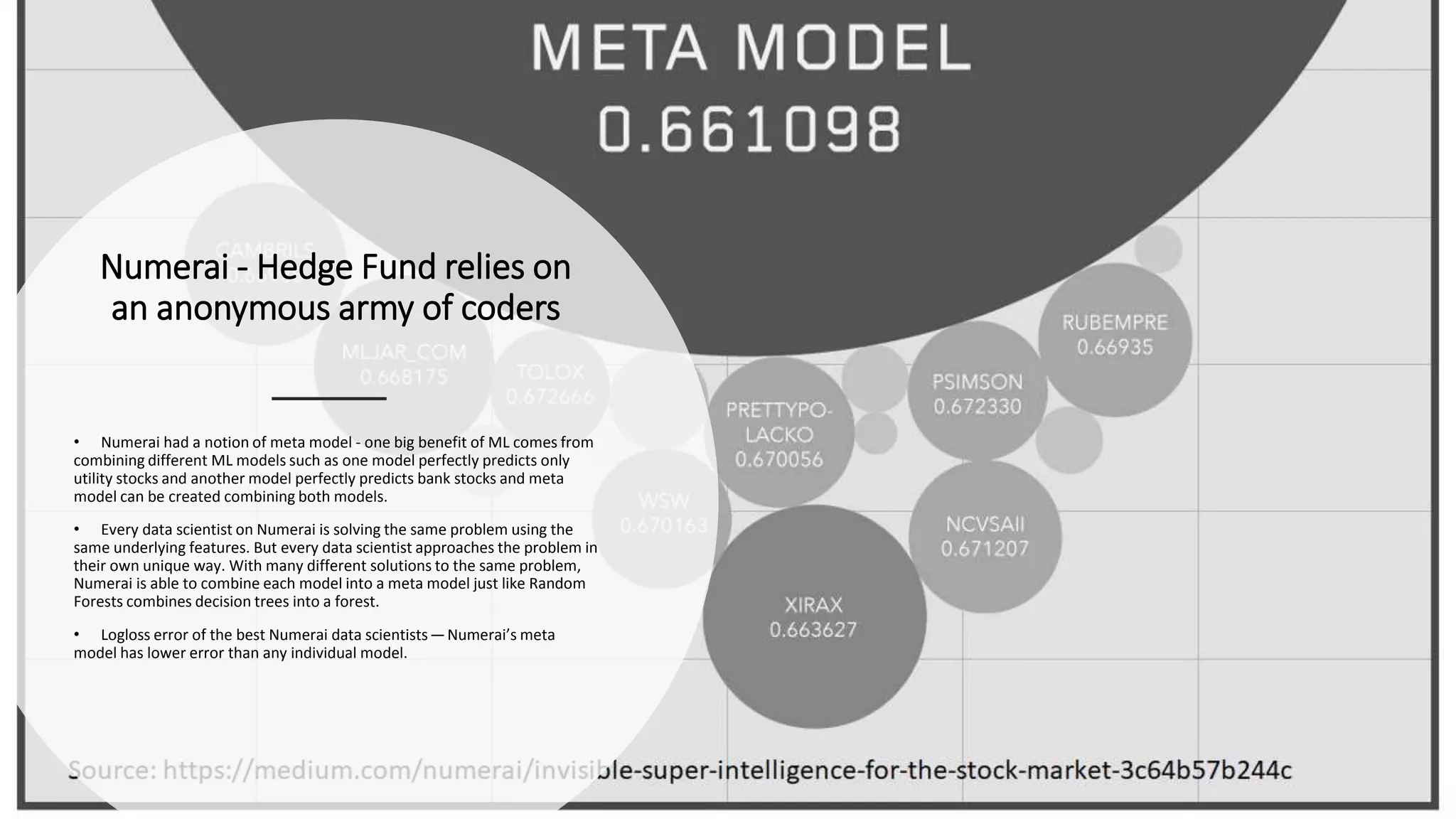

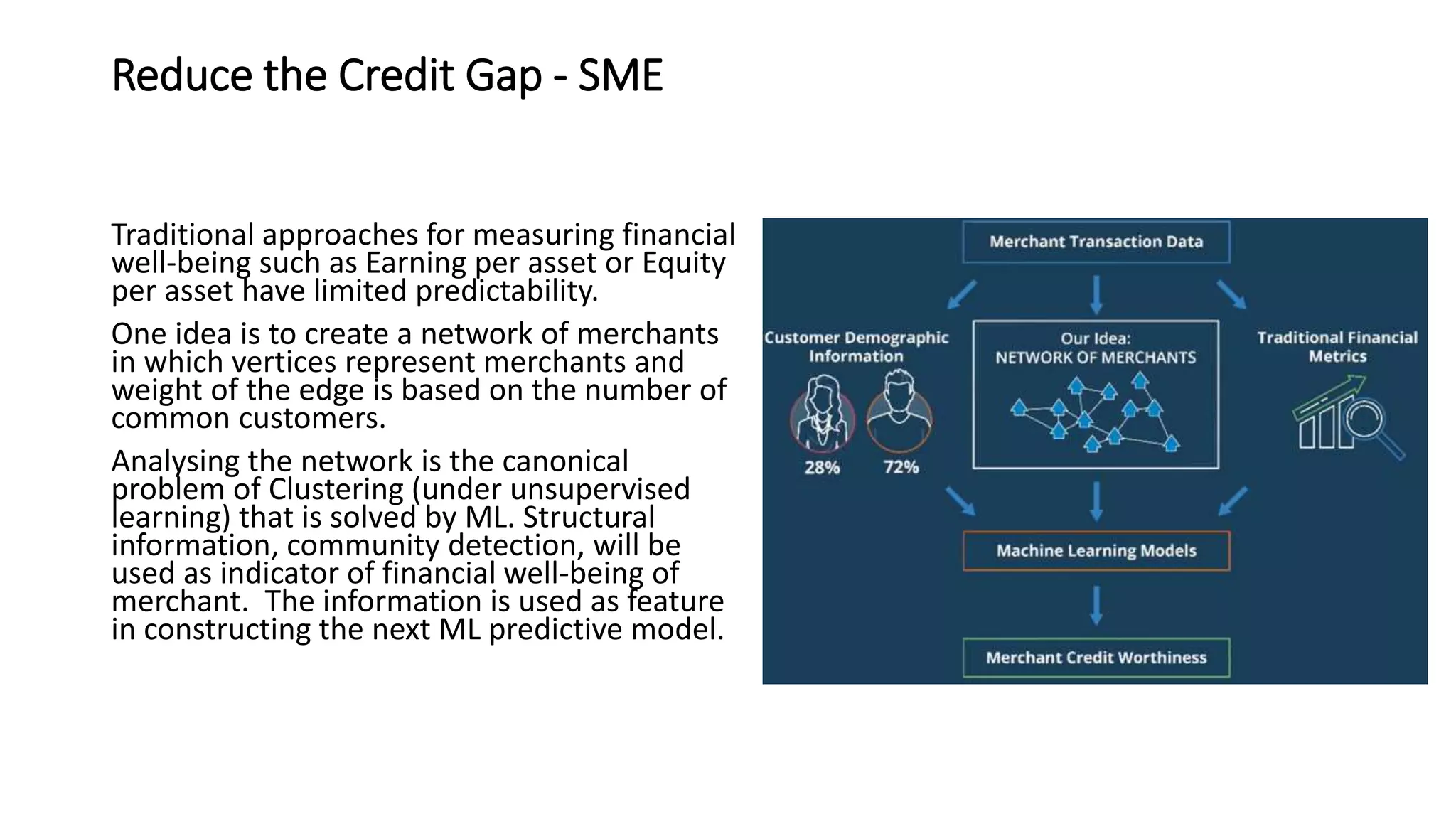

The document discusses the applications and challenges of machine learning (ML) in the banking sector, emphasizing its ability to process data, automate analyses, and uncover insights without human bias. It outlines ML techniques such as supervised and unsupervised learning and highlights key challenges to adoption, including data silos and the need for governance. Use cases from several companies, such as fraud prevention and credit risk assessment, illustrate the practical benefits of integrating ML into financial operations.