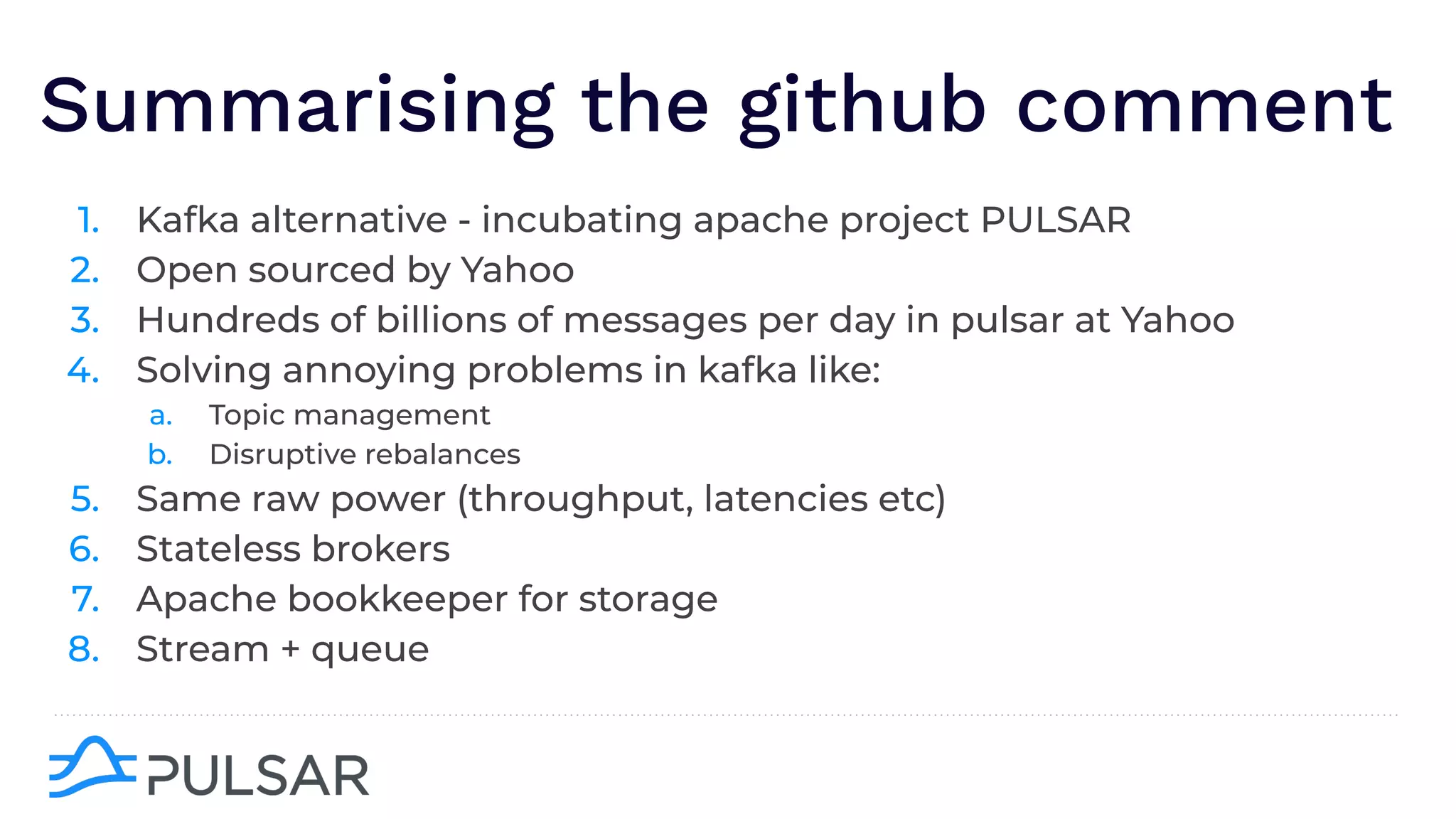

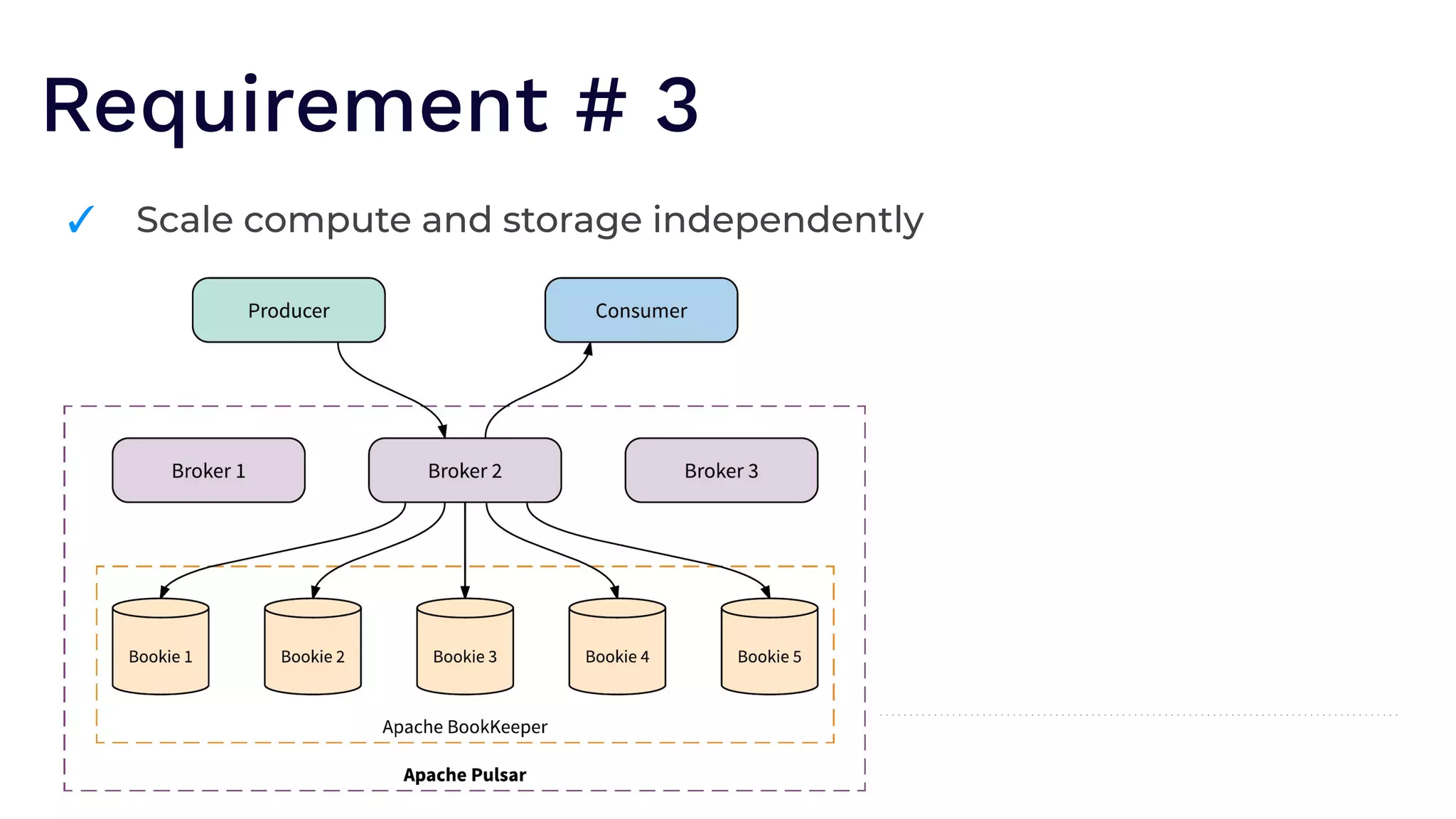

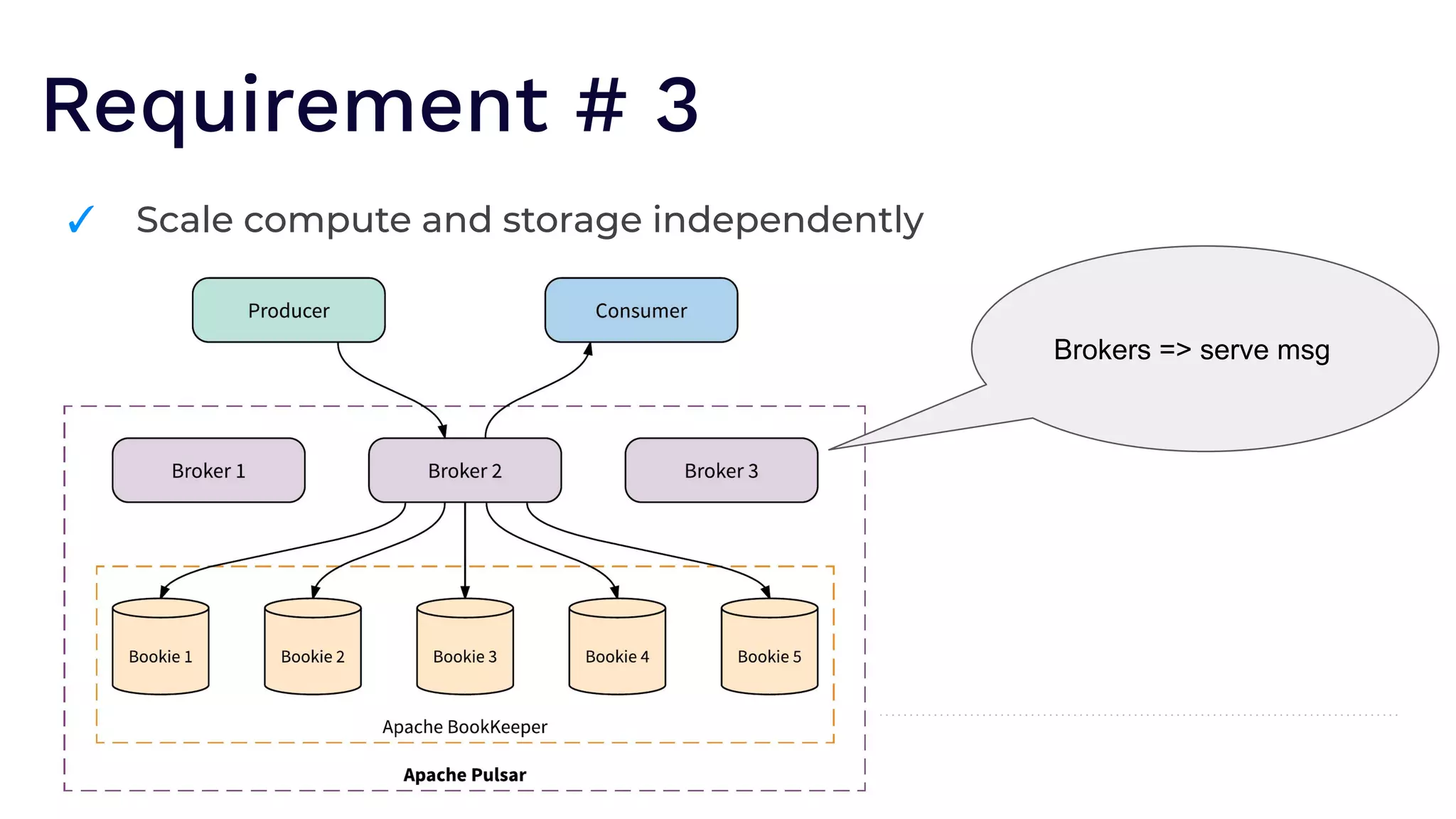

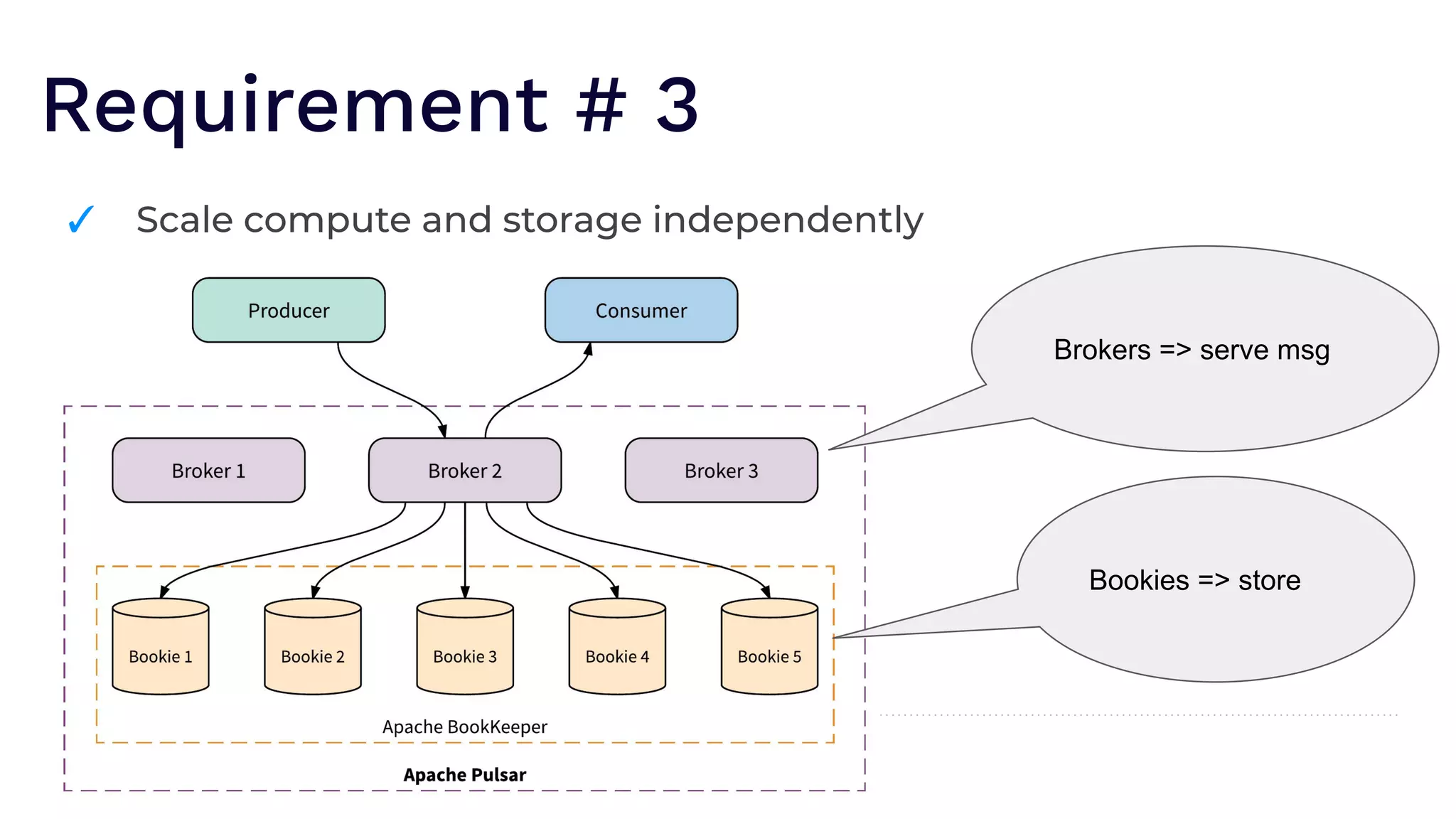

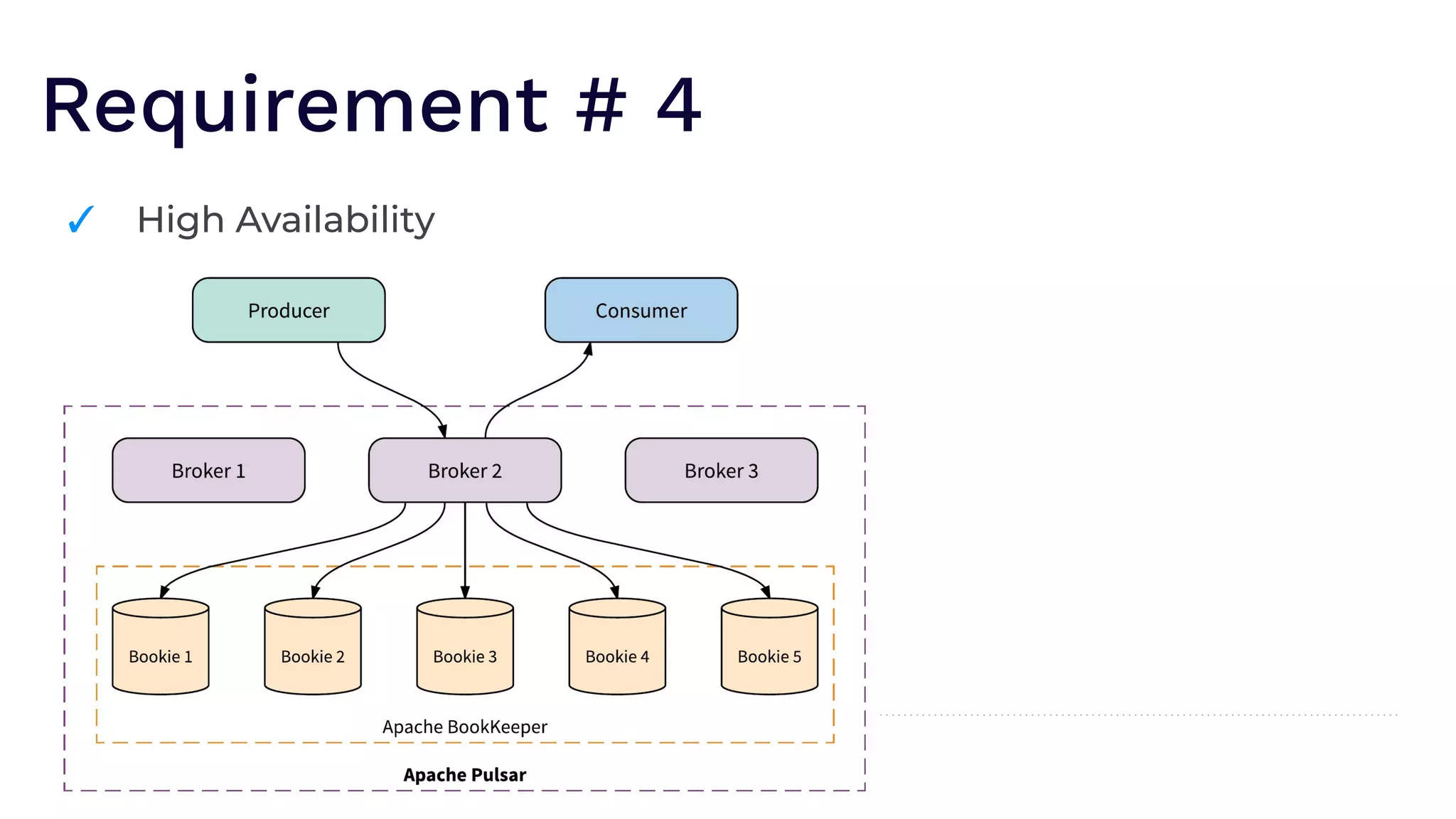

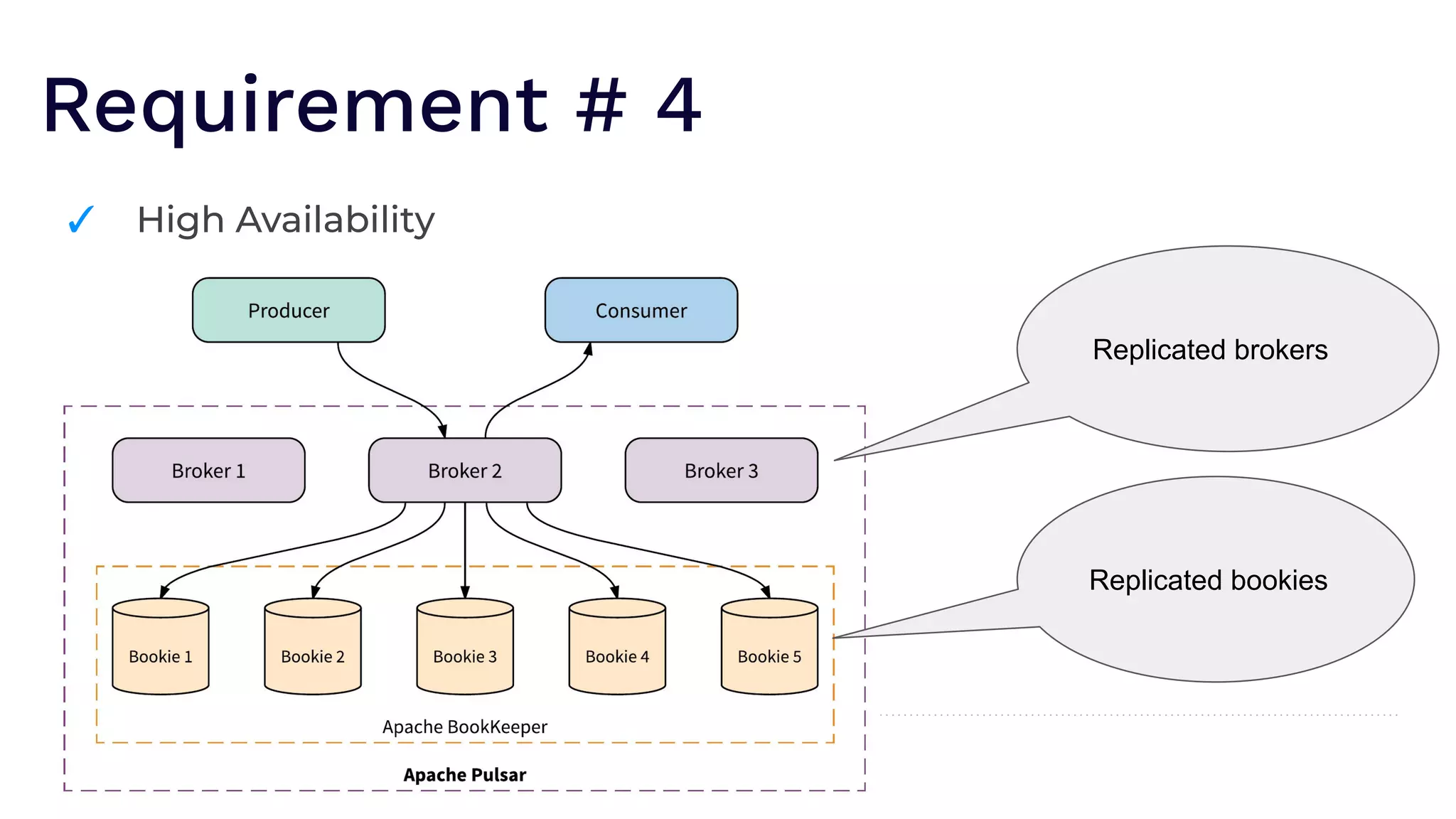

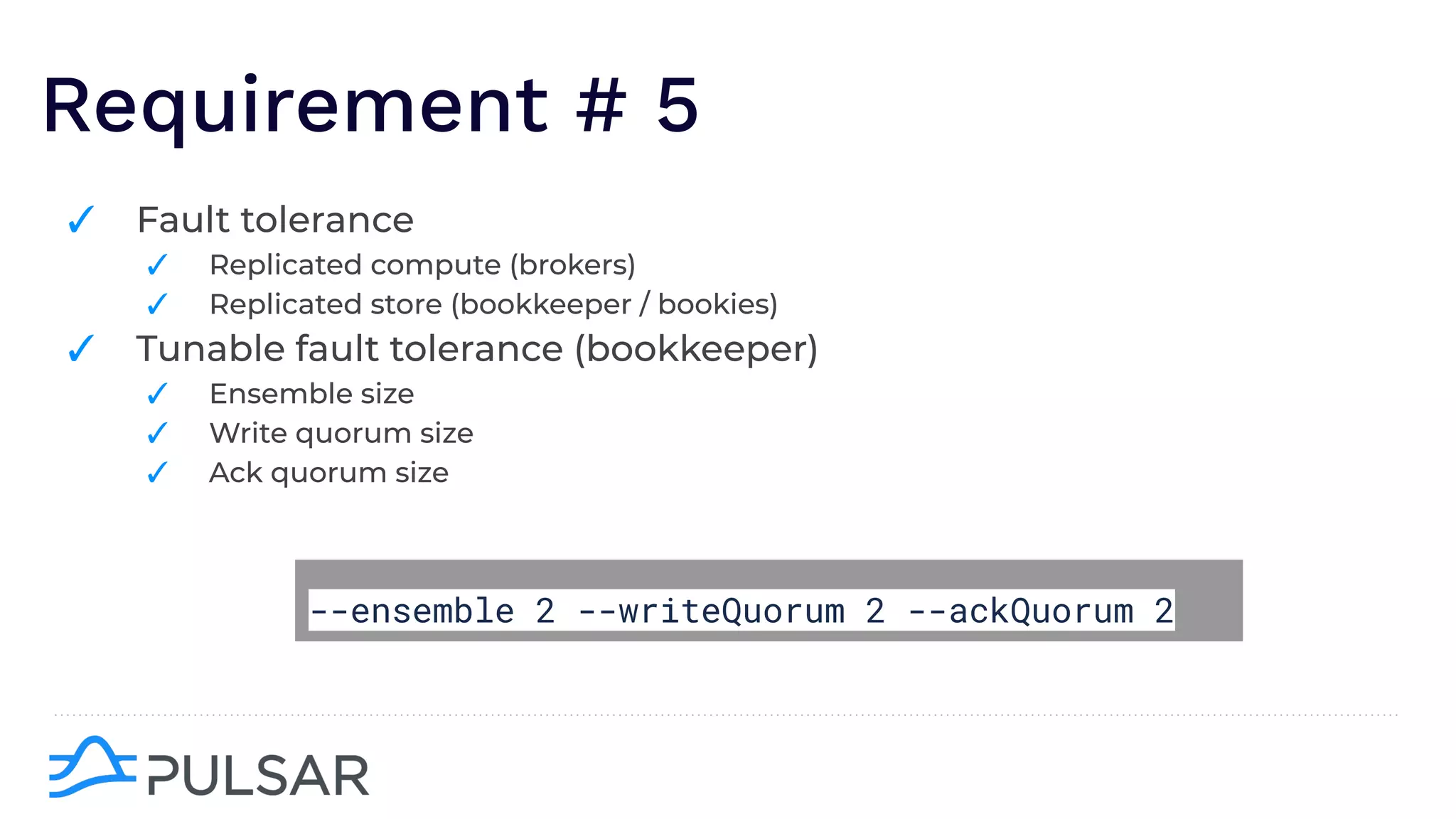

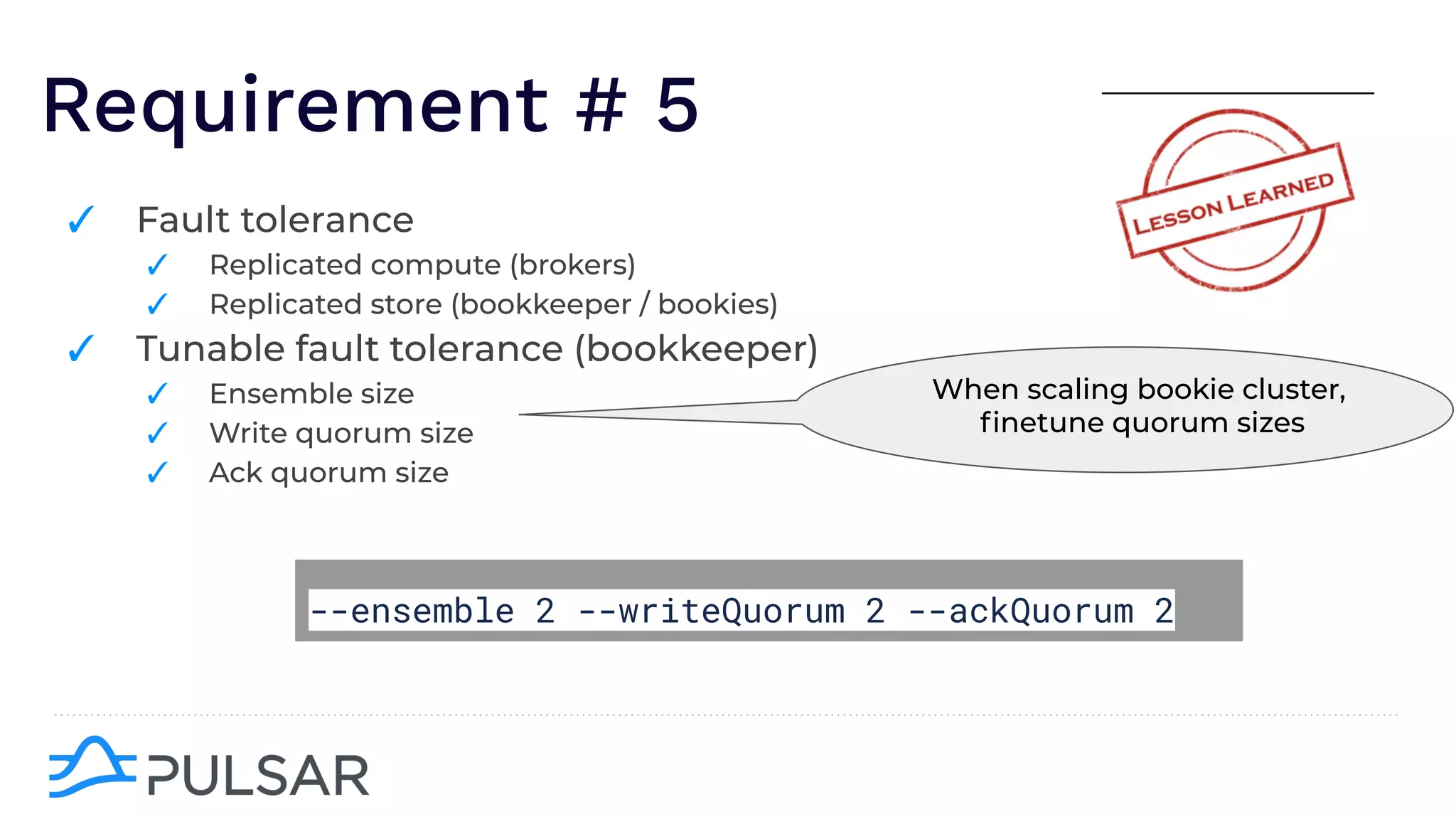

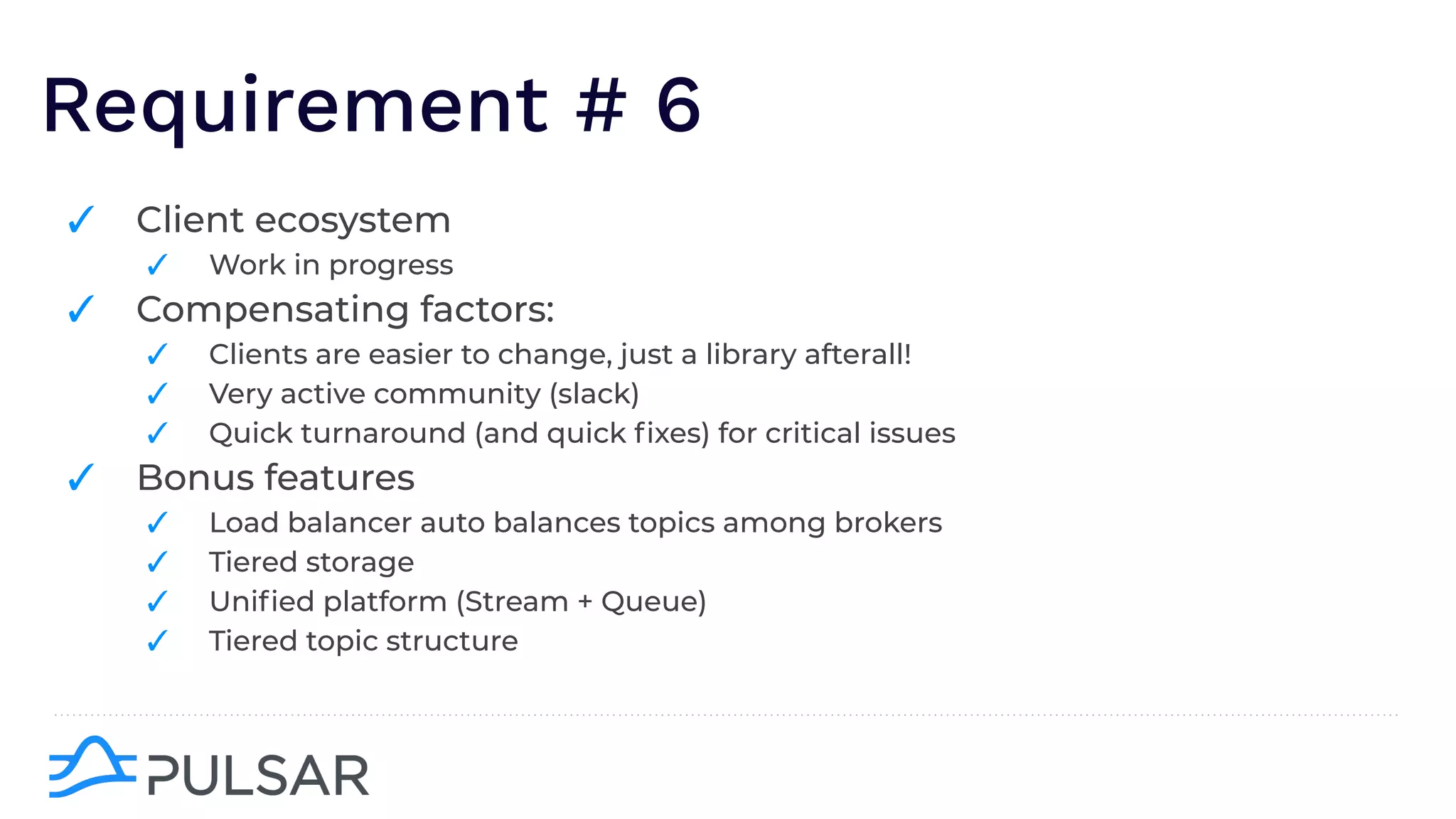

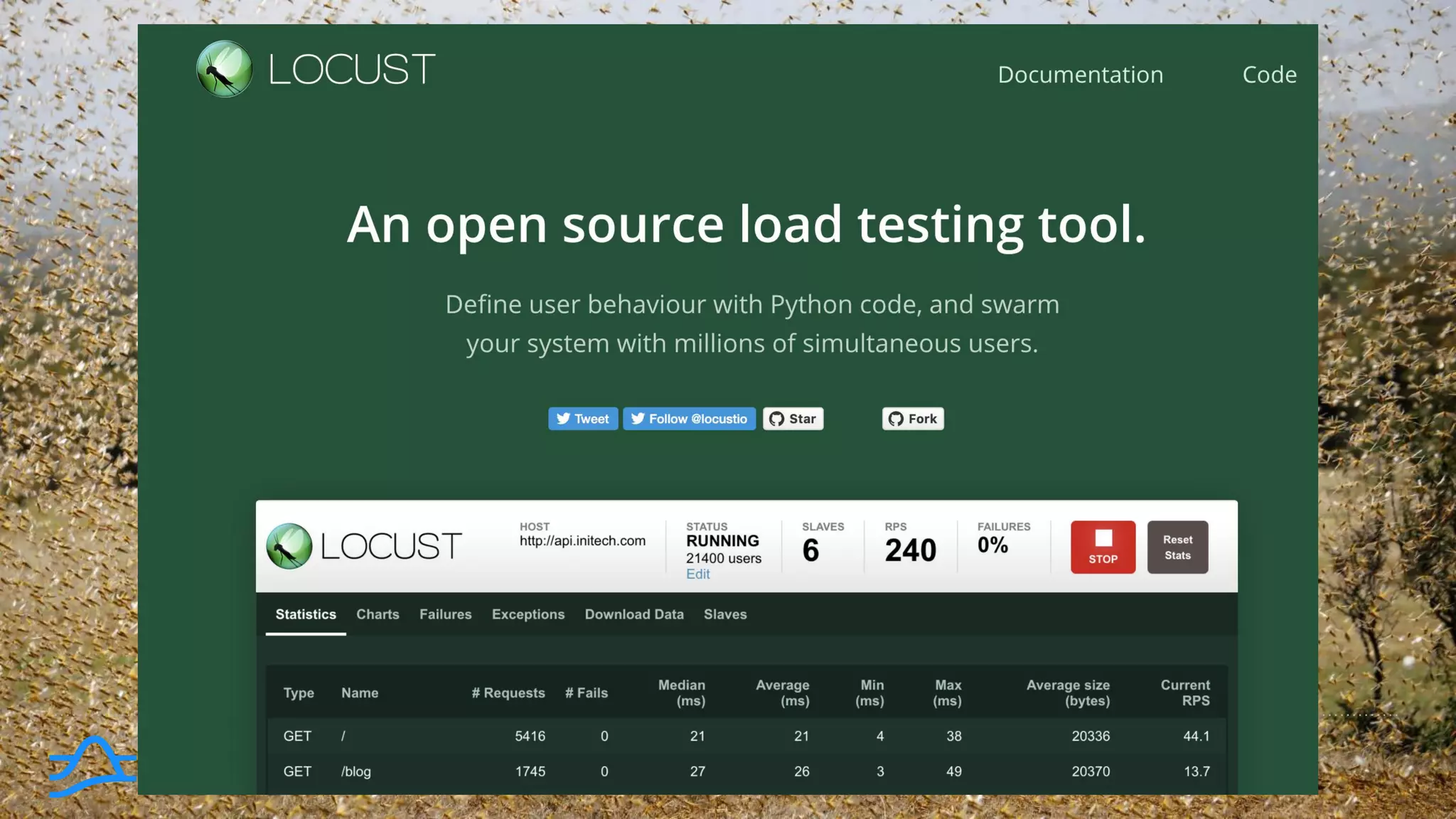

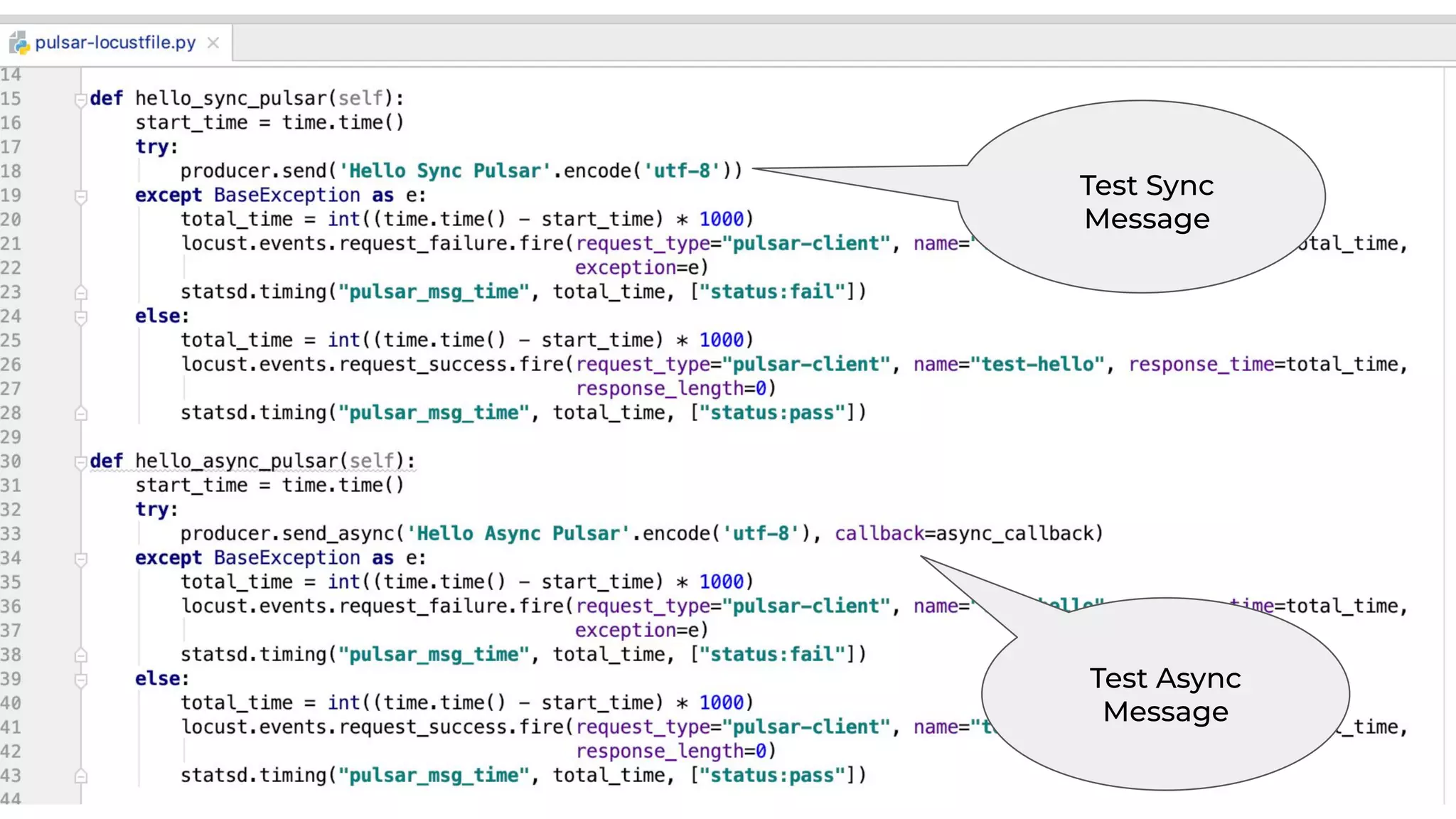

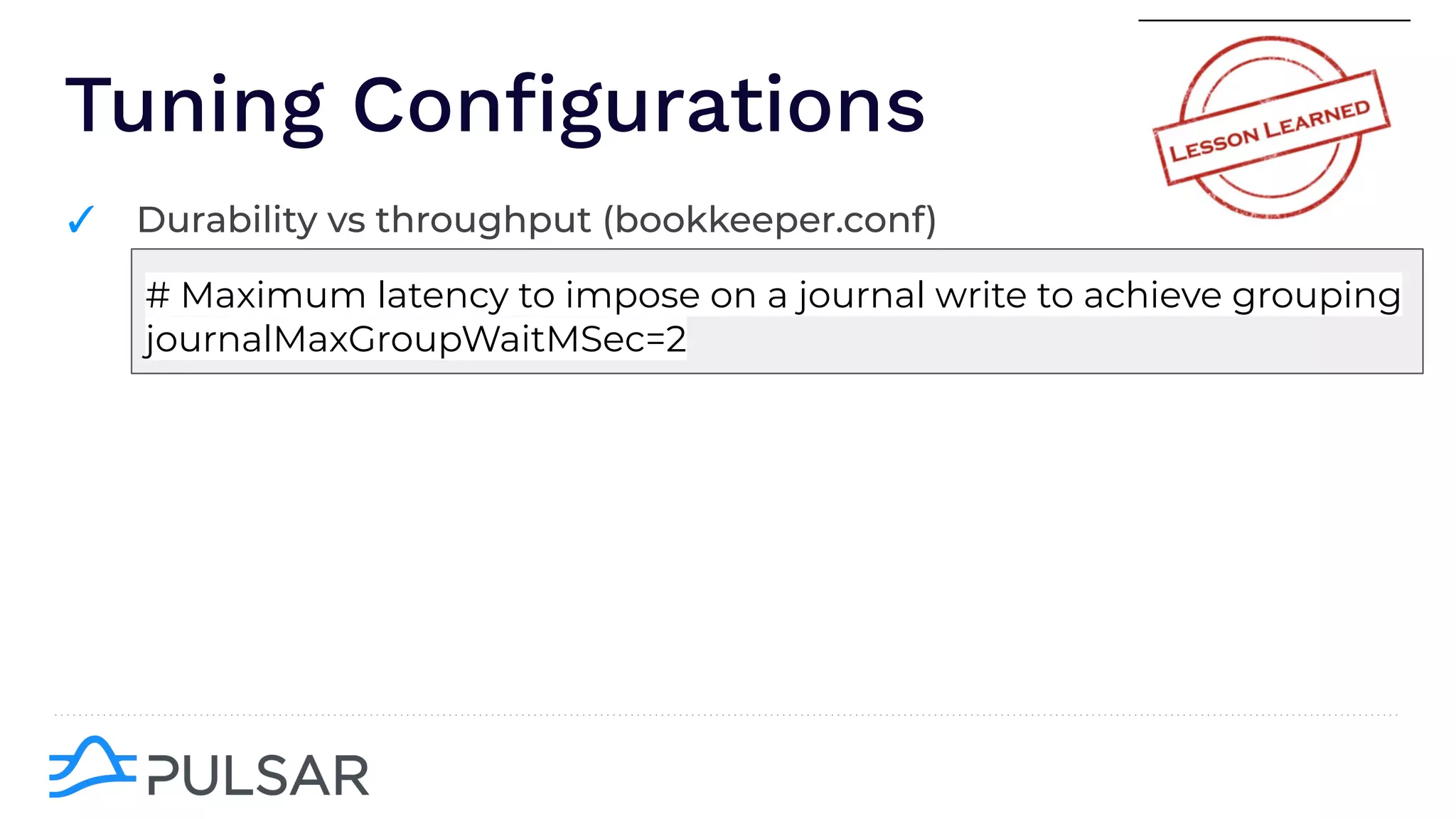

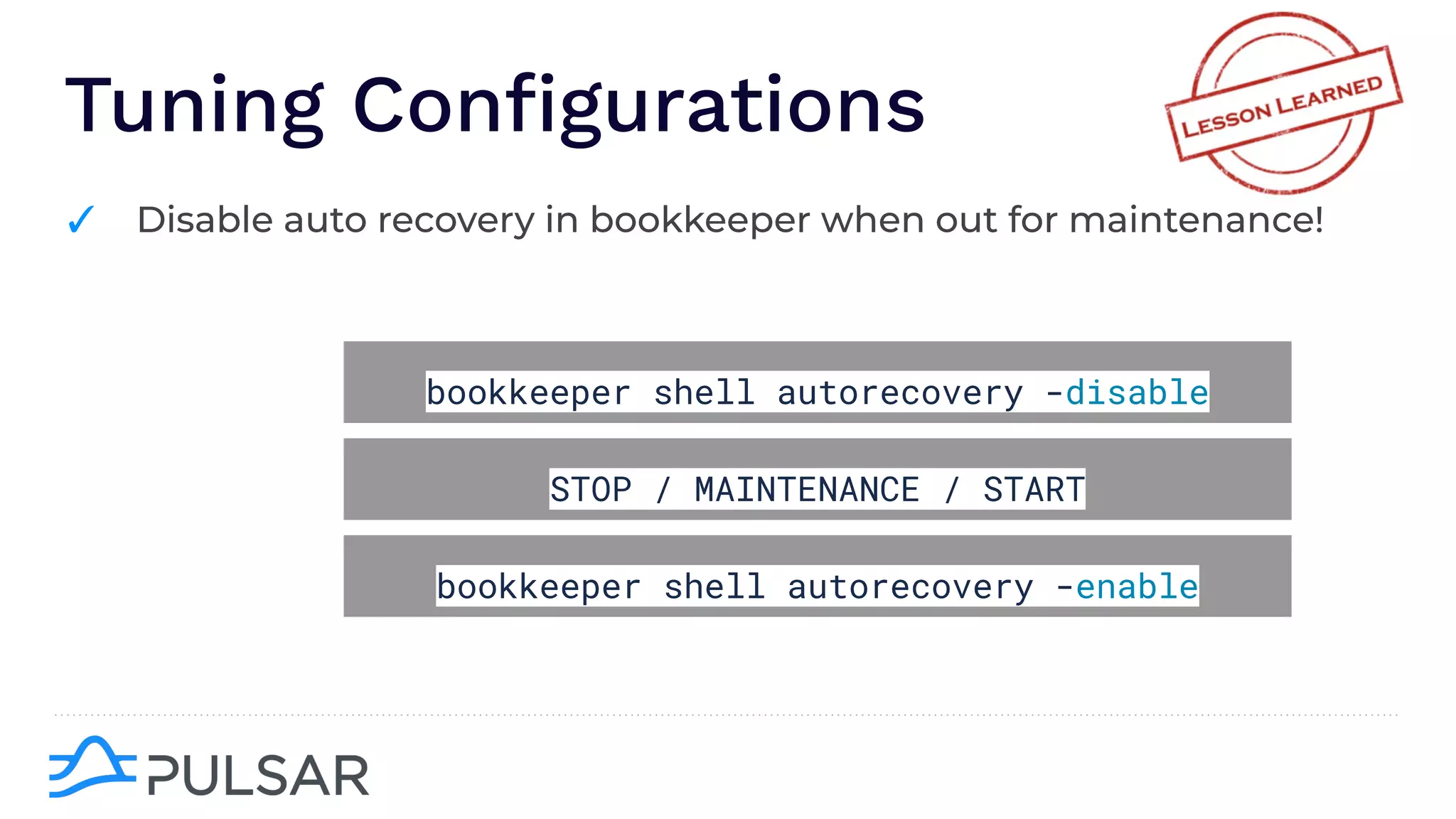

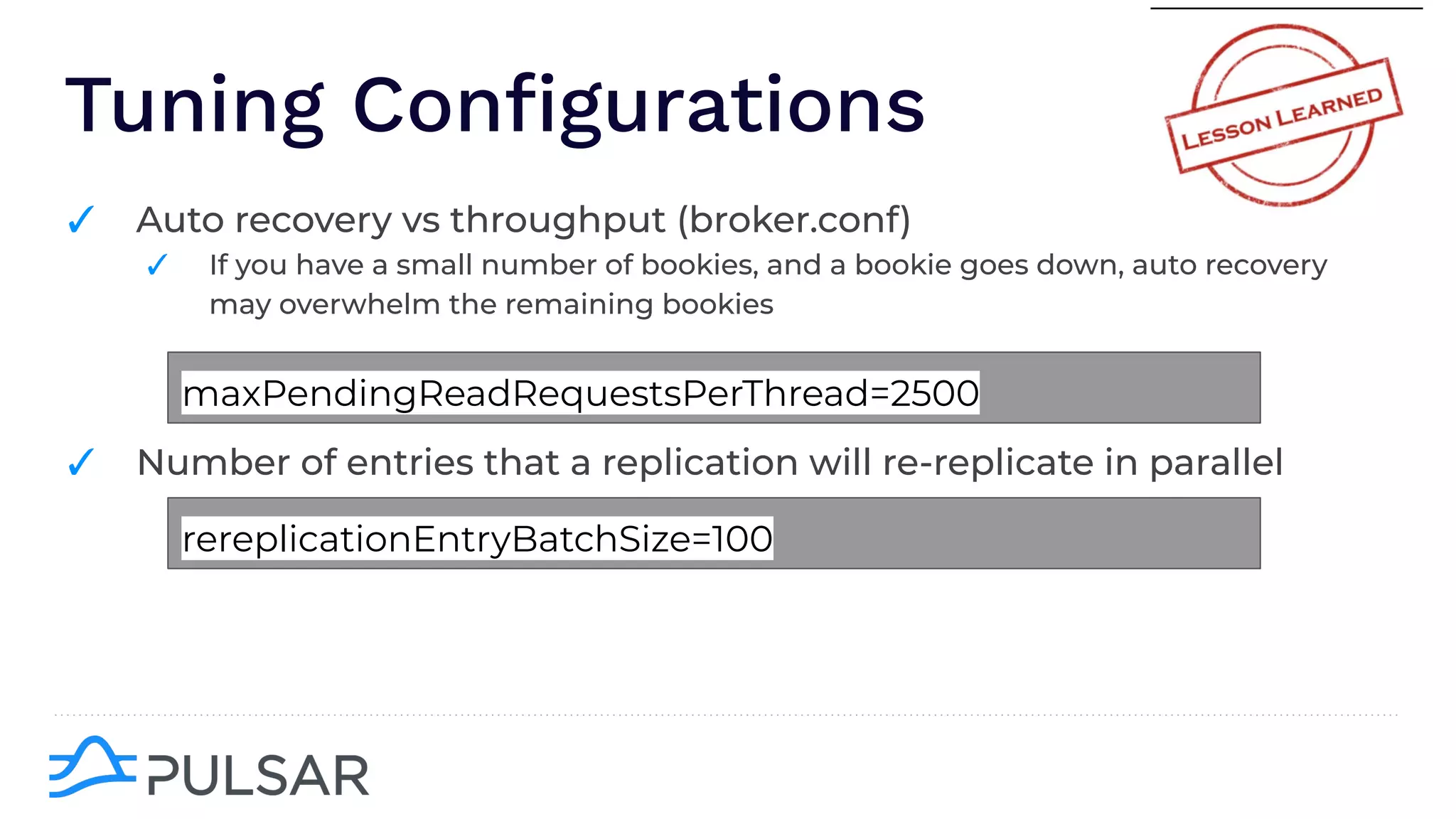

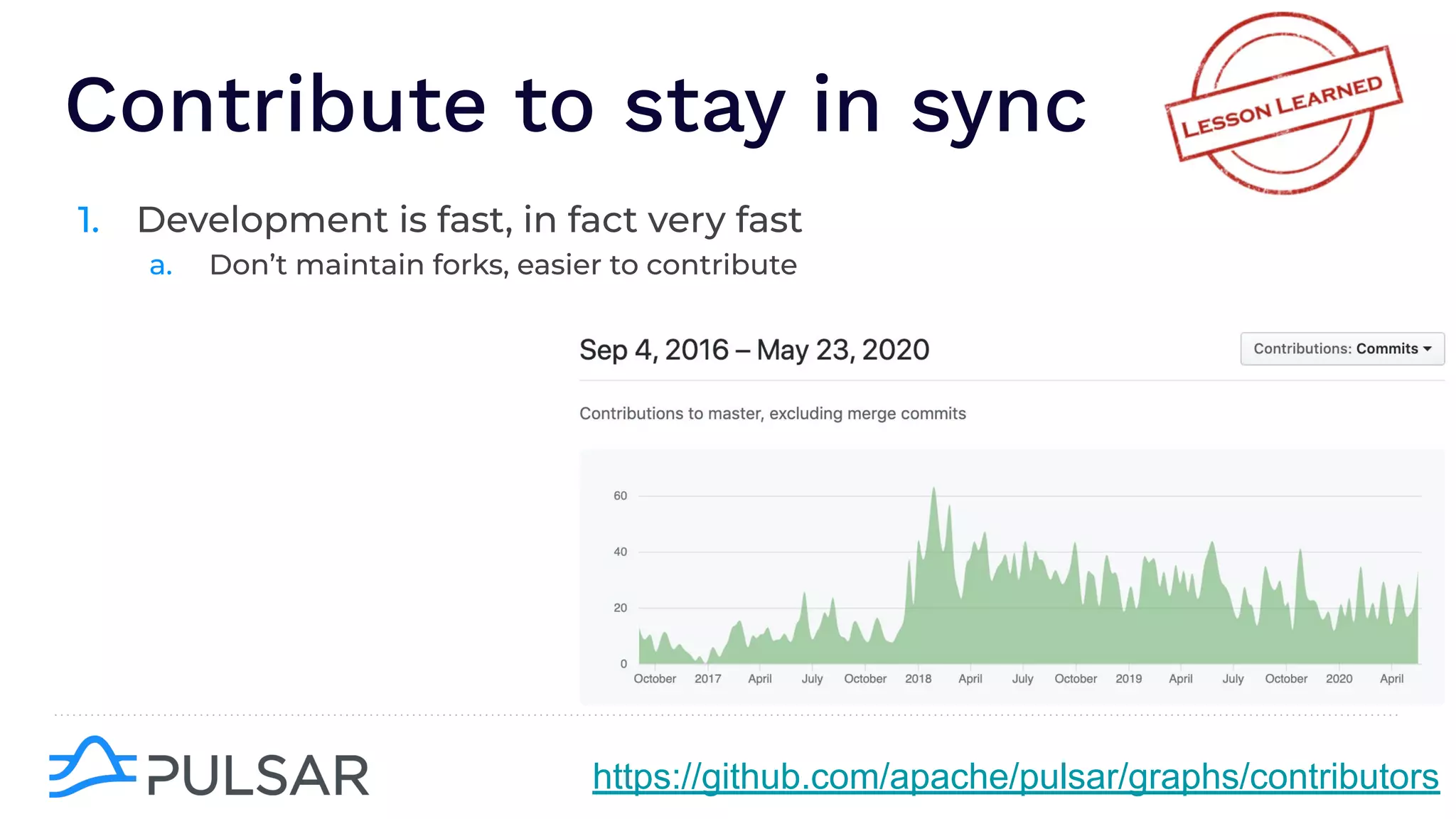

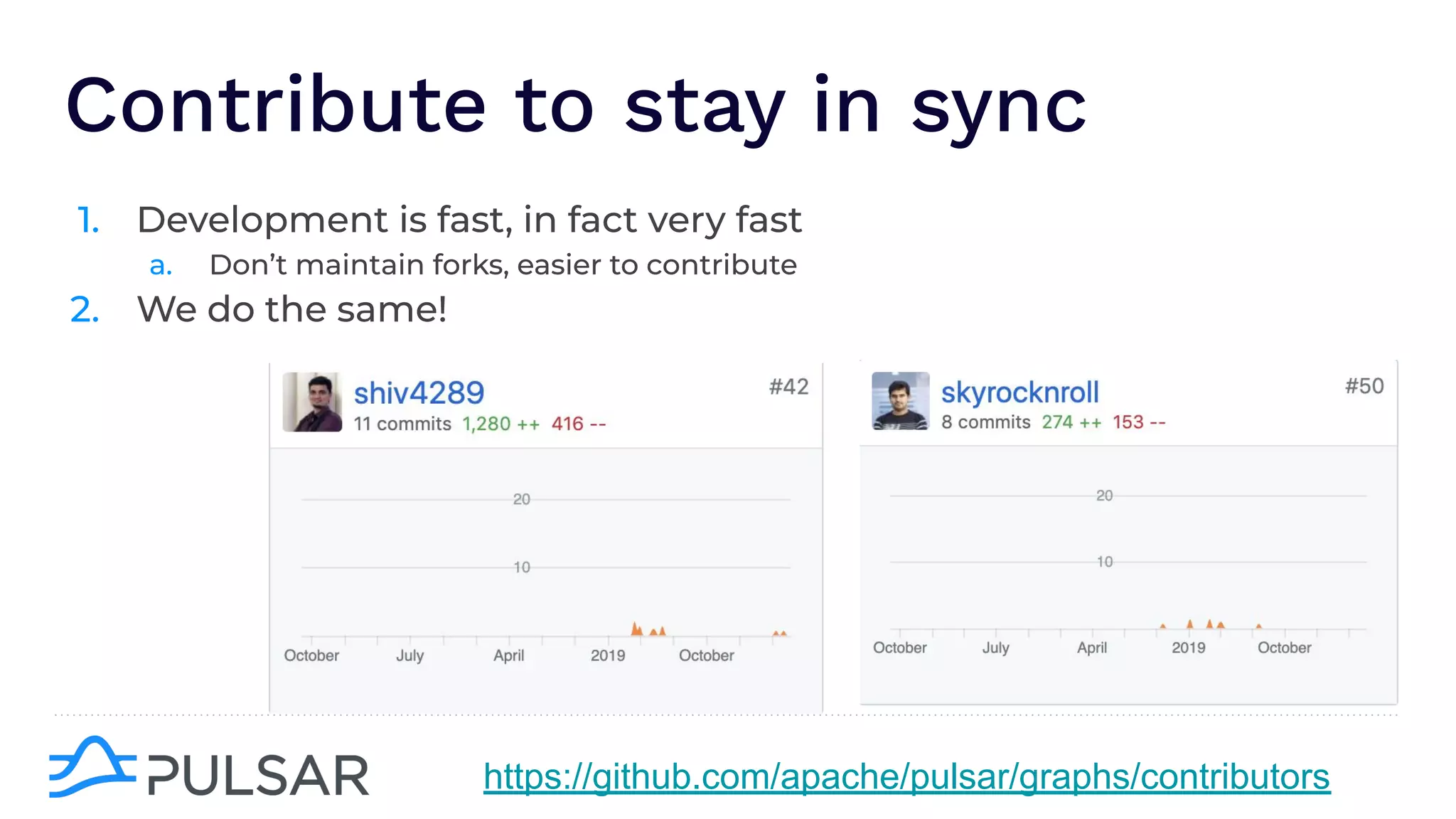

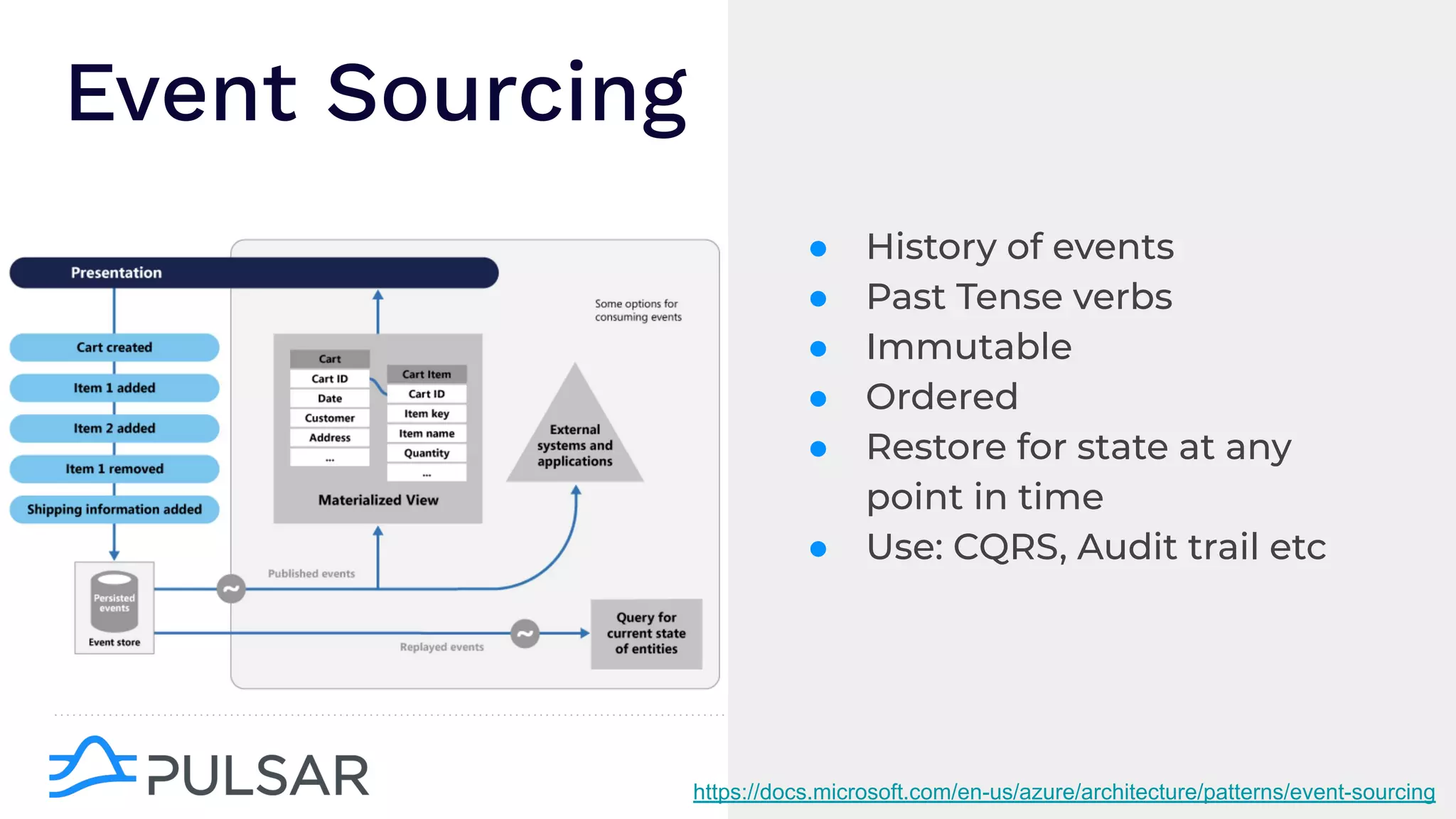

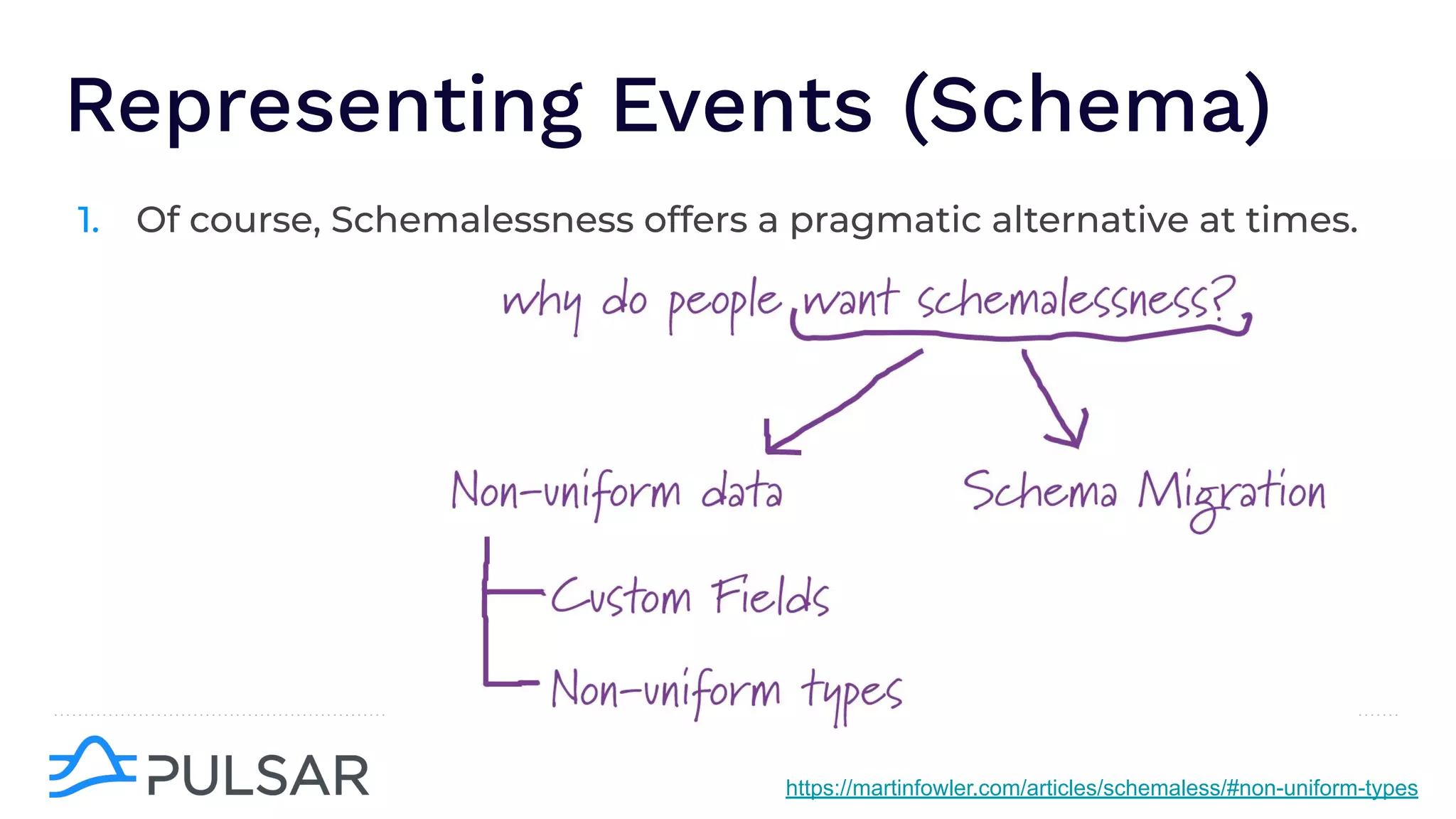

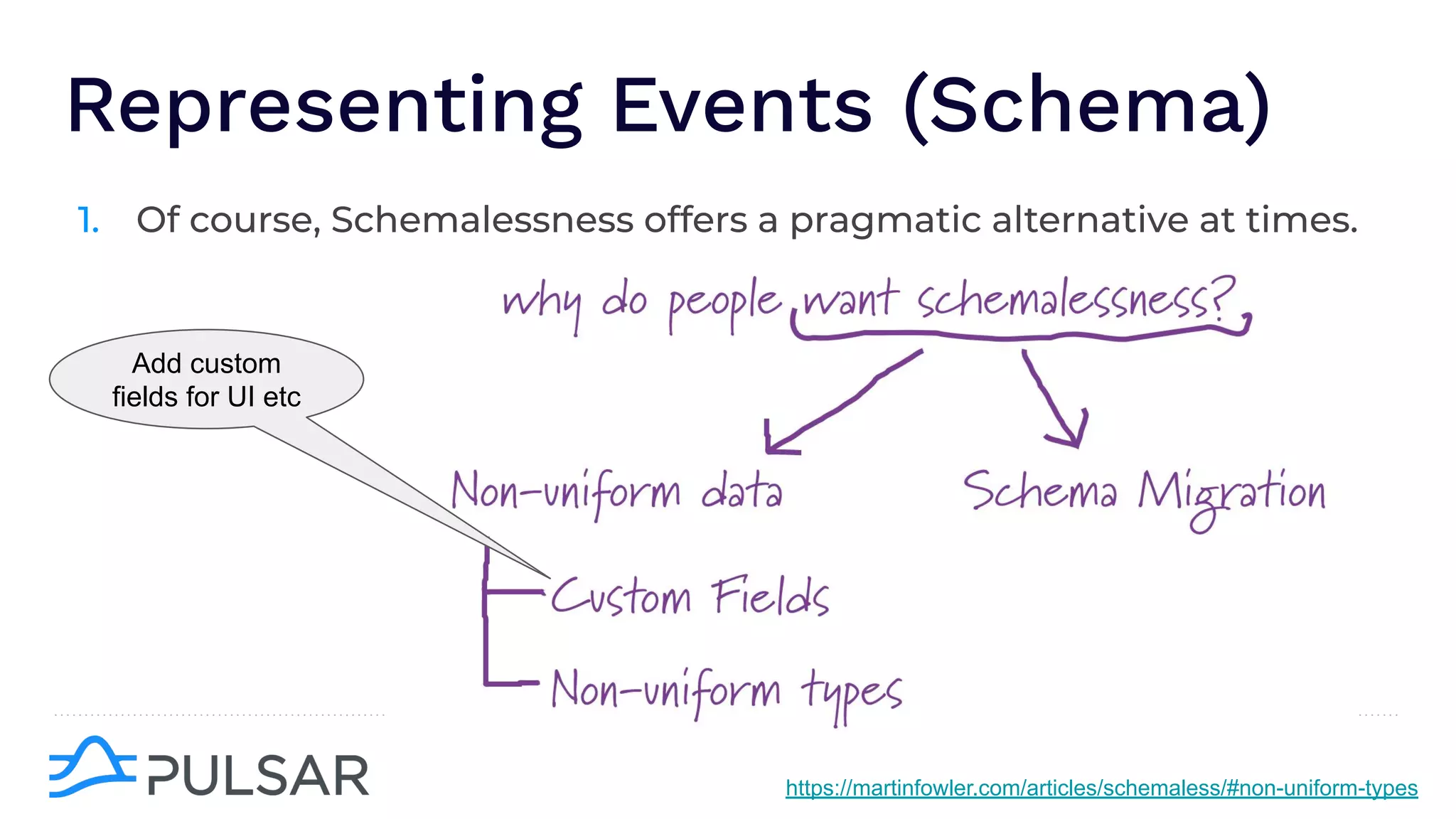

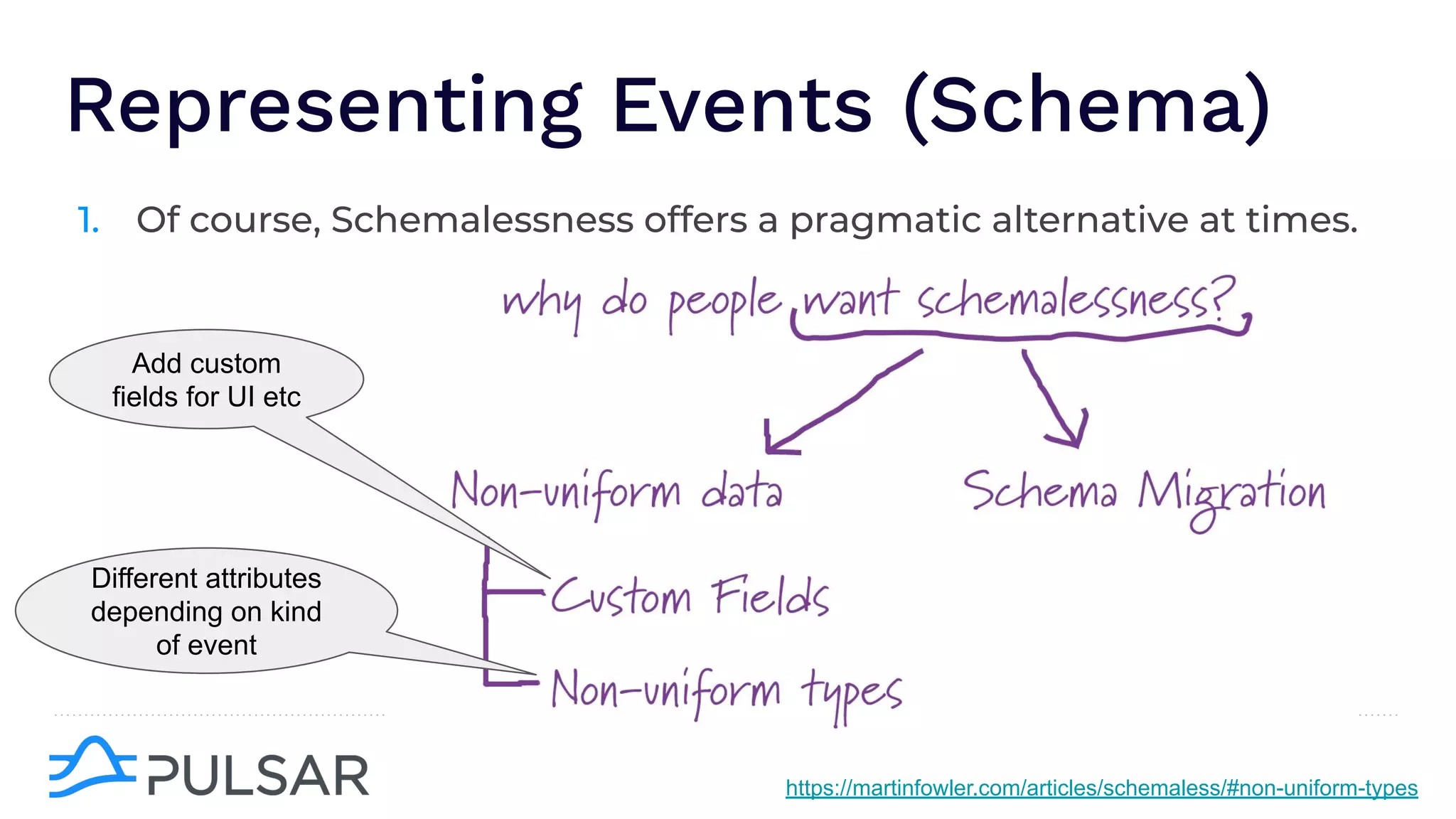

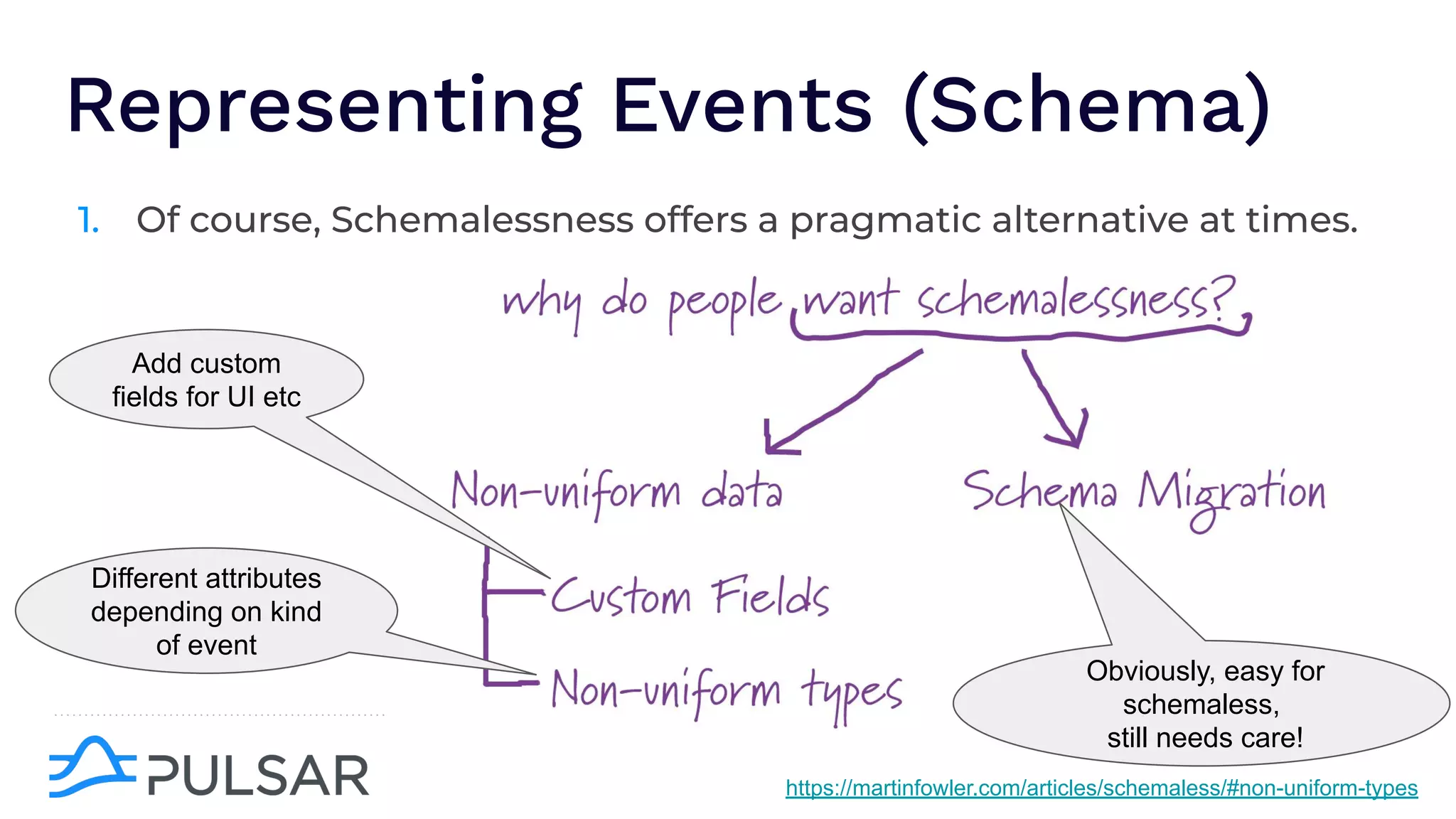

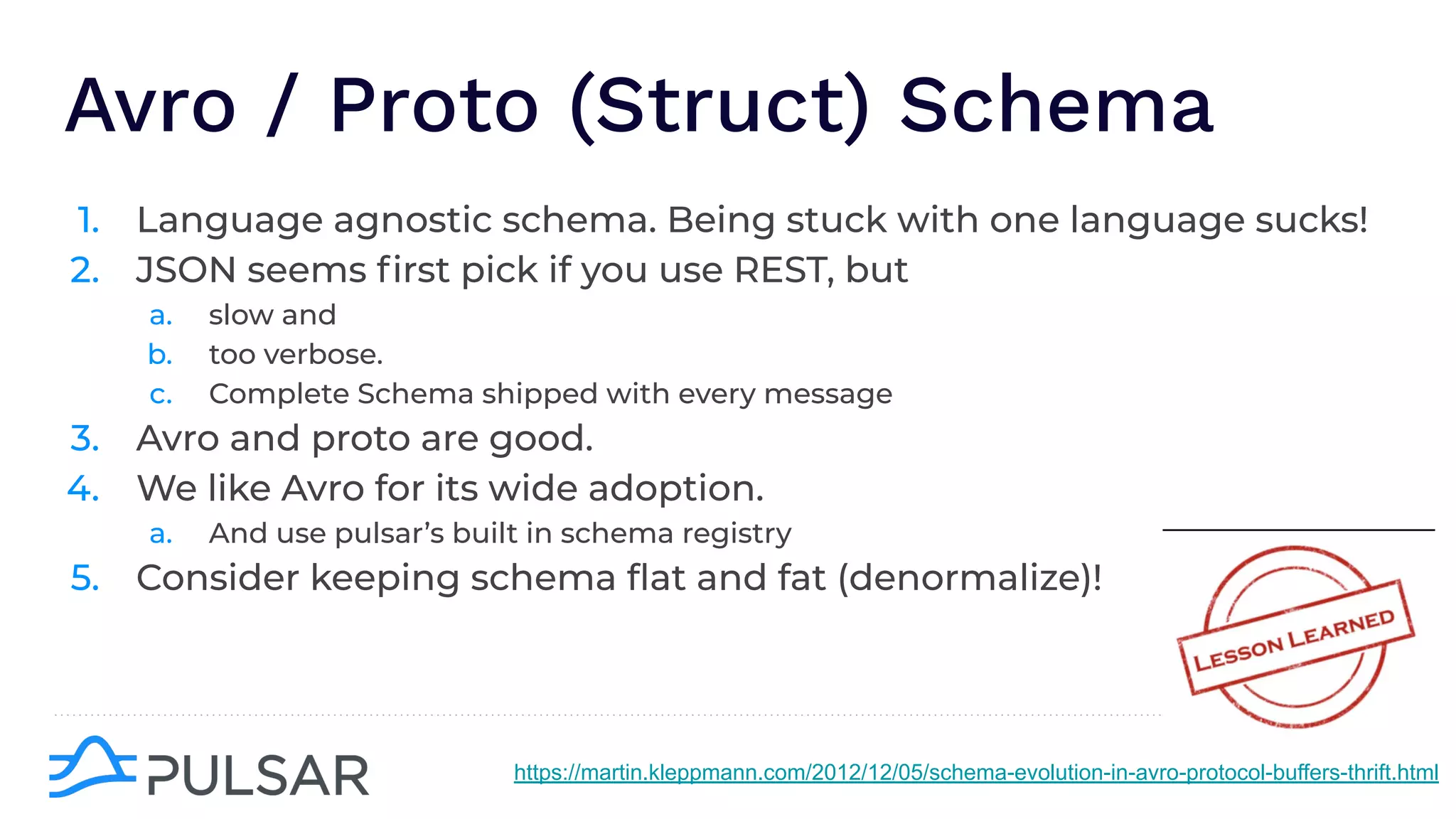

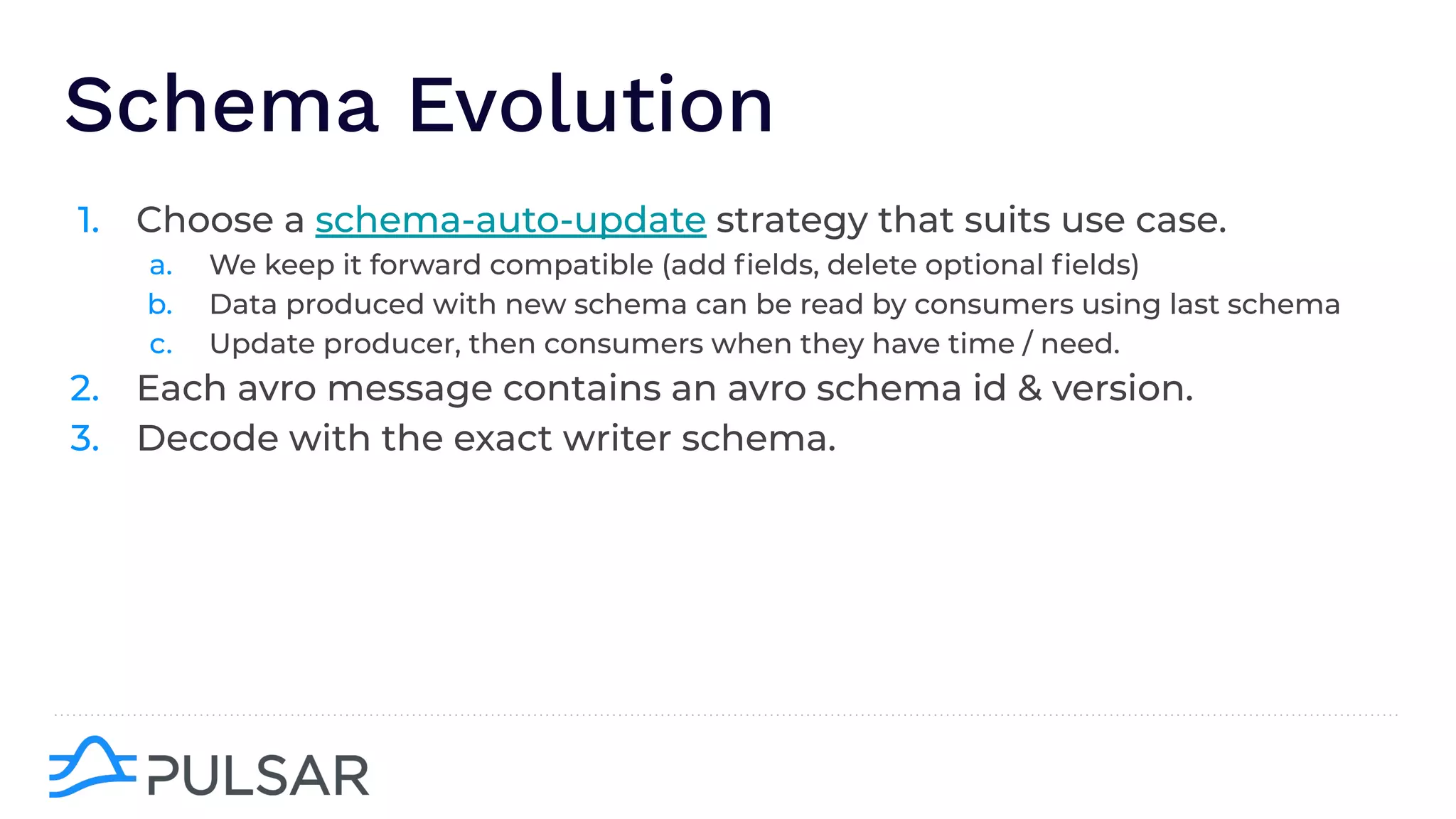

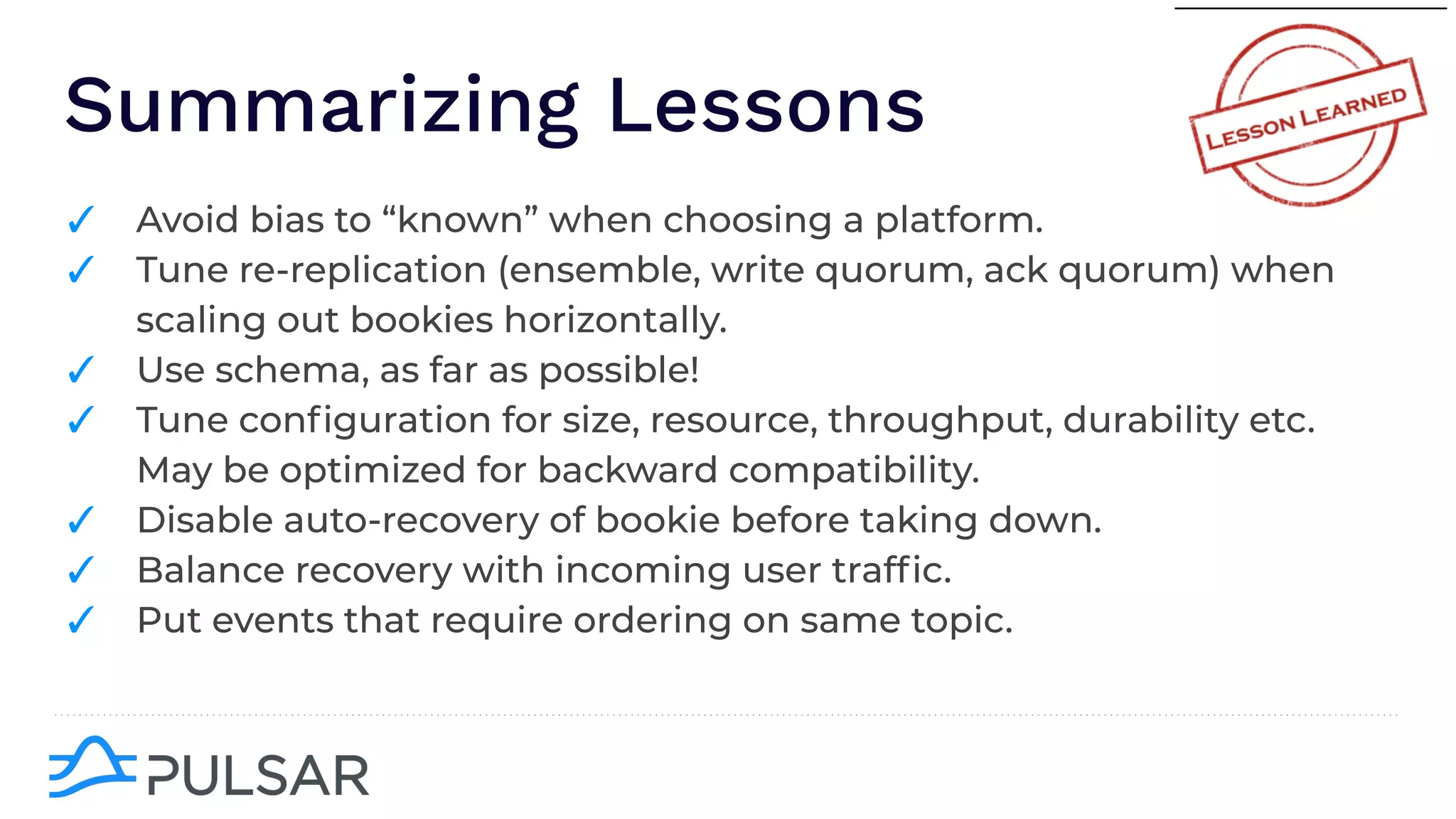

The document outlines the responsibilities of a senior developer at Nutanix and presents Apache Pulsar as a solution for managing data in hybrid cloud environments. It covers Pulsar's key features, requirements, and tuning configurations, with emphasis on efficient event storage, stream processing, and schema handling. The author also shares best practices for using Pulsar, including working with schemas, tuning performance, and ensuring fault tolerance.