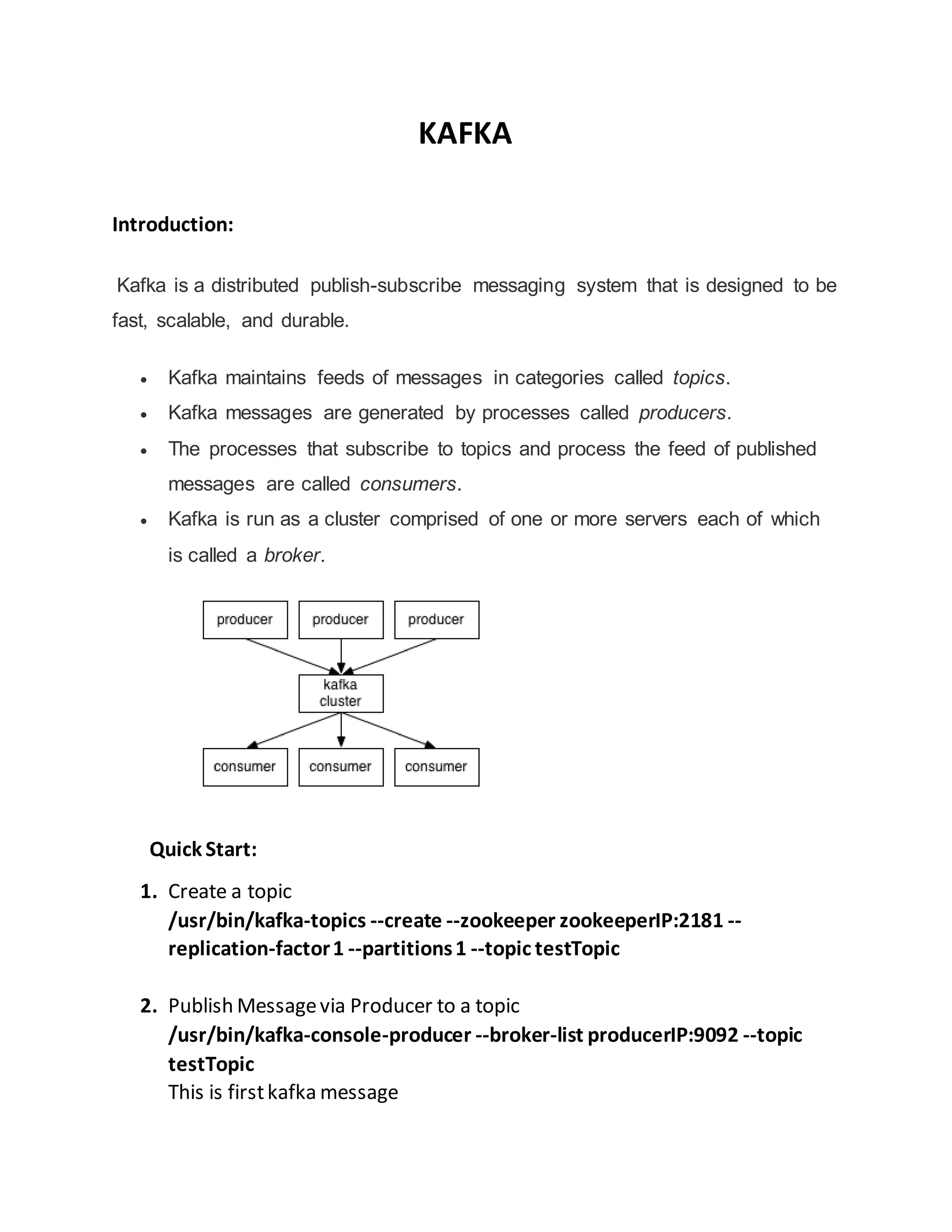

Kafka is a distributed publish-subscribe messaging system that allows processes called producers to publish messages to topics, and processes called consumers to subscribe to topics and receive the stream of published messages. It maintains feeds of messages in categories called topics, and messages are distributed across partitions in a fault-tolerant way. Kafka is run as a cluster of servers called brokers that together form a scalable and durable messaging backbone.

![3. So When touse Kafka?

Cloudera recommends using Kafka if the data will be consumed by multiple

applications

API Examples:

A sample Producer

Propertiesprops=newProperties();

props.put("metadata.broker.list",args[0]);

props.put("zk.connect",args[1]);

props.put("serializer.class","kafka.serializer.StringEncoder");

props.put("request.required.acks","1");

StringTOPIC= "event";

ProducerConfigconfig=newProducerConfig(props);

Producer<String,String>producer=new Producer<String,String>(config);

String[] events={"Normal","Normal","Normal",…];

String[] truckIds= {"1", "2", "3","4"};

String[] driverIds={"11", "12", "13", "14"};

Stringmessage = newTimestamp(newDate().getTime()) +"|"

+ truckIds[2] + "|" + driverIds[2] +"|" + events[random.nextInt(evtCnt)] );

try {

KeyedMessage<String,String>data= new KeyedMessage<String,String>(TOPIC, message);

producer.send(data);

Thread.sleep(1000);

} catch (Exceptione) {

e.printStackTrace();

}

A sample Consumer

Kafka provides a simple consumer which can be modified as per requirement

Steps for using a Simple Consumer

Find an active Broker and find out which Broker is the leader for your topic

and partition

Determine who the replica Brokers are for your topic and partition](https://image.slidesharecdn.com/d243b9ac-181c-4964-bd4d-36c665aedb7e-160215073853/85/KAFKA-Quickstart-4-320.jpg)

![ Build the request defining what data you are interested in

Fetch the data

Identify and recover from leader changes

Data fetch pseudo code

FetchRequestreq=new FetchRequestBuilder().clientId(clientName).addFetch(a_topic,a_partition,

readOffset, 100000).build();

FetchResponsefetchResponse =consumer.fetch(req);

if (fetchResponse.hasError()) {

//Error Handlingcode here

}

for (MessageAndOffset messageAndOffset:fetchResponse.messageSet(a_topic,a_partition)) {

longcurrentOffset=messageAndOffset.offset();

if (currentOffset<readOffset) {

//Properloggerhere

continue;

}

readOffset=messageAndOffset.nextOffset();

ByteBufferpayload=messageAndOffset.message().payload();

byte[] bytes=new byte[payload.limit()];

payload.get(bytes);

System.out.println(String.valueOf(messageAndOffset.offset()) + ": " + new String(bytes, "UTF-8"));

numRead++;

a_maxReads--;

}

Conclusion:

As you can see, Kafka has a unique design that makes it very useful for solving a

wide range of architectural challenges. It is important to make sure you use the

right approach for your use case and use it correctly to ensure high throughput,

low latency, high availability, and no loss of data.](https://image.slidesharecdn.com/d243b9ac-181c-4964-bd4d-36c665aedb7e-160215073853/85/KAFKA-Quickstart-5-320.jpg)