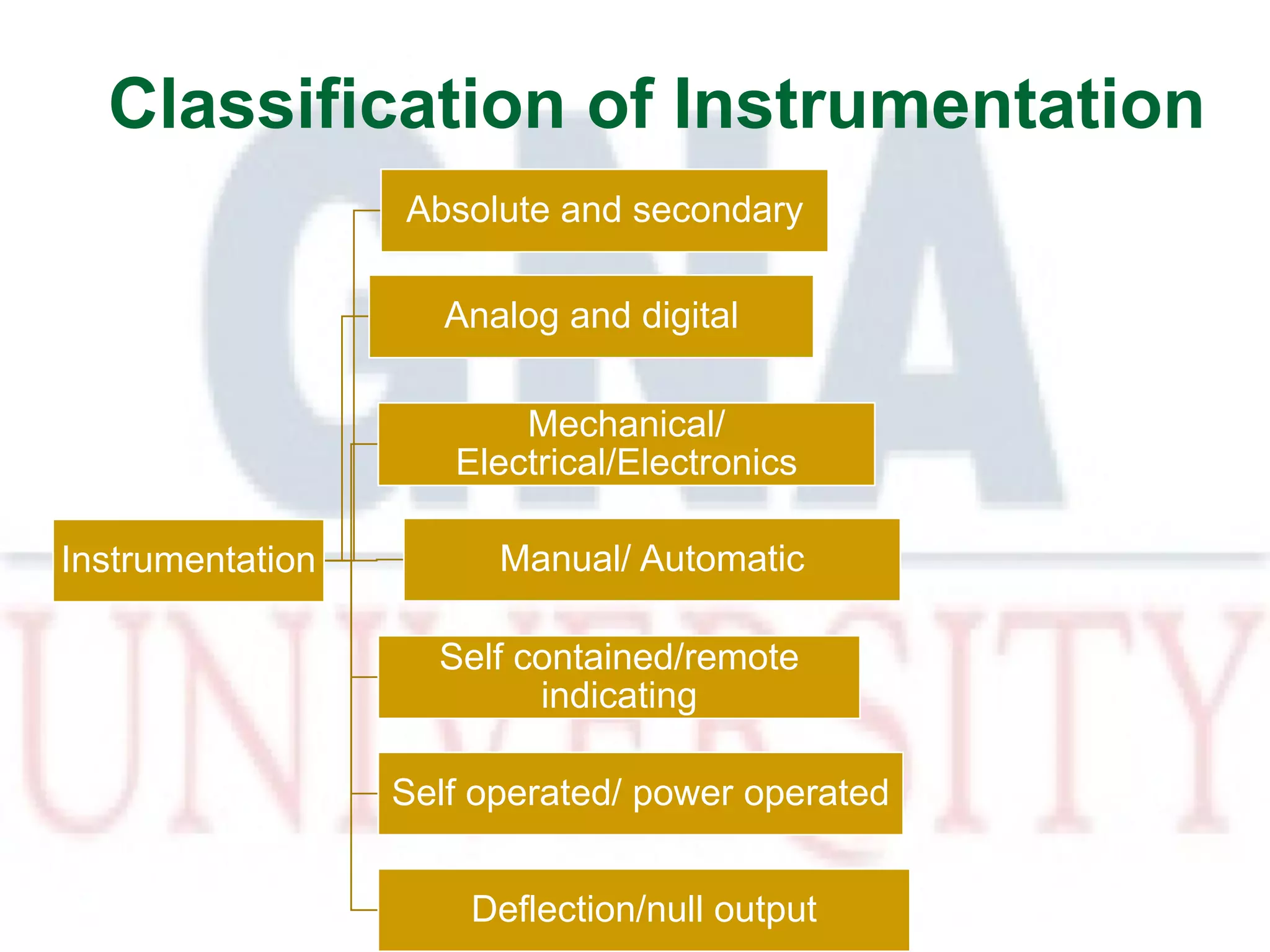

This document introduces metrology, the science of measurement. It defines key terms such as measurement and explains why good measurements matter. A good measurement is one in which the standard is accurately defined and the method and instruments used are reliable. The document also outlines the three sources of error in measurement (systematic, random and gross errors) and introduces statistical methods for analysing measured data.
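The document does not list which statistical measures it uses. The sketch below assumes the set commonly taught in introductory metrology: the arithmetic mean of repeated readings, each reading's deviation from the mean, the average deviation, the sample standard deviation and the standard error of the mean. The function name `analyse_readings` and the optional `true_value` parameter are hypothetical, chosen for illustration. Averaging reduces the effect of random errors. A persistent offset from an accepted reference value hints at a systematic error. Gross errors (blunders such as misreading a scale) have to be caught by inspecting the data, not by these formulas.

```python
import math
from statistics import mean, stdev


def analyse_readings(readings, true_value=None):
    """Summarise repeated readings of one quantity (hypothetical helper).

    Assumes the readings were taken under the same conditions, so their
    scatter reflects random error. `true_value`, if given, is an accepted
    reference value used to estimate the error of the mean.
    """
    n = len(readings)
    if n < 2:
        raise ValueError("need at least two readings to estimate scatter")

    x_bar = mean(readings)                       # arithmetic mean: best estimate
    deviations = [x - x_bar for x in readings]   # deviation of each reading from the mean
    avg_dev = sum(abs(d) for d in deviations) / n  # average (mean absolute) deviation
    s = stdev(readings)                          # sample standard deviation (n - 1 divisor)

    result = {
        "mean": x_bar,
        "deviations": deviations,
        "average_deviation": avg_dev,
        "std_dev": s,
        "std_error": s / math.sqrt(n),           # standard error of the mean
    }

    if true_value is not None:
        # A consistent non-zero offset here points to a systematic error,
        # which averaging more readings will not remove.
        result["absolute_error"] = x_bar - true_value
        result["relative_error"] = (x_bar - true_value) / true_value

    return result


# Example: five readings of a length in mm against a 10.0 mm reference.
r = analyse_readings([10.1, 10.3, 9.9, 10.2, 10.0], true_value=10.0)
print(f"mean = {r['mean']:.3f} mm, s = {r['std_dev']:.4f} mm")
```

The sample standard deviation (divisor n - 1) is used because the true mean is unknown and is itself estimated from the same readings.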