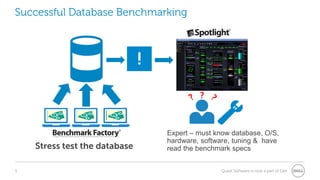

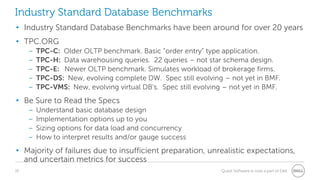

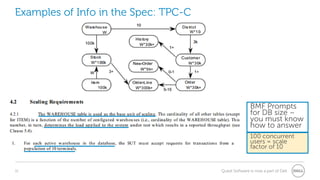

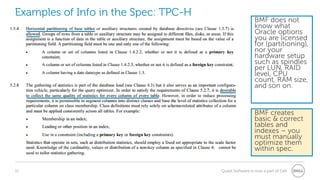

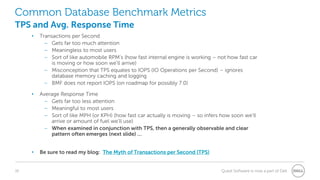

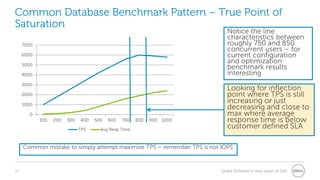

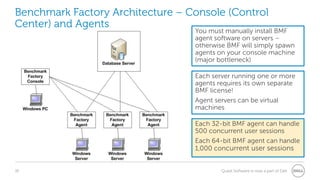

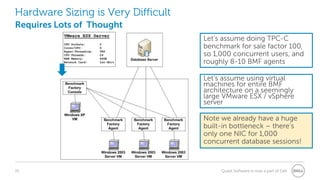

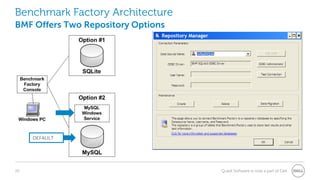

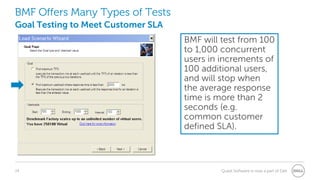

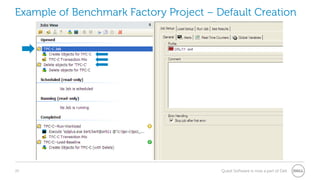

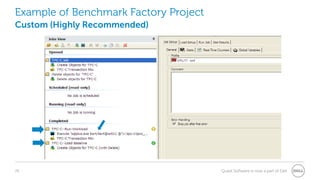

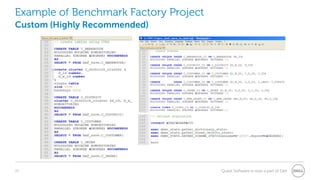

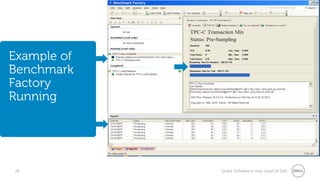

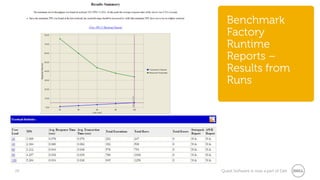

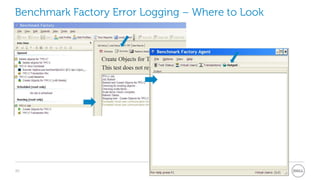

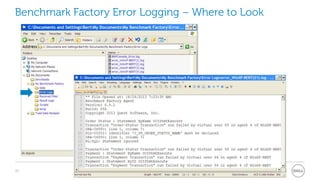

This document gives an overview of database benchmarking with Benchmark Factory. It stresses that avoiding common mistakes requires expertise in database management, familiarity with the tools, and careful preparation. It covers industry-standard benchmarks, metrics, and the architecture of Benchmark Factory, and emphasizes optimizing for average response time rather than only transactions per second. It also points to resources for further learning and best practices in benchmarking.
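
To illustrate why transactions per second alone can mislead, here is a minimal Python sketch. It is not part of Benchmark Factory and uses no Benchmark Factory API; the `summarize` helper and both latency samples are hypothetical, invented only to show that two runs can report the same throughput while their average response times differ by a factor of two.

```python
from statistics import mean


def summarize(latencies_ms, elapsed_s):
    """Return (transactions per second, average response time in ms).

    Hypothetical helper for illustration only.
    latencies_ms: per-transaction response times in milliseconds.
    elapsed_s: wall-clock length of the measurement window in seconds.
    """
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    if not latencies_ms:
        raise ValueError("latencies_ms must not be empty")
    tps = len(latencies_ms) / elapsed_s
    avg_ms = mean(latencies_ms)
    return tps, avg_ms


# Made-up samples: each run completes 8 transactions in a 2-second window,
# with transactions assumed to overlap (concurrent users).
steady = [100, 110, 90, 100, 105, 95, 100, 100]
spiky = [20, 20, 20, 20, 20, 20, 20, 1460]

steady_tps, steady_avg = summarize(steady, 2.0)
spiky_tps, spiky_avg = summarize(spiky, 2.0)

print(f"steady: {steady_tps} TPS, {steady_avg} ms average")
print(f"spiky:  {spiky_tps} TPS, {spiky_avg} ms average")
```

Both runs report 4.0 TPS, yet the second run's average response time is twice the first because of a single slow transaction. In a real test the figures would come from the benchmarking tool's own reports rather than hand-written lists.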