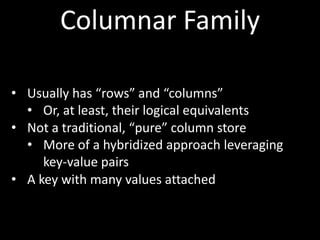

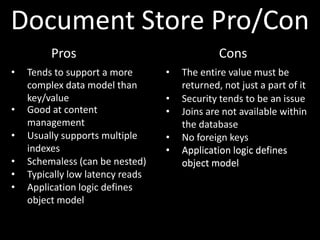

The document provides an overview of big data platforms and the concept of 'big data,' which refers to datasets too large for traditional database tools to manage effectively. It describes the types of big data platforms, focusing on NoSQL technologies, their advantages and disadvantages, and the CAP theorem, which states that a distributed data store can guarantee at most two of consistency, availability, and partition tolerance. The document also categorizes NoSQL approaches such as key-value stores, document stores, and columnar databases, detailing how each works and where it is used.