The document discusses approximation algorithms for solving hard combinatorial optimization problems. It defines optimization problems and covers NP-hard problems such as the clique, independent set, vertex cover, and traveling salesman problems. Approaches for solving NP-hard problems include exact algorithms, approximation algorithms that provide solutions of guaranteed quality, and heuristics without guarantees. Approximation algorithms give up on optimality and instead settle, in polynomial time, for solutions that are provably close to optimal.

![50

Approximation Algorithms

Jhoirene B Clemente

Optimization Problems

Hard Combinatorial Optimization Problems

Clique

Independent Set Problem

Vertex Cover

Approaches in Solving Hard Problems

Approximation Algorithms

Vertex Cover

Traveling Salesman Problem

References

CS 397

October 14, 2014

Optimization Problems

[Papadimitriou and Steiglitz, 1998]

Definition (Instance of an Optimization Problem)

An instance of an optimization problem is a pair (F, c), where F is any set, the domain of feasible points; c is the cost function, a mapping

$c : F \to \mathbb{R}$.

The problem is to find an $f \in F$ for which

$c(f) \le c(y) \quad \forall\, y \in F$.

Such a point f is called a globally optimal solution to the given instance, or, when no confusion can arise, simply an optimal solution.](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-3-320.jpg)
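When F is finite, the definition can be checked directly by exhaustive search. The following Python sketch is illustrative only: the function names and the toy cost function are hypothetical, and exhaustive search is practical only for tiny domains, since combinatorial domains typically grow exponentially with the input size.

```python
from typing import Callable, Iterable, TypeVar

T = TypeVar("T")


def global_optimum(F: Iterable[T], c: Callable[[T], float]) -> T:
    """Return some f in F with c(f) <= c(y) for every y in F (exhaustive search)."""
    return min(F, key=c)


def is_globally_optimal(f: T, F: Iterable[T], c: Callable[[T], float]) -> bool:
    """Check the defining condition c(f) <= c(y) for all y in F."""
    return all(c(f) <= c(y) for y in F)


# Hypothetical toy instance: F = {0, 1, ..., 10}, c(x) = (x - 3)^2.
F = range(11)
c = lambda x: (x - 3) ** 2
best = global_optimum(F, c)
print(best)                             # 3
print(is_globally_optimal(best, F, c))  # True
```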

![50

Definition (Optimization Problem)

An optimization problem is a set of instances of an optimization

problem.](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-4-320.jpg)

![50

Optimization Problems

[Papadimitriou and Steiglitz, 1998]

Optimization problems fall into two categories:

1. those with continuous variables, where we look for a set of real numbers or a function;

2. those with discrete variables, which we call combinatorial, where we look for an object from a finite, or possibly countably infinite, set, typically an integer, set, permutation, or graph.](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-5-320.jpg)

![50

Combinatorial Optimization Problem

Definition (Combinatorial Optimization Problem [Papadimitriou and Steiglitz, 1998])

An optimization problem, written as the tuple (D, R, cost, goal), consists of

1. A set of valid instances D. Let $I \in D$ denote an input instance.

2. For each $I \in D$, a set of feasible solutions $R(I)$.

3. An objective function, cost, that assigns a nonnegative rational number to each pair (I, SOL), where I is an instance and SOL is a feasible solution to I.

4. A goal stating whether it is a minimization or a maximization problem: $goal \in \{\min, \max\}$.](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-6-320.jpg)
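The four components can be mirrored in code. This is a minimal Python sketch under assumptions: the class name `OptimizationProblem`, its field names, and the representation of D as a membership test are hypothetical, and the toy problem (choose items whose total weight stays within a capacity, maximizing that total) is an illustrative example, not one taken from the slides.

```python
from dataclasses import dataclass
from itertools import combinations
from typing import Callable, Generic, Iterable, Iterator, Literal, Tuple, TypeVar

I = TypeVar("I")  # instance type
S = TypeVar("S")  # solution type


@dataclass
class OptimizationProblem(Generic[I, S]):
    """Hypothetical encoding of the tuple (D, R, cost, goal)."""
    valid: Callable[[I], bool]            # membership test for the instance set D
    feasible: Callable[[I], Iterable[S]]  # R(I): the feasible solutions of I
    cost: Callable[[I, S], float]         # cost(I, SOL), a nonnegative number
    goal: Literal["min", "max"]

    def solve_exactly(self, inst: I) -> S:
        """Exhaustively search R(I); exponential time for typical combinatorial R(I)."""
        if not self.valid(inst):
            raise ValueError("instance is not in D")
        pick = min if self.goal == "min" else max
        return pick(self.feasible(inst), key=lambda sol: self.cost(inst, sol))


Instance = Tuple[Tuple[int, ...], int]  # (item weights, capacity)


def subsets_within_capacity(inst: Instance) -> Iterator[Tuple[int, ...]]:
    """Feasible solutions: index subsets whose total weight does not exceed the capacity."""
    weights, cap = inst
    for k in range(len(weights) + 1):
        for idx in combinations(range(len(weights)), k):
            if sum(weights[i] for i in idx) <= cap:
                yield idx


# Toy problem (illustrative only): pick items to maximize total weight within capacity.
toy = OptimizationProblem(
    valid=lambda inst: inst[1] >= 0 and all(w >= 0 for w in inst[0]),
    feasible=subsets_within_capacity,
    cost=lambda inst, idx: sum(inst[0][i] for i in idx),
    goal="max",
)
print(toy.solve_exactly(((4, 5, 6), 10)))  # (0, 2)
```

The exhaustive `solve_exactly` is an exact algorithm in the sense used later in the deck; on hard problems its running time is what makes approximation attractive.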

![50

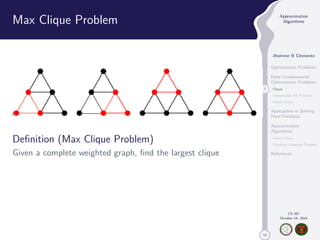

Max Clique Problem

Definition (Max Clique Problem)

Given an undirected graph G = (V, E), find a clique (a set of pairwise adjacent vertices) of maximum cardinality.

Theorem

Max Clique is NP-hard [Garey and Johnson, 1979].](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-9-320.jpg)
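To make the definition concrete, and to show why exact search is expensive, the following Python sketch finds a maximum clique by checking vertex subsets from largest to smallest. It is an illustration, not an efficient algorithm: it may examine up to 2^|V| subsets, which is consistent with the problem being NP-hard. The function names and the example graph are hypothetical.

```python
from itertools import combinations


def is_clique(vertices, edges):
    """True if every pair of the given vertices is joined by an edge.

    `edges` is a set of frozensets {u, v}.
    """
    return all(frozenset((u, v)) in edges for u, v in combinations(vertices, 2))


def max_clique(V, E):
    """Exact maximum clique by trying subsets from largest to smallest (exponential)."""
    edges = {frozenset(e) for e in E}
    for k in range(len(V), 0, -1):
        for S in combinations(V, k):
            if is_clique(S, edges):
                return set(S)
    return set()


# Hypothetical example: a triangle {1, 2, 3} plus a pendant vertex 4 attached to 3.
V = [1, 2, 3, 4]
E = [(1, 2), (2, 3), (1, 3), (3, 4)]
print(max_clique(V, E))  # {1, 2, 3}
```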

![50

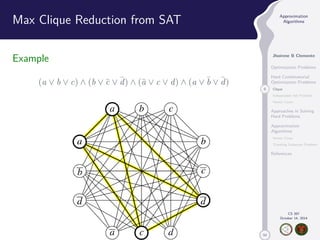

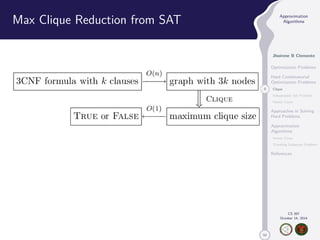

Proposition

The decision variant of MAX-SAT is NP-Complete

[Garey and Johnson, 1979].](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-10-320.jpg)

![50

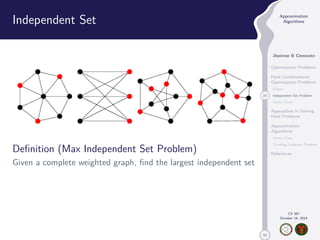

Independent Set

Definition (Max Independent Set Problem)

Given an undirected graph G = (V, E), find an independent set (a set of pairwise non-adjacent vertices) of maximum cardinality.

Theorem

Independent Set Problem is NP-hard [Garey and Johnson, 1979].](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-14-320.jpg)
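A set S is independent in G exactly when S is a clique in the complement graph of G (same vertices, with an edge wherever G has none), so Max Independent Set and Max Clique are essentially the same problem. The Python sketch below finds a maximum independent set by exhaustive search on a small hypothetical graph and then checks this correspondence; the helper names are illustrative.

```python
from itertools import combinations


def is_independent(S, edges):
    """True if no two vertices of S are joined by an edge (edges: set of frozensets)."""
    return all(frozenset((u, v)) not in edges for u, v in combinations(S, 2))


def complement_edges(V, edges):
    """Edge set of the complement graph: all non-adjacent pairs of distinct vertices."""
    return {frozenset(p) for p in combinations(V, 2)} - edges


def max_independent_set(V, E):
    """Exact maximum independent set by exhaustive search (exponential time)."""
    edges = {frozenset(e) for e in E}
    for k in range(len(V), 0, -1):
        for S in combinations(V, k):
            if is_independent(S, edges):
                return set(S)
    return set()


# Hypothetical example: the path 1-2-3-4.
V = [1, 2, 3, 4]
E = [(1, 2), (2, 3), (3, 4)]
S = max_independent_set(V, E)
print(S)  # {1, 3}
# S is independent in G exactly when it is a clique in the complement of G.
comp = complement_edges(V, {frozenset(e) for e in E})
print(all(frozenset(p) in comp for p in combinations(S, 2)))  # True
```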

![50

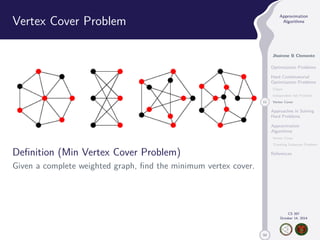

Vertex Cover Problem

Definition (Min Vertex Cover Problem)

Given an undirected graph G = (V, E), find a vertex cover of minimum cardinality.

Theorem

Vertex Cover Problem is NP-hard [Garey and Johnson, 1979].](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-16-320.jpg)
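Vertex cover (a set of vertices that touches every edge) is the complementary notion to independent set: C is a vertex cover of G exactly when V \ C is an independent set, so a minimum vertex cover is the complement of a maximum independent set. The Python sketch below checks this on a small hypothetical path graph; the helper names are illustrative and the search is exhaustive, so it is only meant for tiny graphs.

```python
from itertools import combinations


def is_vertex_cover(C, E):
    """True if every edge (u, v) has at least one endpoint in C."""
    return all(u in C or v in C for u, v in E)


def is_independent(S, E):
    """True if no edge has both endpoints in S."""
    return not any(u in S and v in S for u, v in E)


def min_vertex_cover(V, E):
    """Exact minimum vertex cover: try subsets from smallest to largest (exponential)."""
    for k in range(len(V) + 1):
        for C in combinations(V, k):
            if is_vertex_cover(set(C), E):
                return set(C)
    return set(V)  # not reached when every edge endpoint belongs to V


# Hypothetical example: the path 1-2-3-4.
V = [1, 2, 3, 4]
E = [(1, 2), (2, 3), (3, 4)]
C = min_vertex_cover(V, E)
print(C)                              # {1, 3}
print(is_independent(set(V) - C, E))  # True: the complement {2, 4} is independent
```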

![50

Approximation Algorithms

Definition (Approximation Algorithm [Williamson and Shmoys, 2010])

An α-approximation algorithm for an optimization problem is a polynomial-time algorithm that, for all instances of the problem, produces a solution whose value is within a factor of α of the value of an optimal solution.

Let I be a problem instance and let Opt(I) denote an optimal solution for I, i.e., a feasible solution for which cost(Opt(I)) is minimum (for a minimization problem) or maximum (for a maximization problem).](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-21-320.jpg)

![50

- An algorithm for a minimization problem is called an α-approximation algorithm, for some α ≥ 1, if for every input instance I it obtains a solution of cost at most α · cost(Opt(I)).](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-22-320.jpg)

![50

- An algorithm for a maximization problem is called an α-approximation algorithm, for some α ≤ 1, if for every input instance I it obtains a solution of cost at least α · cost(Opt(I)).](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-23-320.jpg)
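Because the guarantee must hold for every instance, testing can only refute it, never prove it; still, checking it on individual instances is a useful sanity test when experimenting with an algorithm. A minimal Python sketch, assuming the optimal cost is already known (for example, from an exhaustive search on a small instance); the function name `meets_guarantee` is hypothetical.

```python
def meets_guarantee(alg_cost: float, opt_cost: float, alpha: float, goal: str) -> bool:
    """Check the alpha-approximation condition on a single instance.

    Minimization (alpha >= 1): alg_cost <= alpha * opt_cost.
    Maximization (alpha <= 1): alg_cost >= alpha * opt_cost.
    """
    if goal == "min":
        if alpha < 1:
            raise ValueError("minimization needs alpha >= 1")
        return alg_cost <= alpha * opt_cost
    if goal == "max":
        if alpha > 1:
            raise ValueError("maximization needs alpha <= 1")
        return alg_cost >= alpha * opt_cost
    raise ValueError("goal must be 'min' or 'max'")


print(meets_guarantee(9, 5, 2, "min"))       # True: 9 <= 2 * 5
print(meets_guarantee(11, 5, 2, "min"))      # False: 11 > 10
print(meets_guarantee(70, 100, 0.5, "max"))  # True: 70 >= 50
```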

![50

Example: Vertex Cover Problem

Definition (Vertex Cover [Vazirani, 2001] )

Given a graph G = (V, E), a vertex cover is a subset $C \subseteq V$ such that every edge has at least one endpoint in C.](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-26-320.jpg)

![50

Definition (Minimum Vertex Cover Problem)

Given a graph G = (V, E), find a vertex cover C of minimum cardinality.](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-28-320.jpg)
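The 2-approximation for Vertex Cover credited to [Vazirani, 2001] later in this deck is, in that reference, based on a maximal matching: output both endpoints of every edge in a maximal matching M. Since the edges of M share no endpoints, any vertex cover needs at least |M| vertices, so the output has size 2|M| ≤ 2 · OPT. A minimal Python sketch of that idea follows; the function name, the edge-list input, and the single greedy pass used to build the maximal matching are our assumptions.

```python
def vertex_cover_2approx(E):
    """Maximal-matching heuristic: take both endpoints of each still-uncovered edge.

    The edges picked form a maximal matching M, so the cover has size 2|M| <= 2 * OPT.
    """
    cover = set()
    for u, v in E:
        if u not in cover and v not in cover:  # edge (u, v) is still uncovered
            cover.add(u)
            cover.add(v)
    return cover


# Hypothetical example: a star with centre 0 and leaves 1..4 (the optimum is {0}).
star = [(0, 1), (0, 2), (0, 3), (0, 4)]
C = vertex_cover_2approx(star)
print(sorted(C))                               # [0, 1]
print(all(u in C or v in C for u, v in star))  # True: C is a vertex cover
```

On the star the sketch returns two vertices against an optimum of one, so the factor of 2 can actually be reached.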

![50

Approximable Problems [Vazirani, 2001]

Definition (APX)

- APX, an abbreviation for “Approximable”, is the set of NP optimization problems that admit polynomial-time approximation algorithms whose approximation ratio is bounded by a constant.](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-58-320.jpg)

![50

- Problems in this class have efficient algorithms that find an answer within some fixed constant factor of the optimal answer.](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-59-320.jpg)

![50

Polynomial-time Approximation Schemes

Definition (PTAS [Aaronson et al., 2008])

The subclass of NPO problems that admit an approximation

scheme in the following sense.

For any $\varepsilon > 0$, there is a polynomial-time algorithm that is guaranteed to find a solution whose cost is within a $1 + \varepsilon$ factor of the optimum cost. PTAS contains FPTAS and is contained in APX.](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-60-320.jpg)

![50

Definition (FPTAS [Aaronson et al., 2008])

The subclass of NPO problems that admit an approximation scheme in the following sense.

For any $\varepsilon > 0$, there is an algorithm that is guaranteed to find a solution whose cost is within a $1 + \varepsilon$ factor of the optimum cost. Furthermore, the running time of the algorithm is polynomial in n (the size of the problem) and in $1/\varepsilon$.](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-61-320.jpg)

![50

Approximation Ratios of Well-Known Hard Problems

1. Asymptotic FPTAS: Bin Packing Problem

2. PTAS: Makespan Scheduling Problem

3. APX:

3.1 Min Steiner Tree Problem (1.55 Approximable) [Robins, 2005]

3.2 Min Metric TSP (3/2 Approximable) [Christofides, 1977]

3.3 Max SAT (0.77 Approximable) [Asano, 1997]

3.4 Vertex Cover (2 Approximable) [Vazirani, 2001]

4. MAX SNP-hard:

4.1 Independent Set Problem

4.2 Clique Problem

4.3 Traveling Salesman Problem](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-62-320.jpg)

![50

Complexity Classes [Ausiello et al., 2011]

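$\text{FPTAS} \subsetneq \text{PTAS} \subsetneq \text{APX} \subsetneq \text{NPO}$ (the inclusions are proper assuming P ≠ NP)](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-63-320.jpg)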

![50

Inapproximable Problems

Many problems have polynomial-time approximation schemes.

However, there exists a class of problems that is not so easy [Williamson and Shmoys, 2010]. This class is called MAX SNP.

Theorem

For any MAX SNP-hard problem, there does not exist a polynomial-time approximation scheme, unless P = NP [Williamson and Shmoys, 2010].](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-64-320.jpg)

![50

Theorem

If P ≠ NP, then for any constant α ≥ 1, there is no polynomial-time approximation algorithm with approximation ratio α for the general traveling salesman problem.](https://image.slidesharecdn.com/approx-141023022738-conversion-gate01/85/Introduction-to-Approximation-Algorithms-66-320.jpg)
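This theorem is usually proved by a gap reduction from the Hamiltonian cycle problem, an argument the slide does not spell out. Give every edge of an n-vertex graph G weight 1 and every non-edge weight α · n. If G has a Hamiltonian cycle, the optimal tour costs exactly n. Otherwise every tour uses at least one non-edge and costs more than α · n. An α-approximation algorithm would therefore decide Hamiltonicity in polynomial time. The Python sketch below only checks the two cases by brute force on hypothetical 4-vertex graphs; the function names are illustrative.

```python
from itertools import permutations


def tsp_instance_from_graph(n, edges, alpha):
    """Gap construction: weight 1 on edges of G, weight alpha * n on non-edges."""
    E = {frozenset(e) for e in edges}
    return [[0 if i == j else (1 if frozenset((i, j)) in E else alpha * n)
             for j in range(n)] for i in range(n)]


def optimal_tour_cost(w):
    """Exact TSP by brute force over all tours that start at vertex 0 (tiny n only)."""
    n = len(w)
    best = float("inf")
    for perm in permutations(range(1, n)):
        tour = (0,) + perm
        best = min(best, sum(w[tour[k]][tour[(k + 1) % n]] for k in range(n)))
    return best


alpha, n = 2, 4
cycle = [(0, 1), (1, 2), (2, 3), (3, 0)]  # Hamiltonian: the optimum is exactly n
path = [(0, 1), (1, 2), (2, 3)]           # not Hamiltonian: every tour uses a non-edge
print(optimal_tour_cost(tsp_instance_from_graph(n, cycle, alpha)))  # 4
print(optimal_tour_cost(tsp_instance_from_graph(n, path, alpha)))   # 11, above alpha * n = 8
```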