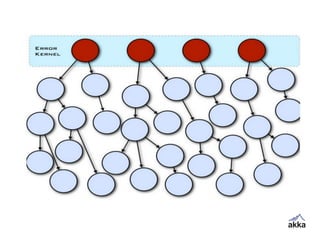

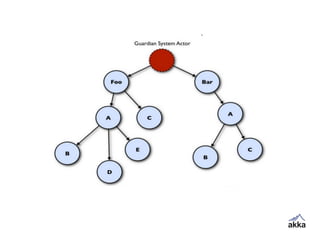

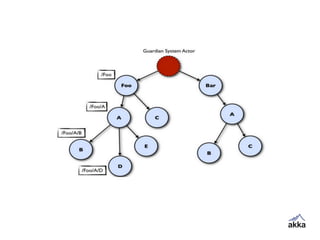

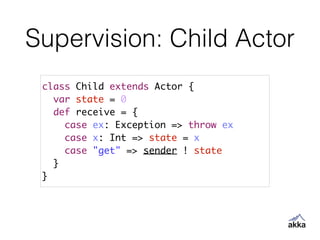

Akka is a toolkit for building highly concurrent, distributed, and fault-tolerant applications on the JVM. It provides actors as the fundamental unit of concurrency. Actors receive messages asynchronously and process them one at a time by applying behaviors. Akka uses a supervision hierarchy in which parent actors monitor their child actors and handle failures through configurable strategies such as restart or stop. Failure handling therefore lives in the supervisor rather than in the message-processing code, which keeps the two concerns separate, unlike traditional approaches that mix them.

![Create Actor](https://image.slidesharecdn.com/introducingakka-140628092634-phpapp02/85/Introducing-Akka-23-320.jpg)

```scala
package com.meetu.akka

import akka.actor._

object HelloWorldAkkaApplication extends App {
  // Create an actor system.
  val system = ActorSystem("myfirstApp")
  // actorOf returns an ActorRef, not the Actor instance itself.
  val myFirstActor: ActorRef = system.actorOf(Props[MyFirstActor])
  // ……..
}
```

- Create an actor system.
- Create an actor from the actor system using the `actorOf` method.
- The `actorOf` method returns an `ActorRef` instead of the Actor class type.
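The slide refers to `MyFirstActor` without showing its definition. A minimal sketch of what such an actor could look like with the classic Akka API follows; the message handling is an assumption for illustration, not the presenter's code.

```scala
import akka.actor.{Actor, ActorLogging}

// Hypothetical definition: the deck never shows MyFirstActor.
class MyFirstActor extends Actor with ActorLogging {
  // receive is a PartialFunction[Any, Unit]; messages are matched one at a time.
  def receive: Receive = {
    case text: String => log.info("Received text: {}", text)
    case other        => log.warning("Unexpected message: {}", other)
  }
}
```

Because callers only ever hold an `ActorRef`, the instance behind it can be restarted or replaced without the callers noticing.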

![Send Message](https://image.slidesharecdn.com/introducingakka-140628092634-phpapp02/85/Introducing-Akka-25-320.jpg)

```scala
package com.meetu.akka

import akka.actor._

object HelloWorldAkkaApplication extends App {
  val system = ActorSystem("myfirstApp")
  val myFirstActor: ActorRef = system.actorOf(Props[MyFirstActor])

  // Operator form: fire-and-forget send.
  myFirstActor ! "Hello World"
  // Equivalent explicit method call.
  myFirstActor.!("Hello World")
}
```

- The Scala API has a method named `!`.
- The send is asynchronous: the thread of execution continues immediately after sending.
- It accepts `Any` as a parameter.
- In Scala the dot can be replaced with a space, so the operator form feels natural to use.

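![Ask Pattern](https://image.slidesharecdn.com/introducingakka-140628092634-phpapp02/85/Introducing-Akka-26-320.jpg)

```scala
package com.meetu.akka

import akka.actor._
import akka.pattern.ask
import akka.util.Timeout
import scala.concurrent.duration._
import scala.concurrent.Await
import scala.concurrent.Future

object AskPatternApp extends App {
  // Implicit timeout required by `?`; the Future fails if no reply arrives in time.
  implicit val timeout: Timeout = Timeout(500.millis)
  val system = ActorSystem("BlockingApp")
  val echoActor = system.actorOf(Props[EchoActor])

  // `?` returns immediately with a Future of the reply.
  val future: Future[Any] = echoActor ? "Hello"
  // Await.result blocks the calling thread until the reply or the timeout.
  val message = Await.result(future, timeout.duration).asInstanceOf[String]

  println(message)
}

class EchoActor extends Actor {
  def receive = {
    // Echo every message back to whoever sent it.
    case msg => sender() ! msg
  }
}
```

- As written, this use of the ask pattern is blocking: `?` itself returns a `Future` immediately, but `Await.result` makes the calling thread wait.
- The thread of execution waits until the response arrives or the timeout expires.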
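The blocking in the ask example comes from `Await.result`, not from `?`, which returns a `Future` right away. A sketch of handling the reply without blocking, using the same echo actor, might look like the following. The names `NonBlockingAskApp` and `NonBlockingApp` are made up for this example, and `Props(new EchoActor)` is used in place of the slide's `Props[EchoActor]` only because it compiles unchanged across Scala versions.

```scala
import akka.actor.{Actor, ActorSystem, Props}
import akka.pattern.ask
import akka.util.Timeout
import scala.concurrent.duration._
import scala.util.{Failure, Success}

// Same behavior as the slide's actor: reply with whatever was received.
class EchoActor extends Actor {
  def receive: Receive = {
    case msg => sender() ! msg
  }
}

// Hypothetical application object, not part of the original deck.
object NonBlockingAskApp extends App {
  implicit val timeout: Timeout = Timeout(500.millis)
  val system = ActorSystem("NonBlockingApp")
  import system.dispatcher // ExecutionContext that runs the Future callbacks

  val echoActor = system.actorOf(Props(new EchoActor))

  // mapTo yields a typed Future[String] instead of casting with asInstanceOf.
  (echoActor ? "Hello").mapTo[String].onComplete {
    case Success(message) =>
      println(message)
      system.terminate()
    case Failure(error) =>
      println(s"Ask failed: $error")
      system.terminate()
  }
}
```

The main thread is free as soon as the callback is registered; the reply (or the timeout failure) is handled later on the dispatcher.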

![Round Robin Router](https://image.slidesharecdn.com/introducingakka-140628092634-phpapp02/85/Introducing-Akka-29-320.jpg)

```scala
import akka.actor._
import akka.routing.RoundRobinPool
import akka.routing.Broadcast

object RouterApp extends App {
  val system = ActorSystem("routerApp")
  // Router that creates and manages a pool of five worker routees.
  val router = system.actorOf(RoundRobinPool(5).props(Props[RouterWorkerActor]), "workers")
  // Broadcast delivers the wrapped message to every routee.
  router ! Broadcast("Hello")
}

class RouterWorkerActor extends Actor {
  def receive = {
    case msg => println(s"Message: $msg received in ${self.path}")
  }
}
```

- A router sits on top of its routees.
- A plain message sent to the router is delivered to one routee, chosen in round-robin order. The `Broadcast("Hello")` envelope used here is the exception: it delivers the message to all five routees.

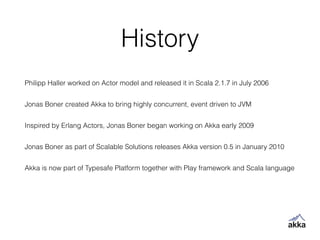

![Supervision Application](https://image.slidesharecdn.com/introducingakka-140628092634-phpapp02/85/Introducing-Akka-42-320.jpg)

```scala
import akka.actor._
import akka.pattern.ask
import akka.util.Timeout
import scala.concurrent.Await
import scala.concurrent.duration._

object SupervisionExampleApp extends App {
  implicit val timeout: Timeout = Timeout(50000.milliseconds)
  val system = ActorSystem("supervisionExample")
  val supervisor = system.actorOf(Props[Supervisor], "supervisor")

  // Ask the supervisor to create a child from the given Props and return its ActorRef.
  val future = supervisor ? Props[Child]
  val child = Await.result(future, timeout.duration).asInstanceOf[ActorRef]

  child ! 42
  println("Normal response " + Await.result(child ? "get", timeout.duration).asInstanceOf[Int])

  // Sending an exception makes the child fail, which triggers the supervisor's strategy.
  child ! new ArithmeticException
  println("Arithmetic Exception response " + Await.result(child ? "get", timeout.duration).asInstanceOf[Int])

  child ! new NullPointerException
  println("Null Pointer response " + Await.result(child ? "get", timeout.duration).asInstanceOf[Int])
}
```
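The application above relies on `Supervisor` and `Child` actors that this slide does not show. The sketch below is modeled on the fault-tolerance sample in the classic Akka documentation; the mapping of exceptions to directives is an assumption, not necessarily what the presenter used.

```scala
import akka.actor.{Actor, OneForOneStrategy, Props}
import akka.actor.SupervisorStrategy.{Escalate, Restart, Resume, Stop}
import scala.concurrent.duration._

// Assumed supervisor: creates children on request and decides how to handle their failures.
class Supervisor extends Actor {
  override val supervisorStrategy =
    OneForOneStrategy(maxNrOfRetries = 10, withinTimeRange = 1.minute) {
      case _: ArithmeticException      => Resume   // keep the child and its state
      case _: NullPointerException     => Restart  // replace the child with a fresh instance
      case _: IllegalArgumentException => Stop     // terminate the child
      case _: Exception                => Escalate // hand the failure to this actor's parent
    }

  def receive: Receive = {
    // Create a child from the received Props and reply with its ActorRef.
    case props: Props => sender() ! context.actorOf(props)
  }
}

// Assumed child: stores an Int, returns it on "get", and throws any exception it is sent.
class Child extends Actor {
  private var state = 0

  def receive: Receive = {
    case ex: Exception => throw ex
    case x: Int        => state = x
    case "get"         => sender() ! state
  }
}
```

Under this assumed strategy, the first two responses are 42, because `Resume` leaves the child's state untouched, and the response after the `NullPointerException` is 0, because `Restart` replaces the child with a fresh instance whose state starts at 0.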