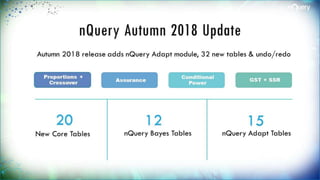

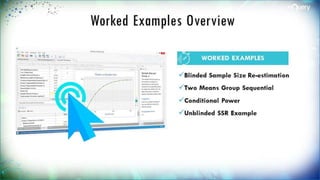

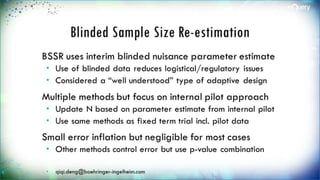

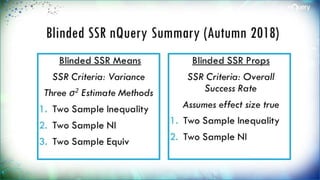

The document discusses adaptive trial designs, specifically focusing on sample size re-estimation (SSR) and its implications for clinical trials. It highlights the advantages and disadvantages of adaptive trials, the regulatory background supporting them, and the methodology behind blinded and unblinded SSR. The document also outlines recent updates to sample size calculation tools, emphasizing their widespread acceptance and expected growth in use.

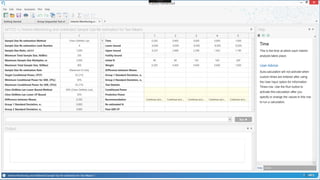

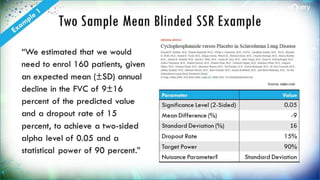

![Two Sample Proportion BSSR

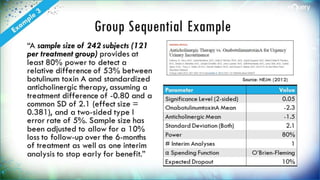

Example

“For our sample size

calculations, we assumed that

20% of women in the control arm

would be using LARC after their

6-week postpartum visit, … An

analysis population with at least

626 women (313 in each arm)

was required to provide 80%

power (using a two-sided alpha

of 0.05) to detect an absolute

10% increase to 30% in LARC use

in the intervention arm [14].

Anticipating a maximum drop-

out rate of 20% at the time of

Follow-Up Survey #2, we planned

to randomize 800 participants”

Source: Contraception

Parameter Value

Significance Level (2-

Sided)

0.05

Control Rate 0.2

Intervention Rate 0.3

n per Group 313

Target Power (%) 80%

Nuisance Parameter Overall Success

Rate](https://image.slidesharecdn.com/innovativesamplesizemethodsforadaptiveclinicaltrialswebinar-webversion0-180926141725/85/Innovative-sample-size-methods-for-adaptive-clinical-trials-webinar-web-version-0-1-16-320.jpg)
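The arithmetic behind this example can be checked with a short sketch. The source does not say which formula the investigators used. The figure of 313 per group is reproduced by the usual normal-approximation formula for two proportions (pooled variance under the null, unpooled under the alternative) with the Fleiss continuity correction, so that is what the sketch assumes. The blinded re-estimation step follows one common approach: hold the assumed absolute difference (10 percentage points of LARC, i.e. long-acting reversible contraception, use) fixed and update only the nuisance parameter, the overall (pooled) success rate, from blinded interim data. The function names `n_per_group`, `inflate_for_dropout` and `blinded_reestimate`, and the interim pooled rate of 30%, are hypothetical illustrations, not the webinar's software or data.

```python
import math
from statistics import NormalDist


def n_per_group(p_control: float, p_treat: float, alpha: float = 0.05,
                power: float = 0.80, continuity: bool = True) -> int:
    """Per-group n for a two-sided two-proportion test, equal allocation.

    Assumed method: normal approximation with pooled variance under H0 and
    unpooled variance under H1, optionally with the Fleiss continuity correction.
    """
    z_a = NormalDist().inv_cdf(1 - alpha / 2)  # critical value, two-sided
    z_b = NormalDist().inv_cdf(power)          # quantile for target power
    delta = abs(p_treat - p_control)
    p_bar = (p_control + p_treat) / 2          # pooled rate under H0
    n = (z_a * math.sqrt(2 * p_bar * (1 - p_bar))
         + z_b * math.sqrt(p_control * (1 - p_control)
                           + p_treat * (1 - p_treat))) ** 2 / delta ** 2
    if continuity:
        # Fleiss continuity correction applied to the uncorrected n
        n = n / 4 * (1 + math.sqrt(1 + 4 / (n * delta))) ** 2
    return math.ceil(n)


def inflate_for_dropout(n_total: int, dropout: float) -> int:
    """Hypothetical helper: number to randomize so n_total remain after dropout."""
    return math.ceil(n_total / (1 - dropout))


def blinded_reestimate(pooled_rate: float, delta: float, **kwargs) -> int:
    """Hypothetical blinded SSR step.

    Only the nuisance parameter (overall success rate, estimated from pooled
    blinded data) is updated; the assumed difference delta is held fixed.
    """
    p_control = pooled_rate - delta / 2
    p_treat = pooled_rate + delta / 2
    return n_per_group(p_control, p_treat, **kwargs)


n = n_per_group(0.20, 0.30)                # planning assumptions from the quote
total = 2 * n
print(n, total)                            # 313 626
print(inflate_for_dropout(total, 0.20))    # 783

# Hypothetical interim look: blinded pooled LARC rate of 30% instead of 25%
print(blinded_reestimate(0.30, 0.10))
```

Under these assumptions the dropout inflation gives 783, so the 800 participants the investigators planned to randomize sits slightly above the bare arithmetic. A pooled rate closer to 50% increases the binomial variance, which is why a blinded interim estimate of 30% pushes the re-estimated n above 313 even though the treatment effect assumption is unchanged.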