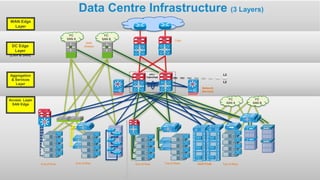

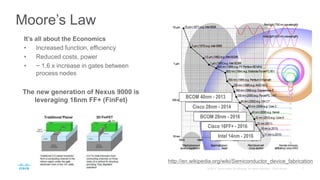

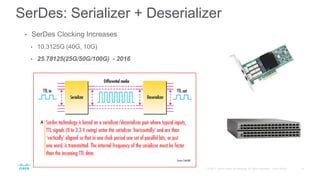

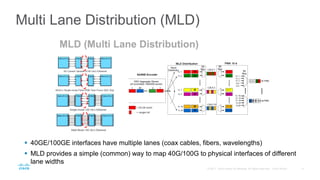

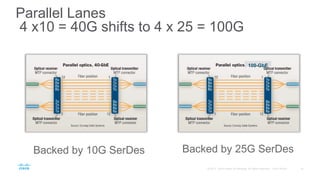

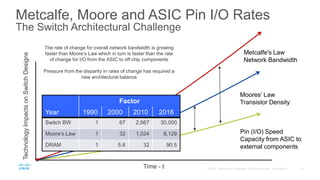

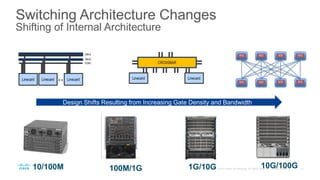

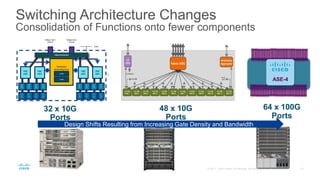

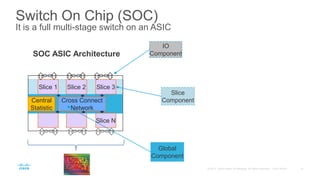

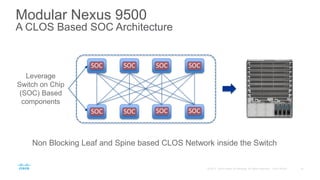

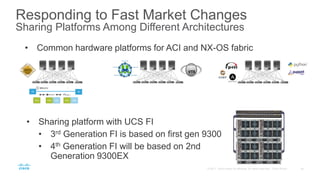

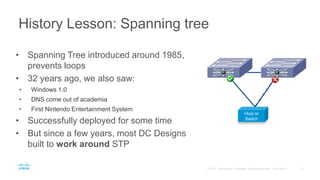

The document provides an overview of Cisco's physical infrastructure technology for data centers. It describes the three layers of data center infrastructure: the core, aggregation/services, and access layers. It then covers Moore's law, serializer/deserializer (SerDes) technology, multi-lane distribution, changes in switching architecture, switch-on-a-chip (SoC) architectures, and modular switch platforms. Its aim is to explain how Cisco has adapted its data center switching technology to the challenge of bandwidth demand that grows exponentially over time.