VXLAN BGP EVPN combines a VXLAN data plane with a BGP EVPN control plane to build multi-tenant IP fabrics. The document covers VXLAN and EVPN concepts and acronyms, and provides sample configurations and outputs for a VXLAN BGP EVPN setup on Arista switches. Key topics include VTEPs, VNIs, EVPN instances, MAC learning in the control plane, and the advantages of EVPN over traditional (flood-and-learn) VXLAN.

![VXLAN – SAMPLE OUTPUTS

aris-lf1#sh ip route 10.1.1.4

VRF: default

Codes: C - connected, S - static, K - kernel,

O - OSPF, IA - OSPF inter area, E1 - OSPF external type 1,

E2 - OSPF external type 2, N1 - OSPF NSSA external type 1,

N2 - OSPF NSSA external type2, B I - iBGP, B E - eBGP,

R - RIP, I L1 - IS-IS level 1, I L2 - IS-IS level 2,

O3 - OSPFv3, A B - BGP Aggregate, A O - OSPF Summary,

NG - Nexthop Group Static Route, V - VXLAN Control Service,

DH - Dhcp client installed default route

O 10.1.1.4/32 [110/30] via 10.10.10.4, Ethernet2

aris-lf1#

aris-lf2#sh ip route 10.1.1.3

VRF: default

Codes: C - connected, S - static, K - kernel,

O - OSPF, IA - OSPF inter area, E1 - OSPF external type 1,

E2 - OSPF external type 2, N1 - OSPF NSSA external type 1,

N2 - OSPF NSSA external type2, B I - iBGP, B E - eBGP,

R - RIP, I L1 - IS-IS level 1, I L2 - IS-IS level 2,

O3 - OSPFv3, A B - BGP Aggregate, A O - OSPF Summary,

NG - Nexthop Group Static Route, V - VXLAN Control Service,

DH - Dhcp client installed default route

O 10.1.1.3/32 [110/30] via 10.10.10.8, Ethernet2

aris-lf2#](https://image.slidesharecdn.com/xpresspathvxlanbgpevpnappricot2019-v2-190227114221/85/Xpress-path-vxlan_bgp_evpn_appricot2019-v2_-16-320.jpg)
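Each route line in the output above follows the pattern `code prefix [distance/metric] via next-hop, interface`. As a rough illustration of how to read it, the sketch below parses one such line in Python. The `Route` type and the `parse_route` helper are hypothetical names introduced here, and the regular expression only covers the single-next-hop form shown on this slide, not every format EOS can print.

```python
import re
from typing import NamedTuple, Optional


class Route(NamedTuple):
    """One parsed route line (hypothetical helper type, not an Arista API)."""
    code: str        # e.g. "O" for OSPF, "B E" for eBGP
    prefix: str      # e.g. "10.1.1.4/32"
    distance: int    # administrative distance
    metric: int      # protocol metric
    next_hop: str
    interface: str


# Assumed line shape: "<code> <prefix> [<distance>/<metric>] via <ip>, <intf>"
ROUTE_RE = re.compile(
    r"^\s*(?P<code>[A-Z][A-Z0-9 ]*?)\s+"
    r"(?P<prefix>\d+\.\d+\.\d+\.\d+/\d+)\s+"
    r"\[(?P<distance>\d+)/(?P<metric>\d+)\]\s+"
    r"via\s+(?P<next_hop>\d+\.\d+\.\d+\.\d+),\s*"
    r"(?P<interface>\S+)\s*$"
)


def parse_route(line: str) -> Optional[Route]:
    """Return a Route for a matching line, or None for legend/prompt lines."""
    m = ROUTE_RE.match(line)
    if m is None:
        return None
    return Route(
        code=m.group("code"),
        prefix=m.group("prefix"),
        distance=int(m.group("distance")),
        metric=int(m.group("metric")),
        next_hop=m.group("next_hop"),
        interface=m.group("interface"),
    )


route = parse_route("O 10.1.1.4/32 [110/30] via 10.10.10.4, Ethernet2")
print(route)
```

Here `[110/30]` reads as administrative distance 110 (the OSPF default) with an OSPF metric of 30 toward the remote loopback.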

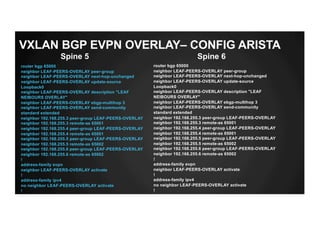

![DC1-LF9#sh ip route bgp

VRF: default

Codes: C - connected, S - static, K - kernel,

O - OSPF, IA - OSPF inter area, E1 - OSPF external type 1,

E2 - OSPF external type 2, N1 - OSPF NSSA external type 1,

N2 - OSPF NSSA external type2, B I - iBGP, B E - eBGP,

R - RIP, I L1 - IS-IS level 1, I L2 - IS-IS level 2,

O3 - OSPFv3, A B - BGP Aggregate, A O - OSPF Summary,

NG - Nexthop Group Static Route, V - VXLAN Control Service,

DH - Dhcp client installed default route

B E 172.16.255.10/32 [20/0] via 10.10.10.1, Ethernet1

via 10.10.10.3, Ethernet2

B E 172.168.254.11/32 [20/0] via 10.10.10.1, Ethernet1

via 10.10.10.3, Ethernet2

B E 172.168.255.11/32 [20/0] via 10.10.10.1, Ethernet1

via 10.10.10.3, Ethernet2

B E 172.168.255.12/32 [20/0] via 10.10.10.1, Ethernet1

via 10.10.10.3, Ethernet2

B E 192.168.255.1/32 [20/0] via 10.10.10.1, Ethernet1

B E 192.168.255.2/32 [20/0] via 10.10.10.3, Ethernet2

B E 192.168.255.4/32 [20/0] via 10.10.10.1, Ethernet1

via 10.10.10.3, Ethernet2

B E 192.168.255.5/32 [20/0] via 10.10.10.1, Ethernet1

via 10.10.10.3, Ethernet2

B E 192.168.255.6/32 [20/0] via 10.10.10.1, Ethernet1

via 10.10.10.3, Ethernet2

DC1-LF9#

Leaf 9

VXLAN BGP EVPN UNDERLAY – OUTPUTS](https://image.slidesharecdn.com/xpresspathvxlanbgpevpnappricot2019-v2-190227114221/85/Xpress-path-vxlan_bgp_evpn_appricot2019-v2_-54-320.jpg)
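In the underlay output above, most prefixes list two next hops (one per uplink), while `192.168.255.1/32` and `192.168.255.2/32` each list only one. A minimal Python sketch for grouping next hops per prefix and spotting the single-path entries follows. The function name `group_next_hops` is hypothetical, the regular expressions assume exactly the `B E` line shape shown on this slide, and the sample is a subset of the slide's lines.

```python
import re
from typing import Dict, List, Tuple

# Assumed shapes, taken from the slide text:
#   "B E <prefix> [<dist>/<metric>] via <ip>, <intf>"   (first next hop)
#   "via <ip>, <intf>"                                  (additional next hop)
ROUTE_RE = re.compile(r"^\s*B E\s+(\S+/\d+)\s+\[\d+/\d+\]\s+via\s+(\S+),\s*(\S+)")
CONT_RE = re.compile(r"^\s*via\s+(\S+),\s*(\S+)")


def group_next_hops(lines: List[str]) -> Dict[str, List[Tuple[str, str]]]:
    """Map each eBGP prefix to its list of (next_hop, interface) pairs."""
    routes: Dict[str, List[Tuple[str, str]]] = {}
    current = None
    for line in lines:
        m = ROUTE_RE.match(line)
        if m:
            current = m.group(1)
            routes[current] = [(m.group(2), m.group(3))]
            continue
        m = CONT_RE.match(line)
        if m and current is not None:
            # Continuation line: another equal-cost path for the same prefix.
            routes[current].append((m.group(1), m.group(2)))
    return routes


sample = [
    "B E 172.16.255.10/32 [20/0] via 10.10.10.1, Ethernet1",
    "via 10.10.10.3, Ethernet2",
    "B E 192.168.255.1/32 [20/0] via 10.10.10.1, Ethernet1",
    "B E 192.168.255.2/32 [20/0] via 10.10.10.3, Ethernet2",
]

routes = group_next_hops(sample)
single_path = [p for p, hops in routes.items() if len(hops) == 1]
print(single_path)
```

The single-path prefixes are plausibly the loopbacks of the directly attached upstream switches themselves, though the slide does not say so explicitly.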

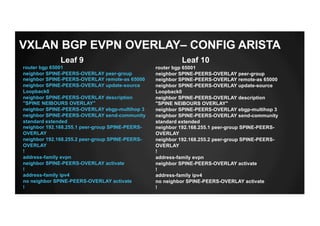

![DC1-LF11#sh ip route vrf TEST-VRF-VLAN28

VRF: TEST-VRF-VLAN28

Codes: C - connected, S - static, K - kernel,

O - OSPF, IA - OSPF inter area, E1 - OSPF external type 1,

E2 - OSPF external type 2, N1 - OSPF NSSA external type 1,

N2 - OSPF NSSA external type2, B I - iBGP, B E - eBGP,

R - RIP, I L1 - IS-IS level 1, I L2 - IS-IS level 2,

O3 - OSPFv3, A B - BGP Aggregate, A O - OSPF Summary,

NG - Nexthop Group Static Route, V - VXLAN Control Service,

DH - Dhcp client installed default route

Gateway of last resort is not set

B E 10.10.10.10/32 [200/0] via VTEP 172.16.255.10 VNI 13030 router-mac 50:00:00:d7:ee:0b

via VTEP 172.16.255.9 VNI 13030 router-mac 50:00:00:6b:2e:70

B E 172.16.16.0/24 [200/0] via VTEP 172.16.255.10 VNI 13030 router-mac 50:00:00:d7:ee:0b

via VTEP 172.16.255.9 VNI 13030 router-mac 50:00:00:6b:2e:70

C 192.168.20.0/24 is directly connected, Vlan28

DC1-LF11#

Leaf 11

VXLAN BGP EVPN OVERLAY SERVICE – OUTPUTS](https://image.slidesharecdn.com/xpresspathvxlanbgpevpnappricot2019-v2-190227114221/85/Xpress-path-vxlan_bgp_evpn_appricot2019-v2_-65-320.jpg)
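The overlay routes in the VRF output above resolve to a remote VTEP address, a VNI, and a router MAC instead of a physical interface. The Python sketch below pulls those three fields out of the text, assuming each next-hop entry has been joined onto a single line. `OverlayHop`, `parse_overlay_hops`, and `vni_is_valid` are hypothetical helper names; the 24-bit range check reflects the width of the VNI field in the VXLAN header.

```python
import re
from typing import List, NamedTuple


class OverlayHop(NamedTuple):
    """One overlay next hop (hypothetical helper type)."""
    vtep: str        # remote VTEP IP address
    vni: int         # VXLAN Network Identifier carried in the VXLAN header
    router_mac: str  # router MAC of the remote VTEP


# Assumed shape: "via VTEP <ip> VNI <number> router-mac <aa:bb:cc:dd:ee:ff>"
HOP_RE = re.compile(
    r"via VTEP (?P<vtep>\d+\.\d+\.\d+\.\d+) "
    r"VNI (?P<vni>\d+) "
    r"router-mac (?P<mac>(?:[0-9a-f]{2}:){5}[0-9a-f]{2})"
)


def parse_overlay_hops(text: str) -> List[OverlayHop]:
    """Extract every VTEP/VNI/router-mac triple found in the text."""
    return [
        OverlayHop(m.group("vtep"), int(m.group("vni")), m.group("mac"))
        for m in HOP_RE.finditer(text)
    ]


def vni_is_valid(vni: int) -> bool:
    """A VNI is a 24-bit value, so it must be below 2**24."""
    return 0 <= vni < 2 ** 24


text = (
    "B E 10.10.10.10/32 [200/0] via VTEP 172.16.255.10 VNI 13030 "
    "router-mac 50:00:00:d7:ee:0b\n"
    "via VTEP 172.16.255.9 VNI 13030 router-mac 50:00:00:6b:2e:70"
)

for hop in parse_overlay_hops(text):
    print(hop.vtep, hop.vni, hop.router_mac, vni_is_valid(hop.vni))
```

Both next hops for a prefix carry the same VNI (13030) but different VTEP addresses and router MACs, which is what two equal-cost remote VTEPs advertising the same route into the VRF would look like.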