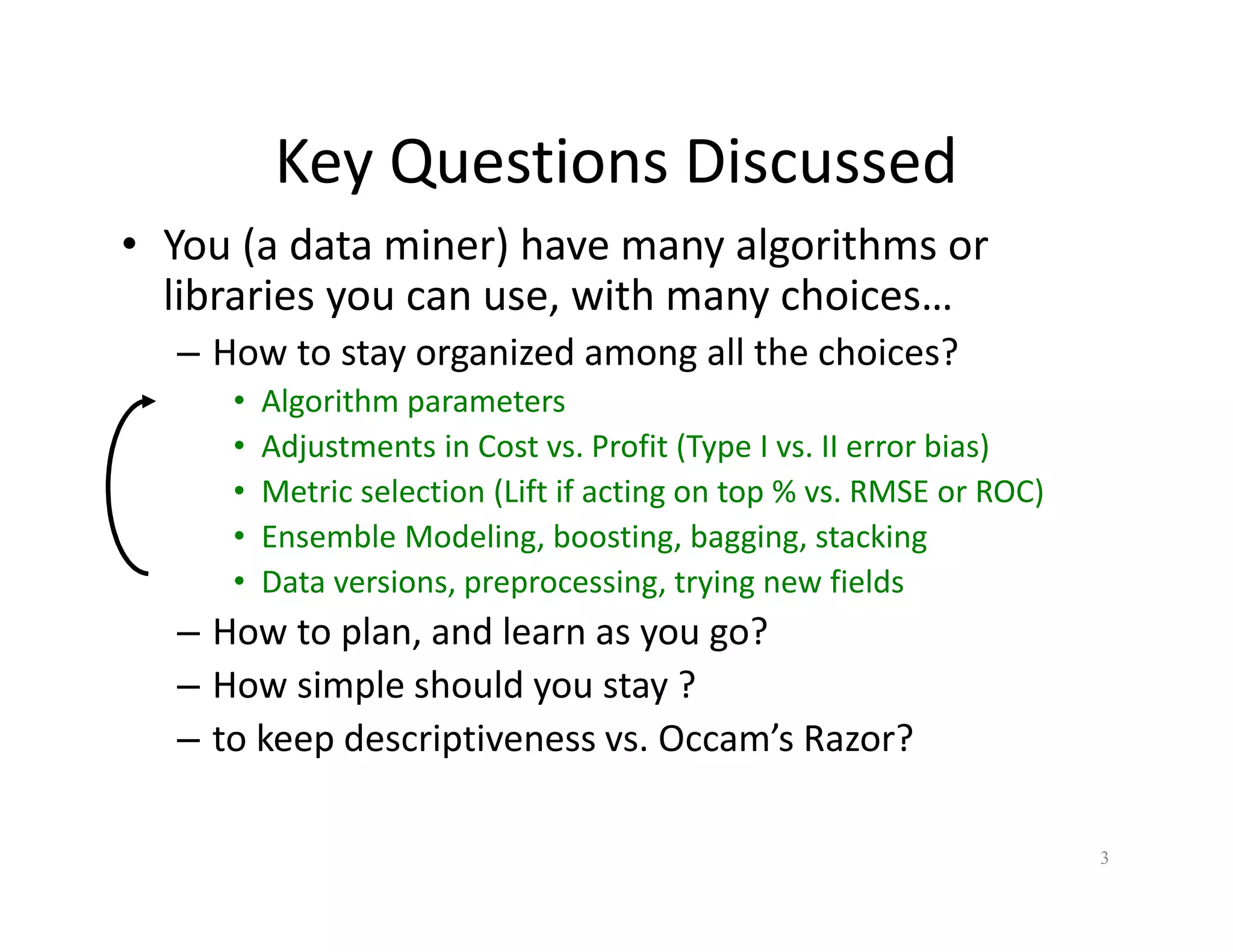

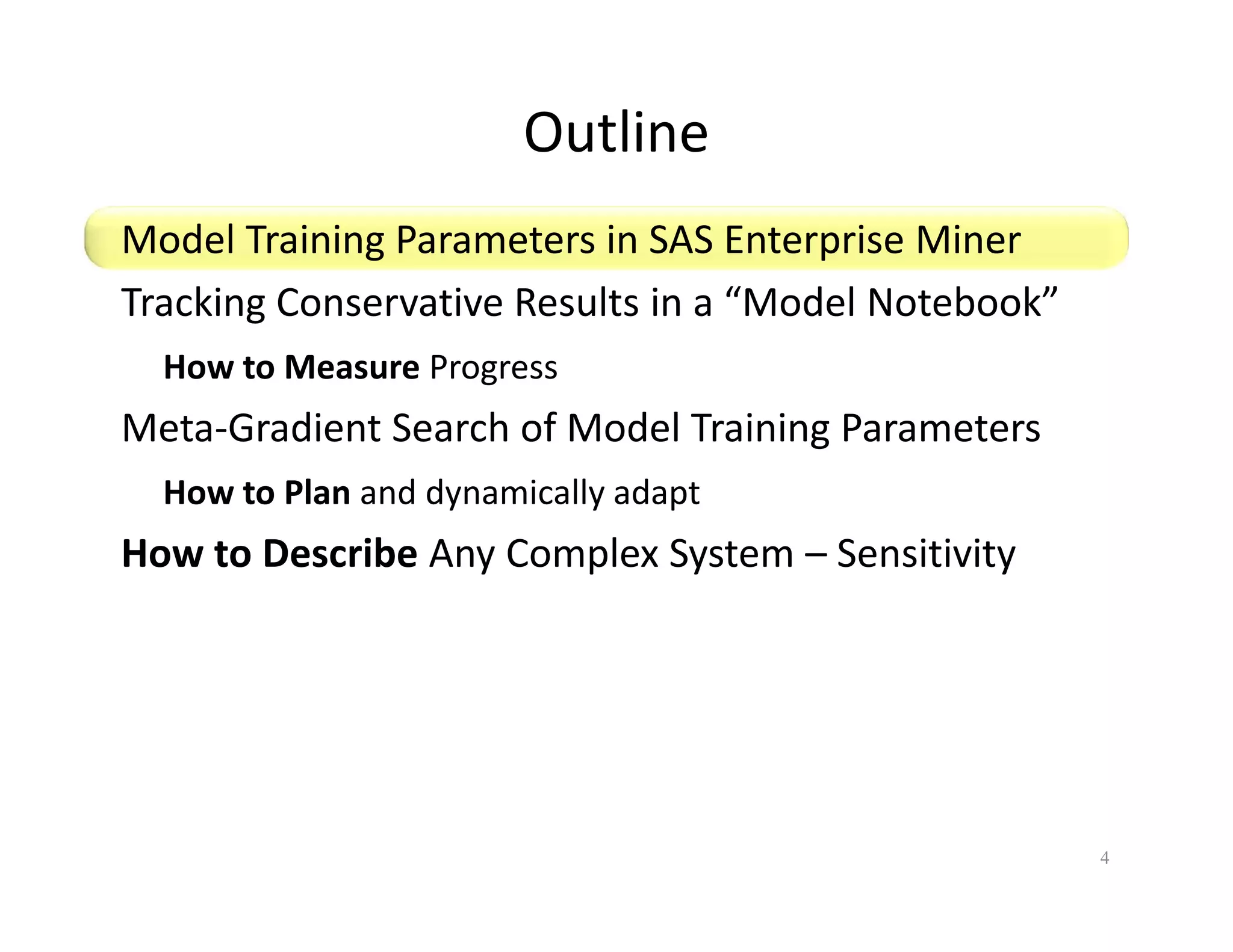

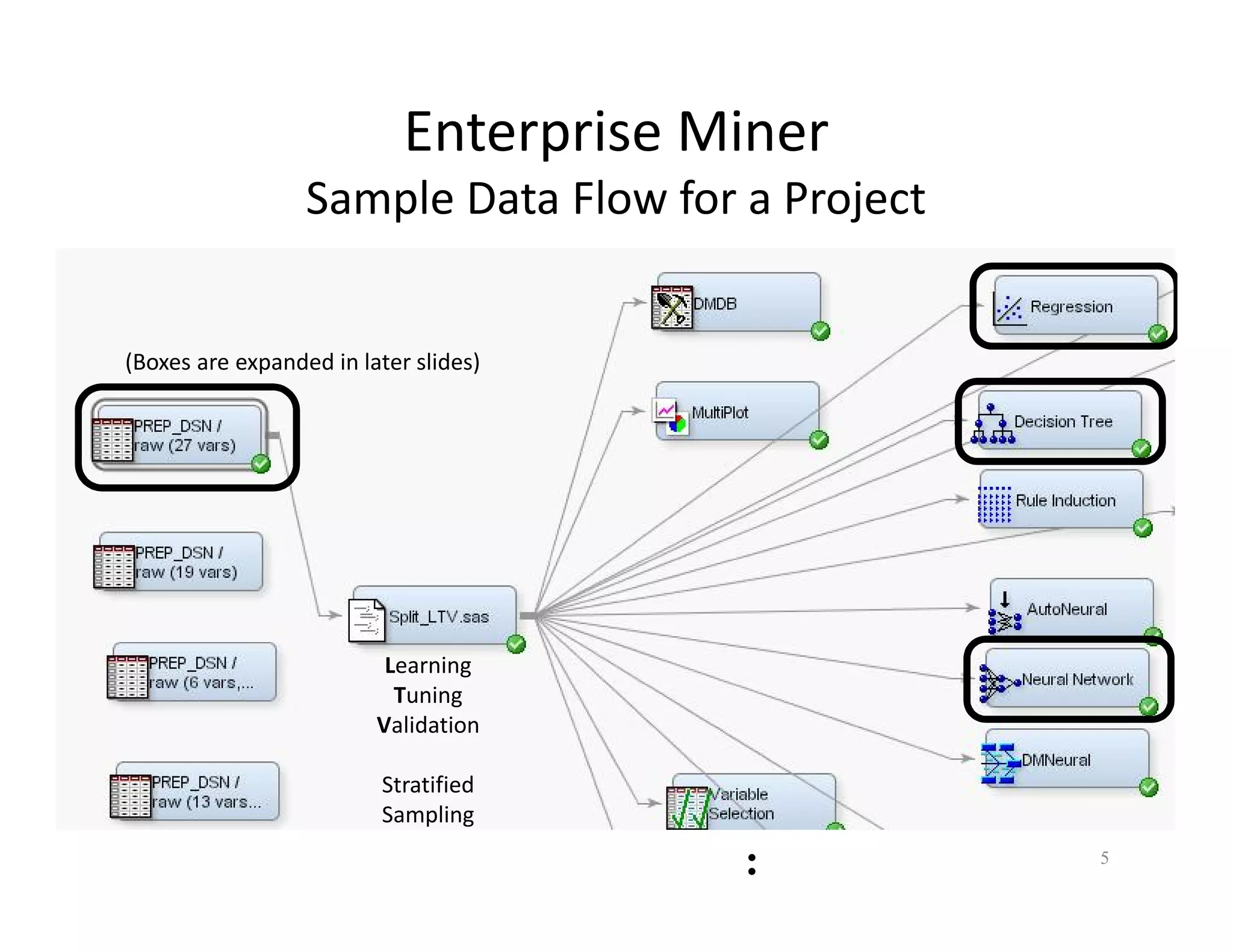

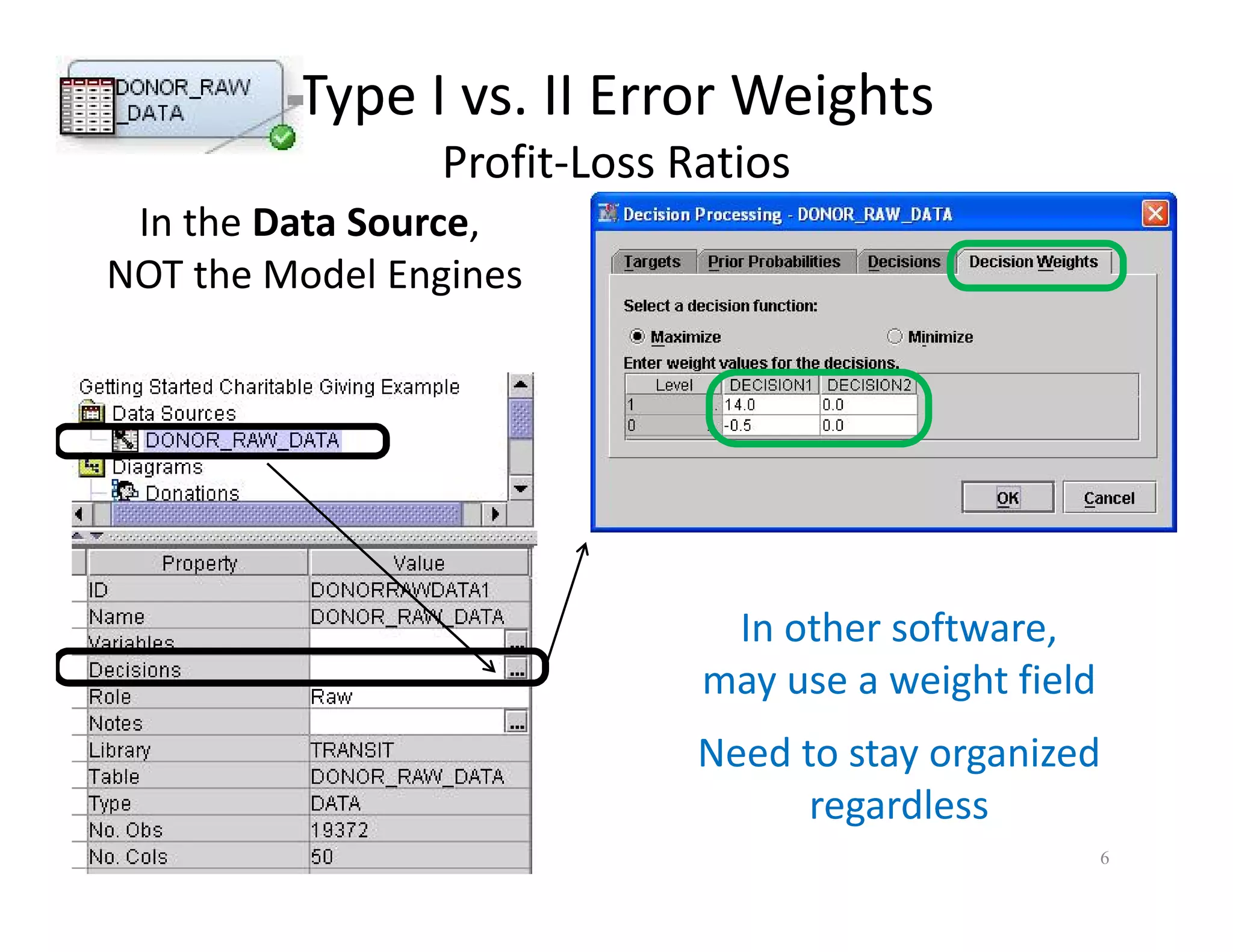

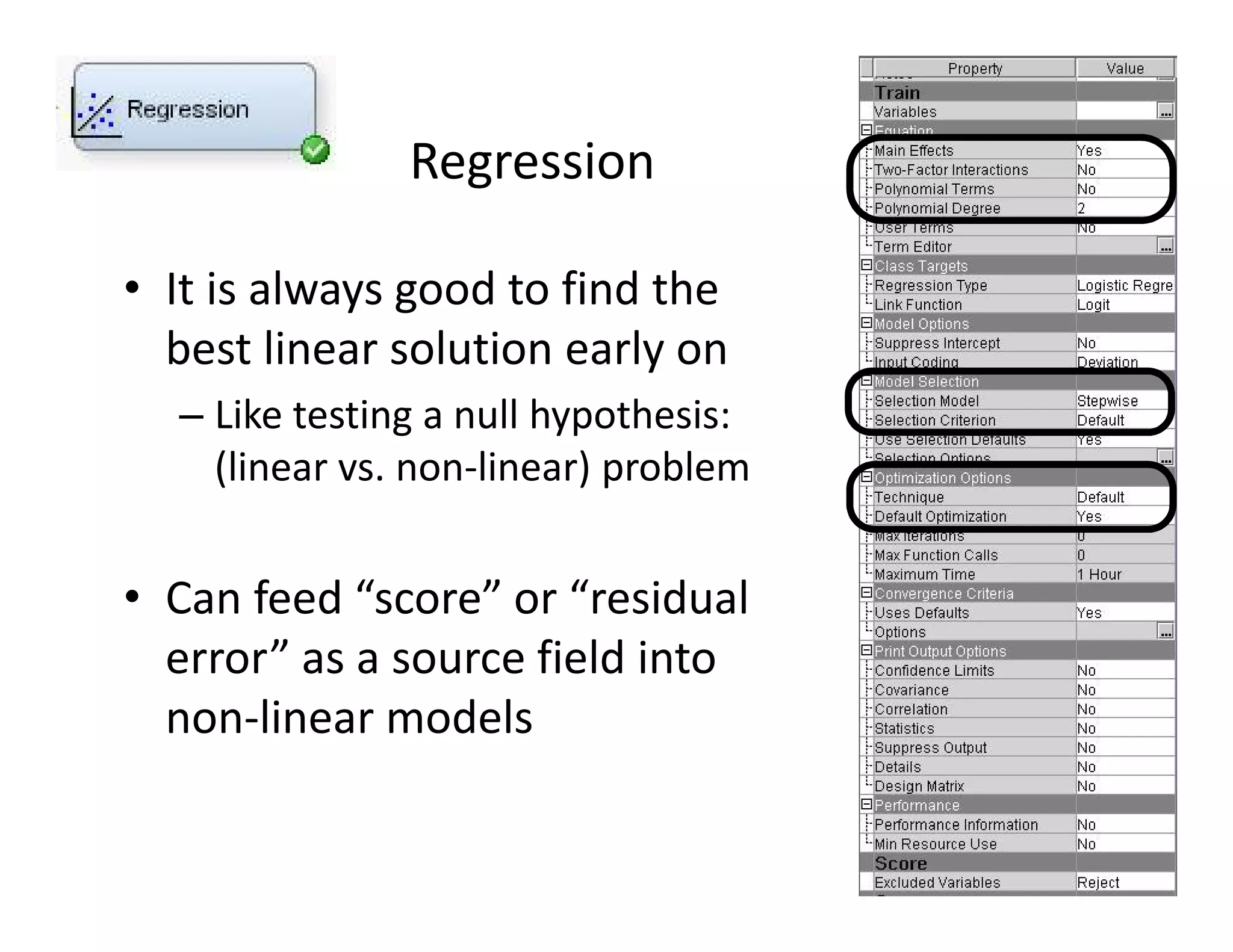

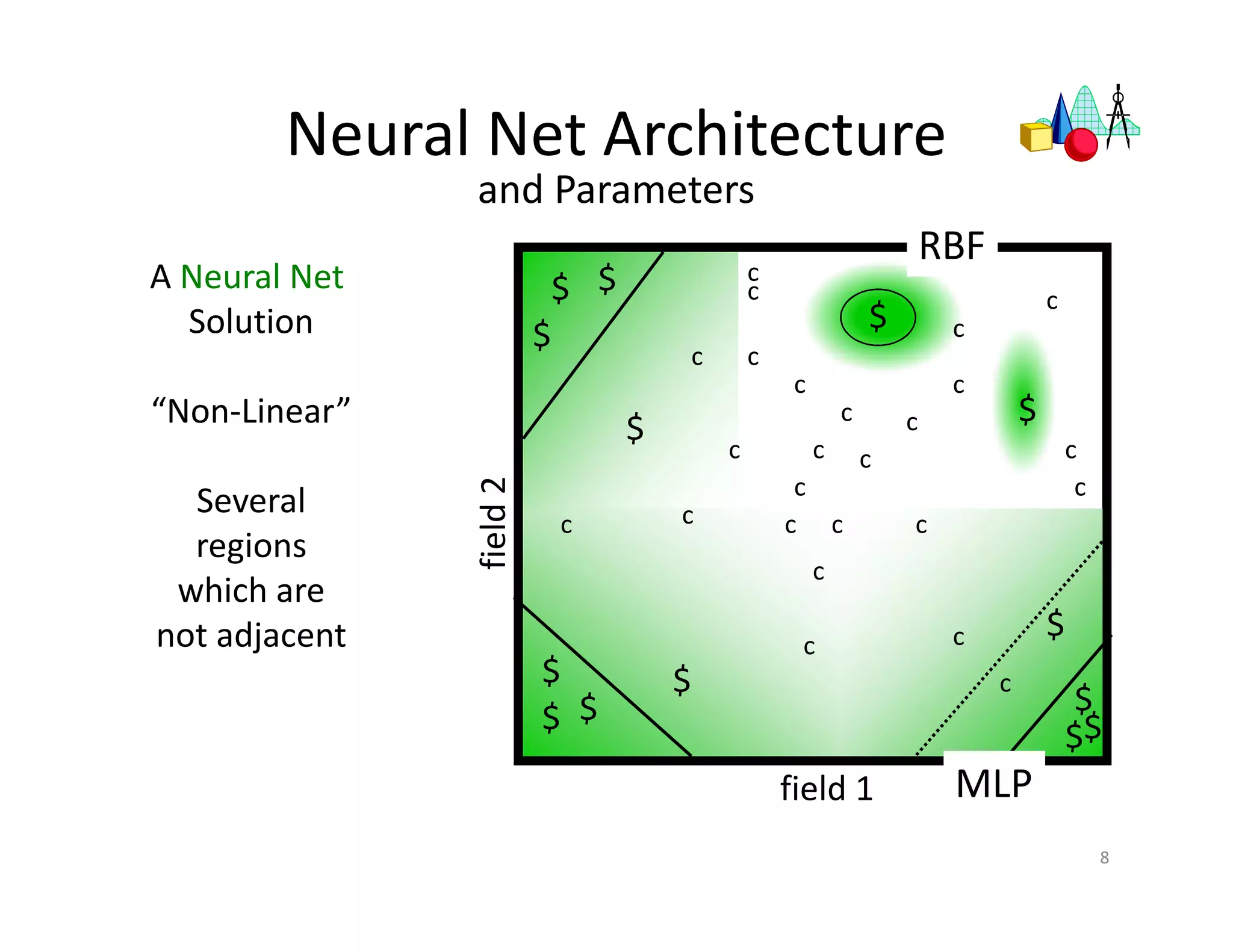

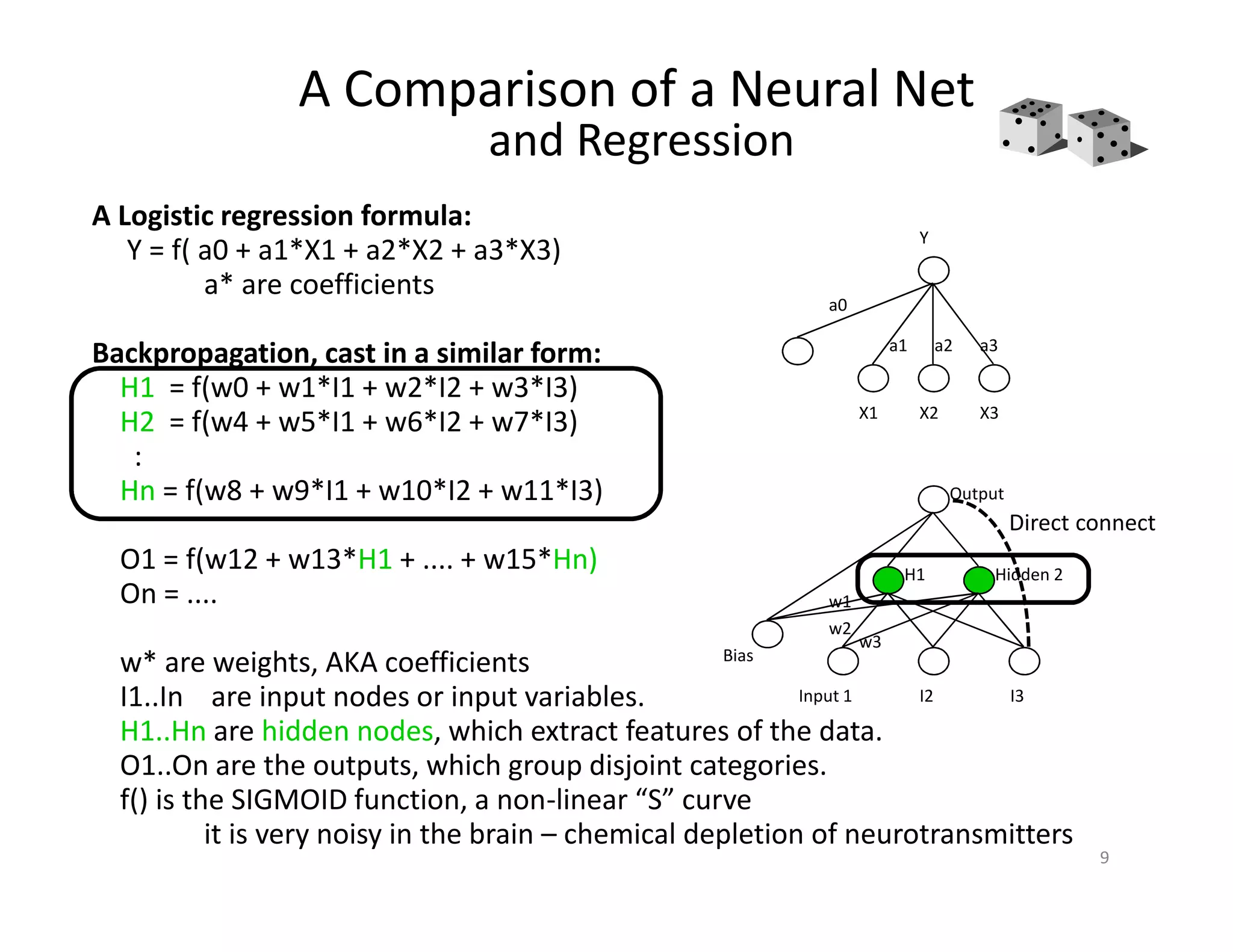

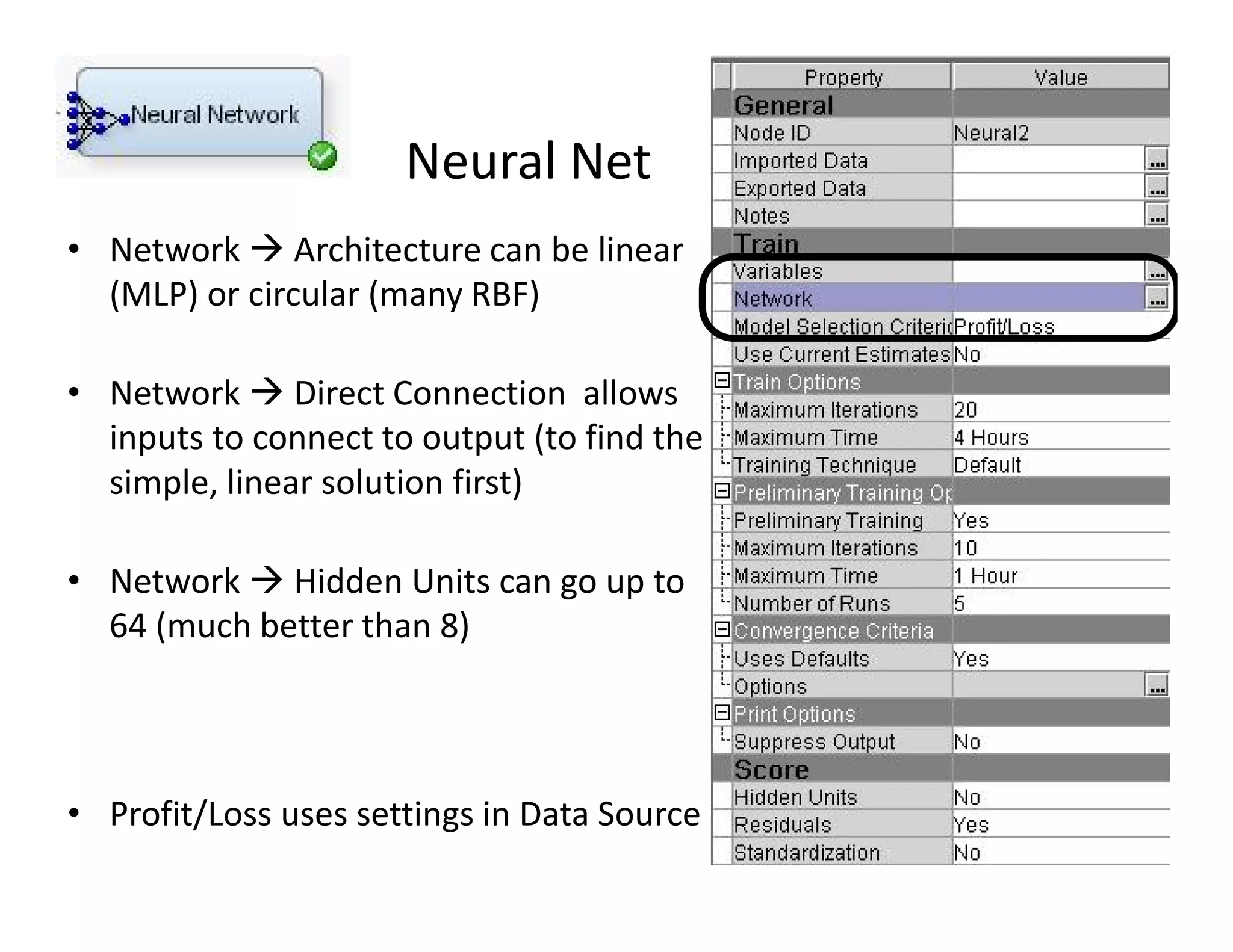

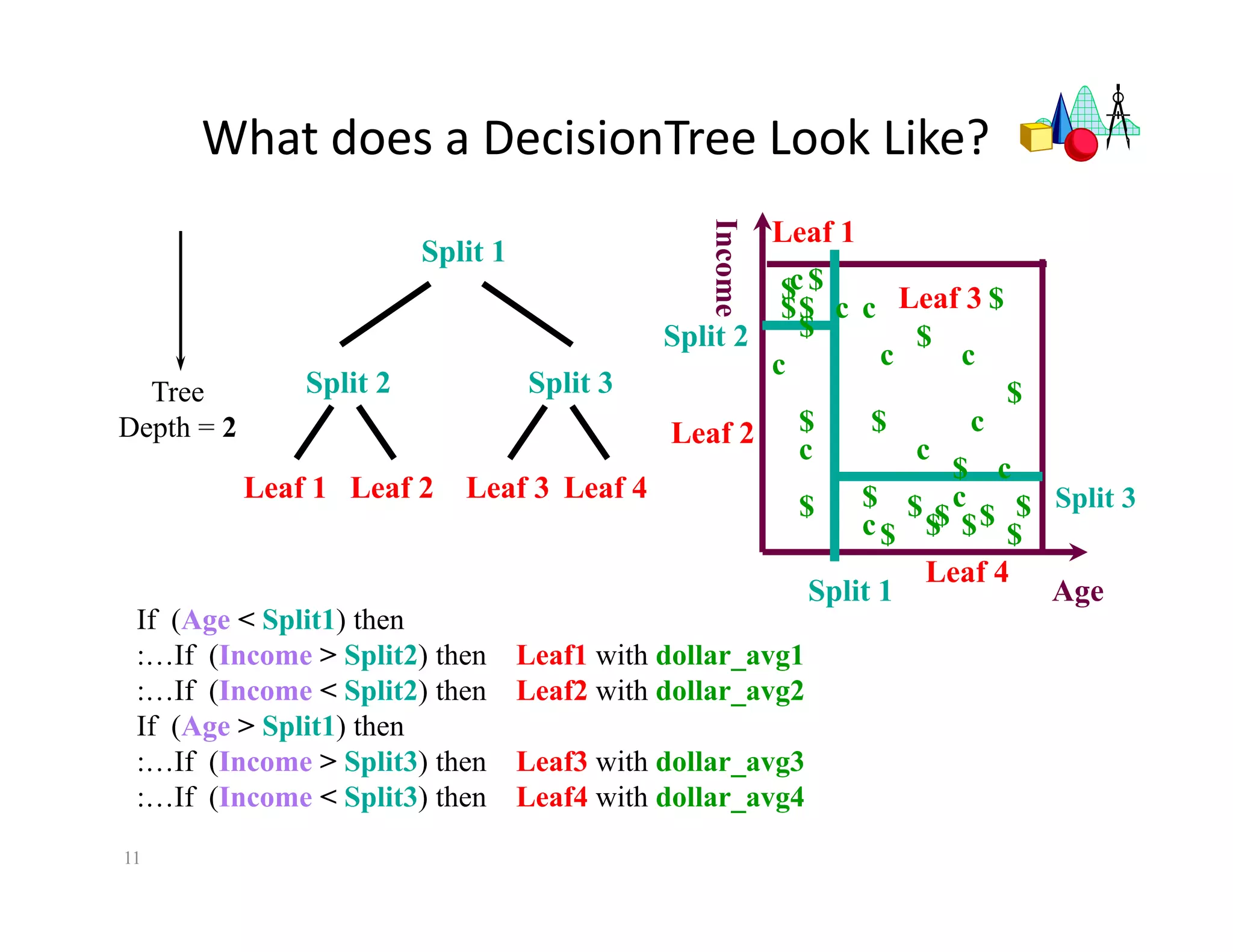

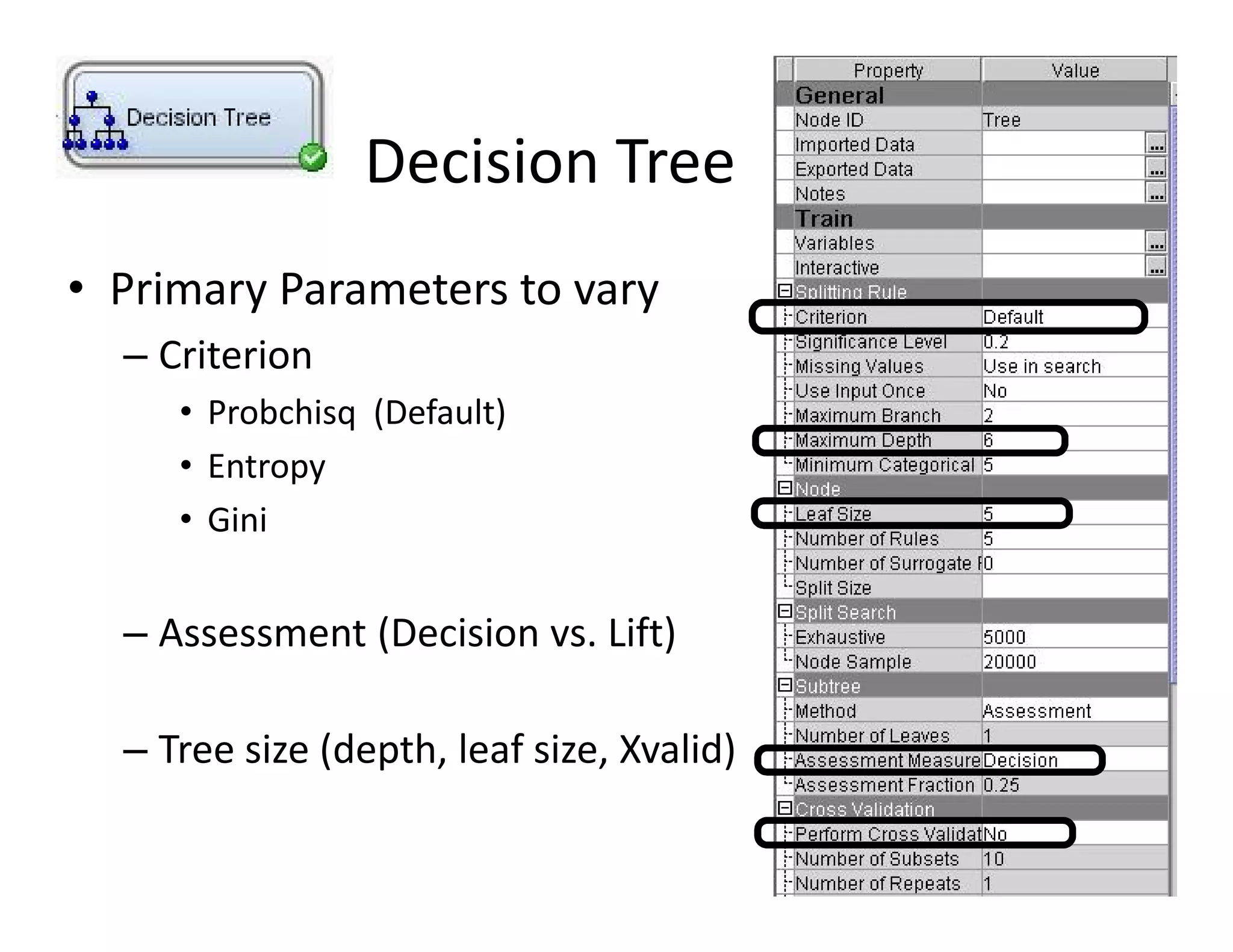

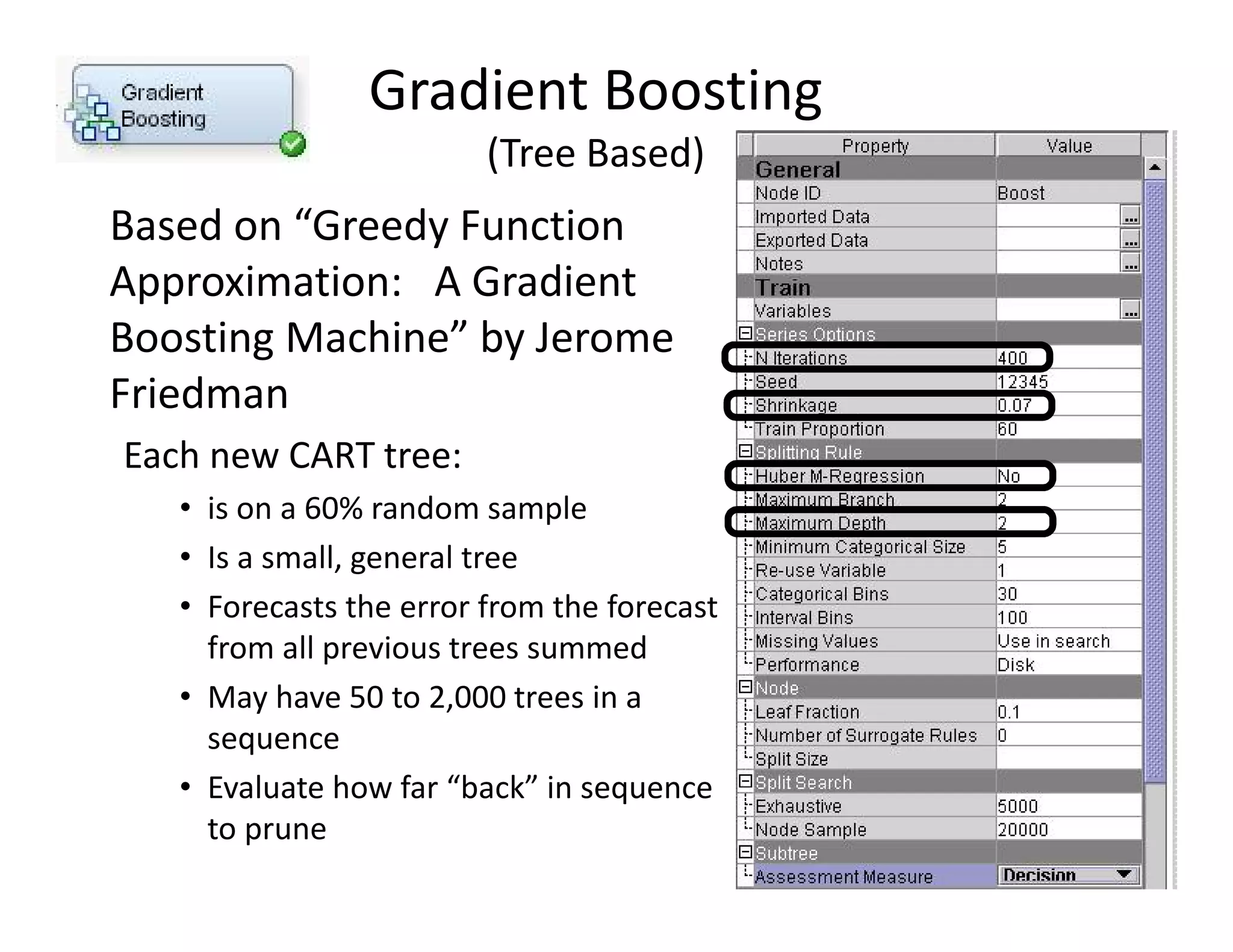

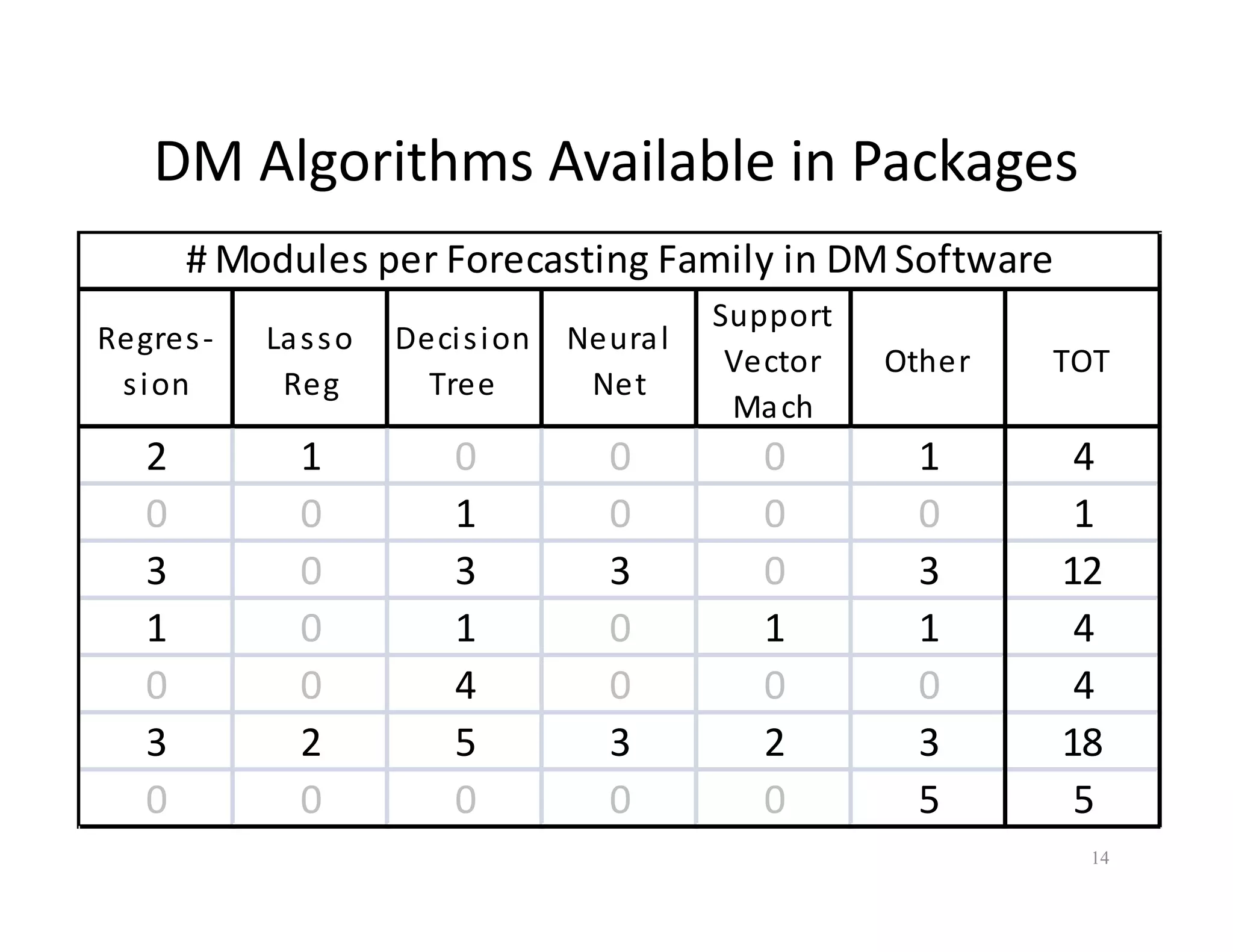

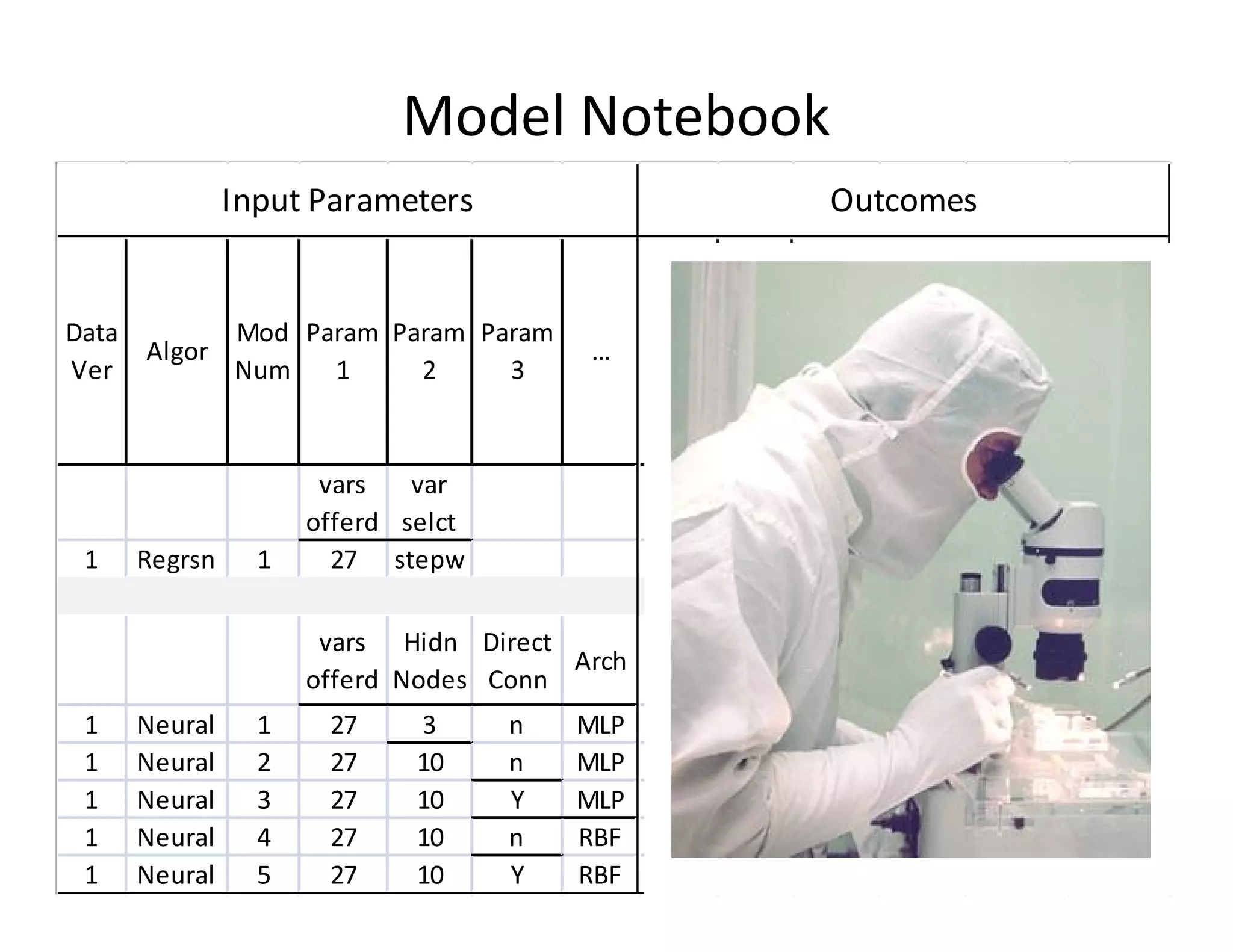

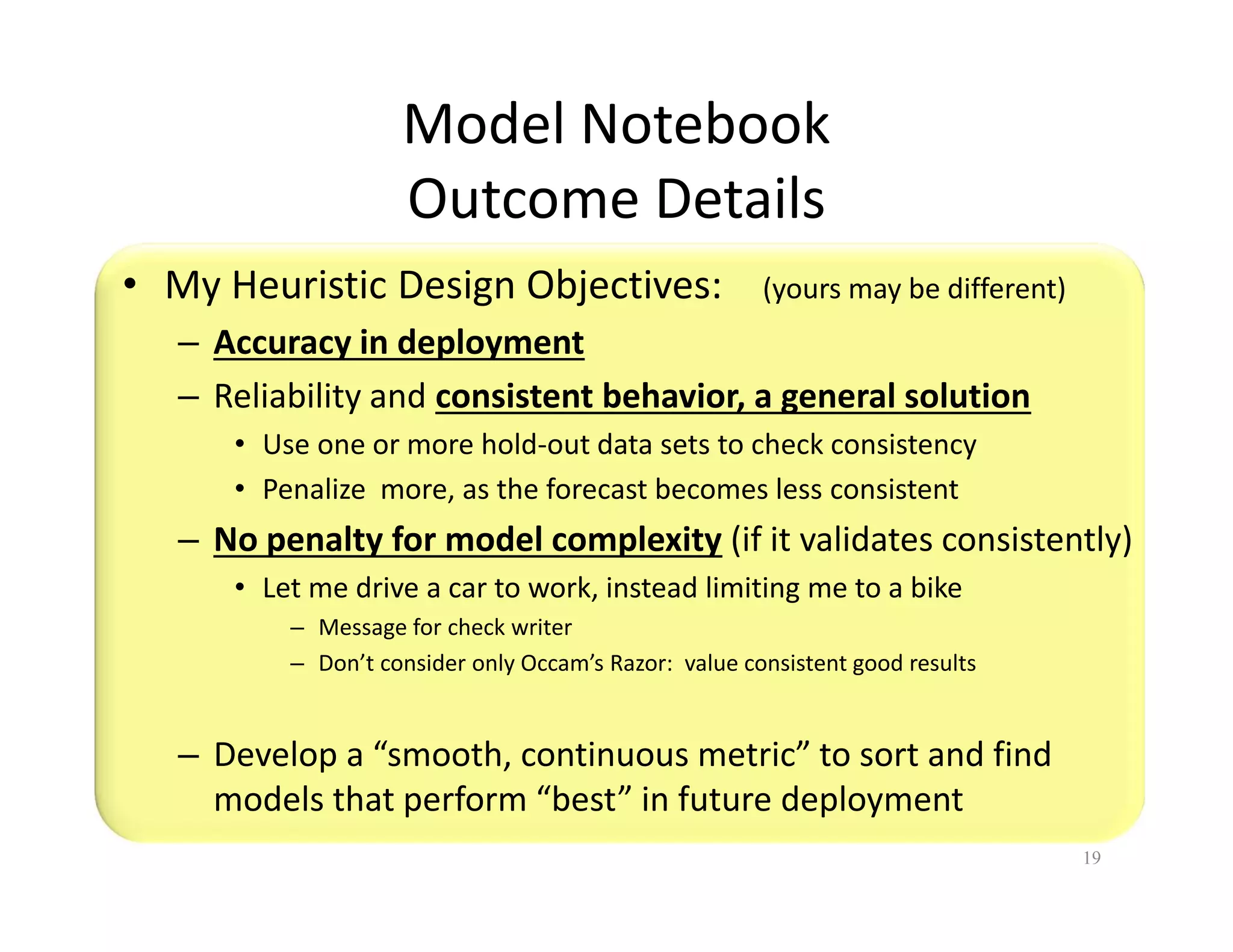

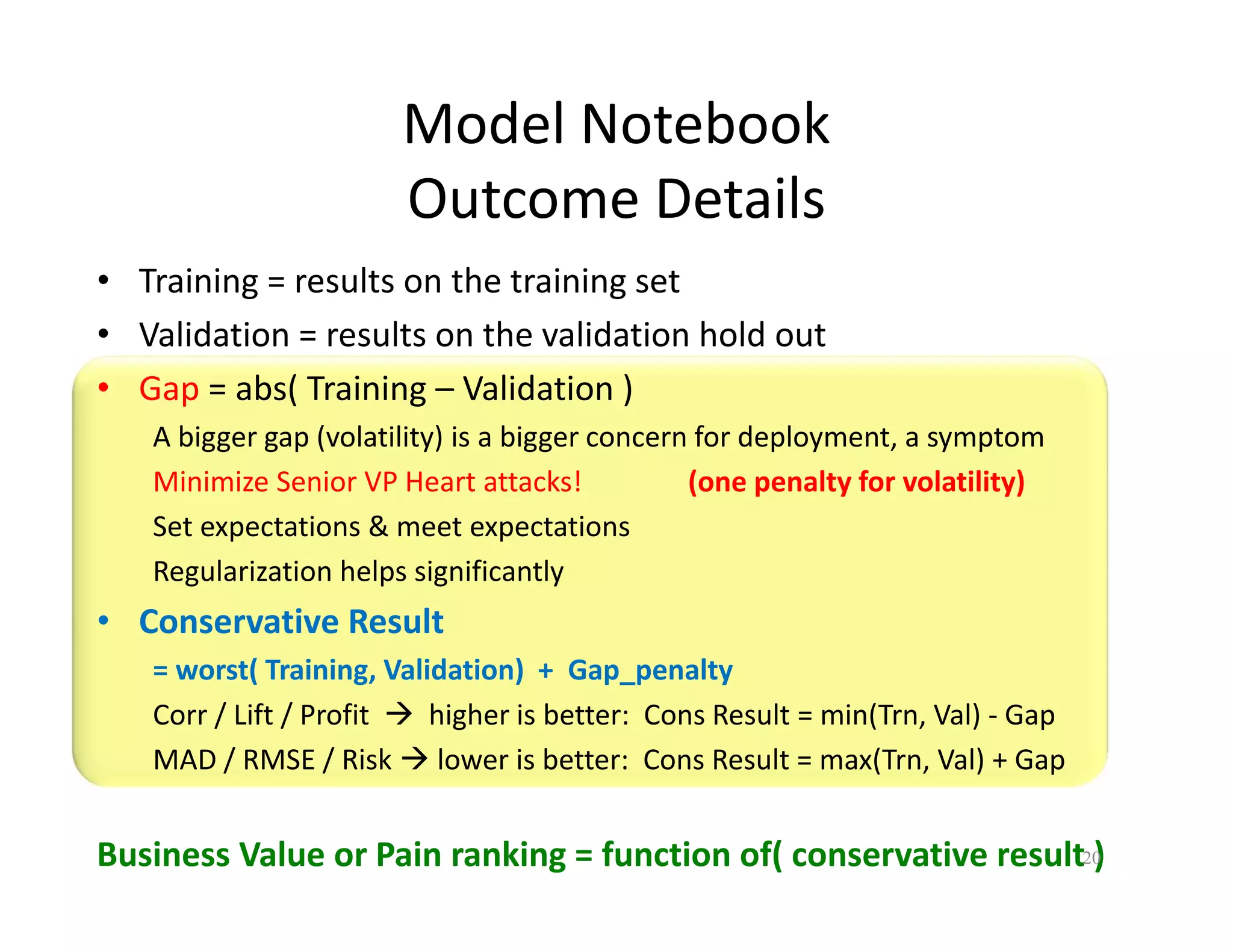

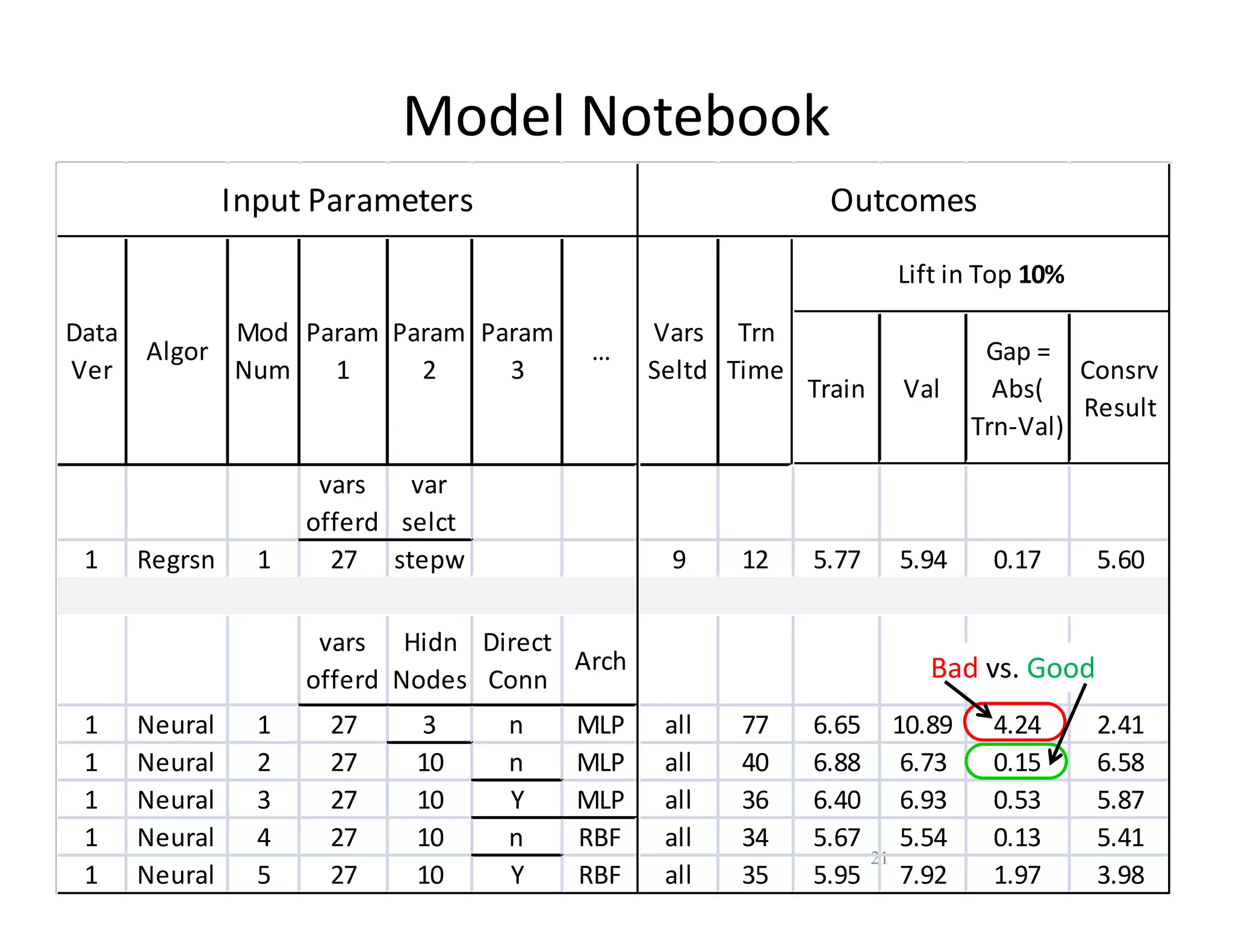

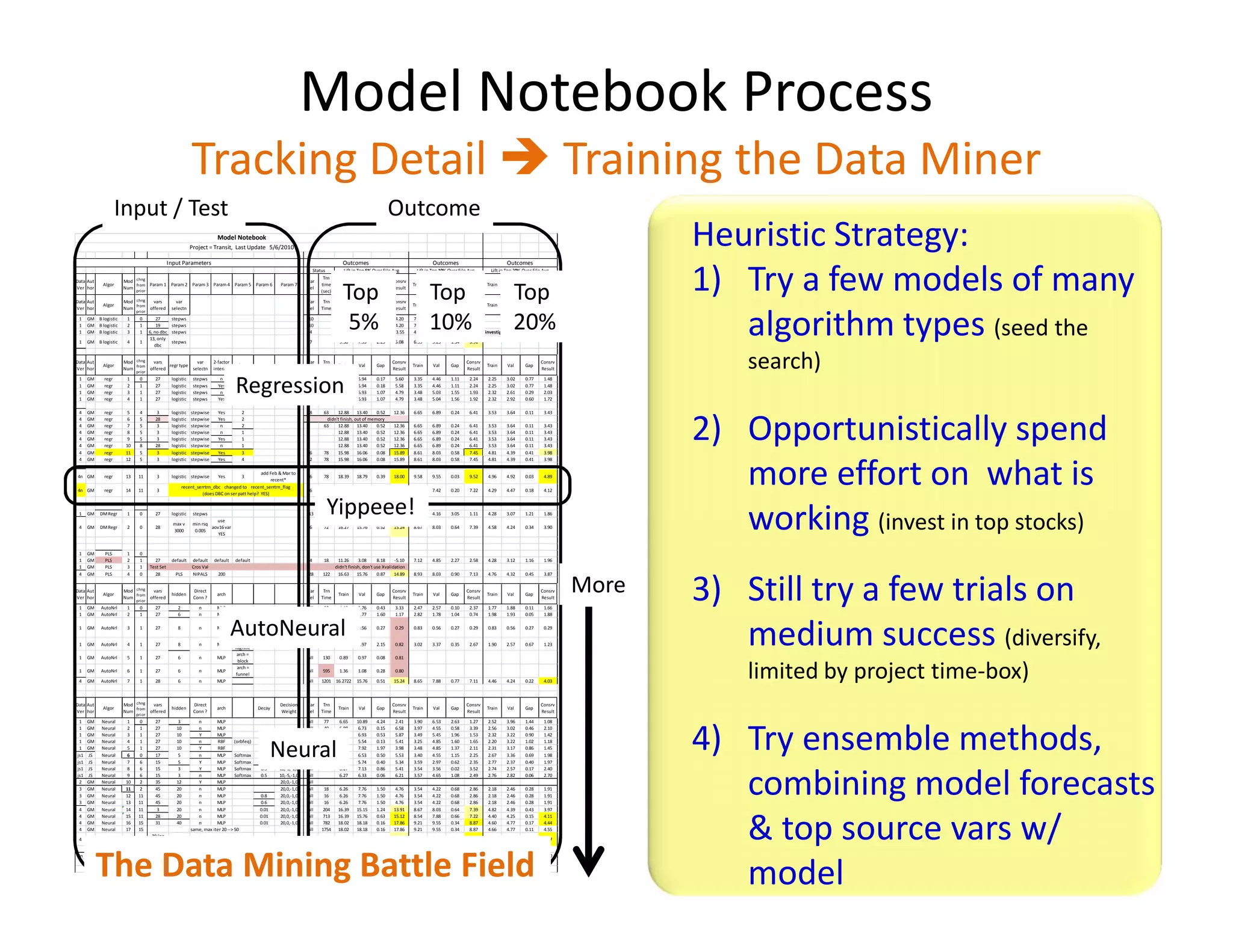

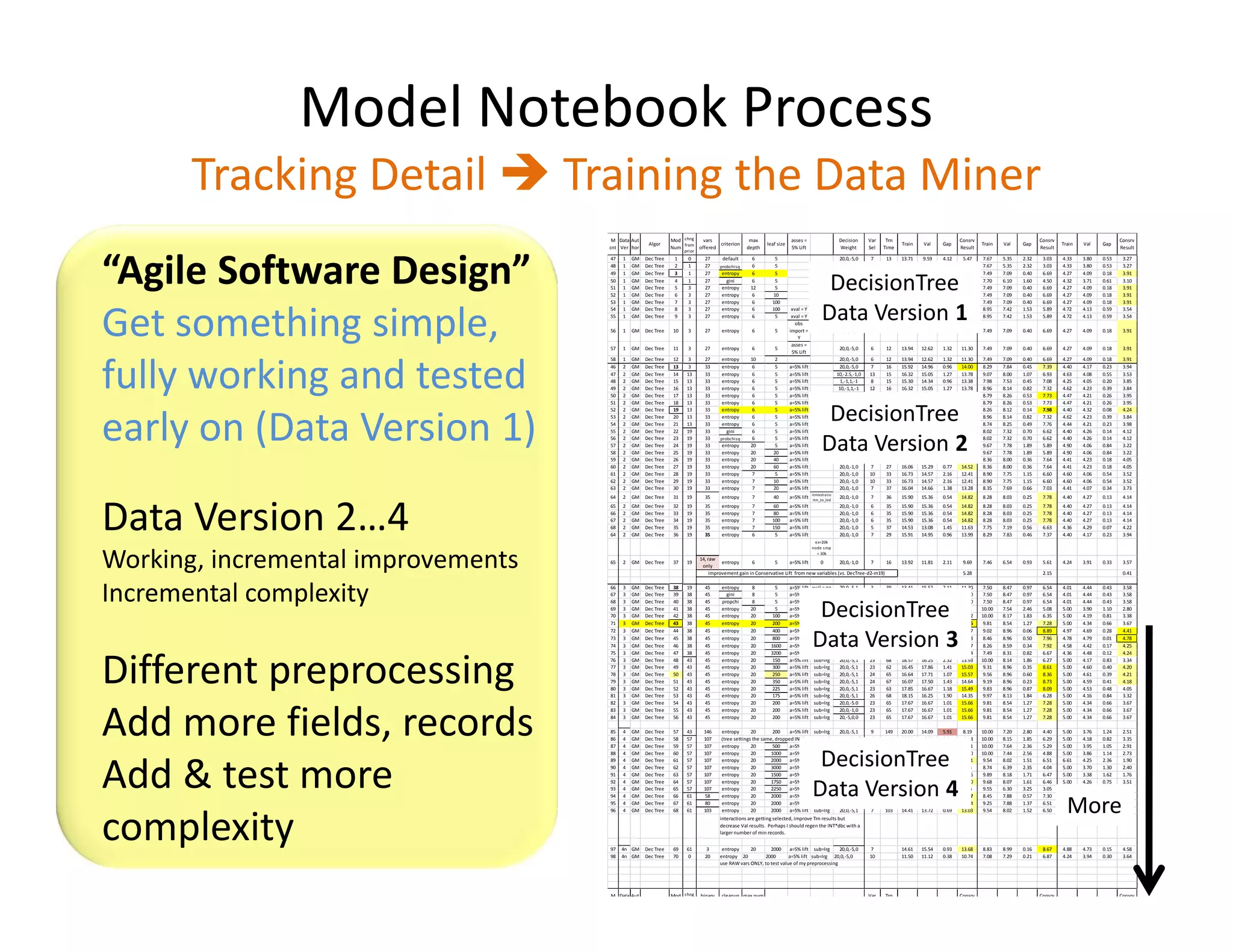

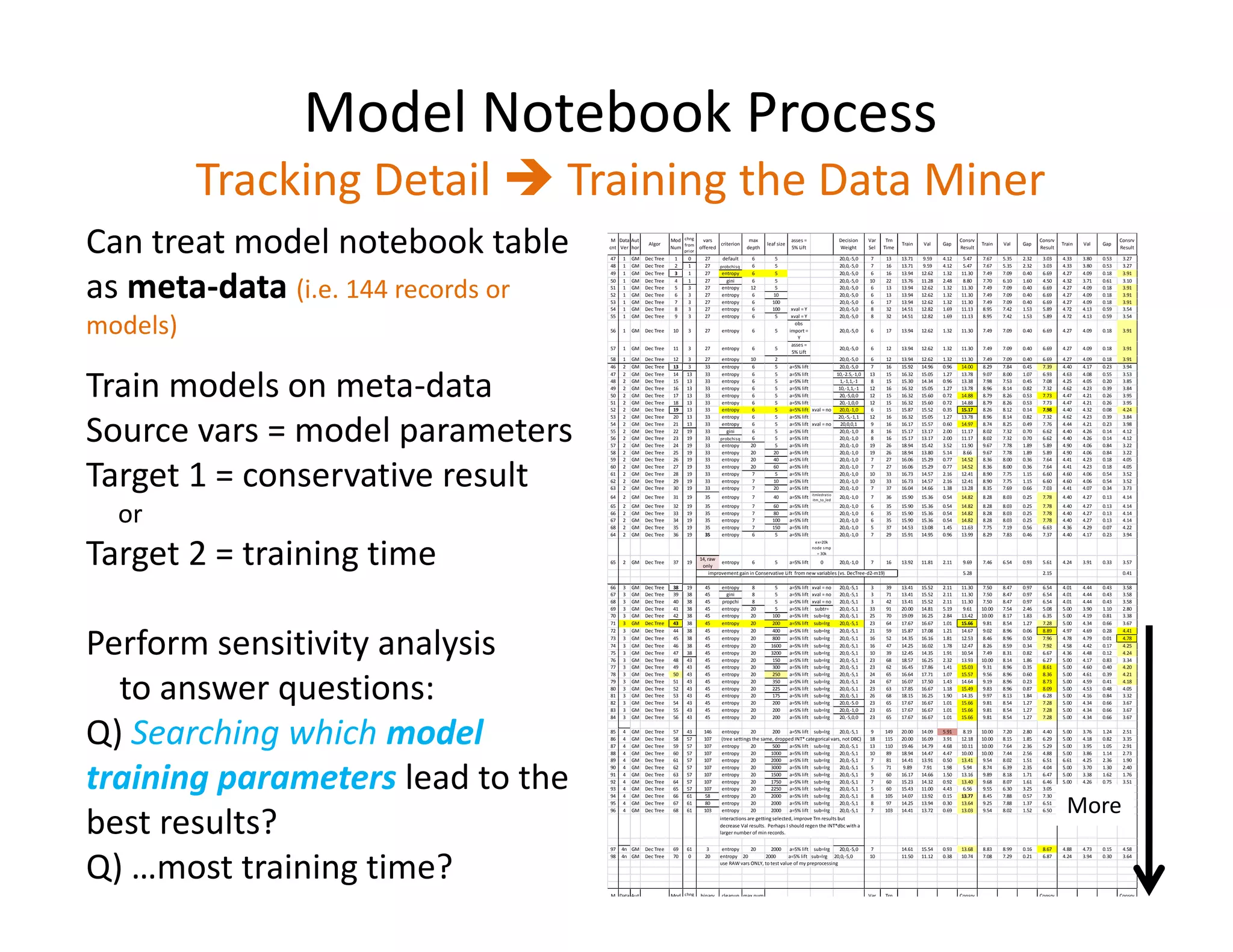

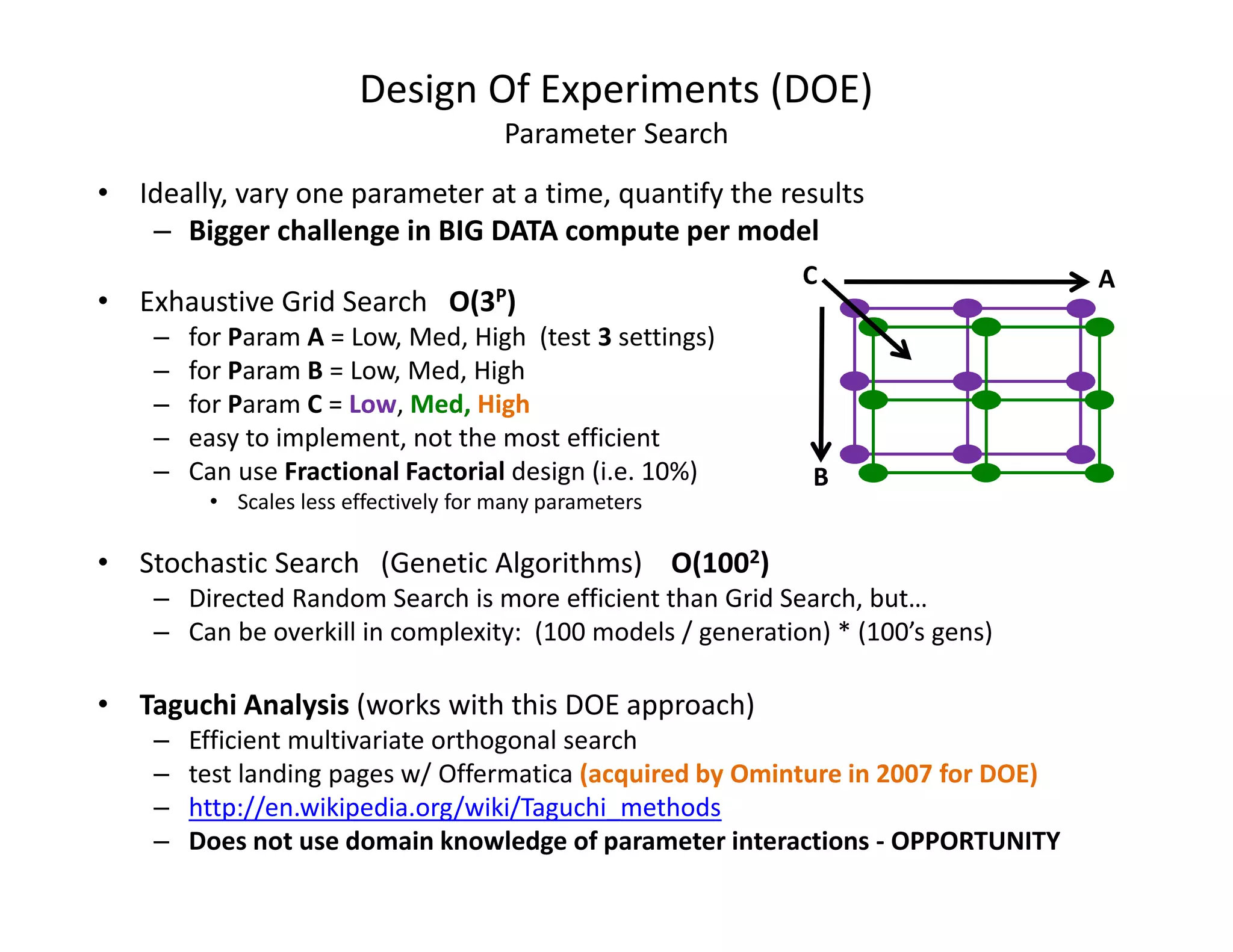

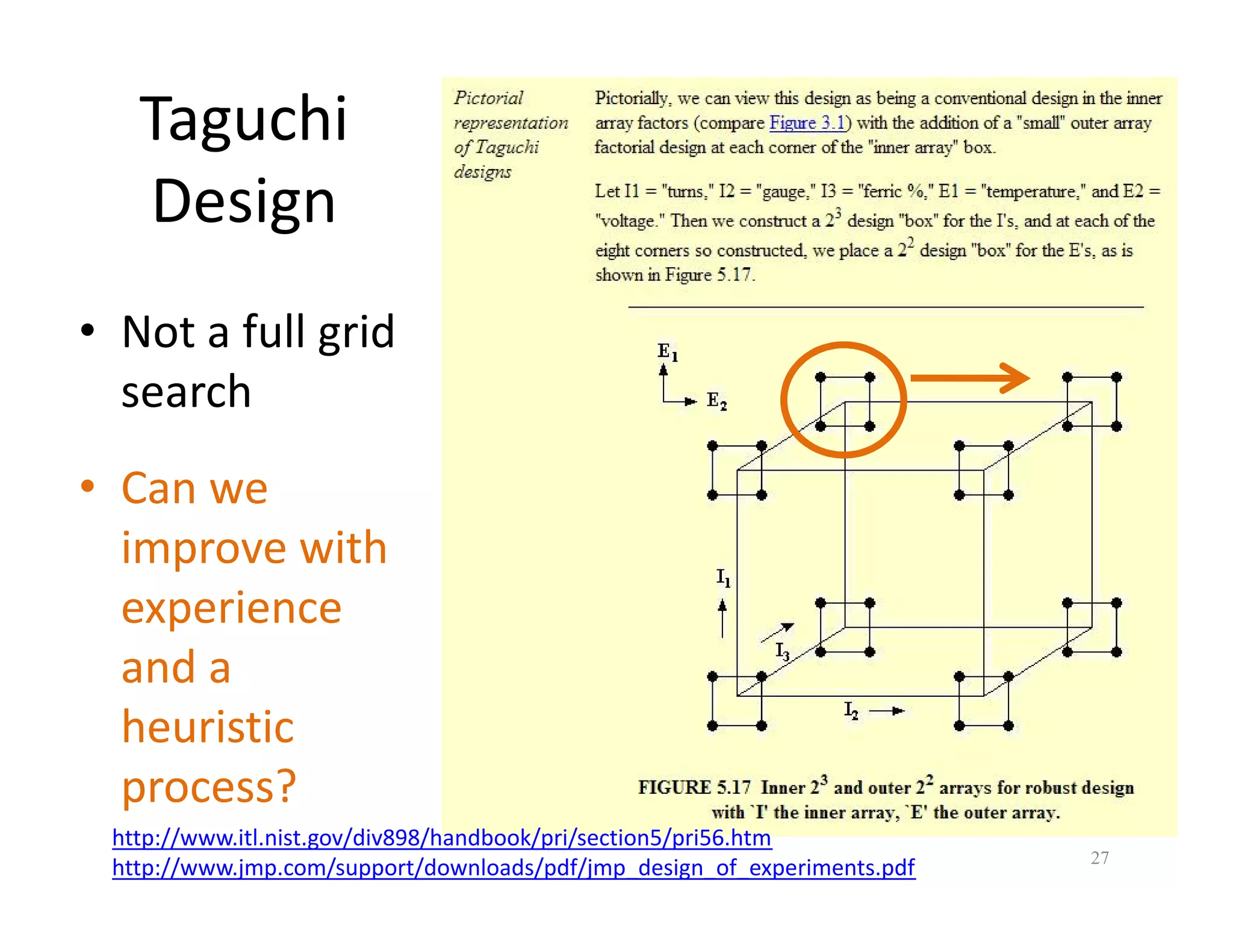

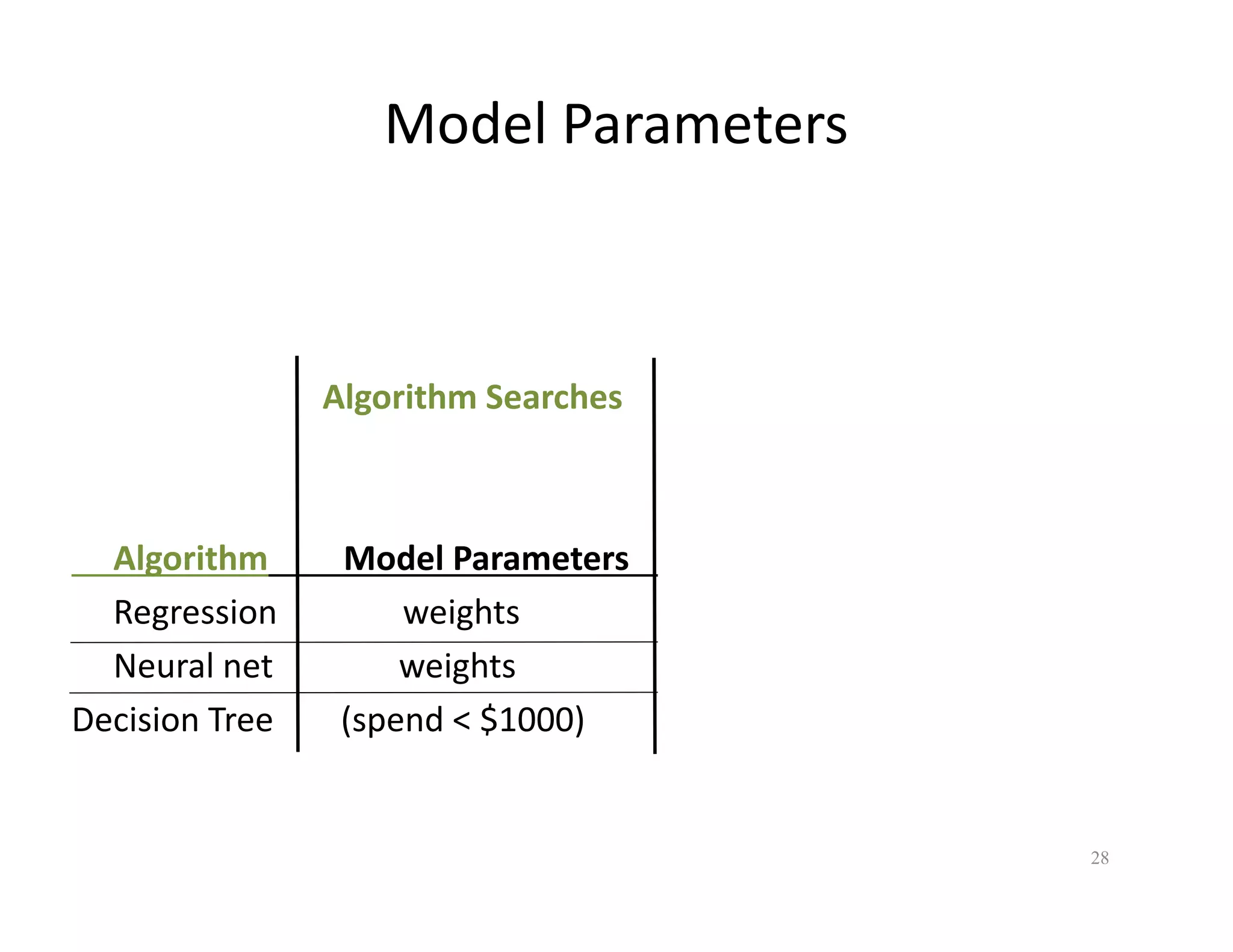

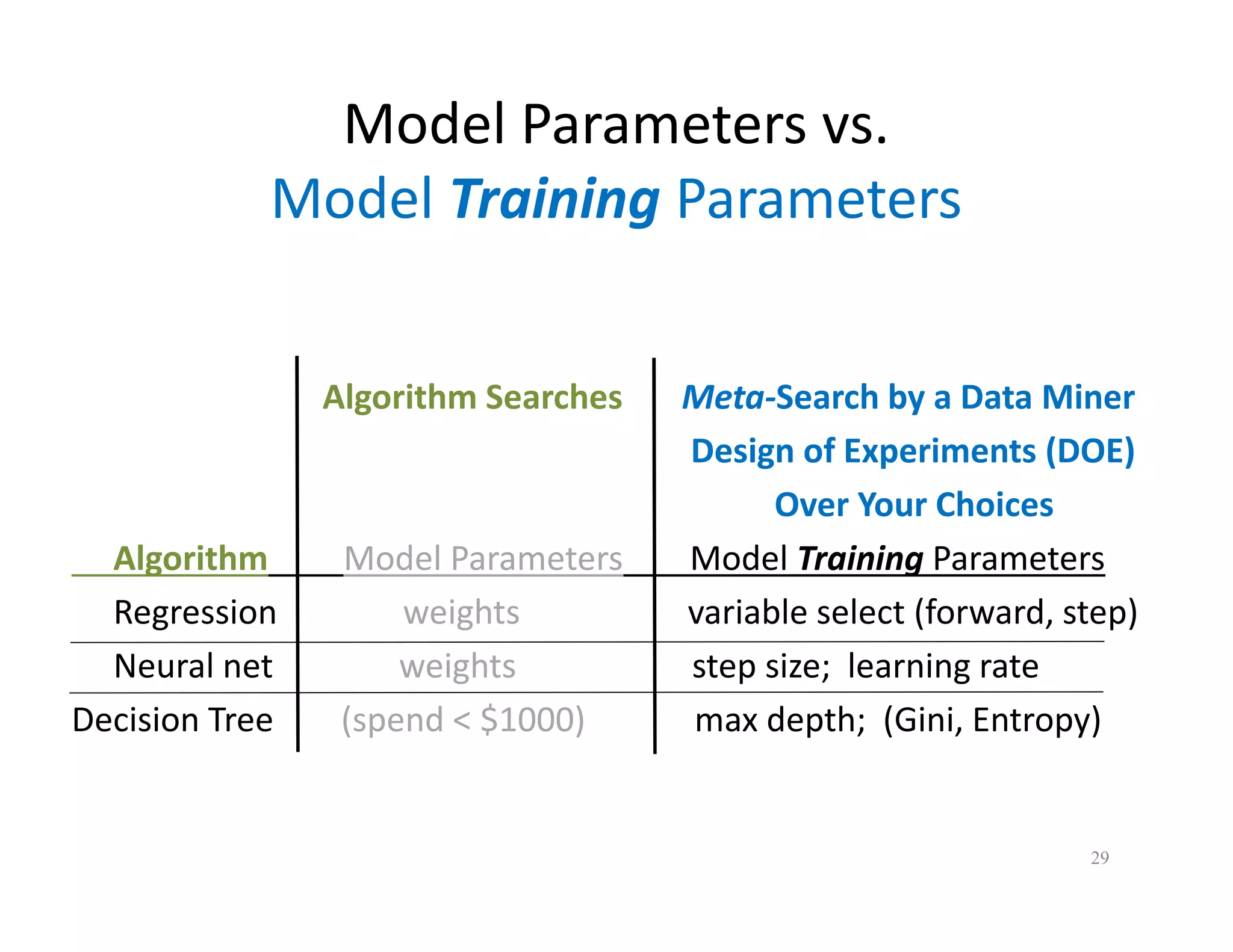

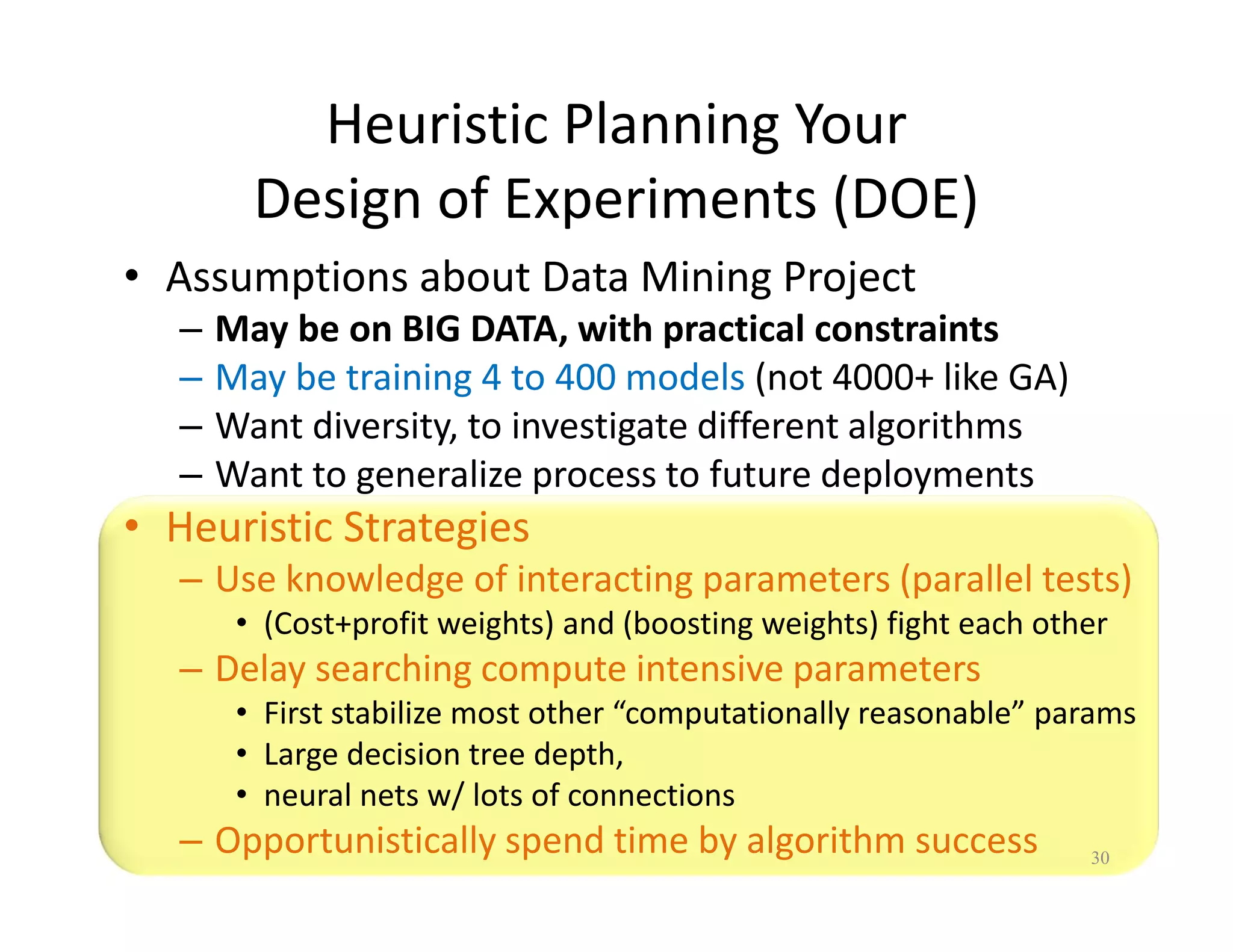

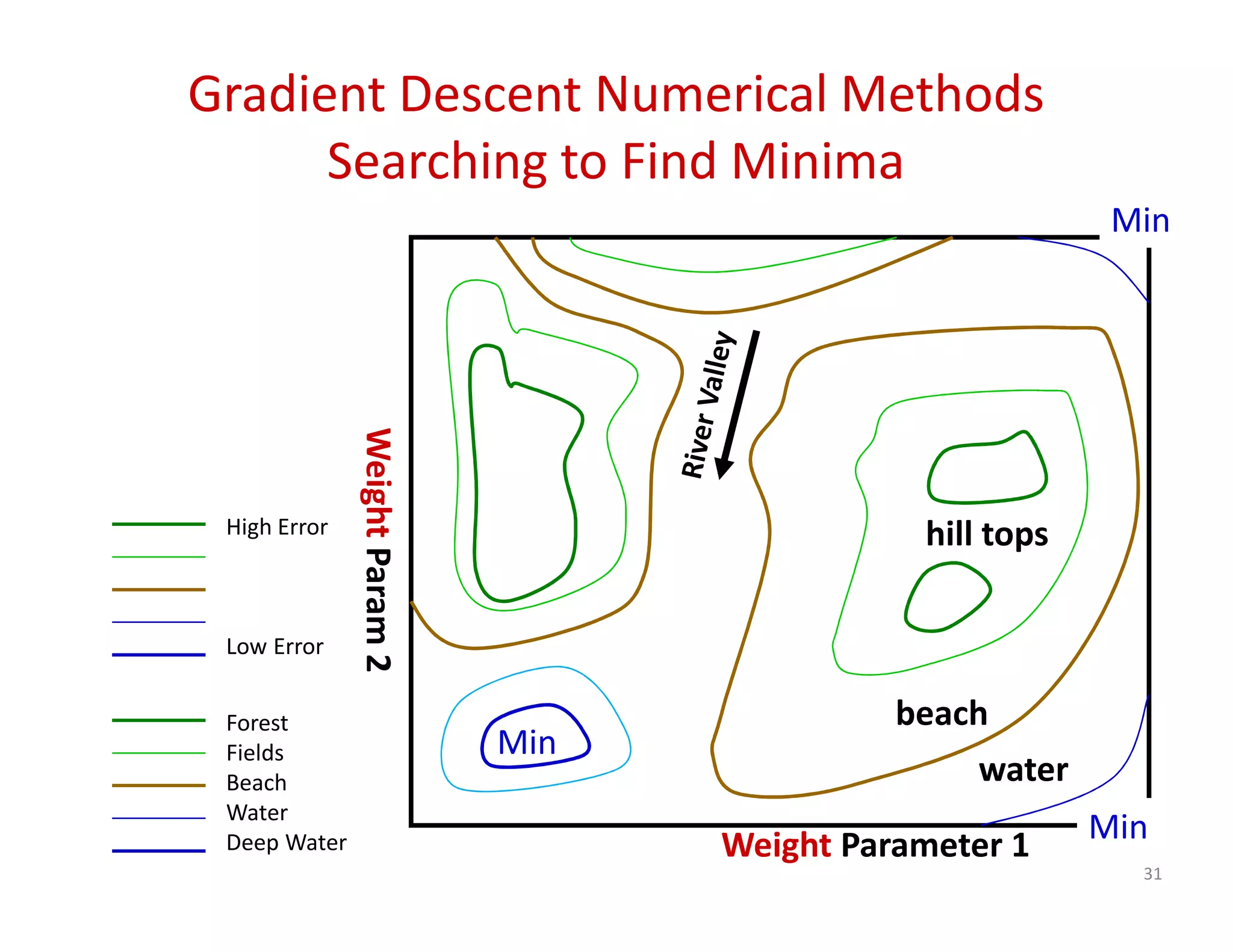

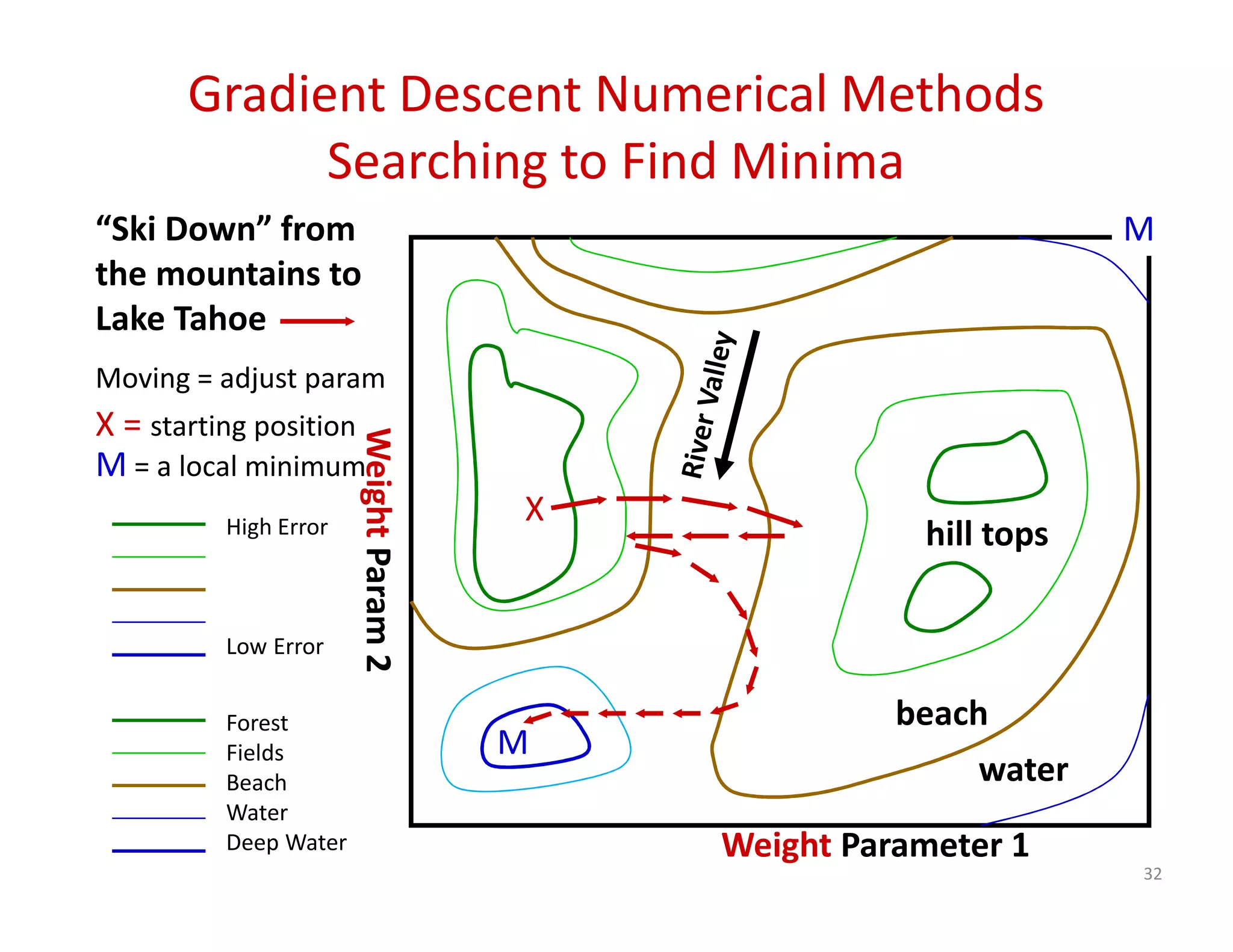

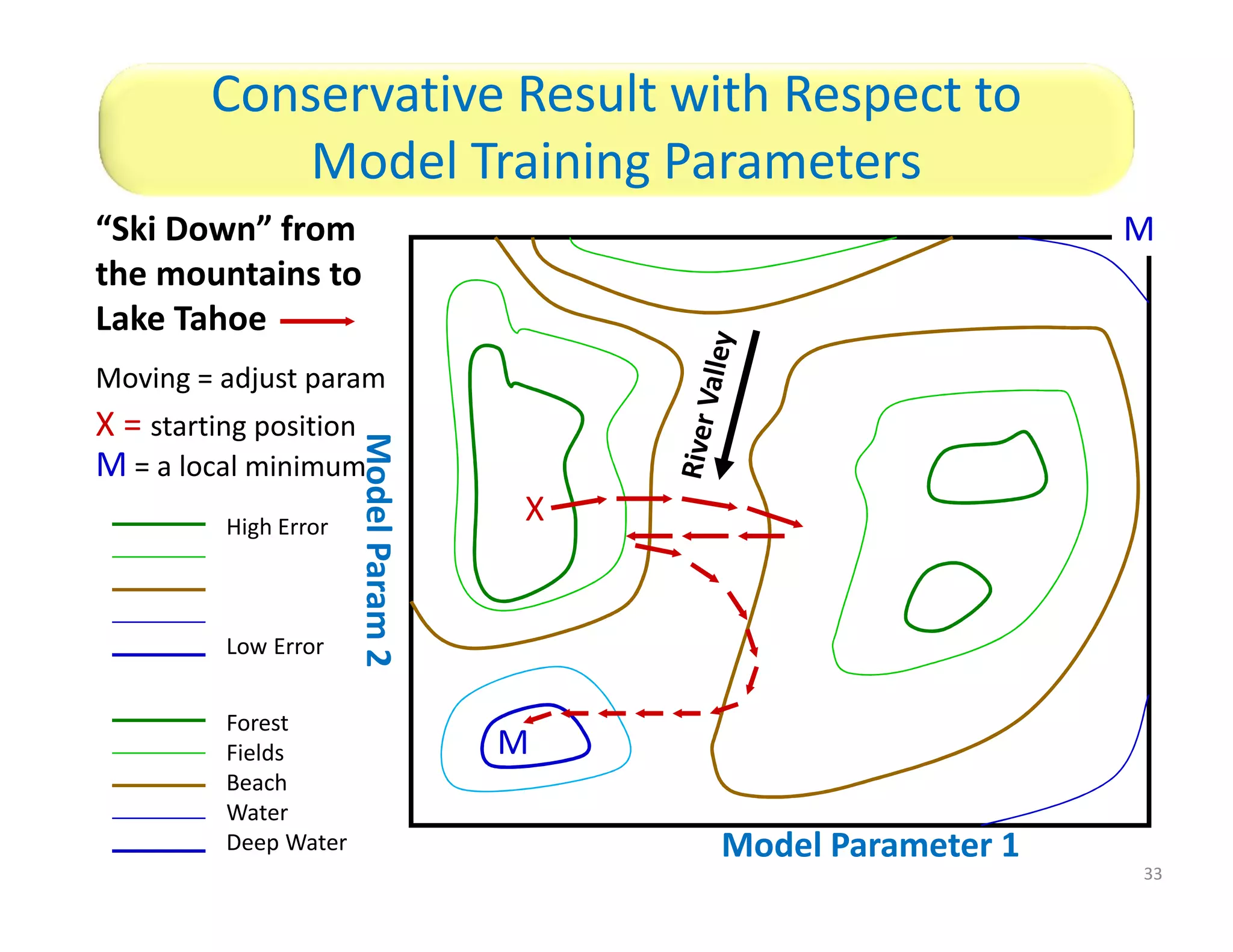

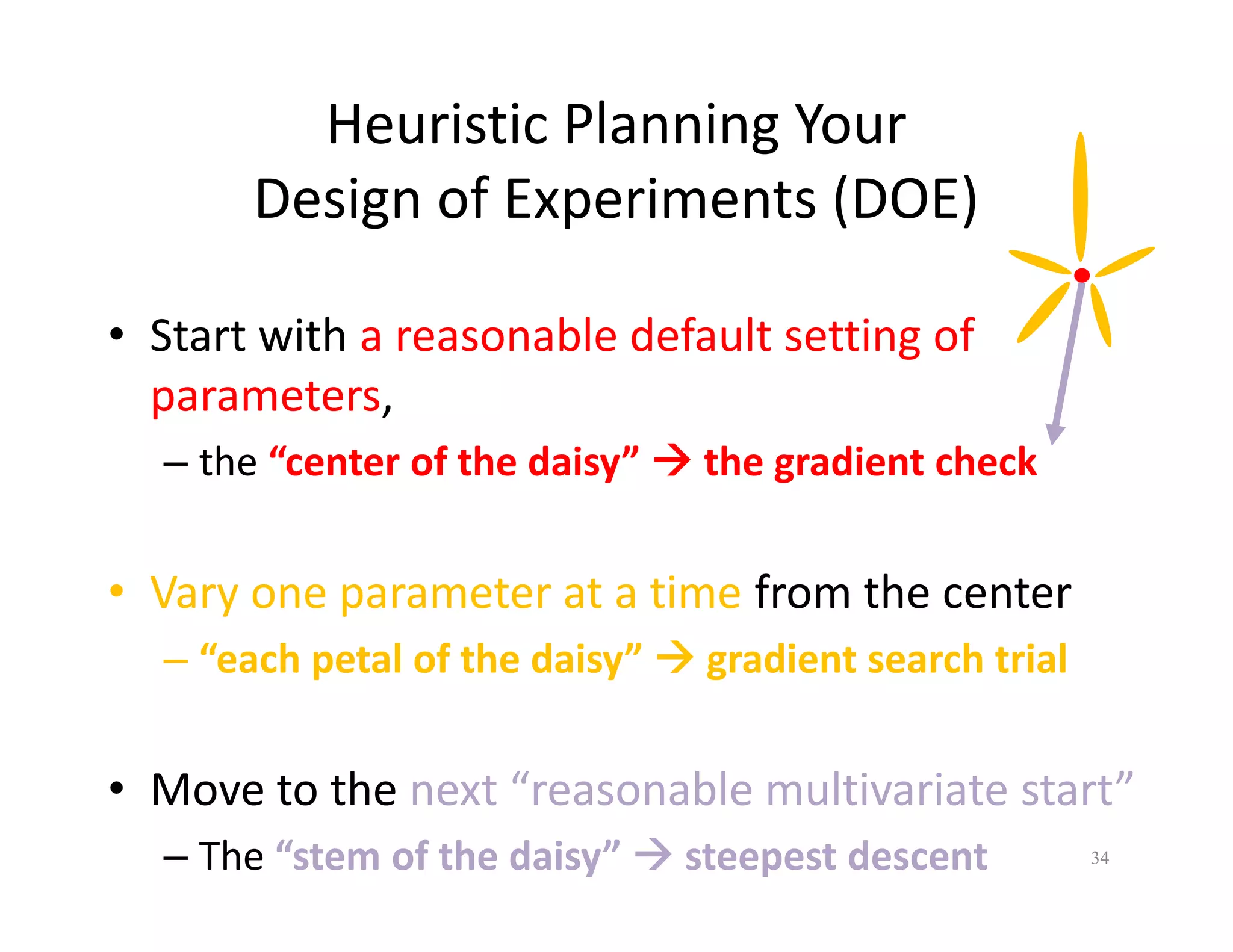

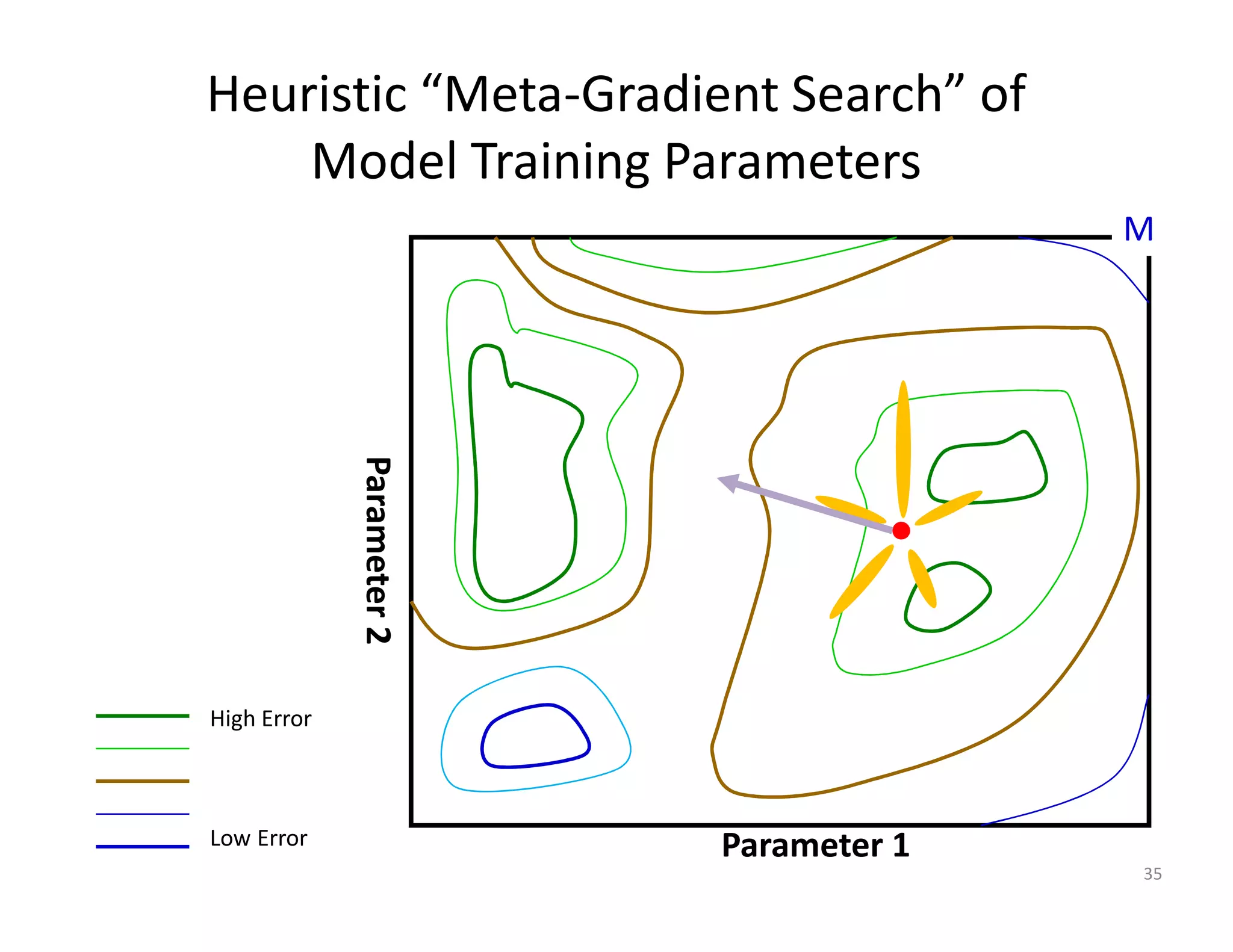

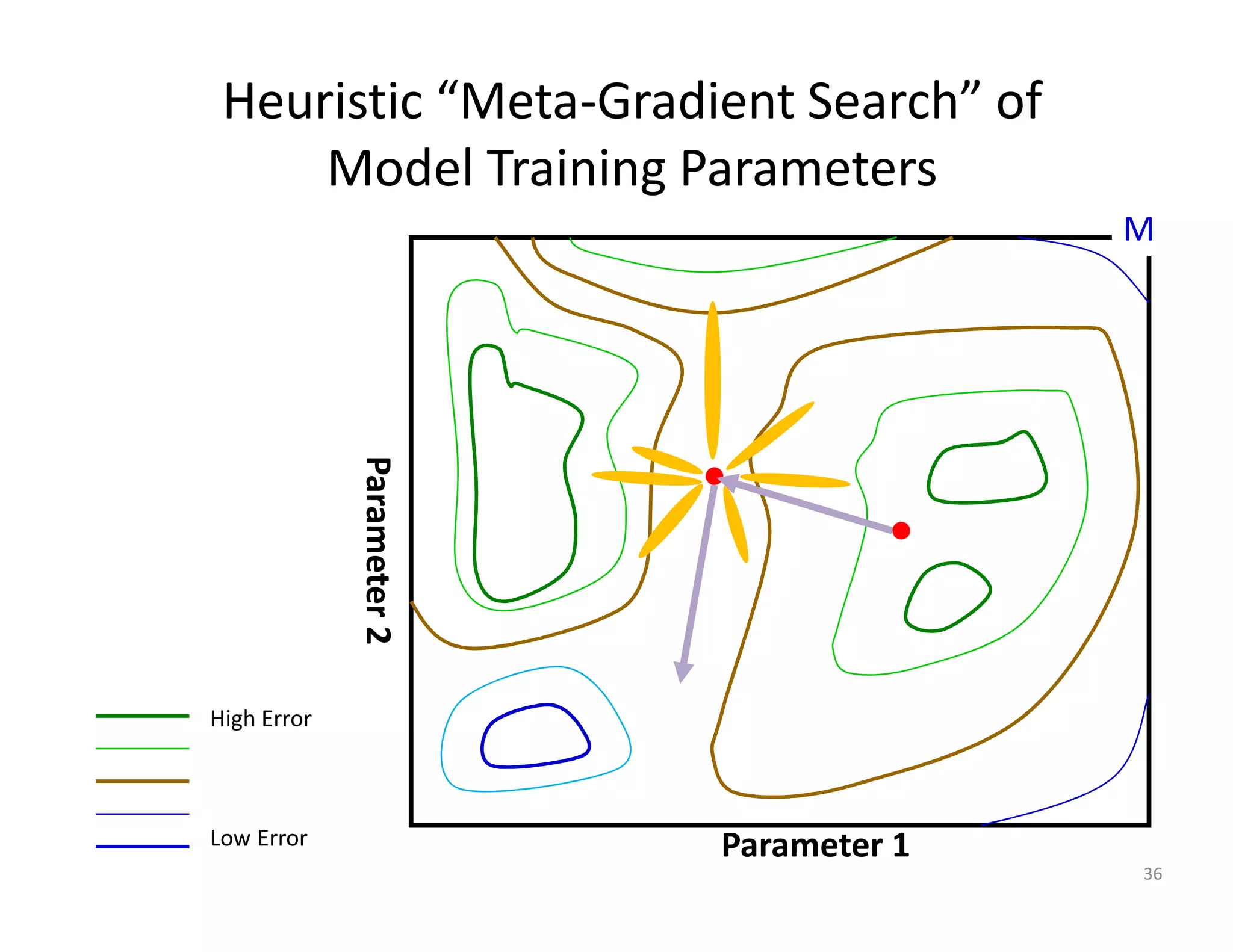

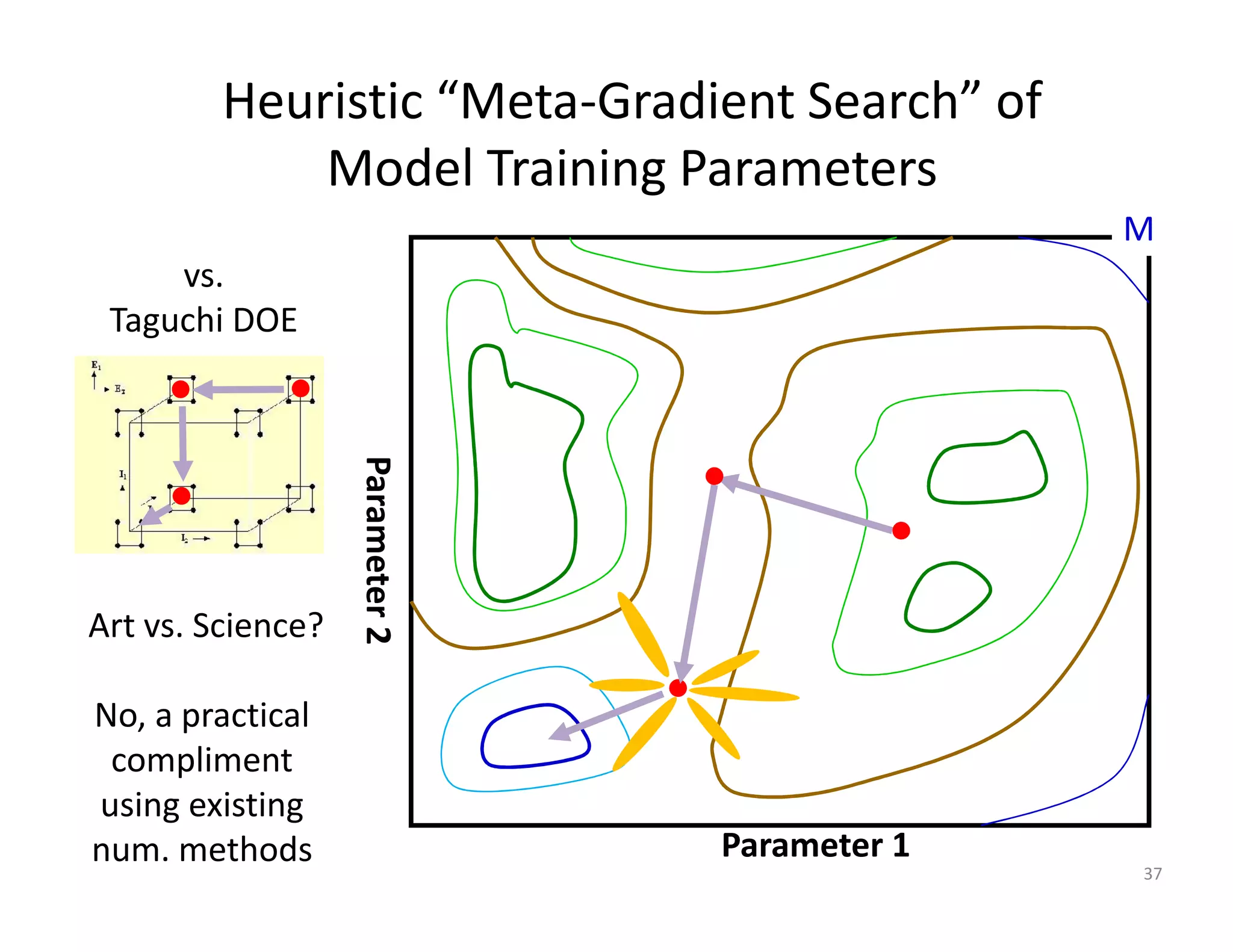

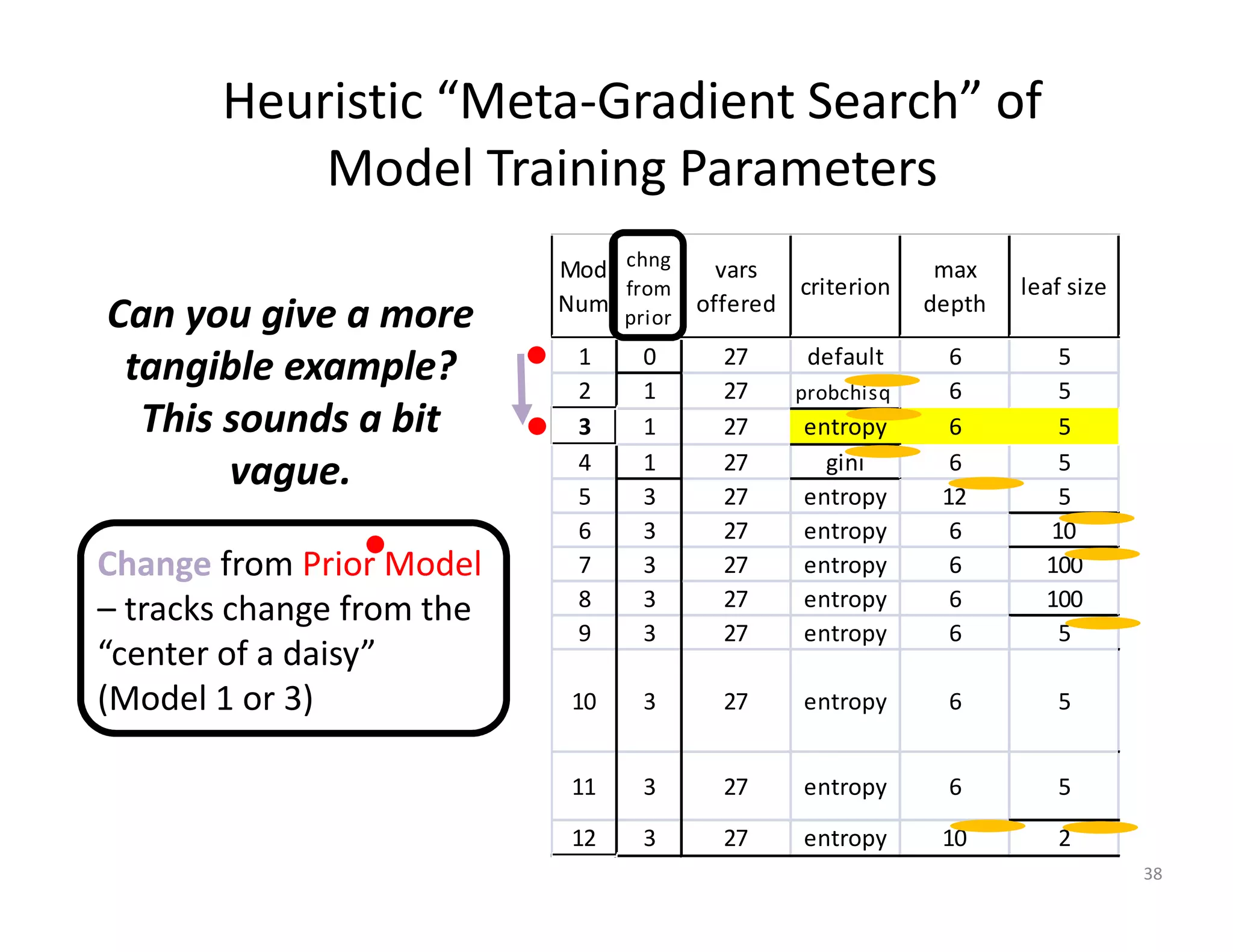

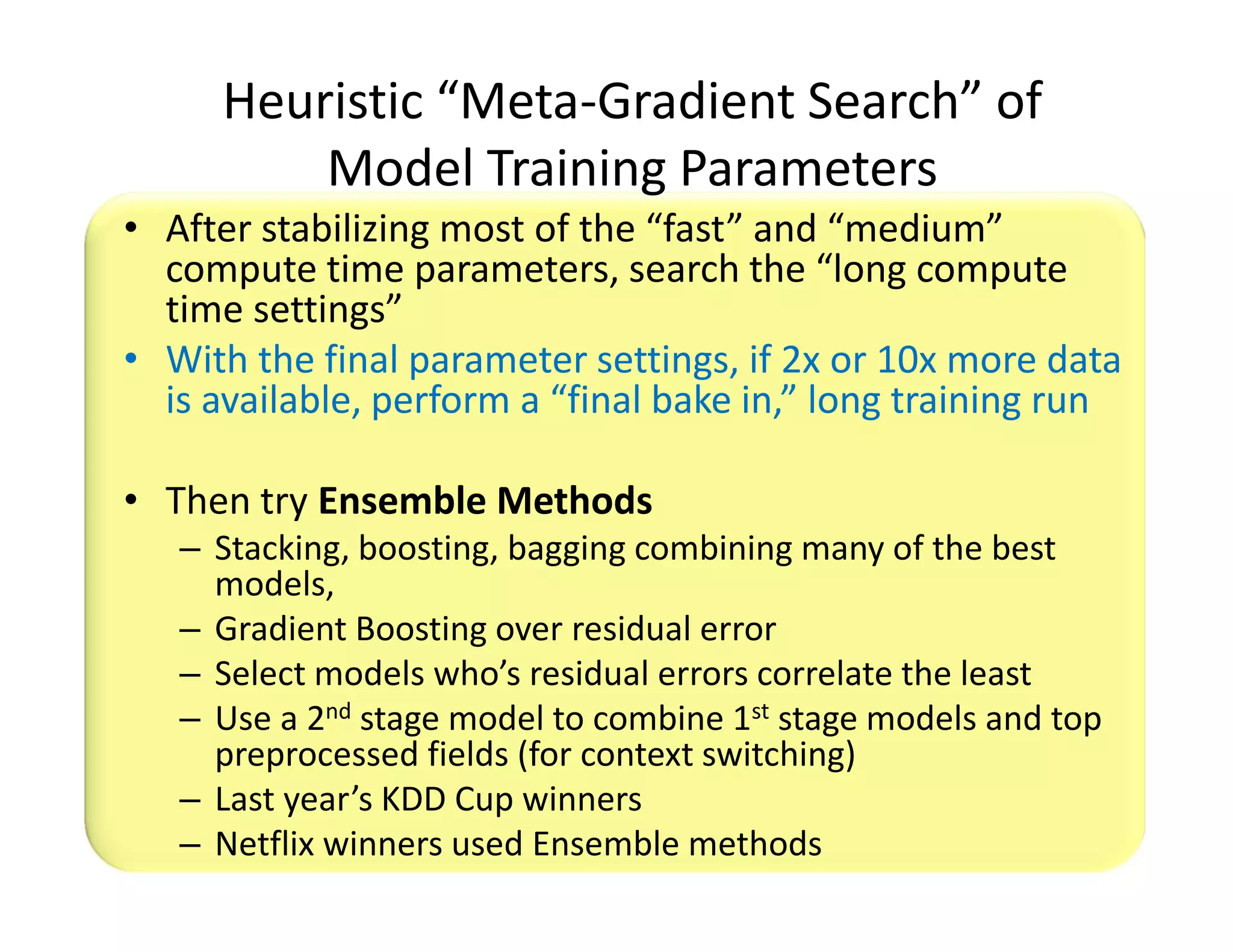

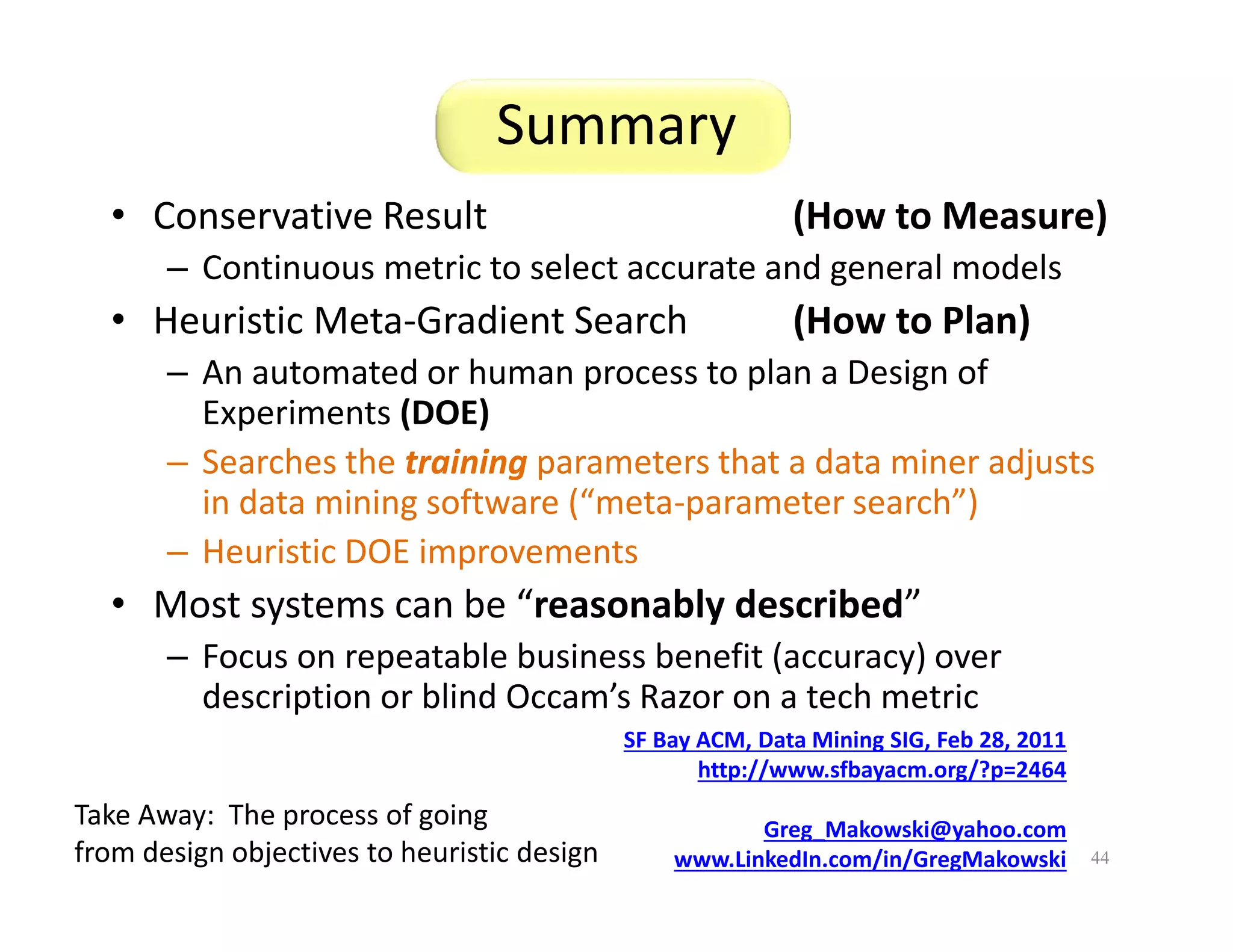

The document discusses heuristic design techniques for experimenting with model training parameters in data mining. Its aim is to help data miners work through the many available algorithms and parameter choices in an organized, planned way. It emphasizes structured methodologies, such as meta-gradient search, and the use of a model notebook to track and evaluate model performance. Key concepts include regression testing, decision trees, and neural networks, along with strategies to minimize volatility and improve reliability in model deployment.
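
To make the model notebook idea concrete, here is a minimal sketch in Python. It is an illustration only, not the document's own format: the class names, the fields, and the use of the train/test score gap as a rough stand-in for volatility are all assumptions, and the scores in the usage lines are placeholder values rather than results.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class NotebookEntry:
    """One experiment: which model, which parameters, how it scored."""
    model_name: str
    params: dict
    train_score: float
    test_score: float

    @property
    def gap(self) -> float:
        # Assumption: a large train/test gap is treated as a sign of an
        # unstable (volatile) model. This proxy is not from the source.
        return abs(self.train_score - self.test_score)


@dataclass
class ModelNotebook:
    """Hypothetical notebook that records every experiment in order."""
    entries: list = field(default_factory=list)

    def log(self, model_name: str, params: dict,
            train_score: float, test_score: float) -> NotebookEntry:
        entry = NotebookEntry(model_name, dict(params), train_score, test_score)
        self.entries.append(entry)
        return entry

    def best(self, max_gap: float = 0.05) -> Optional[NotebookEntry]:
        """Return the best test score among entries whose gap is at most
        max_gap. Falls back to all entries if none qualify, and returns
        None for an empty notebook."""
        if not self.entries:
            return None
        stable = [e for e in self.entries if e.gap <= max_gap]
        candidates = stable or self.entries
        return max(candidates, key=lambda e: e.test_score)


# Usage with placeholder scores (not real results).
notebook = ModelNotebook()
notebook.log("decision_tree", {"max_depth": 12}, train_score=0.99, test_score=0.83)
notebook.log("neural_net", {"hidden_units": 8}, train_score=0.84, test_score=0.82)
chosen = notebook.best(max_gap=0.05)
print(chosen.model_name, chosen.params)
```

Keeping every run in one structure, including the ones that were rejected, is what allows experiments to be compared and revisited later; the stability filter in `best` is one possible selection rule among many.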