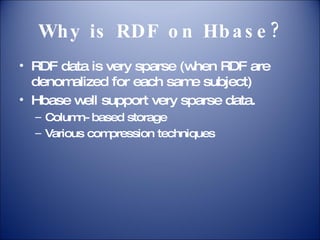

This document proposes HEART, a system for storing and processing large-scale RDF data on HBase and Hadoop. It aims to scale to large datasets and to support a variety of queries while handling the sparsity of RDF data. HEART is planned as an Apache Incubator project and comprises a data loader, a storage manager, a query processor, and an extended SPARQL query language.
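
To make the sparsity point concrete, here is a minimal sketch of how RDF triples might map onto a sparse, column-oriented table of the kind HBase provides. The layout (subject as row key, predicate as column, objects as cell values) is an assumption made for illustration, not HEART's documented schema. The class `SparseTripleTable` and its methods are hypothetical names, and the table is a plain in-memory dictionary rather than a real HBase client.

```python
from collections import defaultdict


class SparseTripleTable:
    """In-memory stand-in for a sparse column-oriented table.

    Hypothetical layout (an assumption, not HEART's schema):
    row key = subject, column = predicate, cell values = objects.
    """

    def __init__(self):
        # subject -> predicate -> set of objects; absent predicates cost nothing
        self._rows = defaultdict(lambda: defaultdict(set))

    def put(self, subject, predicate, obj):
        """Store one triple as a cell in the subject's row."""
        self._rows[subject][predicate].add(obj)

    def match(self, subject=None, predicate=None, obj=None):
        """Return sorted triples matching the pattern; None is a wildcard."""
        subjects = [subject] if subject is not None else list(self._rows)
        results = []
        for s in subjects:
            columns = self._rows.get(s, {})
            predicates = [predicate] if predicate is not None else list(columns)
            for p in predicates:
                for o in columns.get(p, ()):
                    if obj is None or o == obj:
                        results.append((s, p, o))
        return sorted(results)

    def stored_cells(self):
        """Count the cells actually stored (one per triple)."""
        return sum(len(objs) for cols in self._rows.values() for objs in cols.values())


# Example data is invented for illustration only.
table = SparseTripleTable()
table.put("ex:alice", "foaf:name", "Alice")
table.put("ex:alice", "foaf:knows", "ex:bob")
table.put("ex:bob", "foaf:name", "Bob")

print(table.match(predicate="foaf:name"))
print(table.stored_cells())
```

Only the predicates a subject actually has are stored, so a row for `ex:bob` carries no empty `foaf:knows` cell. That property is what makes a sparse column store a plausible fit for RDF, where most subjects use only a small fraction of all predicates.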