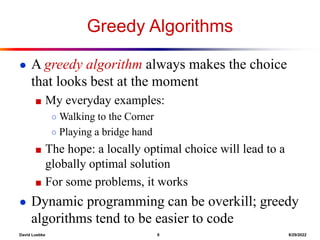

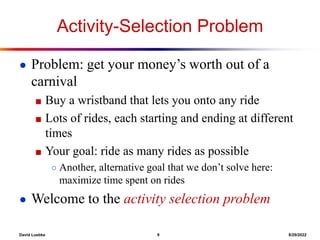

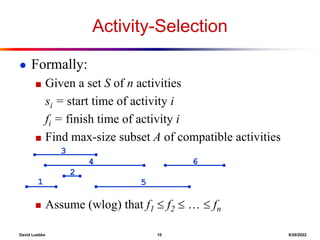

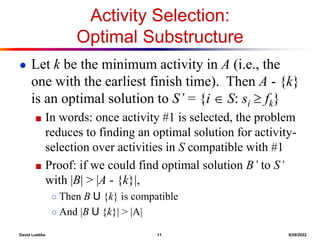

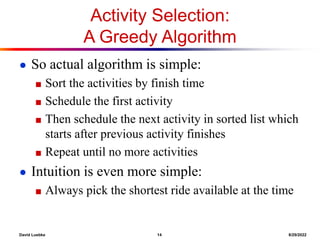

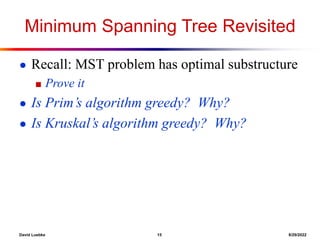

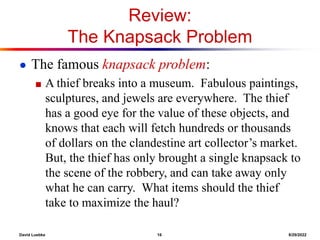

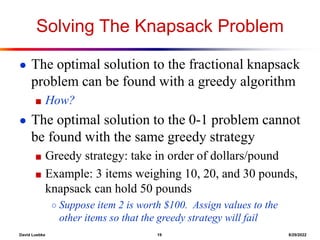

This document discusses algorithms for solving optimization problems such as the activity selection problem and the knapsack problem. Activity selection can be solved greedily by always selecting the remaining activity that finishes earliest among those that do not conflict with previous selections. The 0-1 knapsack problem cannot be solved greedily, but it can be solved with dynamic programming by breaking it into overlapping subproblems and storing their results in a table.
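A minimal Python sketch of both ideas follows. It assumes activities are given as (start, finish) pairs that may touch at an endpoint, and knapsack items as parallel weight and value lists; the function names and representations are illustrative, not taken from the slides. The greedy step sorts by finish time, which is the standard rule for activity selection. Picking the shortest activity instead can fail: (3, 5) conflicts with both (0, 4) and (4, 8), so shortest-first keeps one activity where two are possible. The knapsack function fills a table of overlapping subproblems rather than making a greedy choice.

```python
from typing import List, Tuple


def select_activities(activities: List[Tuple[int, int]]) -> List[Tuple[int, int]]:
    """Greedy activity selection: repeatedly take the compatible activity
    that finishes earliest. An activity may start exactly when the
    previously chosen one finishes."""
    chosen: List[Tuple[int, int]] = []
    last_finish = float("-inf")
    for start, finish in sorted(activities, key=lambda a: a[1]):
        if start >= last_finish:  # no overlap with the last chosen activity
            chosen.append((start, finish))
            last_finish = finish
    return chosen


def knapsack_01(weights: List[int], values: List[int], capacity: int) -> int:
    """0-1 knapsack by dynamic programming.

    best[i][w] is the maximum value achievable using only the first i items
    with weight limit w; each entry reuses subproblems from row i - 1.
    """
    n = len(weights)
    best = [[0] * (capacity + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        wi, vi = weights[i - 1], values[i - 1]
        for w in range(capacity + 1):
            best[i][w] = best[i - 1][w]  # option 1: skip item i
            if wi <= w:  # option 2: take item i, if it fits
                best[i][w] = max(best[i][w], best[i - 1][w - wi] + vi)
    return best[n][capacity]


if __name__ == "__main__":
    acts = [(1, 4), (3, 5), (0, 6), (5, 7), (3, 9), (5, 9),
            (6, 10), (8, 11), (8, 12), (2, 14), (12, 16)]
    print(select_activities(acts))  # [(1, 4), (5, 7), (8, 11), (12, 16)]
    print(knapsack_01([10, 20, 30], [60, 100, 120], 50))  # 220
```

With weights 10, 20, 30, values 60, 100, 120 and capacity 50, taking items in order of value per unit weight yields only 160 (the first two items, after which the third no longer fits), while the table finds 220.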

![David Luebke 3 8/29/2022

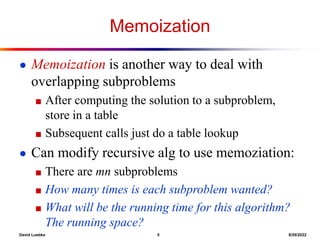

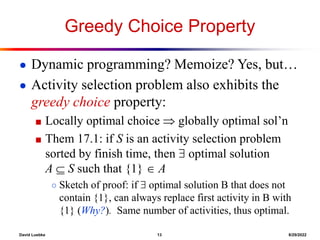

Review: Optimal Substructure of

LCS

● Observation 1: Optimal substructure

■ A simple recursive algorithm will suffice

■ Draw sample recursion tree from c[3,4]

■ What will be the depth of the tree?

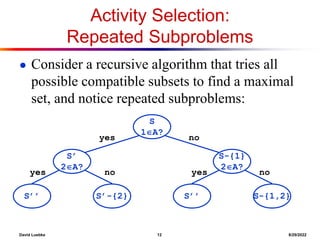

● Observation 2: Overlapping subproblems

■ Find some places where we solve the same

subproblem more than once

otherwise

])

,

1

[

],

1

,

[

max(

],

[

]

[

if

1

]

1

,

1

[

]

,

[

j

i

c

j

i

c

j

y

i

x

j

i

c

j

i

c](https://image.slidesharecdn.com/greedy1-220829050622-d187a58a/85/Greedy1-ppt-3-320.jpg)
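The simple recursive algorithm from Observation 1 can be sketched in Python as below; it also counts how often each subproblem is solved, which makes Observation 2 visible. The function name and the call counter are illustrative assumptions. The slide's 1-indexed x[i] corresponds to `x[i - 1]` in Python. Each call lowers i + j by at least one, so the recursion tree has at most m + n levels.

```python
from collections import Counter
from typing import Tuple


def lcs_length_naive(x: str, y: str) -> Tuple[int, Counter]:
    """Plain recursive LCS length, with no memoization.

    c(i, j) is the LCS length of the prefixes x[1..i] and y[1..j].
    Also returns how many times each (i, j) subproblem was solved.
    """
    calls: Counter = Counter()

    def c(i: int, j: int) -> int:
        calls[(i, j)] += 1
        if i == 0 or j == 0:  # an empty prefix has an empty LCS
            return 0
        if x[i - 1] == y[j - 1]:  # last characters match
            return c(i - 1, j - 1) + 1
        return max(c(i, j - 1), c(i - 1, j))  # drop one last character

    return c(len(x), len(y)), calls


if __name__ == "__main__":
    # Root of the recursion tree is c[3,4]; no characters match,
    # so every non-base call branches twice.
    length, calls = lcs_length_naive("ABC", "DEFG")
    print(length)          # 0
    print(calls[(2, 3)])   # 2: reached via c(3,3) and via c(2,4)
    print(calls[(1, 1)])   # 10
    print(sum(calls.values()), "calls for", len(calls), "distinct subproblems")
    # 69 calls for 19 distinct subproblems
```

Even on inputs this small the same subproblems recur many times, and the repetition grows exponentially with the input lengths.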

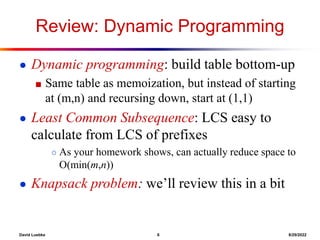

![Slide 4](https://image.slidesharecdn.com/greedy1-220829050622-d187a58a/85/Greedy1-ppt-4-320.jpg)

Review: Structure of Subproblems

● For the LCS problem:

■ There are relatively few subproblems in total

■ And many recurring instances of each (unlike divide and conquer, where the subproblems are unique)

● How many distinct subproblems exist for the LCS of x[1..m] and y[1..n]?

● A: mn, one for each pair (i, j) with 1 ≤ i ≤ m and 1 ≤ j ≤ n
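Because there are only mn distinct subproblems, each can be solved once and stored. A minimal bottom-up sketch in Python, assuming plain strings as input; the function names are hypothetical. The table has (m + 1) × (n + 1) entries because row 0 and column 0 hold the empty-prefix base cases, so filling it takes O(mn) time and space. The traceback recovers one LCS (ties may be broken differently elsewhere, giving another LCS of the same length).

```python
from typing import List


def lcs_table(x: str, y: str) -> List[List[int]]:
    """Bottom-up LCS: c[i][j] is the LCS length of x[:i] and y[:j]."""
    m, n = len(x), len(y)
    c = [[0] * (n + 1) for _ in range(m + 1)]  # row 0 and column 0 are base cases
    for i in range(1, m + 1):
        for j in range(1, n + 1):
            if x[i - 1] == y[j - 1]:
                c[i][j] = c[i - 1][j - 1] + 1
            else:
                c[i][j] = max(c[i][j - 1], c[i - 1][j])
    return c


def lcs_string(x: str, y: str) -> str:
    """Walk back from c[m][n] through the table to recover one LCS."""
    c = lcs_table(x, y)
    i, j = len(x), len(y)
    out: List[str] = []
    while i > 0 and j > 0:
        if x[i - 1] == y[j - 1]:  # matched character is part of the LCS
            out.append(x[i - 1])
            i -= 1
            j -= 1
        elif c[i - 1][j] >= c[i][j - 1]:  # move toward the larger subproblem
            i -= 1
        else:
            j -= 1
    return "".join(reversed(out))


if __name__ == "__main__":
    x, y = "ABCBDAB", "BDCABA"
    table = lcs_table(x, y)
    print(table[len(x)][len(y)])  # 4
    print(lcs_string(x, y))       # one LCS of length 4
```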